Does Optimism About AI in Oncology Overshadow Risks to Clinical Judgment?

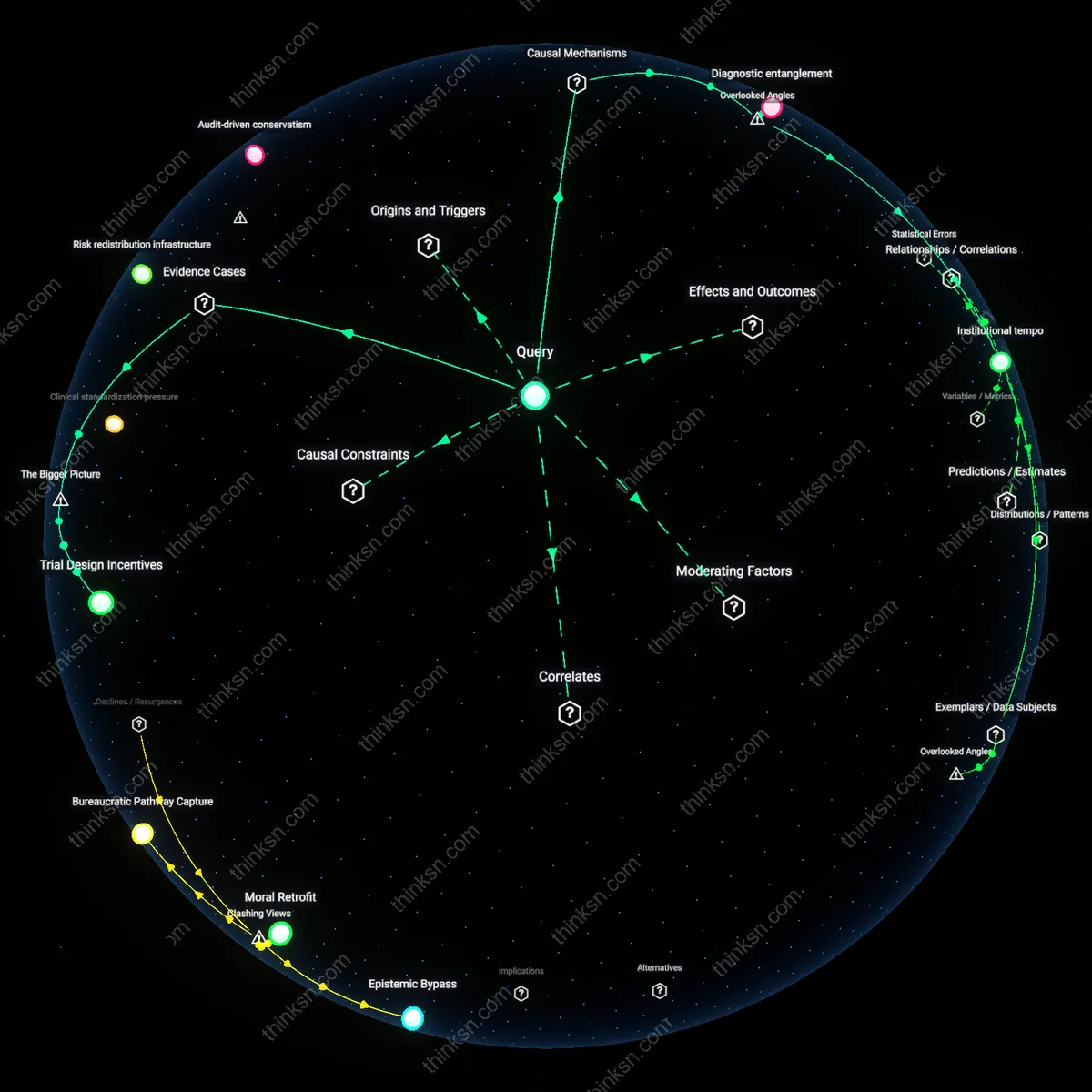

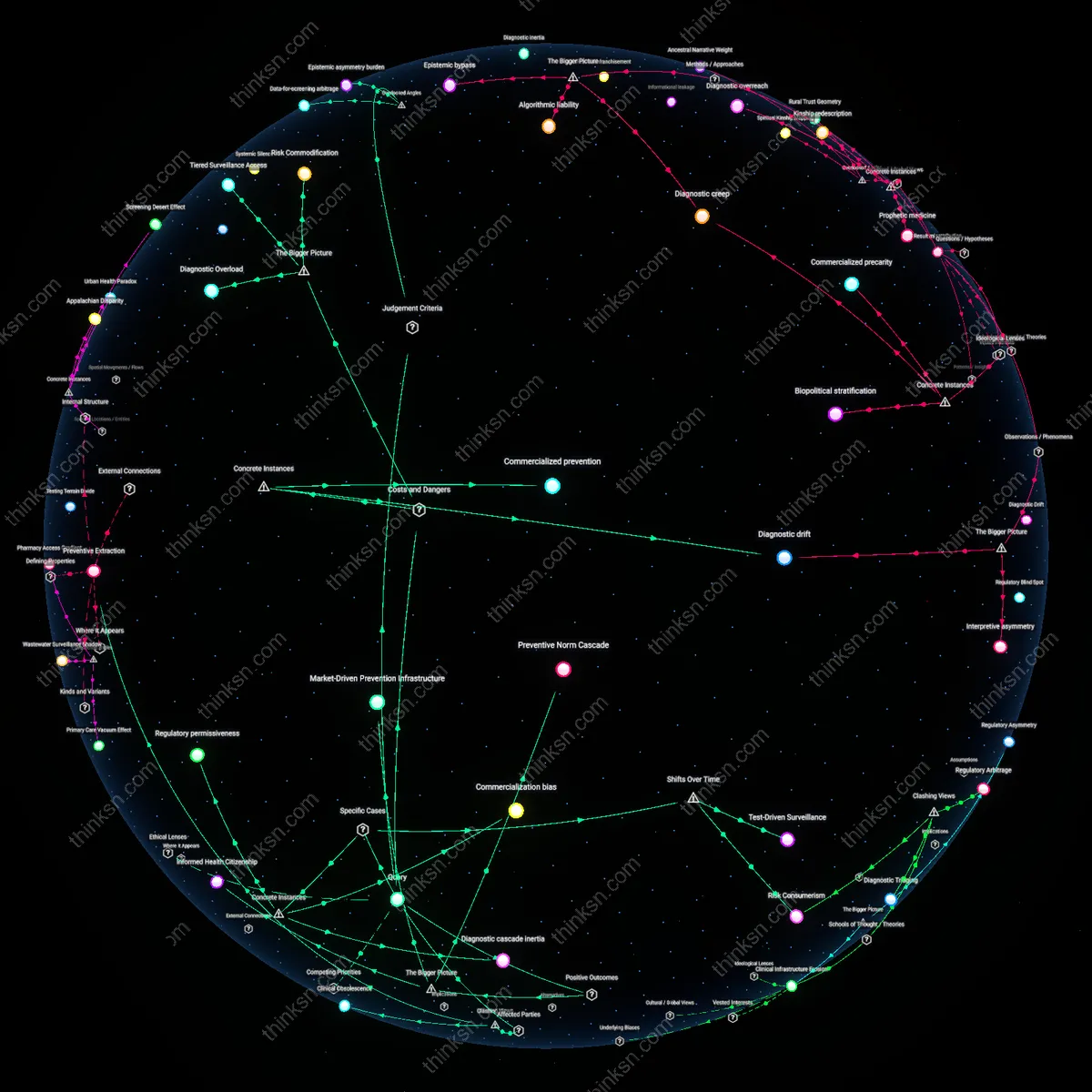

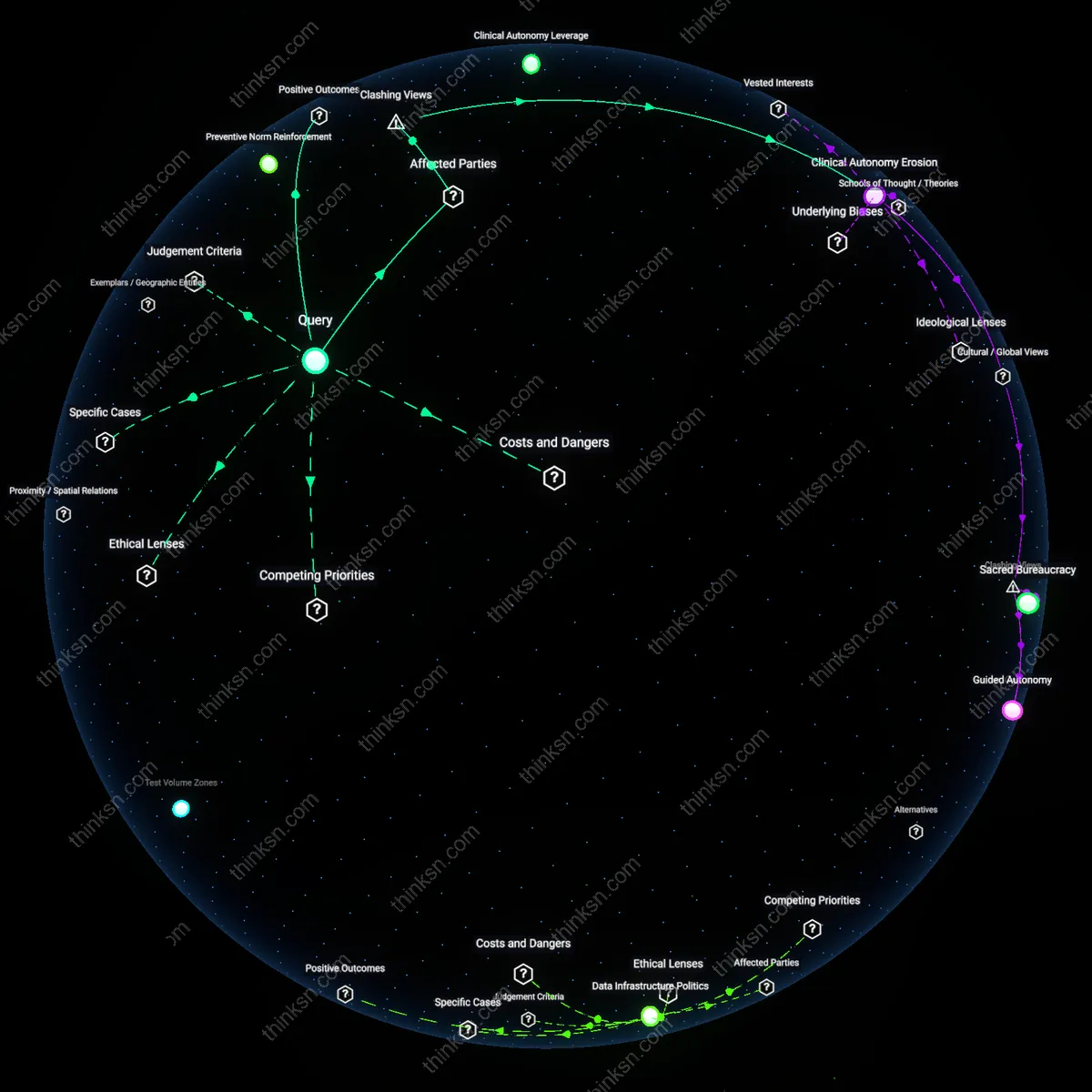

Analysis reveals 10 key thematic connections.

Key Findings

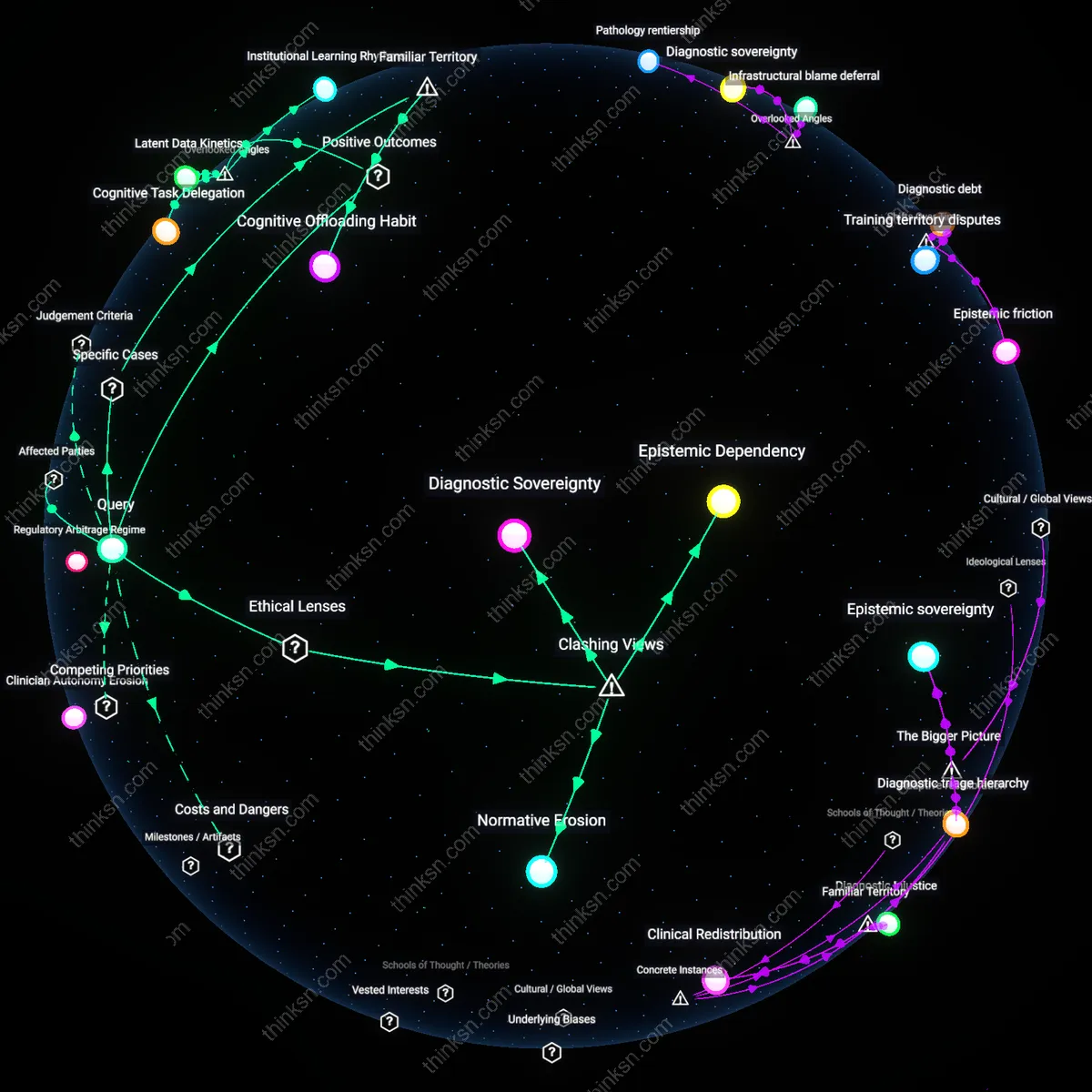

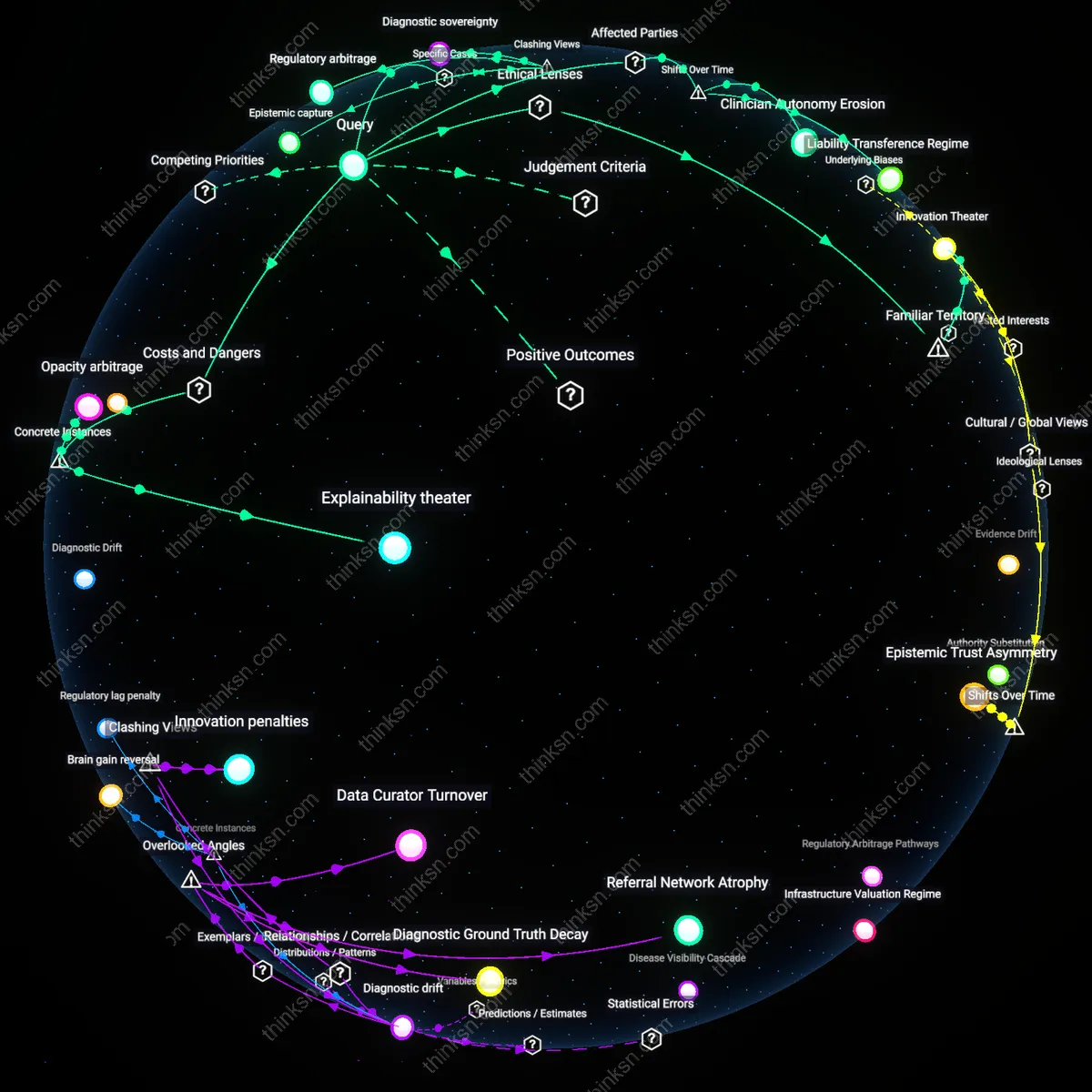

Clinician Autonomy Erosion

AI undermines clinical judgment when oncologists at rural U.S. hospitals rely on commercially deployed decision-support systems that prioritize cost-efficient treatment pathways tied to insurer reimbursement models. The result is a subtle shift in medical authority from the physician to algorithmic protocols embedded in electronic health records. This occurs because regulatory approval pathways such as the FDA’s De Novo classification focus on analytical validity rather than real-world clinical impact, so tools can be deployed without evidence of improved patient outcomes or preserved professional discretion. Market-driven incentives in digital health thereby displace clinical autonomy under the guise of standardization.

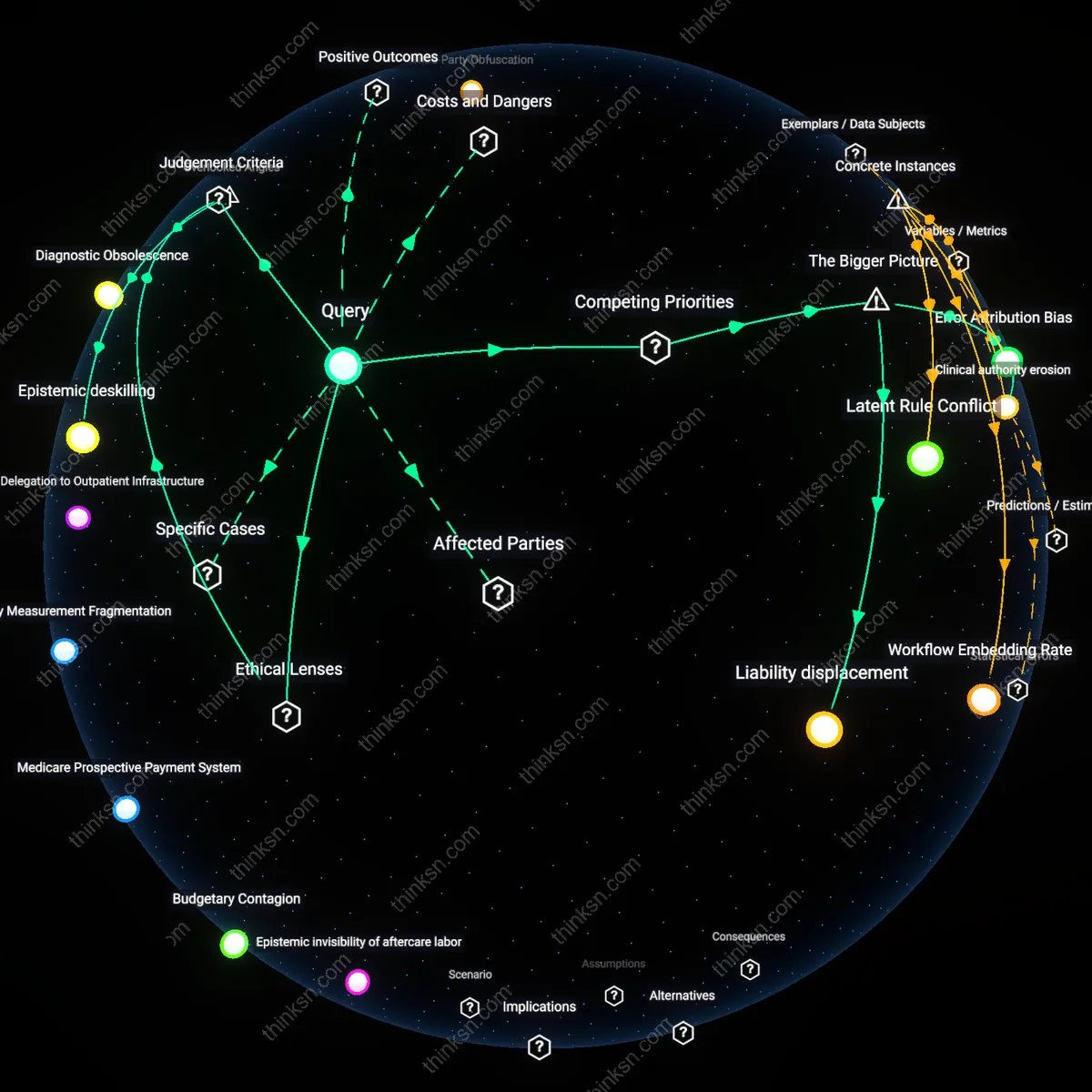

Diagnostic Inference Gap

AI enhances oncology decision-making most significantly in low-resource settings such as public cancer centers in South Africa, where radiologists use AI-powered imaging analysis to detect early-stage tumors in regions with fewer than one specialist per million people. The benefit, however, depends on algorithms trained on Western patient populations, which creates a latent diagnostic inference gap: sensitivity is systematically lower for local variants of disease. Global health inequities are thus reproduced not through deliberate neglect but through invisible statistical drift in model performance across genetic and environmental contexts.
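One way to make such a gap visible is to report sensitivity separately for each patient population rather than as a single pooled figure. The following is a minimal sketch under stated assumptions: the function name `sensitivity_by_group`, the group labels, and all counts are hypothetical and invented for illustration; they do not come from any real deployment or study.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Return sensitivity (true-positive rate) per group.

    records: iterable of (group, has_disease, model_flagged) tuples.
    """
    true_pos = defaultdict(int)
    false_neg = defaultdict(int)
    for group, has_disease, model_flagged in records:
        if not has_disease:
            continue  # sensitivity is computed over confirmed cases only
        if model_flagged:
            true_pos[group] += 1
        else:
            false_neg[group] += 1
    groups = set(true_pos) | set(false_neg)
    return {g: true_pos[g] / (true_pos[g] + false_neg[g]) for g in groups}


# Hypothetical counts, invented for illustration only.
records = (
    [("training_like", True, True)] * 9
    + [("training_like", True, False)] * 1
    + [("local", True, True)] * 6
    + [("local", True, False)] * 4
)

per_group = sensitivity_by_group(records)
# Difference in sensitivity between the two hypothetical populations.
gap = per_group["training_like"] - per_group["local"]
print(per_group, round(gap, 2))
```

A pooled sensitivity over these invented records would be 15/20, which hides the fact that the model misses four in ten confirmed cases in the hypothetical local group.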

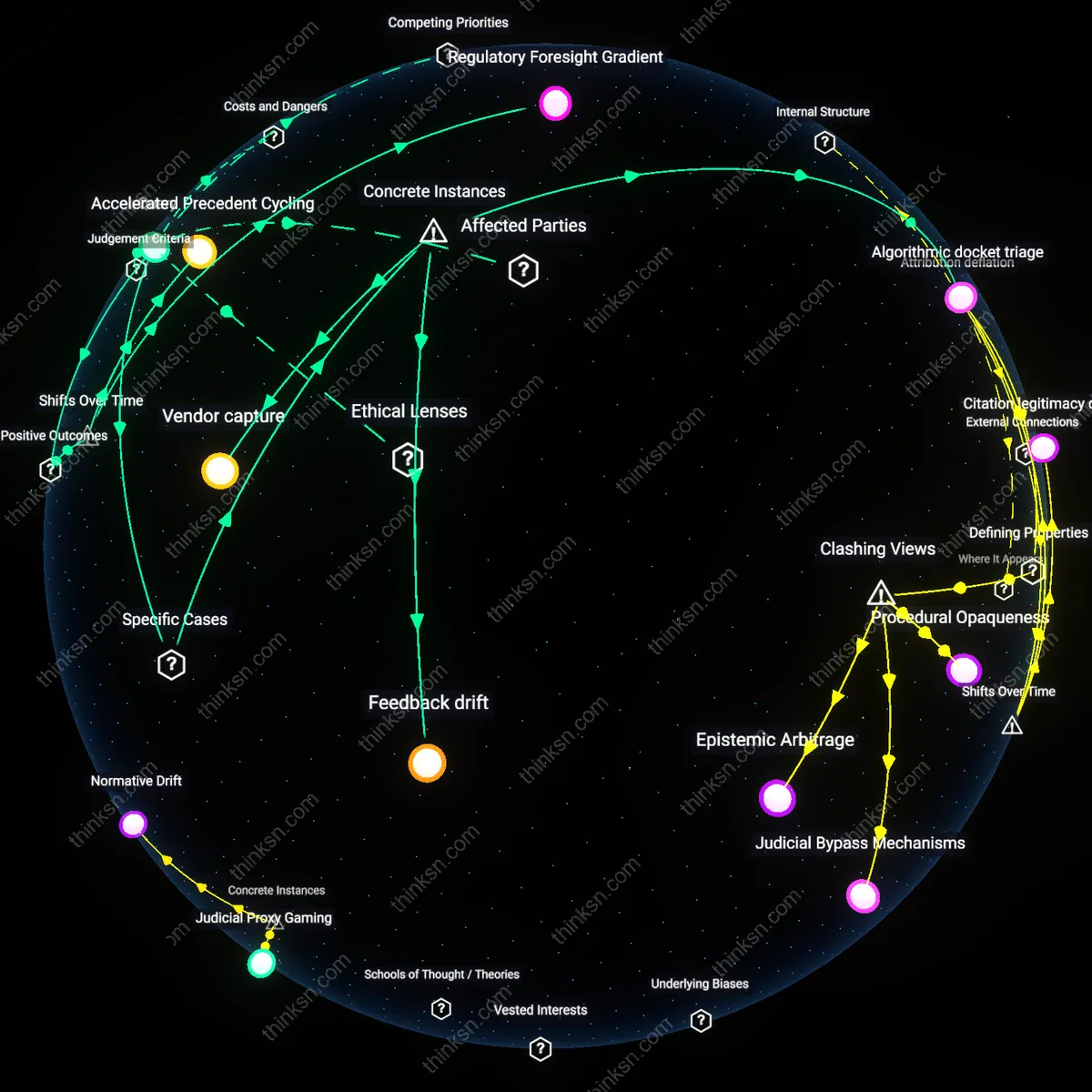

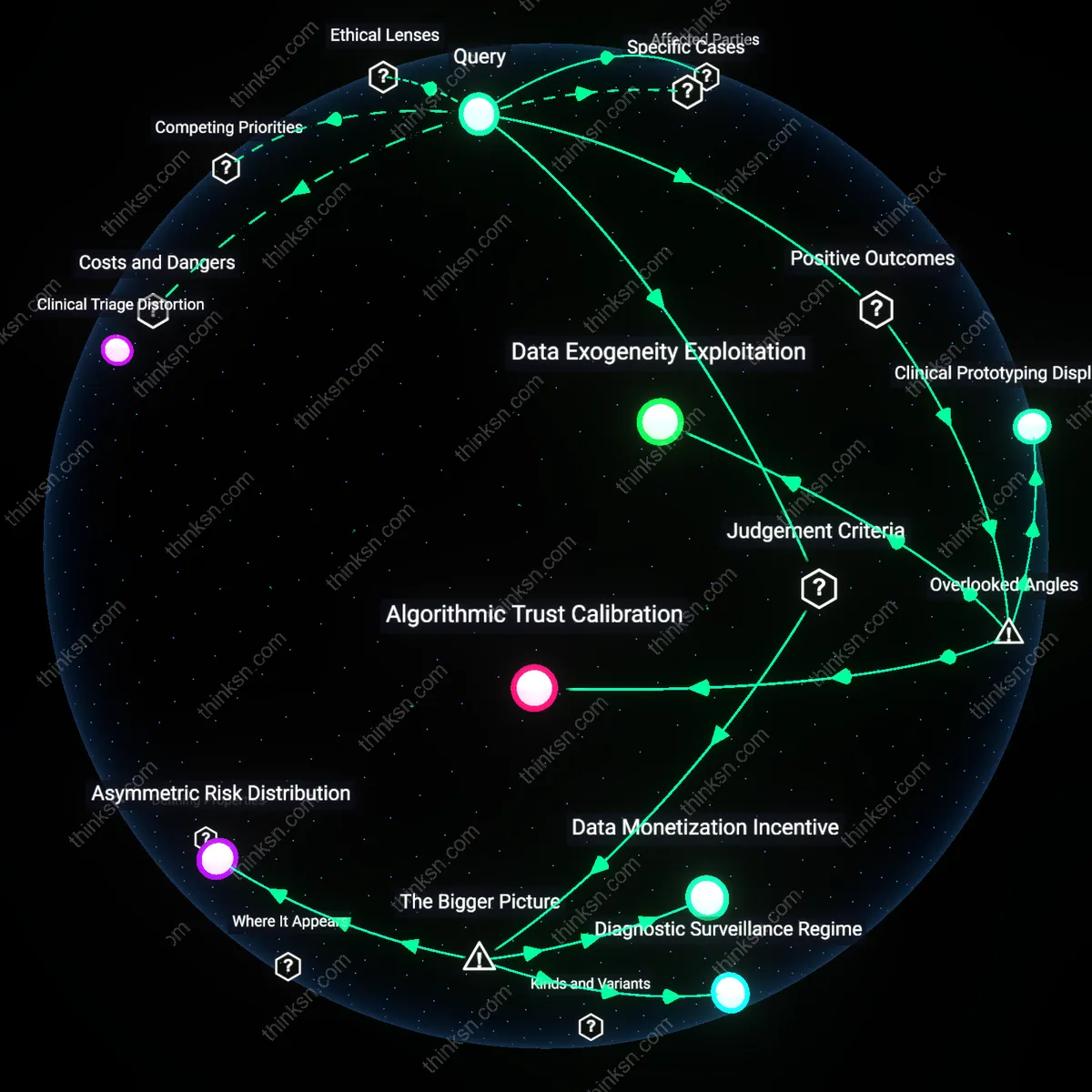

Regulatory Arbitrage Regime

The integration of AI into oncology is shaped by a regulatory arbitrage regime. Private equity–backed health tech firms in Germany and France deploy 'clinical decision support' tools positioned just below the thresholds that would require full CE marking as medical devices, exploiting gray zones in the EU MDR classification to influence treatment decisions while avoiding liability for flawed recommendations. This dynamic enables rapid market penetration without mandated external validation, privileging speed-to-market over clinician accountability and distorting incentives for genuine therapeutic innovation.

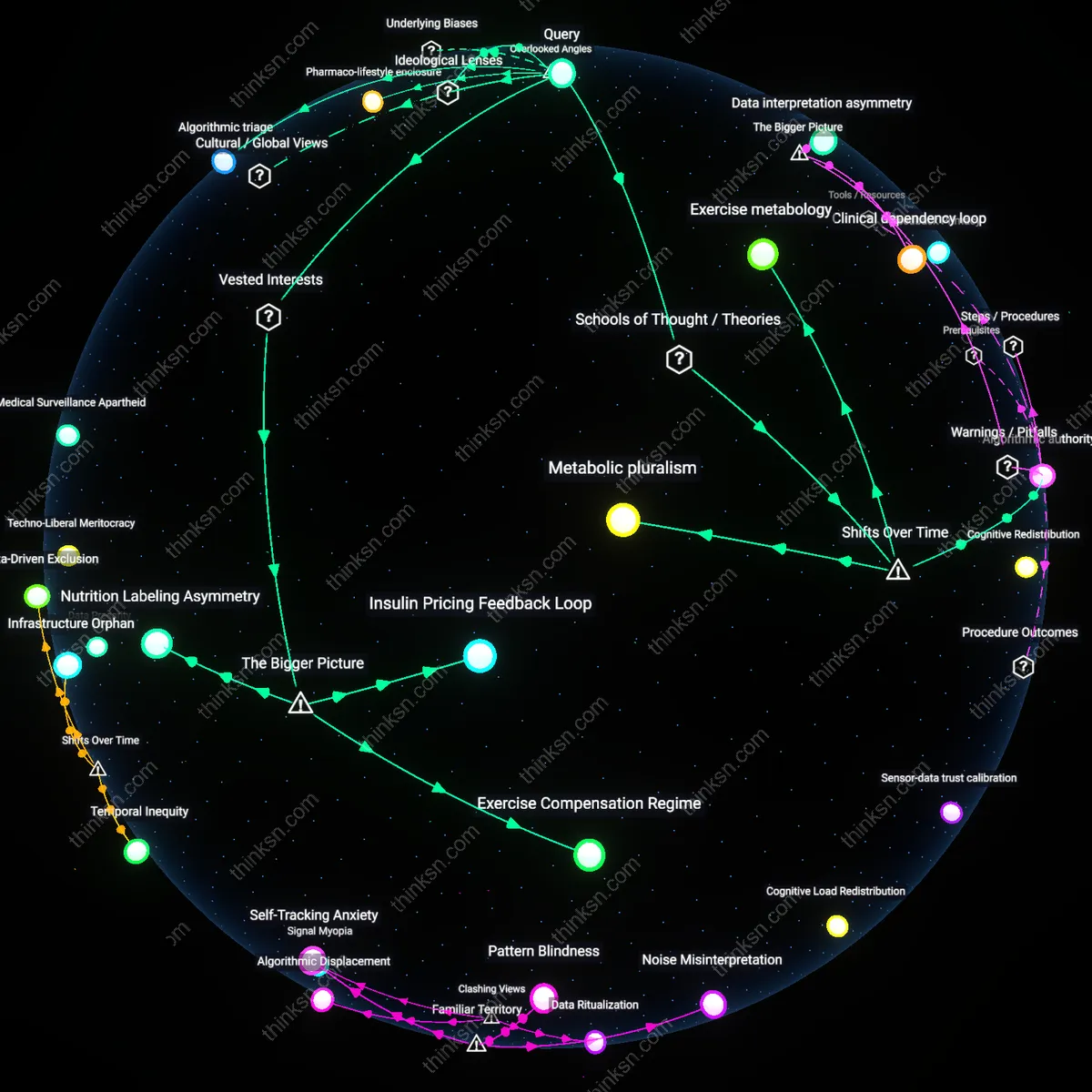

Cognitive Task Delegation

AI enhances oncology decision-making by enabling physicians to offload cognitively taxing pattern recognition tasks—such as tumor boundary delineation in radiology or mutational signature detection in sequencing data—to systems that operate with higher consistency and speed, freeing clinicians to focus on contextual interpretation and patient values. This shift is not merely about efficiency but reconfigures clinical expertise around higher-order judgment rather than data parsing, a transformation rarely acknowledged in debates that frame AI as either replacing or assisting doctors. The underappreciated dynamic is that AI’s greatest utility lies not in accuracy alone but in its ability to reshape the cognitive division of labor, making clinical reasoning more strategic and less burdened by perceptual minutiae.

Latent Data Kinetics

AI systems in oncology extract actionable signals from data streams that were previously inert, not because the data lacked potential but because using them exceeded human temporal and integrative capacity. One example is the real-time aggregation of longitudinal lab trends, drug toxicity reports, and trial eligibility across fragmented electronic health records at institutions like MD Anderson or Mass General. The constructive outcome emerges not from AI outthinking clinicians but from activating dormant data layers that become clinically relevant only when processed at scale and velocity, an overlooked mechanism that redefines what counts as 'evidence' in real-world decision-making. On this view, AI expands the evidentiary base itself rather than merely interpreting existing information.

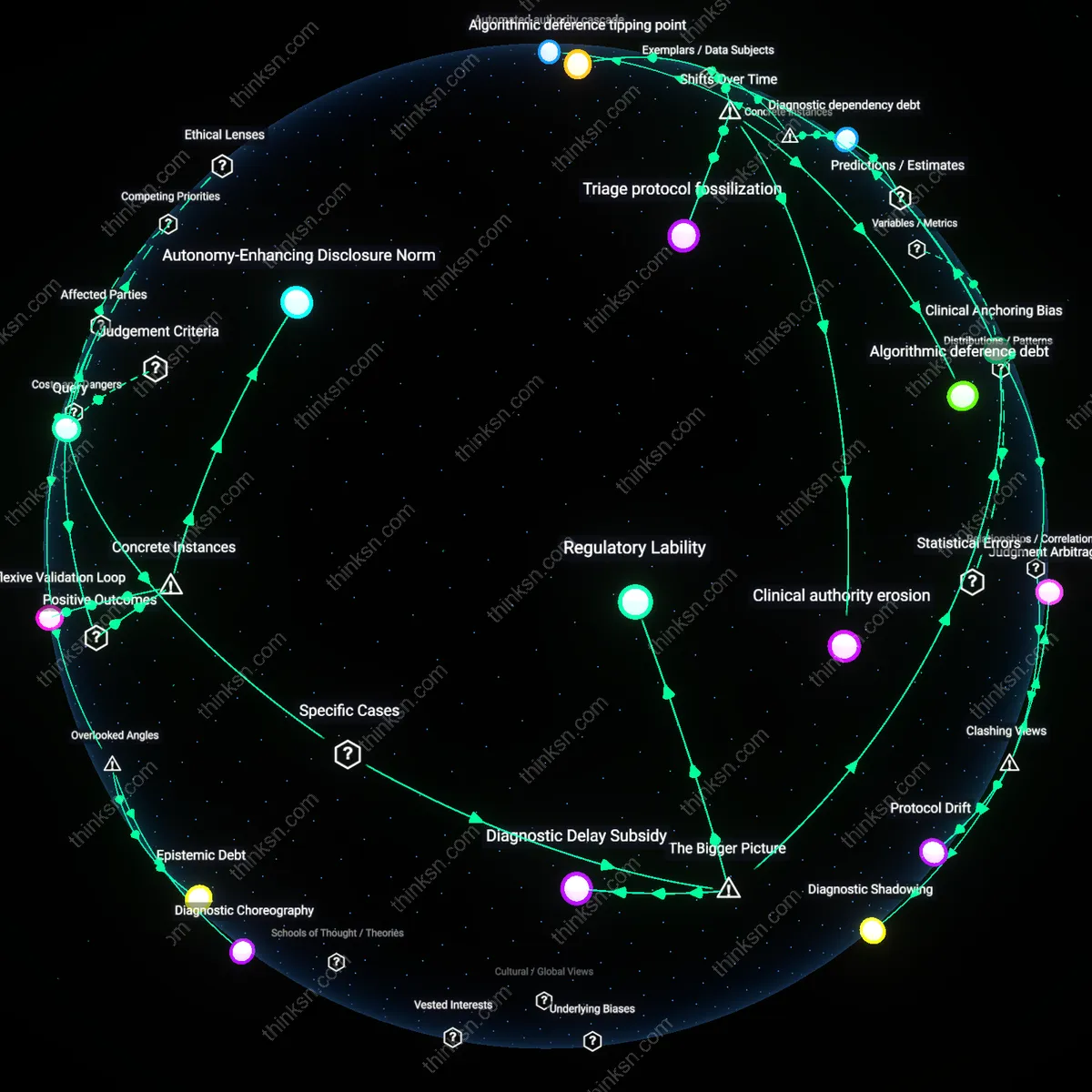

Institutional Learning Rhythms

AI strengthens clinical judgment in oncology by embedding retrospective learning into routine workflows through continuous feedback loops—for example, when machine learning models at regional cancer centers retroactively analyze treatment deviations and survival outcomes to recalibrate local guidelines, thus institutionalizing adaptive expertise that individual clinicians cannot maintain alone. This form of systematized experiential learning operates below the level of individual decision-making, quietly elevating collective standards without requiring behavioral change from doctors, a dimension rarely visible in discussions focused on AI as a point-of-care tool. The overlooked impact is that AI’s most durable contribution may be in reshaping how medical knowledge accumulates and propagates within organizations over time.

Epistemic Dependency

AI enhances oncology decision-making not by supplementing clinical judgment but by reconfiguring it into a system of epistemic dependency, where clinicians increasingly delegate interpretive authority to algorithms trained on opaque, proprietary datasets that reflect corporate rather than clinical epistemologies. This shift operates through the institutional adoption of AI tools like IBM Watson for Oncology or Tempus xT, which embed regulatory-compliant yet clinically contested recommendations into routine workflows, privileging data-driven efficiency over physician-led diagnostic reasoning. The non-obvious consequence is that AI does not undermine clinical judgment so much as transform it into a secondary validation layer for algorithmic outputs, thereby aligning medical practice with utilitarian techno-governance frameworks that deprioritize physician autonomy in favor of scalable, standardized care.

Normative Erosion

Current evidence shows AI in oncology risks undermining clinical judgment not through technical failure but through normative erosion, wherein repeated exposure to algorithmic suggestions recalibrates what clinicians perceive as acceptable or standard treatment, thus gradually displacing professional norms rooted in deontological medical ethics. This occurs via platforms like Google’s LYNA or PathAI, which, though accurate in detecting metastases, embed probabilistic thresholds that redefine diagnostic certainty as statistical likelihood, altering the physician’s ethical responsibility to act on incomplete knowledge. The counterintuitive effect is that even when AI is optional, its integration reshapes clinical conscience by aligning it with consequentialist risk-management logic, privileging population-level outcomes over individualized fiduciary duty.
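The role of an embedded probabilistic threshold can be illustrated with a small sketch. It assumes a hypothetical model that emits a metastasis probability per slide; the scores, the `classify` helper, and both cutoffs are invented for illustration and do not describe how LYNA, PathAI, or any other product actually works.

```python
def classify(probabilities, threshold):
    """Flag each case whose predicted probability meets the threshold."""
    return [p >= threshold for p in probabilities]


# Hypothetical per-slide probabilities, invented for illustration only.
scores = [0.35, 0.48, 0.52, 0.71, 0.90]

flag_at_050 = classify(scores, 0.50)
flag_at_070 = classify(scores, 0.70)

# Cases whose label changes purely because the cutoff moved.
changed = [s for s, a, b in zip(scores, flag_at_050, flag_at_070) if a != b]
print(sum(flag_at_050), sum(flag_at_070), changed)
```

In this toy setup the borderline slide scored 0.52 is "positive" under one cutoff and "negative" under the other, although nothing about the tissue has changed. The choice of cutoff, usually made upstream of the clinician, is what defines the boundary of diagnostic certainty.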

Diagnostic Sovereignty

AI enhances oncology decision-making only in jurisdictions where clinicians retain diagnostic sovereignty—the legal and institutional authority to override algorithmic outputs without professional or financial penalty—revealing that the technology’s benefit is contingent not on accuracy but on the political distribution of medical authority. In countries like Germany or France, where medical practice is governed by civil-law doctrines that enshrine physician autonomy (e.g., the German Berufsordnung), AI functions as a consultative tool; in contrast, in U.S. value-based care models, AI recommendations become de facto standards tied to reimbursement, transforming clinical judgment into a compliance mechanism. The underappreciated reality is that AI’s ethical acceptability depends not on its performance metrics but on whether the legal framework treats the physician as the final moral agent, not the system’s auditor.

Cognitive Offloading Habit

At MD Anderson Cancer Center, clinicians began deferring to AI-generated risk stratifications for leukemia patients after integrating machine learning into routine triage, especially during high-volume shifts. The mechanism is temporal pressure—when demand exceeds deliberative capacity, fast-responding algorithms become default pathways, not just aids. Over time, staff began to treat AI output as a procedural standard, reducing second reads even for borderline cases. What’s underappreciated in public discourse is that the erosion of judgment doesn’t stem from overreliance on flawed algorithms, but from the seamless substitution of deliberation with execution under efficiency regimes.