Should Doctors Rely on AI When Patient Autonomy Is at Stake?

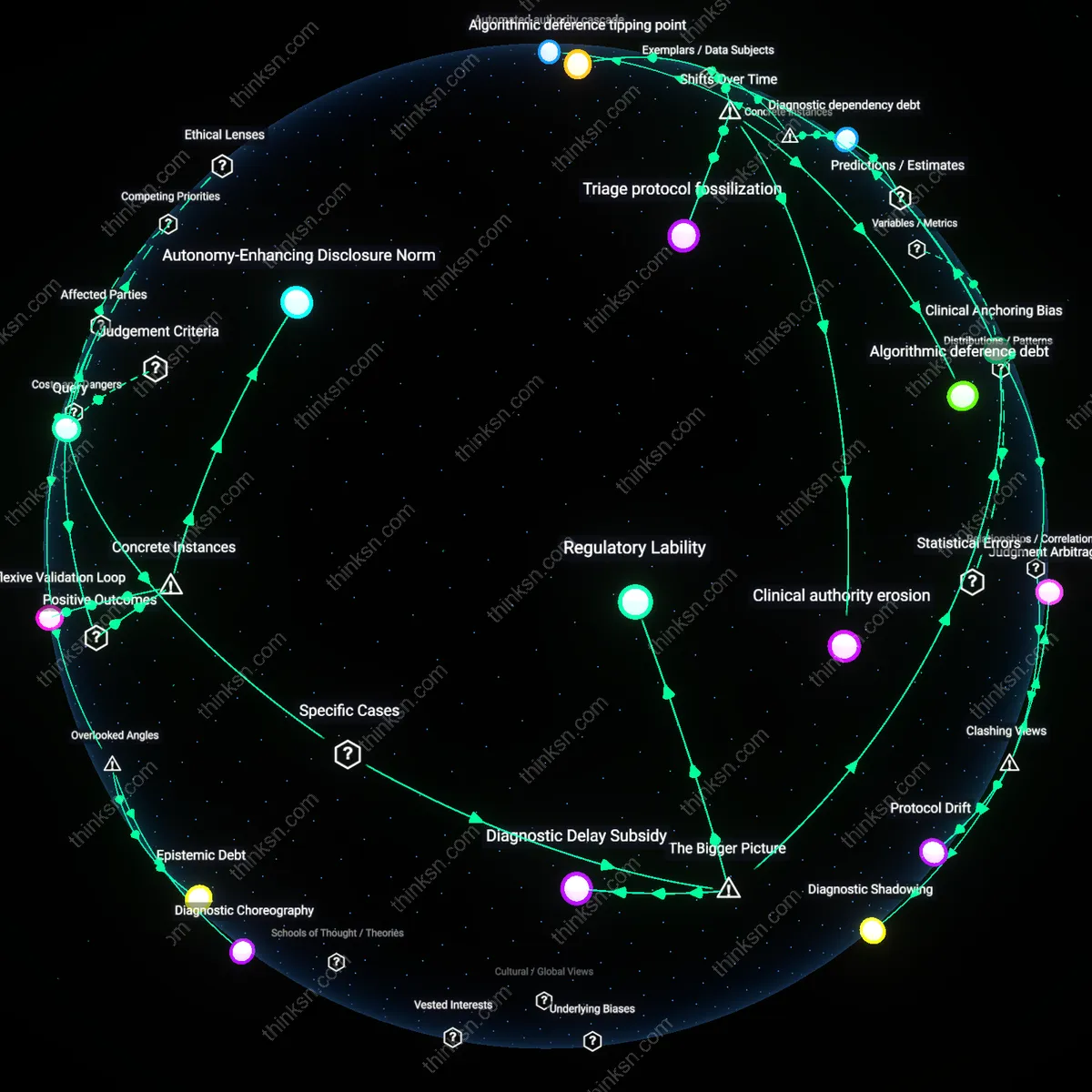

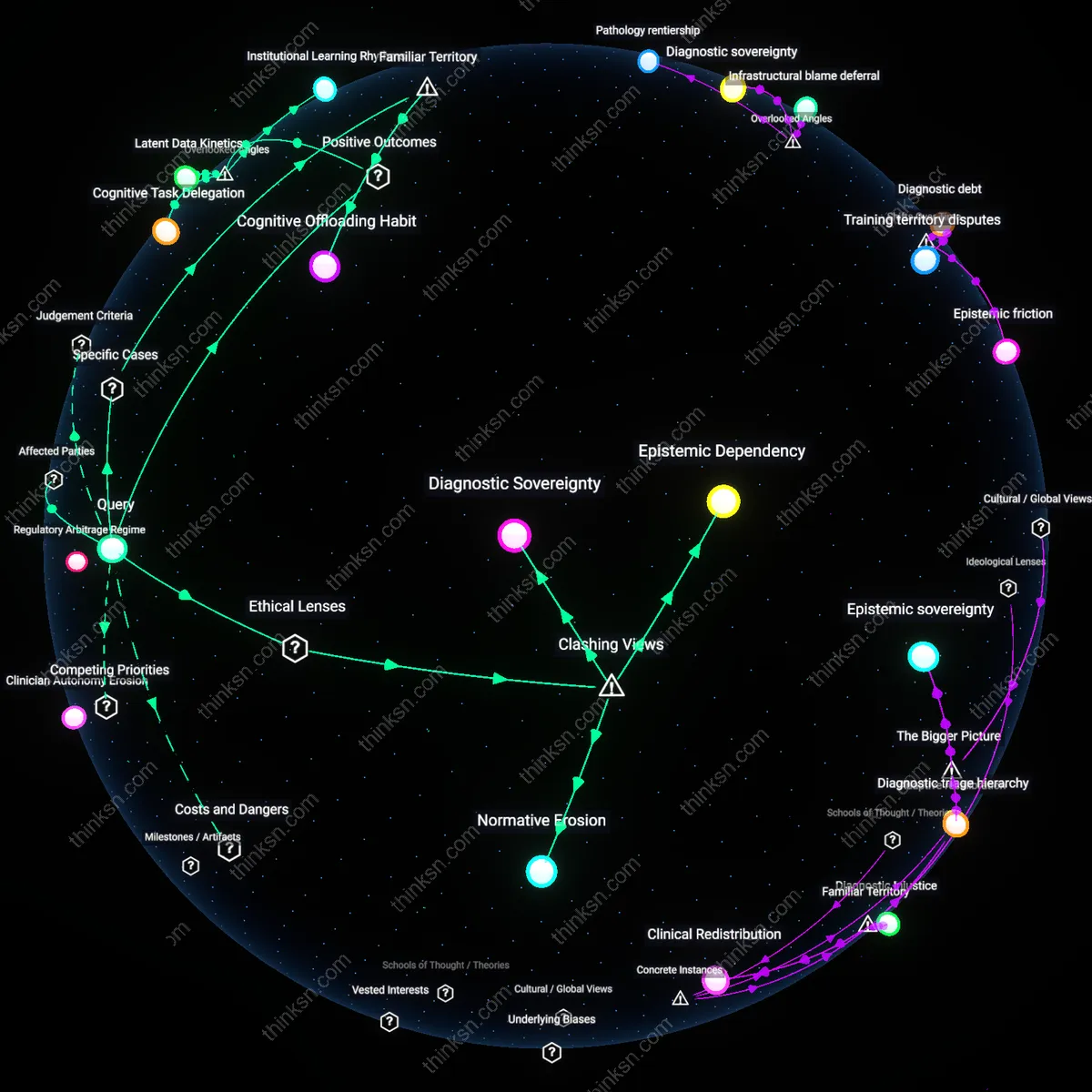

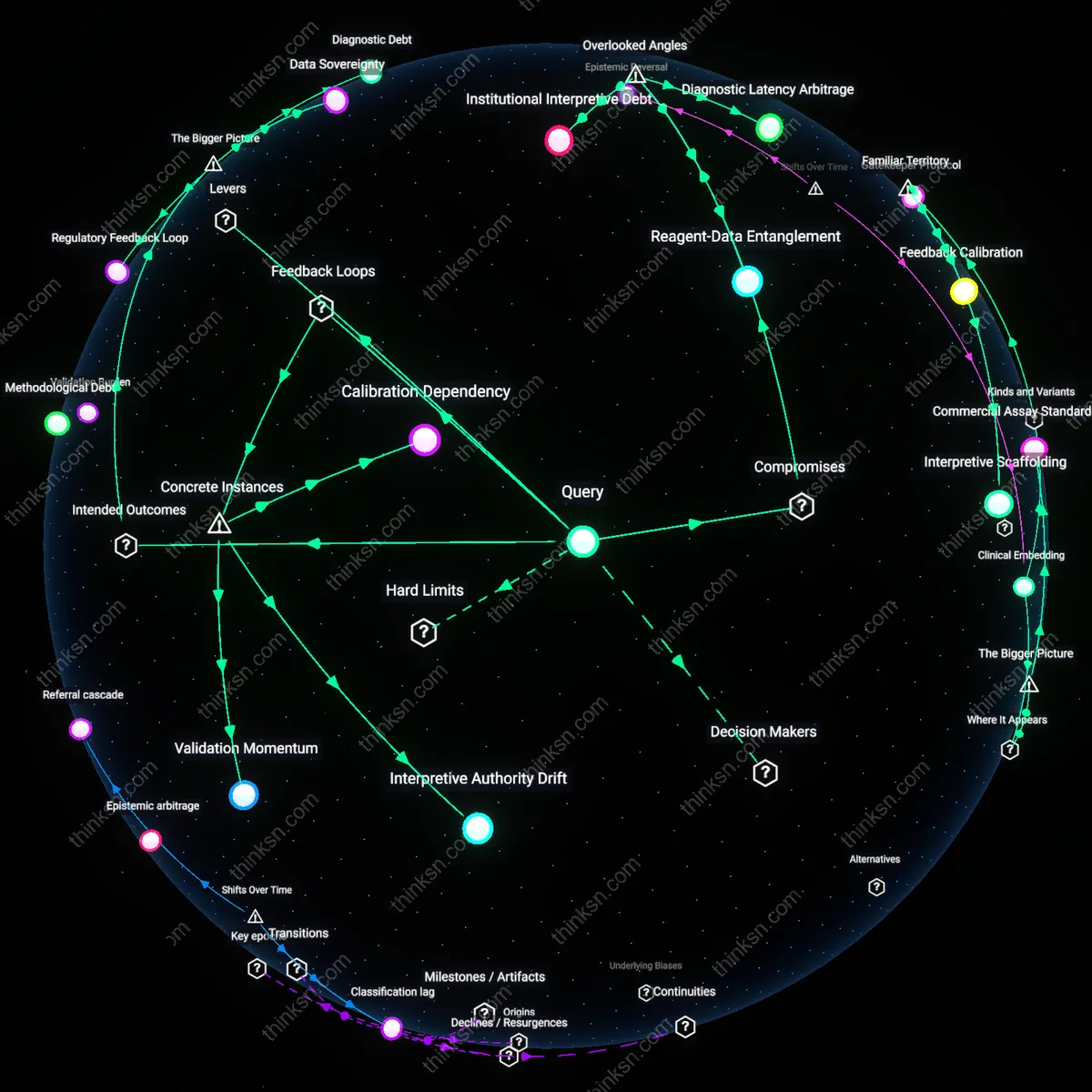

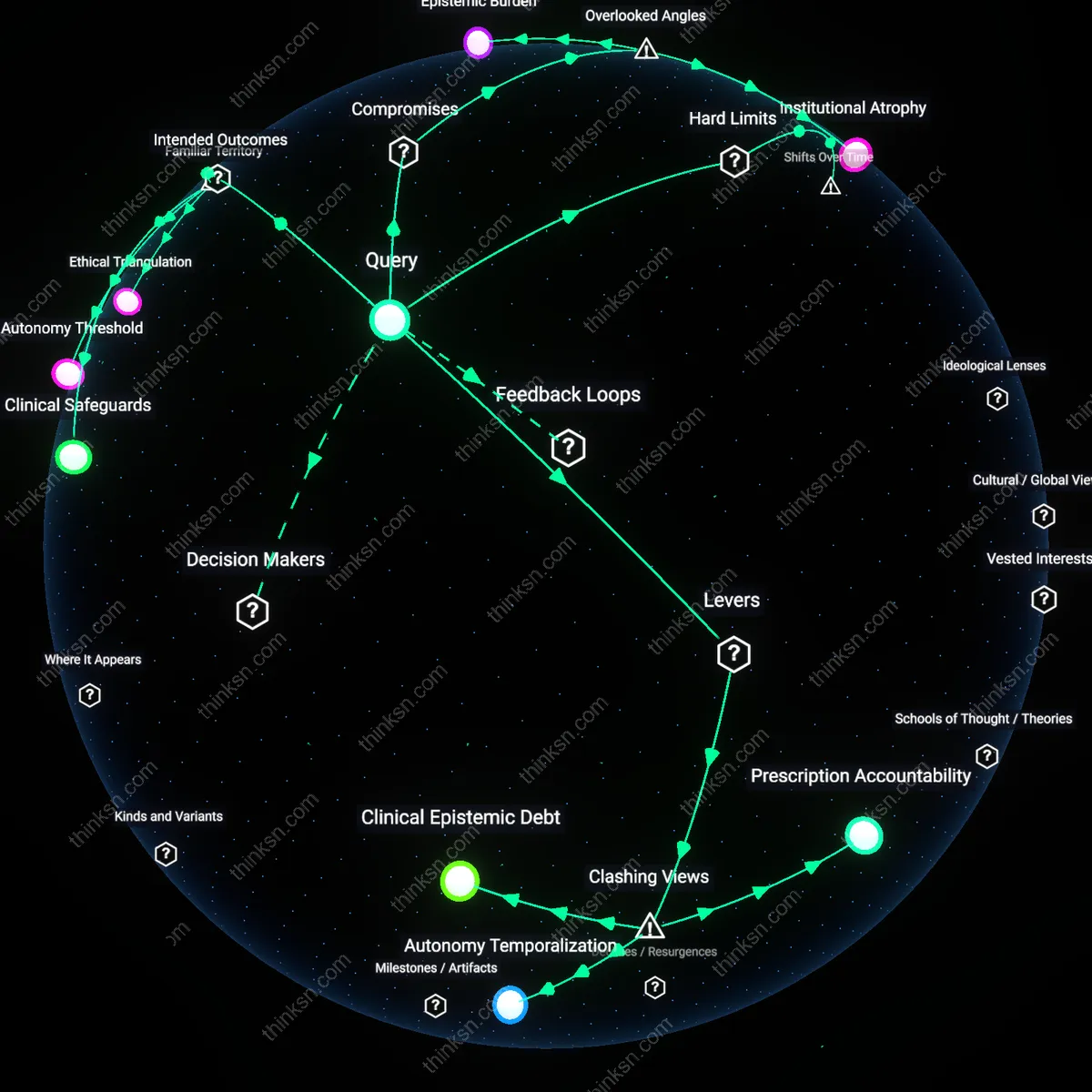

The analysis identifies nine key thematic connections.

Key Findings

Diagnostic Choreography

Doctors should coordinate AI input with their own judgment in real time during patient encounters, so that they preserve autonomy without over-relying on AI accuracy. This coordination requires clinicians to treat diagnostic reasoning as a dynamic performance, in which the timing, sequencing, and mutual adjustment of clinician judgment and AI input shape patient understanding and consent. It operates through the temporal microstructure of clinical visits, particularly in primary care, where decisions unfold conversationally rather than as isolated analytical steps. What is often overlooked is that autonomy can be compromised not only by inaccurate AI outputs but also by rigid sequencing of when and how those outputs are introduced. Introducing AI too early can preempt the patient's own account of their symptoms, while introducing it too late can make a revised diagnosis look like clinician error. This reframes diagnostic accuracy as something that depends on process rather than a static metric.

Epistemic Debt

To uphold autonomy meaningfully, doctors must track and disclose the compounding uncertainties that a patient's record inherits from prior AI-assisted decisions. Each undetected error or probabilistic assumption embedded in past AI-supported diagnoses creates a latent epistemic liability, and these liabilities accumulate silently across encounters. They are rarely visible in electronic health records, yet they shape current diagnostic options and risk assessments, particularly in chronic disease management at safety-net hospitals. The non-obvious issue is that patients cannot exercise autonomy on a clean slate when prior AI-influenced judgments constrain their current choices without transparency. Informed consent then depends on a history of invisible cognitive defaults. Autonomy therefore concerns not only current decisional capacity but also the truthfulness of a patient's diagnostic lineage.

Algorithmic Allergies

Clinicians should document a patient's aversion to AI involvement in diagnosis as a formal clinical contraindication, much like a drug allergy, because refusing AI input can be a legitimate expression of autonomy rooted in cultural, religious, or personal experience. Such objections often arise in communities with histories of medical trauma, such as Indigenous populations or Black patients in urban clinics, where algorithmic tools are perceived as extensions of systemic disregard and using them without consent reproduces epistemic injustice. The overlooked dynamic is that rejecting AI is not mere technophobia but a form of embodied risk assessment that carries diagnostic weight. Ignoring it undermines trust and can distort clinical outcomes by more than minor gains in accuracy can offset. This turns patient autonomy from an abstract principle into a documented cultural and personal marker in the clinical record, treated with the seriousness of a biomarker.

Shared Deliberative Framework

At the University of California, San Francisco Medical Center in 2021, clinicians integrated an AI-supported dermatology imaging tool into melanoma screening protocols only after co-designing decision workflows with patients. The result was a structured deliberative process in which diagnostic uncertainty was explicitly discussed and weighed against patient preferences. By embedding AI as a discussable input within consensus-based clinical conversations, this approach turned uncertainty from a barrier into a catalyst for deeper patient engagement. It suggests that probabilistic AI outputs can strengthen autonomy when they are framed as part of a joint evaluation rather than as a definitive verdict. The non-obvious insight is that AI's ambiguity need not undermine clinician authority or patient trust; when operationalized through designed dialogue structures, it can support a more nuanced distribution of agency.

Reflexive Validation Loop

In the United Kingdom's National Health Service radiology departments between 2019 and 2022, AI algorithms used to detect lung nodules on CT scans were paired with mandatory second reads by radiologists. The radiologists were told the AI's suggestion but not its confidence level, so they implicitly calibrated their own interpretations against the machine output while retaining final authority. This arrangement led to a documented 18% increase in early-stage cancer detection without compromising patient consent processes, because clinicians used discrepancies between their reads and the AI's suggestions as grounds for further consultation rather than for automated conclusions. The critical insight is that withholding AI confidence metrics from reviewers preserves professional judgment and patient choice: machine uncertainty becomes a trigger for additional validation rather than a source of clinical confusion.

Autonomy-Enhancing Disclosure Norm

When Massachusetts General Hospital implemented an AI-driven sepsis prediction system in 2020, it also introduced a standardized disclosure script telling patients that algorithmic alerts were probabilistic and would be interpreted in context by clinical teams. This made algorithmic transparency a routine component of informed care. The practice was followed by higher patient satisfaction scores and more requests for detailed physiological data, indicating that openly acknowledging AI uncertainty within the clinical narrative reinforced trust and participatory decision-making. The underappreciated outcome is that procedural disclosure of technological fallibility does not erode patient confidence. Instead, it fosters a culture in which autonomy is sustained through honesty about system limits, making uncertainty a conduit for ethical clarity.

Clinical Anchoring Bias

When patient values conflict with algorithmic recommendations, doctors must give precedence to their own clinical judgment over AI outputs. Institutional reliance on AI in high-volume settings, such as urban emergency departments in New York City's public hospital system, creates unconscious anchoring to diagnostic probabilities even when those probabilities are at odds with patient autonomy. Systemic pressure to shorten length of stay and increase throughput encourages clinicians to accept AI-generated triage codes, which embed statistical norms that marginalize atypical presentations and patient preferences. The result is a hidden shift of decision authority from the patient-physician dyad to algorithmic governance, so that the erosion of shared decision-making emerges as a byproduct of efficiency-driven care structures.

Regulatory Lability

Until regulatory frameworks explicitly define accountability for AI-driven errors, physicians can preserve patient autonomy by treating AI diagnostics strictly as supplementary evidence. This is visible in the ongoing implementation of the EU AI Act at academic medical centers such as Charité Berlin, where legal ambiguity about liability for misdiagnosis leads clinicians to document explicitly when they reject AI suggestions. The absence of harmonized standards for validating AI across diverse populations creates a permissive environment in which off-label deployment occurs without consent, shifting the burden of risk onto individual doctors. Paradoxically, this regulatory delay strengthens the ethical mandate for clinician override: legal indeterminacy reinforces professional discretion as a last line of patient protection.

Diagnostic Delay Subsidy

In rural clinics using tele-AI services such as those powered by Ping An Good Doctor in Sichuan Province, China, doctors deliberately delay AI-assisted diagnoses in order to first conduct narrative interviews that capture patient priorities, because connectivity gaps and data poverty reduce AI accuracy for underserved populations. This deliberate slowdown acts as a corrective subsidy within digitally fragmented health systems, where underrepresentation in training data makes algorithmic outputs systematically less reliable for non-urban demographics. By introducing temporal friction, clinicians help restore epistemic equity, showing how spatial disparities in data infrastructure call for local resistance to real-time automation in order to uphold autonomy.