AI Transparency in MedTech: Innovation vs. Public Safety?

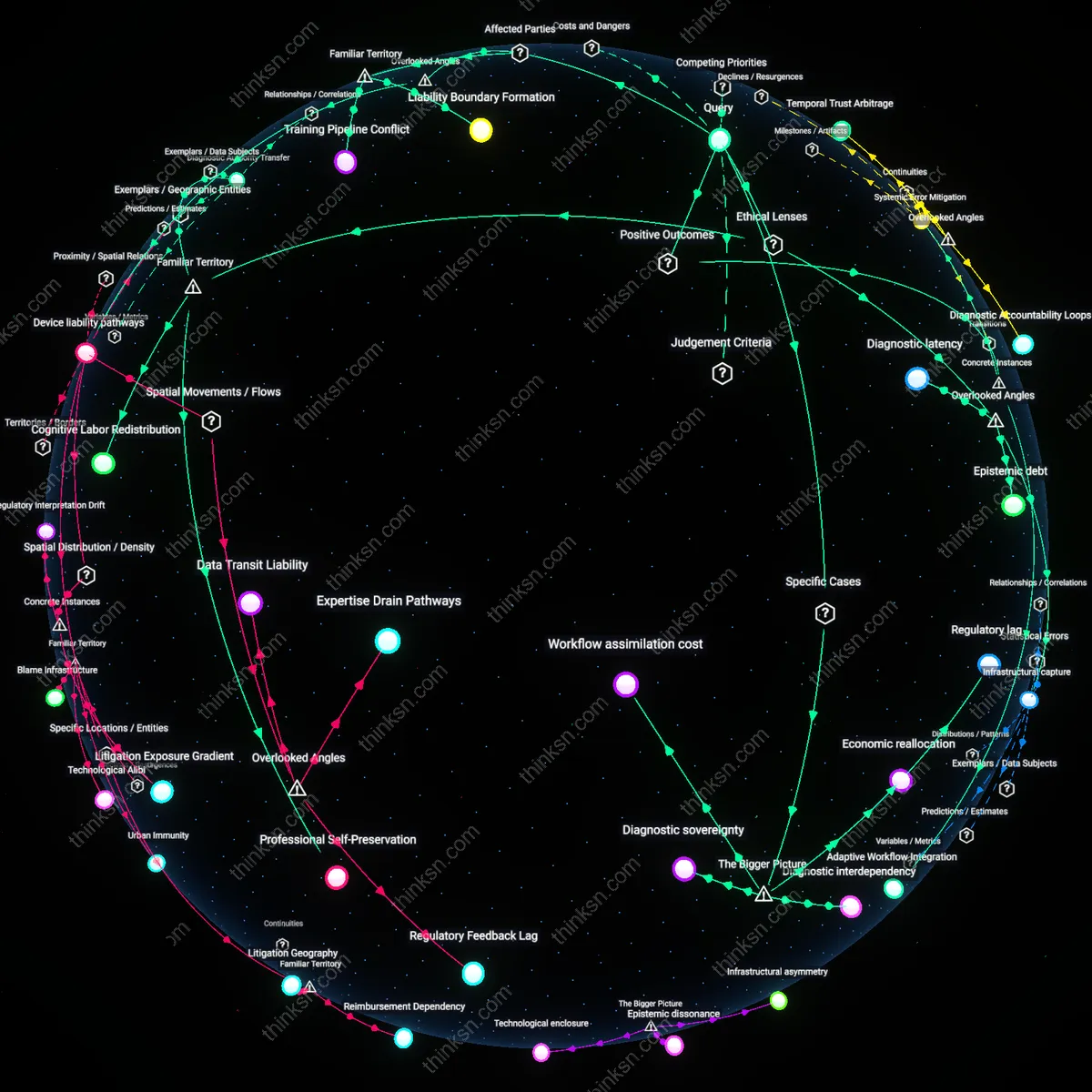

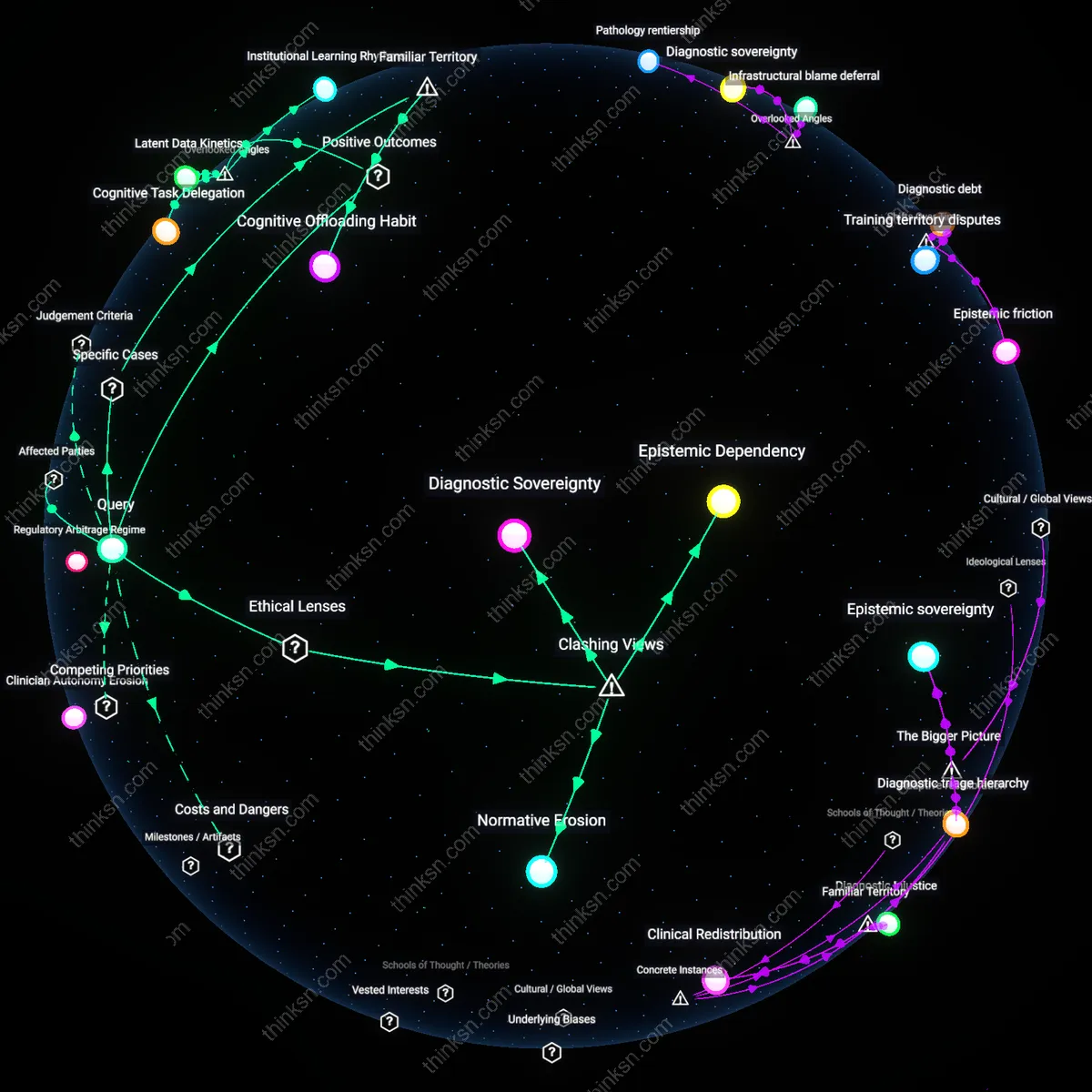

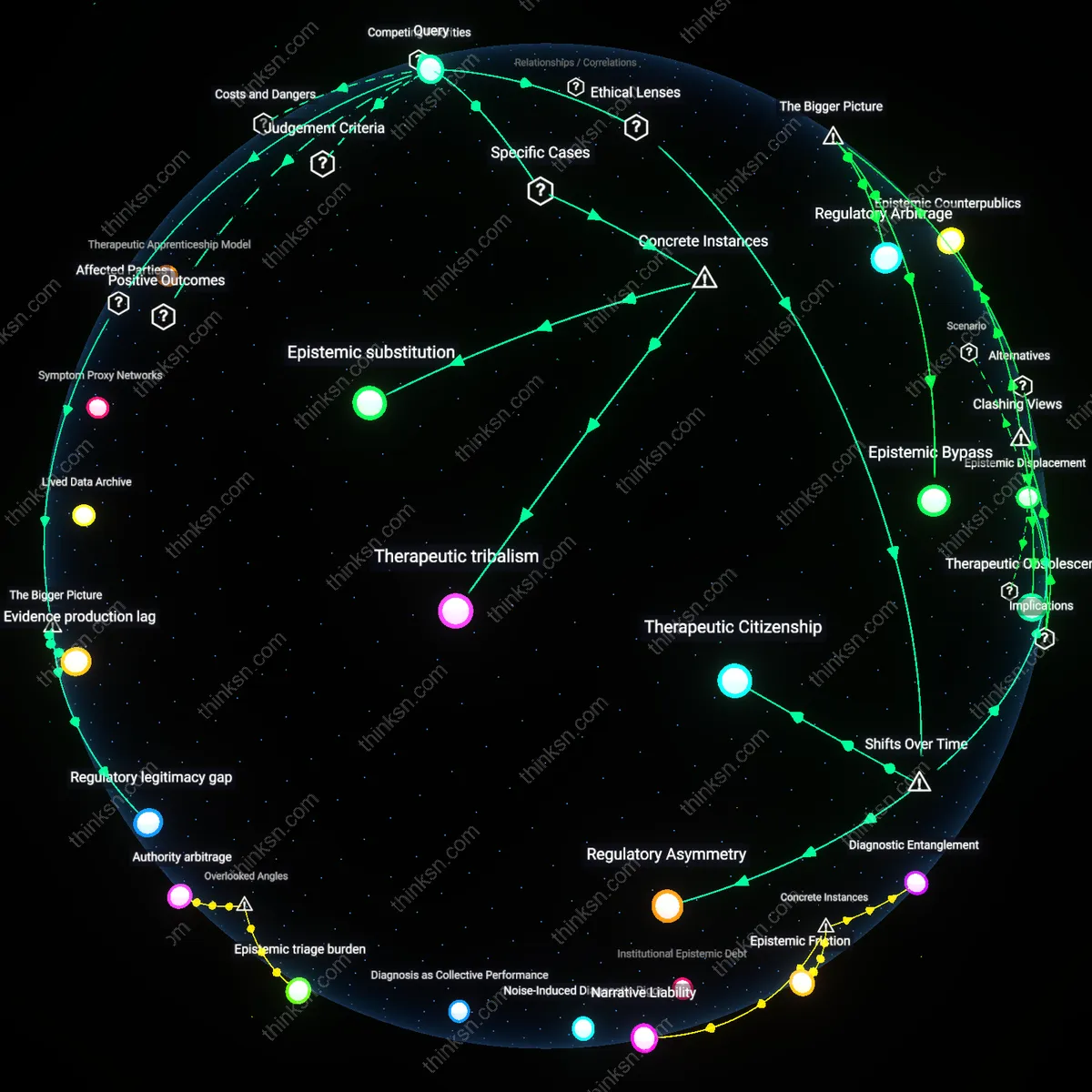

Analysis reveals 10 key thematic connections.

Key Findings

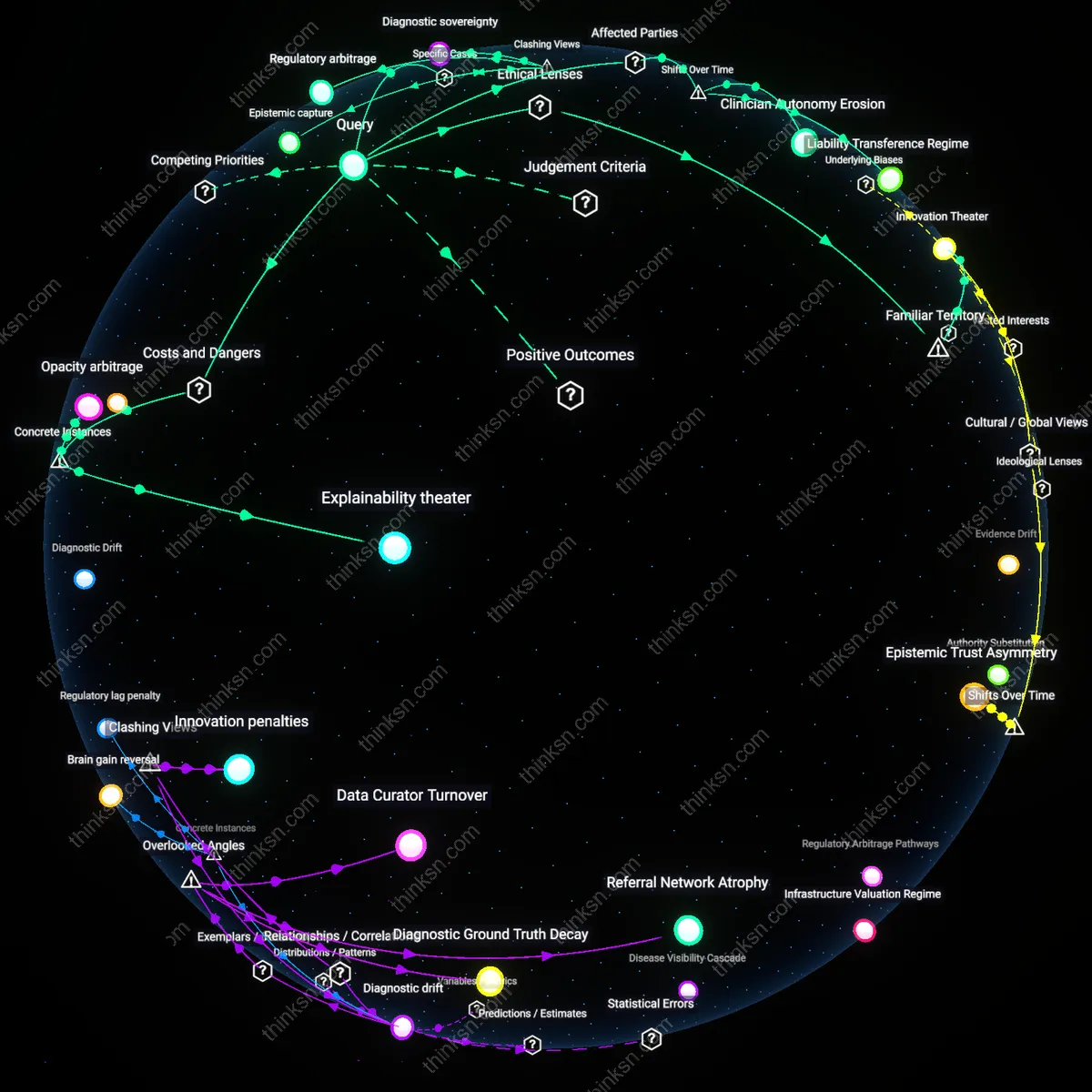

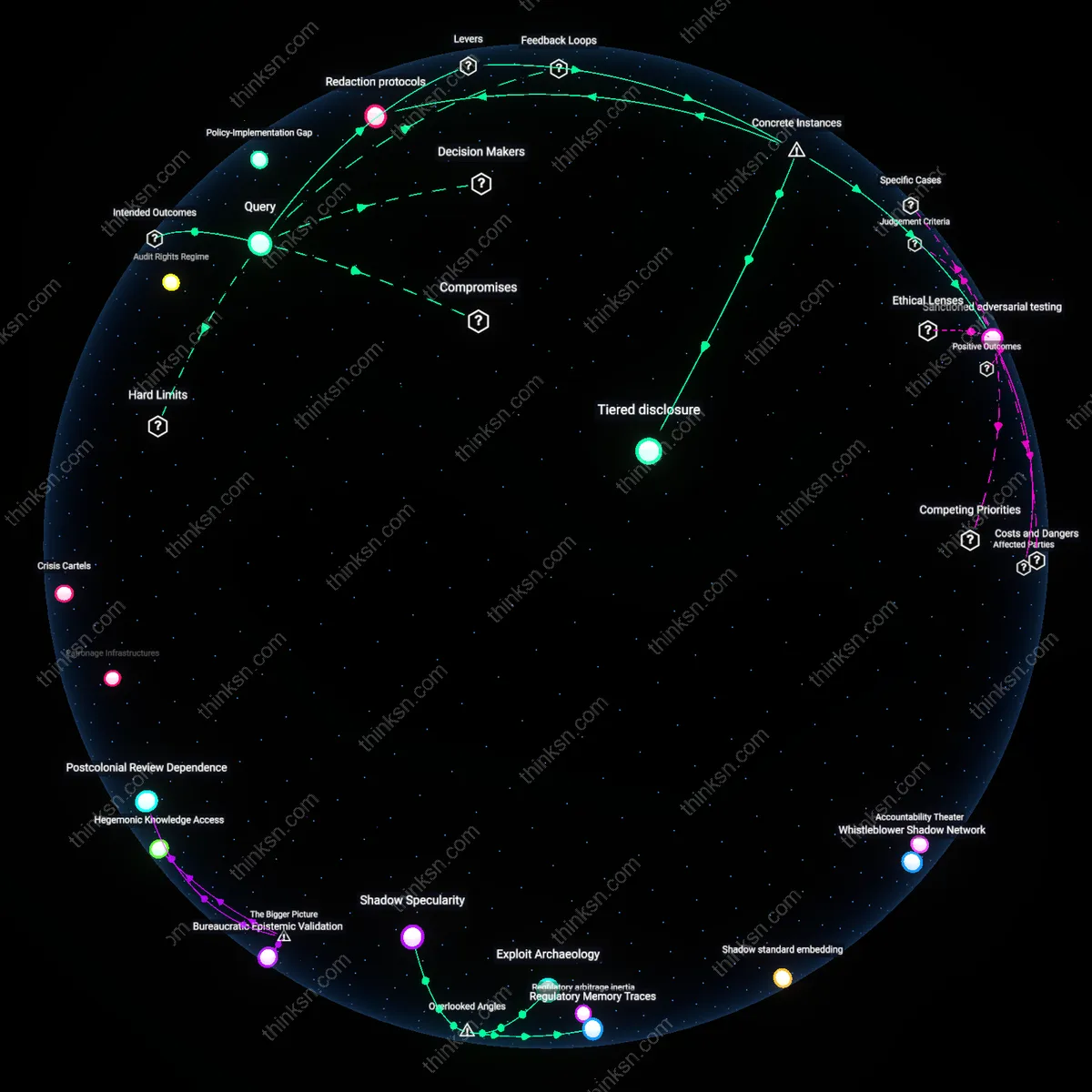

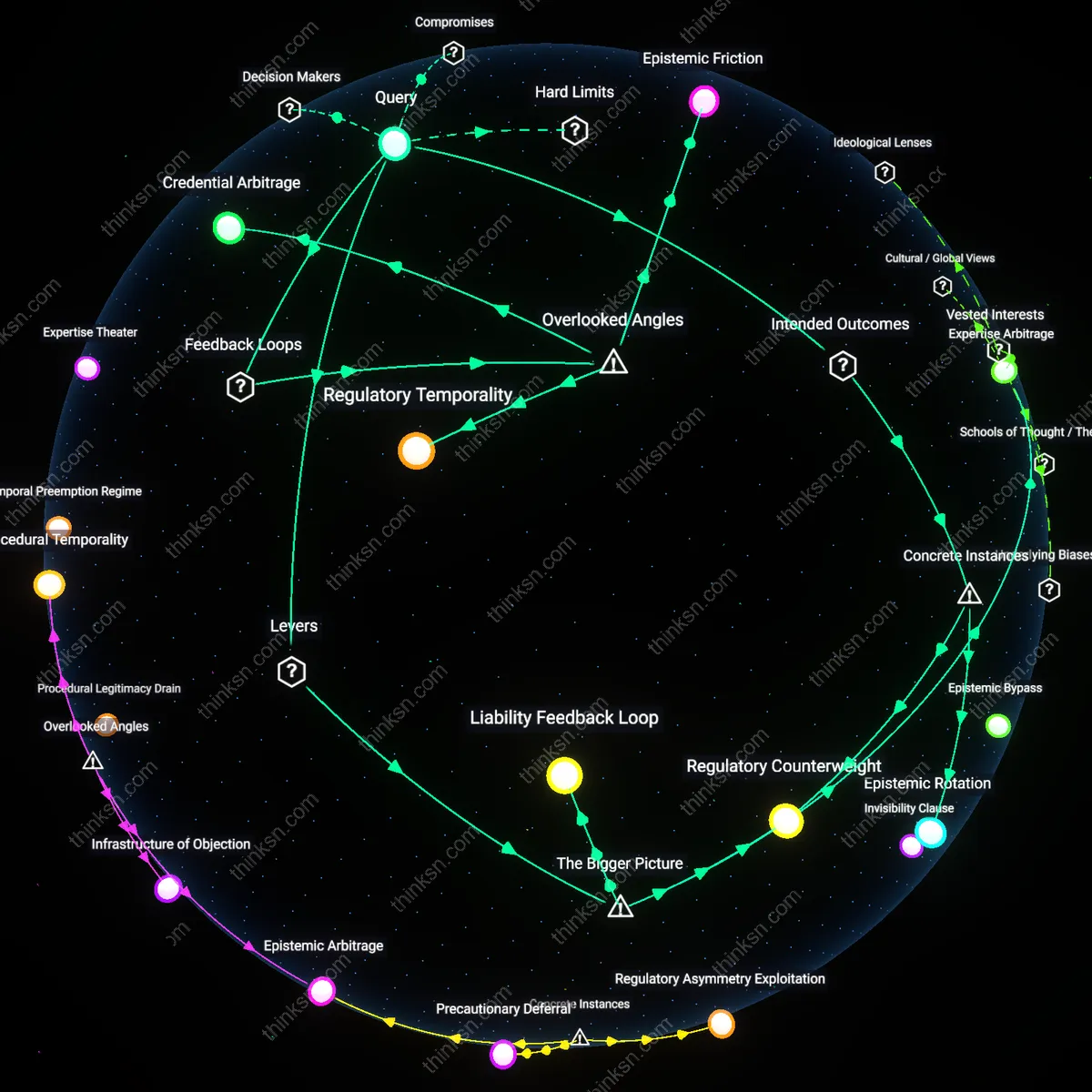

Clinician Autonomy Erosion

Mandating AI transparency erodes clinician autonomy by forcing physicians to act as intermediaries for algorithmic logic they neither designed nor fully understand. The shift accelerated after the FDA's 2018 authorization of IDx-DR, the first autonomous diagnostic AI, which reframed medical judgment as secondary to machine accountability. This repositioning transfers authoritative decision-making from bedside practitioners to regulatory-compliant engineers. It marks a historical transition from physician-led diagnosis to system-validated action, in which trust is no longer cultivated interpersonally but audited technocratically. Innovation discourse rarely acknowledges this dynamic, because it presumes that transparency inherently restores agency.

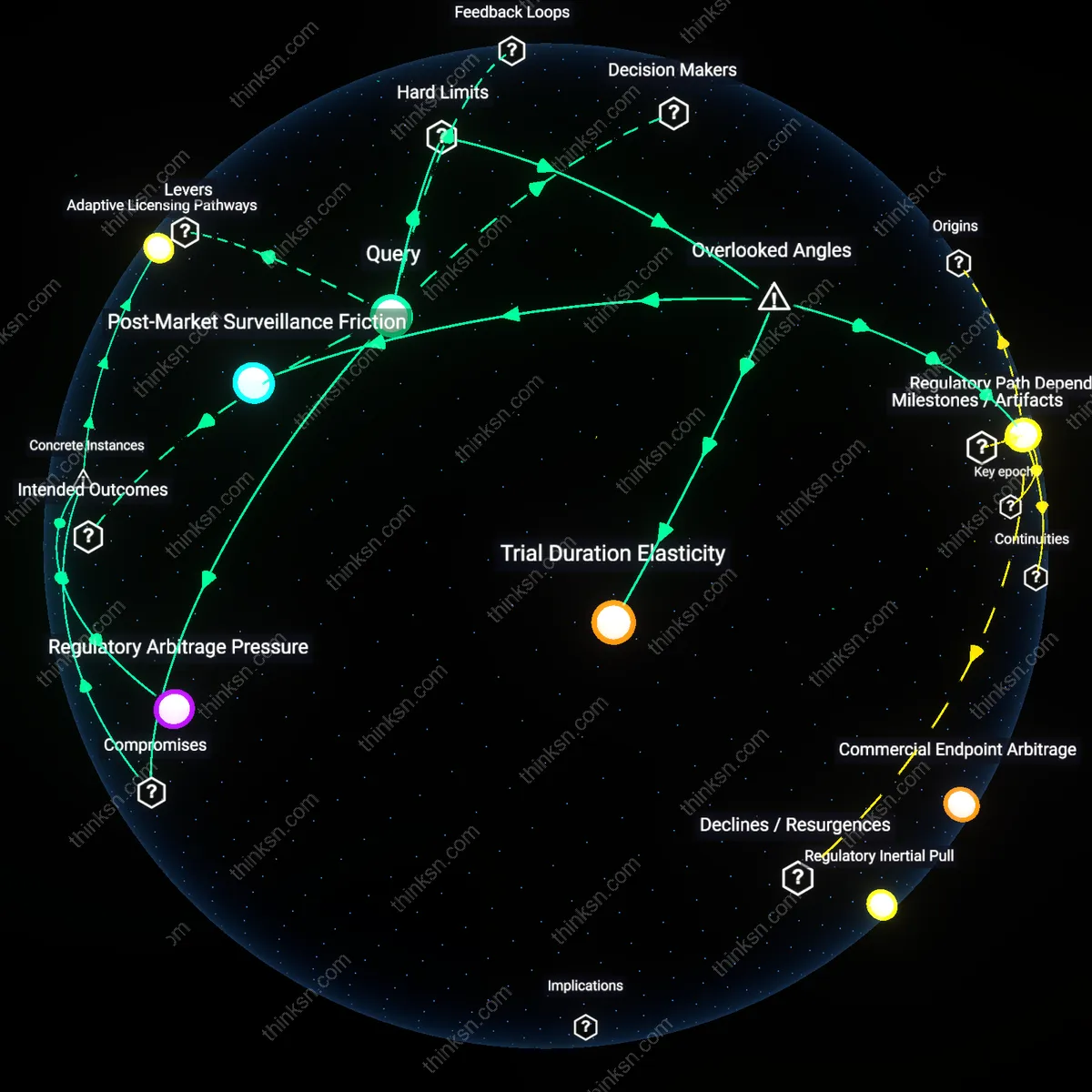

Regulatory Lag Fabric

The conflict between proprietary AI and public safety emerged distinctly after 2018, when deep learning models entered clinical validation en masse. They exposed a regulatory apparatus designed for static devices rather than adaptive algorithms, producing a structural delay in oversight capacity. This temporal rupture, between the velocity of proprietary model iteration at firms like PathAI and the glacial pace of FDA premarket review, shows that transparency mandates are undermined not by corporate resistance alone but by an institutional timeline mismatch: a validated model can be obsolete before it is approved. That mismatch is an underappreciated mechanism of systemic risk.

Liability Transference Regime

Following the risk-tiered framework set out in the EU AI Act, first proposed in 2021, hospitals adopting high-stakes AI now function as de facto liability absorbers: they assume legal and ethical risk when vendors satisfy transparency requirements only superficially, marking a shift from developer-centric to institution-centric accountability. This redistribution of risk, in which public safety mandates are met on paper but not in clinical effect, turns hospitals into operational shields for proprietary systems. The result is an emergent regime in which compliance paperwork displaces genuine explainability, a transition obscured by a policy focus on algorithmic disclosure rather than consequence management.

Innovation Flight

Mandating AI transparency in high-stakes medical tools drives proprietary developers to relocate research to less regulated jurisdictions, as seen when DeepMind Health moved its kidney injury prediction project from the UK’s NHS to private partnerships in Singapore after facing intense public scrutiny and data governance constraints—demonstrating how transparency requirements can trigger strategic withdrawal from public systems, especially where intellectual property exposure threatens competitive advantage. This dynamic undermines domestic access to cutting-edge diagnostics while weakening oversight precisely where it's needed most, creating a feedback loop of privatization and opacity that benefits neither patients nor regulators. The underappreciated mechanism here is not just non-compliance, but the *geographic rerouting* of innovation itself in response to disclosure pressures.

Explainability Theater

Requiring AI transparency in medicine can devolve into performative disclosure rather than meaningful insight. IBM Watson for Oncology’s deployment in hospitals across India and Germany is an example: clinicians were given algorithmic confidence scores and keyword rationales that appeared transparent but did not help them anticipate when a treatment recommendation was wrong. The system’s internal logic remained inscrutable, yet the *illusion* of explainability pacified regulatory expectations and institutional review boards, leading to sustained use despite documented harms such as unsafe radiotherapy recommendations. Transparency mandates that are poorly enforced or technically shallow thus generate a theater of accountability, one that preserves proprietary control while transferring liability to frontline providers who lack the tools or authority to interrogate the system thoroughly.

Opacity Arbitrage

Medical AI firms exploit uneven regulatory standards between countries to shield proprietary models from scrutiny, illustrated by the case of PathAI’s collaboration with the U.S. Department of Veterans Affairs, where the vendor agreed to transparency protocols for model validation but simultaneously marketed a closed-source version in South Africa’s public clinics with no audit rights or documentation transfer—enabling the vendor to arbitrage differences in oversight capacity and legal enforceability. This dual-track deployment leverages the appearance of compliance in high-income settings to legitimize non-transparent use elsewhere, amplifying global health inequities and exposing vulnerable populations to unmonitored model failures. The underrecognized risk is not simply delayed transparency, but the *strategic segmentation* of opacity as a market strategy.

Innovation Theater

Mandating AI transparency in high-stakes medical tools threatens corporate control over proprietary algorithms, triggering strategic obfuscation disguised as compliance. Firms retain trade secrets by reducing transparency to superficial disclosures, such as releasing summaries instead of code or training data, and by relying on U.S. trade secret protections such as the Defend Trade Secrets Act. This tactic exploits the public’s intuitive trust in regulatory stamps of approval, even when the underlying mechanisms lack enforcement teeth, so that the appearance of accountability substitutes for substantive explainability in healthcare AI markets.

Regulatory Arbitrage

Mandating AI transparency in high-stakes medical tools does not inherently conflict with proprietary innovation because firms like Caption Health have circumvented full disclosure by segmenting algorithmic functions across jurisdictions, licensing only post-processed outputs in regulated markets while keeping core training pipelines in less scrutinized domains. This strategic partitioning allows companies to meet minimal explainability thresholds in places like the US FDA’s 510(k) pathway without exposing foundational models, revealing that regulatory fragmentation—rather than technical incompatibility—enables evasion of transparency. The non-obvious insight is that the tension is not between safety and innovation, but between harmonized oversight and jurisdictional loophole exploitation, which firms actively engineer into their deployment architectures.

Epistemic Capture

The push for transparency in clinical AI tools actually reinforces proprietary control by conditioning public trust on vendor-specific explanations, as seen in the UK’s partnership with Babylon Health, where accountability mechanisms were designed using Babylon’s own interpretability metrics, effectively making regulators dependent on the innovator to define what ‘transparency’ means. This inversion, in which the demand for explainability elevates the vendor to epistemic authority, undermines public oversight rather than enabling it, exposing a hidden mechanism by which compliance rituals can entrench corporate power. The uncomfortable implication is that transparency mandates, when poorly operationalized, may not check power but instead institutionalize reliance on proprietary knowledge regimes.

Diagnostic Sovereignty

In India’s publicly funded AI tuberculosis screening rollout using Qure.ai’s qXR system, the state—not the public—became the arbiter of algorithmic validity by accepting black-box performance without demanding model disclosure, prioritizing rapid deployment over explainability, thereby bypassing the innovation-transparency dilemma entirely. Here, public interest was redefined not as patient access to reasoning but as statistical reach, enabling the government to claim care equity while insulating proprietary systems from scrutiny. This reframes the conflict not as one between innovation and safety, but as a state-mediated forfeiture of explainability in the name of scalable intervention, revealing that the ‘public right’ is often selectively interpreted by sovereign actors to justify technological pragmatism.