Does AI in Hospitals Shorten Wait Times at the Cost of Human Oversight?

Analysis reveals 6 key thematic connections.

Key Findings

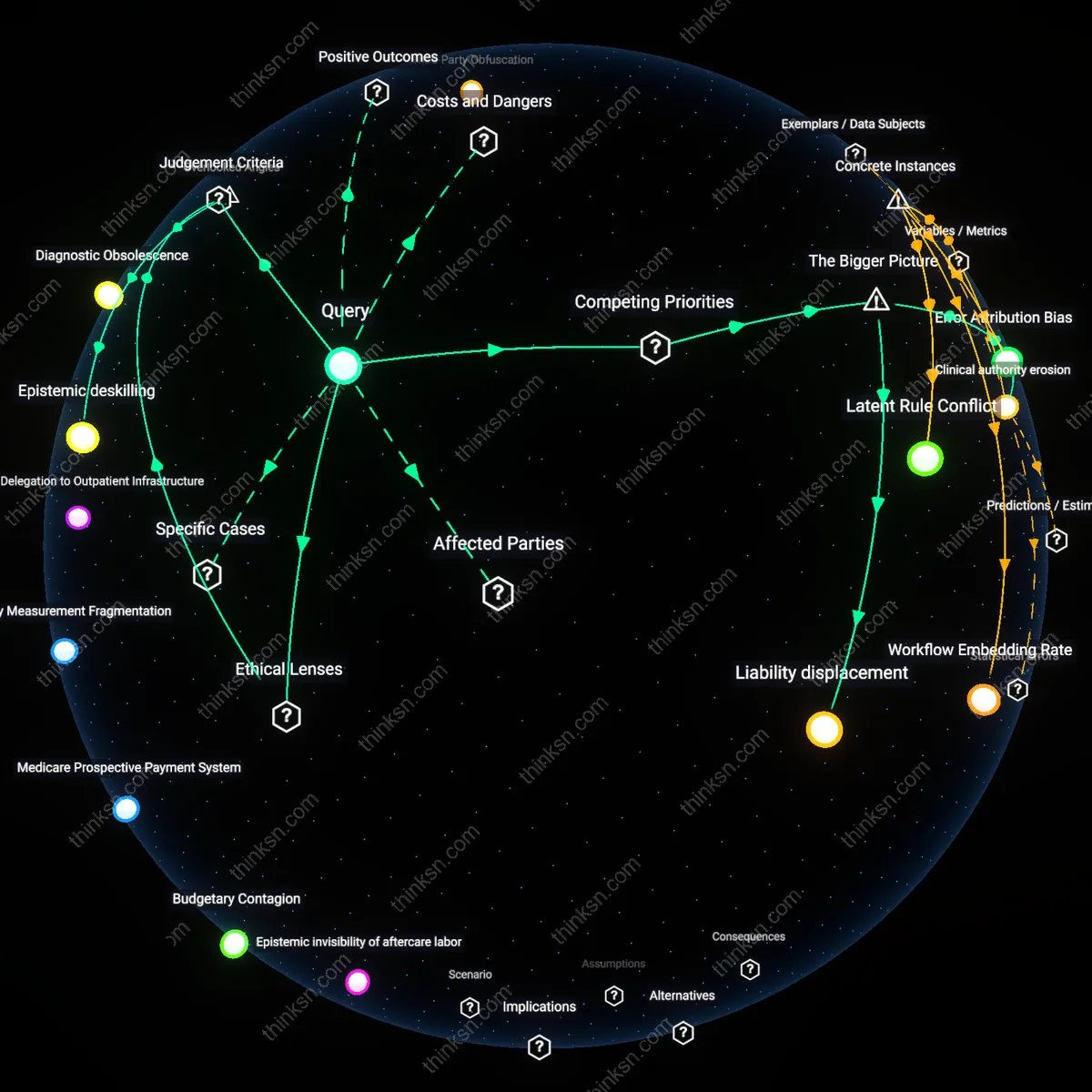

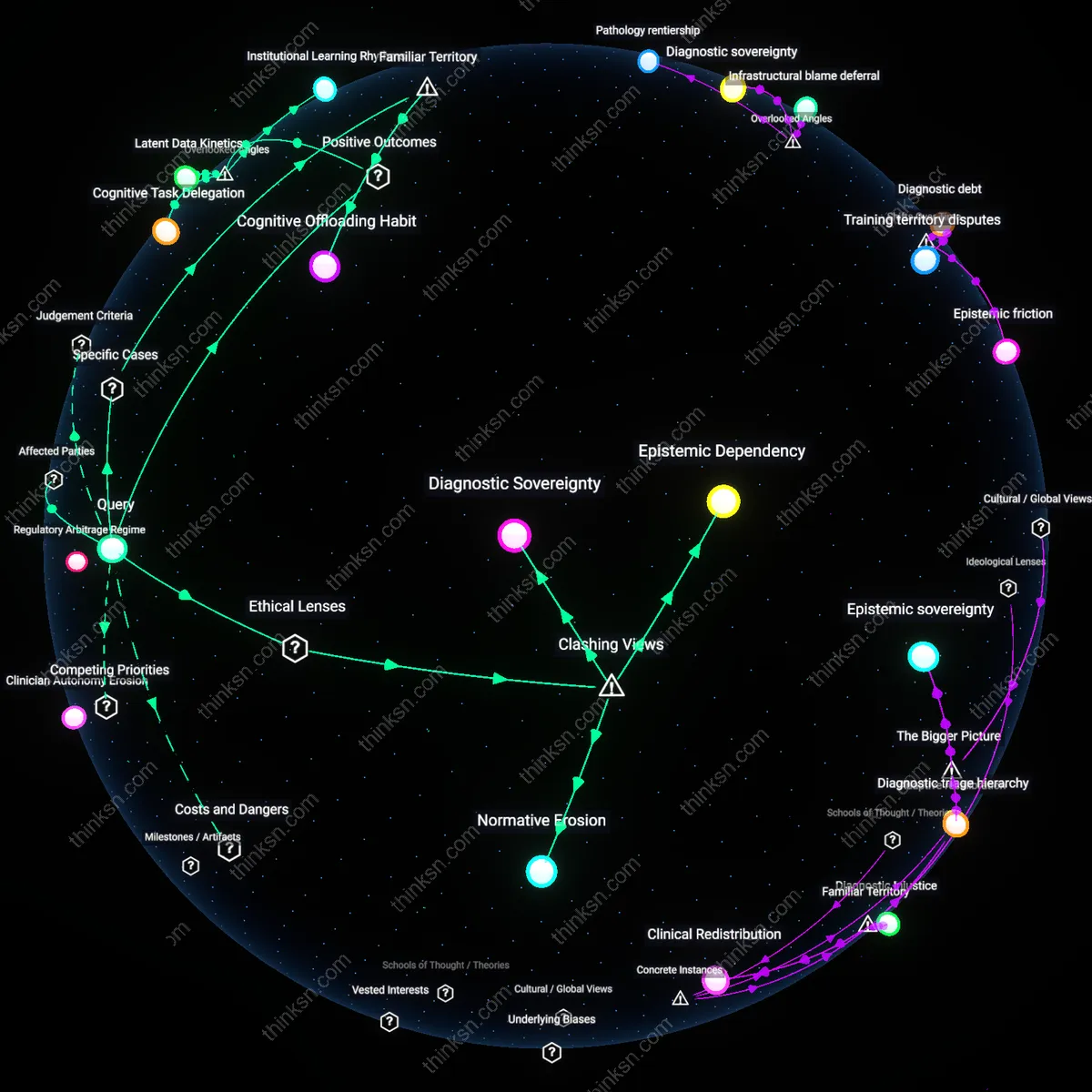

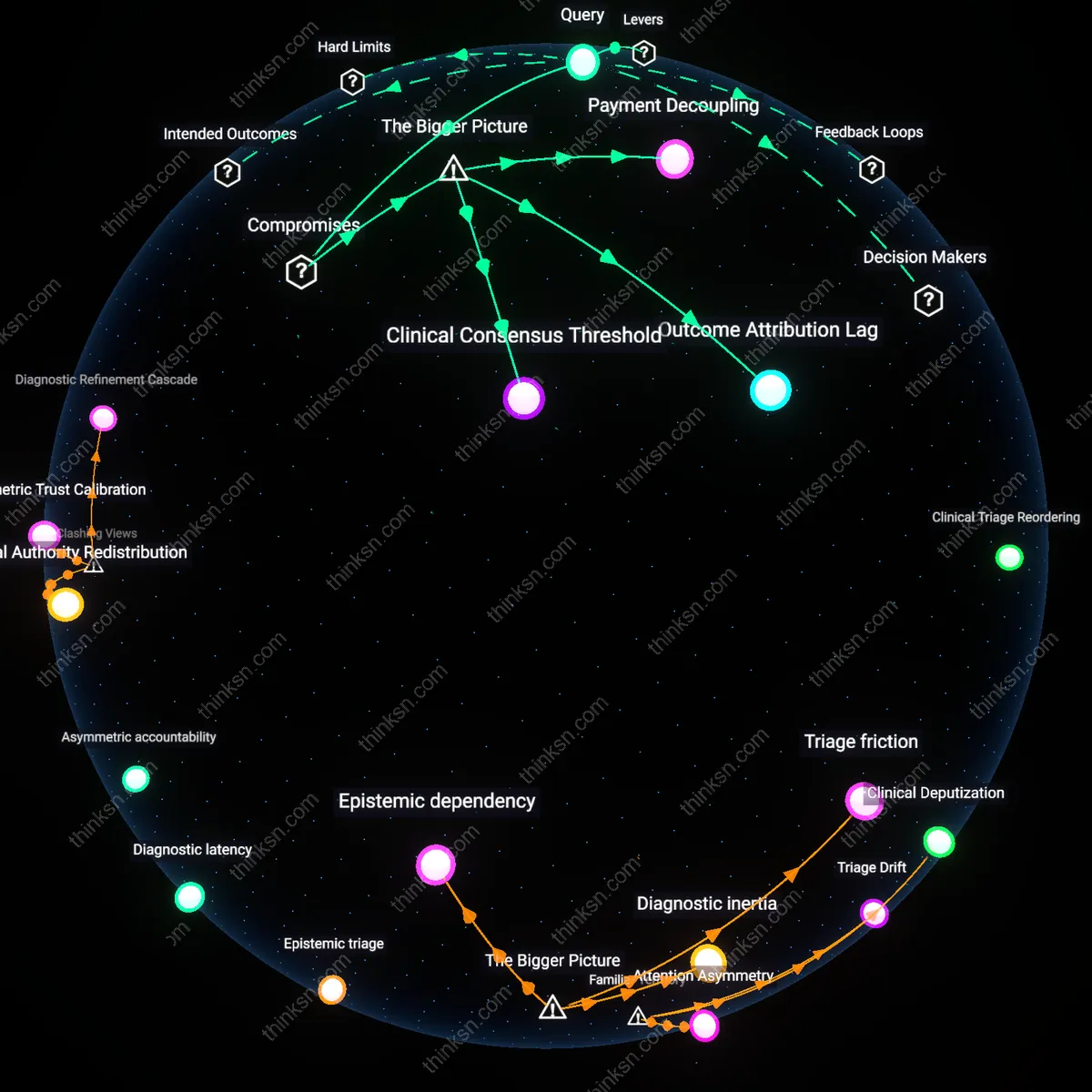

Diagnostic Obsolescence

The benefit of reduced wait times from AI in hospitals does not outweigh the ethical concern of reduced human oversight, because rapid AI triage in emergency departments (for example, stroke assessment at urban Level I trauma centers) accelerates diagnostic pathways while eroding clinicians' familiarity with how disease progresses over time. The result is growing reliance on systems that degrade physician judgment through deskilling. The mechanism is automation-induced atrophy of clinical intuition: consistent deferral to algorithmic prioritization weakens pattern recognition in complex presentations, a dynamic already observed in radiology workflows that use AI pre-reading. Contrary to the efficiency-centered belief that speed enhances care, the non-obvious consequence is that faster throughput undermines the very expertise needed to manage AI errors in critical cases. Acceleration can therefore induce systemic diagnostic fragility.

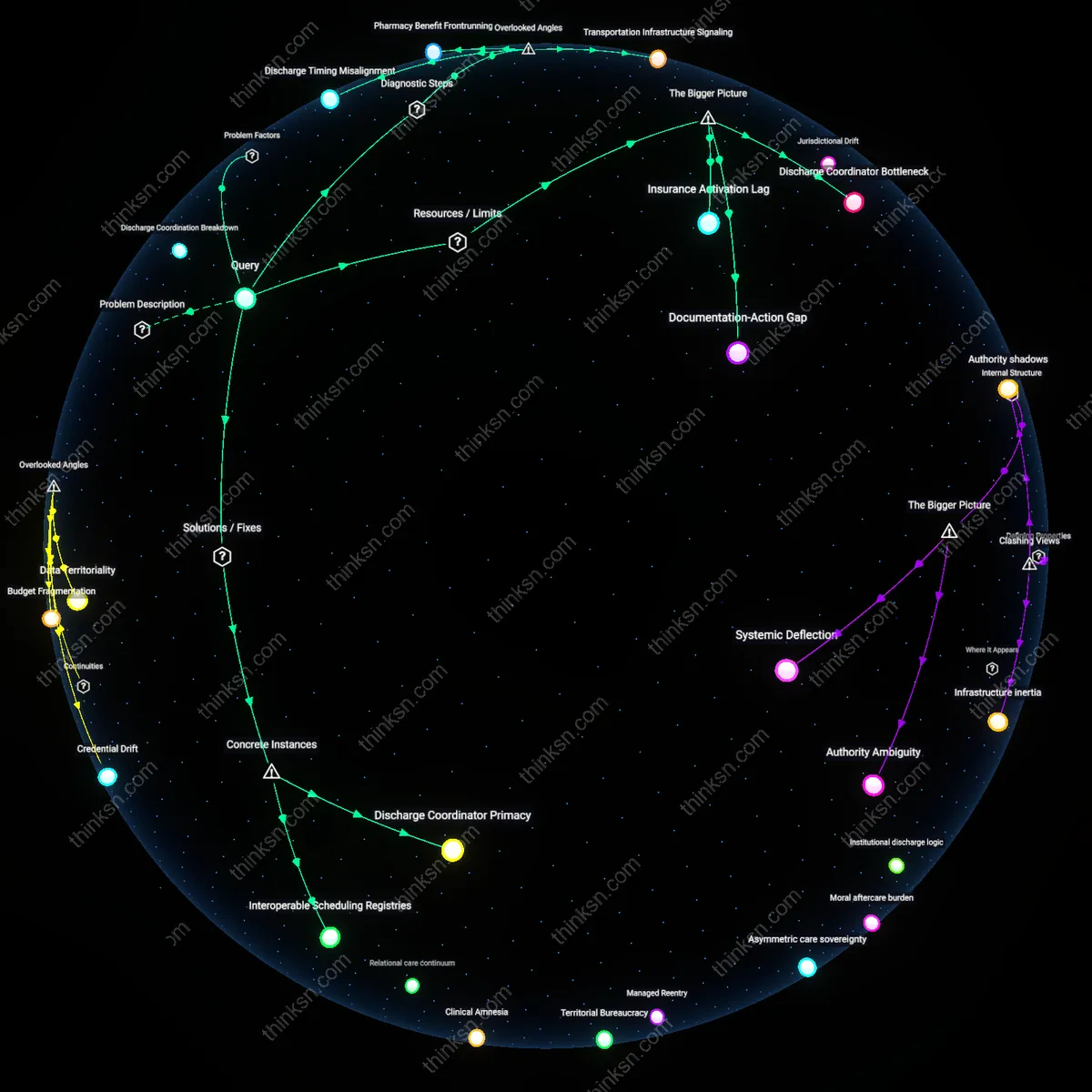

Temporal Harm Displacement

The benefit of reduced wait times from AI in hospitals does not outweigh the ethical concern of reduced human oversight, because AI-driven discharge protocols in metropolitan teaching hospitals shift harm from immediate waiting-room mortality to delayed post-discharge crises. Predictive models that optimize bed turnover while under-weighting social determinants of readmission illustrate the pattern. The shift occurs through a temporal misalignment in accountability systems: hospital performance metrics reward shorter stays but fail to capture patient destabilization in the weeks after release, particularly among elderly Medicaid recipients. Contrary to the dominant framing of wait time as the primary moral metric, the non-obvious effect is that eliminating queue-based suffering redistributes risk across time rather than removing it. Efficiency gains can thus mask intertemporal trade-offs in harm exposure.

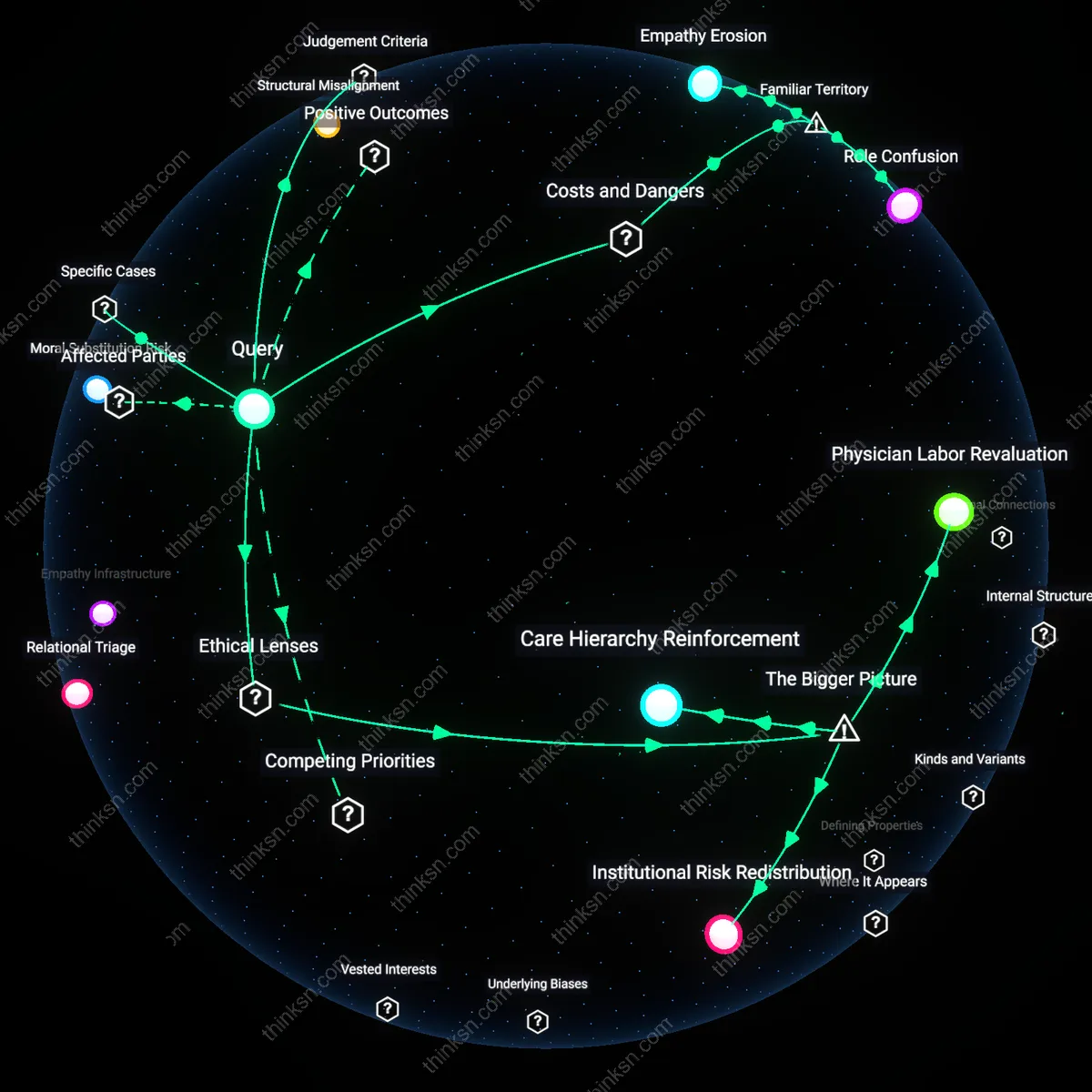

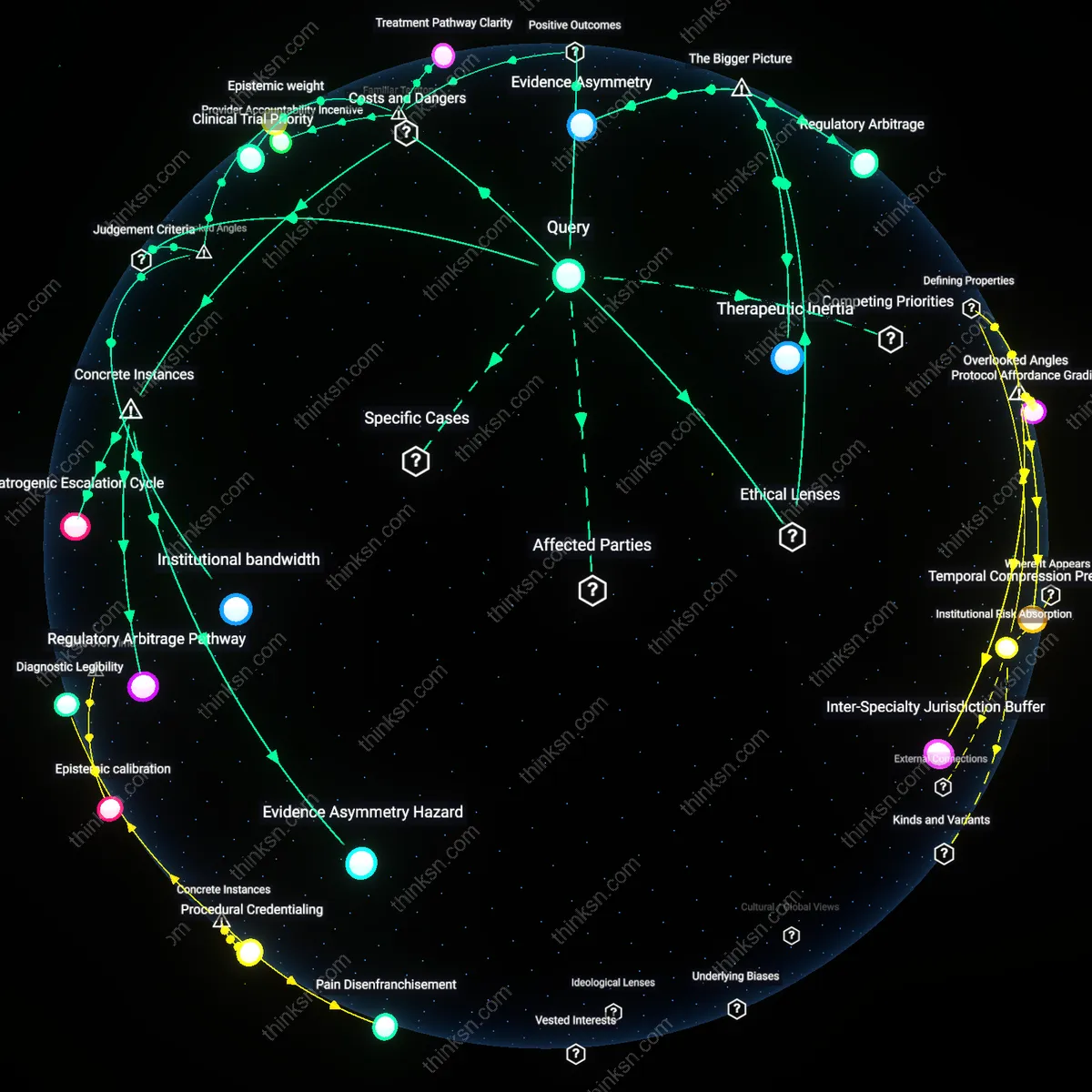

Clinical Authority Erosion

Reduced human oversight in critical care, driven by AI efficiency gains, undermines the role of physicians in final decision-making, especially in high-stakes environments like intensive care units. As hospitals adopt AI triage and diagnostic systems to cut wait times, regulatory and financial incentives prioritize throughput metrics over clinician autonomy, shifting authority toward algorithmic outputs that are opaque and difficult to challenge in real time. Administrative actors amplify this dynamic: their performance evaluations are tied directly to reduced patient wait times, so optimizing for resource utilization weakens the structural power of medical staff to assert independent judgment and erodes a foundational pillar of clinical ethics. The non-obvious consequence is not just error risk but a systemic reconfiguration of medical authority that diminishes professional agency even when human providers remain present.

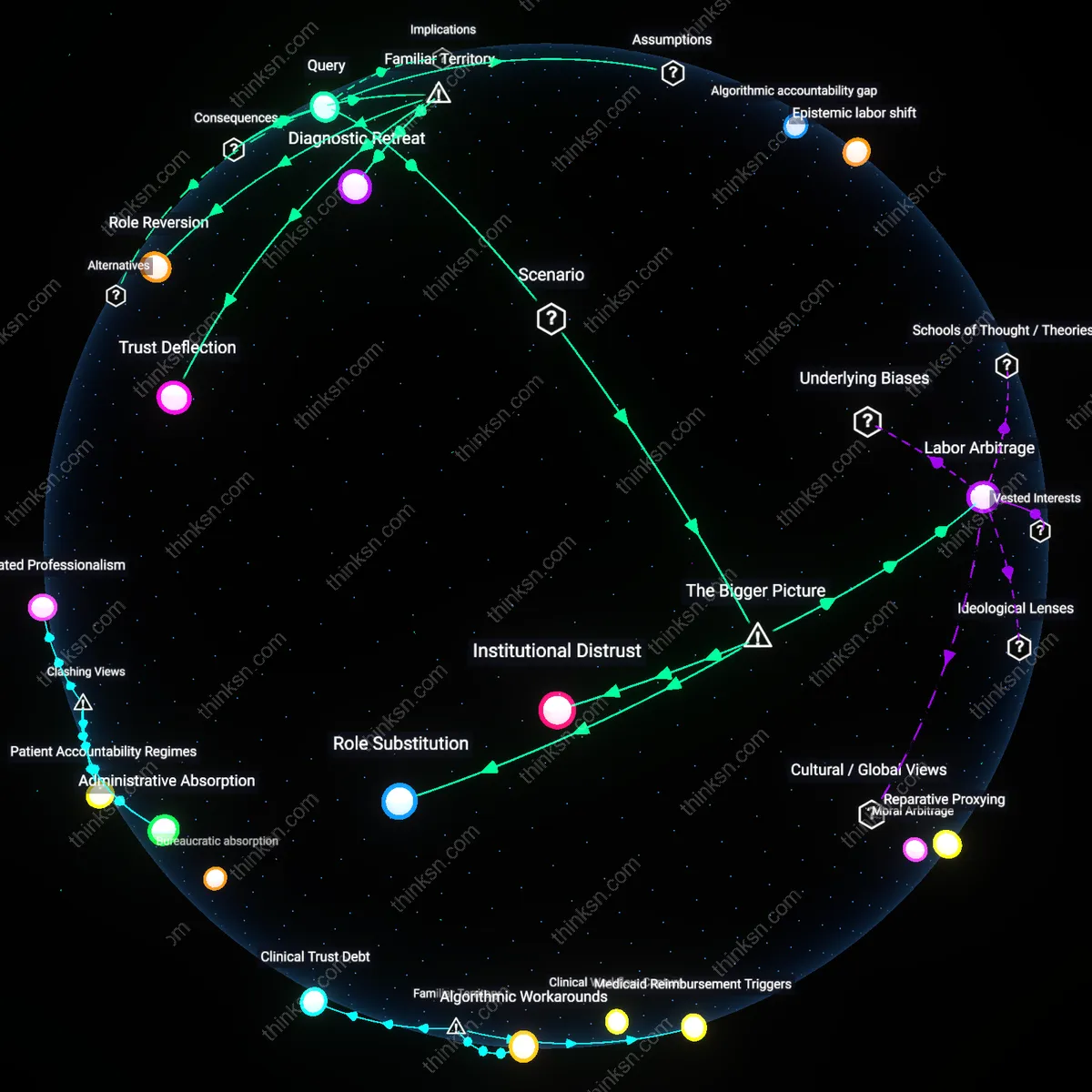

Liability Displacement

Integrating AI to reduce hospital wait times transfers accountability from individual clinicians to distributed systems involving software vendors, data infrastructure, and institutional protocols, diluting ethical responsibility for critical care decisions. As AI tools become embedded in emergency workflows such as radiology prioritization or sepsis prediction, the chain of causality behind errors fragments, making it difficult to assign liability when oversight fails. Legal frameworks exacerbate the problem by treating algorithmic recommendations as auxiliary tools even when they effectively dictate action under time pressure. The result is a structural shift in which no single actor bears full responsibility for outcomes. Institutions can deflect blame onto technical systems while retaining the efficiency benefits, which creates a moral hazard: the risk is spread across patients and the wider system, while the operational gains stay with the institution. This redistribution of liability is rarely visible in policy debates, yet it fundamentally alters incentive structures in patient safety.

Epistemic Deskilling

Reduced human oversight due to AI in critical care undermines the long-term development of clinical expertise, particularly among novice physicians who rely on real-time mentorship and pattern recognition during high-acuity situations. In teaching hospitals like those affiliated with the Johns Hopkins Health System, resident training depends on exposure to diagnostic uncertainty and rapid decision-making. AI streamlines these conditions without explaining them, and so erodes opportunities for tacit learning. This shift weakens medical epistemology as a practice-rooted discipline, a concern absent from utilitarian assessments focused solely on throughput. The unnoticed mechanism is not a change in error rate but the gradual degradation of professional judgment under algorithmic tutelage.

Liability Deferral

AI-driven triage systems in NHS hospitals create a de facto deferral of legal accountability from clinicians to software vendors whose algorithms are protected as trade secrets, making post-event audits practically impossible under the UK’s Medicines and Medical Devices Act 2021. This legal opacity shifts responsibility into unregulated spaces, enabling institutions to justify reduced staffing under the guise of technological reliability while evading duty-of-care obligations. The overlooked dynamic is not automation bias per se but the way liability avoidance, rather than patient safety, becomes the real driver of AI adoption, transforming the distribution of ethical risk in ways that neither deontological nor consequentialist models currently capture.