Is Health Monitoring AI a Privacy Price Worth Paying for Early Detection?

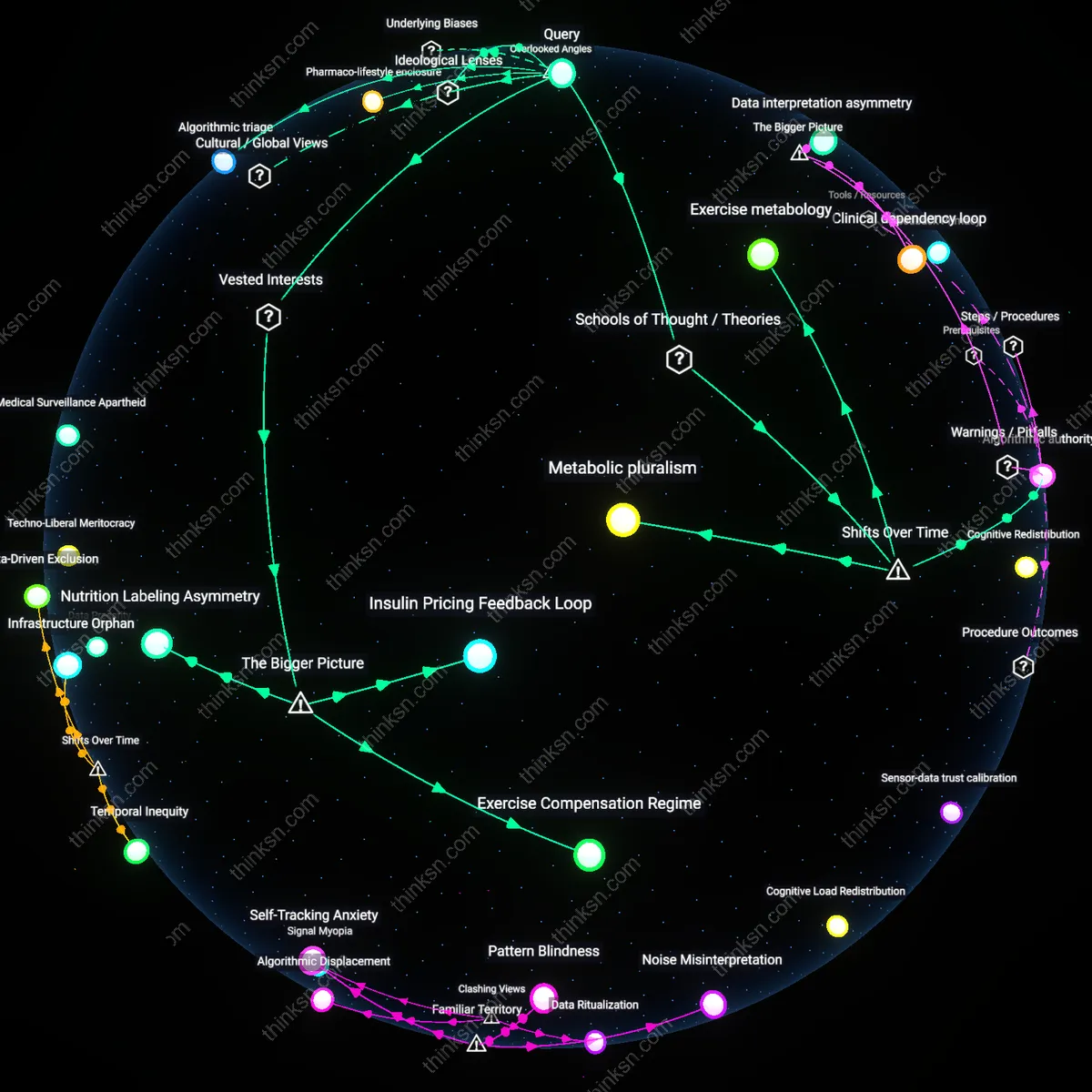

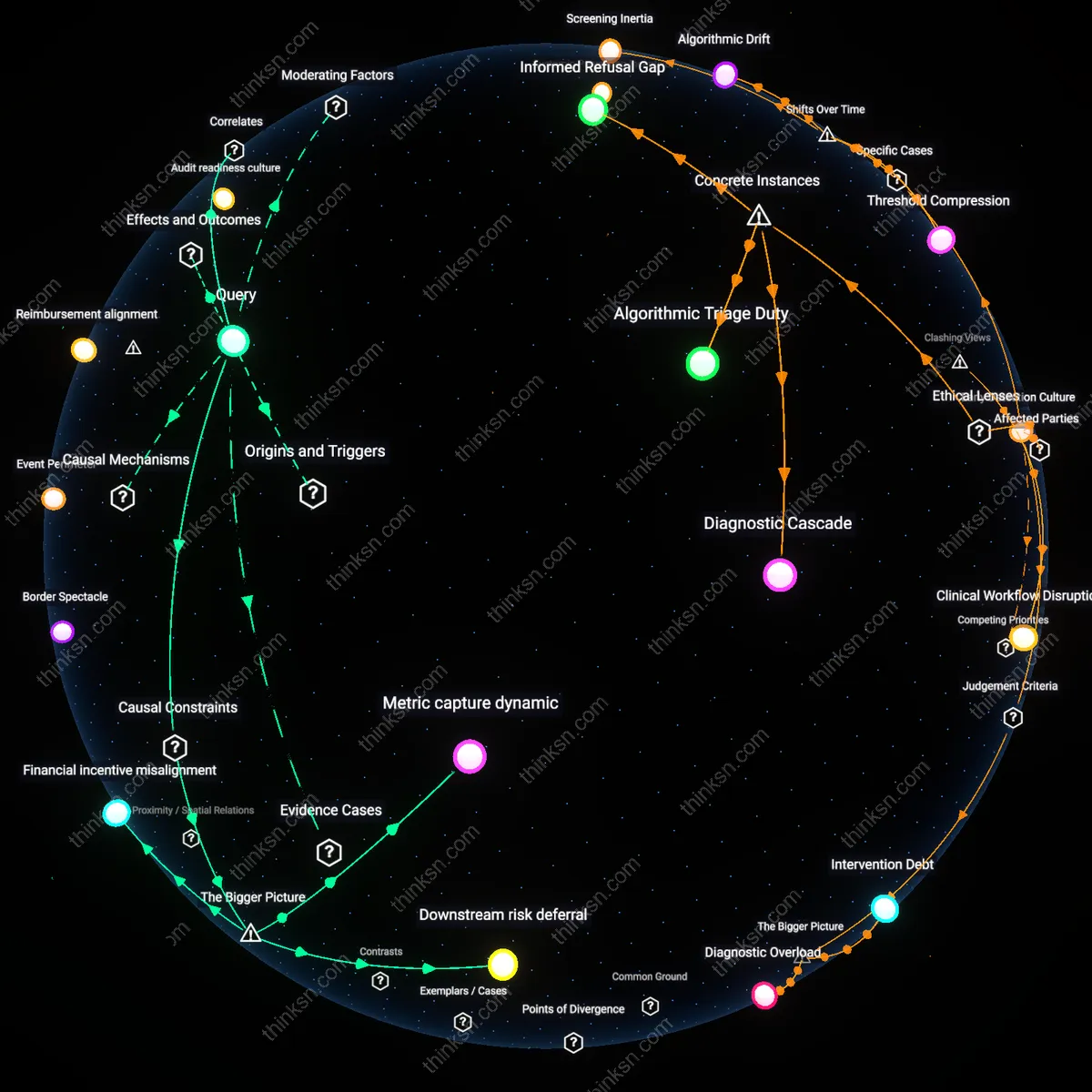

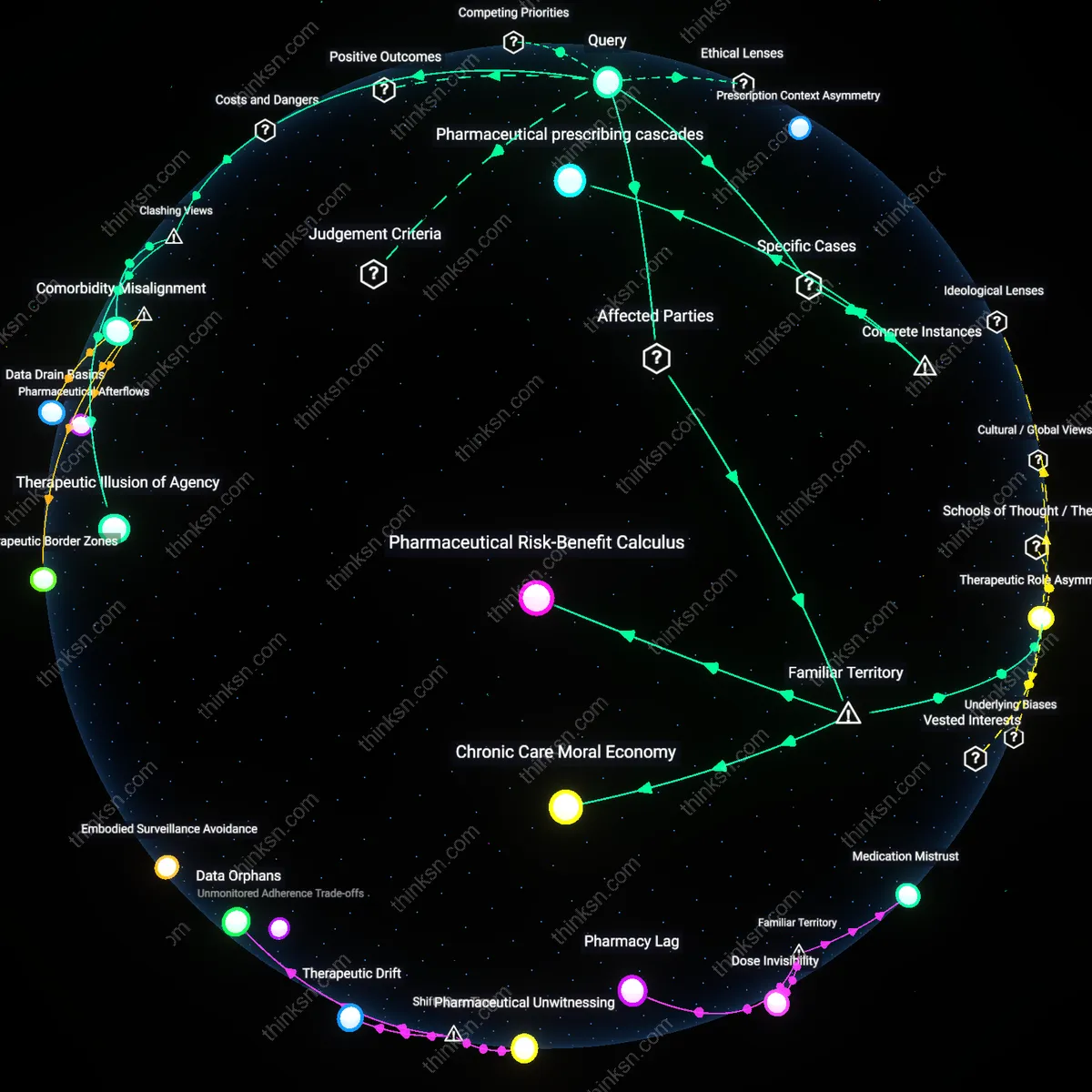

Analysis reveals 8 key thematic connections.

Key Findings

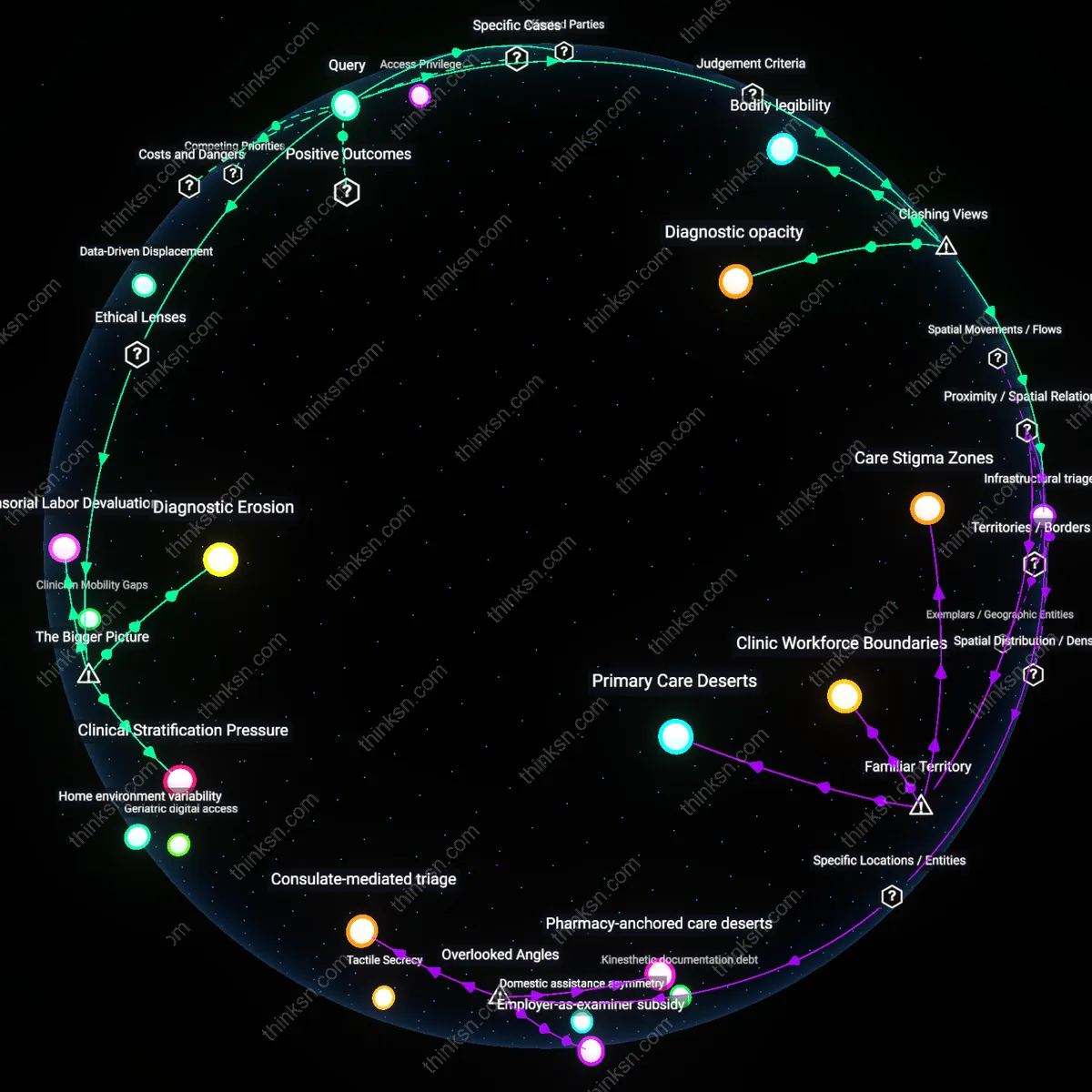

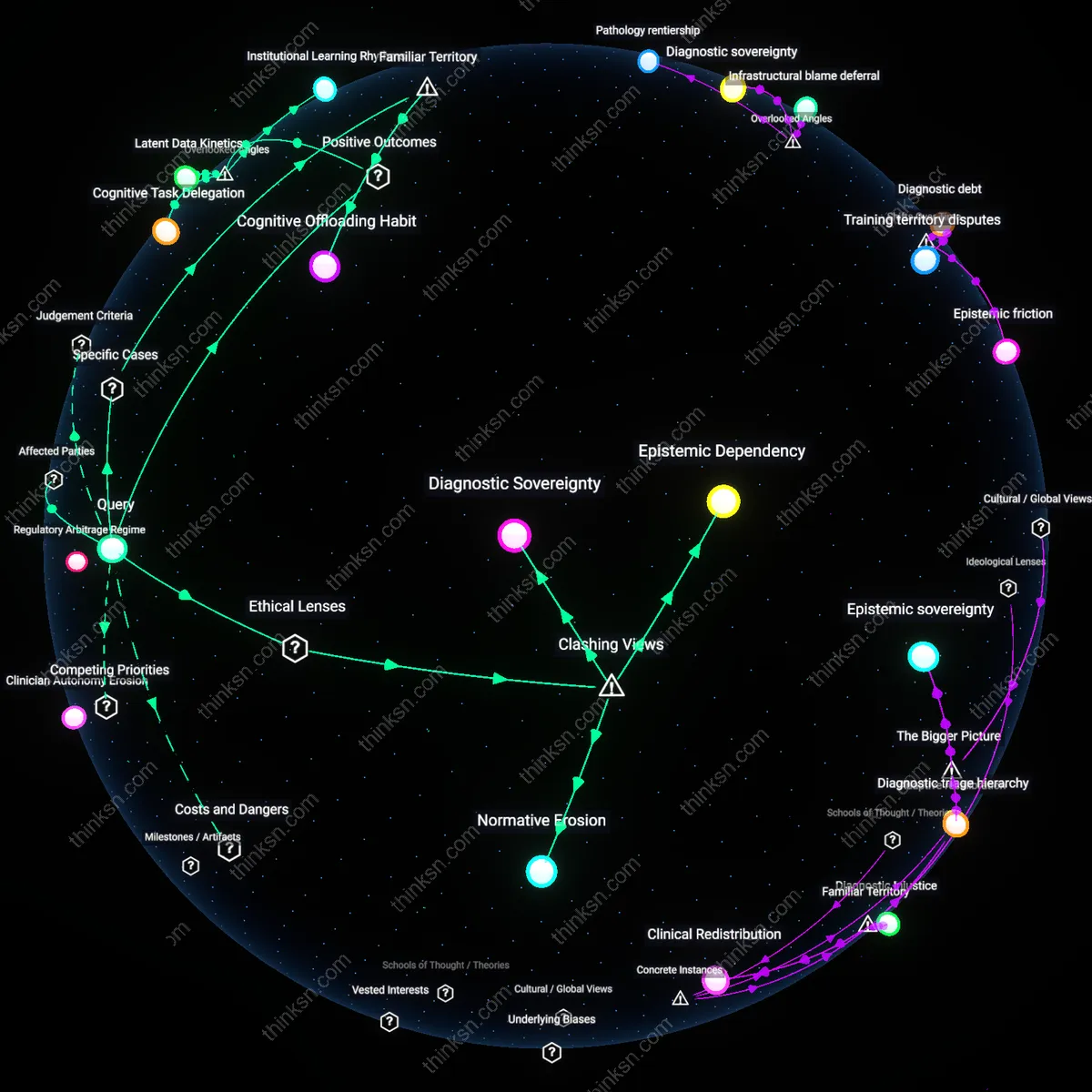

Patient Autonomy Erosion

Relying on AI for personal health monitoring undermines individual control over medical decisions because data-driven alerts override personal judgment. Tech companies and healthcare providers deploy algorithmic systems that prioritize early pathological signals over patient context, adding delay to decisions within clinical workflows. This shift transfers authority from individuals to remote computational systems and makes consent procedural rather than meaningful. What is underappreciated is how routine reliance quietly normalizes the delegation of bodily self-knowledge to machines, even when accuracy improves outcomes.

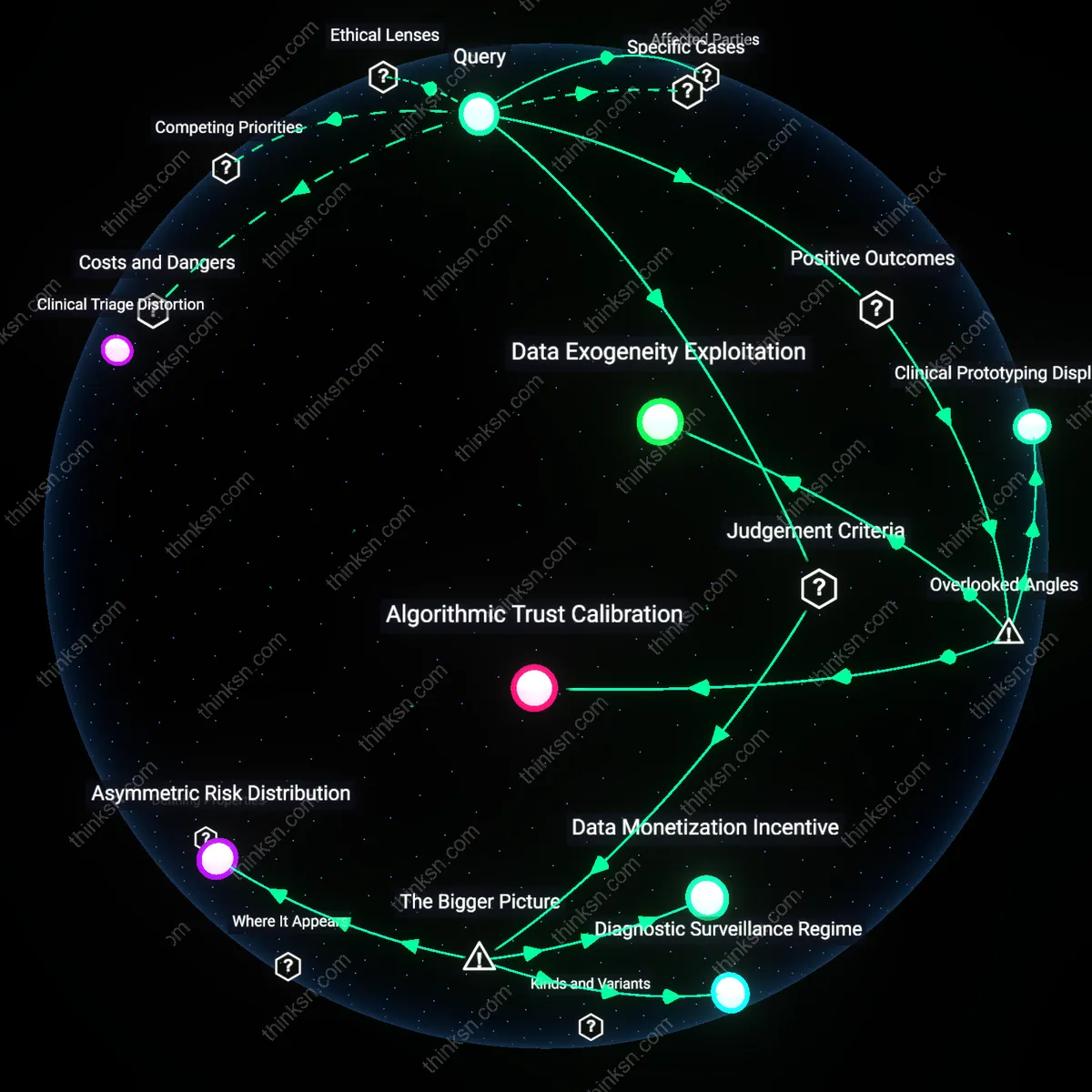

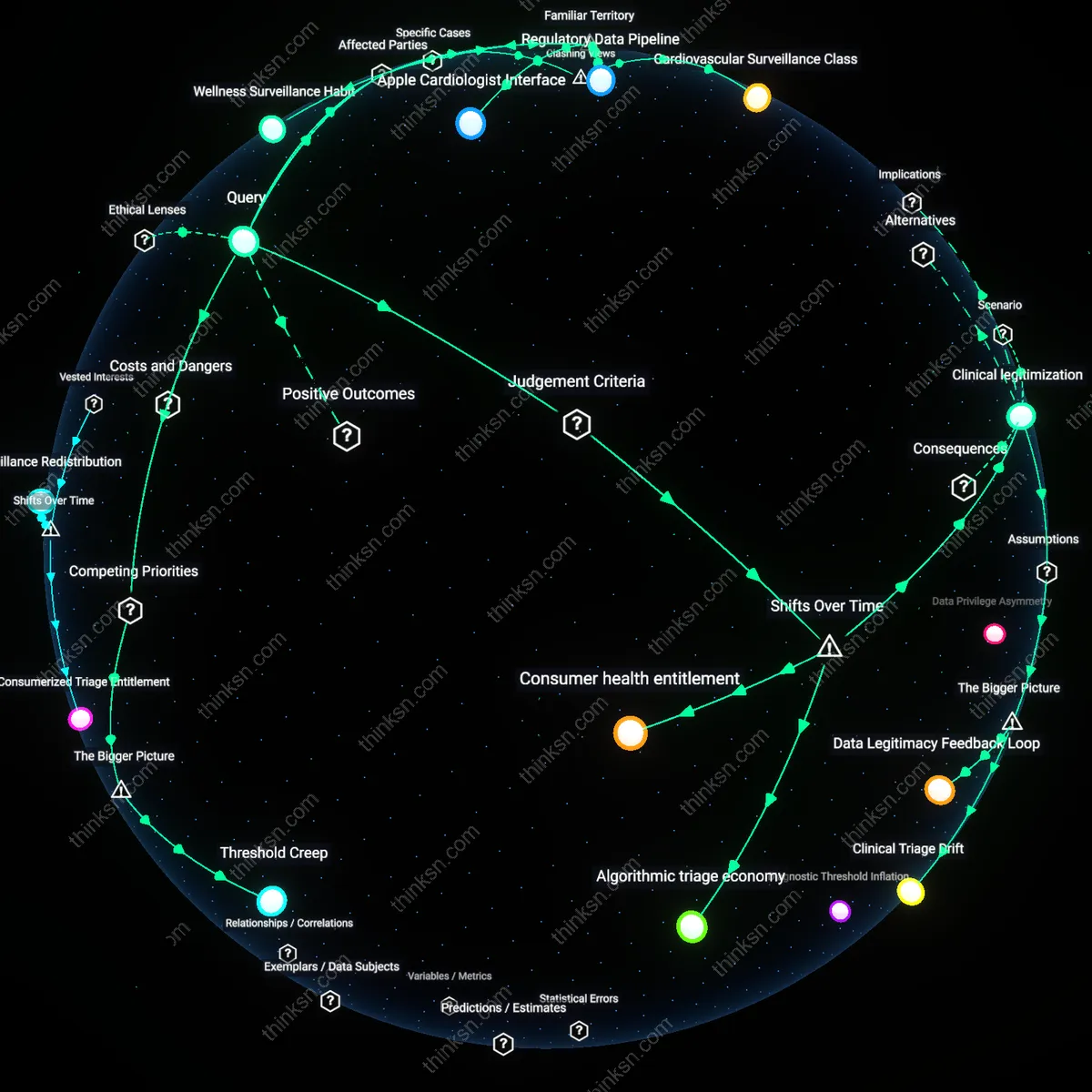

Clinical Triage Distortion

Emergency departments in urban hospitals see more patients arriving because of low-severity AI-generated alerts, which skews triage priorities and delays acute care. Public trust in AI detection outpaces clinical validation, particularly among aging populations using consumer-grade devices. The familiar promise of early intervention obscures how decentralized monitoring floods overburdened systems with false urgency, effectively privatizing symptom interpretation while socializing healthcare strain.
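The false-urgency problem follows from base rates: when a condition is rare among the people being monitored, even an accurate detector produces mostly false alarms. The sketch below computes the share of alerts that are true positives (the positive predictive value) using Bayes' rule. The sensitivity, specificity, and prevalence figures are illustrative assumptions, not measurements from any device or study, and the function name is hypothetical.

```python
def positive_predictive_value(sensitivity: float, specificity: float, prevalence: float) -> float:
    """Fraction of alerts that correspond to a real condition (Bayes' rule)."""
    true_positive_rate = sensitivity * prevalence
    false_positive_rate = (1 - specificity) * (1 - prevalence)
    return true_positive_rate / (true_positive_rate + false_positive_rate)


# Hypothetical figures: a detector that is 95% sensitive and 95% specific,
# worn by a population in which 1% actually have the condition.
ppv = positive_predictive_value(sensitivity=0.95, specificity=0.95, prevalence=0.01)
print(f"Share of alerts that are real: {ppv:.1%}")  # prints 16.1%
```

Under these assumed numbers, roughly five in six alerts would be false alarms, which is the kind of load the paragraph above describes landing on triage staff.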

Data Monetization Incentive

Yes, reliance on AI for personal health monitoring is justified when evaluated through the lens of public health infrastructure expansion. The integration of AI into national screening programs, such as the UK's NHS using AI to analyze retinal scans for diabetic retinopathy, demonstrates measurable reductions in late-stage diagnoses and thereby fulfills a duty of care grounded in the moral principle of beneficence. The argument holds because institutional adoption creates system-wide early detection capacity that outweighs individual privacy risks, which are mitigated through anonymization protocols and governance frameworks. What is underappreciated is that the state, not the user, becomes the primary privacy steward, shifting the ethical burden from personal to collective risk management.

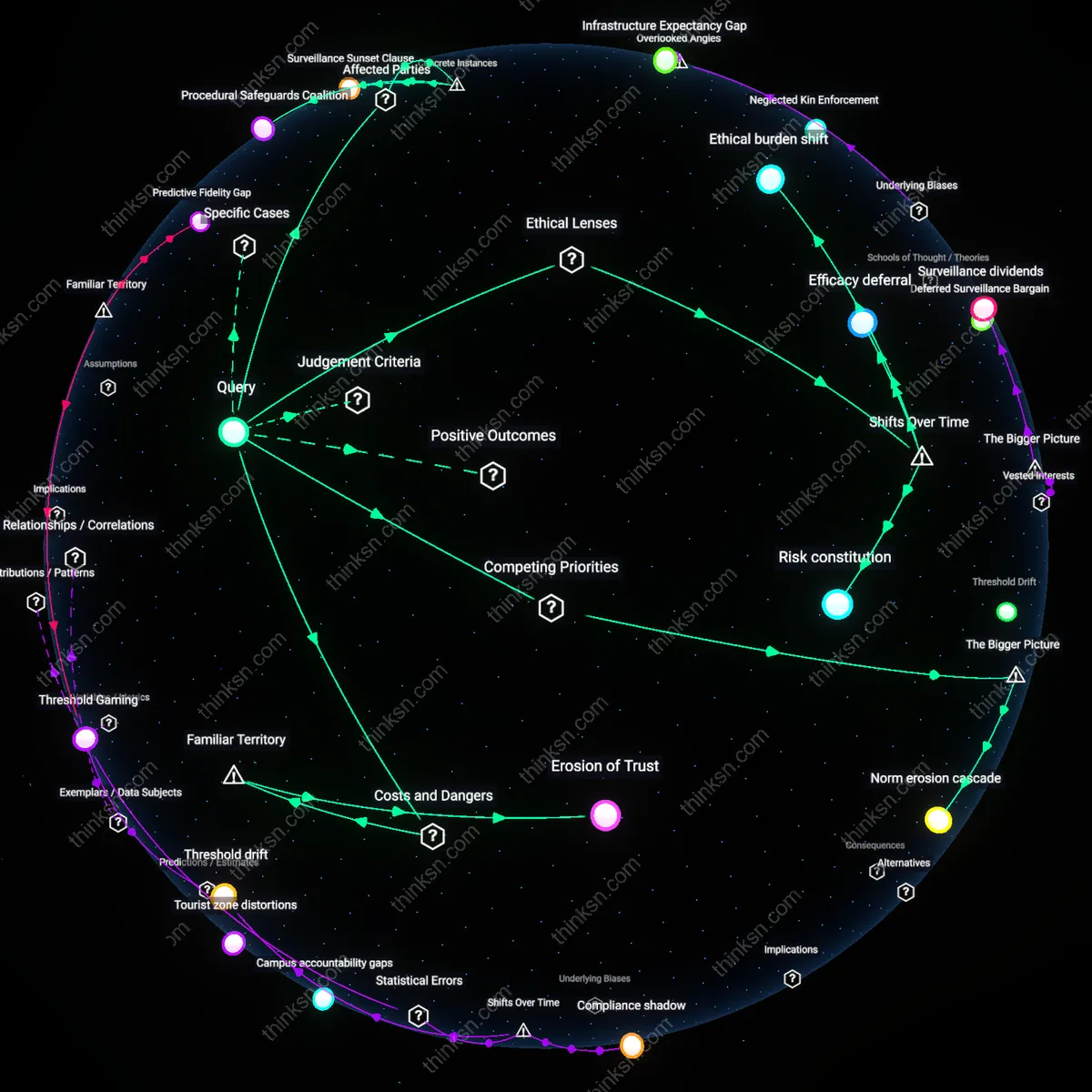

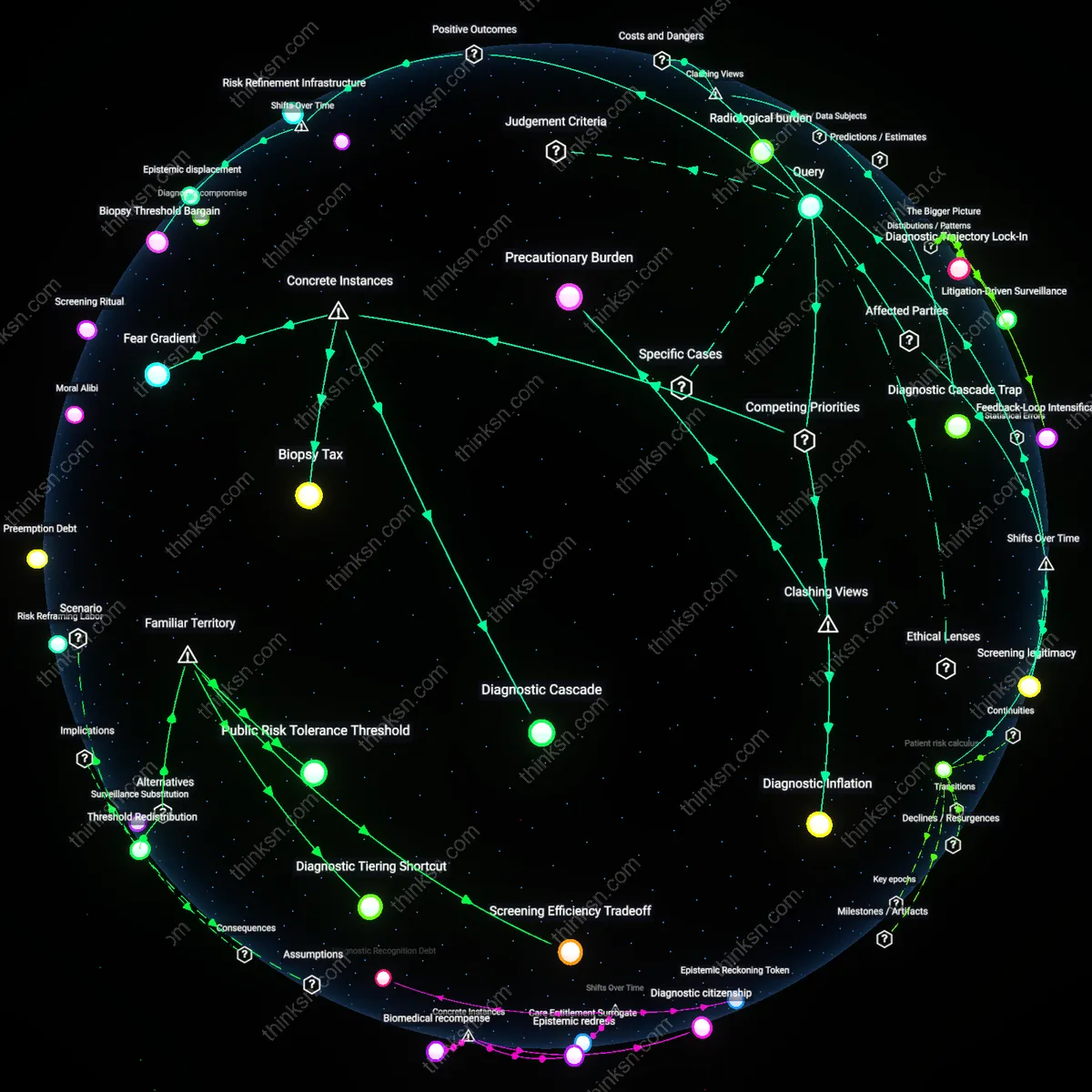

Diagnostic Surveillance Regime

No, reliance on AI for personal health monitoring is not justified when analyzed through the economic principle of market capture. Corporate ecosystems like Apple’s Health app and its integration with third-party insurers create covert data-sharing channels that repurpose personal biometrics for predictive risk modeling, privileging actuarial efficiency over patient autonomy. This dynamic is enabled by weak regulatory oversight in digital health markets, particularly in the U.S., where HIPAA generally does not cover consumer-facing apps that operate outside covered entities and their business associates, allowing firms to treat health data as tradable assets. What is rarely acknowledged is that early detection benefits serve as a legitimizing alibi for the normalization of continuous physiological surveillance driven by profit, not care.

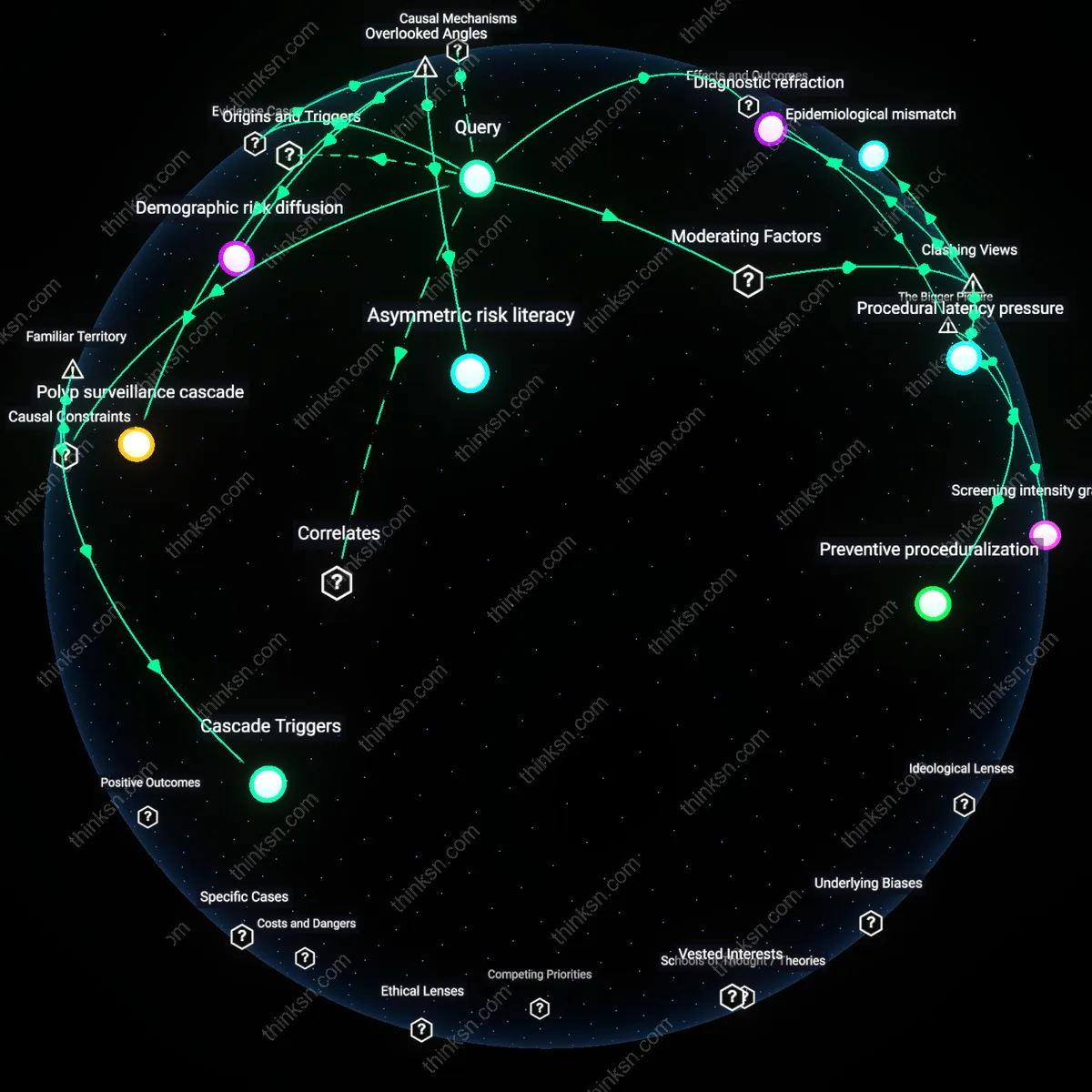

Asymmetric Risk Distribution

The justification for AI-driven health monitoring depends on the principle of distributive justice. Low-income populations in countries like India are targeted by AI-based tuberculosis screening via mobile chest X-rays but are simultaneously excluded from follow-up care, revealing that early detection without equitable access to treatment entrenches health disparities. This occurs through donor-funded digital health initiatives that prioritize easily measured outputs (e.g., scans processed) over longitudinal care pathways, leveraging AI's scalability while externalizing the costs of intervention. What remains hidden is that the technology functions less as a medical tool and more as a triage filter that sorts the 'treatable' from the 'manageable' based on systemic resource constraints.

Algorithmic Trust Calibration

Reliance on AI for personal health monitoring is justified when patients recalibrate trust based on algorithmic transparency in low-severity chronic conditions, because systems like wearable ECG detectors in smartwatches create feedback loops where users learn to distinguish false positives from clinically actionable signals through repeated, contextualized alerts. This recalibration is mediated by longitudinal data literacy emerging not from technical understanding but from pattern recognition in lived bodily experience, a mechanism typically absent in privacy-risk framings that assume either blind compliance or outright rejection. The overlooked dynamic is that trust in AI health tools is not static or binary but evolves through bodily feedback, altering the perceived trade-off between early detection and data exposure.

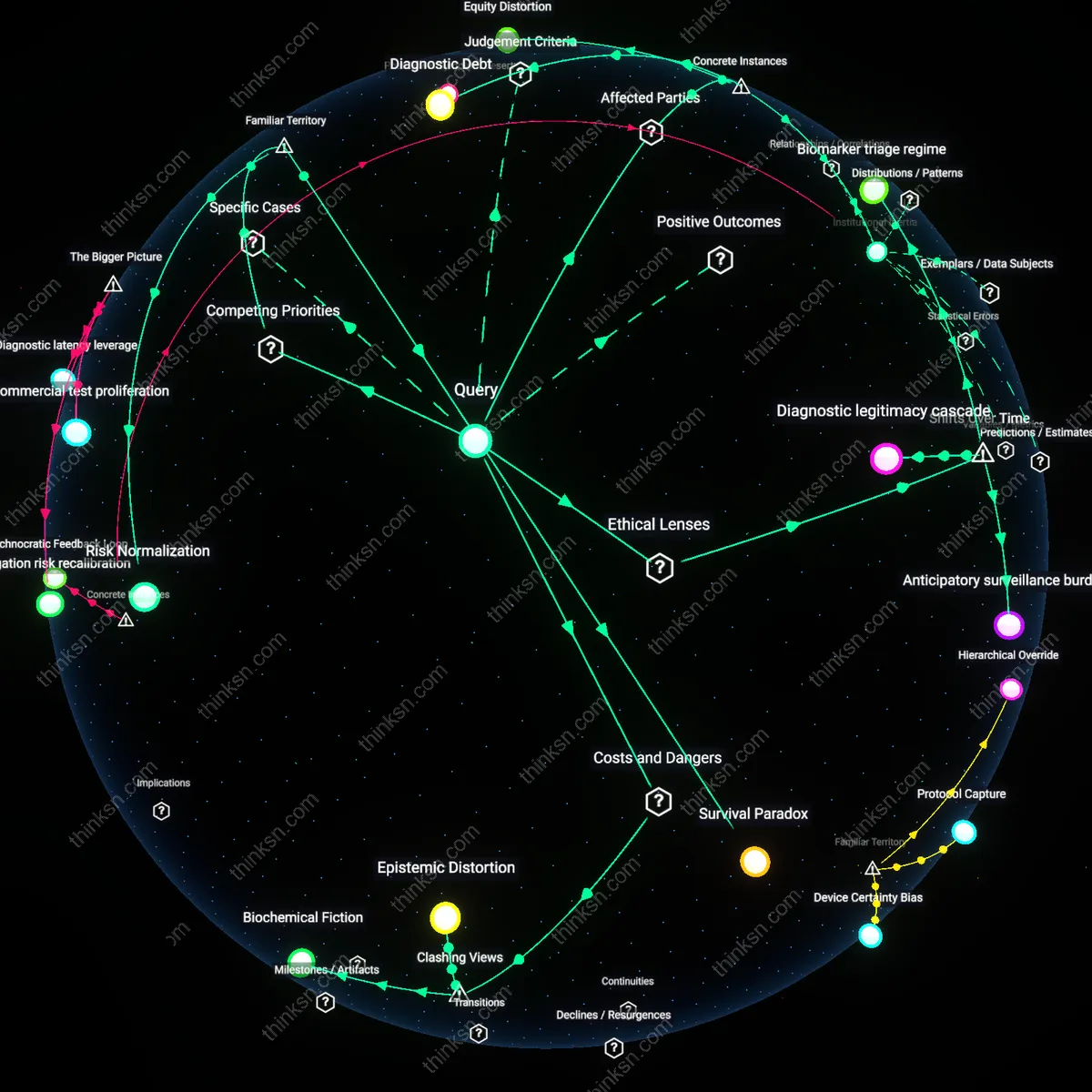

Data Exogeneity Exploitation

Reliance on AI for personal health monitoring amplifies unseen economic externalities by transforming intimate physiological rhythms into exogenous training data for third-party algorithms operating beyond the health domain, such as insurance risk modeling or neuromarketing platforms. Even anonymized heart rate variability streams from fitness trackers become part of feedback-rich datasets that refine behavioral prediction models in ways that cannot be anticipated at the point of collection, creating a stealth data economy. The underappreciated factor is that privacy risk is not merely about re-identification but about the repurposing of biometric time-series data into training fuel for systems that indirectly shape life opportunities — a dimension absent in conventional benefit-risk assessments focused on clinical accuracy versus disclosure.

Clinical Prototyping Displacement

Widespread reliance on AI health monitoring shifts the real-world development of clinical diagnostics from controlled hospital environments to consumer-facing platforms, where firms like Apple or Fitbit effectively conduct de facto clinical trials through over-the-air updates to irregular heartbeat detection algorithms used by millions. This creates a hidden dependency where medical validation becomes distributed, iterative, and user-funded, with false positives tolerated as necessary noise in the prototyping process — a dynamic that evades standard regulatory scrutiny. The non-obvious consequence is that early detection benefits are not outcomes of mature technology but products of a clandestine innovation model that treats users as both test subjects and data suppliers, which reshapes how we assess the ethical justification of AI in health.