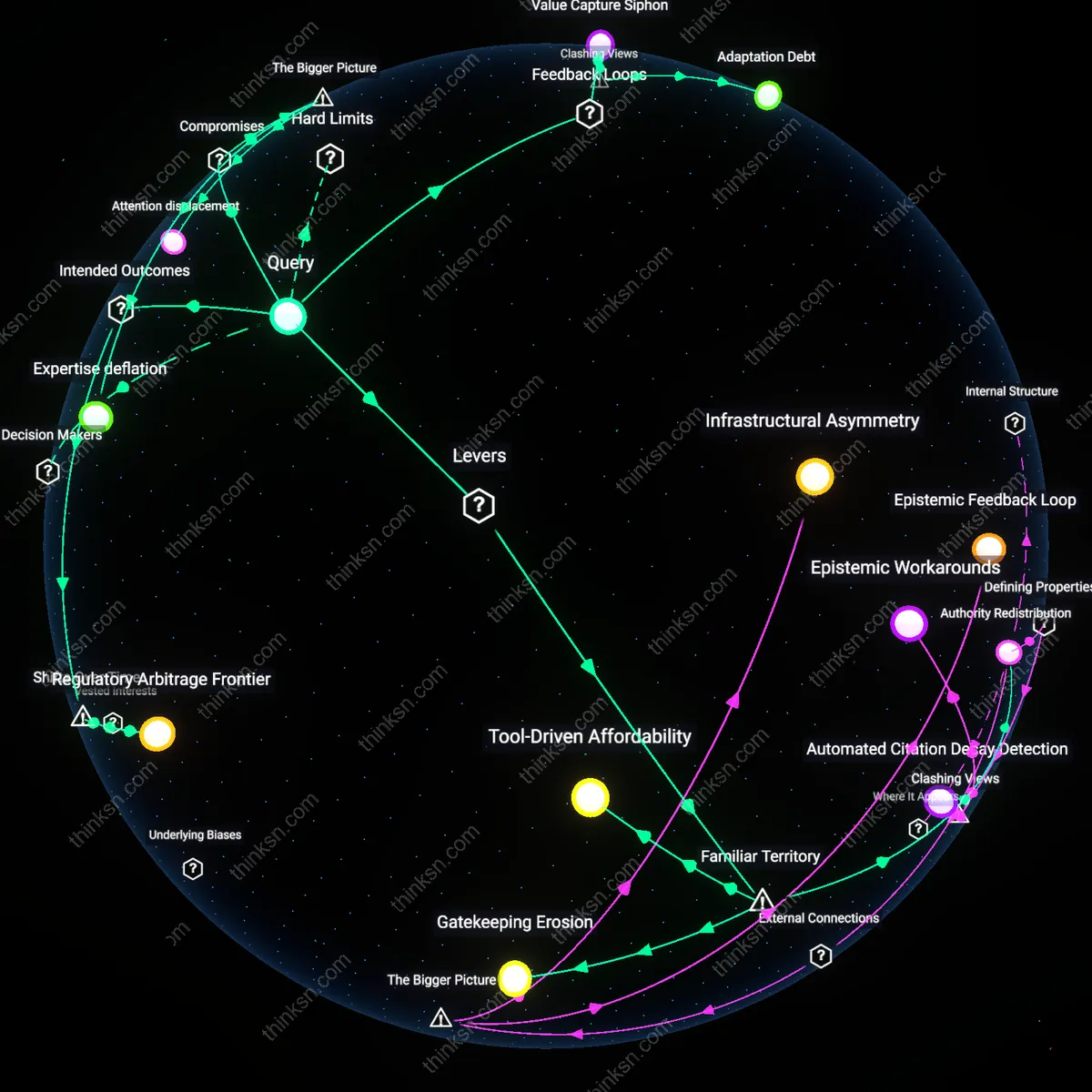

Are Legal Algorithms Making Us Too Reliant on Shortcuts?

Analysis reveals 7 key thematic connections.

Key Findings

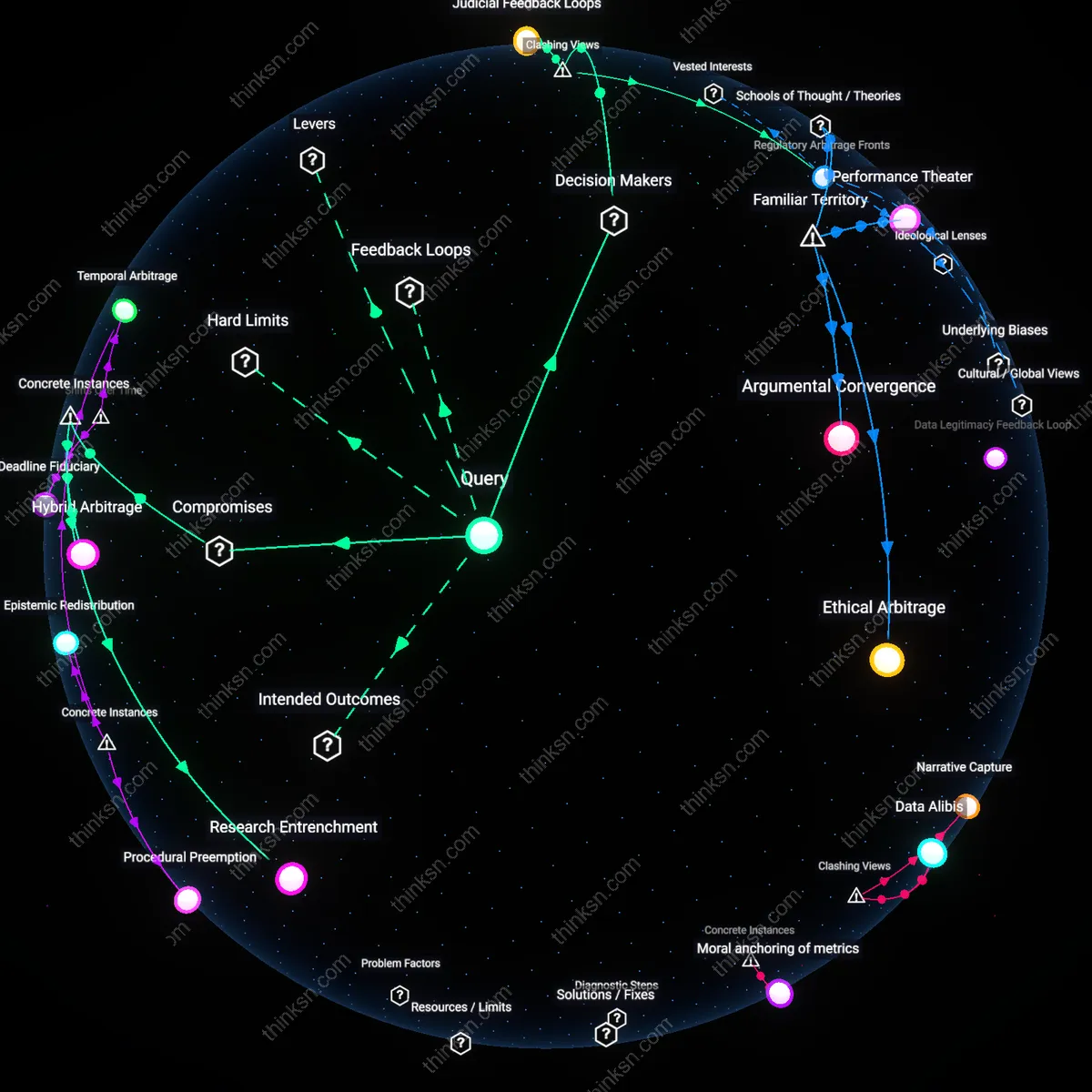

Epistemic drift

AI-driven legal research redistributes authority from juridical precedent to statistical correlation, eroding the doctrinal coherence of case law as courts increasingly defer to algorithmic patterns over legal reasoning. Two forces drive the shift: machine learning tools weight outcomes by frequency rather than principle, and firms and public defenders under cost pressure adopt AI shortcuts that privilege predictive accuracy over constitutional fidelity, particularly in overburdened jurisdictions such as the municipal courts of Harris County or Cook County. The non-obvious consequence is not mere inefficiency but a silent reconfiguration of legal judgment: rulings begin to reflect historical biases as statistical norms rather than as evolving interpretations of rights, anchoring law to past disparities under the guise of neutrality.
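The frequency-over-principle mechanism can be made concrete with a minimal Python sketch of a ranker that orders cases by citation count alone. The case names, counts, and the `reflects_current_doctrine` label are hypothetical. Real research platforms use far richer and unpublished signals, so this illustrates the bias, not any vendor's method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Case:
    name: str
    citations: int  # how often the case appears in the historical corpus
    # Hypothetical editorial label; the ranker below never looks at it.
    reflects_current_doctrine: bool


def frequency_rank(cases):
    """Rank cases by citation count alone, as a purely frequency-weighted tool might."""
    return sorted(cases, key=lambda c: c.citations, reverse=True)


# Hypothetical corpus for illustration only.
corpus = [
    Case("Older routine ruling", citations=420, reflects_current_doctrine=False),
    Case("Recent rights-expanding ruling", citations=12, reflects_current_doctrine=True),
    Case("Mid-volume ruling", citations=85, reflects_current_doctrine=True),
]

for case in frequency_rank(corpus):
    print(case.name, case.citations, case.reflects_current_doctrine)
```

The doctrinal label never enters the sort key. As a result, the most-cited ruling comes first whether or not it still reflects current law.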

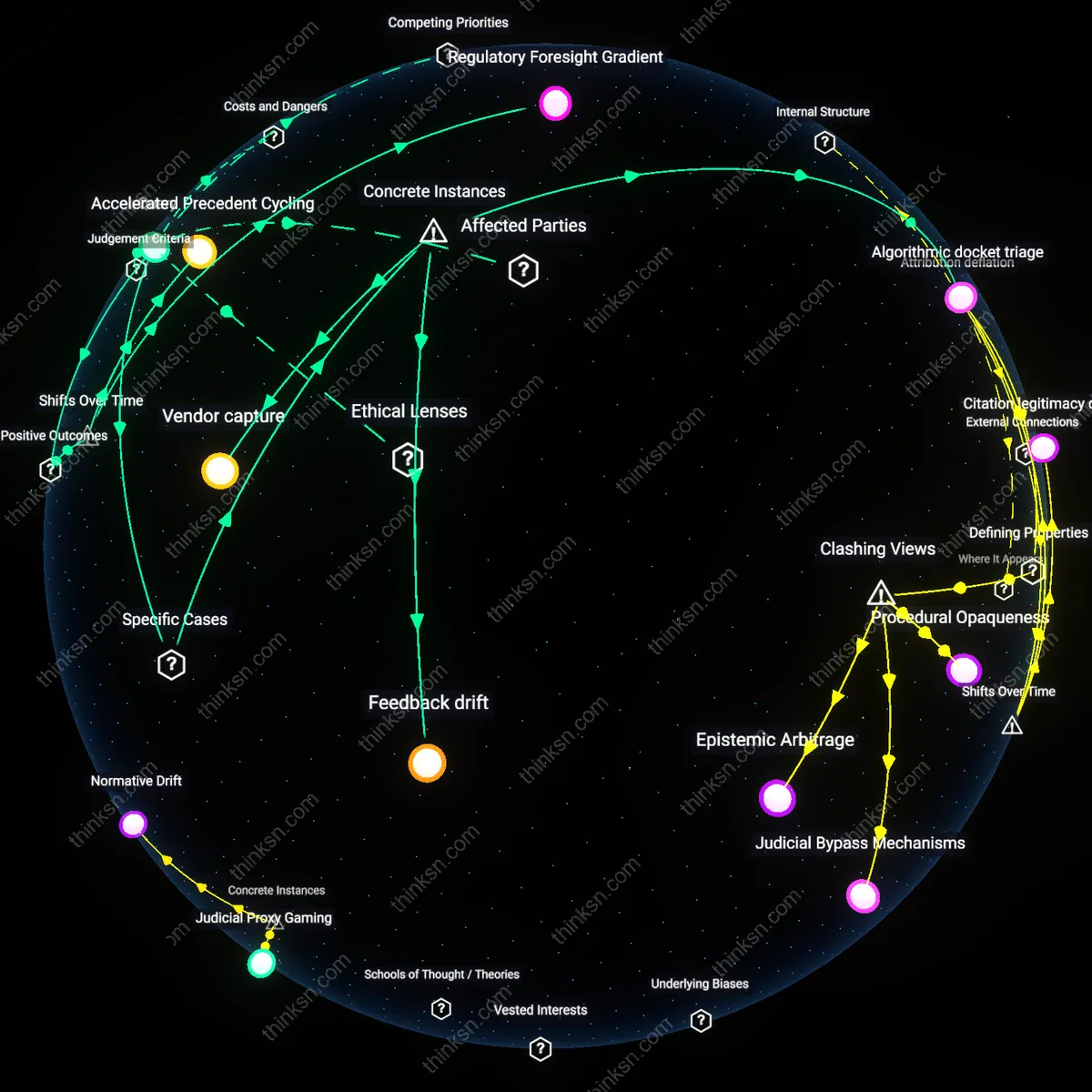

Accelerated Precedent Cycling

AI-driven legal research has shortened the feedback loop between judicial decisions and their reuse, so novel rulings are absorbed into prevailing practice faster. Historically, the lag between a court decision and its widespread citation could span years, limited by manual indexing in tools such as West's print digests. Now, machine learning models flag and cluster emerging cases in near real time, compressing doctrinal evolution that once took decades into months. The shift has been visible since the late 2010s, with machine-learning research tools such as Casetext's CARA and ROSS Intelligence, and it sharpened with LLM-based assistants such as Casetext's CoCounsel in 2023. It shows doctrinal stability giving way to rapid precedent turnover, particularly in fast-moving areas such as data privacy and algorithmic liability. The non-obvious consequence is not merely efficiency but a transformation in how legal norms stabilize: less through authoritative deliberation, more through velocity of application.
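A trailing-window citation count offers a simple way to see how a tool might flag emerging cases quickly. The sketch below substitutes that threshold rule for the machine learning clustering the text describes. The function name, window length, threshold, and dates are all hypothetical.

```python
from datetime import date, timedelta


def emerging_cases(citation_events, today, window_days=90, min_citations=3):
    """Flag cases cited at least min_citations times within the trailing window.

    citation_events is a list of (case_name, date_cited) pairs.
    """
    cutoff = today - timedelta(days=window_days)
    recent = {}
    for case, cited_on in citation_events:
        if cited_on >= cutoff:
            recent[case] = recent.get(case, 0) + 1
    return sorted(case for case, n in recent.items() if n >= min_citations)


# Hypothetical citation events for illustration only.
events = [
    ("New privacy ruling", date(2024, 5, 1)),
    ("New privacy ruling", date(2024, 5, 20)),
    ("New privacy ruling", date(2024, 6, 2)),
    ("Settled older ruling", date(2023, 1, 10)),
    ("Settled older ruling", date(2024, 6, 1)),
]

print(emerging_cases(events, today=date(2024, 6, 15)))
```

Shrinking `window_days` makes the flag react faster, which is the velocity the paragraph describes. The cost is that more short-lived citation spikes get flagged as well.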

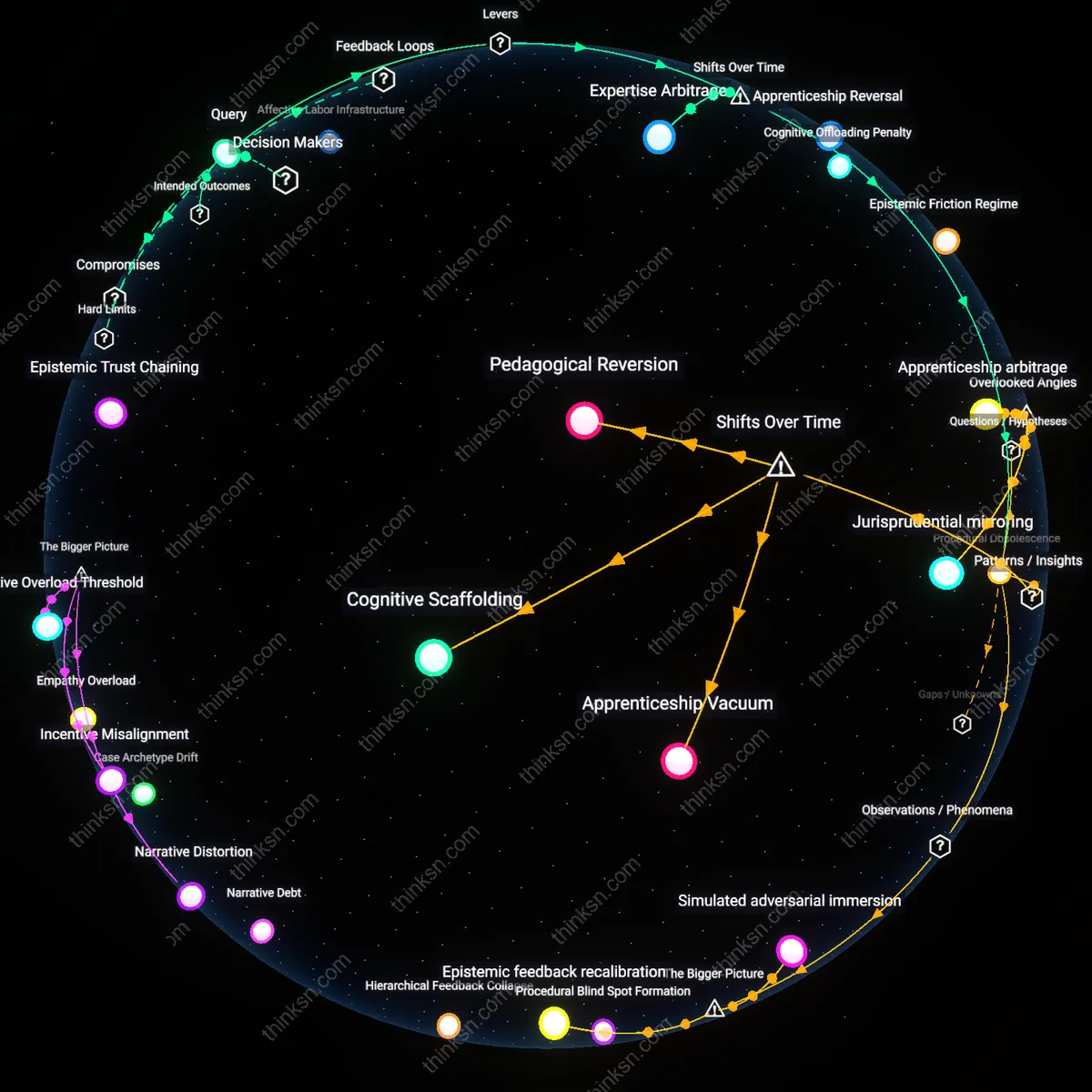

Institutional Knowledge Decoupling

Law firms now outsource cognitive scaffolding for legal reasoning to AI systems, marking a break from the apprenticeship-based knowledge transfer that defined the profession from the 19th century through the early 2000s. Previously, junior associates internalized research judgment by replicating senior lawyers’ methods under supervision, creating a lineage of interpretive continuity; today, AI tools like Westlaw Edge or Lexis+ AI bypass that transmission by delivering optimized search paths without exposing the user to failed queries or contextual dead ends. This transition, accelerating after 2020 with pandemic-driven digitization, severs the experiential learning chain, allowing firms to scale rapidly but eroding firm-specific doctrinal memory. The underappreciated effect is not just skill atrophy but the emergence of fungible, platform-dependent legal reasoning that weakens organizational epistemic identity.

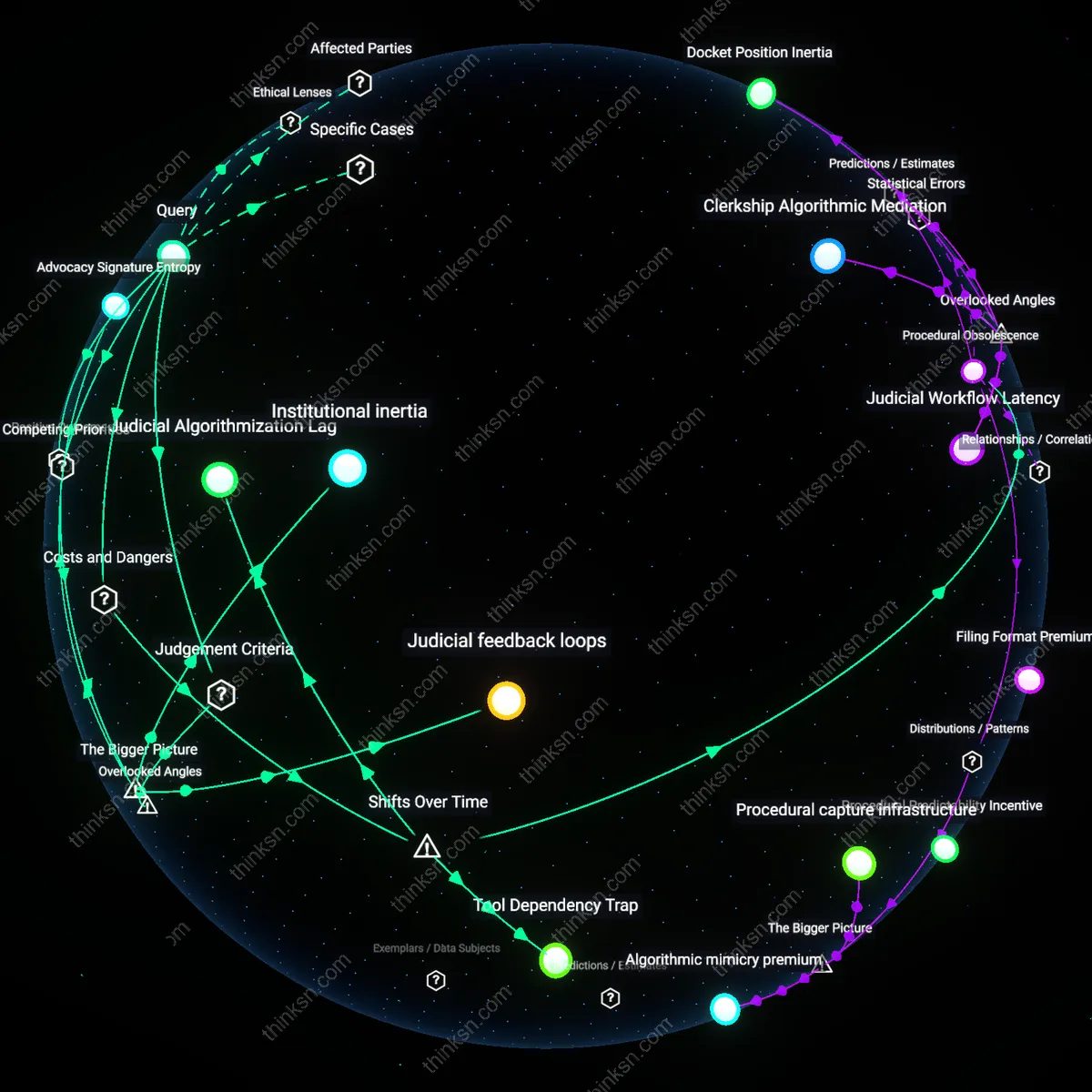

Regulatory Foresight Gradient

AI enables mid-tier law offices to simulate case outcomes with probabilistic models trained on the otherwise opaque record of judicial behavior. This democratizes access to predictive analytics once monopolized by elite firms with proprietary datasets. Before 2015, only well-resourced litigators could afford custom forecasting tools, creating a strategic asymmetry in high-stakes litigation. Today, platforms such as Paxton.ai or Klare's NLP engine give small firms jurisdiction-specific win-probability estimates derived from historical judge rulings, motion dispositions, and docket trends. This shift from scarcity to broad access has narrowed the strategic gap between large and small firms, especially in bankruptcy and immigration law, where outcome uncertainty is high. The overlooked result is not just a leveled playing field but a structural incentive toward preemptive settlement and procedural innovation, reshaping how legal conflict unfolds before courts even convene.
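Vendors do not publish their forecasting models, so the following sketch only illustrates the general idea of estimating a win probability from historical dispositions. It uses a Beta-binomial posterior mean, which reduces to Laplace smoothing when both prior counts are 1. The docket records, judge names, and function name are hypothetical.

```python
def grant_probability(records, judge, motion_type, prior_grants=1, prior_denials=1):
    """Estimate P(grant) for one judge and motion type.

    Returns the posterior mean of a Beta(prior_grants, prior_denials) prior
    updated with that judge's past dispositions of the same motion type.
    """
    outcomes = [granted for j, m, granted in records if j == judge and m == motion_type]
    grants = sum(outcomes)  # True counts as 1, False as 0
    return (grants + prior_grants) / (len(outcomes) + prior_grants + prior_denials)


# Hypothetical docket records: (judge, motion_type, granted)
records = [
    ("Judge X", "motion_to_dismiss", True),
    ("Judge X", "motion_to_dismiss", False),
    ("Judge X", "motion_to_dismiss", True),
    ("Judge Y", "motion_to_dismiss", False),
]

for judge in ("Judge X", "Judge Y", "Judge Z"):
    print(judge, round(grant_probability(records, judge, "motion_to_dismiss"), 3))
```

The prior matters most for sparse dockets. With no records, the estimate falls back to 0.5 instead of an undefined 0/0, and a single denial moves it only to about 0.33.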

Feedback drift

LexisNexis's integration of AI-driven case prediction tools in state court systems has amplified feedback drift by favoring outcomes from jurisdictions with digitized, high-volume rulings, skewing research relevance toward procedural commonality rather than legal novelty. The mechanism rewards frequently cited, affirmed decisions, such as those from Cook County civil courts, over outlier or dissenting opinions. As the tools surface those decisions more often, practitioners cite them more, and the new citations flow back into the training data, recalibrating it toward statistical prevalence rather than normative validity. The systemically significant effect is that precedent-based arguments become self-stabilizing: algorithmic reinforcement of past patterns masks doctrinal evolution, a dynamic rarely acknowledged in bar association guidelines on AI use.
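A deterministic toy simulation can illustrate the self-stabilizing loop. As a deliberate simplification, it assumes that users cite only what the tool surfaces and that each round adds a fixed number of citations. The case names and counts are hypothetical.

```python
def simulate_feedback(citations, rounds, top_k=1, new_citations_per_round=10):
    """Each round, surface the top_k most-cited cases and split the round's new
    citations among them (integer division); unsurfaced cases gain nothing."""
    counts = dict(citations)  # copy so the caller's data is not mutated
    for _ in range(rounds):
        surfaced = sorted(counts, key=counts.get, reverse=True)[:top_k]
        for case in surfaced:
            counts[case] += new_citations_per_round // len(surfaced)
    return counts


def share(counts, case):
    """Fraction of all citations held by one case."""
    return counts[case] / sum(counts.values())


start = {"High-volume county ruling": 30, "Outlier dissent": 25}
end = simulate_feedback(start, rounds=5)
print(end)
print(round(share(start, "High-volume county ruling"), 3), "->",
      round(share(end, "High-volume county ruling"), 3))
```

An initial lead of 5 citations becomes a 55-citation gap after five rounds. Nothing about the outlier opinion's legal merit changed in the meantime.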

Attribution deflation

A widely reported instance of fabricated AI citations in federal court is *Mata v. Avianca* (S.D.N.Y. 2023). Counsel filed a brief citing nonexistent decisions generated by ChatGPT, and the fabrications came to light only when opposing counsel and the court could not locate them. In that case responsibility was easy to assign, and the court sanctioned the lawyers involved. Attribution deflation names the harder case. When multiple filers draw on overlapping AI platforms, erroneous precedent can enter the record from shared upstream outputs, which erodes a court's ability to isolate responsibility and collapses accountability into systemic ambiguity. Professional reliance on convergent AI sources thus threatens the foundational legal norm of attributable reasoning, especially in high-stakes federal litigation where precision is presumed.
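One safeguard that keeps reasoning attributable is to check every reporter citation in each filing against an independently verified index and to record which filing each unverified citation came from. The sketch below assumes a hypothetical `VERIFIED` set and a deliberately simplified pattern that covers only a few reporter formats. Real citation formats are far more varied, and the citation strings are placeholders, not claims about any actual case.

```python
import re

# Hypothetical index of citations already confirmed against an authoritative reporter.
VERIFIED = {"100 U.S. 200", "300 F.3d 400"}

# Simplified "<volume> <reporter> <page>" pattern covering only a few reporters.
CITATION_RE = re.compile(r"\b(\d{1,4}) (U\.S\.|F\.(?:2d|3d|4th)|F\. Supp\.(?: 2d| 3d)?) (\d{1,5})\b")


def unverified_citations(filings):
    """Return (filer, citation) pairs for citations missing from VERIFIED,
    so each questionable citation stays tied to the filing that made it."""
    flagged = []
    for filer, text in filings.items():
        for match in CITATION_RE.finditer(text):
            cite = " ".join(match.groups())
            if cite not in VERIFIED:
                flagged.append((filer, cite))
    return flagged


# Hypothetical filings with placeholder citations.
filings = {
    "Plaintiff brief": "Relying on 100 U.S. 200 and 500 F.4th 600.",
    "Defendant brief": "See 300 F.3d 400; cf. 500 F.4th 600.",
}
print(unverified_citations(filings))
```

The same unverified citation is flagged separately for each filer. A shared upstream error therefore shows up as a pattern across filings instead of disappearing into them.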

Vendor capture

Thomson Reuters’ control over both Westlaw and the underlying metadata schema used by AI research tools in over 70% of Am Law 100 firms demonstrates vendor capture, where a single private entity shapes the epistemic boundaries of legal knowledge through opaque algorithmic curation. When the company updated its KeyCite Plus AI model in 2022 to deprioritize older administrative rulings—such as certain pre-1990 EPA adjudications—many environmental law firms unknowingly abandoned viable arguments rooted in regulatory continuity. This centralized influence over what legal information is retrievable and framed as relevant constitutes a non-market mechanism of doctrinal steering, rarely subject to adversarial challenge or transparency mandates.
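The source does not describe how the 2022 update weighted older rulings. The sketch below therefore assumes a simple exponential recency discount, a half-life applied to relevance, to show how a modest-looking ranking change can push an older, more relevant ruling out of the short list a user actually reads. All names, scores, and parameters are hypothetical and do not describe Thomson Reuters' actual model.

```python
def decayed_score(relevance, decision_year, current_year=2022, half_life_years=10.0):
    """Discount relevance by age: the score halves every half_life_years."""
    age = current_year - decision_year
    return relevance * 0.5 ** (age / half_life_years)


def top_k(candidates, k=2, **decay_kwargs):
    """Return the k candidate names with the highest age-discounted scores."""
    scored = {
        name: decayed_score(relevance, year, **decay_kwargs)
        for name, (relevance, year) in candidates.items()
    }
    return sorted(scored, key=scored.get, reverse=True)[:k]


# Hypothetical candidates: name -> (topical relevance in [0, 1], decision year)
candidates = {
    "1987 agency adjudication": (0.9, 1987),
    "2015 agency adjudication": (0.5, 2015),
    "2019 agency adjudication": (0.6, 2019),
}

print(top_k(candidates, half_life_years=float("inf")))  # no decay
print(top_k(candidates))  # 10-year half-life
```

On its face the 1987 ruling is the most relevant. With a 10-year half-life, though, it drops out of the top two, and nothing in the result list signals that it was demoted.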