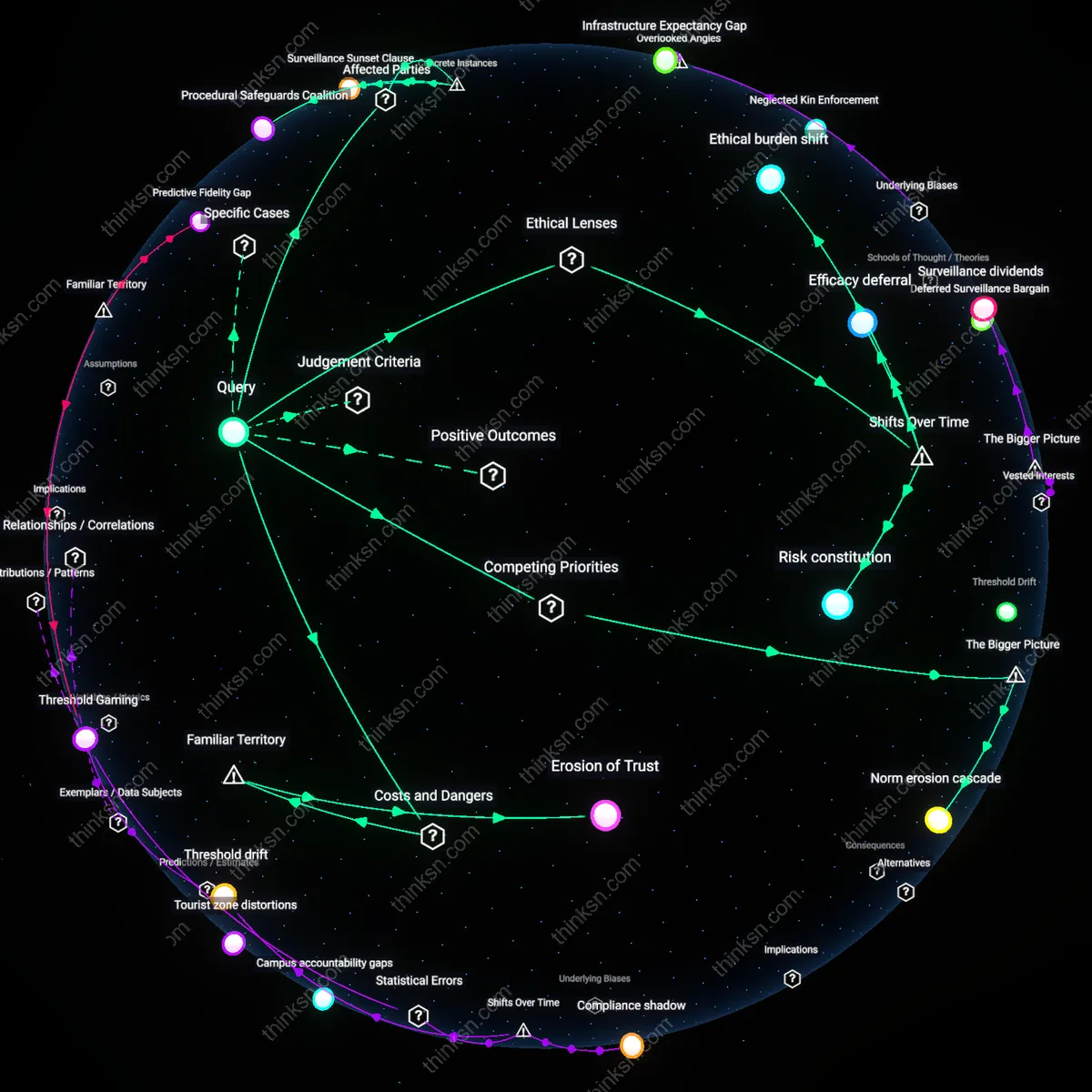

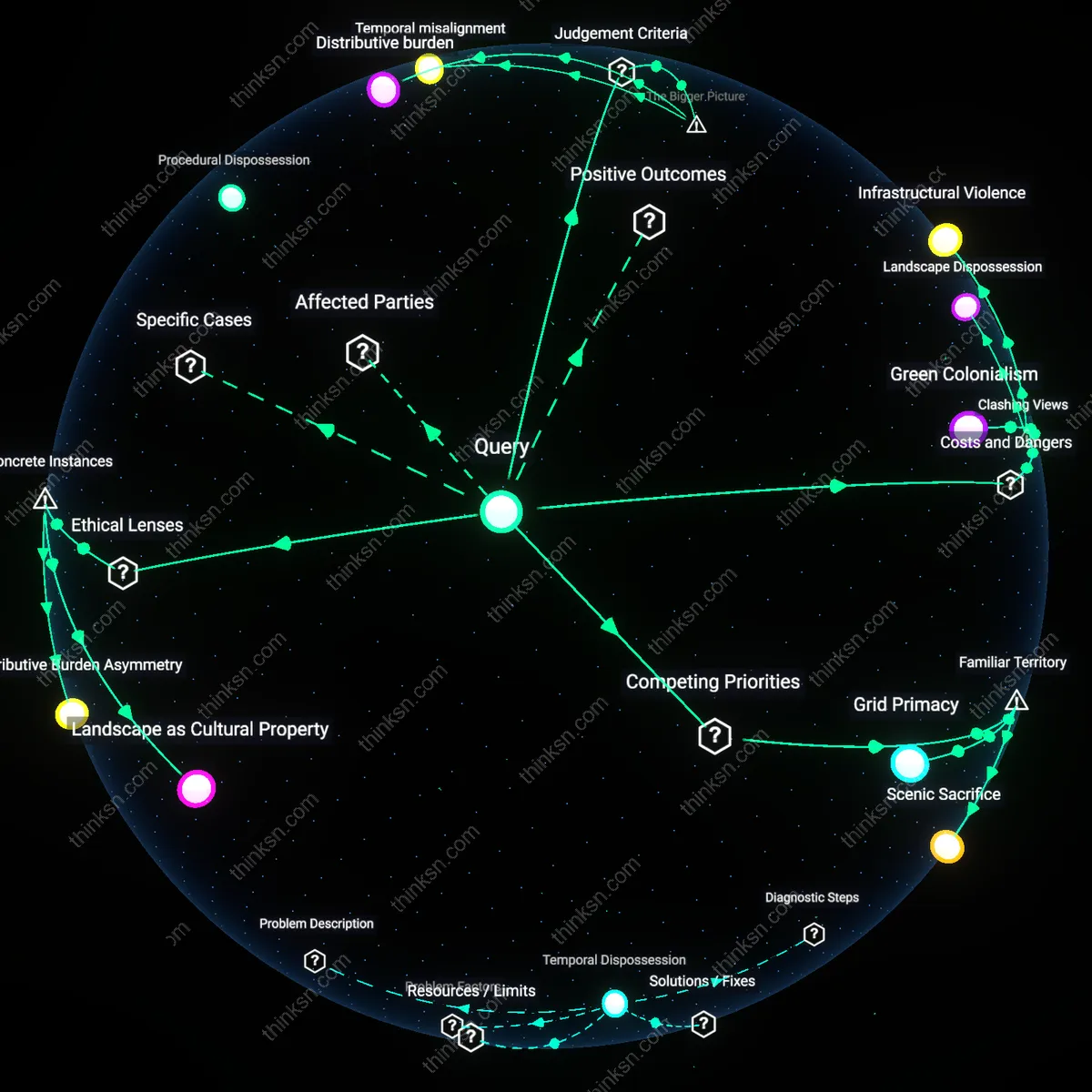

Do More Surveillance Cameras Actually Reduce Crime or Harm Ethics?

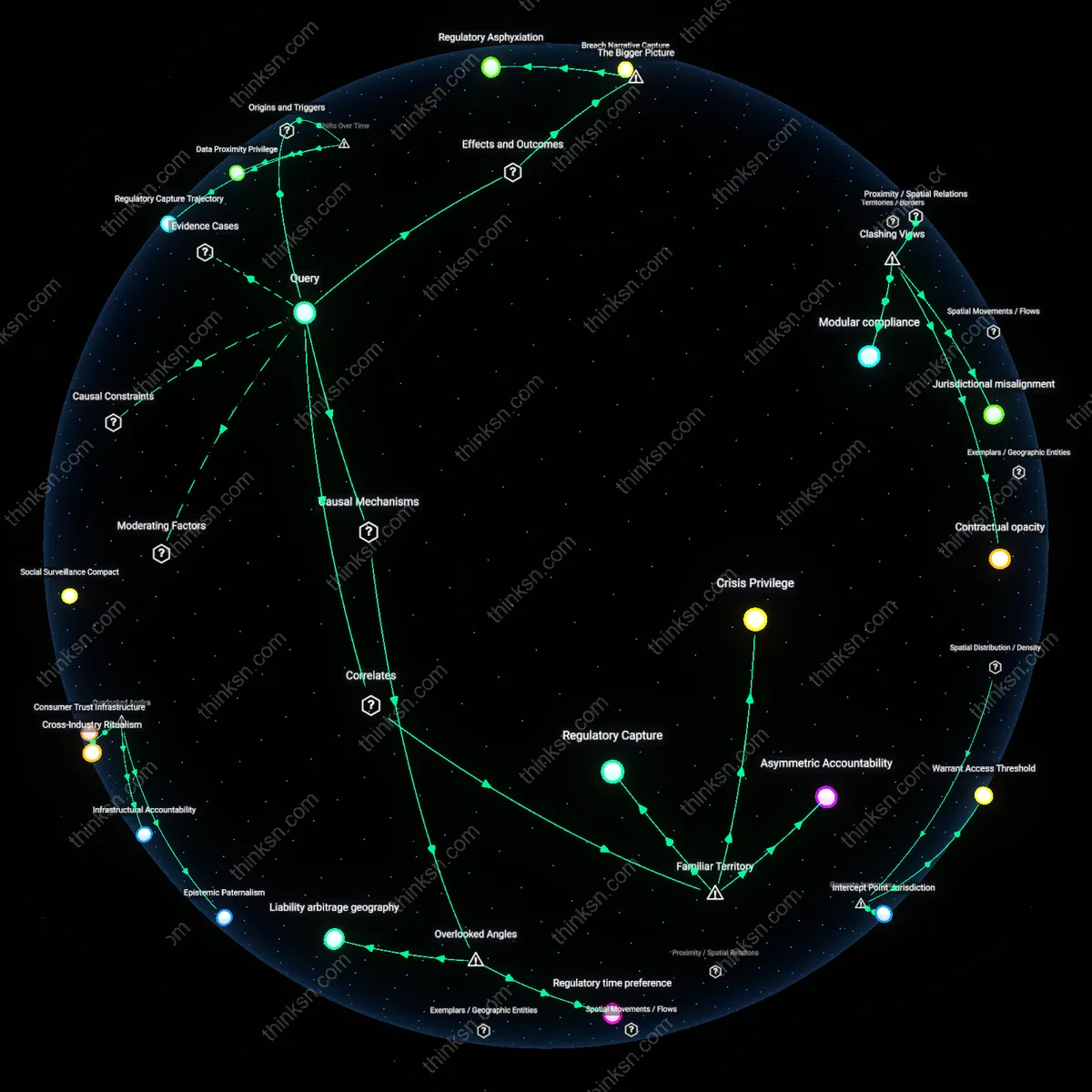

Analysis reveals 10 key thematic connections.

Key Findings

Procedural Safeguards Coalition

A policy analyst can balance ethical concerns about surveillance expansion by institutionalizing independent oversight through civilian review boards. One example is the Baltimore Community Oversight Advisory Board, established after the 2015 Freddie Gray protests, where sustained community pressure led to real-time auditing of aerial surveillance programs. This mechanism matters because it embeds accountability as an operational requirement rather than an afterthought, and it distributes legitimacy across affected residents, police, and city officials, so that procedural inclusion shapes both abuse risk and public acceptance. Oversight thus functions not merely as a constraint but as bridge-building infrastructure among distrustful parties.

Targeted Deployment Threshold

A policy analyst can justify limited surveillance deployment only when granular crime data meets a statistical threshold of repeat victimization. The Chicago Police Department’s 2017 Strategic Decision Support Centers illustrate this: they confined predictive patrols to specific blocks with three or more violent incidents in six months, reducing blanket monitoring while increasing tactical precision. The approach works because it replaces broad suspicion with actuarial specificity, creating a measurable boundary between intervention and overreach. Quantifiable triggers, not political urgency, can thereby calibrate ethical trade-offs in real-time public safety decisions.
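The threshold rule described here (three or more violent incidents on a block within six months) can be sketched in a few lines of Python. This is only an illustration of the rule as stated, not the department's actual system: the function name `blocks_meeting_threshold`, the block identifiers, the sample incidents, and the choice to treat six months as 183 days are all assumptions made for the example.

```python
from collections import Counter
from datetime import date, timedelta

# Assumed parameters, taken from the rule as described in the text.
MIN_INCIDENTS = 3   # "three or more violent incidents"
WINDOW_DAYS = 183   # "six months", approximated as 183 days (assumption)


def blocks_meeting_threshold(incidents, as_of,
                             min_incidents=MIN_INCIDENTS,
                             window_days=WINDOW_DAYS):
    """Return the set of block IDs with at least `min_incidents`
    incidents in the `window_days` ending on `as_of`."""
    window_start = as_of - timedelta(days=window_days)
    counts = Counter(
        block for block, when in incidents
        if window_start < when <= as_of
    )
    return {block for block, n in counts.items() if n >= min_incidents}


# Made-up incident records: (block ID, incident date).
incidents = [
    ("block-A", date(2017, 1, 10)),
    ("block-A", date(2017, 3, 2)),
    ("block-A", date(2017, 5, 20)),
    ("block-B", date(2017, 4, 1)),
    ("block-B", date(2017, 5, 1)),
    ("block-C", date(2016, 6, 1)),   # falls outside the window
    ("block-C", date(2017, 2, 1)),
    ("block-C", date(2017, 6, 1)),
]

eligible = blocks_meeting_threshold(incidents, as_of=date(2017, 6, 30))
print(sorted(eligible))
```

Only `block-A` qualifies in this sample: `block-B` has two incidents, and one of `block-C`'s three incidents is older than the window. Writing the trigger as an explicit, auditable function is the point of the argument above, since anyone reviewing the deployment can check exactly which blocks cross the line and why.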

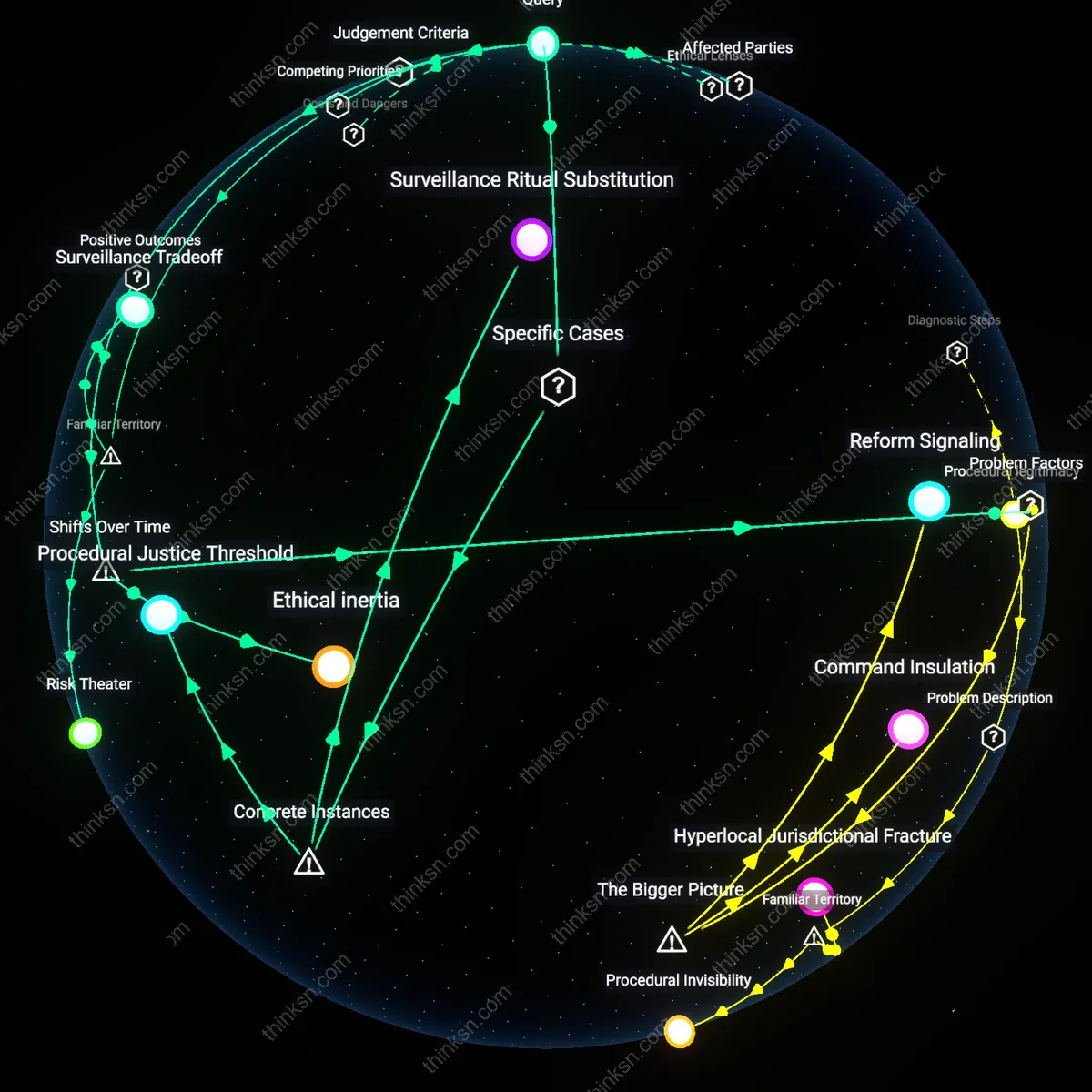

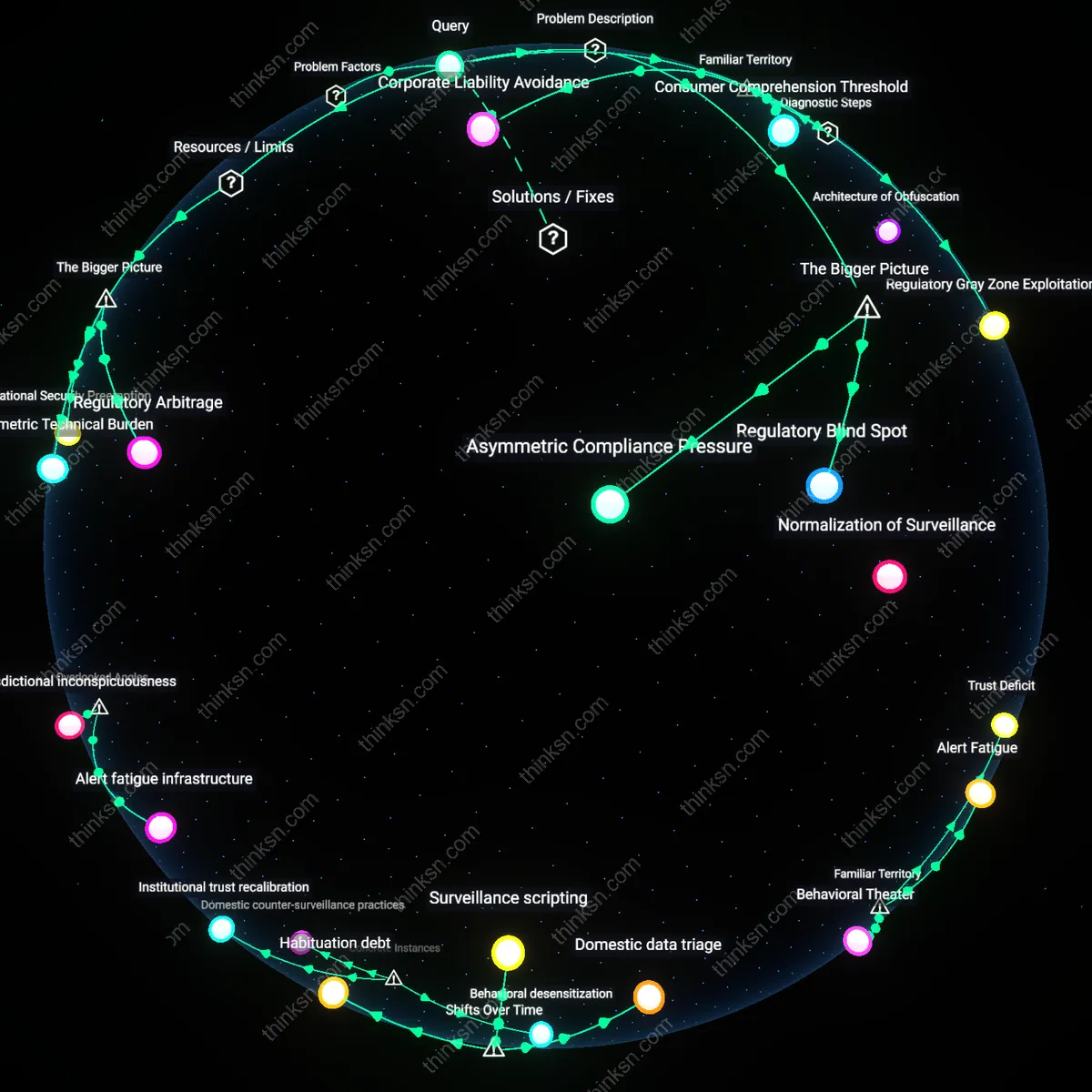

Surveillance Sunset Clause

A policy analyst can ethically manage uncertain evidence by building time-bound expiration into surveillance initiatives. The London Metropolitan Police’s facial recognition trials between 2016 and 2020 followed this pattern: each deployment was restricted to a 90-day pilot subject to judicial reauthorization based on efficacy reviews by the Biometrics Commissioner. This structure forces recurring justification, which transforms speculative tools into testable policies and gives affected communities periodic leverage to contest continuation. Temporal limits therefore do not weaken security policy; they generate iterative learning and democratic renegotiation where evidence remains contested.
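A sunset clause of this kind amounts to a simple date rule: a pilot lapses after a fixed period unless it is explicitly reauthorized. The sketch below illustrates that logic under stated assumptions. The `Pilot` class, its field names, and the choice to restart the 90-day clock from the reauthorization date are hypothetical and are not drawn from any actual ordinance or police procedure.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

PILOT_DAYS = 90  # pilot length as described in the text


@dataclass
class Pilot:
    """A hypothetical time-limited surveillance pilot."""
    name: str
    started: date
    reauthorized_on: Optional[date] = None  # set only after a review approves

    def expires(self) -> date:
        # Assumption: reauthorization restarts the 90-day clock.
        clock_start = self.reauthorized_on or self.started
        return clock_start + timedelta(days=PILOT_DAYS)

    def is_active(self, today: date) -> bool:
        # The pilot lapses by default on its expiry date.
        return today < self.expires()


pilot = Pilot(name="demo-pilot", started=date(2020, 1, 1))
print(pilot.expires())                      # 2020-03-31
print(pilot.is_active(date(2020, 3, 30)))   # True
print(pilot.is_active(date(2020, 3, 31)))   # False

# A review approves continuation, which restarts the clock.
pilot.reauthorized_on = date(2020, 3, 25)
print(pilot.is_active(date(2020, 4, 15)))   # True
```

The design choice worth noting is the default: without an affirmative reauthorization the program stops, which places the burden of justification on those who want it to continue.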

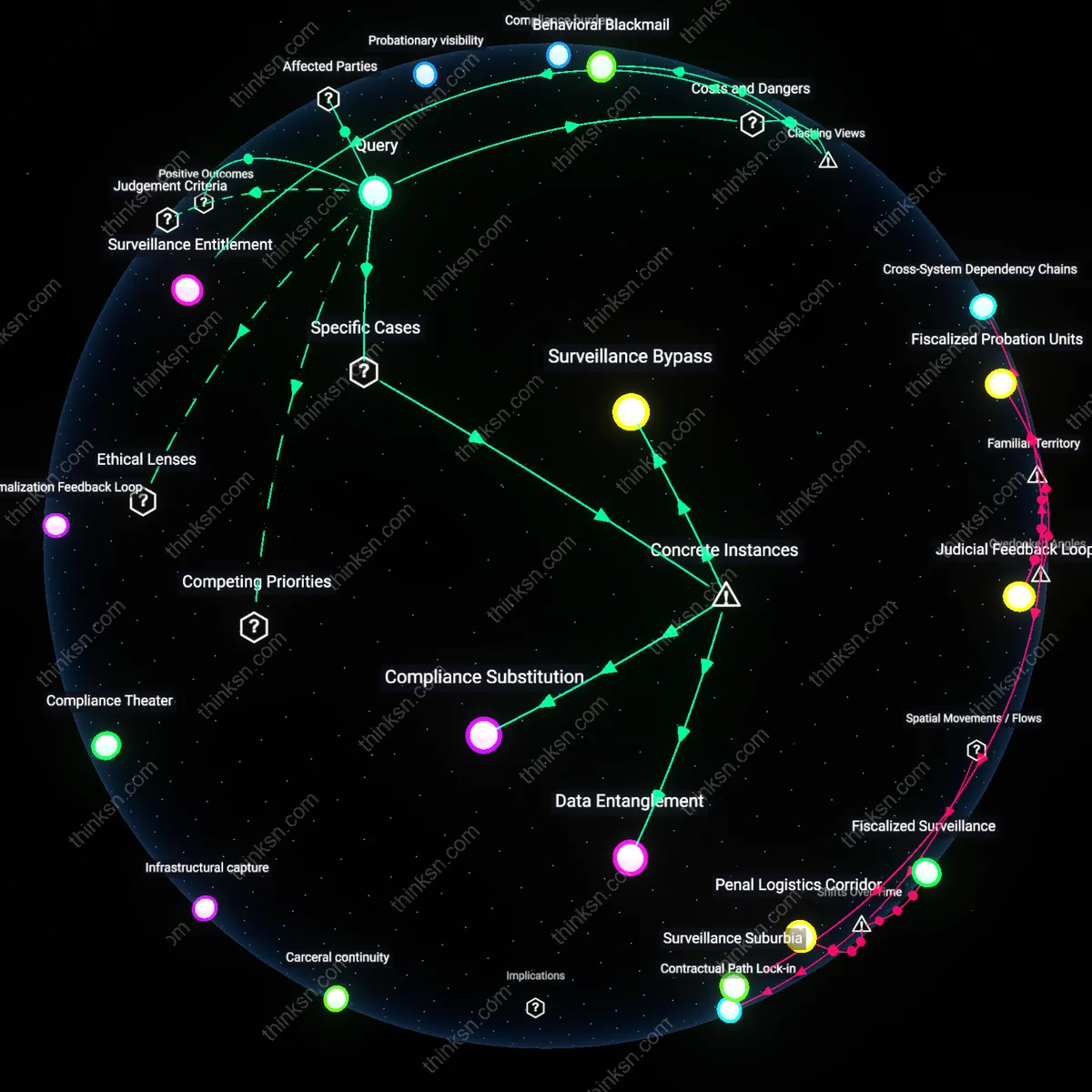

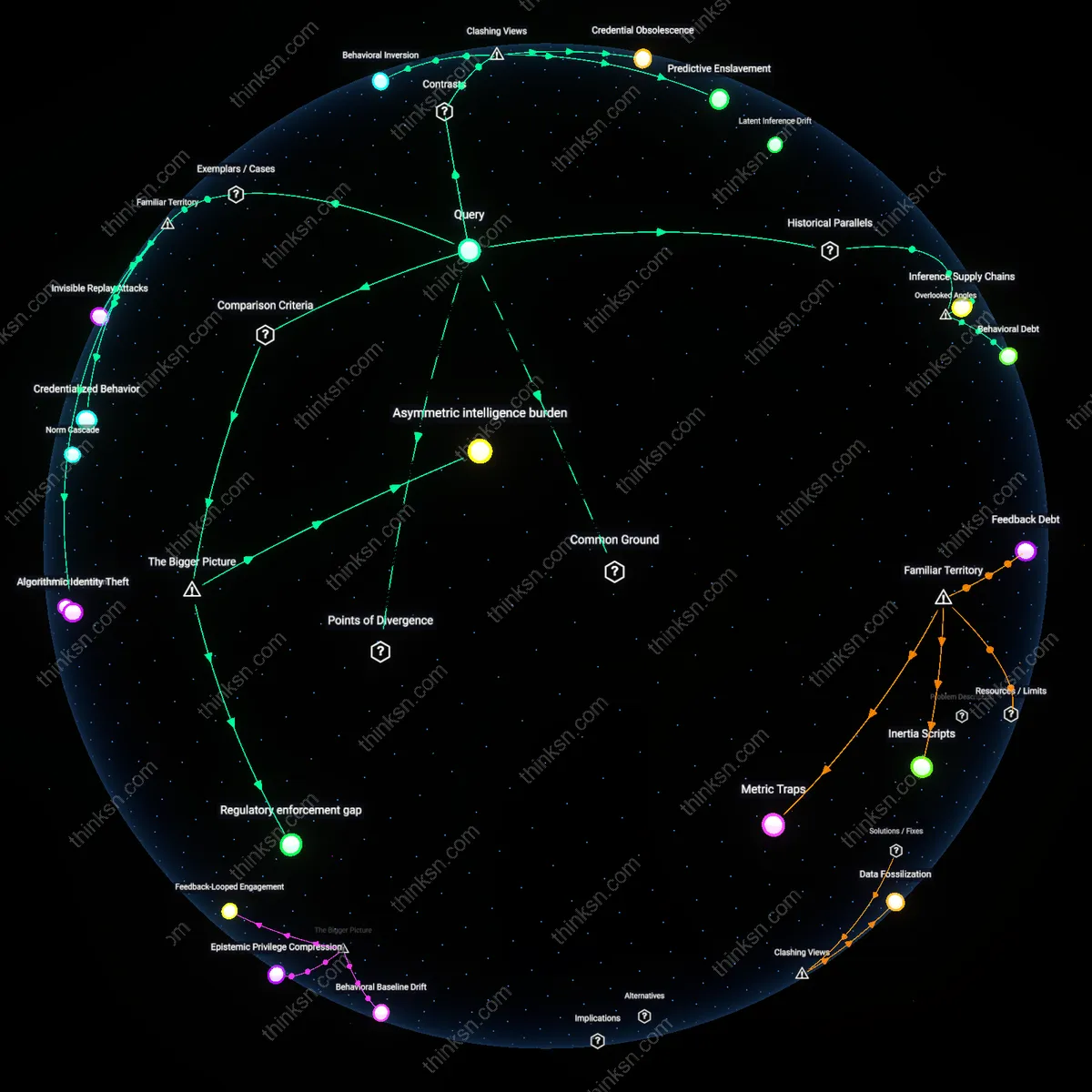

Erosion of Trust

A policy analyst should resist normalizing surveillance expansion because it systematically erodes community trust in law enforcement, particularly in historically over-policed neighborhoods like Chicago’s South Side, where persistent camera deployment without clear public safety outcomes deepens suspicion between residents and police. This damage operates through feedback loops in which visible monitoring hardens perceptions of state hostility, reducing cooperation with investigations and increasing social fragmentation. These effects persist even if crime rates remain unchanged. While the public readily associates surveillance with privacy loss, it less often recognizes how ineffective surveillance can actively degrade the social infrastructure necessary for crime prevention.

Normalization of Coercion

A policy analyst must treat the adoption of surveillance in high-risk areas as an incremental step toward normalized state coercion, exemplified by how predictive policing algorithms in cities like Los Angeles embed expansion into standard operating procedures regardless of empirical success. This dynamic operates through institutional path dependency: once cameras and data collection infrastructures are in place, they become justified by their own existence rather than by results, which shifts the burden of proof onto critics to demonstrate harm rather than onto proponents to prove utility. Although people readily connect surveillance to government overreach, they underestimate how routine bureaucratic maintenance of systems can entrench control practices absent any measurable benefit.

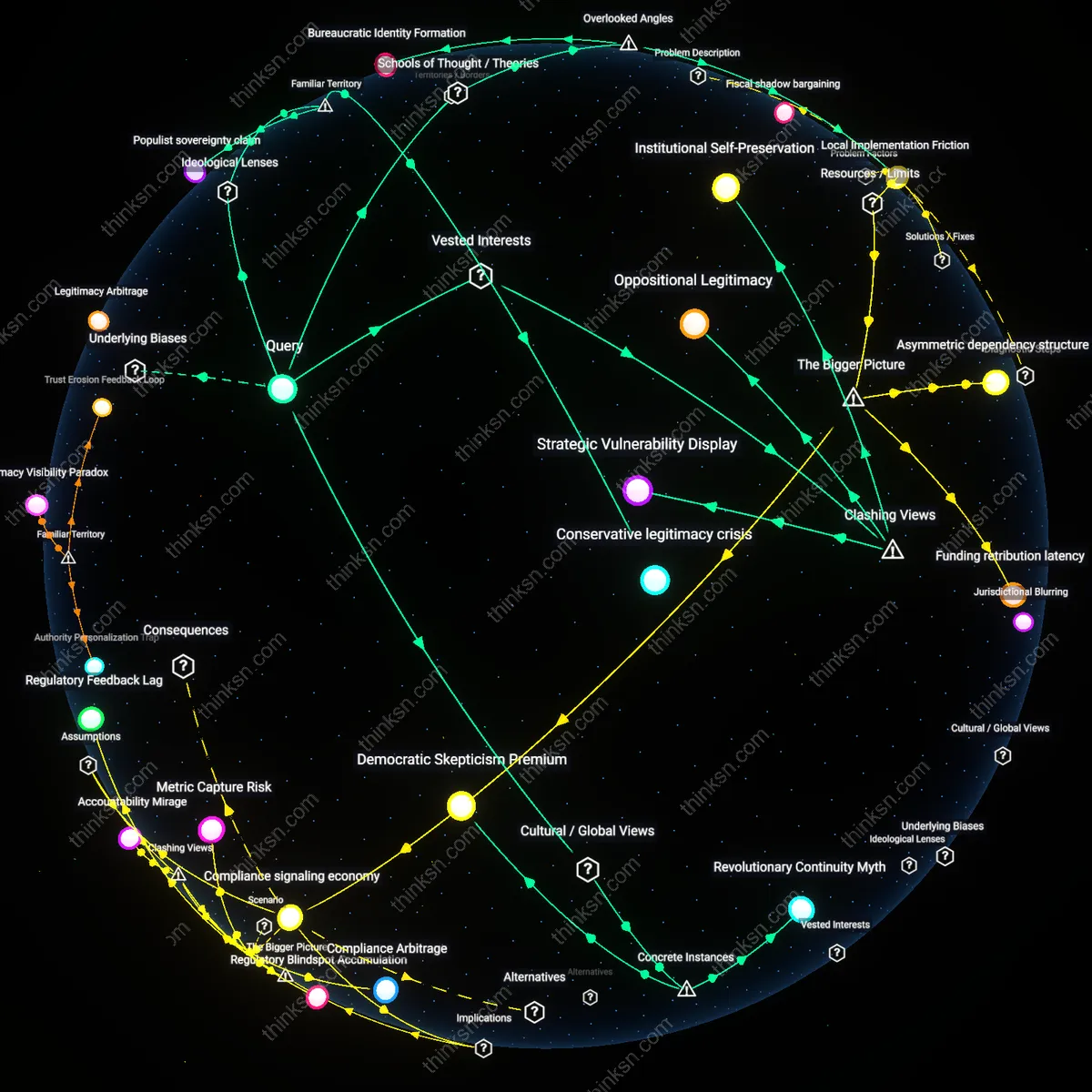

Budgetary Capture

A policy analyst can balance ethical concerns about surveillance by resisting its expansion when crime reduction evidence is weak, because municipal budget cycles create hard-to-reverse resource allocations that favor visible security technologies over less visible social investments. Police departments in cities like Chicago and Baltimore become operationally dependent on surveillance infrastructure through federal urban security grants, so early funding commitments structurally lock out alternative interventions, such as community outreach or mental health response units, by exhausting discretionary funds. This dynamic is rarely reversible mid-cycle. It shows how financial path dependency, not operational efficacy, often determines the longevity of surveillance programs, making budgetary decisions a more consequential ethical lever than oversight mechanisms.

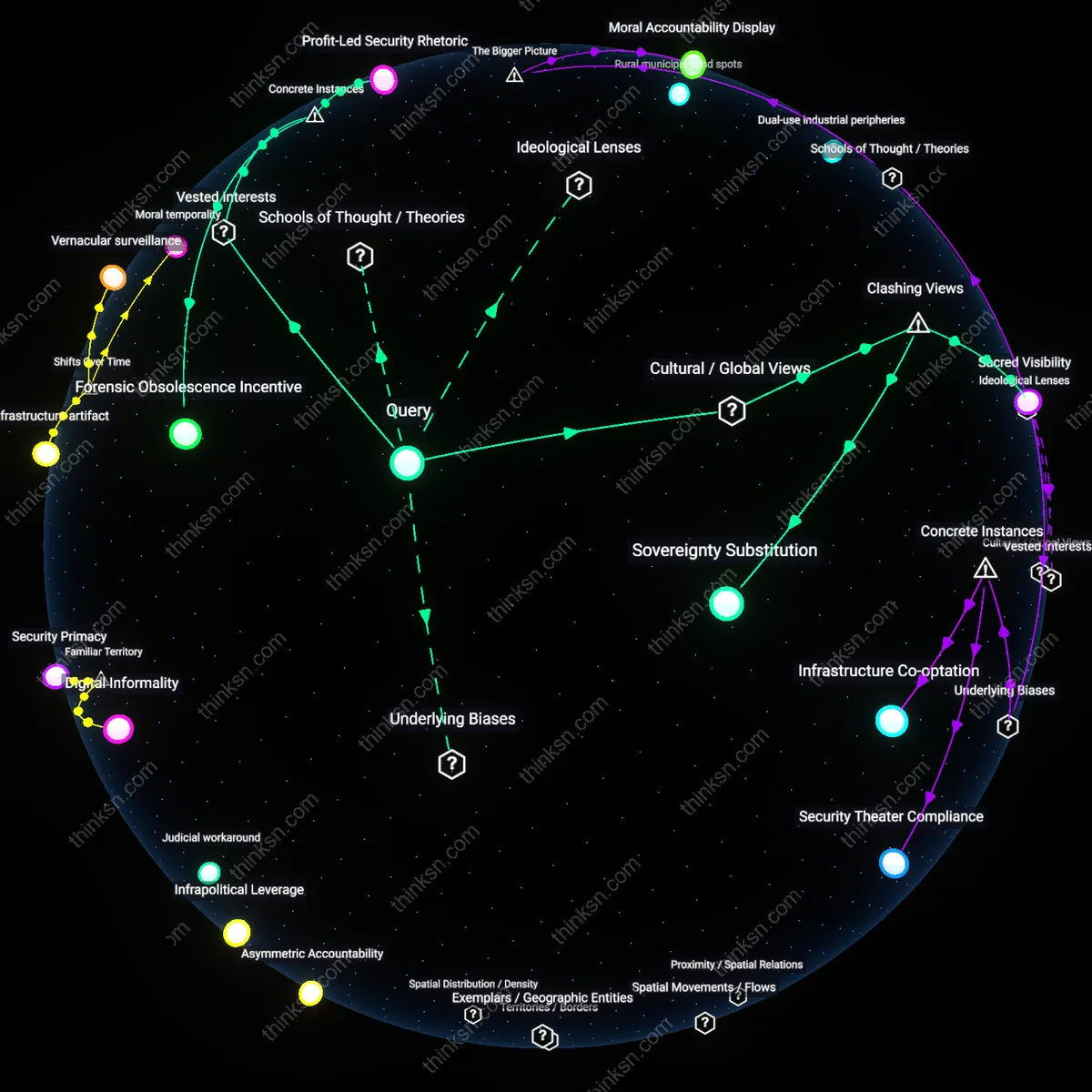

Norm Erosion Cascade

A policy analyst should constrain surveillance expansion in high-risk areas despite uncertain crime outcomes, because incremental deployment normalizes data collection practices that later enable function creep across jurisdictions. This was seen when license plate readers initially justified for violent crime in New Orleans were later used for immigration enforcement in Texas border counties. Regulatory inattention during pilot phases allows mission drift through interoperability agreements between fusion centers and federal agencies, which rely on shared data platforms that lack public transparency. This dynamic illustrates how localized, experimental programs serve as institutional entry points for broad surveillance regimes, making early ethical resistance critical to preventing systemic norm collapse.

Ethical Burden Shift

A policy analyst must transfer the moral responsibility for justifying surveillance to elected legislators by invoking the republican principle of democratic accountability, which emerged decisively during the post-1960s expansion of administrative governance. This transfer works through formal rulemaking procedures in bodies like city councils or congressional committees, where legitimacy is derived from electoral mandates rather than technocratic assessments, thereby embedding ethical contestation within representative institutions. The less obvious point is that analysts historically did not bear the primary ethical weight: this responsibility was displaced onto politicians after the Warren Court era heightened scrutiny of state power, which makes bureaucratic neutrality a shield rather than a failure.

Efficacy Deferral

A policy analyst should postpone definitive claims about surveillance effectiveness by institutionalizing iterative pilot programs governed by independent review boards, a mechanism normalized after the 9/11 era’s backlash against warrantless monitoring. This deferral operates through temporary authorization frameworks, such as sunset clauses in local surveillance ordinances, which embed provisionalism into law while allowing data accumulation under civil liberties oversight. The underappreciated consequence is that uncertainty is no longer a barrier to policy but has become a structured feature of governance, a product of the post-Chicago School shift toward experimentalist regulation in urban security.

Risk Constitution

A policy analyst renegotiates ethical limits by codifying surveillance thresholds within risk-scoring protocols modeled on actuarial justice practices that gained dominance in U.S. policing after the 1994 Crime Bill incentivized data-driven deployment. This balancing occurs through algorithmic benchmarks embedded in CompStat-era command systems, where acceptable intrusion is tied to statistically defined hotspots, thus translating moral boundaries into operational triggers. The shift from discretionary oversight to automated thresholds since the 2000s reveals how ethical judgment has been silently reconstituted as calculated exposure rather than principle, producing a new form of procedural morality native to predictive governance.