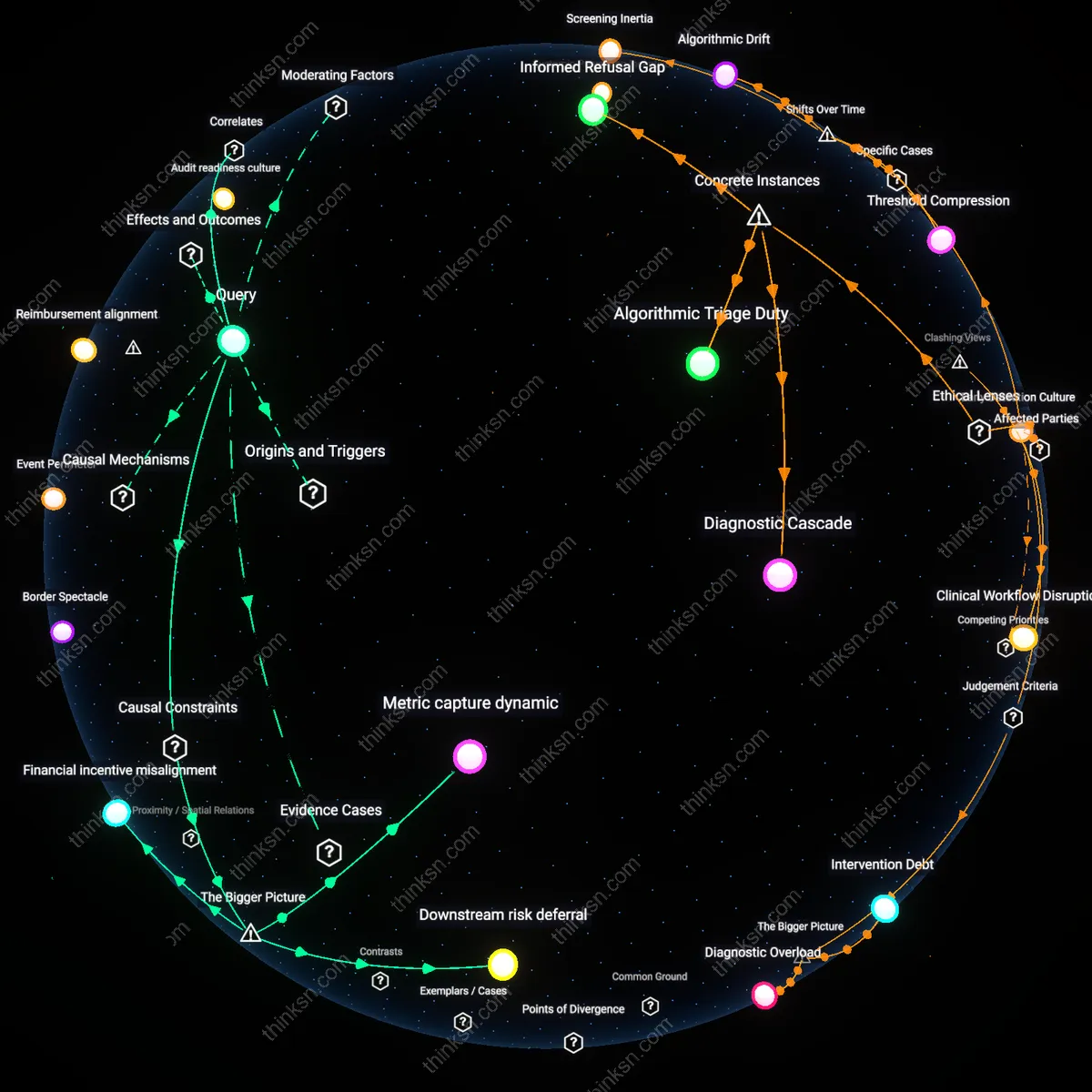

Diagnostic Overload

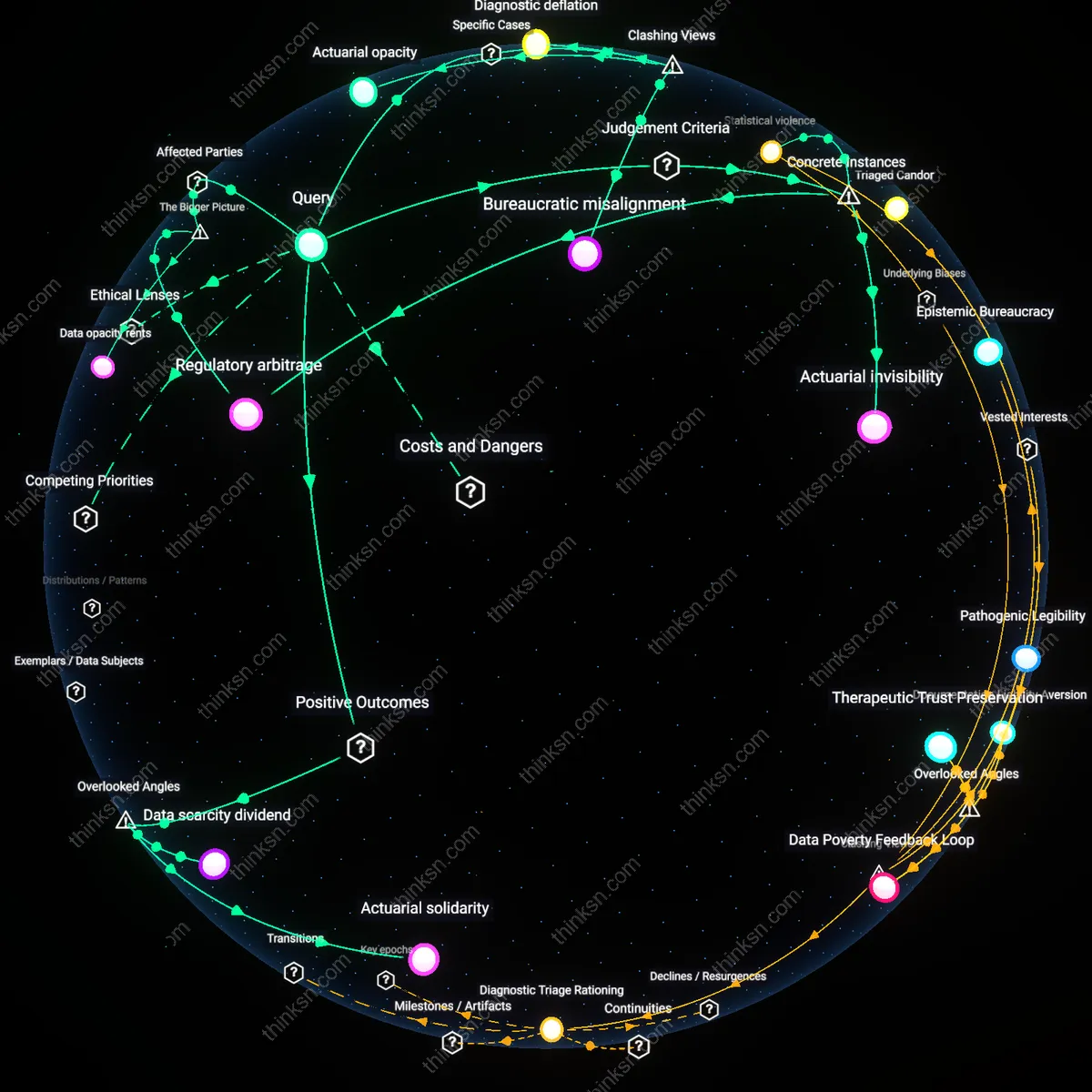

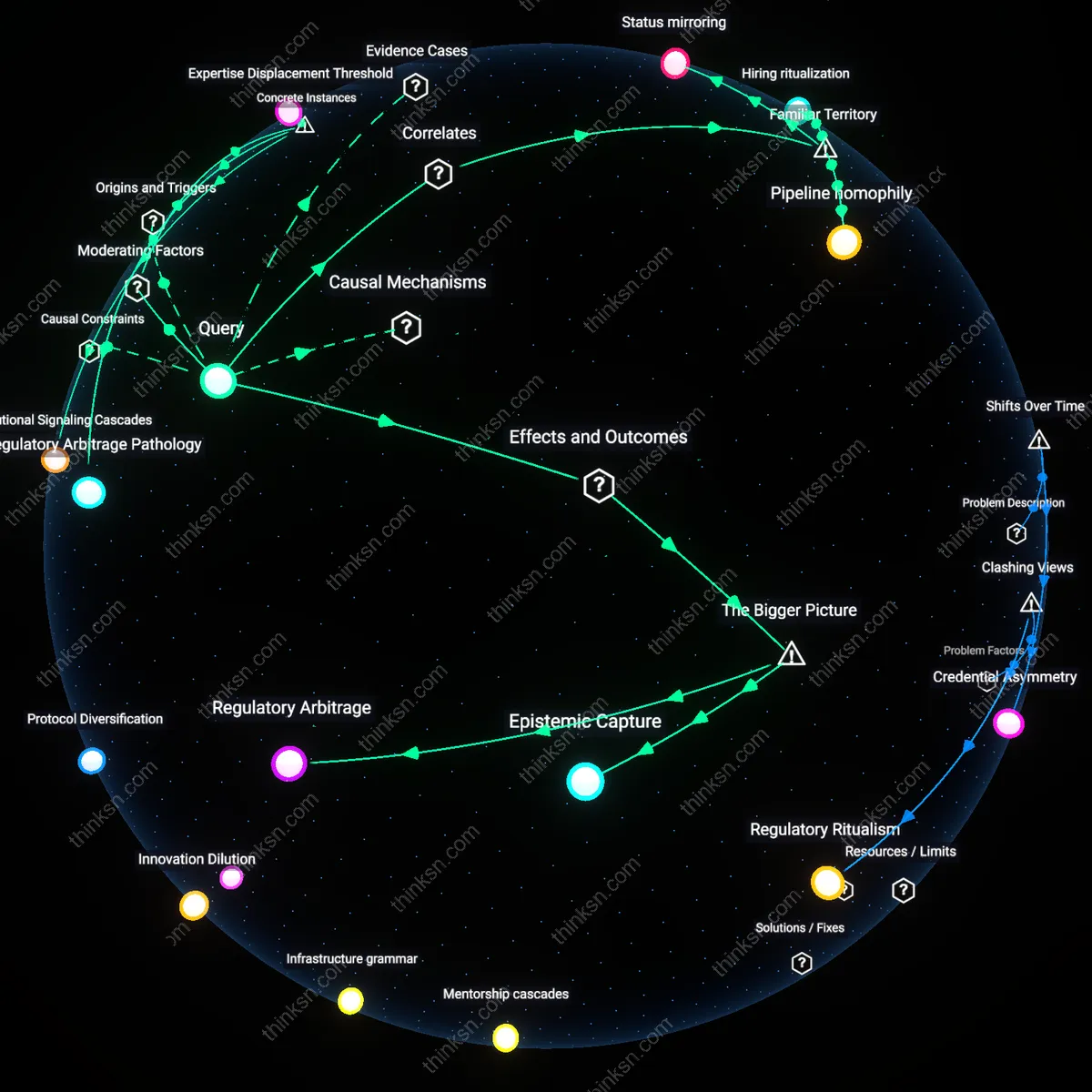

Automated screening alerts in hospital systems harm low-risk patients when primary care physicians, pressured by performance metrics tied to cancer detection rates, act on every alert even without clinical suspicion, initiating cascades of invasive follow-ups that carry greater morbidity than the disease they are meant to catch. This occurs because electronic health record protocols treat all positive screens as urgent and override physician discretion during time-constrained visits. The problem is sharpest in safety-net hospitals, where patient loads exceed capacity and alert fatigue is all but inevitable. The underappreciated consequence is that algorithmic risk thresholds designed to maximize sensitivity generate so many false positives that the system becomes iatrogenic by design: the effort to eliminate missed cancers systematically increases net patient harm through unnecessary biopsies and surgeries.
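The false-positive arithmetic behind this claim can be sketched with Bayes' rule. The sensitivity, specificity, and prevalence figures below are hypothetical values chosen for illustration, not measurements from any screening program, and `positive_predictive_value` is a name introduced here.

```python
def positive_predictive_value(sensitivity: float, specificity: float, prevalence: float) -> float:
    """Probability that a patient with a positive screen actually has the disease."""
    # Fraction of the screened population that is diseased AND flagged.
    true_positive_rate = sensitivity * prevalence
    # Fraction of the screened population that is healthy BUT flagged.
    false_positive_rate = (1.0 - specificity) * (1.0 - prevalence)
    return true_positive_rate / (true_positive_rate + false_positive_rate)


# Hypothetical test characteristics (assumption): 95% sensitivity, 90% specificity.
# Only the prevalence changes between cohorts.
for prevalence in (0.05, 0.01, 0.001):
    ppv = positive_predictive_value(0.95, 0.90, prevalence)
    print(f"prevalence={prevalence:.3f}  PPV={ppv:.4f}")
```

Under these assumed numbers, about one positive screen in three is a true cancer at 5% prevalence, but fewer than one in a hundred at 0.1% prevalence. The test has not changed; only the population has.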

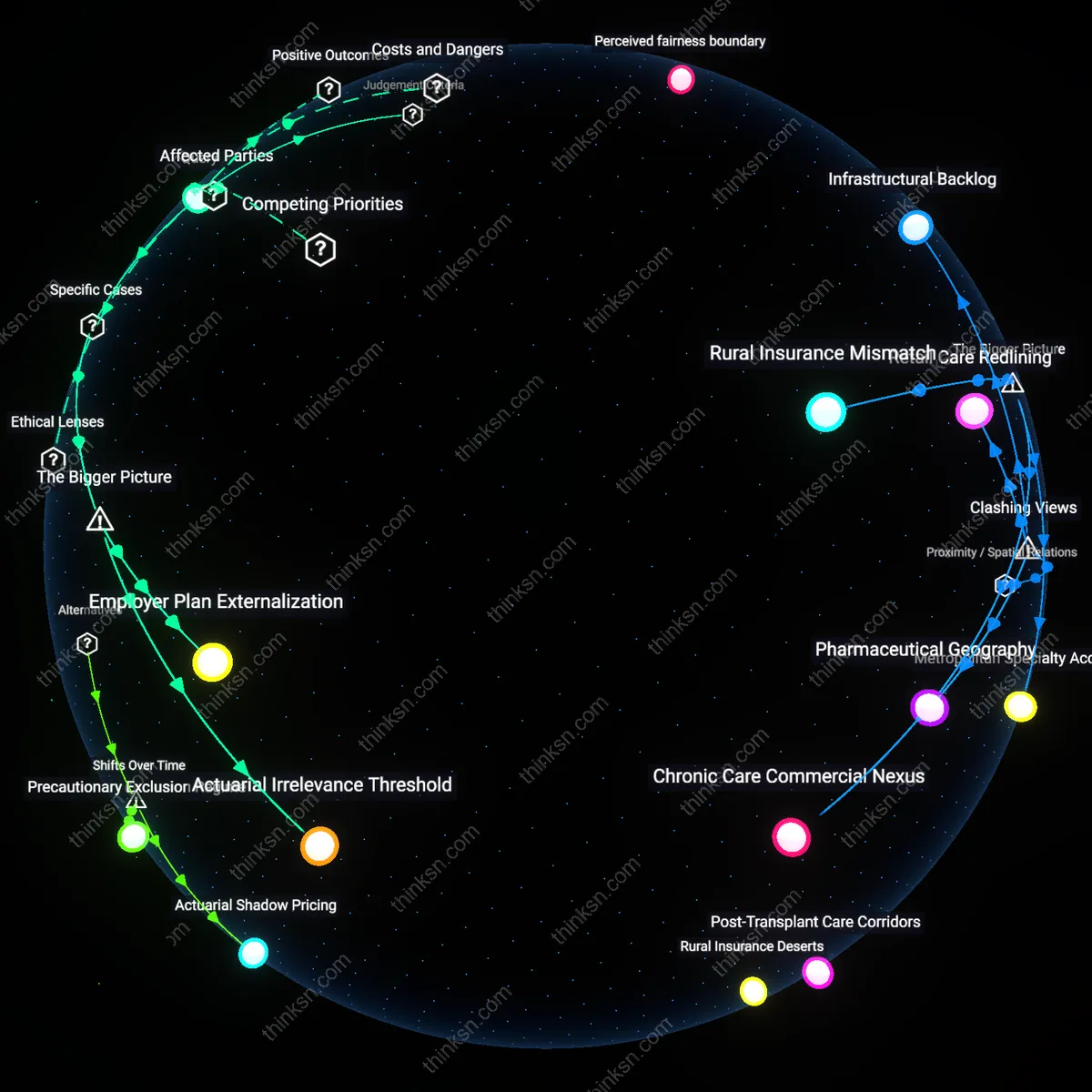

Equity Drift

Automated screening alerts lead to disproportionate harm in low-risk, underserved populations when hospital systems prioritize regulatory compliance over contextual clinical judgment, channeling socially marginalized patients—often lacking health literacy or continuity of care—into rigid screening algorithms that ignore baseline risk. Because federally funded institutions face penalties for low screening volumes but not for false-positive harms, administrators incentivize high alert response rates, pressuring clinicians to treat algorithmic prompts as mandatory regardless of individual risk, embedding structural bias in care pathways. The overlooked mechanism is that equity mandates, intended to reduce disparities in access, become perverted into procedural requirements that increase physical and psychological burdens on the very populations they aim to protect, especially in urban public hospitals serving predominantly Black and Latino communities.

Intervention Debt

Automated screening alerts generate long-term patient harm when health systems outsource risk stratification to centralized algorithms that do not account for future care complexity. Low-risk individuals accumulate minor but irreversible interventions, such as CT scans or colonoscopies, that degrade resilience and leave them more vulnerable to future diagnostic errors. Teaching hospitals adopting enterprise-wide screening protocols optimize for short-term process compliance but inadvertently saturate patient records with incidental findings that demand surveillance, creating a clinical momentum that doctors feel compelled to follow despite low pretest probability. The non-obvious outcome is that each deferred scan or ignored alert later carries reputational and legal risk for clinicians, reinforcing a system in which the cumulative burden of 'preventive' care exceeds its benefits, especially in aging populations with limited life expectancy.

Diagnostic Efficiency Gain

Automated screening alerts improve early cancer detection rates by ensuring consistent follow-up for borderline cases that might otherwise be overlooked in high-volume hospital settings like those in the Mayo Clinic system, where clinician workload can delay manual review. These systems flag subtle imaging patterns or lab anomalies using validated algorithms tied to electronic health records, reducing human error in triage—particularly in rural referral hospitals with limited radiology staffing. The non-obvious insight is that even low-risk patients benefit from this heightened surveillance because the marginal cost of false positives is outweighed by the system-wide increase in diagnostic sensitivity, especially for fast-growing or atypical cancers not predicted by standard risk models.
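Whether false positives are in fact outweighed depends on how their harms are weighted against true detections. One way to make that tradeoff explicit is the net-benefit calculation from decision curve analysis; it is not mentioned in the text above and is offered here only as an assumption about how the claim could be checked. The cohort counts and the threshold probability are hypothetical.

```python
def net_benefit(true_positives: int, false_positives: int,
                n_screened: int, threshold_probability: float) -> float:
    """Net benefit per screened patient, in units of true positives.

    threshold_probability is the risk level at which a clinician would be
    indifferent between working up and not working up a patient; it sets
    how heavily each false positive is penalized.
    """
    if not 0.0 < threshold_probability < 1.0:
        raise ValueError("threshold_probability must be strictly between 0 and 1")
    harm_weight = threshold_probability / (1.0 - threshold_probability)
    return (true_positives / n_screened) - harm_weight * (false_positives / n_screened)


# Hypothetical cohorts of 10,000 screened patients each (assumed numbers).
low_risk = net_benefit(true_positives=9, false_positives=1000,
                       n_screened=10_000, threshold_probability=0.02)
high_risk = net_benefit(true_positives=450, false_positives=950,
                        n_screened=10_000, threshold_probability=0.02)
print(f"low-risk cohort net benefit:  {low_risk:+.5f}")
print(f"high-risk cohort net benefit: {high_risk:+.5f}")
```

With these assumed inputs the same alerting policy yields a positive net benefit in the high-risk cohort and a negative one in the low-risk cohort, so the conclusion turns on the prevalence and the harm weight rather than on sensitivity alone.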

Clinical Workflow Disruption

Automated alerts generate cognitive overload for overburdened primary care physicians in integrated systems like Kaiser Permanente, leading to delayed responses for all patients as clinicians spend disproportionate time justifying or dismissing low-yield warnings for low-risk individuals. The alert system operates through EHR-integrated rule engines that lack real-time risk recalibration, causing recurrent interruptions that erode trust in the tool—a phenomenon observed in University of Michigan Health studies on alert fatigue. The counterintuitive result is that harm emerges not from the test itself but from the breakdown in care coordination, revealing that automation in low-prevalence contexts can degrade overall care quality even when individual alerts are technically accurate.

Diagnostic Cascade

Automated screening alerts in Ontario’s primary care networks between 2018 and 2021 led to unnecessary diagnostic procedures for low-risk patients because the algorithms triggered follow-up imaging without incorporating individualized risk assessment, causing physical harm and anxiety among patients who met no clinical threshold for intervention. This mechanism operated through the province’s integrated electronic medical record system, which propagated alerts based on age-based heuristics alone. It shows how protocol-driven automation can override clinical discretion even where doing so is ethically unjustifiable under a principle-based deontology that prioritizes non-maleficence. The non-obvious insight is that systemic adherence to screening benchmarks, intended to standardize care, can generate iatrogenic harm in low-risk populations when decoupled from contextual judgment.

Algorithmic Triage Duty

In the Veterans Health Administration rollout of the Predictive Analytics for Cancer Screening tool in 2019, veterans with low baseline cancer risk received repeated alerts due to race-adjusted algorithms that falsely elevated their perceived risk, leading to unjustified colonoscopies and resource diversion from higher-risk peers. This occurred within a system governed by utilitarian health distribution principles, where maximizing population-level detection inadvertently violated individual justice by imposing burdens on those least likely to benefit, exposing a conflict between aggregated efficiency and personalized ethical obligation. The underappreciated dynamic is that risk stratification models incorporating demographic proxies can create discriminatory duty allocations even when clinically unsubstantiated.

Informed Refusal Gap

At Kaiser Permanente Southern California in 2020, automated lung cancer screening alerts prompted radiologists to initiate low-dose CT scans for long-term smokers at minimal current risk, but patient consent processes failed to disclose the low probability of benefit, contravening the legal doctrine of informed refusal rooted in the patient autonomy principle from Schloendorff v. Society of New York Hospital. The systematic omission of probabilistic context in alert communications disrupted shared decision-making within an otherwise evidence-based care model, revealing how automation can erode fiduciary responsibility when ethical frameworks are reduced to procedural compliance. The overlooked issue is that standardized alerts, while improving screening rates, may compromise the moral obligation to support refusal as a legitimate medical choice.

Screening Inertia

Automated screening alerts in U.S. Veterans Health Administration hospitals after 2010 began producing disproportionate harm for low-risk patients due to protocol rigidification following the system’s shift toward metric-driven accountability. As performance benchmarks for cancer detection rates became tied to facility funding and clinician evaluations, alerts triggered by algorithms—originally designed as optional flags—were reinterpreted as mandatory workflow steps, leading to overtesting even when clinical judgment suggested otherwise. This transformation from advisory tools to de facto requirements, particularly evident in post-2015 audits of colorectal screening in low-incidence cohorts, reveals how quality measurement systems can invert safety goals when historical shifts toward standardization override contextual nuance. The underappreciated consequence is that the very standardization meant to reduce disparities has generated iatrogenic risk for previously excluded populations now swept into routinized pathways.

Algorithmic Drift

In England’s National Health Service, the rollout of the QCancer risk prediction model between 2012 and 2018 led to increasing false-positive alerts for low-risk women in cervical cancer screening after the algorithm was recalibrated to prioritize sensitivity over specificity in response to public inquiries about missed diagnoses. As the model incorporated broader demographic inputs to meet evolving equity mandates, its original precision in low-risk stratification eroded, causing primary care providers in high-volume urban clinics—such as those in inner London boroughs—to face alert fatigue and cascade referrals for unnecessary colposcopies. This temporal shift from clinician-mediated risk assessment to automated high-sensitivity flagging exposes how retrospective adjustments to algorithms, justified by past under-screening harms, can generate new patterns of overdiagnosis that are structurally invisible to the same equity frameworks driving the changes.

Threshold Compression

After Kaiser Permanente Northern California adopted integrated electronic health record alerts for lung cancer screening in 2015, low-risk former smokers with minimal pack-year histories began receiving routine LDCT referrals due to automated misclassification of exposure duration following a 2013 shift in USPSTF guidelines. The system’s inability to dynamically update individual risk trajectories—especially among patients who had quit decades prior and aged into lower biological vulnerability—meant that alerts based on static historical data overrode emerging geriatric insights about declining cancer lethality in the elderly. This misalignment, documented in Sacramento and Oakland clinics by 2019, illustrates how once-flexible eligibility thresholds, when encoded into persistent digital workflows, become temporally decoupled from evolving clinical understanding, turning preventive tools into sources of radiologic harm for aging low-risk cohorts.
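The gap between a static flag and a re-evaluated rule can be sketched as follows. The default parameters reflect the 2013 USPSTF criteria as commonly summarized (age 55 to 80, at least 30 pack-years, current smoker or quit within 15 years). The `SmokingHistory` type, the function name, and the example patient are hypothetical and do not describe any vendor's EHR logic.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SmokingHistory:
    birth_year: int
    pack_years: float
    quit_year: Optional[int]  # None means the patient currently smokes


def eligible_for_ldct(history: SmokingHistory, as_of_year: int,
                      min_age: int = 55, max_age: int = 80,
                      min_pack_years: float = 30.0,
                      max_years_since_quit: int = 15) -> bool:
    """Re-evaluate eligibility for a given year instead of trusting a stored flag."""
    age = as_of_year - history.birth_year
    if not (min_age <= age <= max_age):
        return False
    if history.pack_years < min_pack_years:
        return False
    if history.quit_year is None:
        return True
    return (as_of_year - history.quit_year) <= max_years_since_quit


# Hypothetical patient: born 1950, 35 pack-years, quit in 2002.
patient = SmokingHistory(birth_year=1950, pack_years=35.0, quit_year=2002)

flag_stored_in_2015 = eligible_for_ldct(patient, as_of_year=2015)  # 13 years since quitting
recomputed_in_2019 = eligible_for_ldct(patient, as_of_year=2019)   # 17 years since quitting

print("flag stored in 2015:", flag_stored_in_2015)
print("recomputed in 2019: ", recomputed_in_2019)
```

A workflow that keeps firing on the stored 2015 flag would still refer this patient in 2019, although a rule evaluated at the time of the visit would not. Passing the evaluation year as an explicit argument is the design choice that keeps eligibility tied to the patient's current trajectory.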