Why Algorithmic Echo Chambers Reinforce Bias in Diverse Search

Analysis reveals 10 key thematic connections.

Key Findings

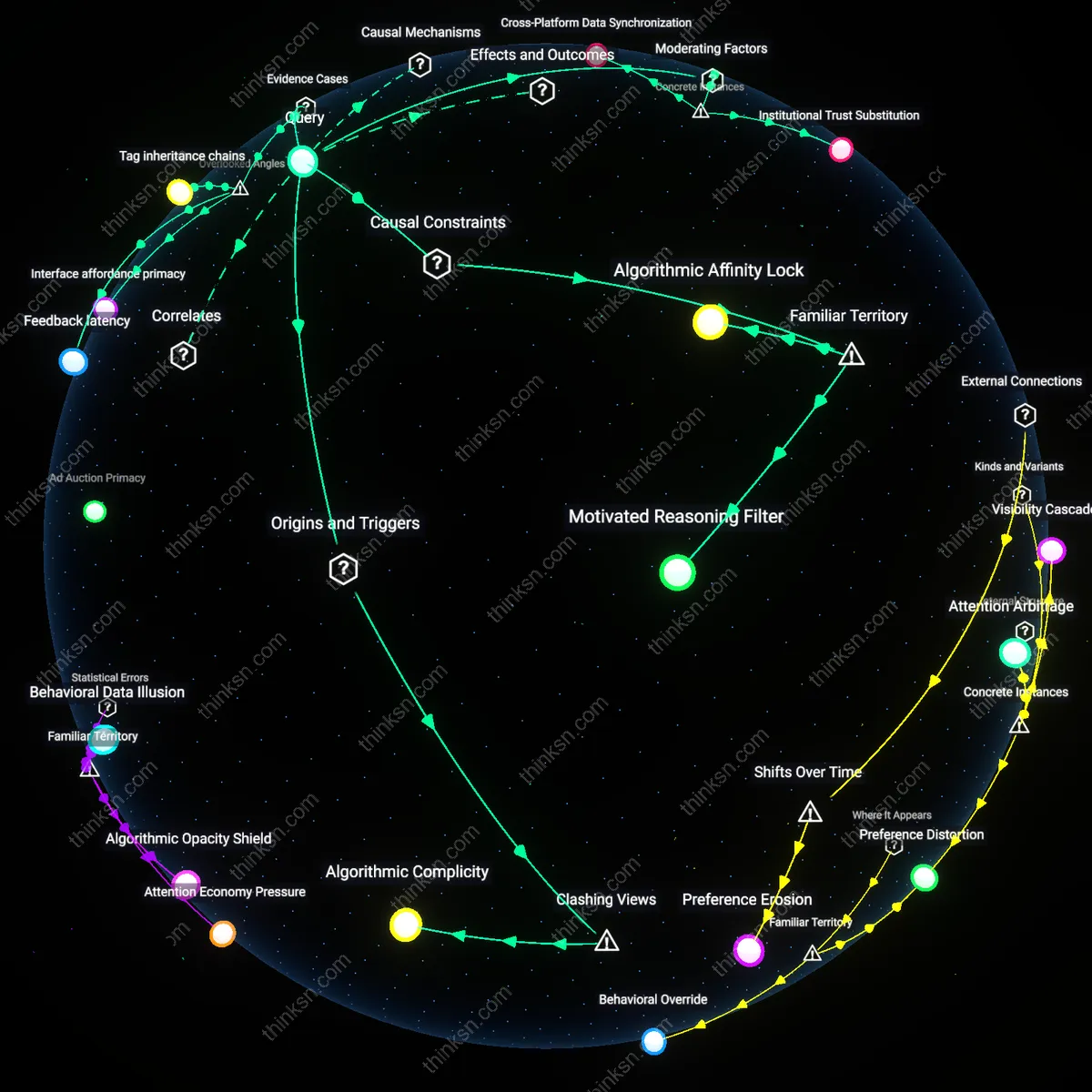

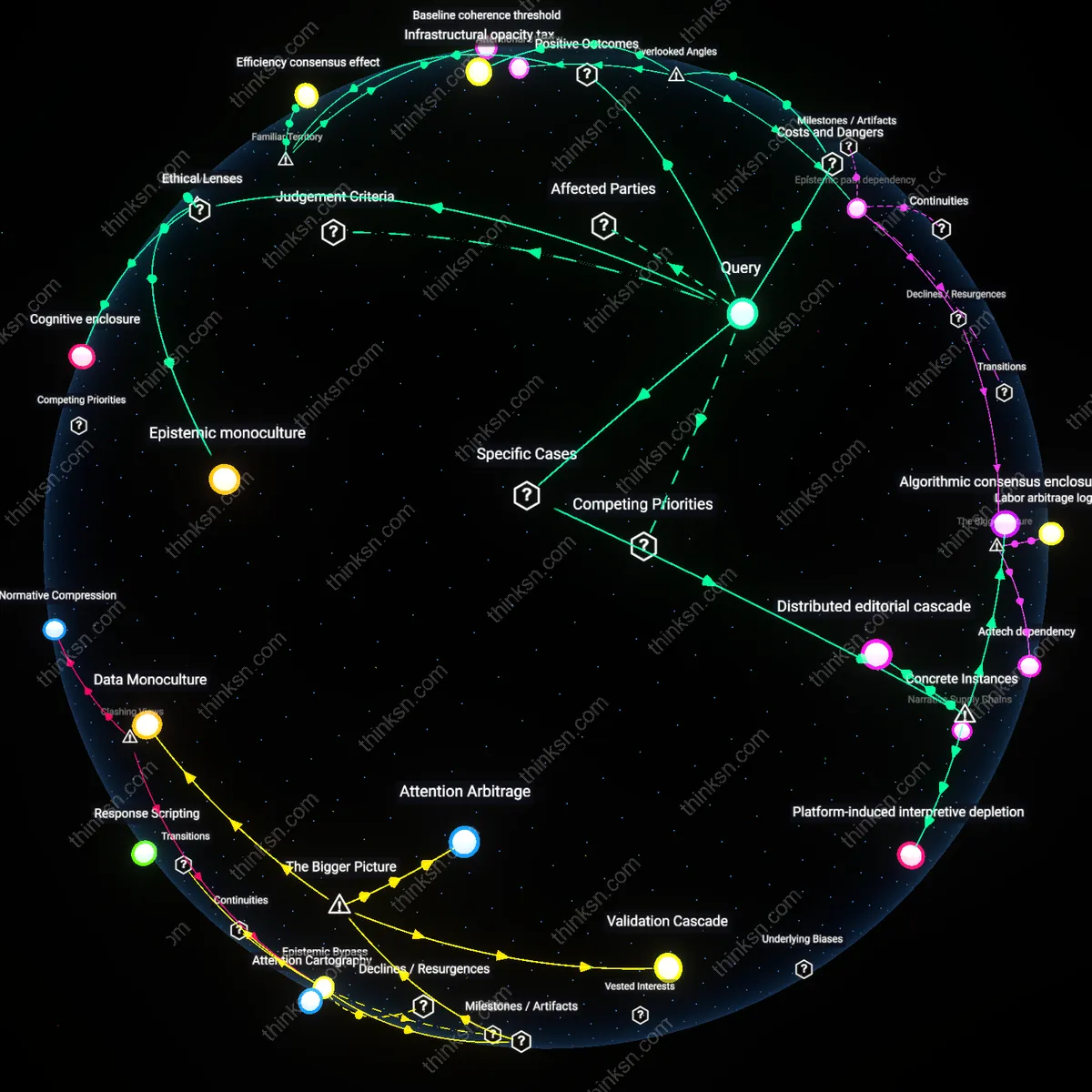

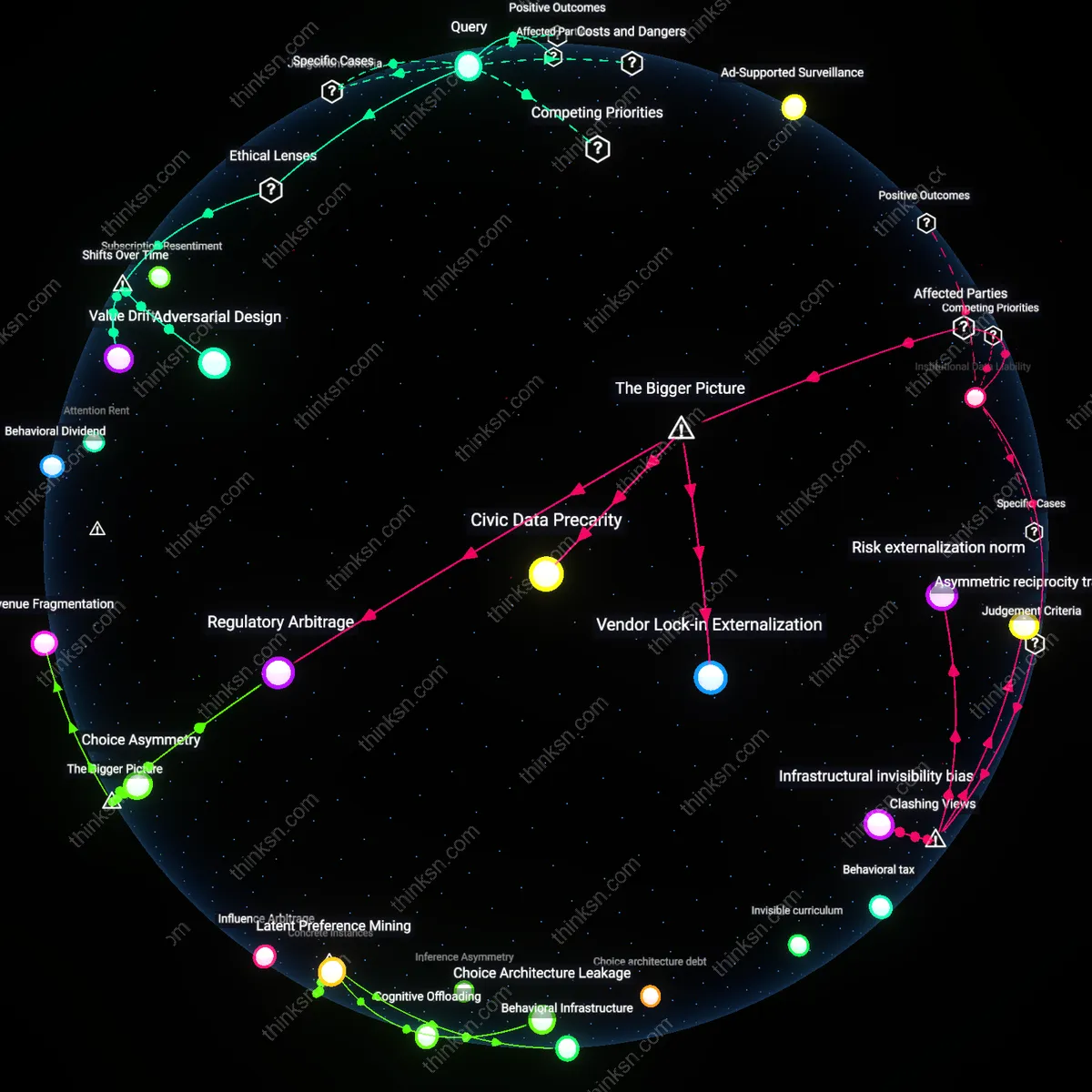

Algorithmic Complicity

Recommendation engines actively shape audience segmentation: by reinforcing identity-based affiliations that users express only temporarily, they convert transient engagement into durable ideological segments. Platforms’ machine learning systems prioritize retention over exposure diversity, recasting incidental interest in opposing views as noise to be filtered out and thereby privileging sustained engagement with congenial content. This mechanism reveals that algorithms do not merely respond to pre-existing preferences but conscript users into ideological identities they may not endorse, challenging the assumption that echo chambers arise from user-driven selection alone. The non-obvious consequence is that users’ attempts to explore diverse perspectives are interpreted by the algorithm as anomalies and suppressed rather than amplified.
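
The filtering step described above can be illustrated with a toy profile update. This is a minimal sketch under stated assumptions, not any platform’s actual code: the user profile is assumed to be a topic-weight dictionary, and `update_profile`, `noise_floor`, and `rate` are hypothetical names. Clicks on topics whose current weight falls below the noise floor are discarded, so exploratory interest never enters the profile.

```python
from typing import Dict, List


def update_profile(profile: Dict[str, float], clicks: List[str],
                   noise_floor: float = 0.05, rate: float = 0.1) -> Dict[str, float]:
    """Hypothetical retention-first profile update (illustrative only).

    A click on a topic whose current weight is below `noise_floor` is treated
    as noise and dropped. Any other click decays every weight by (1 - rate)
    and adds `rate` to the clicked topic: an exponential moving average that
    keeps the weights summing to 1 if they started that way.
    """
    profile = dict(profile)  # work on a copy; leave the caller's dict intact
    for topic in clicks:
        if profile.get(topic, 0.0) < noise_floor:
            continue  # exploratory click on a low-weight topic: filtered out
        for t in profile:
            profile[t] *= (1.0 - rate)
        profile[topic] = profile.get(topic, 0.0) + rate
    return profile


# A user who is mostly "left" clicks three "right" items and one "left" item.
before = {"left": 0.97, "right": 0.03}
after = update_profile(before, ["right", "right", "right", "left"])
print(after)  # "right" shrinks further (about 0.027) despite three clicks
```

Because the filter is applied before the update, the three exploratory clicks leave no trace, while the single congenial click still decays the minority topic.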

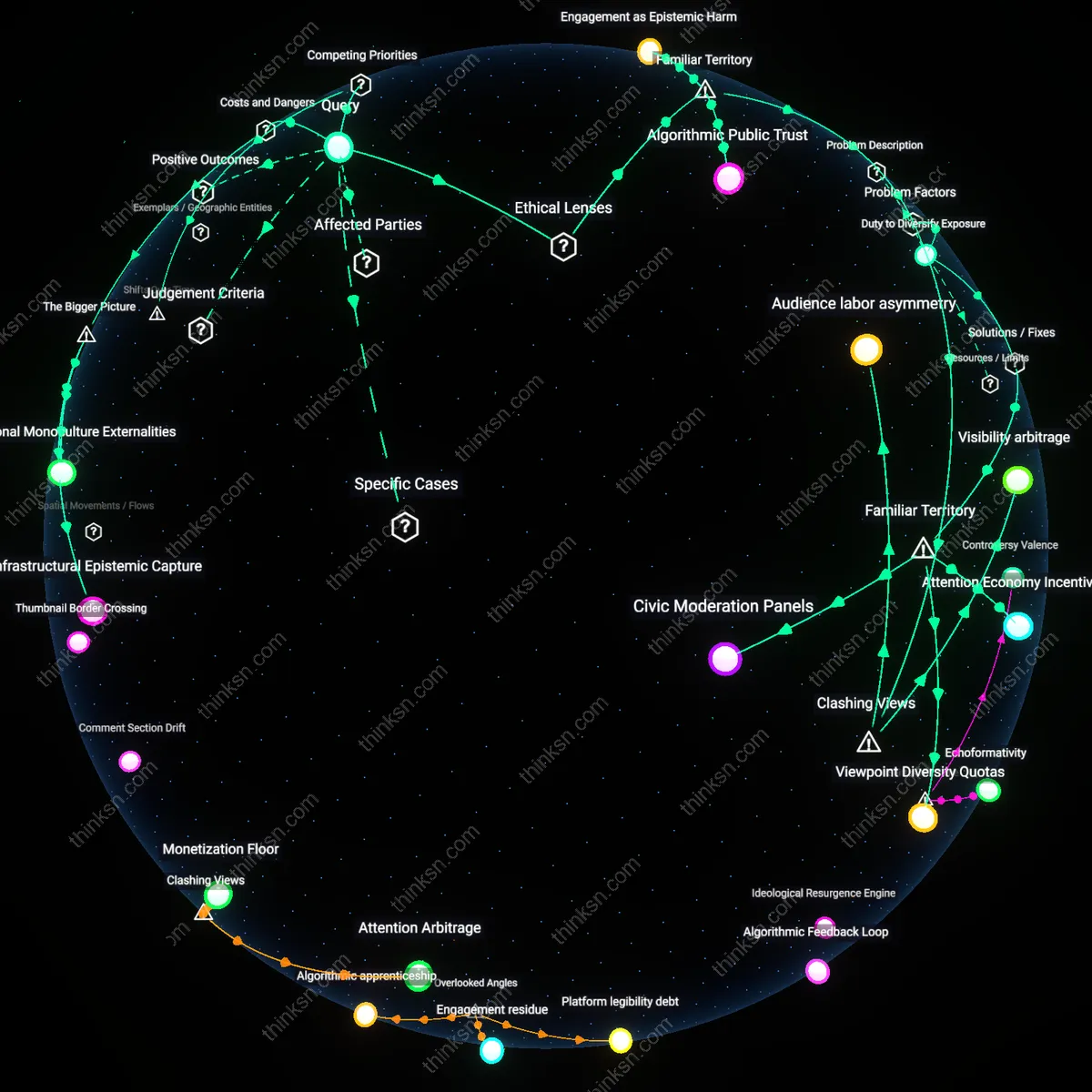

Partisan Affinity Traps

Audience segmentation driven by political advertisers on social platforms creates feedback loops that redefine recommendation logic, making exposure to counter-attitudinal content economically suboptimal. Microtargeted ad campaigns condition content distribution by signaling which user clusters yield the highest conversion rates, so recommendation engines come to treat partisan engagement metrics as de facto proxies for relevance. Curation thus shifts away from user-defined diversity toward politically instrumental coherence, undermining users’ intentions to access plural views not because of user bias but because commercial pressure from advertisers reshapes algorithmic incentives. The counterintuitive finding is that echo chambers are less a product of individual cognition than of advertising-driven platform governance, in which user agency is structurally sidelined.
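
The incentive shift can be sketched as a ranking score in which advertiser conversion value multiplies predicted engagement. The `Item` fields, the `ad_weight` parameter, and the multiplicative blend are all assumptions made for illustration, not a description of any platform’s ranking formula. With no advertiser weighting, the counter-attitudinal item wins on engagement alone; once conversion value is blended in, the congenial item overtakes it.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Item:
    name: str
    engagement: float          # predicted probability the user engages
    cluster_conversion: float  # hypothetical ad conversion rate of the audience cluster the item keeps the user in


def rank(items: List[Item], ad_weight: float) -> List[str]:
    """Order items by engagement scaled by advertiser conversion value (toy model)."""
    def score(it: Item) -> float:
        return it.engagement * (1.0 + ad_weight * it.cluster_conversion)
    return [it.name for it in sorted(items, key=score, reverse=True)]


items = [
    Item("congenial", engagement=0.30, cluster_conversion=0.08),
    Item("counter_attitudinal", engagement=0.32, cluster_conversion=0.01),
]
print(rank(items, ad_weight=0.0))   # ['counter_attitudinal', 'congenial']
print(rank(items, ad_weight=10.0))  # ['congenial', 'counter_attitudinal']
```

The user’s own engagement preference never changes between the two calls; only the advertiser term does, which is the sense in which relevance becomes “economically” defined.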

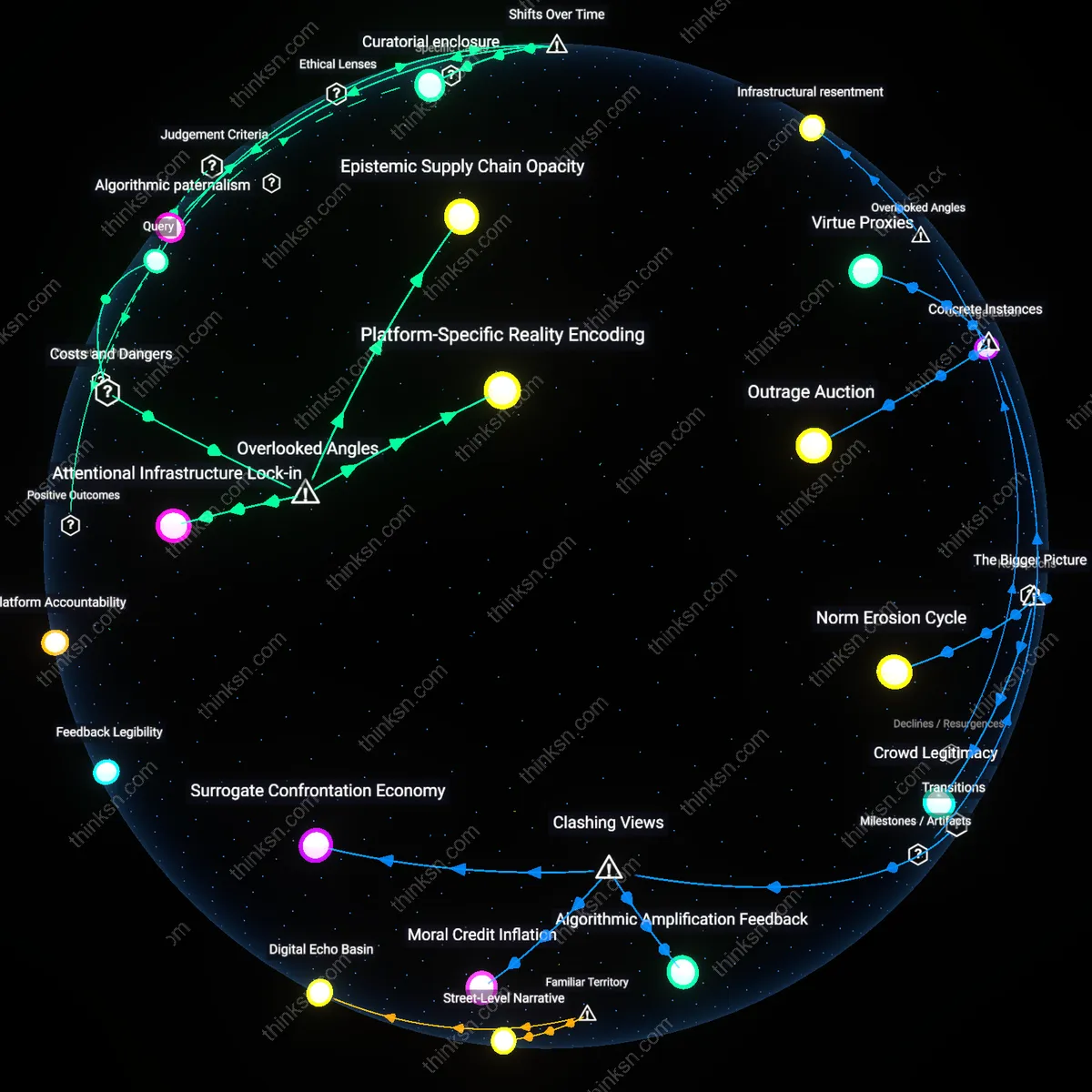

Algorithmic Feedback Loops

During the 2016 U.S. election, Facebook’s News Feed algorithm intensified ideological segregation by prioritizing engagement-driven content, which amplified emotionally charged posts within already like-minded networks. Because the recommendation engine interpreted prolonged viewing time as endorsement, it systematically suppressed exposure to cross-cutting perspectives even for users who followed ideologically diverse sources. The case shows how audience segmentation based on behavioral metrics can silently override users’ stated preferences for media diversity.

Cross-Platform Data Synchronization

In the 2016 Brexit referendum, the microtargeting model attributed to Cambridge Analytica combined Facebook psychographic segmentation with third-party consumer data to deliver tailored political messages across multiple digital platforms. Because users encountered consistent, ideologically congruent narratives on YouTube, Twitter, and Facebook at the same time, driven by shared data infrastructures, their perception of viewpoint diversity eroded even though they were visiting different websites. The case exposes how behind-the-scenes data integration between platforms can collapse a pluralistic media environment into a unified echo chamber.

Institutional Trust Substitution

In Brazil during Jair Bolsonaro’s 2018 campaign, WhatsApp’s encrypted, group-based messaging enabled hyper-localized political segmentation in which community leaders became de facto content gatekeepers. Because traditional media outlets had lost credibility amid an economic crisis, users relied on these segmented peer networks for 'unfiltered' truth. The case demonstrates how a breakdown in institutional trust amplifies the echo chamber effect by shifting epistemic authority to closed, platform-mediated social clusters.

Algorithmic Affinity Lock

Recommendation engines prioritize content that matches historically engaged segments, so even users seeking diversity are steered toward variations of familiar narratives. Platforms such as YouTube and Facebook use engagement metrics (watch time, likes, shares) as proxies for relevance, which inherently favors emotionally resonant or confirmation-aligned content over genuinely diverse viewpoints. This creates a bottleneck: the intent to explore heterodox perspectives cannot override the system’s preference for proven engagement patterns, so exposure to dissimilar ideas remains a low-probability event despite user agency. The non-obvious point is that segmentation does not just reflect existing beliefs; it constrains future choices by redefining what counts as relevant.
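
The lock-in can be reproduced with a deliberately simple rich-get-richer loop. In this sketch, which is an assumption rather than documented platform behavior, the feed shows the items with the most past engagement, the user engages with whatever is shown, and the user also searches for a diverse item on their own each round. `simulate_feed` and its parameters are hypothetical.

```python
from typing import Dict, List, Optional


def simulate_feed(counts: Dict[str, int], rounds: int, slots: int = 1,
                  searches: Optional[Dict[str, int]] = None) -> List[List[str]]:
    """Toy rich-get-richer loop (illustrative only).

    Each round the feed shows the `slots` items with the most accumulated
    engagement, the user engages with everything shown, and any explicit
    searches add engagement on the side. Returns the feed shown each round.
    """
    counts = dict(counts)
    searches = searches or {}
    history: List[List[str]] = []
    for _ in range(rounds):
        # Rank purely by past engagement; ties broken by name for determinism.
        feed = sorted(counts, key=lambda k: (-counts[k], k))[:slots]
        history.append(feed)
        for item in feed:
            counts[item] += 1  # shown items accrue engagement
        for item, n in searches.items():
            counts[item] = counts.get(item, 0) + n  # user-initiated exploration
    return history


history = simulate_feed({"familiar_a": 10, "familiar_b": 8, "diverse": 0},
                        rounds=5, slots=2, searches={"diverse": 1})
print(history[-1])  # ['familiar_a', 'familiar_b']
```

Because ranking depends only on accumulated engagement, the explicit searches raise the diverse item’s count by one per round while the familiar items gain the same amount from being shown, so the gap never closes.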

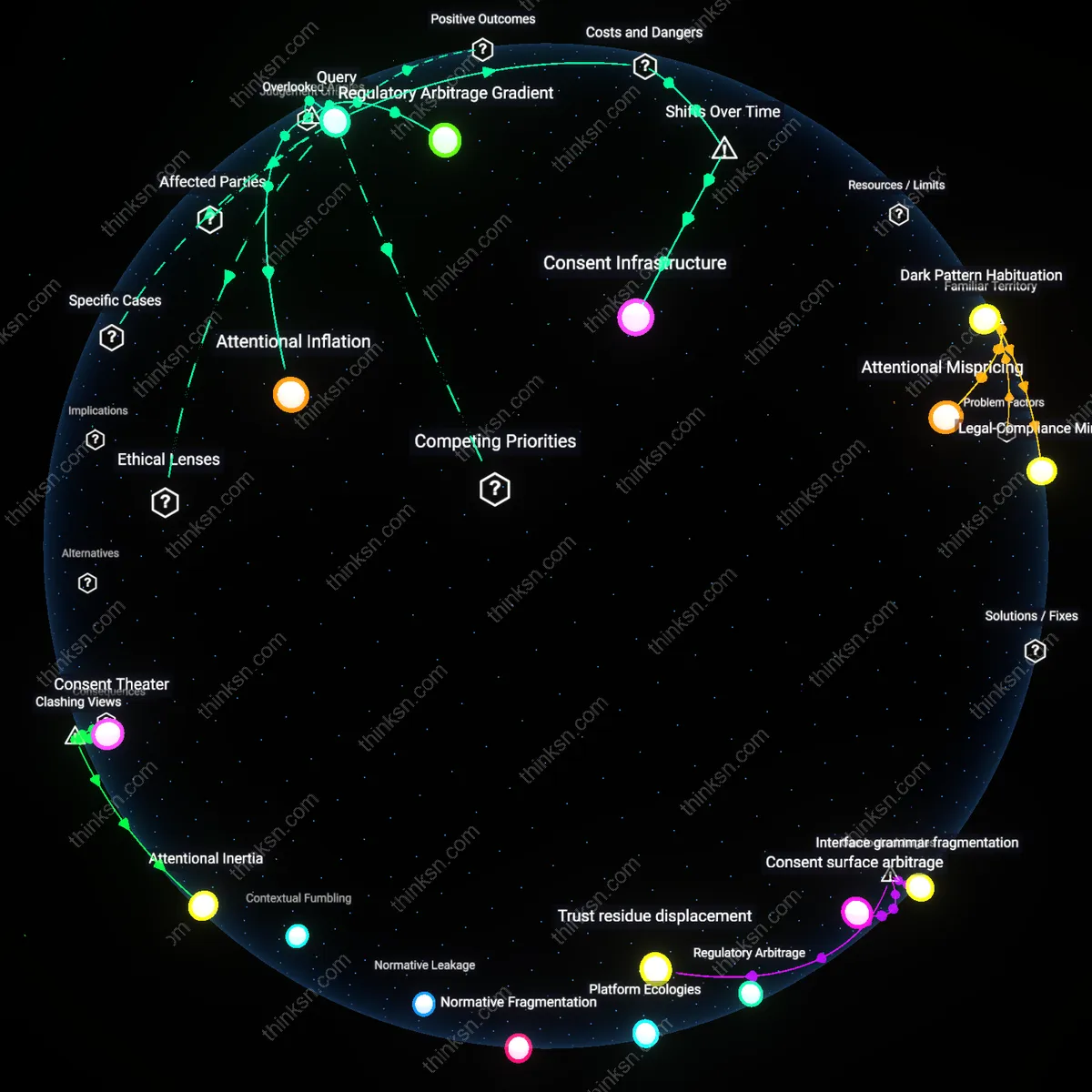

Motivated Reasoning Filter

Users’ expressed desire for diverse perspectives is systematically overridden because recommendation engines interpret engagement as alignment, mistaking prolonged attention to opposing views for endorsement rather than critical scrutiny. A liberal watching conservative commentary in order to rebut it generates the same clickstream data as a convert, so the system reinforces the very ideology the user intends to challenge. The bottleneck lies in current algorithms’ inability to distinguish affective alignment from analytical engagement, which makes diversity-seeking behavior invisible to the engine. While public discourse assumes that personal bias drives echo chambers, this filter shows that the system cannot recognize intent when it contradicts behavioral proxies.
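
The indistinguishability problem can be made concrete with a hypothetical event schema. The sketch assumes a view event that records only stance, dwell time, and completion; `ViewEvent`, `affinity_signal`, and the 600-second cap are illustrative, not a real platform API. Because nothing in the record captures why the user watched, a rebuttal-minded viewer and a convert produce identical events and therefore identical affinity signals.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class ViewEvent:
    item_stance: str       # stance label of the watched item
    dwell_seconds: float   # how long the user kept watching
    completed: bool        # whether the user reached the end
    # Note: no field records *why* the user watched (agreement vs. rebuttal).


def affinity_signal(event: ViewEvent) -> Dict[str, float]:
    """Hypothetical behavioral proxy: longer and completed views count as stronger affinity.

    Dwell time is capped at an assumed 600 seconds before scaling to [0, 1].
    """
    strength = min(event.dwell_seconds / 600.0, 1.0) + (0.5 if event.completed else 0.0)
    return {event.item_stance: strength}


critic = ViewEvent("conservative", dwell_seconds=540.0, completed=True)   # watching to rebut
convert = ViewEvent("conservative", dwell_seconds=540.0, completed=True)  # watching in agreement
print(critic == convert)                                    # True
print(affinity_signal(critic) == affinity_signal(convert))  # True
```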

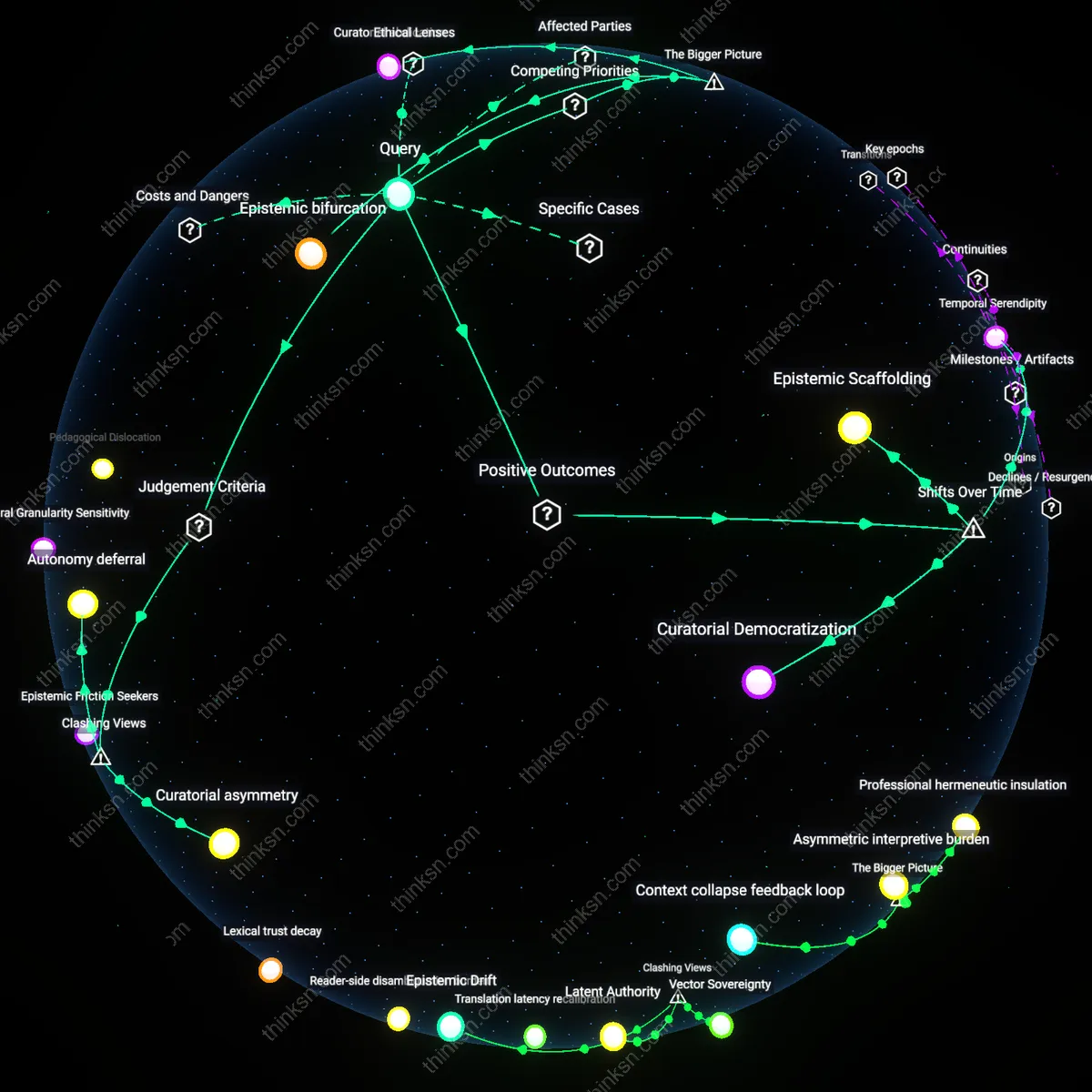

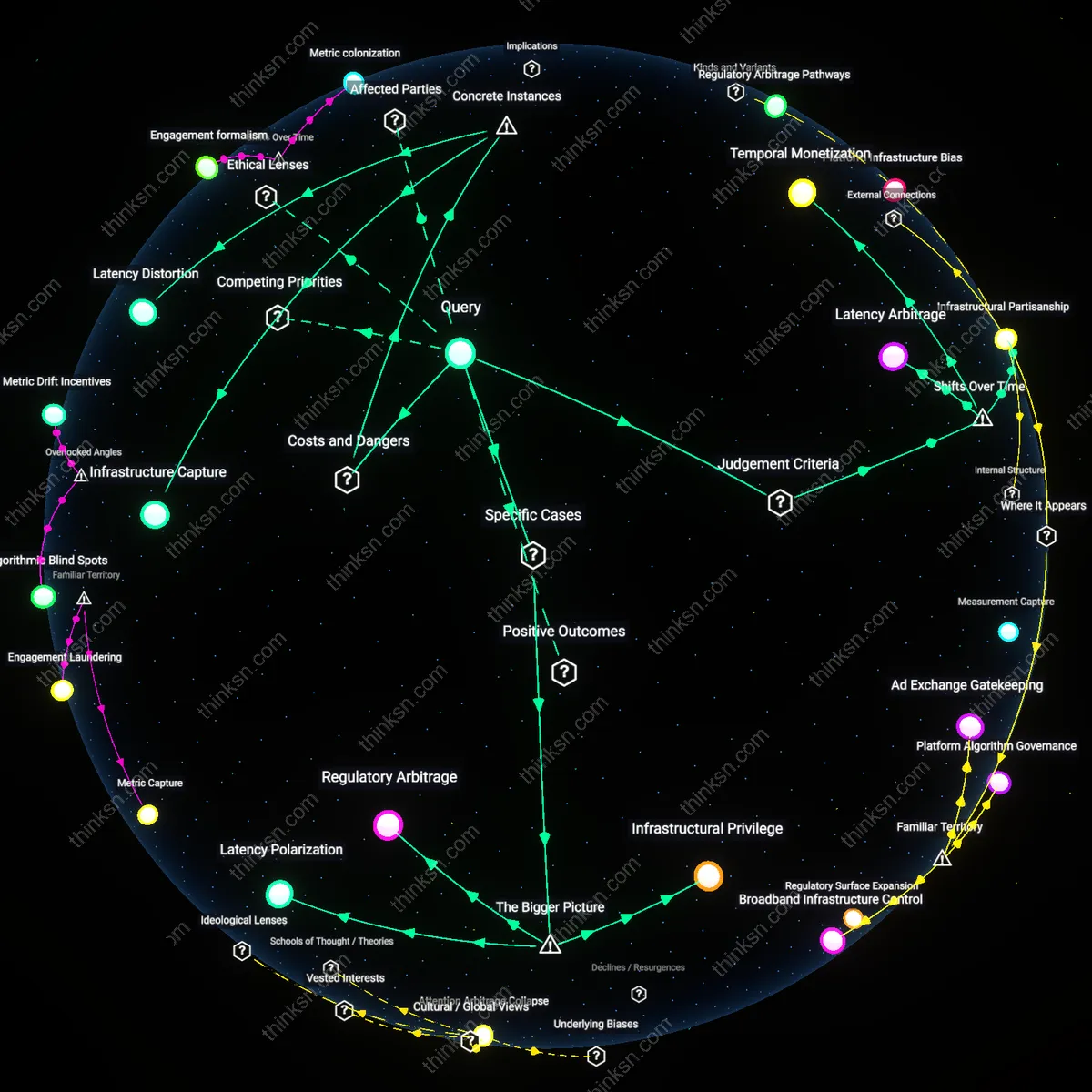

Feedback Latency

Platform-induced delays in audience segmentation amplify confirmation bias by causing recommendation engines to mistake stale preferences for current consensus. This was seen in Facebook Groups’ algorithmic reprioritization during the 2020 U.S. election cycle, when weeks-old engagement patterns continued to shape content distribution even after user interests had shifted. The lag creates a recursive loop in which outdated segments feed persistent thematic clusters, making users appear more ideologically consistent than they are and reinforcing motivated reasoning through delayed system responsiveness. The overlooked dynamic is not personalization itself but the temporal mismatch between real-time cognition and algorithmic processing cycles: what matters is not what users see but when the system interprets their behavior, which produces oscillations in exposure that users experience as unintended ideological drift. This reframes echo chambers not as content filters but as misaligned feedback rhythms inherent to large-scale data pipelines.
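
The temporal mismatch can be illustrated with a toy batch pipeline in which the recommender acts on the interest it observed `lag` steps earlier. The fixed lag, the one-interest-per-step model, and the names `served_topics` and `stale_steps` are assumptions for illustration only. After the user’s interest shifts from topic A to topic B, the system keeps serving A for exactly `lag` steps.

```python
from typing import List


def served_topics(interests: List[str], lag: int) -> List[str]:
    """Toy batch pipeline: at step t the recommender serves the interest it last
    processed, i.e. the user's interest at step t - lag (clamped to step 0)."""
    return [interests[max(t - lag, 0)] for t in range(len(interests))]


def stale_steps(interests: List[str], served: List[str]) -> int:
    """Count steps where what is served no longer matches the user's current interest."""
    return sum(1 for want, got in zip(interests, served) if want != got)


interests = ["A"] * 3 + ["B"] * 4   # interest shifts from A to B at step 3
served = served_topics(interests, lag=2)
print(served)                          # ['A', 'A', 'A', 'A', 'A', 'B', 'B']
print(stale_steps(interests, served))  # 2
```

If interests alternate faster than the lag, the served sequence runs out of phase with the user, which is one simple way to picture the oscillations in exposure described above.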

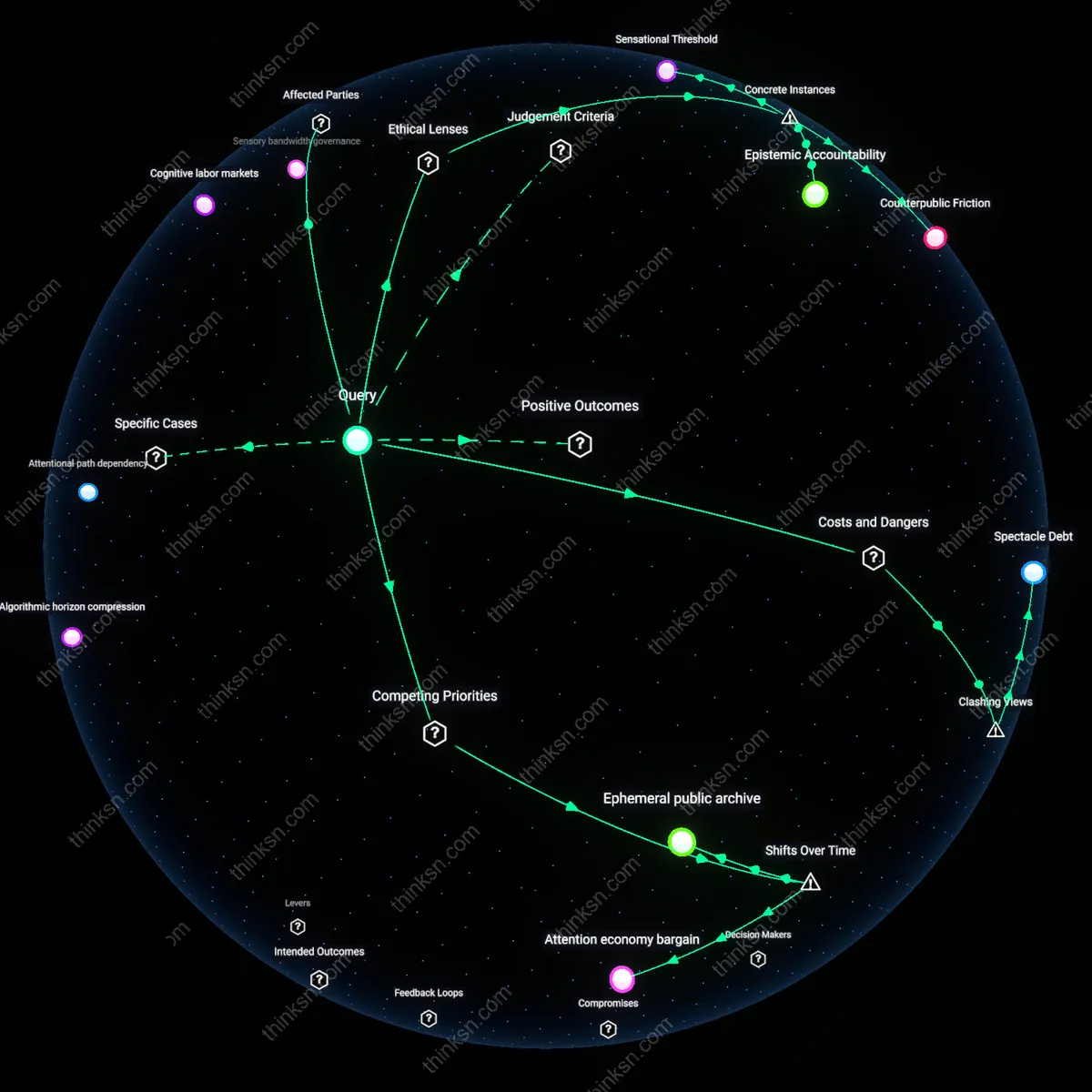

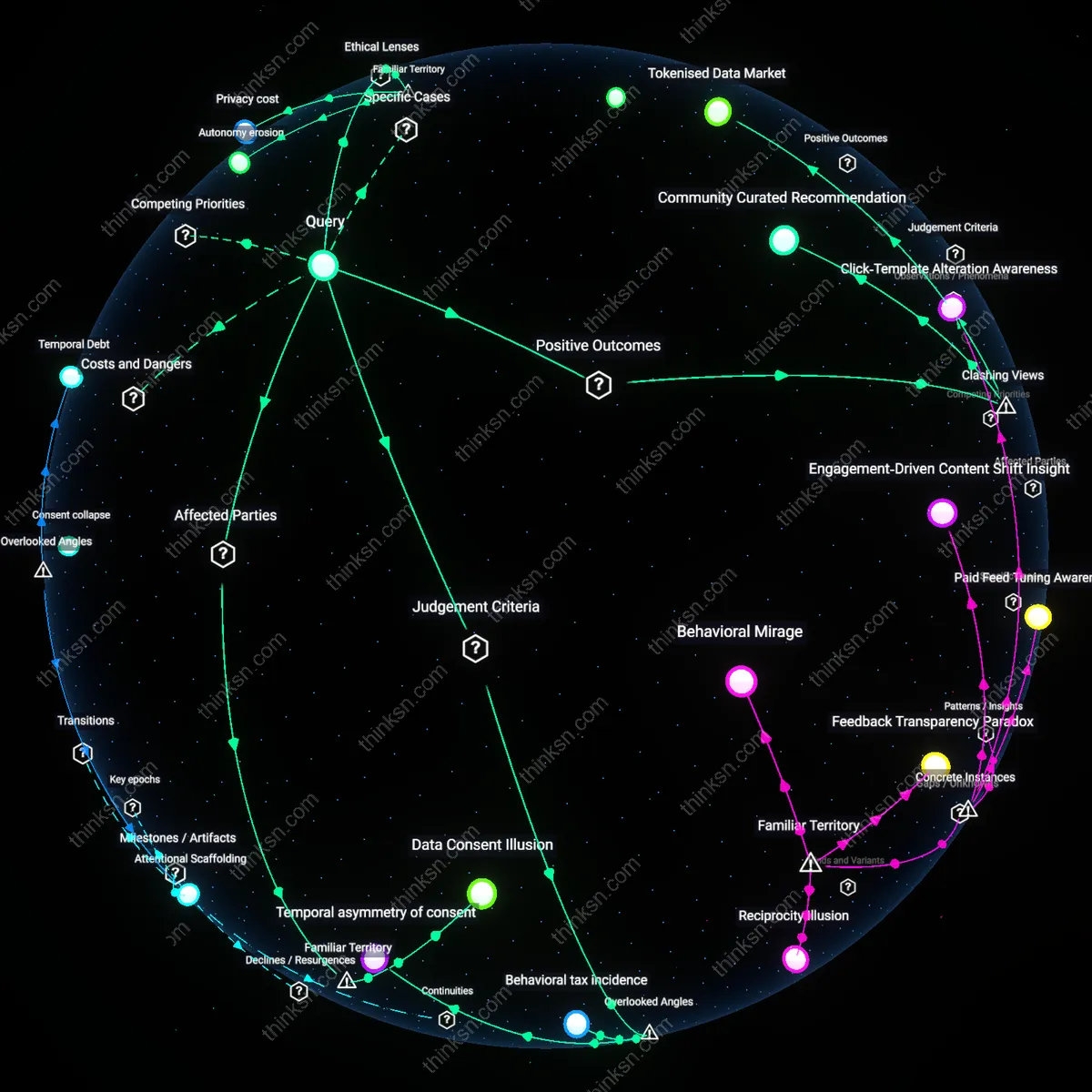

Interface Affordance Primacy

YouTube’s recommendation engine disproportionately amplifies motivated reasoning not through content categorization but through the hierarchical privileging of watch-time metrics in segment definition. Audience clusters therefore cohere around retention-optimized emotional arcs rather than topic or ideology, as evidenced by the rise of 'truth-adjacent' creators such as Graham Hancock during the COVID-19 pandemic in 2021, whose content gained traction not for its factual claims but for narrative pacing that extended viewing duration. The non-obvious insight is that segmentation is driven not by belief alignment but by behavioral compliance with platform-defined engagement templates: users seeking diverse perspectives are channeled into structurally similar affective experiences regardless of subject. This shifts the causal locus from ideology to interface tempo, revealing that the echo chamber is constructed through rhythmic mimicry across topics rather than thematic repetition.
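
Segmentation by pacing rather than topic can be sketched with greedy leader clustering over retention curves. The assumptions: each video is summarized by the fraction of viewers still watching at a few fixed checkpoints, topic is deliberately ignored, and `segment_by_retention` and the distance `threshold` are hypothetical. Two videos on unrelated subjects with the same drop-off shape land in one segment, while a same-topic video with different pacing does not.

```python
import math
from typing import List, Tuple

# (video_id, topic, fraction of viewers still watching at evenly spaced checkpoints)
Video = Tuple[str, str, List[float]]


def segment_by_retention(videos: List[Video], threshold: float) -> List[List[str]]:
    """Greedy leader clustering on retention curves alone; the topic field is ignored."""
    leaders: List[List[float]] = []
    clusters: List[List[str]] = []
    for video_id, _topic, curve in videos:
        for i, leader in enumerate(leaders):
            if math.dist(curve, leader) <= threshold:  # Euclidean distance (Python 3.8+)
                clusters[i].append(video_id)
                break
        else:  # no existing leader is close enough: start a new segment
            leaders.append(curve)
            clusters.append([video_id])
    return clusters


videos: List[Video] = [
    ("video_a", "archaeology", [1.0, 0.90, 0.85, 0.80]),
    ("video_b", "nutrition",   [1.0, 0.88, 0.84, 0.79]),
    ("video_c", "archaeology", [1.0, 0.60, 0.40, 0.30]),
]
print(segment_by_retention(videos, threshold=0.1))
# [['video_a', 'video_b'], ['video_c']]
```

Leader clustering is chosen here only because it is short and deterministic; any distance-based clustering over the same curves would make the same point.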

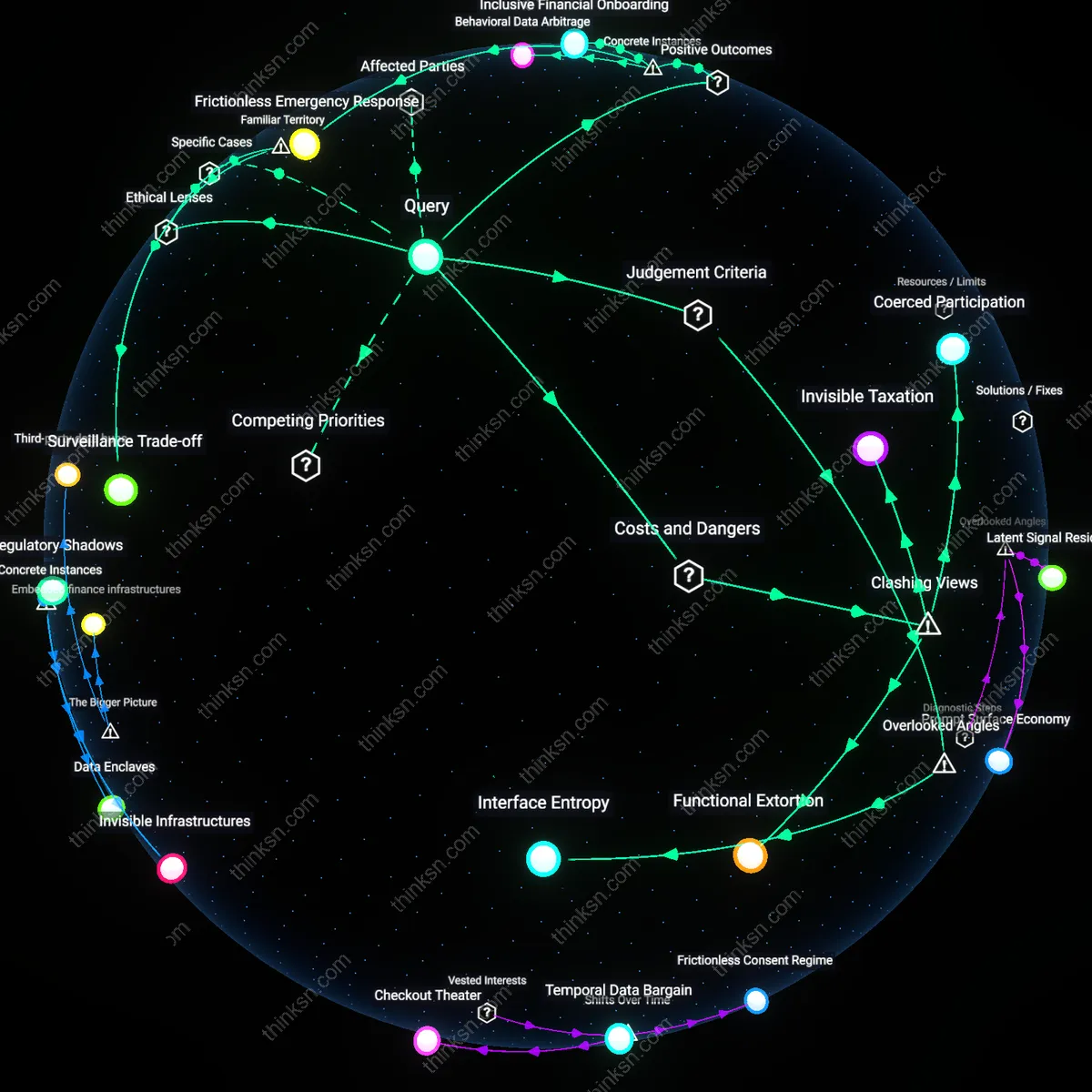

Tag Inheritance Chains

On TikTok, recommendation engines effectively inherit audience segmentation signals from upstream platforms such as Instagram and YouTube via cross-posted content, so ideological cascades propagate through reused hashtags and editing conventions even when creators shift their messaging. One example is the mutation of environmentalist discourse into survivalist prepping through the reuse of 'off-grid living' audio tracks and visual motifs. The overlooked mechanism is not algorithmic filtering but the covert transfer of audience identity through semiotic bundles that persist across contexts, enabling recommendation systems to re-segment users by stylistic lineage rather than intent. This changes the understanding of echo chambers by showing that they are sustained not by the repetition of ideas but by recombinant aesthetics that retain latent ideological valence, so diversification appears novel while reproducing segmented pathways.
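
The inheritance mechanism can be sketched as a vote over shared stylistic tokens. This is an illustrative assumption, not TikTok’s documented behavior: each existing post carries a set of tokens (audio tracks, hashtags) and a segment label, and a new post is assigned the segment that shares the most tokens with it. `inherit_segment` and all token and segment names are hypothetical. A climate-themed post that reuses a prepper-associated audio track ends up in the prepper segment, even though its own message is environmentalist.

```python
from collections import Counter
from typing import Dict, Optional, Set


def inherit_segment(new_tokens: Set[str],
                    catalog: Dict[str, Set[str]],
                    segment_of: Dict[str, str]) -> Optional[str]:
    """Assign a new post to the segment most common among existing posts that
    share stylistic tokens with it, weighted by the number of shared tokens.
    The post's stated message plays no part in the decision."""
    votes = Counter()
    for post_id, tokens in catalog.items():
        overlap = len(new_tokens & tokens)
        if overlap:
            votes[segment_of[post_id]] += overlap
    if not votes:
        return None  # no stylistic lineage to inherit from
    return votes.most_common(1)[0][0]


catalog = {
    "p1": {"audio:off_grid_theme", "#offgridliving"},
    "p2": {"audio:off_grid_theme", "#bugout"},
    "p3": {"#solarpower", "#climate"},
}
segment_of = {"p1": "prepper", "p2": "prepper", "p3": "environmentalist"}

climate_post = {"audio:off_grid_theme", "#climate"}
print(inherit_segment(climate_post, catalog, segment_of))  # prepper
```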