Is User Consent Legitimate When Users Are Fatigued by Data Collection?

The analysis reveals four key thematic connections.

Key Findings

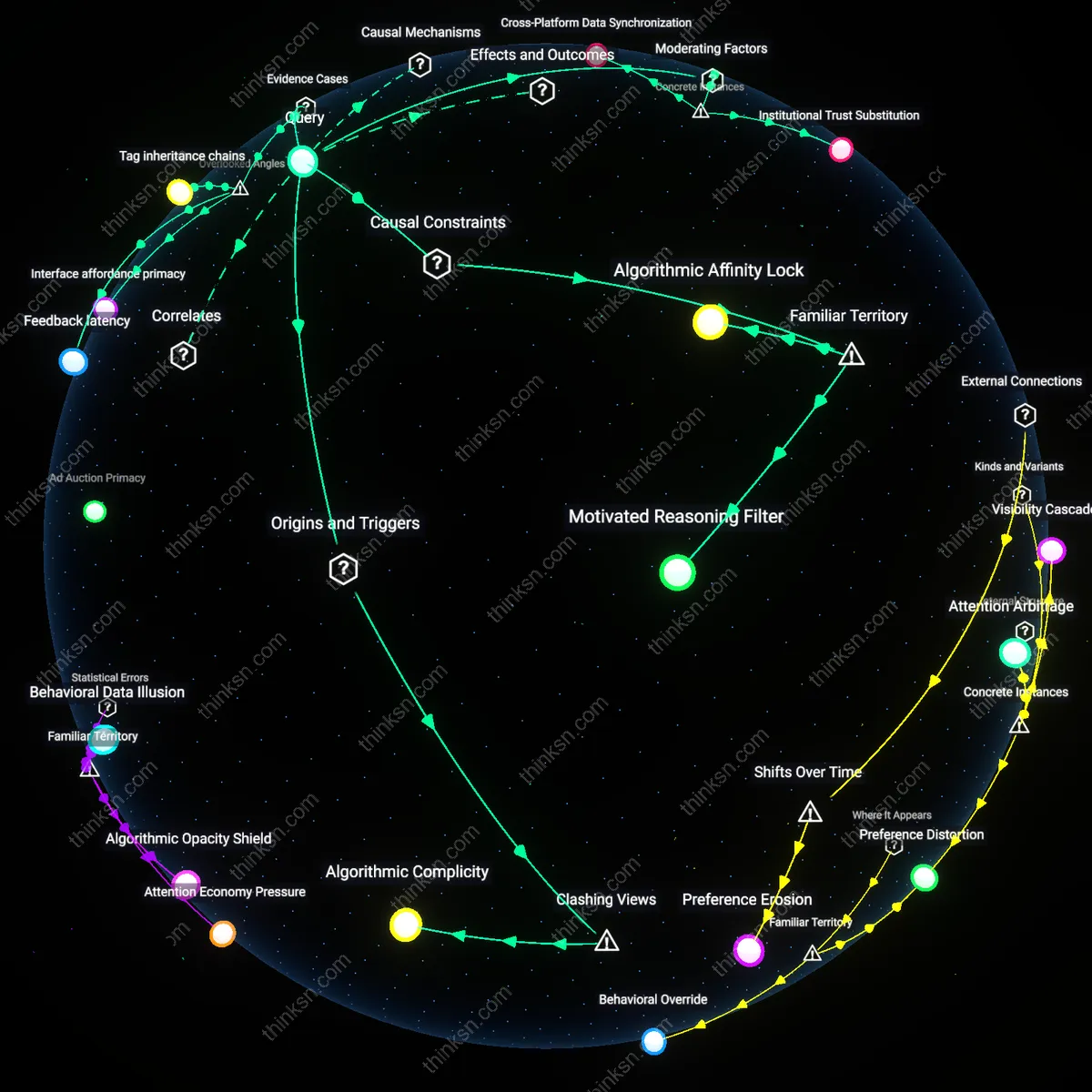

Interface Entropy

Widespread consent fatigue undermines the legitimacy of user agreements because consent interfaces are unstable in design across platforms, which erodes users’ ability to form consistent mental models of data permissions. Interface elements such as button placement, color contrast, and the timing of pop-ups vary so widely between platforms and update cycles that users cannot develop reliable cognitive schemas for granting or withholding consent, turning 'informed' agreement into a ritual of confusion rather than comprehension. This fragmentation, driven by A/B testing and platform-specific UX optimization, is rarely factored into legal or ethical assessments of consent legitimacy, yet it systematically degrades the coherence of users’ decisions. What is overlooked is not only dark patterns but the incoherence of the consent architecture itself, which makes sustained autonomy impossible across digital environments.

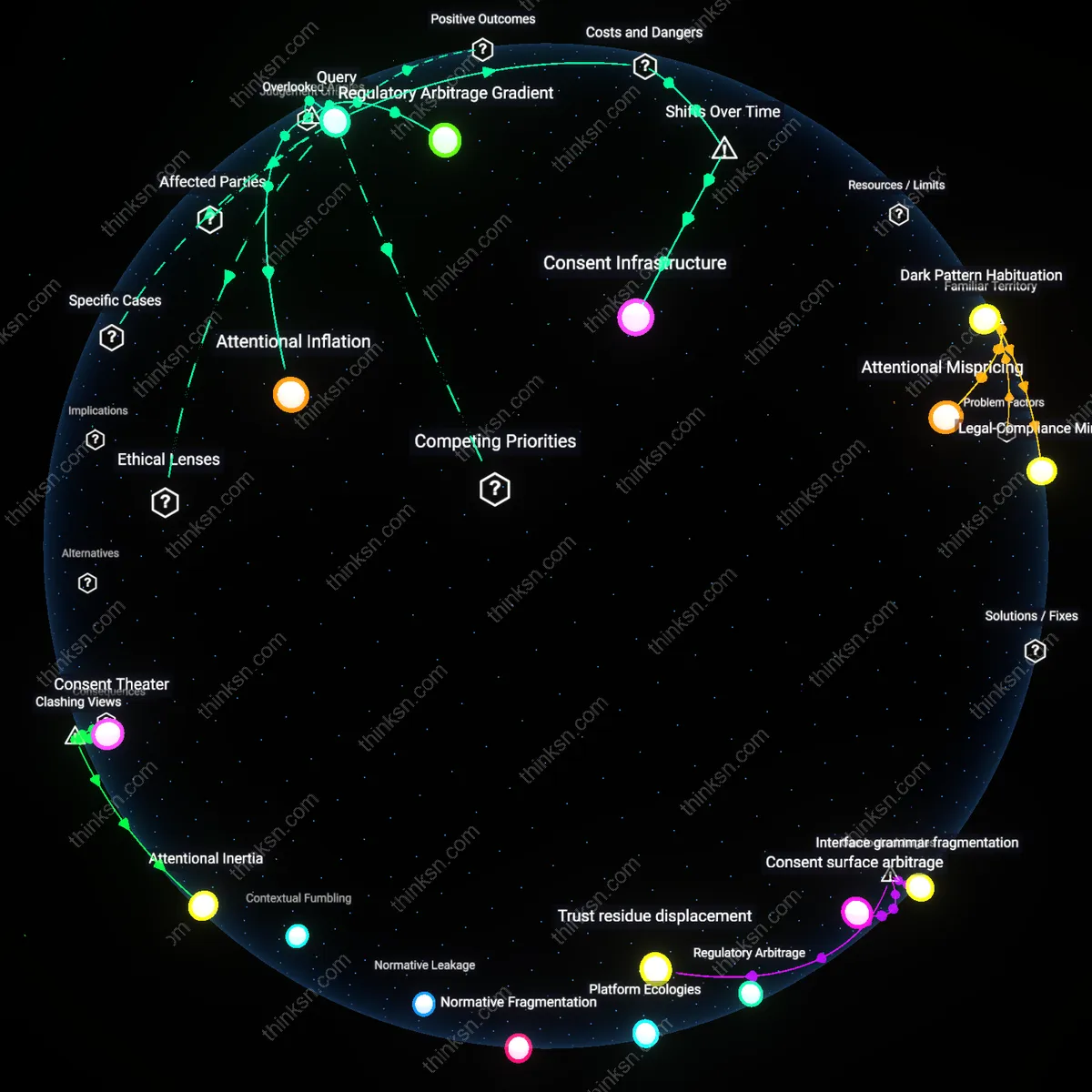

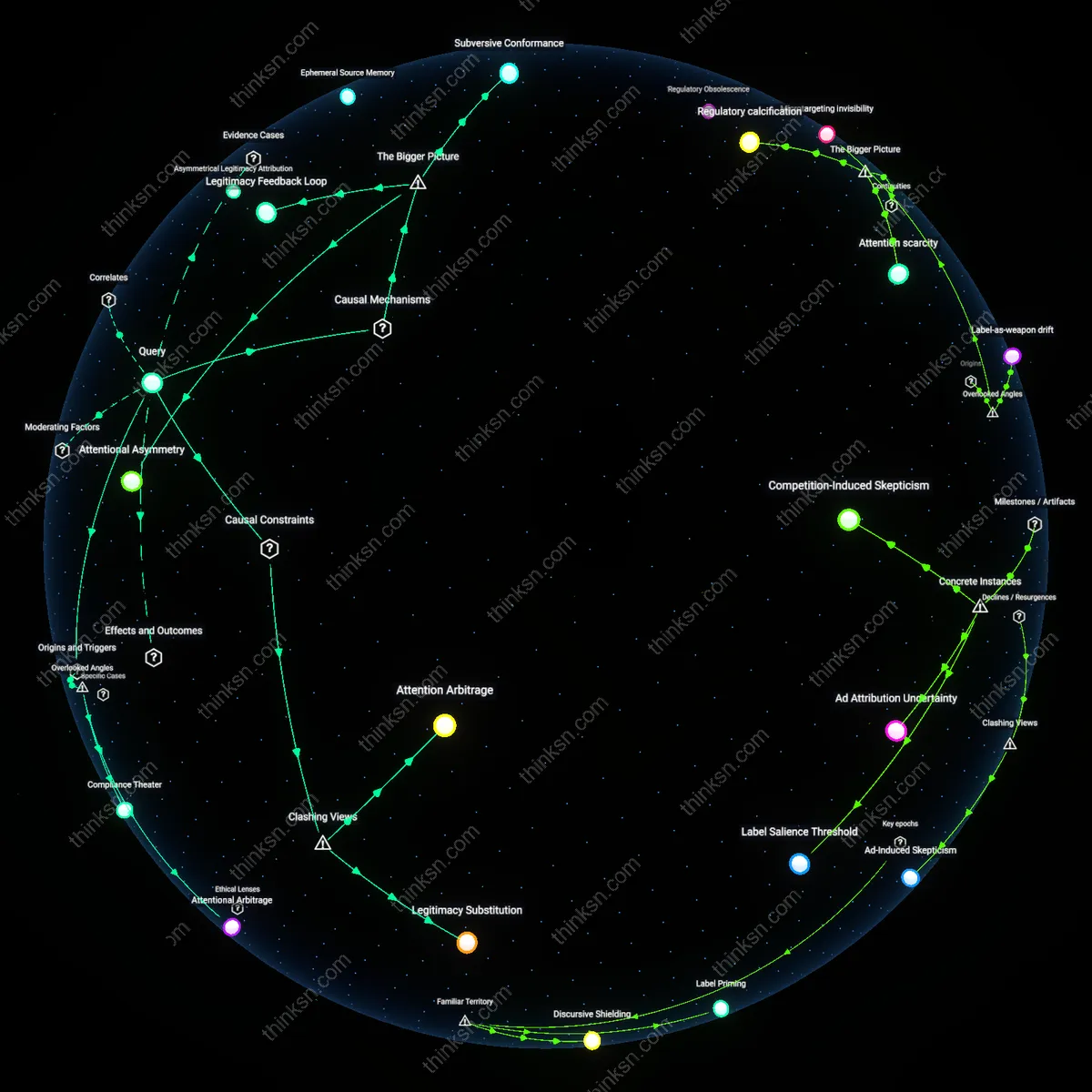

Regulatory Arbitrage Gradient

Consent fatigue legitimizes extensive data collection by letting platforms exploit jurisdictional asymmetries in enforcement rigor, so that user inattention becomes a transferable compliance asset. Platforms subject to GDPR may present thorough consent flows in Europe while maintaining minimal disclosures in regions such as Southeast Asia or Africa, knowing that users everywhere exhibit comparable fatigue. Because only stringent jurisdictions impose penalties, firms can treat global user apathy as a risk-distribution tool. This creates a gradient in which consent non-compliance is tolerated in weak-regulation zones and repackaged as 'compliance efficiency' in strong-regulation ones. The overlooked mechanism is that consent fatigue is not just a cognitive failure but a structural enabler of jurisdictional cost-shifting in data governance.

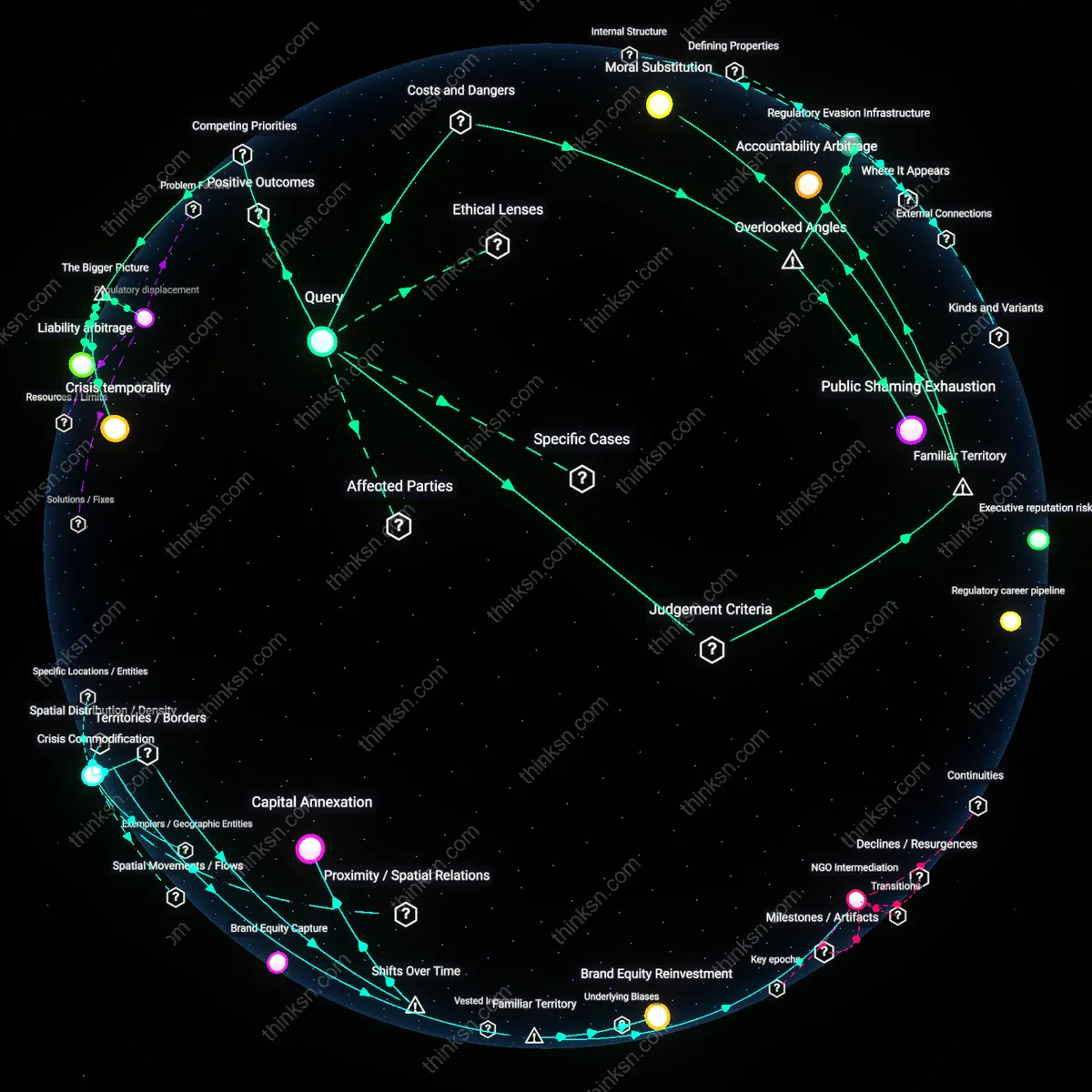

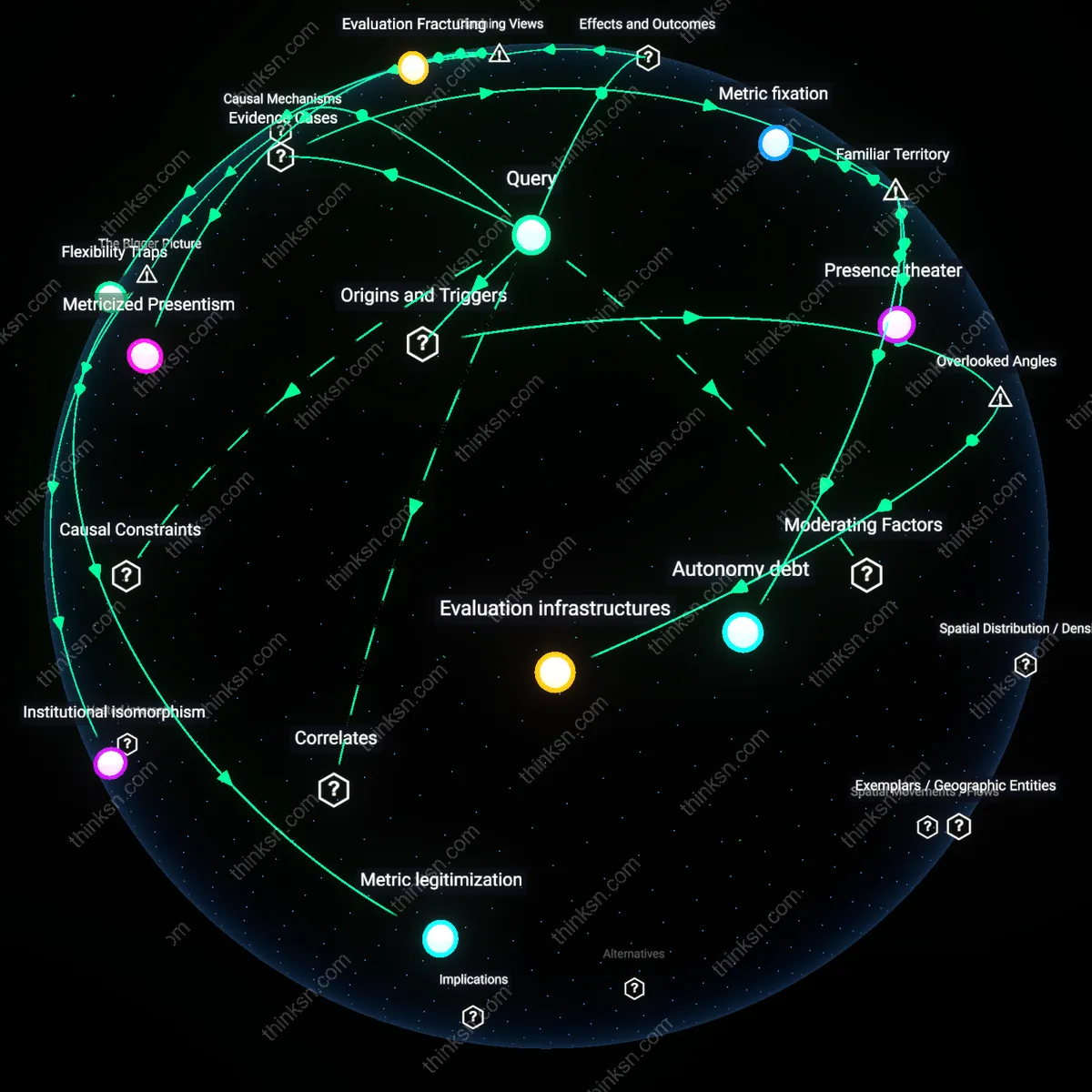

Attentional Inflation

The legitimacy of user agreements decays not because users are misled, but because the sheer volume of consent requests across platforms devalues attention as a finite cognitive currency, making any single agreement functionally negligible. Just as monetary inflation erodes purchasing power, repeated demands for consent across apps, devices, and services dilute the perceived weight of agreeing, so that even users who care about privacy cannot sustain calibrated attention. This inflation is accelerated by ecosystems like Android and iOS, which normalize constant permission prompts and train users to treat consent as ambient noise. The overlooked dynamic is that the problem is not ignorance or resignation alone, but an inflation-like devaluation of each decision’s significance caused by oversaturation.

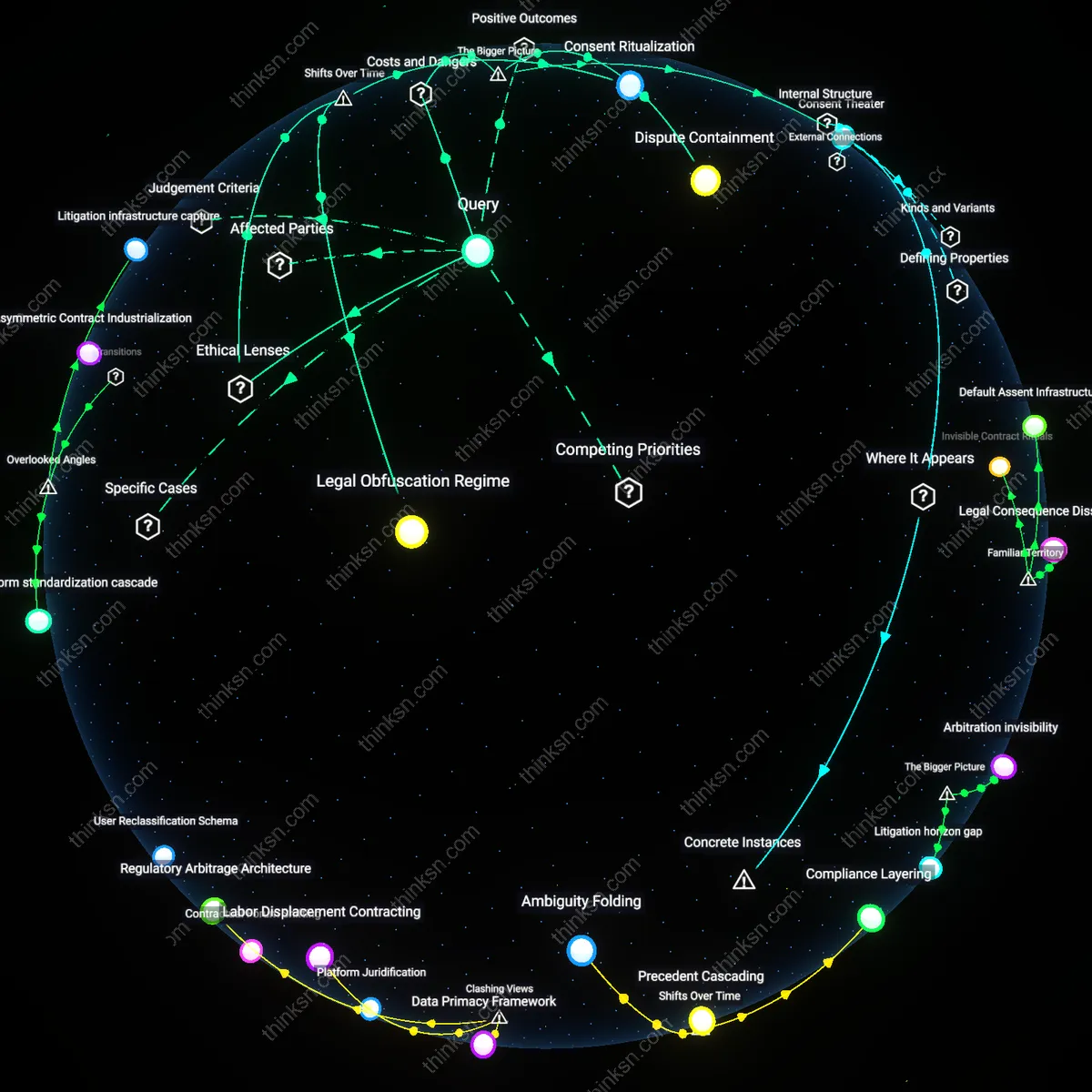

Consent Infrastructure

Consent fatigue undermines legitimacy because of a shift in how consent is delivered: from the negotiated opt-in models of early web platforms (2000–2008) to the algorithmically choreographed acceptance flows that followed the rise of mobile app ecosystems (post-2010). That shift transformed user agreements from meaningful disclosures into automated tollgates and embedded passive compliance into UX patterns designed to exhaust attention. The result is an institutionalized technical architecture in which consent is procedurally fulfilled but cognitively bypassed: gatekeepers such as app stores and ad-tech stacks standardize 'accept or exit' choices, making refusal functionally incompatible with service access. The non-obvious consequence is not mere apathy but the systemic replacement of juridical consent with infrastructural inevitability, where the design of the agreement, not its content, erodes legitimacy by default.