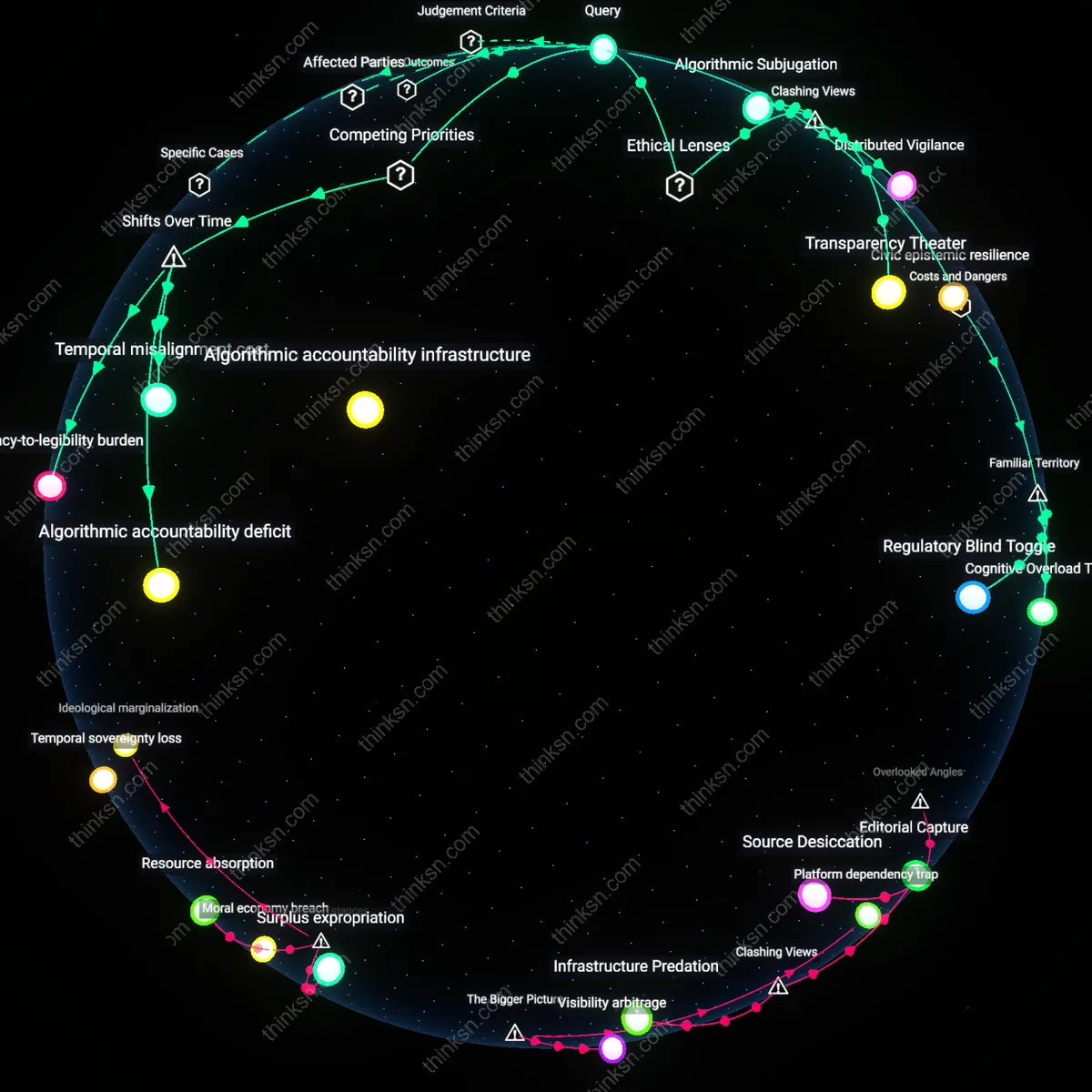

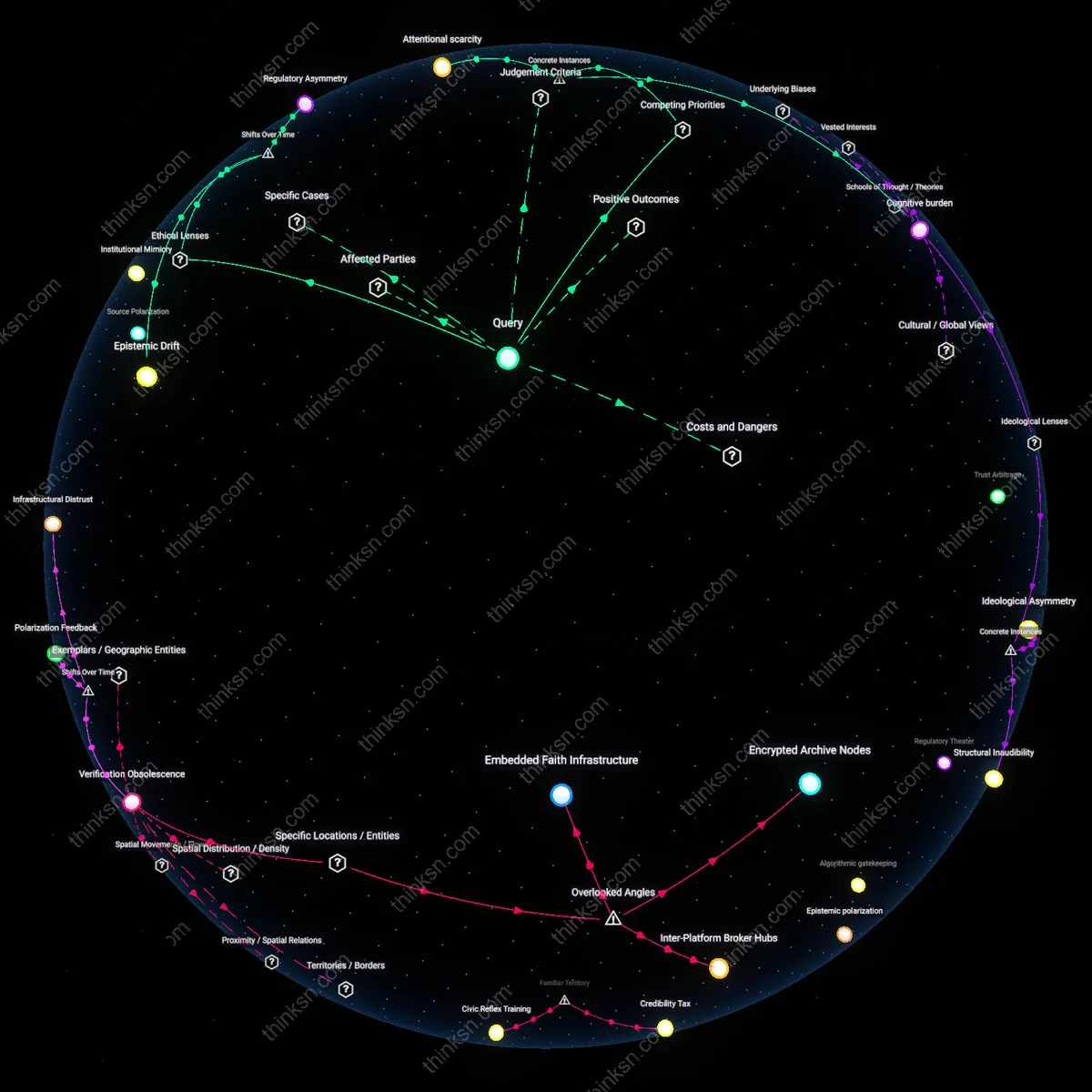

Do Personalized Recommendations Create Echo Chambers or Enhance Discovery?

Analysis reveals 9 key thematic connections.

Key Findings

Epistemic inertia

The risk of echo chambers from AI-curated reading recommendations outweighs the benefits because users are systematically funneled into recursive affinity loops in which engagement metrics override cognitive diversity, entrenching preexisting beliefs through personalized reinforcement rather than challenging them. This happens not through overt censorship but through the invisible architecture of recommendation algorithms on platforms like Amazon Kindle and Apple Books, which prioritize click-through rates over intellectual serendipity and privilege popularity and compatibility with past behavior. The erosion of epistemic flexibility is thus a structural byproduct of convenience-driven design. The non-obvious insight is that these systems do not isolate users by blocking access to dissenting views; they weaken users' capacity to recognize such views as meaningful when encountered.

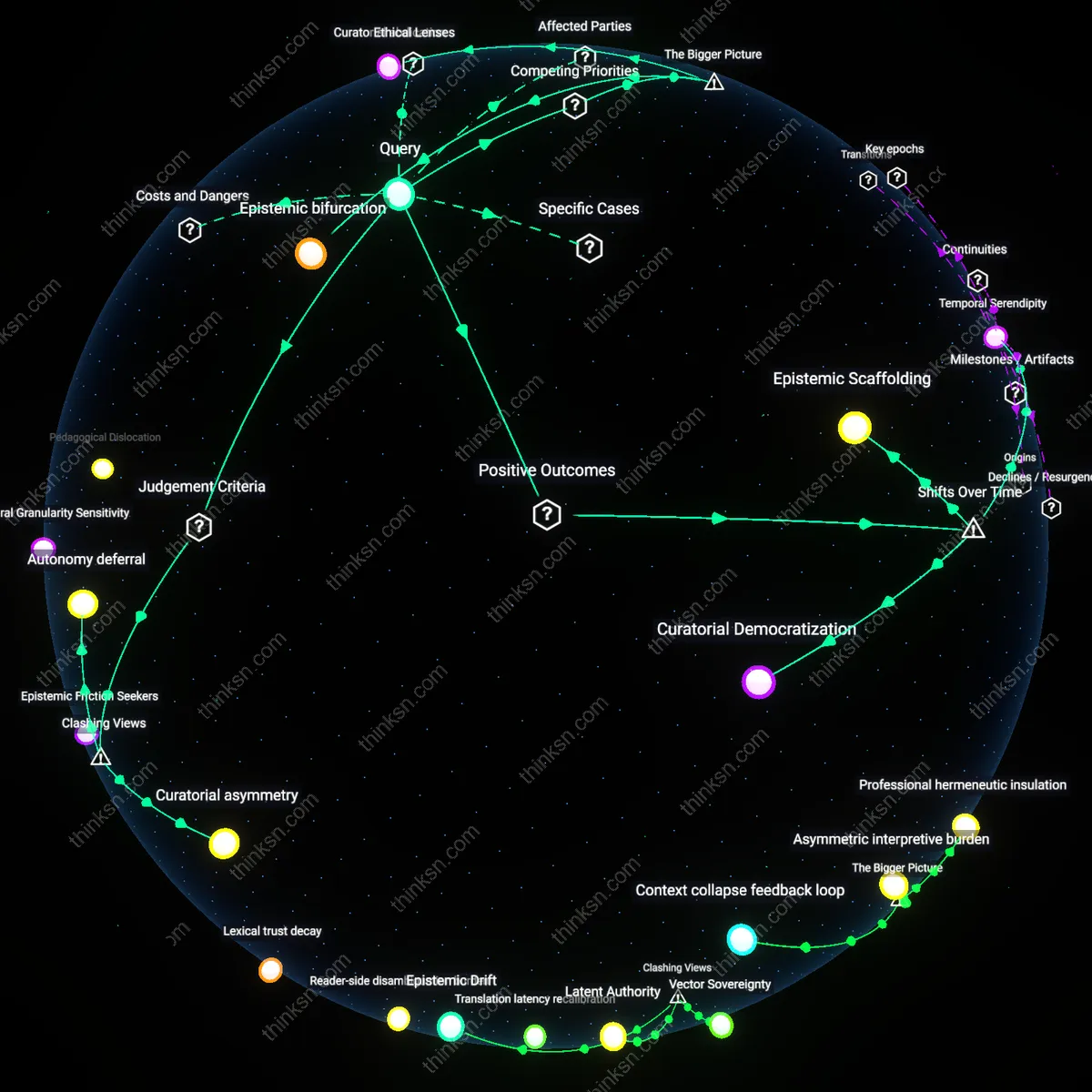

Curatorial asymmetry

The benefits of AI-curated reading recommendations outweigh the risks of echo chambers because discovery mechanisms in systems like Google Books and Library Genesis bypass commercial personalization altogether. They enable users in intellectually resource-poor regions, such as underfunded university towns in Nigeria or rural India, to access niche global scholarship that local collections lack, expanding cognitive horizons despite algorithmic homogenization on consumer platforms. This asymmetric access, driven by AI indexing rather than behavioral profiling, shows that convenience can function as a democratizing force when decoupled from engagement-based personalization. The dissonance lies in recognizing that the same technological infrastructure blamed for ideological isolation also powers large-scale intellectual liberation in contexts where traditional curation is absent.

Autonomy deferral

The risk of echo chambers is not primarily a function of AI recommendations but of user rationalization. Readers in high-choice environments like Audible or Blinkist actively select algorithmic guidance to reduce decision fatigue, outsourcing epistemic agency to systems they perceive as neutral arbiters. This voluntary deferral turns convenience from a feature into a habituated dependency: the perceived benefit, time saved, masks a deeper surrender of judgment, which makes echo chambers not a failure of AI but a successful outcome of convenience culture. The underappreciated mechanism is that users, particularly in time-pressured professional classes, come to distrust their own selection capacities, making algorithmic curation less a manipulative imposition than a collaboratively sustained abdication.

Curatorial democratization

AI-curated reading recommendations have expanded access to niche and diverse voices by replacing gatekept editorial boards with algorithmic discovery systems, enabling readers from marginalized communities to find content that resonates with their lived experiences. This shift from print-era editorial centralization in metropolitan publishing hubs to distributed digital curation—accelerated by the rise of independent publishers on platforms like Substack and Medium post-2015—has decentralized cultural authority. The mechanism operates through engagement-weighted ranking models that surface long-tail content, thereby increasing the visibility of non-mainstream perspectives that traditional publishers historically underrepresented. What is underappreciated is how this transition has not merely personalized reading but restructured intellectual inclusion over time, privileging resonance over pedigree.
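One way to read "engagement-weighted ranking" is ranking by engagement per impression rather than by raw totals, which lets a niche title with few but responsive readers outrank a bestseller. The following is a minimal sketch under that assumption; the catalog, field names, and smoothing constants are hypothetical and do not describe any real platform's model.

```python
from dataclasses import dataclass


@dataclass
class Title:
    name: str
    impressions: int   # times the title was shown (hypothetical field)
    engagements: int   # clicks, saves, or finishes (hypothetical field)


def engagement_rate(title, prior_rate=0.05, prior_weight=20):
    """Smoothed engagements per impression.

    The prior pulls titles with very few impressions toward prior_rate,
    so a single lucky click does not dominate the ranking.
    """
    return (title.engagements + prior_rate * prior_weight) / (
        title.impressions + prior_weight
    )


def rank_by_rate(catalog):
    """Order titles by smoothed engagement rate, highest first."""
    return sorted(catalog, key=engagement_rate, reverse=True)


def rank_by_volume(catalog):
    """Order titles by raw engagement count, highest first."""
    return sorted(catalog, key=lambda t: t.engagements, reverse=True)


# Illustrative numbers only.
CATALOG = [
    Title("bestseller", impressions=100_000, engagements=4_000),
    Title("niche memoir", impressions=400, engagements=60),
    Title("new upload", impressions=5, engagements=1),
]

print([t.name for t in rank_by_volume(CATALOG)])
print([t.name for t in rank_by_rate(CATALOG)])
```

Ranking by volume keeps the bestseller on top, while ranking by rate moves the niche memoir first. Whether a given platform weights engagement this way is an open question; the sketch only shows why rate-based weighting can favor long-tail content.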

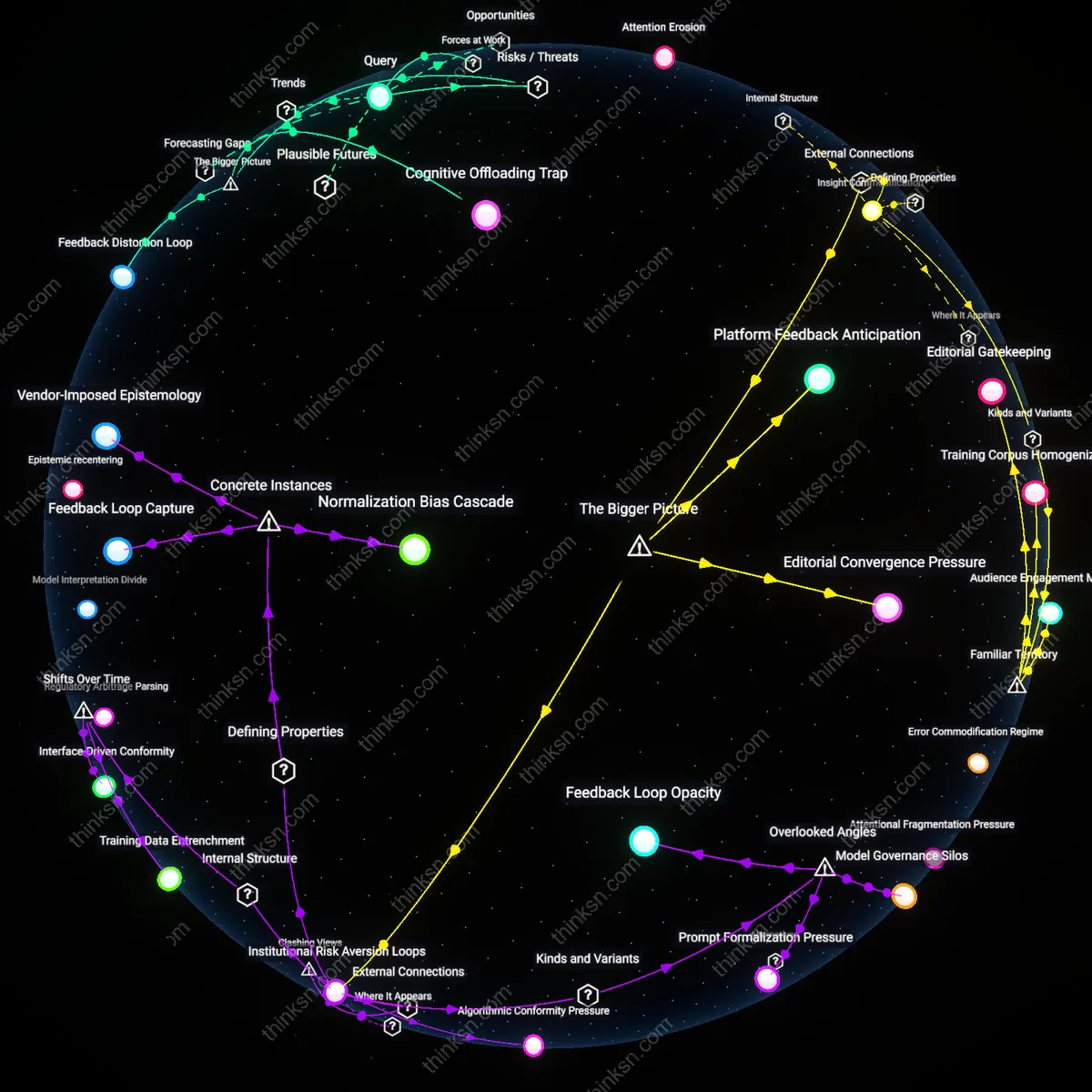

Temporal serendipity

AI-driven discovery reintroduced accidental exploration in an age when information overload had flattened reading into utilitarian consumption, marking a shift from the early web’s unstructured browsing (pre-2010) to a new form of guided chance forged by predictive modeling. Starting around 2018, platforms like Pocket and Later.com began using temporal behavioral data (when users read, pause, or save, not just what they read), enabling systems to recommend articles that align not with immediate interests but with evolving cognitive rhythms. This mechanism counters echo chambers by injecting serendipitous material calibrated to moments of receptivity, transforming convenience into a scaffold for intellectual drift. The underappreciated shift is that temporal dynamics, once ignored in favor of topical relevance, now serve as a hidden channel for discovery that mimics pre-digital encounters with unexpected ideas.
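The idea of calibrating unfamiliar material to moments of receptivity can be sketched as follows. This is an illustrative assumption, not a description of how any named service works: the event format, the completion-rate proxy for receptivity, and the function names are all hypothetical.

```python
from collections import defaultdict


def receptive_hours(events, min_events=2):
    """Rank hours of the day by how often opened articles were finished.

    events: iterable of (hour_of_day, finished) pairs (hypothetical format).
    Hours with fewer than min_events observations are ignored.
    """
    opened = defaultdict(int)
    finished = defaultdict(int)
    for hour, did_finish in events:
        opened[hour] += 1
        finished[hour] += int(did_finish)
    rates = {
        hour: finished[hour] / opened[hour]
        for hour in opened
        if opened[hour] >= min_events
    }
    # Highest completion rate first; earlier hour breaks ties.
    return sorted(rates, key=lambda hour: (-rates[hour], hour))


def pick_recommendation(hour, receptive, familiar, exploratory):
    """Offer the exploratory item only in the most receptive hour."""
    if receptive and hour == receptive[0]:
        return exploratory
    return familiar


# Illustrative reading log: (hour, finished?)
LOG = [(8, False), (8, False), (8, True),
       (21, True), (21, True), (21, False),
       (13, True)]

hours = receptive_hours(LOG)
print(hours)
print(pick_recommendation(21, hours, "more of the same", "something new"))
```

Here the evening hour has the highest completion rate, so the unfamiliar item is held for that slot and familiar material is shown otherwise.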

Epistemic scaffolding

AI curation has moved from passive recommendation (circa 2010–2016) to actively structuring pathways through complex knowledge domains, as seen in platforms like The Markup or MIT’s Climate Primer, where users navigate layered explanatory content. This shift toward pedagogical framing, evident in the rise of explainer journalism and AI-guided learning trails post-2020, transforms convenience into a tool for epistemic progression, guiding readers from surface understanding to nuanced critique. The mechanism operates through adaptive taxonomies that map conceptual dependencies, surfacing content that builds literacy over time rather than amplifying affinity. What is rarely acknowledged is that this trajectory reveals curation not as a risk to intellectual diversity but as a new form of scaffolding that counters echo chambers by design through sequential exposure.
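A taxonomy that maps conceptual dependencies can be modeled as a prerequisite graph, with a reading path derived by topological ordering. The sketch below assumes that model; the concept names and the `unlocked` helper are hypothetical and are not drawn from any of the platforms named above.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Each concept maps to the set of concepts a reader should meet first.
# The entries are illustrative only.
PREREQUISITES = {
    "greenhouse effect": set(),
    "carbon cycle": set(),
    "climate sensitivity": {"greenhouse effect", "carbon cycle"},
    "carbon pricing": {"climate sensitivity"},
}


def reading_path(prerequisites):
    """Return one ordering in which every concept follows its prerequisites."""
    return list(TopologicalSorter(prerequisites).static_order())


def unlocked(prerequisites, mastered):
    """Concepts not yet mastered whose prerequisites are all mastered."""
    return sorted(
        concept
        for concept, needs in prerequisites.items()
        if concept not in mastered and needs <= mastered
    )


print(reading_path(PREREQUISITES))
print(unlocked(PREREQUISITES, {"greenhouse effect"}))
```

Because the next item is chosen by what the reader is ready for rather than by what resembles past clicks, the ordering is driven by the dependency graph and not by affinity.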

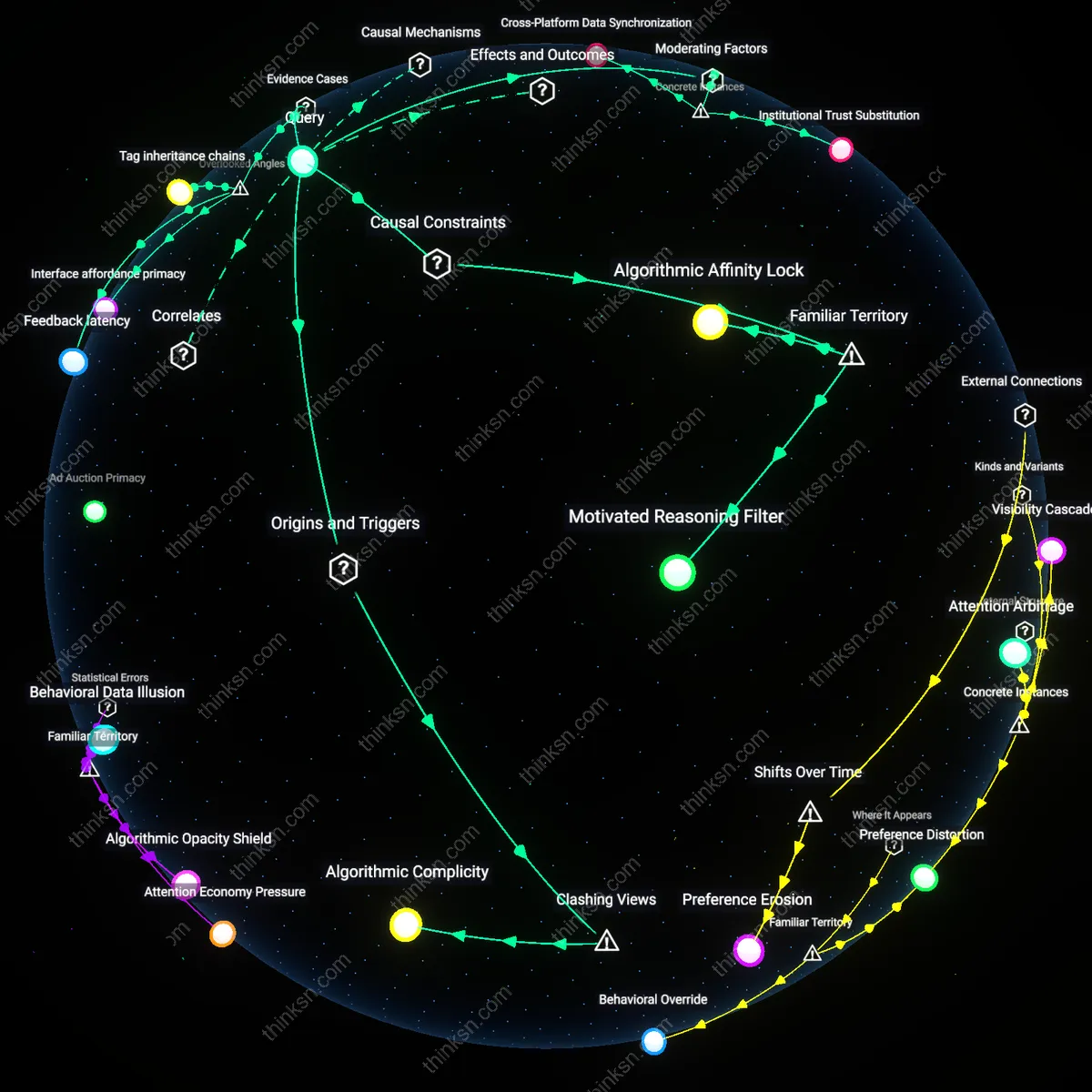

Algorithmic capture

AI-curated reading recommendations entrench echo chambers by optimizing for user engagement, which systematically overrides intellectual serendipity. Commercial platforms like Facebook and Google prioritize attention retention through machine learning models trained on behavioral feedback loops, where content that reinforces existing beliefs is disproportionately amplified because it generates higher dwell time and click-through rates. This mechanism, driven by advertising revenue models, systematically devalues exposure to dissenting or unfamiliar perspectives, not due to technical limitation but economic incentive. The non-obvious implication is that convenience and discovery are not neutral features but outcomes shaped by the platform’s imperative to capture and commodify attention.
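The behavioral feedback loop described here can be illustrated with a toy simulation. It is a deliberately simplified sketch, not any platform's actual ranker: the two topics, the click rates, the update rule, and the `explore_every` parameter are all assumptions chosen for illustration.

```python
def simulate_exposure(true_click_rate, initial_estimate, rounds=50,
                      learning_rate=0.1, explore_every=0):
    """Count how often each topic is shown by an engagement-greedy ranker.

    Each round the ranker shows the topic with the highest estimated click
    rate, then nudges that estimate toward the topic's true click rate.
    If explore_every > 0, every explore_every-th round shows the
    least-exposed topic instead.
    """
    estimates = dict(initial_estimate)
    exposure = {topic: 0 for topic in true_click_rate}
    for step in range(1, rounds + 1):
        if explore_every and step % explore_every == 0:
            shown = min(exposure, key=exposure.get)
        else:
            shown = max(estimates, key=estimates.get)
        exposure[shown] += 1
        estimates[shown] += learning_rate * (
            true_click_rate[shown] - estimates[shown]
        )
    return exposure


# Hypothetical numbers: the unfamiliar topic is almost as engaging,
# but the ranker starts with a slightly lower estimate for it.
TRUE_RATE = {"familiar": 0.60, "unfamiliar": 0.58}
PRIOR = {"familiar": 0.55, "unfamiliar": 0.50}

print(simulate_exposure(TRUE_RATE, PRIOR))
print(simulate_exposure(TRUE_RATE, PRIOR, explore_every=5))
```

In the purely greedy run the unfamiliar topic is never shown, so its estimate never gets a chance to improve. Reserving every fifth slot for the least-exposed topic gives it 10 of 50 exposures, which illustrates that the narrowing comes from the objective being optimized rather than from a technical limit.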

Epistemic bifurcation

The convenience of AI-driven discovery intensifies societal polarization by fragmenting shared informational baselines across demographic and ideological lines. As recommendation systems in services like YouTube or TikTok adapt to granular user behavior, they generate increasingly divergent knowledge ecosystems where individuals encounter different facts, sources, and narratives—effectively splitting the epistemic landscape into insulated domains. This fragmentation is accelerated by network effects and platform architectures that reward viral homogeneity within clusters while suppressing cross-cluster visibility. The underappreciated consequence is that the very personalization enabling discovery also erodes the common ground necessary for democratic discourse, turning convenience into a structural driver of cognitive divergence.
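One rough way to quantify a shared informational baseline is the overlap between the sets of sources different users encounter. The sketch below uses Jaccard similarity for that purpose; the choice of metric, the user labels, and the source names are assumptions made for illustration.

```python
from itertools import combinations


def jaccard(a, b):
    """Share of sources two feeds have in common (1.0 means identical)."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def mean_pairwise_overlap(feeds):
    """Average Jaccard overlap across all pairs of users' source sets."""
    pairs = list(combinations(feeds.values(), 2))
    if not pairs:
        return 1.0
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Hypothetical feeds: sources each user was shown.
SHARED = {"u1": {"A", "B", "C"}, "u2": {"A", "B", "D"}}
SPLIT = {"u1": {"A", "B"}, "u2": {"C", "D"}}

print(mean_pairwise_overlap(SHARED))
print(mean_pairwise_overlap(SPLIT))
```

A falling average overlap over time would be one signal of the fragmentation described above, although source overlap alone says nothing about whether users interpret shared material in the same way.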

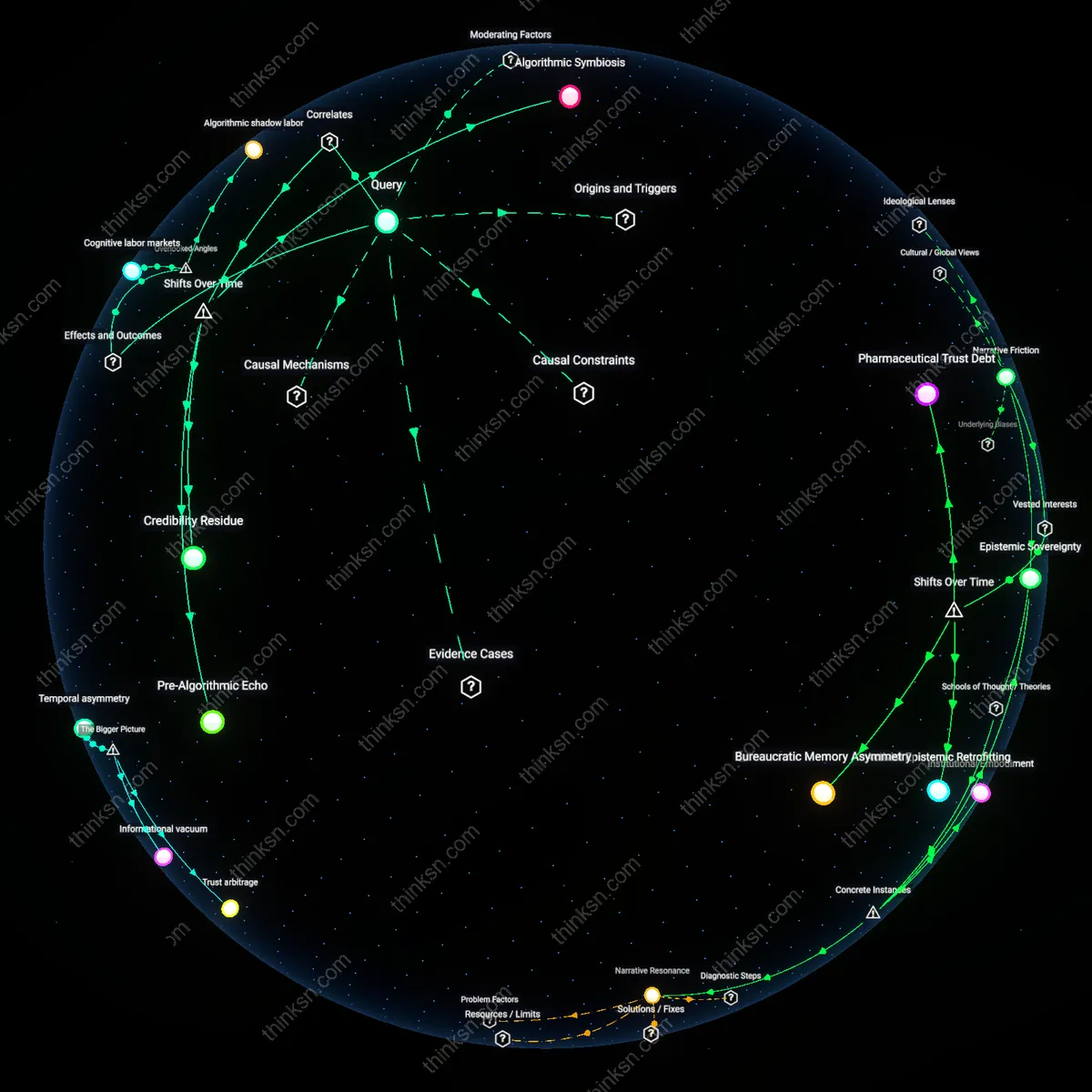

Curatorial abdication

Institutional actors such as libraries, universities, and public media are progressively outsourcing intellectual curation to AI systems, thereby relinquishing deliberate stewardship of knowledge diversity. As budget constraints and digital transformation pressures mount, these institutions integrate third-party recommendation engines, like those embedded in JSTOR or ProQuest, that follow engagement-based logic rather than pedagogical or pluralistic principles. This shift transfers normative control over what is deemed 'discoverable' from educators and librarians to opaque algorithms optimized for efficiency and scalability. The overlooked dynamic is that the convenience of AI curation costs more than serendipity: it rests on a systemic delegation of epistemic authority to commercial infrastructures with misaligned incentives.