Is Free Social Networking Worth Your Privacy?

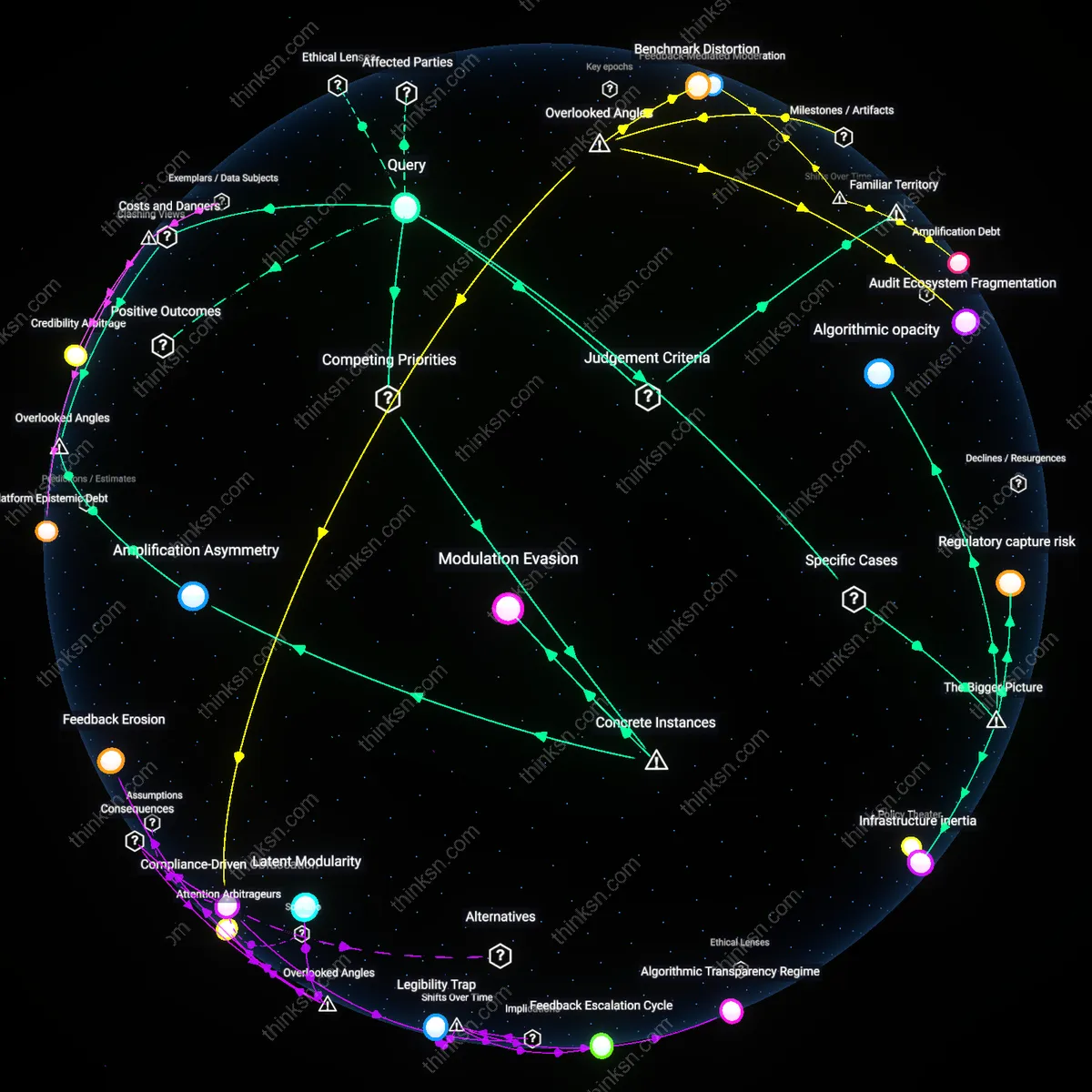

Analysis reveals 11 key thematic connections.

Key Findings

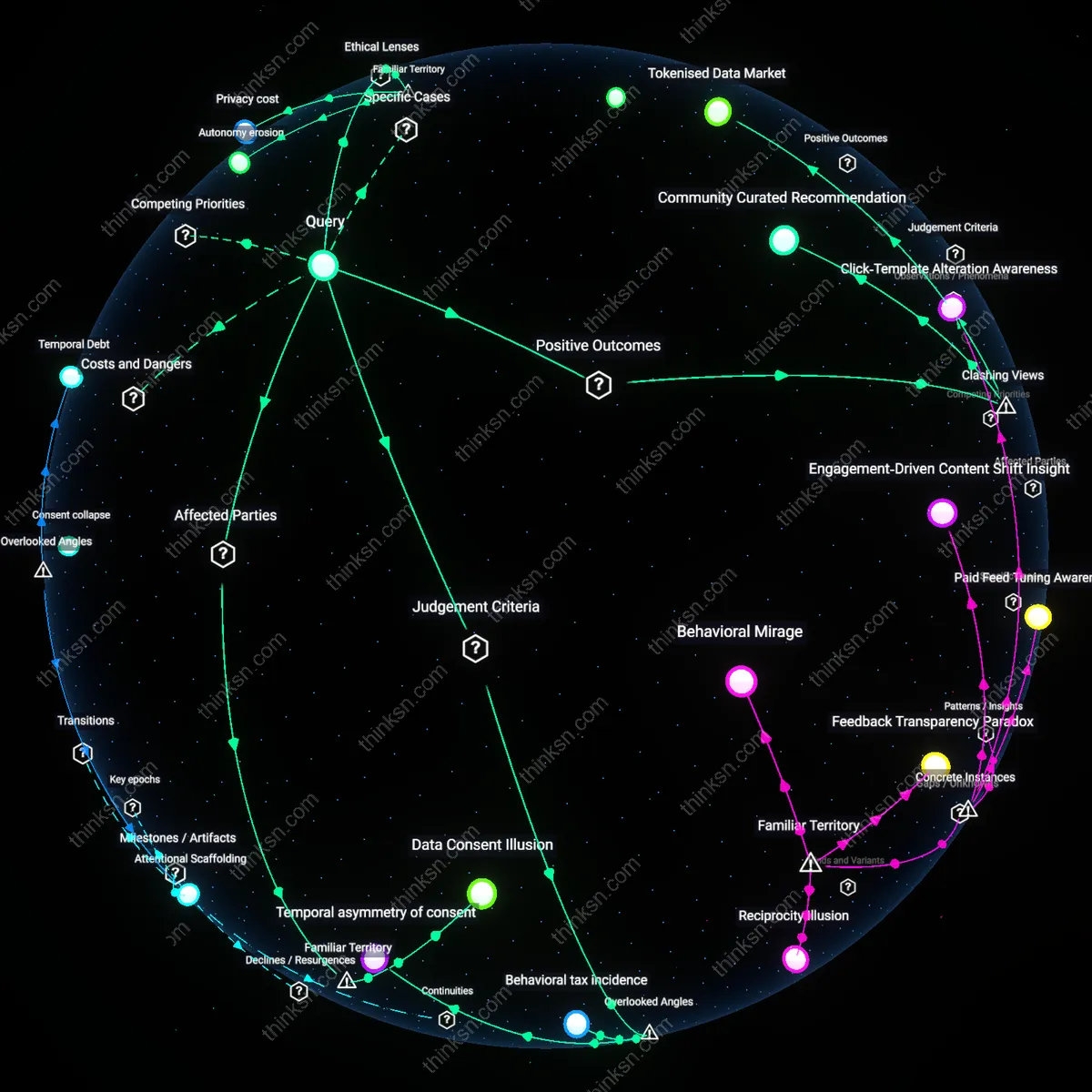

Data Consent Illusion

Consumers assess value on the assumption that agreeing to the terms of service reflects a meaningful choice. Most users click 'accept' on data policies without reading them, mistaking easy access to social media for negotiated consent, a dynamic amplified by interface designs such as dark patterns and default opt-ins. The non-obvious insight is that this familiar 'consent ritual' creates a false sense of agency. It centralizes corporate control while shifting risk onto individuals who believe they have made a trade, when in fact they have been enrolled in a system they do not govern.

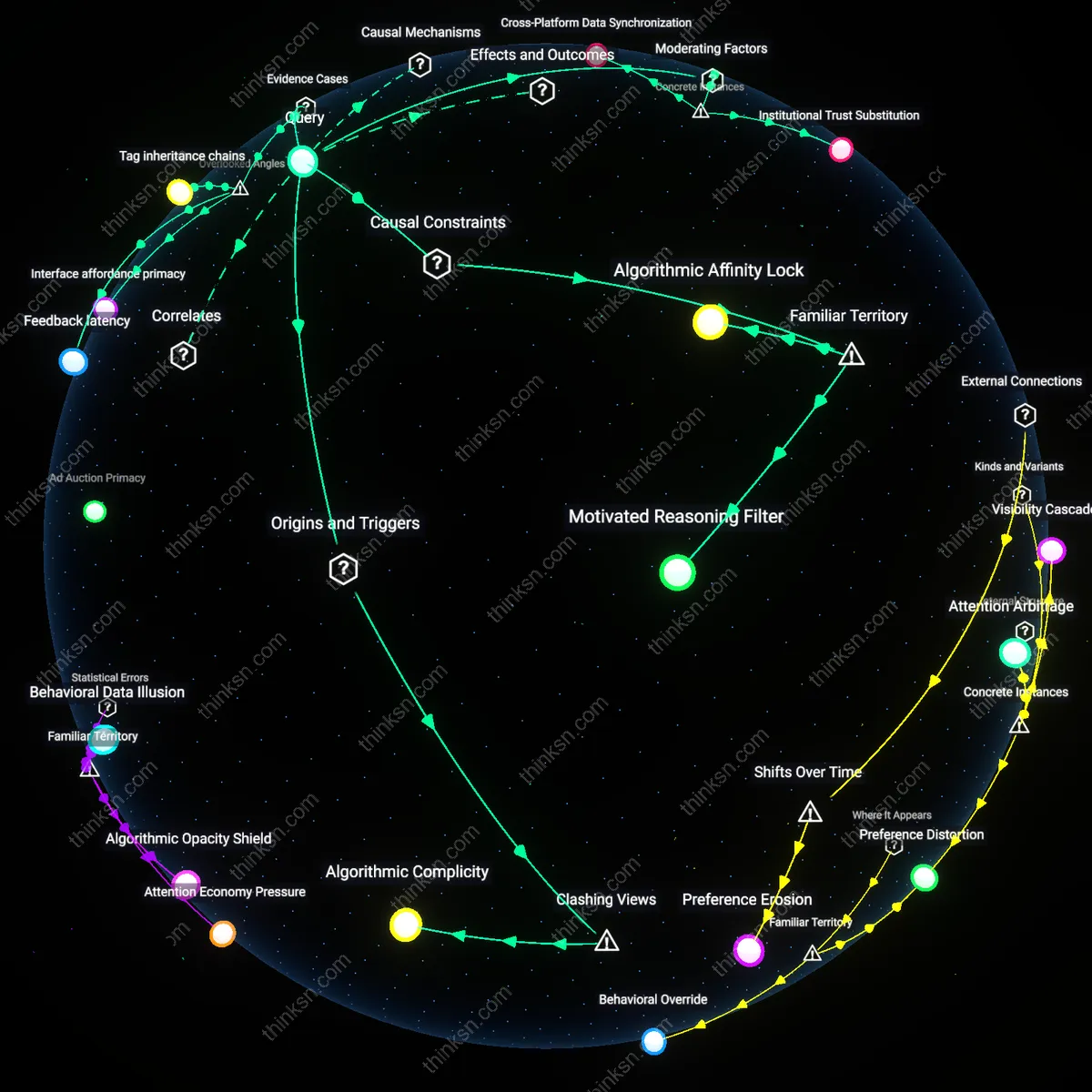

Attention Debt Trap

Consumers gauge value by comparing ad load and intrusiveness against platform utility, mistaking reduced disruption for fair exchange. Every notification, feed autoplay, and suggested post conditions users to tolerate more data harvesting in return for seamless engagement, trapping them in cycles of passive compliance. The underappreciated reality is that this trade feels voluntary because the cost—attentional fatigue and behavioral manipulation—is diffuse and cumulative, making harm imperceptible until dependency is entrenched.

Behavioral tax incidence

Consumers should assess the value exchange by evaluating how algorithmic filtering redistributes attentional costs across demographic groups. The burden of commercial data extraction falls unevenly on populations with less digital literacy or weaker privacy protections, producing a regressive effect in which marginalized users effectively subsidize the 'free' access enjoyed by others. This shifts the moral evaluation from individual autonomy to systemic distributive justice: the price of social media is not zero but is levied invisibly through differential exposure to manipulation. The overlooked dimension is neither consent nor transparency but the economic incidence of data harvesting, which, like a regressive tax, falls hardest on those least able to avoid or negotiate its terms.
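The tax-incidence analogy can be made concrete with a minimal sketch. It assumes, hypothetically, that each group's exposure to manipulation can be expressed as a monetised cost and compared with that group's resources. The `Group` type, its field names, and the figures in the usage example are illustrative inventions, not measurements. What the sketch shows is the paragraph's central point: a burden can be regressive even when better-resourced users bear a larger absolute cost.

```python
from dataclasses import dataclass


@dataclass
class Group:
    name: str
    resources: float      # hypothetical capacity to avoid or absorb extraction (e.g. annual income)
    exposure_cost: float  # hypothetical monetised cost of manipulation exposure per year


def burden_rates(groups: list[Group]) -> dict[str, float]:
    """Effective 'tax rate' per group: exposure cost as a share of resources."""
    return {g.name: g.exposure_cost / g.resources for g in groups}


def is_regressive(groups: list[Group]) -> bool:
    """True if the effective rate strictly falls as resources rise."""
    ordered = sorted(groups, key=lambda g: g.resources)
    rates = [g.exposure_cost / g.resources for g in ordered]
    return all(lower > higher for lower, higher in zip(rates, rates[1:]))


# Illustrative figures only: the better-resourced group pays more in absolute terms,
# yet the burden is regressive because it is a smaller share of what that group has.
groups = [
    Group("low", resources=20_000, exposure_cost=400),
    Group("mid", resources=45_000, exposure_cost=500),
    Group("high", resources=80_000, exposure_cost=600),
]
print(burden_rates(groups))
print(is_regressive(groups))  # True
```

Comparing rates rather than absolute costs is the design choice that mirrors how tax incidence is judged: what matters is the share of a group's capacity that the levy consumes.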

Temporal asymmetry of consent

Consumers cannot accurately assess the value exchange because their present agreement to data use cannot account for future contexts in which that data will be recombined, re-identified, or repurposed, as machine learning models retroactively extract meaning from behavioral traces originally generated in benign settings. This creates a temporal misalignment between consent and consequence, shifting the practical yardstick from informed choice to intertemporal harm exposure. The underappreciated factor is not the quantity of data but the irreversible compounding of inferential risk over time, which standard privacy frameworks largely ignore by treating consent as a one-time transaction rather than a continuously vulnerable state.
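The compounding of inferential risk can be illustrated with the standard formula for the probability that at least one of several events occurs. This is a minimal sketch under two loudly stated assumptions: that each period carries its own probability of a harmful re-use of the data, and that these per-period risks are independent. The function names and the 2% yearly figure are hypothetical.

```python
from itertools import accumulate
from math import prod


def cumulative_exposure(per_period_risk: list[float]) -> float:
    """Probability that at least one harmful re-use occurs across all periods,
    assuming (hypothetically) that the per-period risks are independent."""
    return 1 - prod(1 - p for p in per_period_risk)


def exposure_trajectory(per_period_risk: list[float]) -> list[float]:
    """Running cumulative exposure after each period. It can never decrease,
    which mirrors the irreversibility described in the text."""
    survival = accumulate((1 - p for p in per_period_risk), lambda a, b: a * b)
    return [1 - s for s in survival]


# Hypothetical: a 2% chance per year that new inference techniques extract something harmful.
ten_years = [0.02] * 10
print(round(cumulative_exposure(ten_years), 3))  # 0.183
```

A consent given at the start covers only the first period's risk, while the exposure the user actually carries is the running total, which is the temporal misalignment the paragraph describes.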

Infrastructural opacity gradient

Consumers lack evaluative footing because the technical layers mediating data extraction, such as real-time bidding protocols, identity resolution markets, and edge-computing inference engines, are kept largely out of public view, which makes the economic value derived from personal data effectively unknowable to the people who supply it. This epistemic barrier prevents any meaningful market-like judgment of whether the exchange is fair, shifting the relevant principle from consumer choice to epistemic justice. The overlooked dimension is not corporate surveillance per se but how the deliberate fragmentation and obfuscation of data supply chains make valuation impossible, rendering the 'free' label a category error in the absence of transparent pricing mechanisms.

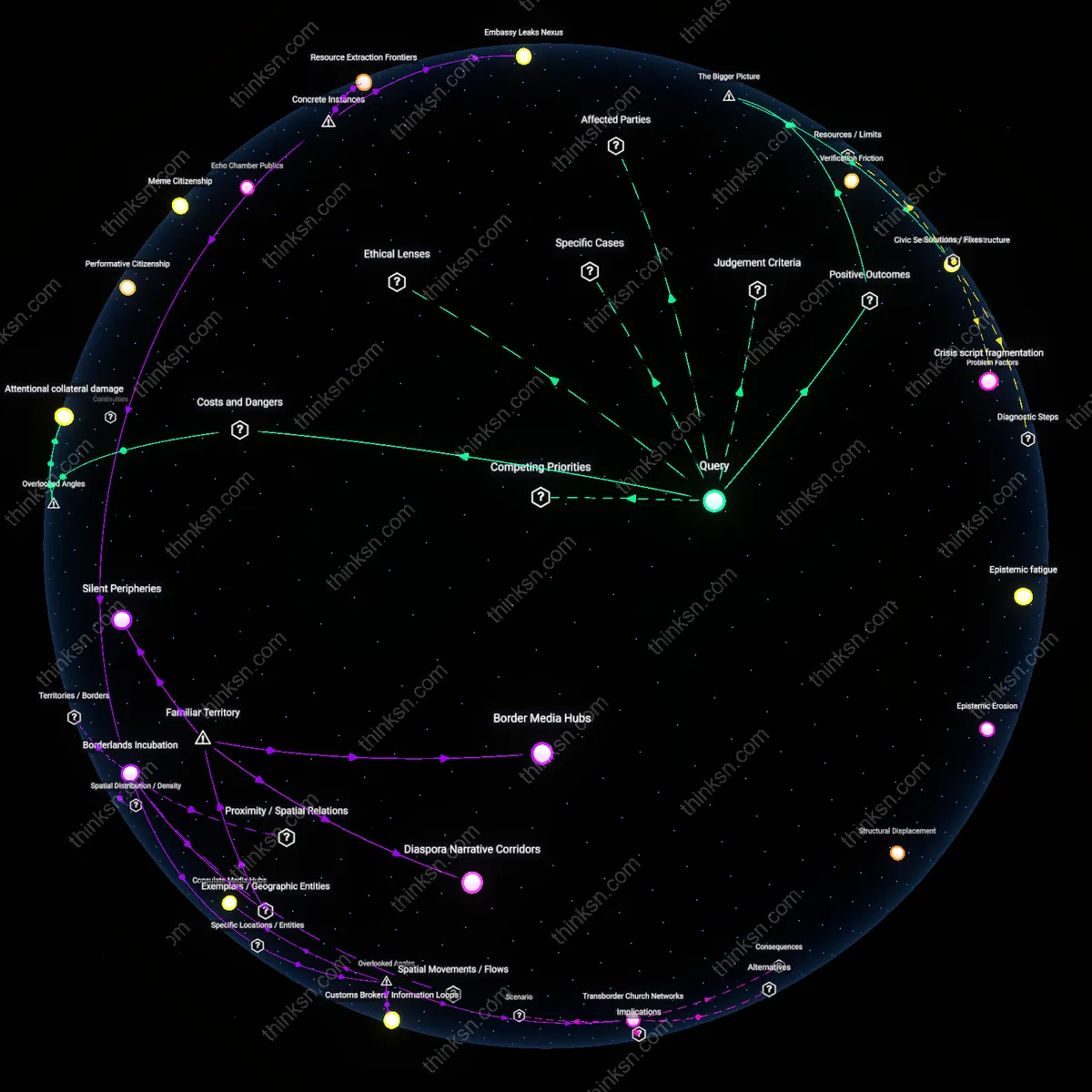

Tokenised Data Market

In this model, consumers enter a data token market in which each piece of personal information is assigned a token value and can be traded for direct financial remuneration while the platform itself remains free. Tokenised data is sold to third-party advertisers through a privacy-preserving ledger, generating a revenue stream that is distributed back to users and offsets the cost of running the free service. This reframes the data-for-ads trade-off as a negotiated exchange rather than a unilateral extraction, suggesting that users could both profit from and control their data.
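The text does not say how ledger revenue would be split, so the following is only a minimal sketch under assumed rules: a fixed platform share offsets the cost of the free service, and the remainder is paid to users in proportion to the tokens they contributed. Amounts are kept in whole cents, and leftover cents go to the largest fractional remainders so that payouts add up exactly. The function name, the 30% platform share, and the ledger contents are hypothetical, and the privacy-preserving ledger itself is not modelled.

```python
def distribute_revenue(revenue_cents: int, platform_share: float,
                       tokens: dict[str, int]) -> dict[str, int]:
    """Split advertiser revenue from tokenised data (hypothetical rules).

    A platform share offsets the cost of the free service; the rest is paid
    to users pro rata to the tokens they contributed, in whole cents.
    """
    total_tokens = sum(tokens.values())
    if total_tokens == 0:
        return {}
    pool = revenue_cents - round(revenue_cents * platform_share)
    # Floor each user's pro-rata share so that payouts never exceed the pool.
    payouts = {user: pool * t // total_tokens for user, t in tokens.items()}
    # Hand the leftover cents to the users with the largest fractional remainders.
    leftover = pool - sum(payouts.values())
    by_remainder = sorted(tokens, key=lambda u: (pool * tokens[u]) % total_tokens, reverse=True)
    for user in by_remainder[:leftover]:
        payouts[user] += 1
    return payouts


# Hypothetical ledger: token counts each user contributed to one advertiser sale.
ledger = {"ana": 5, "ben": 3, "cy": 2}
print(distribute_revenue(10_000, platform_share=0.30, tokens=ledger))
# {'ana': 3500, 'ben': 2100, 'cy': 1400}
```

Working in integer cents avoids floating-point drift in payouts, which matters if users are meant to audit their share against the ledger.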

Community Curated Recommendation

By joining local, community-curated recommendation networks that rely on anonymised aggregate data, users would receive highly relevant content while the platform drops intrusive tracking ads. These networks compute ad-like sponsorships using homomorphic encryption, which lets them aggregate encrypted user signals without decrypting any individual's data, so users see only context-appropriate offers that directly support nearby businesses. The expected result is lower cognitive load and less advertising fatigue, suggesting that privacy-first ad models can improve user experience, a counterintuitive benefit given that advertising is normally perceived as harmful.
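The text does not name an encryption scheme. The sketch below assumes Paillier, a well-known additively homomorphic scheme, to show the core idea: members encrypt their individual opt-in signals, an aggregator multiplies the ciphertexts (which adds the hidden values), and only the key holder decrypts the total. The helper names `encrypt`, `decrypt`, and `add_encrypted` are hypothetical. The primes are tiny for readability, so this is **not secure**, and who would hold the private key in a real network is left open. It requires Python 3.9+ for `math.lcm`.

```python
import math
import random

# Toy Paillier parameters: tiny primes for illustration only, NOT secure.
P, Q = 293, 433
N = P * Q
N_SQ = N * N
G = N + 1                     # common simplification g = n + 1
LAM = math.lcm(P - 1, Q - 1)  # Carmichael function lambda(n)
MU = pow(LAM, -1, N)          # valid because g = n + 1 gives L(g^lam mod n^2) = lam mod n


def encrypt(m: int, rng: random.Random) -> int:
    """Encrypt integer m (0 <= m < N) with fresh randomness r coprime to N."""
    while True:
        r = rng.randrange(1, N)
        if math.gcd(r, N) == 1:
            break
    return (pow(G, m, N_SQ) * pow(r, N, N_SQ)) % N_SQ


def decrypt(c: int) -> int:
    """Recover the plaintext using L(x) = (x - 1) // N."""
    x = pow(c, LAM, N_SQ)
    return ((x - 1) // N) * MU % N


def add_encrypted(ciphertexts: list[int]) -> int:
    """Multiplying Paillier ciphertexts adds the underlying plaintexts."""
    total = 1
    for c in ciphertexts:
        total = (total * c) % N_SQ
    return total


rng = random.Random(0)
# Hypothetical opt-in signals: 1 = member is interested in a local-bakery sponsorship.
signals = [1, 0, 1, 1, 0]
aggregate = add_encrypted([encrypt(s, rng) for s in signals])
print(decrypt(aggregate))  # 3
```

The aggregator only ever handles ciphertexts, so it learns the community-level interest count needed to place a sponsorship without learning any single member's signal.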

Ad‑Feedback Governance

Targeted ads can be turned into a participatory governance feedback loop in which users' ad-relevance scores directly inform algorithmic adjustments and platform policy. Each interaction with an ad generates a data point that feeds a transparent decision-making dashboard, letting users see how their signals shift content curation and advertising thresholds. This turns the ad ecosystem from a covert extraction mechanism into an explicit, two-way dialogue between users and the platform, suggesting that consumer engagement with advertising could actively shape the social network's evolution.
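One way to picture the loop is a policy object that turns aggregated user ratings into a threshold change and records every change in a public log. This is a minimal sketch under assumed rules that the text does not specify: ratings on a 1 to 5 scale, a fixed target mean, a fixed step size, and a "dashboard" reduced to a list of log strings. `AdPolicy` and all of its parameters are hypothetical.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class AdPolicy:
    threshold: float = 0.5  # hypothetical minimum predicted relevance for an ad to be shown
    target: float = 3.5     # hypothetical target mean user rating on a 1-5 scale
    step: float = 0.05      # how far one feedback round moves the threshold
    log: list[str] = field(default_factory=list)  # stand-in for the public dashboard

    def apply_feedback(self, ratings: list[int]) -> float:
        """Adjust the relevance threshold from one round of user ratings and log the change."""
        avg = mean(ratings)
        if avg < self.target:
            new = min(1.0, self.threshold + self.step)  # users unhappy: fewer, more relevant ads
        else:
            new = max(0.0, self.threshold - self.step)  # users satisfied: relax slightly
        self.log.append(f"mean rating {avg:.2f}: threshold {self.threshold:.2f} -> {new:.2f}")
        self.threshold = new
        return new

    def should_show(self, predicted_relevance: float) -> bool:
        return predicted_relevance >= self.threshold


policy = AdPolicy()
policy.apply_feedback([2, 3, 3])  # users rate recent ads as mostly irrelevant
print(policy.log[-1])             # mean rating 2.67: threshold 0.50 -> 0.55
```

Logging every adjustment alongside the rating that caused it is what makes the loop inspectable: users can trace a policy change back to their own feedback.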

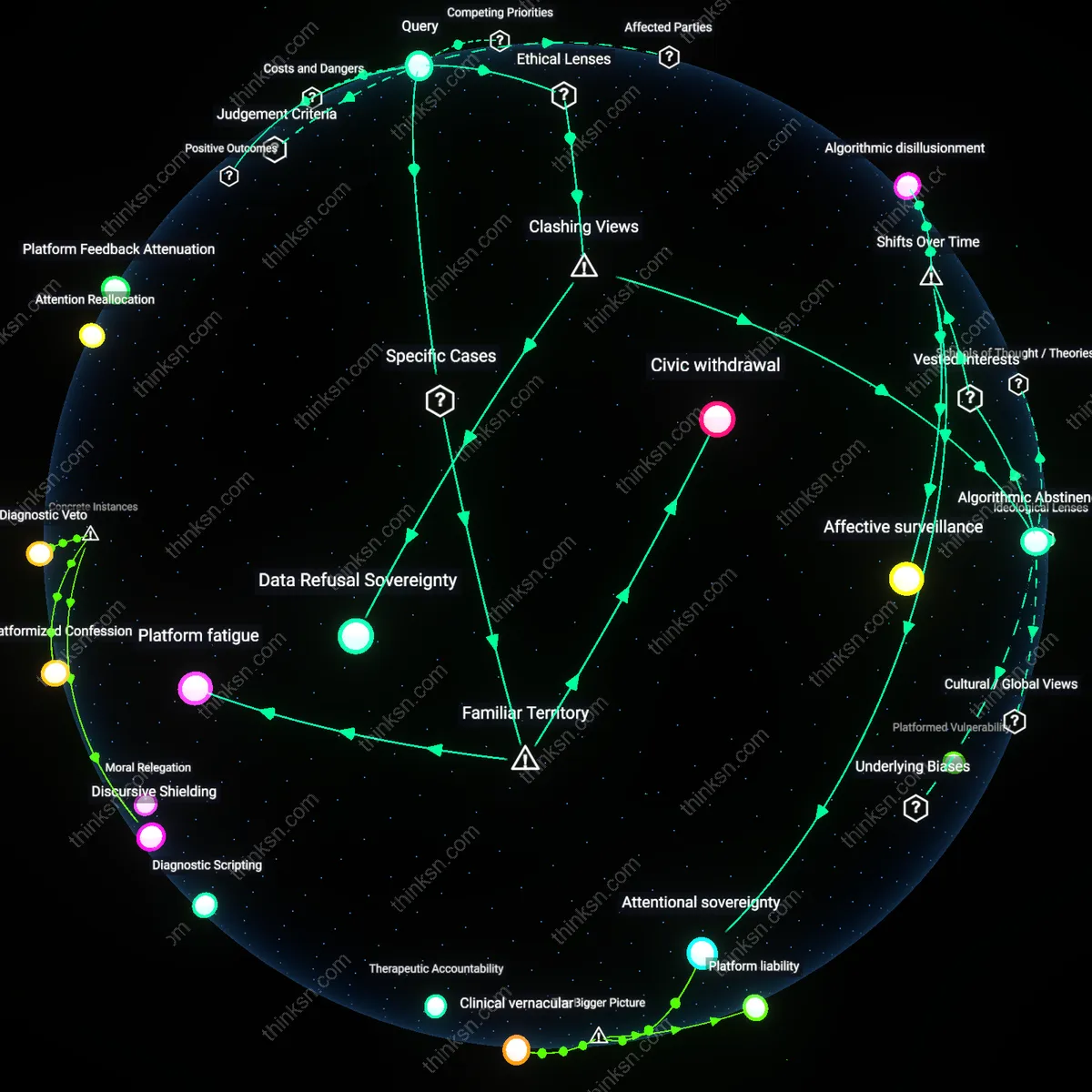

Privacy cost

Consumers can assess the trade-off by quantifying the utility gained from free access, such as hours of uninterrupted service, against the utility lost when their data is processed and monetized under GDPR-regulated consent mechanisms. The actors are the consumer, the platform, and EU data-protection regulators; the mechanism is the value chain that converts harvested data into advertising revenue in place of service fees. This operates through the principle of proportionality that runs through EU data-protection law, under which processing must be necessary for, and balanced against, the purpose it serves. The under-appreciated element is that the consumer can use the GDPR right of access (Article 15) to learn what data the platform holds, why it is processed, and to whom it is disclosed. The regulation does not require the platform to produce a cost-benefit calculation, so the valuation remains the consumer's task, but this rarely exercised right supplies the factual inputs for it.
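Once both sides are expressed in the same unit, the comparison this paragraph describes reduces to simple arithmetic. The sketch below assumes, hypothetically, that a consumer can put a monetary value on an hour of service and on the expected privacy harm of each data point processed. The `TradeOff` class, its fields, and the example figures are illustrative inventions rather than any prescribed method.

```python
from dataclasses import dataclass


@dataclass
class TradeOff:
    hours_of_service: float     # hours of use obtained from free access
    value_per_hour: float       # hypothetical value the consumer places on one hour
    data_points_shared: int     # hypothetical count of data points processed for ads
    harm_per_data_point: float  # hypothetical expected privacy harm per data point

    def benefit(self) -> float:
        return self.hours_of_service * self.value_per_hour

    def privacy_cost(self) -> float:
        return self.data_points_shared * self.harm_per_data_point

    def net_value(self) -> float:
        """Positive means free access outweighs the privacy cost under these assumptions."""
        return self.benefit() - self.privacy_cost()

    def break_even_harm(self) -> float:
        """Per-data-point harm at which the trade-off is exactly neutral."""
        if self.data_points_shared == 0:
            return float("inf")  # nothing shared, so no harm level can offset the benefit
        return self.benefit() / self.data_points_shared


month = TradeOff(hours_of_service=100, value_per_hour=0.5,
                 data_points_shared=240, harm_per_data_point=0.25)
print(month.net_value())  # -10.0
print(month.break_even_harm())
```

The break-even figure is often more useful than the net value, because the per-data-point harm is the input a consumer is least able to estimate; it states how large that harm would have to be before free access stops paying off.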

Autonomy erosion

A Kantian consumer evaluates the trade-off by treating targeted advertising as a potential violation of the categorical imperative, specifically its requirement that persons be treated as ends in themselves and never merely as means. The actors are the data subject and the platform that acts upon them; the mechanism is consent validation through explicit opt-in, without which the platform treats the person's data merely as a means to profit. This operates within the legal doctrine of informed consent, but the analytical gain lies in formalizing an autonomy-compromise metric that treats non-consensual data use as a measurable erosion of personal agency. The non-obvious insight is that consumers can refuse benefits such as free access in order to preserve their autonomy, an option many overlook when habituated to expecting free services.

Surveillance burden

From a libertarian-liberal viewpoint, consumers assess the trade-off by benchmarking the economic utility of free platforms against the surveillance burden incurred through data commodification. The actors are individual users, private firms, and, implicitly, the state through regulatory oversight; the mechanism is data-monetization contracts that turn behavioral profiles into targeted ads. This sits within the political doctrine of minimal state intrusion, yet the analytical tool becomes a surveillance-burden index that captures the cumulative effect of ever-expanding data footprints. The overlooked aspect is that repeated data exchanges with many small commercial entities, whose records are later aggregated, can erode personal privacy faster than typical state surveillance does, shifting the trade-off away from the individual and toward a collective commons.