Is Twitter's Diverse Viewpoint Worth Its Sensationalist Bias?

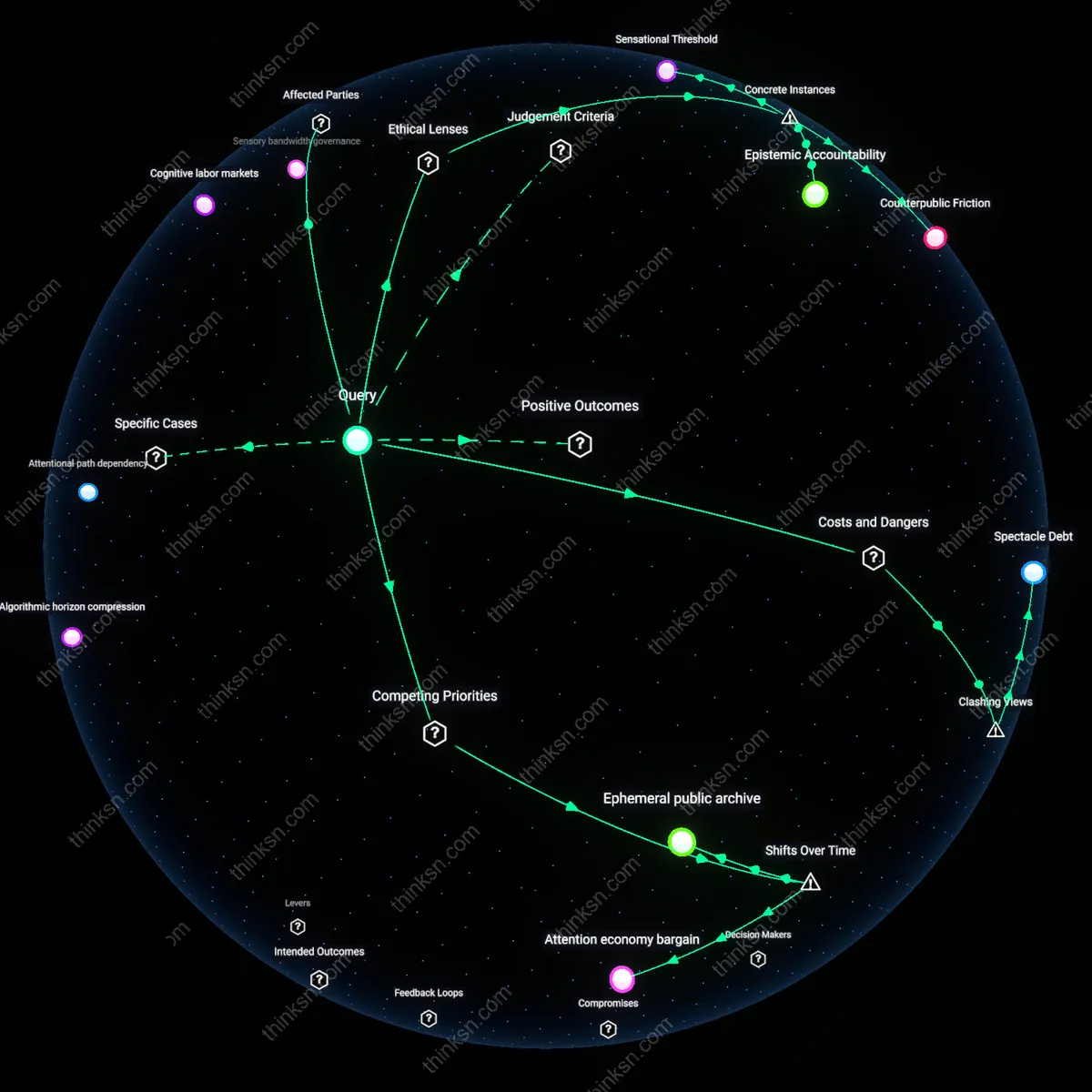

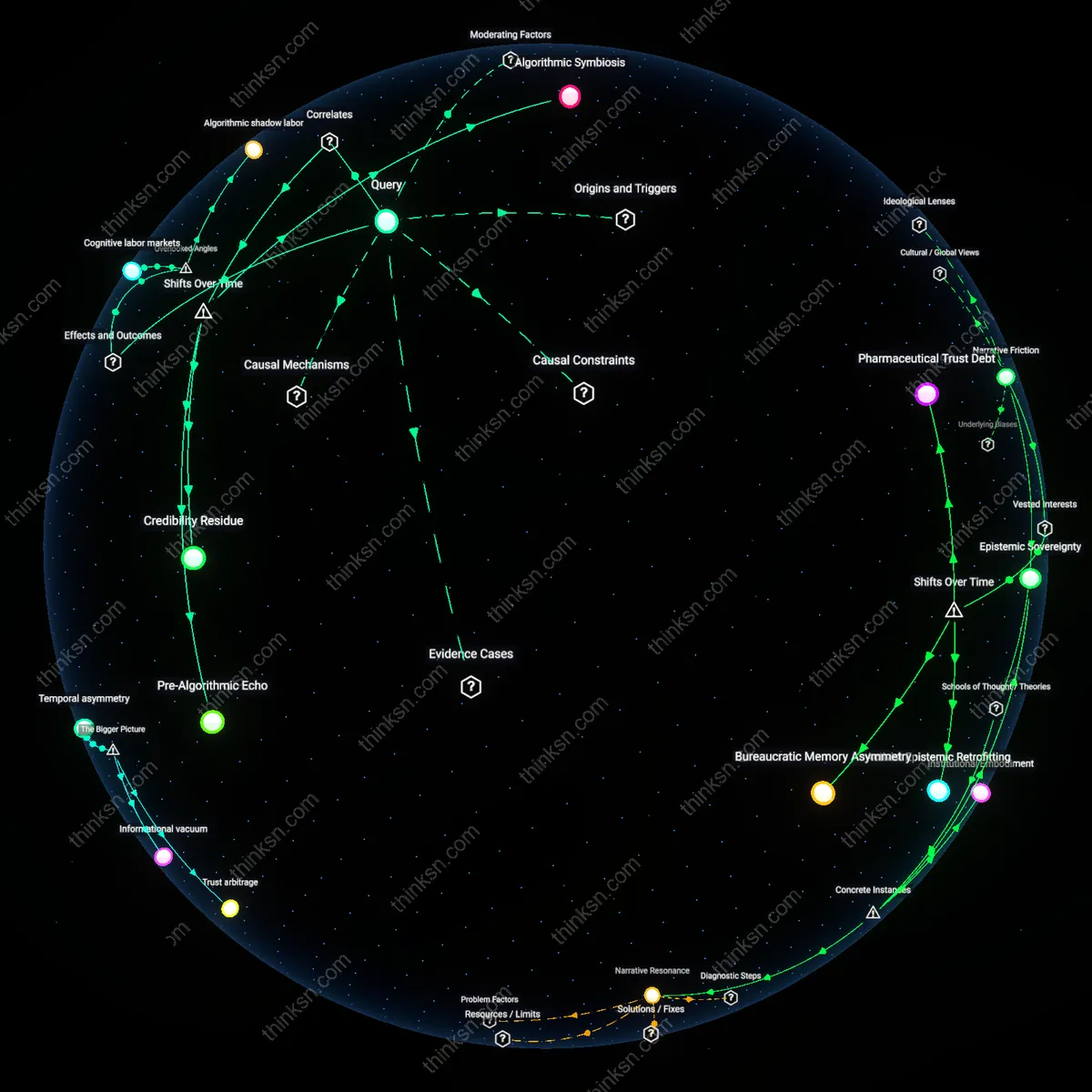

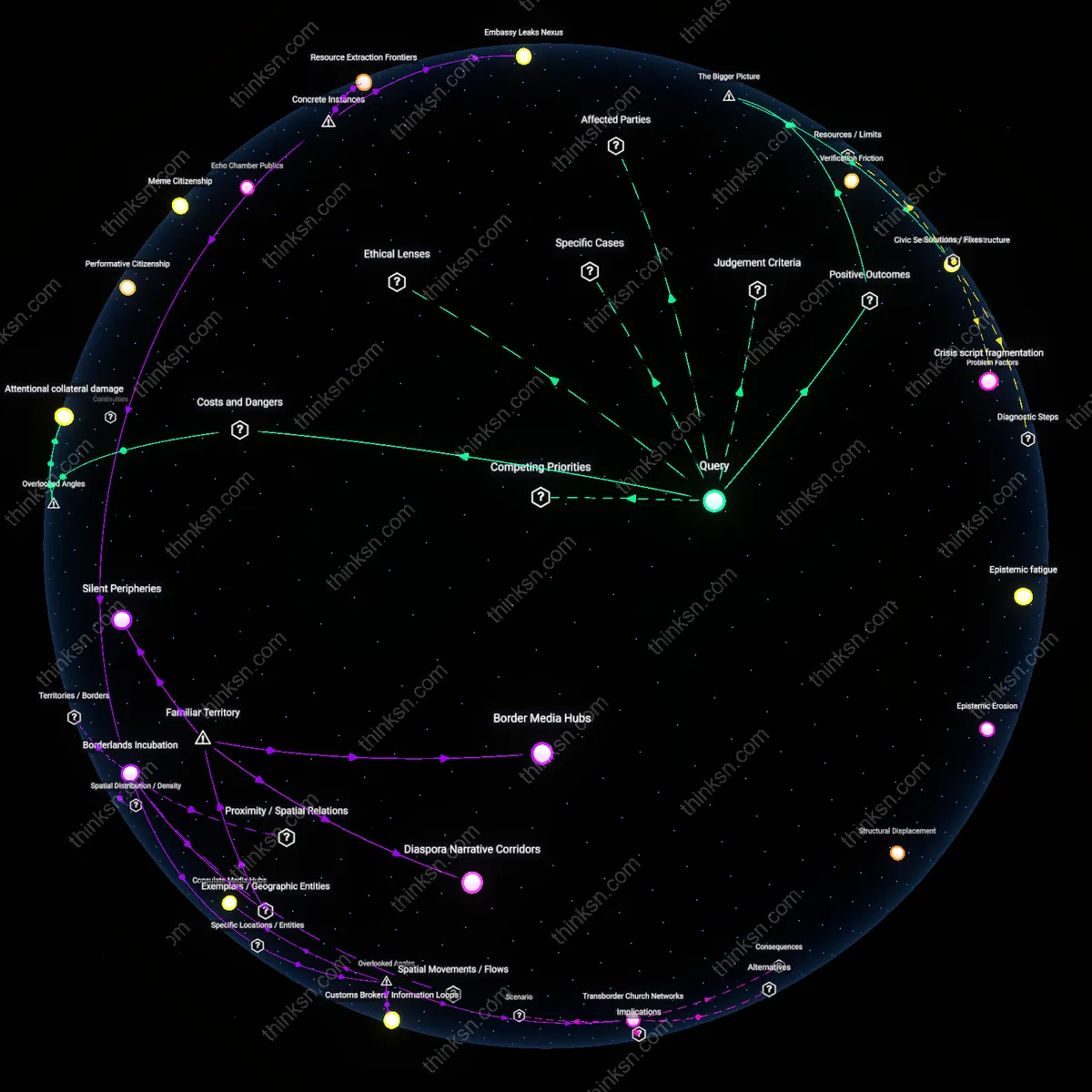

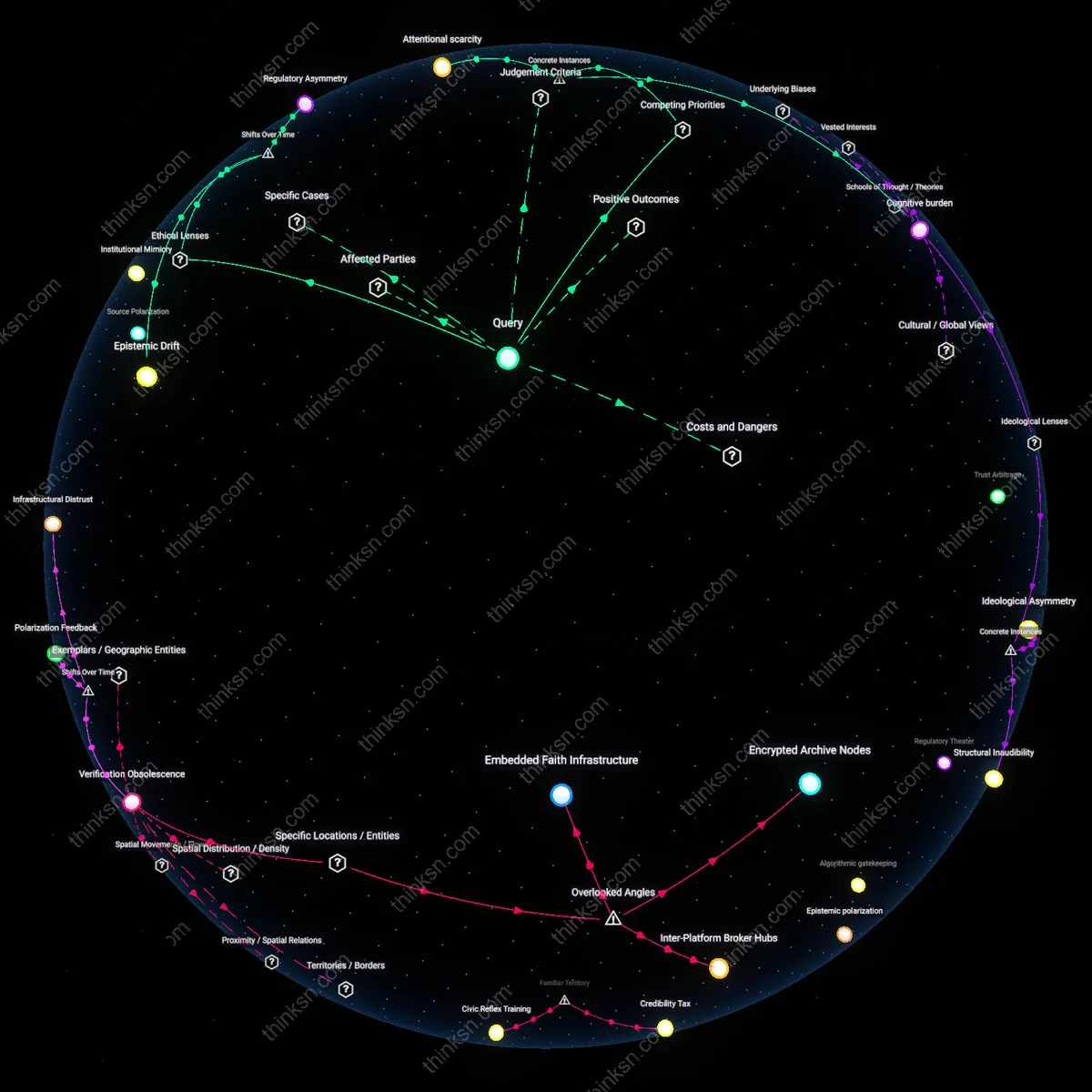

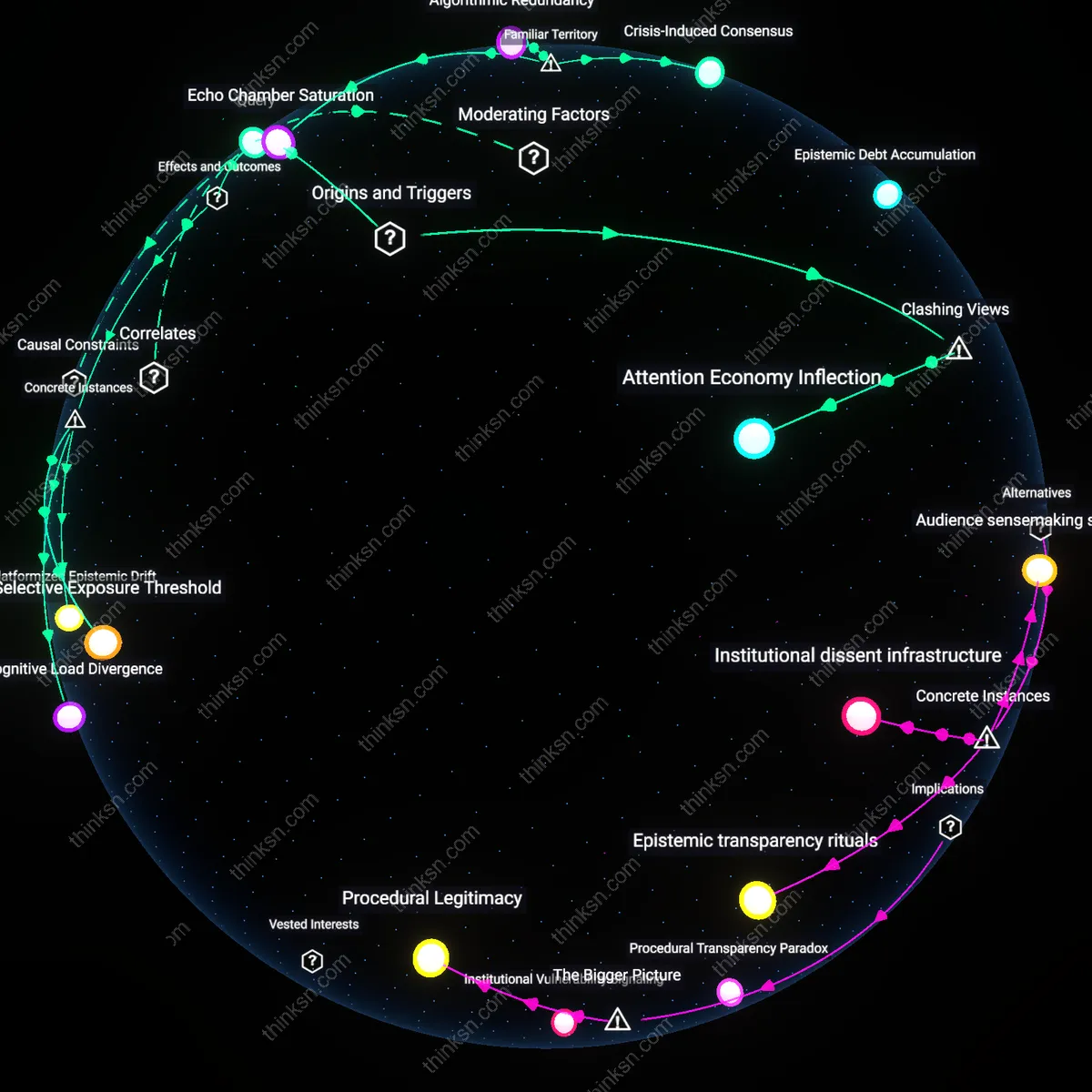

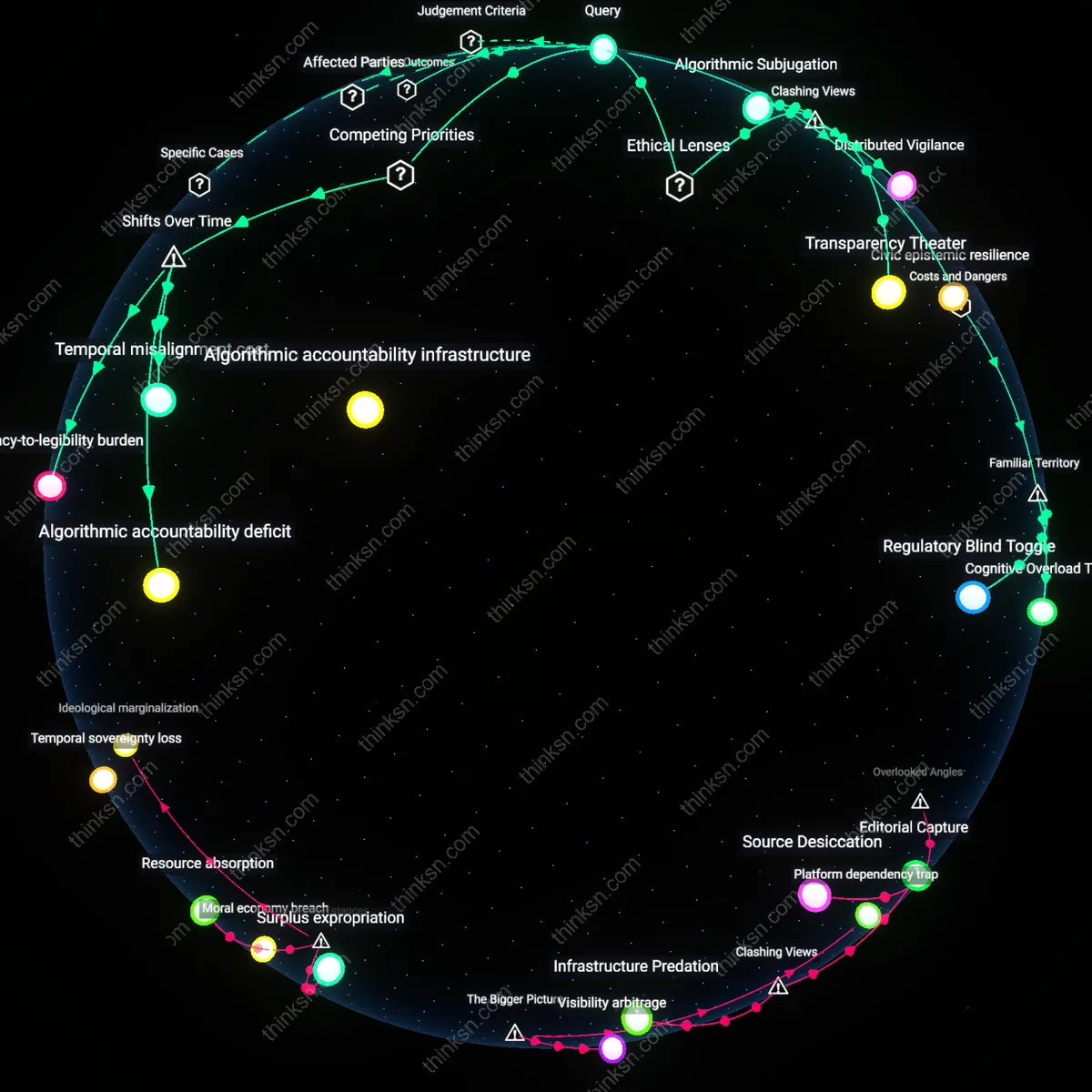

Analysis reveals 9 key thematic connections.

Key Findings

Cognitive labor markets

Compensating marginalized knowledge producers directly is one way to diversify what Twitter's recommendation algorithms surface, and it reduces sensationalism's dominance by changing the incentives facing underrepresented experts. Professional fact-checkers, indigenous knowledge holders, and community moderators often withdraw from Twitter because of epistemic exploitation; they can re-engage when monetization rewards depth rather than virality, shifting content ecosystems toward sustained dialogue. This changes how credibility is distributed, and it shows that algorithmic sensationalism persists not only because of platform design but also because the unpaid cognitive labor of niche perspectives is systematically extracted and discarded. Most analyses ignore how value extraction from expert attention, not just engagement metrics, sustains epistemic imbalance, which makes cognitive labor markets the hidden economic substrate of content toxicity.

Sensory bandwidth governance

Regulating the sensory modality distribution of algorithmically amplified content—such as privileging long-form audio threads over rapid-fire image-text blends—can temper sensationalism while preserving perspective diversity by modulating emotional throughput. Deaf advocates and neurodivergent users, who experience algorithmic amplification differently due to reliance on visual versus auditory processing, expose how platform 'neutrality' in format prioritization covertly amplifies affective reactivity. By recognizing that the sensory form of content determines its potential for distortion independent of viewpoint, interventions can decouple diversity from speed, a dimension overlooked because policy debates fixate on speech rather than perceptual load. This reframes algorithmic responsibility as a question of sensory bandwidth governance, not merely editorial judgment.

Infrastructural memory asymmetry

Preserving low-engagement, high-context exchanges in searchable, archived thread structures counters algorithmic sensationalism by enabling later citation and delayed recognition of nuanced perspectives. Migrant worker collectives and diaspora activists, whose initial posts often lack immediate traction but gain significance during crises, depend on infrastructural memory that current algorithms erase through temporal decay and engagement-based ranking. The absence of persistent, context-retaining backchannels allows superficial narratives to dominate not because they are preferred, but because they are the only ones algorithmically accessible over time. This hidden dependency—infrastructural memory asymmetry—exposes how forgetting, not just amplification, drives epistemic distortion, a mechanism absent in mainstream critiques focused solely on visibility.
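The interaction between temporal decay and engagement-based ranking described above can be made concrete with a small sketch. The scoring rule below (engagement discounted by an exponential half-life) is a common textbook form chosen for illustration; it is an assumption, not Twitter's actual ranking function, and the `Post` type, the `half_life_hours` parameter, and the sample numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Post:
    text: str
    engagements: int   # hypothetical aggregate of likes, replies, and reposts
    age_hours: float   # time since posting


def decayed_score(post: Post, half_life_hours: float = 6.0) -> float:
    """Engagement count discounted by exponential decay with the given half-life."""
    return post.engagements * 0.5 ** (post.age_hours / half_life_hours)


def rank(posts: list[Post], half_life_hours: float = 6.0) -> list[Post]:
    """Order posts from highest to lowest decayed score."""
    return sorted(posts, key=lambda p: decayed_score(p, half_life_hours), reverse=True)


# Illustrative data only: a recent high-engagement post and an older,
# low-engagement, high-context thread.
posts = [
    Post("viral hot take", engagements=5000, age_hours=2),
    Post("detailed thread on labor conditions", engagements=40, age_hours=72),
]

for post in rank(posts):
    print(f"{decayed_score(post):10.4f}  {post.text}")
```

Under these assumptions the three-day-old thread scores close to zero regardless of its content, which is the sense in which a ranking of this kind "forgets" older material unless a separate archive or search path keeps it reachable.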

Spectacle Debt

Users from historically silenced groups must accrue social capital through repeated performance of trauma or crisis to gain algorithmic traction, creating a recursive burden where representation depends on reenactment. On Twitter, content documenting racial violence, gendered harassment, or economic precarity generates outsized engagement not because of its informational value but because it satisfies the platform’s appetite for emotional spectacle, yet this attention rarely translates into material support or systemic redress. As a result, marginalized individuals and advocacy groups internalize the necessity of perpetual exposure to harm as the price of visibility, reinforcing cycles of psychological strain and narrative exploitation. The underappreciated danger is that diversity itself becomes a form of algorithmic labor: authentic perspectives are not integrated but extracted through a feedback loop that consumes personal suffering to fuel engagement metrics, leaving behind emotional exhaustion and representational burnout.

Attention economy bargain

Shifting from chronological to engagement-based ranking in 2016 institutionalized a trade-off where user exposure to diverse perspectives became subordinate to algorithmic amplification of emotionally charged content. This change, driven by Twitter’s need to sustain ad revenue amid slowing user growth, embedded a mechanistic preference for rage, urgency, and moral outrage into content distribution—effectively monetizing cognitive bias. The non-obvious consequence is that diversity of perspective persists only when it is weaponized into conflict, making pluralism structurally dependent on hostility rather than coexistence.
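To illustrate the difference between the two orderings, here is a minimal sketch that sorts the same feed chronologically and by a weighted engagement score. The `Tweet` fields, the weights, and the sample counts are assumptions made for the example; Twitter's production ranking is far more complex and is not reproduced here.

```python
from dataclasses import dataclass


@dataclass
class Tweet:
    text: str
    posted_at: int  # hypothetical timestamp; larger means more recent
    likes: int
    replies: int
    reposts: int


def engagement_score(t: Tweet, w_likes: float = 1.0,
                     w_replies: float = 2.0, w_reposts: float = 3.0) -> float:
    """Weighted sum of interactions; the weights are illustrative assumptions."""
    return w_likes * t.likes + w_replies * t.replies + w_reposts * t.reposts


def chronological(feed: list[Tweet]) -> list[Tweet]:
    """Newest first, ignoring engagement entirely."""
    return sorted(feed, key=lambda t: t.posted_at, reverse=True)


def engagement_ranked(feed: list[Tweet]) -> list[Tweet]:
    """Highest engagement score first, ignoring recency entirely."""
    return sorted(feed, key=engagement_score, reverse=True)


# Illustrative data only.
feed = [
    Tweet("calm explainer", posted_at=300, likes=20, replies=2, reposts=1),
    Tweet("neutral update", posted_at=200, likes=10, replies=1, reposts=0),
    Tweet("outrage post", posted_at=100, likes=200, replies=150, reposts=90),
]

print([t.text for t in chronological(feed)])
print([t.text for t in engagement_ranked(feed)])
```

In this toy feed the oldest item moves from last place to first once engagement replaces recency as the sort key. Because replies and reposts are weighted more heavily than likes in this sketch, content that provokes argument gains the most, which is one way to read the claim that pluralism becomes dependent on conflict.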

Ephemeral public archive

The platform’s transition from a real-time messaging service (circa 2010–2015) to an algorithmically curated news ecosystem eroded the original expectation that public discourse would remain accessible and contextually stable. As of 2022, with the prominence of For You feeds and disappearing trends, tweets promoting marginalized viewpoints often gain visibility only to be algorithmically buried within hours, creating a temporal fragility in diverse expression. The underappreciated outcome is that diversity is no longer suppressed by absence but by scheduled obscurity—where inclusion is fleeting, unarchived, and thus incapable of building sustained counter-narratives.

Epistemic Accountability

California’s 2022 Digital Platform Commission hearings revealed that applying public utility ethics to content moderation, modeled on municipal oversight boards, can institutionalize diverse community input into algorithmic curation and temper sensationalism by embedding equity reviews into platform governance. This challenges the assumption that moderation must be either reactive or ideologically driven. It shows how procedural fairness, rooted in Rawlsian justice theory, can systematically elevate marginalized voices without compromising coherence, a mechanism underappreciated in debates dominated by free speech absolutism.

Sensational Threshold

After the 2019 Sri Lankan Easter Sunday bombings, Facebook and Twitter were found to have amplified extremist manifestos over crisis response information due to engagement-based ranking. This prompted the UN Human Rights Council to designate algorithmic amplification of hate content as a potential violation of the 'duty to protect' under the Responsibility to Protect (R2P) doctrine. The episode reveals that algorithmic sensationalism can constitute structural negligence when platforms function as de facto public spheres during mass violence, a link overlooked in consumer-centric regulatory models.

Counterpublic Friction

The #EndSARS movement in Nigeria (2020) demonstrated how citizen coalitions, ignited by state violence, used Twitter’s viral mechanics not to amplify spectacle but to coordinate forensic documentation and legal advocacy. They subverted algorithmic sensationalism by repurposing trending topics into evidence repositories scrutinized by international courts. This exposes how marginalized groups can weaponize visibility norms against dominant narratives, a tactic absent from Western-centric design ethics rooted in liberal pluralism.