Free Email Safety: Privacy vs. Personalized Ads?

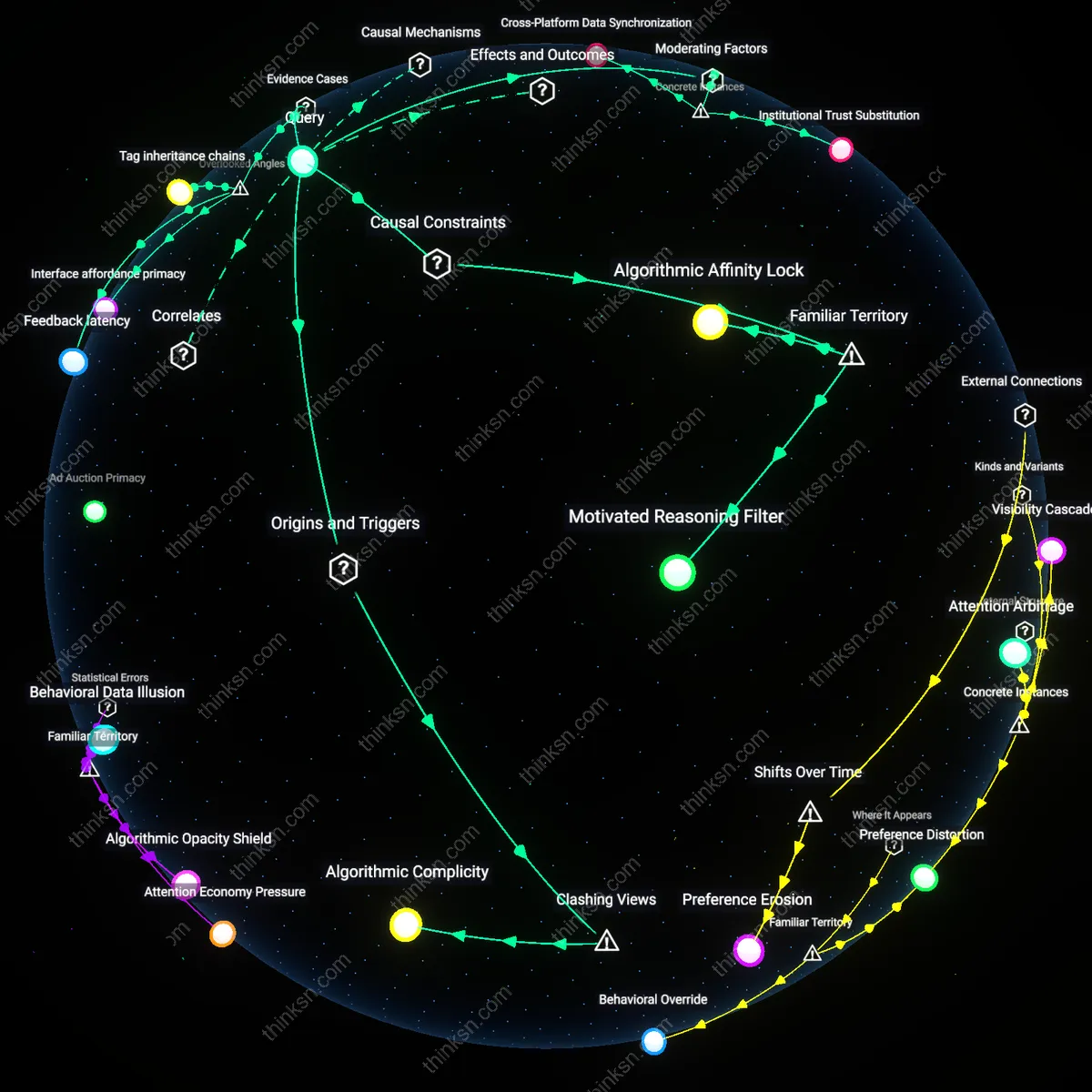

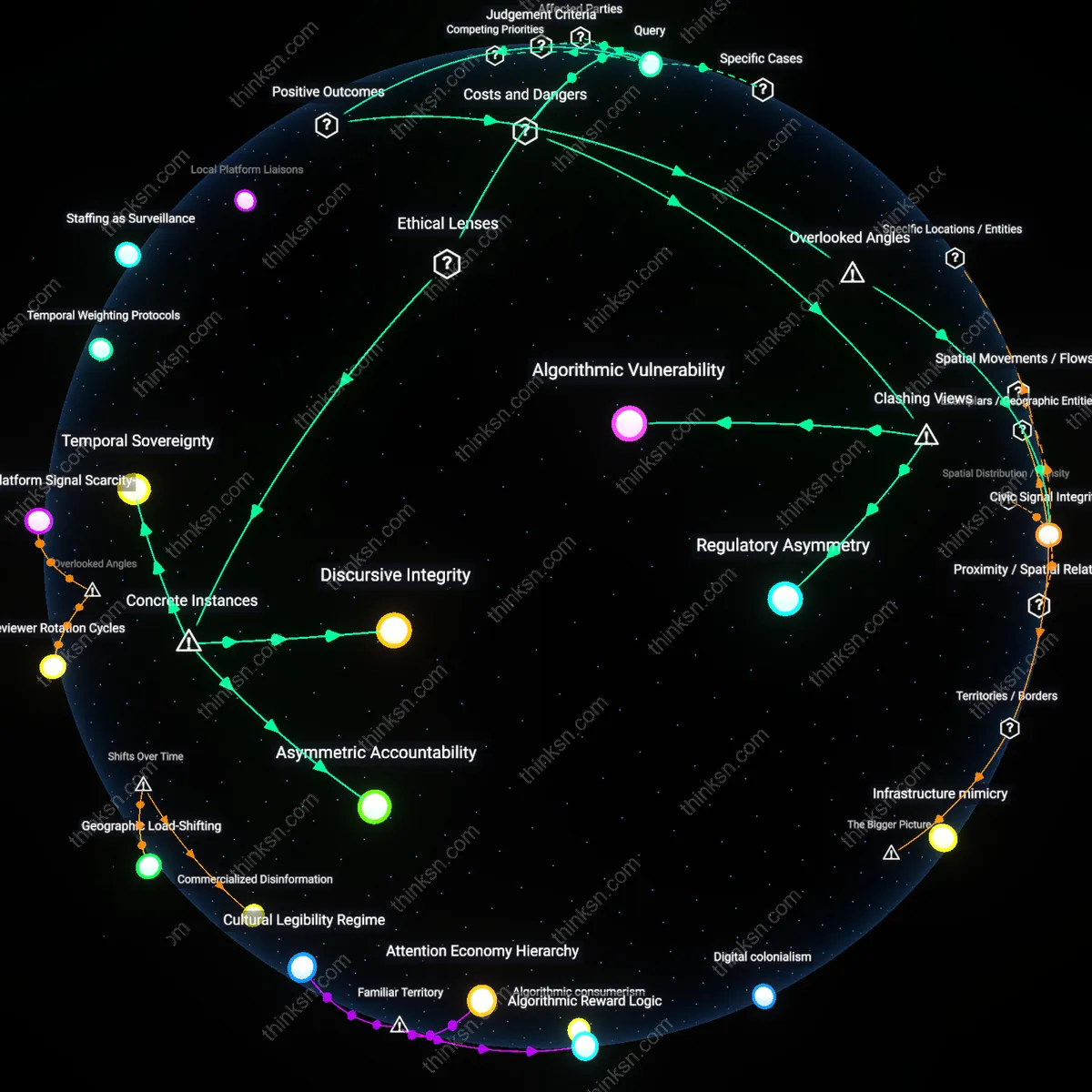

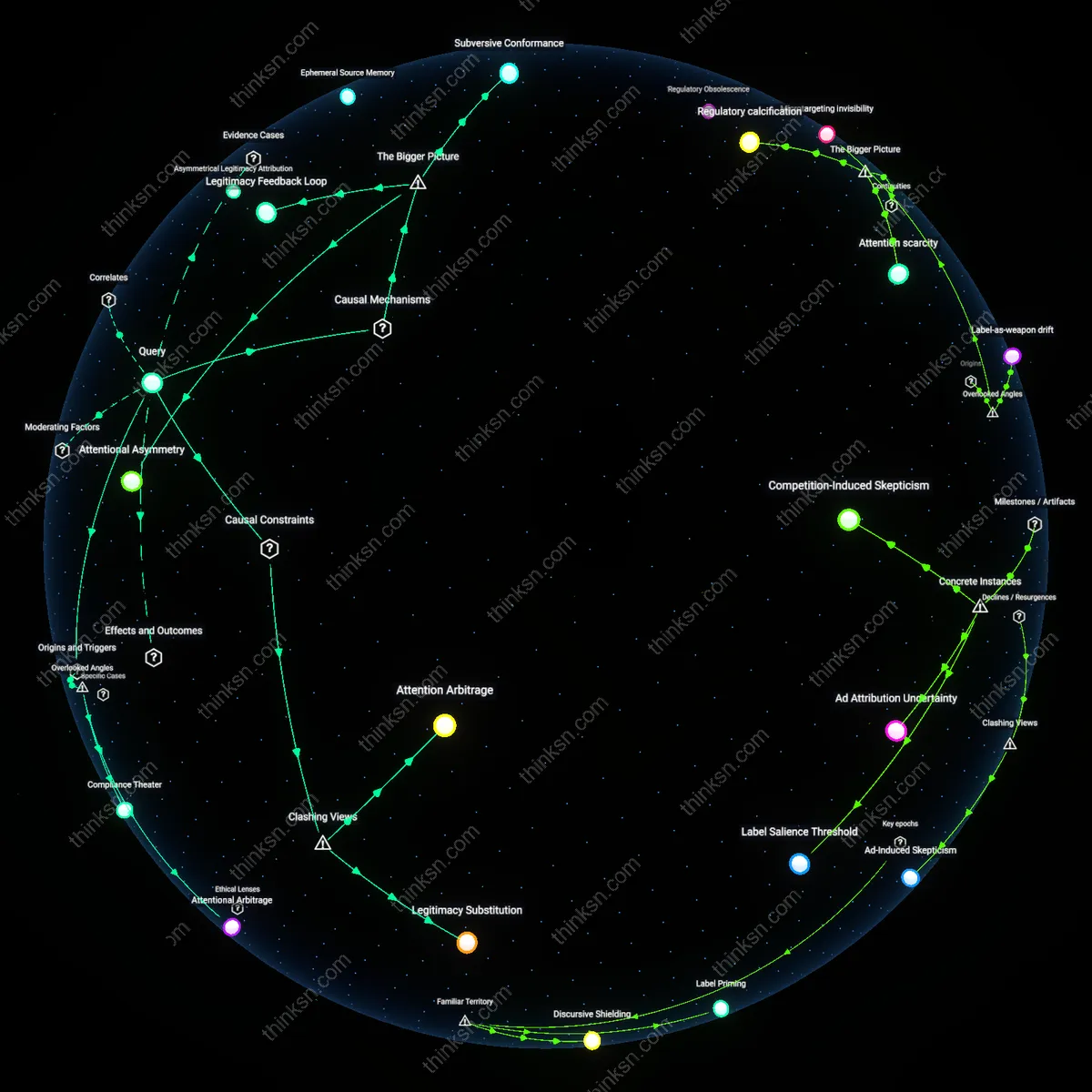

Analysis reveals 6 key thematic connections.

Key Findings

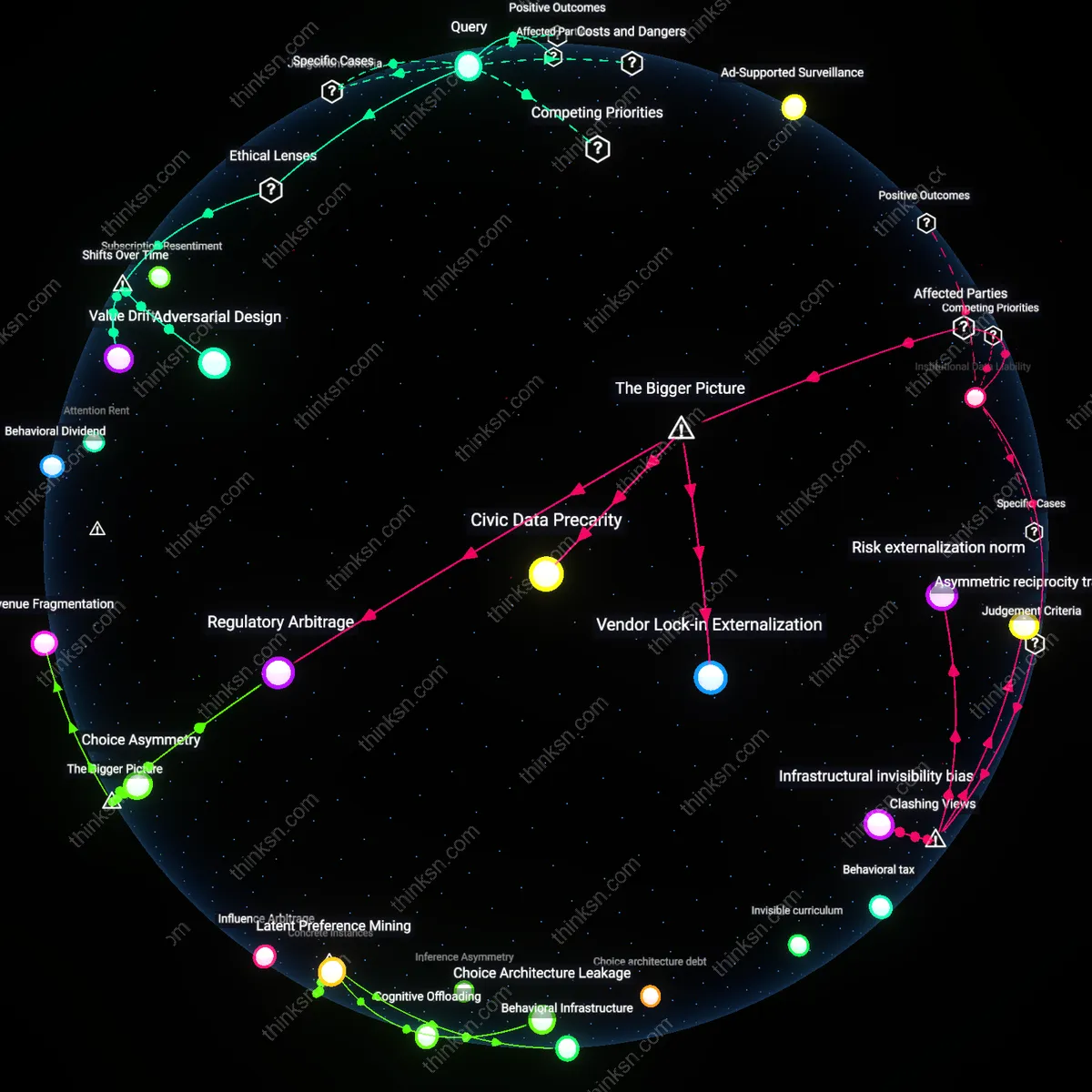

Ad-Supported Surveillance

Free email services carry added privacy risk because advertising funds them, and advertising depends on continuous collection of user data, which widens exposure to breaches and misuse. Gmail, for example, scanned message content to tailor ads until Google announced in 2017 that it would stop doing so for ad personalization, and ad-funded providers generally still draw on account activity for profiling. The non-obvious insight is that the safety risk isn’t a flaw but a core operational feature: users are not customers but data sources, and their data is the product being monetized.

Consumer Privacy Trade-off

Most users regard free email as safe enough because they believe they have knowingly accepted data collection in exchange for convenience and cost savings. A subscription fee is a visible cost while surveillance is invisible, so sharing data feels like the cheaper and safer choice. The underappreciated aspect is that this trade-off is socially normalized, making profiling seem like a fair price rather than an asymmetric exploitation.

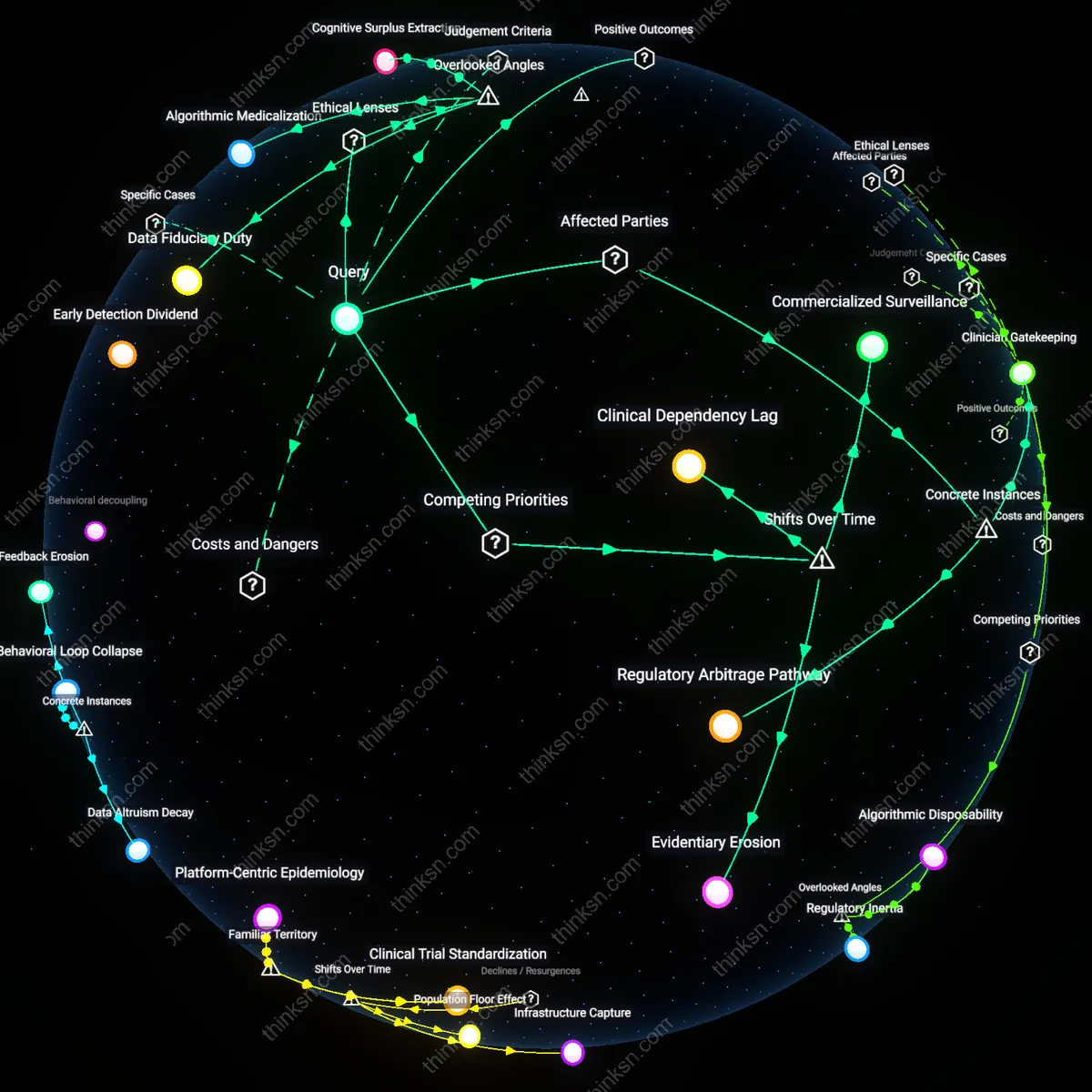

Institutional Data Liability

Employers, educators, and government agencies rely on free email for mass communication, legitimizing its perceived safety despite risks, because operational efficiency outweighs privacy concerns. These institutions treat platforms like Gmail or Outlook as de facto utilities, embedding them in official workflows, which reinforces public trust. The overlooked reality is that organizational adoption, not individual consent, sustains the illusion of safety, shifting liability onto users when data misuse occurs.

Consent Obsolescence

Free email services became ethically problematic after the mid-2000s shift from data minimization to mass behavioral capture, a transition justified under liberal contractualism where user consent replaced privacy as the moral baseline; this mechanism allowed providers like Gmail to reframe inbox scanning as a permissible term of service, embedding surveillance within the architecture of 'free' access. The non-obvious consequence is that consent, once a safeguard, now functions as a legitimizing ritual for perpetual profiling, rendering it obsolete as a protective norm.

Adversarial Design

The case for the safety of free email eroded after 2013, when the Snowden revelations exposed how government-access authorities such as FISA Section 702 turned commercial data stores into de facto surveillance infrastructure; advertising profiles became potential intelligence assets, aligning corporate and state interests in a dual-use data economy. The underappreciated shift is that email 'safety' ceased being a user-centric metric and became a systemic feature of national security architecture, where design choices privilege institutional access over individual protection.

Value Drift

Perceived safety in free email emerged as a managed illusion as attention metrics were commodified around 2010 and platforms moved from treating users as customers to treating them as data sources, a shift reinforced by shareholder-driven growth models that prioritized ad revenue over fiduciary care; this realignment recast encryption and spam filters less as privacy tools than as trust-building measures that sustain long-term data extraction. The overlooked dynamic is that the ethical value of safety has drifted from harm prevention to user retention, making it functionally orthogonal to genuine security.

Deeper Analysis

Why do so many people see giving up their data as a fair deal, even when they don’t fully know what it’s used for?

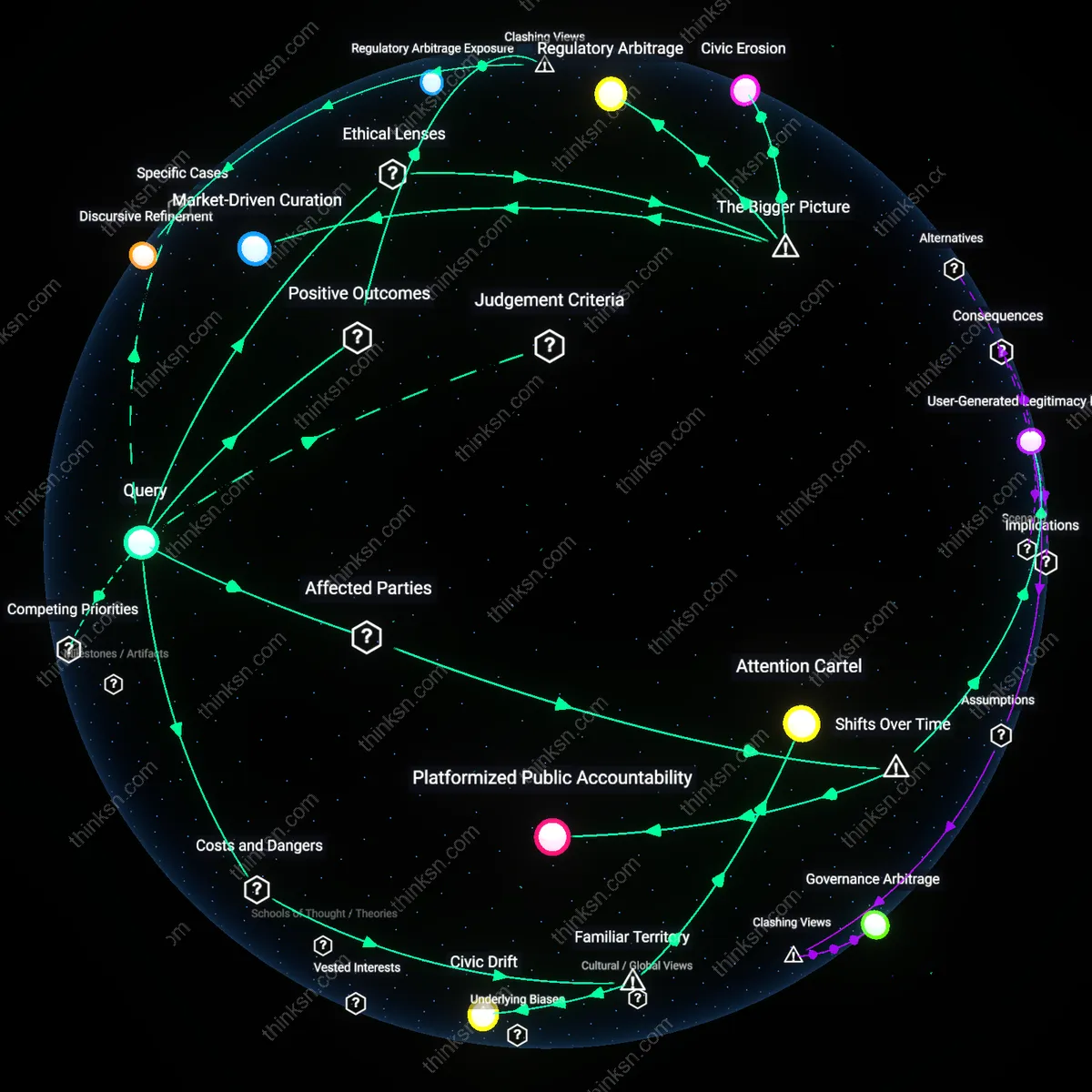

Digital Social Contract

People accept data collection because they perceive a tacit exchange of personal information for convenience and access to services as mutually beneficial. This belief is sustained by tech platforms that position themselves as neutral facilitators of modern life—offering free communication, navigation, and entertainment in return for behavioral data—while obscuring downstream uses like microtargeting and algorithmic manipulation. The non-obvious truth is that this perceived fairness mirrors classical liberal ideals of voluntary, rational exchange, even though asymmetrical knowledge and design coercion undermine informed consent.

Surveillance Normalization

The routine integration of data-harvesting tools into everyday activities—from social media check-ins to grocery store loyalty cards—makes surrendering data feel like a natural condition of participation in contemporary society. People don’t resist because the mechanisms are embedded in familiar rituals, such as holiday shopping or school updates, which evoke trust and routine rather than suspicion. The underappreciated dynamic is that normalization operates not through ideology but through repetition and ambient design, making resistance seem disruptive rather than principled.

Value Extractivism

Individuals relinquish data because dominant economic structures frame personal information as a resource to be mined and monetized by powerful institutions, much like raw materials in industrial capitalism. This logic, rooted in capitalist accumulation models, positions users as passive suppliers in a data supply chain where tech firms, advertisers, and data brokers extract disproportionate value. What remains hidden is that this system depends on rendering labor—cognitive, emotional, behavioral—as invisible input, turning everyday digital actions into a new form of surplus production.

Infrastructure Asymmetry

Users in India ceded biometric data to the Aadhaar system because enrollment was tied to accessing essential services like food rations and bank accounts, creating a coercive bargain masked as voluntary participation; the mechanism—integration of digital identity into welfare delivery—obscured data risks by making surrender a precondition for survival, revealing that apparent consent often emerges from structural dependency rather than informed choice.

Value Deferral Regime

Facebook users accepted indefinite data collection under the assumption that personalized ads would eventually yield tangible benefits, as promised during the rollout of early News Feed algorithms; in practice, the platform deferred real utility while accumulating behavioral surplus, demonstrating how the perception of future value sustains data extraction even in the absence of present accountability or transparency.

Normalization Through Crisis

During the 2020 pandemic, people in South Korea accepted widespread location tracking via credit card records and CCTV footage under the national contact-tracing program run by public health authorities, legitimizing mass surveillance as a temporary health measure; this emergency framing embedded data surrender into public expectations of safety, revealing how crisis governance can reset societal baselines for privacy without explicit long-term consent.

When institutions treat free email as essential tools, how do they decide who ends up bearing the risk if personal data gets exposed?

Regulatory Arbitrage

Public institutions assign liability to users when adopting free email services because data protection responsibilities are diffused across jurisdictions where enforcement is weakest, enabling vendors like Google or Microsoft to operate under less restrictive legal frameworks. This shift occurs through deliberate procurement choices that prioritize cost and scalability over data sovereignty, leveraging international discrepancies in privacy laws—such as the gap between the EU’s GDPR and the U.S.’s sectoral approach—so that institutional risk is minimized at the expense of individual users’ data exposure. The non-obvious consequence is that public agencies, despite their duty to protect citizens, become complicit in offloading accountability through technical partnerships whose legal opacity shields the institution while rendering the end-user as the de facto data custodian.

Vendor Lock-in Externalization

Private-sector service providers embed long-term dependency into institutional workflows, forcing administrators to accept pre-defined data stewardship terms that default to individual users bearing breach risks once data leaves the organizational perimeter. This happens because once institutions integrate tools like Gmail into core operations—HR, academic communications, student records—the cost of migration or encryption overhauls exceeds projected breach liabilities, allowing companies to shift data governance burdens downstream through standardized, non-negotiable terms of service. The overlooked mechanism is how operational inertia created by technical integration actively dissolves institutional bargaining power, making user liability not a policy choice but an emergent outcome of infrastructural entrenchment.

Civic Data Precarity

Marginalized populations experience disproportionate exposure when public services rely on commercial email because these groups, often digitally dependent on free platforms, lack alternative means to access essential communications from schools, healthcare, or government agencies. This asymmetry is systemically reinforced when institutions adopt such tools without providing parallel secure channels, effectively mandating personal data submission to third-party ecosystems as a condition for service eligibility—yet without extending the same data protections afforded to employees or higher-status users. The underrecognized dynamic is that institutional convenience normalizes a two-tiered data citizenship, where vulnerability becomes structurally encoded through the very tools meant to ensure universal access.

Risk Externalization Norm

Institutions assign data exposure risk to users by designating free email as a personal utility rather than a public infrastructure, thereby activating private liability frameworks. This shift occurs through contractual terms that absolve providers of fiduciary responsibility, positioning data breaches as user-managed hazards rather than systemic failures—despite institutional dependence on these platforms for official communication. The non-obvious reality is that universities, governments, and healthcare systems offload cybersecurity burdens onto individuals by treating a de facto public service as if it were a consumer product. What appears to be individual responsibility is actually an engineered transfer of institutional accountability through legal and technical defaults.

Asymmetric Reciprocity Trap

Free email users bear exposure risk because institutional adoption creates a one-sided reciprocity where access to services is conditional on data surrender, while institutions retain control over data flow and retention policies. Governments and employers mandate email use for service delivery but do not reciprocally commit to protecting the resulting data trails, exploiting the asymmetry between operational necessity and liability avoidance. This undermines autonomy not through overt coercion but by embedding dependency in the architecture of participation—revealing that convenience is weaponized to erode consent and shift risk onto the least empowered parties.

Infrastructural Invisibility Bias

Risk allocation favors email providers and institutions because free email is perceived as a neutral, background tool rather than an active data governance system, obscuring its role in shaping liability outcomes. Municipalities and universities treat Gmail or Outlook as passive conduits, not data actors, despite their central role in data collection, retention, and exposure—enabling institutions to ignore shared stewardship obligations. This cognitive blind spot perpetuates a myth of technical neutrality, allowing powerful organizations to evade co-responsibility by relegating systemic risks to the category of 'user error' or 'third-party failure.'

What would happen if email services gave users a real choice between seeing ads or paying a small fee, breaking the 'free' model built on constant surveillance?

Attention Rent

Email providers would shift from monetizing user data to charging small fees, restructuring revenue around direct payment rather than ad-driven surveillance. Subscription-funded services such as ProtonMail, which rely on paid tiers instead of advertising, already show the model, and GDPR has changed the cost calculus of data processing in the EU. This transition reveals a market realignment in which providers charge for access rather than extract behavioral insights. The non-obvious consequence is that privacy becomes an explicitly priced commodity rather than an unstated trade, exposing the fragility of 'free' services once regulatory pressure disrupts surveillance economies.

Behavioral Dividend

If users could opt out of ads by paying, large-scale platforms like Gmail would lose the economic rationale for hoarding behavioral data, marking a reversal from the 2004–2012 era when metadata harvesting became central to monetization. The mechanism—user exit from ad-supported models—undermines the compound value derived from years of accumulated activity logs, redefining profitability not by scale of tracking but by user retention of control. This exposes how surveillance was not inevitable but a function of pricing architecture, now undone by minor cost shifts.

Subscription Resentment

Introducing payment options would trigger user resistance not because of cost but because it reframes surveillance as a hidden subsidy, revealing resentment built during the 2010s as ad-targeting precision increased without transparency. The shift mirrors earlier transitions in media—like cable TV’s move from bundled to à la carte models—where pricing visibility resurrected debates over fairness. The overlooked outcome is that user anger isn’t about price but about belated recognition of prior exploitation, which payment options inadvertently expose.

Revenue Fragmentation

Email services that offer users a choice between ads and fees would fragment their revenue base, forcing platforms like Gmail or Yahoo to balance two distinct funding models with divergent scaling logics—advertising thrives on mass surveillance and engagement maximization, while subscription revenue depends on user trust and perceived value. This split would create internal misalignment between product teams optimizing for data harvesting and those designing minimalist, privacy-respecting user experiences, weakening the unified incentive structure that has long enabled free services to dominate through network effects and data accumulation. The non-obvious consequence is that such fragmentation would erode the economies of scale that currently allow surveillance-based platforms to undercut paid alternatives, revealing how financial monoculture underpins digital dominance.

Choice Asymmetry

Even with a nominal choice between ads and payment, most users would default to surveillance because behavioral economics and systemic inequality make cognitive fatigue and cost sensitivity stronger forces than privacy concerns—especially in low-income markets where a small fee is still prohibitive. Platforms would retain excessive power to nudge by preselecting ad-supported tiers, using dark patterns to obscure opt-out costs, and commodifying the data of those least able to pay, thereby preserving the extractive model under a facade of consent. This asymmetry exposes how apparent user agency often serves as a legitimizing ritual rather than a redistributive mechanism in digital capitalism.

Regulatory Arbitrage

If major email providers introduced paid no-ads tiers, they would trigger a race to the regulatory bottom, as companies exploit jurisdictional differences in privacy enforcement—firms based in weak-regulation zones could offer cheaper 'freemium' surveillance models while undermining compliant peers in strict regimes like the EU, where GDPR reshapes the cost calculus of data processing. The result would be a stratified global information ecosystem where privacy becomes a geographically contingent product tier, not a universal standard, revealing how legal fragmentation enables platforms to transform ethical choices into competitive advantages.

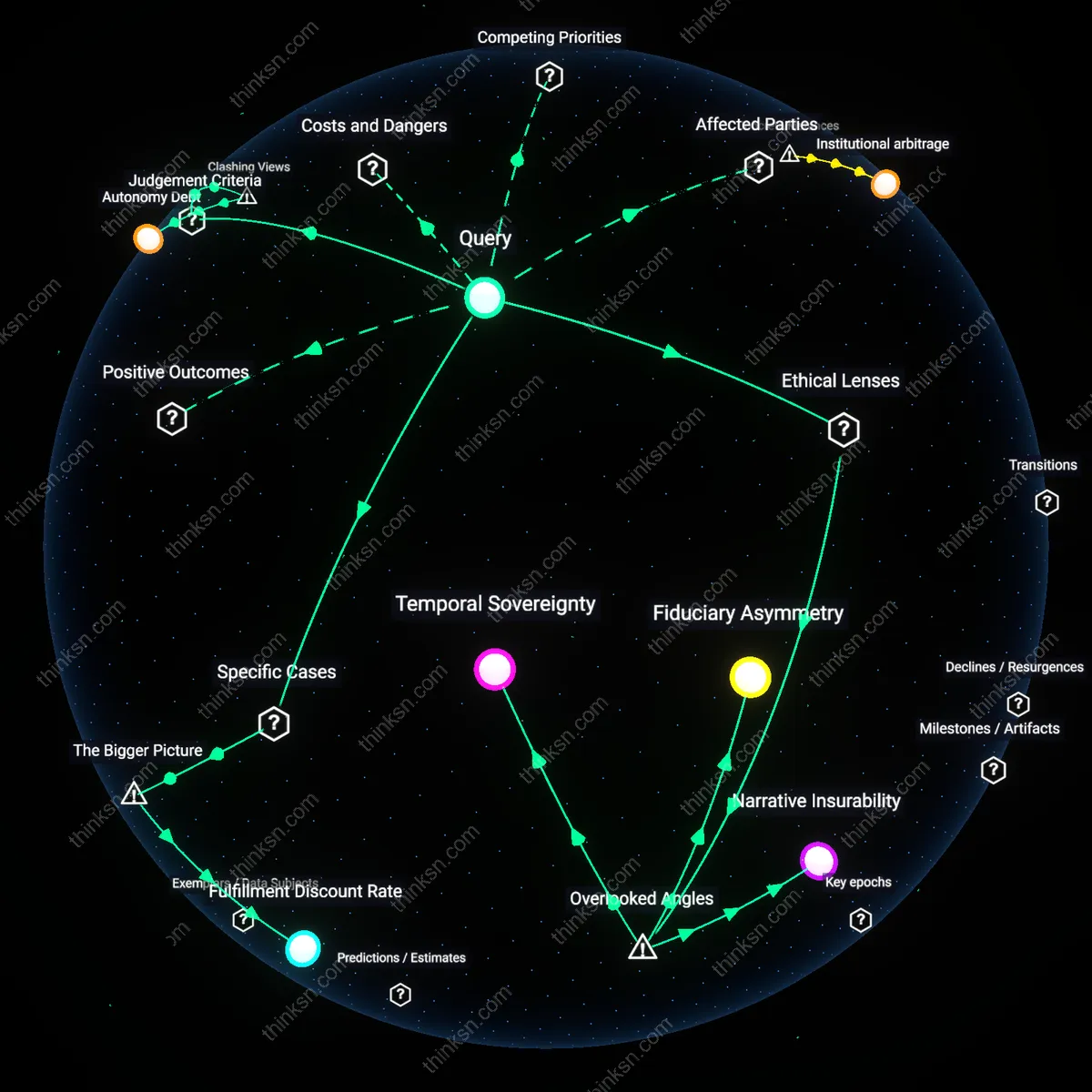

When people think their data is just paying for free email, how often does it end up shaping choices they didn't know were being influenced?

Infrastructural Nudging

Data harvested from free email services systematically shapes user behavior by reconfiguring the underlying infrastructure of digital choice environments, such as default settings in calendar apps or priority in inbox sorting, which nudge users toward specific actions like meeting times or promoted events without overt advertisement. This occurs not through targeted ads but through embedded algorithmic curation in tools that structure daily decision-making, a mechanism typically invisible because it operates beneath the level of content, within the architecture of software functionality. The non-obvious insight is that influence is decoupled from messaging and instead embedded in the operational logic of platforms, making it a form of behavioral shaping that eludes detection because it mimics neutral utility.

Third-Party Inference Latency

Ad networks and data brokers can use email metadata not to target the email user directly but to infer behavioral patterns for unrelated third parties, such as insurance risk models or employment screening services, where the original data subject has no discernible connection to the final decision context. Because these inferences propagate through delayed and indirect pathways, the causal link between checking an inbox and, say, a loan denial is obscured by time and institutional separation, rendering the influence undetectable to individuals and regulators alike. This latency in inference chains is overlooked because most privacy critiques focus on immediate feedback loops like ad targeting, not the deferred, transitive uses of data that shape life outcomes off-platform.

Ambient Norm Calibration

Aggregate email usage data is used by platforms to model 'normal' communication patterns, which are then fed back into organizational tools like smart replies, sentiment analysis in collaborative documents, or productivity benchmarks in corporate email systems, thereby subtly training users to align their communication styles with statistically dominant behaviors. This form of influence operates not on individual choices but on the social calibration of norms, where deviation from data-derived baselines is discouraged through friction or invisibility in interface design. The overlooked mechanism is that personal data from millions of users is used to define and enforce behavioral normality in professional and social interactions, making conformity a quiet byproduct of personalization infrastructure.

Behavioral Infrastructure

Gmail’s scanning of email content to select ads, which Google said in 2017 it would stop doing for ad personalization, turned private correspondence into a predictive input for advertising: message context, such as a discussion of travel plans, could trigger related advertisements without the user being aware of the conversion. This mechanism operated through automated content analysis built into the mail service itself, making the extraction of intent a routine feature of service infrastructure rather than an optional tracking add-on. The non-obvious insight is that the service itself, not third-party trackers, became a silent observer and shaper of the user’s environment, embedding influence into the core functionality people trust as neutral.

Choice Architecture Leakage

In the 2016 U.S. presidential election, political campaigns used Facebook’s ad-targeting tools, along with profile data that Cambridge Analytica obtained through a third-party quiz app, to micro-target voters in swing states such as Michigan, Wisconsin, and Pennsylvania. Psychological profiles built from mundane online behavior, such as 'likes' on entertainment pages, were marketed as a way to sway voters through emotionally resonant messaging, although how effective this was remains disputed. This reveals how data collected for commercial convenience leaks into civic decision-making, covertly shifting the terrain of democratic choice through technically sanctioned but socially unexamined feedback loops.

Latent Preference Mining

Amazon’s store of purchase and browsing data has reportedly shaped product development as well as recommendations: its private-label line Amazon Basics, launched in 2009, moved into high-demand categories such as headphones, phone chargers, and laptop adapters. A platform in this position can infer latent preferences not from explicit feedback but from behavioral residues (abandoned carts, dwell times, and sequential clicks) that reveal unmet needs before consumers articulate them. This demonstrates how passive data becomes an active design force, shaping not just what people buy but what gets made, and pre-empting consumer agency through invisible market sculpting.

Behavioral Debt

Users’ data does not merely pay for free email—it accumulates behavioral debt by encoding predictive models that silently pre-structure future choices in interfaces like search autocomplete, ad placement, and content recommendation. This occurs through continuous A/B testing infrastructures at firms like Google and Meta, where user engagement is optimized not toward user intent but system retention, making individuals unknowingly conform to algorithmic scripts that predate their decisions. The non-obvious insight is that influence isn’t a one-time transaction but compounds over time, like interest, shaping cognition through repeated exposure to tailored digital environments.

Influence Arbitrage

A major but less visible use of personal data is not ad targeting but influence arbitrage across platforms and over time: behavioral surplus collected during routine email use can be repurposed to sway high-stakes decisions, such as political affiliation or health choices, on unrelated later occasions. The example of Cambridge Analytica, now defunct, and the wider data-broker industry, which includes firms such as Acxiom, shows how weak regulatory boundaries let predictive scores move between commercial and civic domains, so that trust in utility services can be leveraged for covert social engineering. This reframes data exploitation not as surveillance for profit but as a cross-domain manipulation strategy masked by the benign appearance of service funding.

Cognitive Offloading

People’s reliance on personalized digital tools leads to cognitive offloading, where the brain delegates decision-making routines to systems that covertly shape those very routines through incremental nudges embedded in interface design. For example, Gmail’s Smart Reply reshapes linguistic habits by offering probabilistic responses, subtly constraining emotional range and communicative intent under the guise of convenience. The underappreciated shift is not manipulation through content but the erosion of autonomous cognition—users aren’t influenced in their choices so much as acclimated to a depleted field of options they no longer recognize as diminished.

Inference Asymmetry

Mandating third-party audits of recommendation logic in email and social platforms would help correct manipulation by exposing inferred user profiles that individuals cannot see or contest. The remedy became necessary only after the mid-2010s, when machine learning systems moved beyond click tracking to probabilistic inference of latent traits like political leanings or mental health risks. Unlike earlier models that relied on explicit behavior, modern systems infer preferences users never expressed, creating an epistemic imbalance where platforms know more about people than they know about themselves; this hidden leap in inference capacity, not merely data scale, marks the pivotal temporal shift.

Consent Obsolescence

Replacing one-time consent banners with adaptive, real-time data-use notifications would interrupt the feedback loop between data harvesting and decision shaping, an intervention made necessary by the shift, beginning in the early 2000s, from desktop email clients like Outlook to cloud-based ecosystems like Gmail, where message content became continuously scannable for behavioral modeling. This transition turned private correspondence into a potential live training stream for classifiers, rendering initial consent meaningless over time as data reuse evolved beyond its original scope; the underappreciated insight is that consent is not just poorly informed but structurally outdated in systems designed for perpetual reinterpretation of old data.

Behavioral Tax

People’s data acts as a hidden fee that distorts future decisions, because platforms use historical engagement patterns to tune their interfaces for engagement. Providers such as Google optimize email features like smart replies and priority inboxes using models trained on large volumes of communication data, making minor interface nudges that compound across billions of daily interactions. Most users perceive email as a neutral utility and fail to see how their past language and attention habits are repurposed to increase platform engagement, mistaking convenience for autonomy. The distortion lies not in single overt manipulations but in the cumulative shaping of default behaviors under familiar, trusted interfaces.

Invisible Curriculum

Free email conditions user judgment over time by embedding platform-driven norms about relevance, urgency, and trust through automated sorting and labeling. Gmail’s spam filters, promotional tabs, and ‘nudging’ reminders are built on predictive models that silently classify what users should notice or ignore, shaping long-term attention habits by defining what constitutes valuable communication. People assume these systems protect them from clutter, but the criteria for inclusion are tuned to engagement metrics, not user-defined priorities. The underappreciated effect is that users unknowingly learn to delegate cognitive sorting to systems whose priorities diverge from their own, normalizing algorithmic authority in personal decision spaces.

Choice Architecture Debt

Every data-driven convenience in free email accumulates long-term influence by hardcoding past behavior into interface defaults, reducing the visibility of alternatives. When Google suggests contacts, predicts responses, or auto-snoozes messages, it amplifies previously observed patterns while diminishing exposure to unexpected or infrequent actions, effectively narrowing the range of perceived choices over time. Users don’t notice these constraints because they align with immediate ease and familiarity, but the cumulative effect is a shrinking zone of spontaneous decision-making. The non-obvious cost is that the very tools meant to save time end up reinforcing behavioral inertia under the guise of personalization.