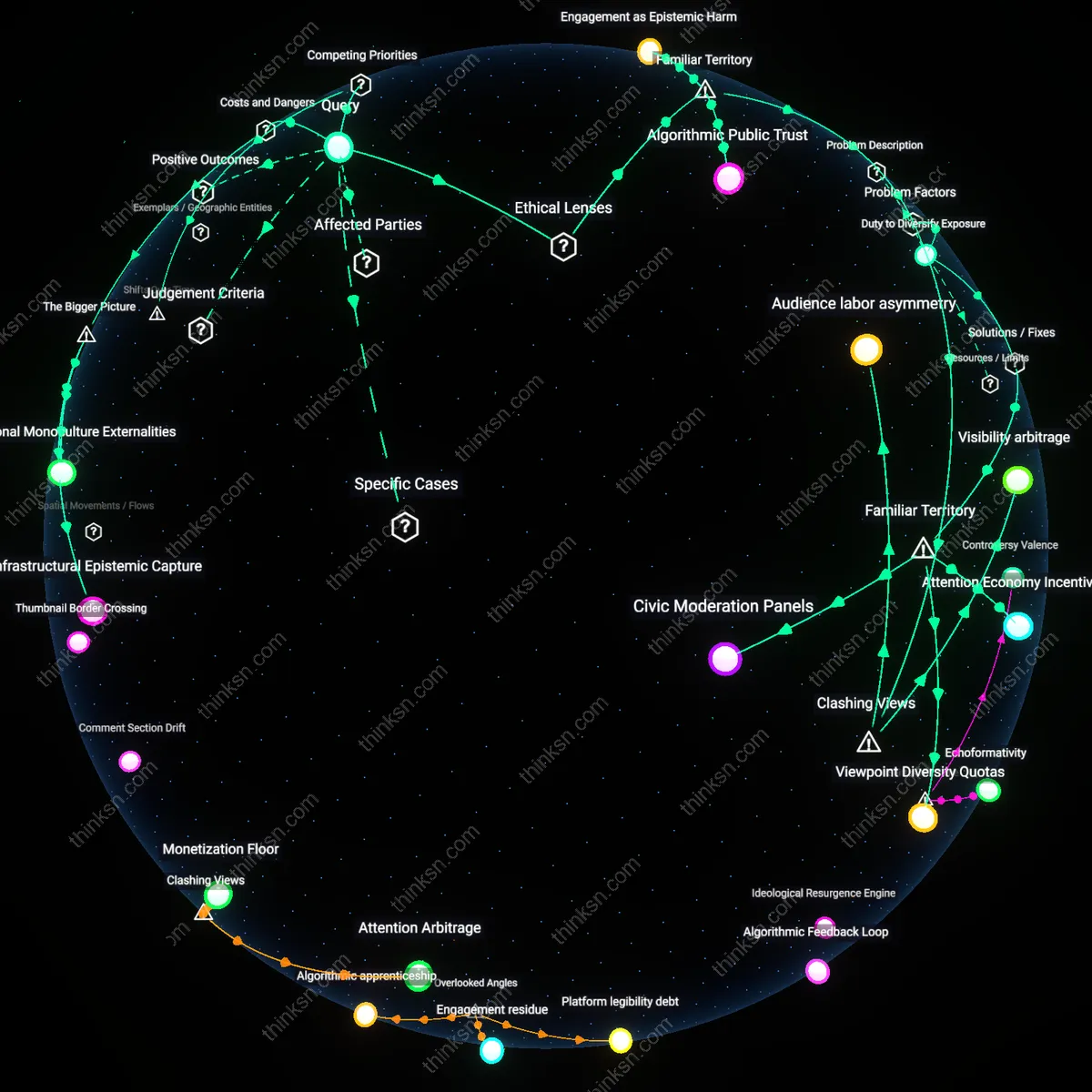

Do YouTube Algorithms Keep You Engaged or Trapped in Bubbles?

Analysis reveals 7 key thematic connections.

Key Findings

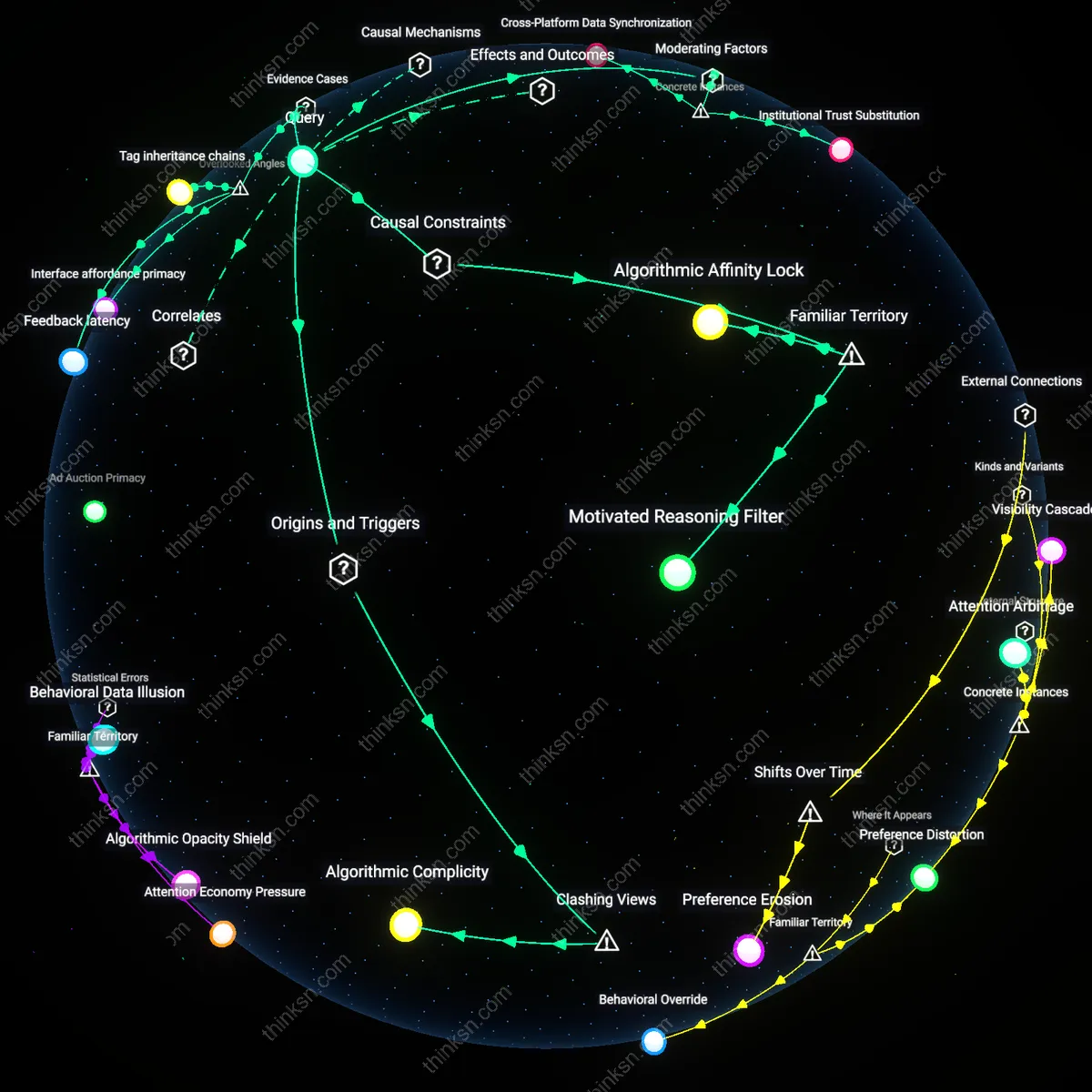

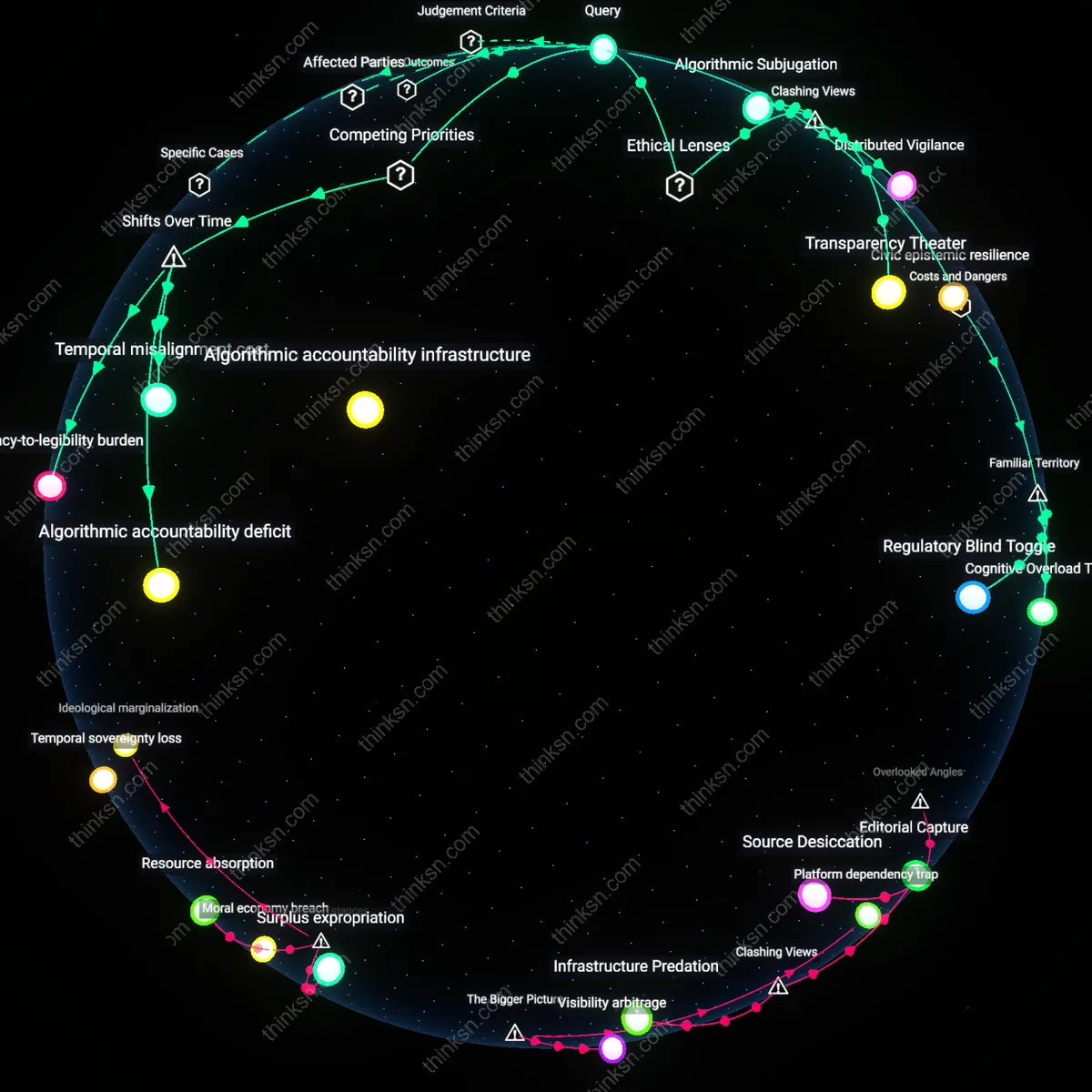

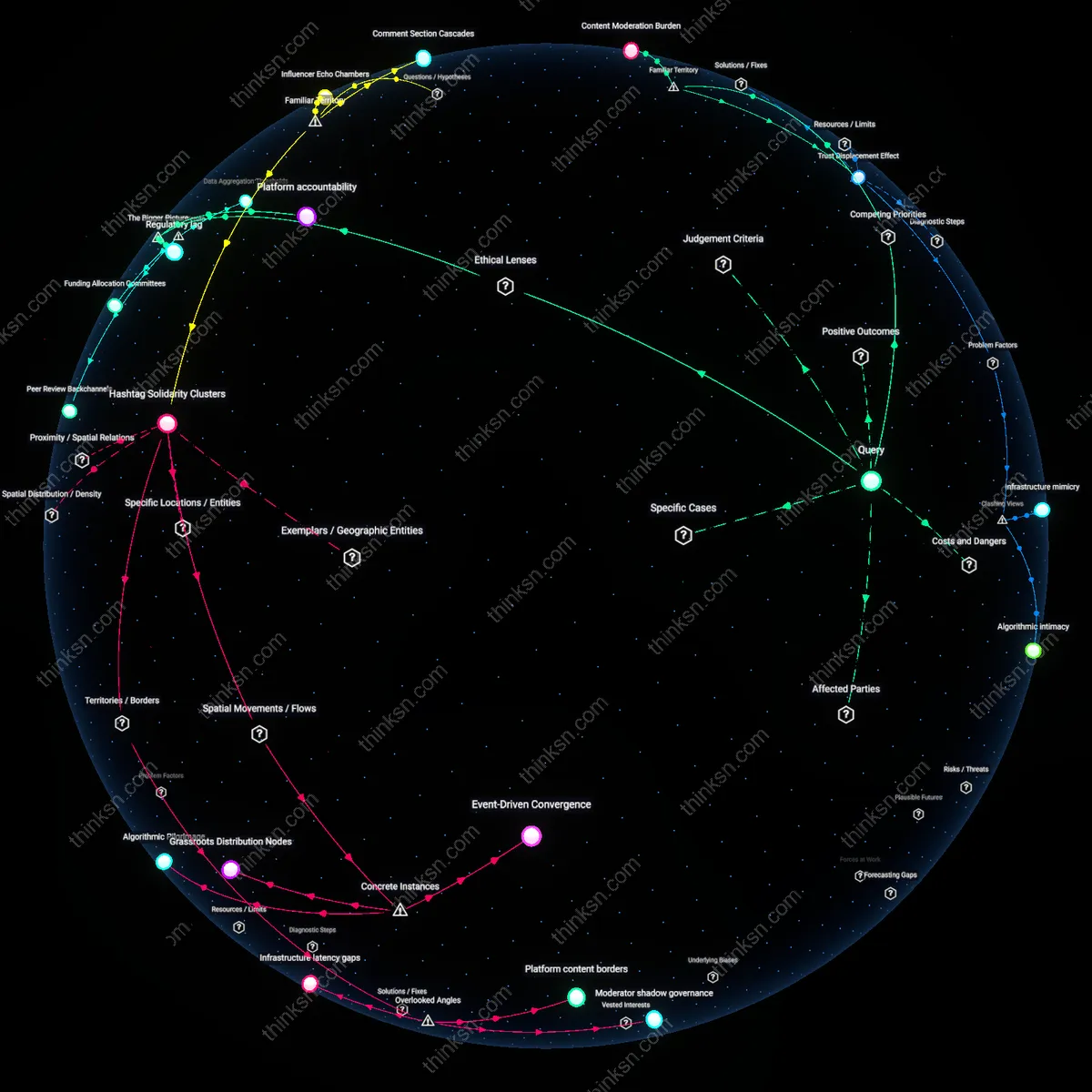

Algorithmic Epistemic Drift

Prioritizing engagement-driven personalization on YouTube systematically skews users' information diets toward ideas close to those they already hold, weakening exposure to the cognitive dissonance that healthy belief formation requires. This occurs because recommendation algorithms optimize for watch time by reinforcing existing attentional patterns, narrowing the range of viewpoints a user encounters under the guise of relevance, particularly in politically and socially salient domains. The mechanism is not mere bias but a feedback loop between user behavior and platform incentives: content that deviates even marginally from a user’s inferred worldview is deprioritized unless it aligns with high-engagement tropes. What is underappreciated is that this drift does not merely reflect user preference but actively reshapes it over time, producing ideational convergence even in initially diverse epistemic environments.
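The feedback loop described above can be made concrete with a toy simulation. This is a minimal sketch under stated assumptions, not a model of YouTube's actual system: the function names, the `sharpen` exponent (how strongly the recommender favors already-preferred topics), and the `learning_rate` (how much exposure shifts preference) are all hypothetical parameters chosen for illustration.

```python
import math


def shannon_entropy(weights):
    """Entropy in bits of a weight vector; higher means a more varied diet."""
    total = sum(weights)
    probs = [w / total for w in weights if w > 0]
    return -sum(p * math.log2(p) for p in probs)


def simulate_drift(interest, rounds=50, sharpen=2.0, learning_rate=0.5):
    """Toy engagement loop (hypothetical parameters, illustration only).

    Each round the recommender exposes topics in proportion to
    interest ** sharpen, and the user's interest then moves part of
    the way toward that exposure.
    """
    interest = list(interest)
    history = [shannon_entropy(interest)]
    for _ in range(rounds):
        # Recommender step: over-weight whatever the user already prefers.
        scores = [w ** sharpen for w in interest]
        score_total = sum(scores)
        exposure = [s / score_total for s in scores]
        # User step: preference is reshaped by what was shown.
        norm = sum(interest)
        interest = [
            (1 - learning_rate) * (w / norm) + learning_rate * e
            for w, e in zip(interest, exposure)
        ]
        history.append(shannon_entropy(interest))
    return interest, history


if __name__ == "__main__":
    # Four topics with only a mild initial preference for the first one.
    final, history = simulate_drift([0.3, 0.25, 0.25, 0.2])
    print("final interest shares:", [round(x, 3) for x in final])
    print("entropy start/end (bits):", round(history[0], 3), round(history[-1], 3))
```

In this sketch a small initial preference is enough for one topic to dominate, and the entropy of the user's interest falls round after round. That is the sense in which the loop reshapes preference rather than merely reflecting it. Real recommenders are far more complex, so the simulation illustrates the argument's logic and is not evidence for it.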

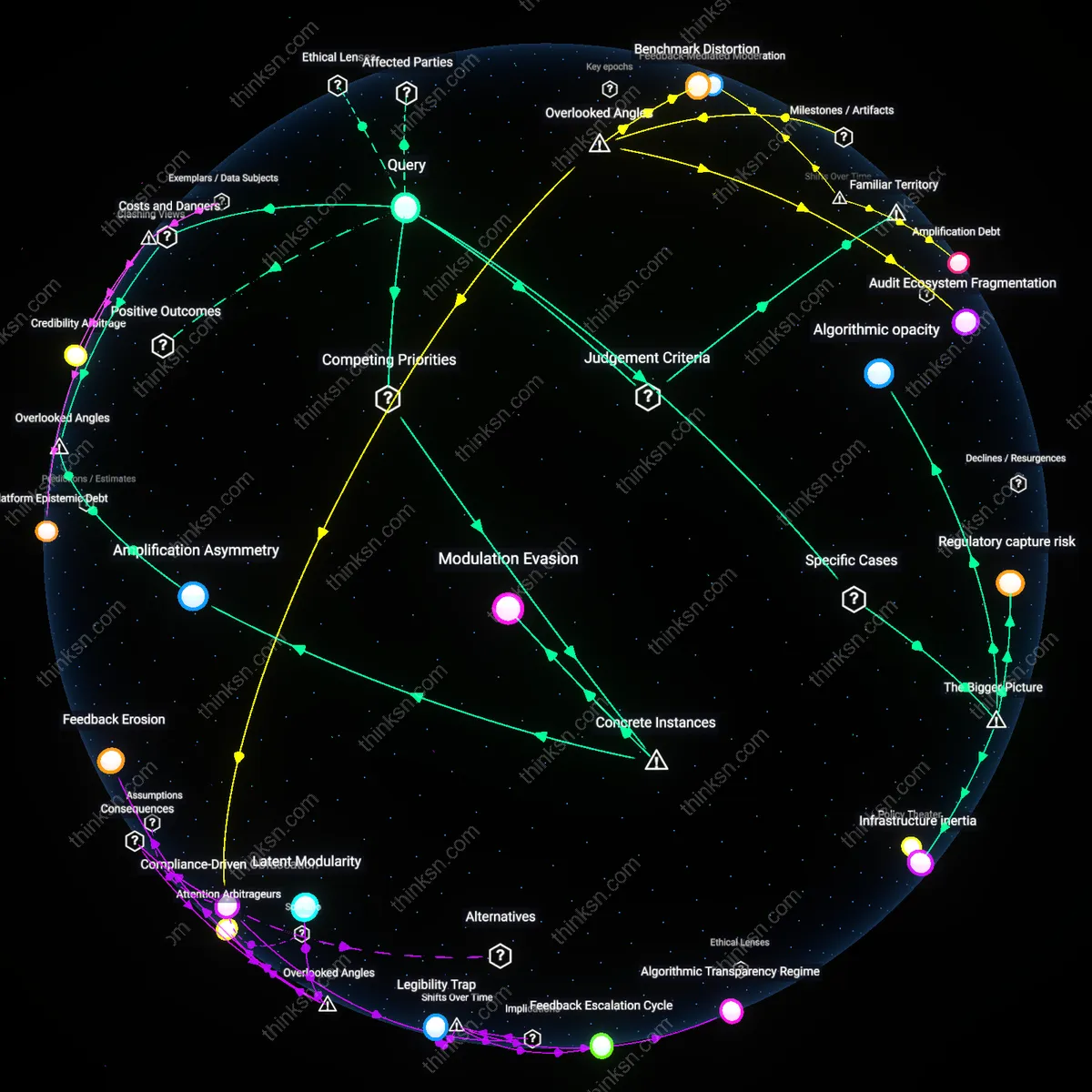

Infrastructural Epistemic Capture

The architecture of YouTube’s recommendation system enables third-party actors—including state-backed disinformation networks and algorithmic grifters—to exploit personalization for scalable epistemic capture. By gaming engagement signals like click-through rate and session duration, malign operators can ensure their content is amplified within self-reinforcing feedback circuits that appear organic to both users and platform moderators. This capture is systemic because content need not be persuasive on merit; it only needs to trigger attentional reflexes that the algorithm rewards, thereby colonizing the epistemic space meant for pluralistic discourse. The overlooked reality is that personalization infrastructure, designed to reduce cognitive load, inadvertently becomes a vector for soft coercion, not through deception alone but by altering the conditions under which beliefs are formed.

Attentional Monoculture Externalities

YouTube’s personalization model generates negative externalities in the form of homogenized attentional economies that depress the visibility of non-viral, high-epistemic-value content. Because engagement metrics favor emotionally reactive or identity-confirming content, creators producing nuanced, context-dependent knowledge are structurally disincentivized, leading to a market failure in epistemic diversity. This dynamic is amplified by the platform’s reliance on ad-based revenue, which binds content viability to performative engagement rather than informational robustness. The non-obvious consequence is that even users seeking diverse perspectives encounter a convergent attentional landscape—one shaped not by individual choice but by the invisible hand of monetization-driven algorithmic selection, producing a monoculture masked as personalization.

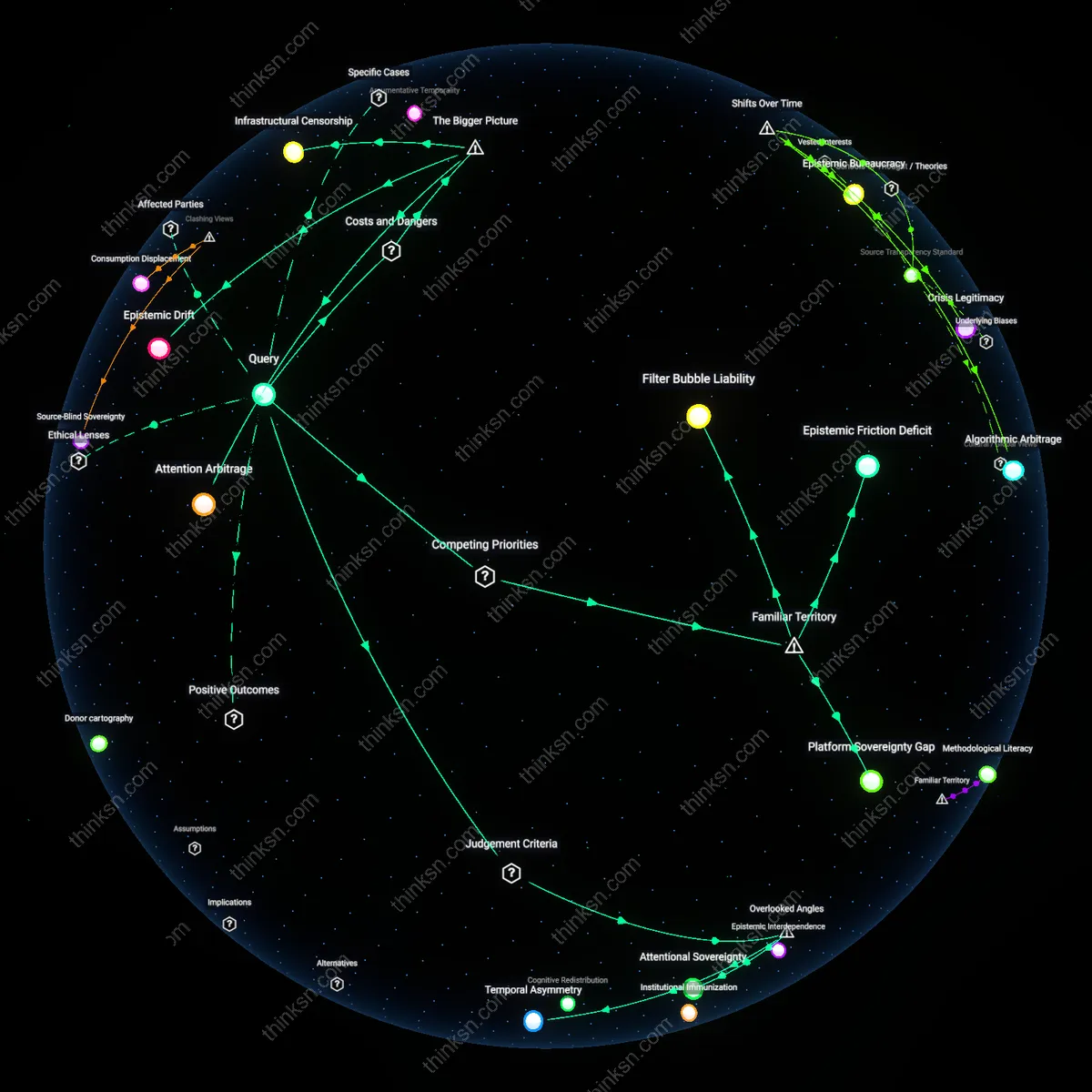

Curation Threshold

The transition from human-moderated editorial curation on early YouTube (e.g., the Featured Videos tab, before 2010) to algorithmic selection after 2012 marked a decisive shift: personalization displaced shared cultural entry points and redistributed epistemic authority from editorial teams to behavioral tracking systems. This change reframed diversity not as a public responsibility but as an emergent property of individual choice, even though user decisions are themselves shaped by algorithmic nudges. The non-obvious consequence is that filter bubbles are not merely cognitive side effects but structural consequences of removing the centralized curators who once bore institutional responsibility for balancing exposure. The erosion of explicit curation thresholds thus enabled personalization to expand at the expense of epistemic diversity.

Duty to Diversify Exposure

Platforms must actively counteract filter bubbles by diversifying user content exposure, because they hold a moral duty under deontological ethics to uphold epistemic autonomy. YouTube, as a dominant public sphere infrastructure, functions as an epistemic gatekeeper whose recommendation algorithms shape what users consider plausible or legitimate knowledge. The mechanism—algorithmic amplification of preference-congruent content—reinforces cognitive biases, creating an ethical obligation not merely to avoid harm but to intervene. What’s underappreciated is that the familiar framing of 'free choice' obscures this duty, normalizing passivity in the face of systemic epistemic distortion.
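One way a platform could act on such a duty is to re-rank recommendations so that repeated topics are progressively discounted. The sketch below is a hypothetical illustration, not a description of any mechanism YouTube uses: the function name, the `(video_id, topic, relevance)` tuple layout, and the `penalty` factor are all assumptions.

```python
def rerank_with_diversity(candidates, k, penalty=0.5):
    """Greedy diversity-aware re-ranking (hypothetical sketch).

    candidates: list of (video_id, topic, relevance) tuples.
    Each time a topic is selected, the effective relevance of the
    remaining items on that topic is multiplied by `penalty`.
    """
    remaining = list(candidates)
    chosen = []
    topic_counts = {}

    def adjusted(item):
        _, topic, relevance = item
        return relevance * (penalty ** topic_counts.get(topic, 0))

    while remaining and len(chosen) < k:
        best = max(remaining, key=adjusted)
        remaining.remove(best)
        chosen.append(best)
        topic_counts[best[1]] = topic_counts.get(best[1], 0) + 1
    return chosen


if __name__ == "__main__":
    candidates = [
        ("a", "politics", 0.9),
        ("b", "politics", 0.8),
        ("c", "politics", 0.7),
        ("d", "science", 0.5),
        ("e", "music", 0.45),
    ]
    for video_id, topic, relevance in rerank_with_diversity(candidates, k=3):
        print(video_id, topic, relevance)
```

Ranking purely by relevance would fill all three slots with the same topic, while the discount trades some predicted relevance for breadth. How large that trade should be is exactly the normative question this section raises, and the `penalty` value here is arbitrary.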

Engagement as Epistemic Harm

Prioritizing user engagement through personalization on YouTube constitutes a form of epistemic harm under virtue epistemology, where the platform cultivates intellectual vices like epistemic laziness and dogmatism. The algorithm’s reinforcement of confirmation-seeking behavior mimics behavioral conditioning seen in gambling systems, substituting cognitive closure for reflective inquiry. This dynamic transforms YouTube from an information environment into a habit-forming apparatus that rewards certainty over curiosity. The underappreciated point is that public discourse frames personalization as convenience, not as a corrosion of epistemic character—a shift that makes vice feel like fulfillment.

Algorithmic Public Trust

YouTube should be held to a standard of algorithmic public trust, modeled on public utility doctrines in administrative law, because its recommendation system exercises quasi-governmental influence over knowledge distribution. By concentrating epistemic power in opaque, profit-driven systems, the platform undermines democratic ideals of reasoned pluralism akin to those protected in public forum theory. The mechanism, proprietary algorithms optimizing for watch time, functions as a de facto editor with no accountability to civic norms. What’s overlooked in the familiar 'filter bubble' metaphor is that it implies accidental enclosure rather than a deliberate, legally cognizable failure of stewardship.