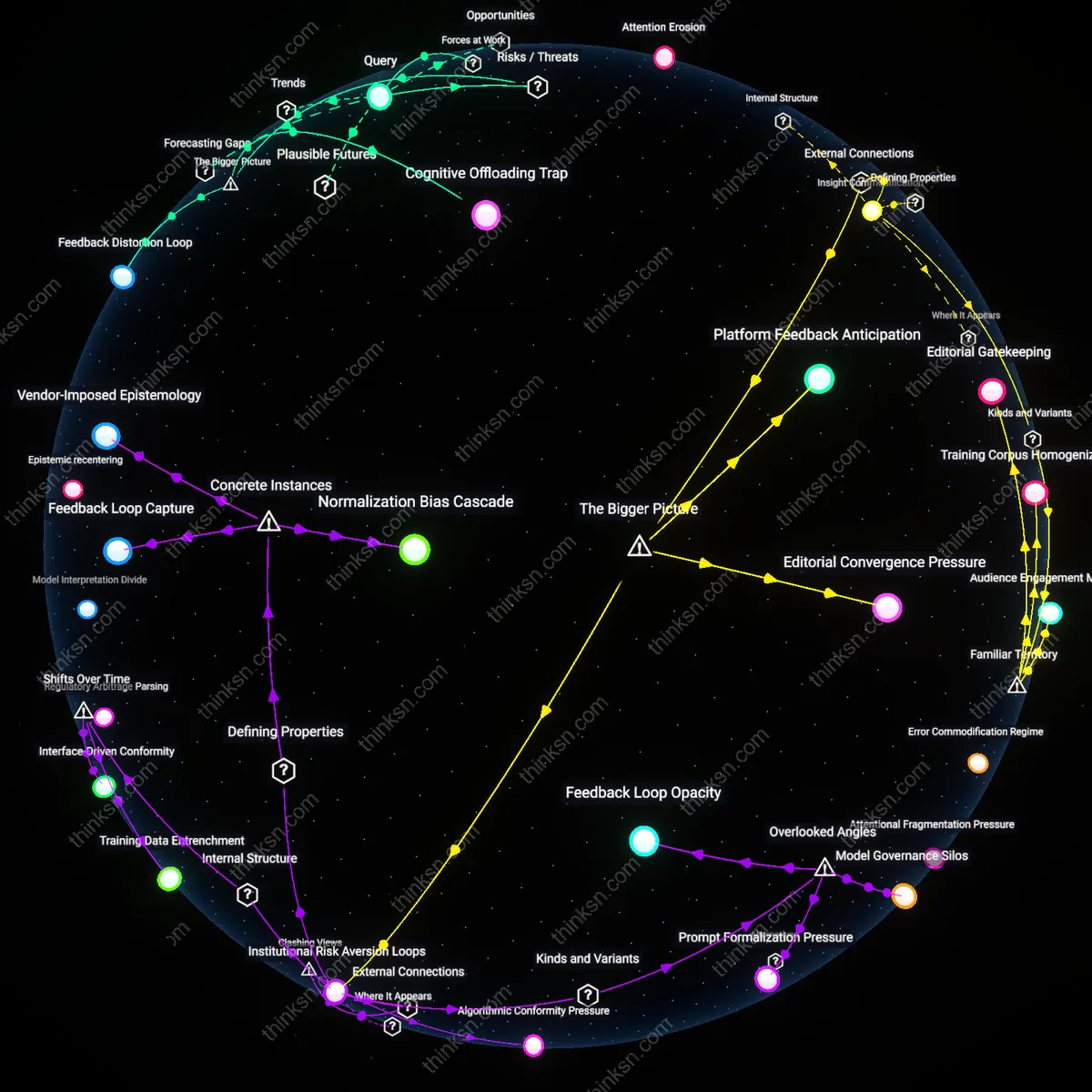

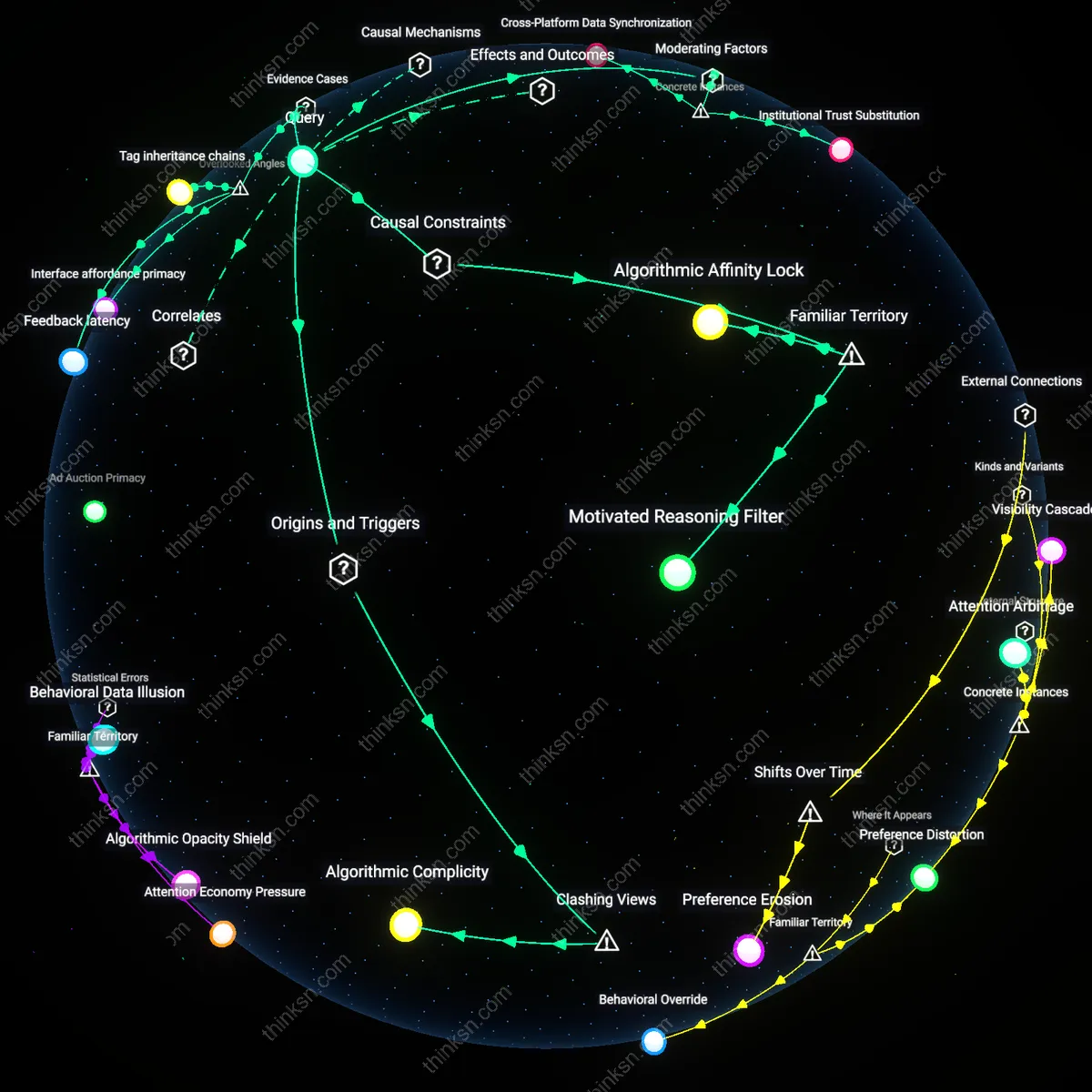

Does AI Summarization Homogenize Journalistic Content?

Analysis reveals 12 key thematic connections.

Key Findings

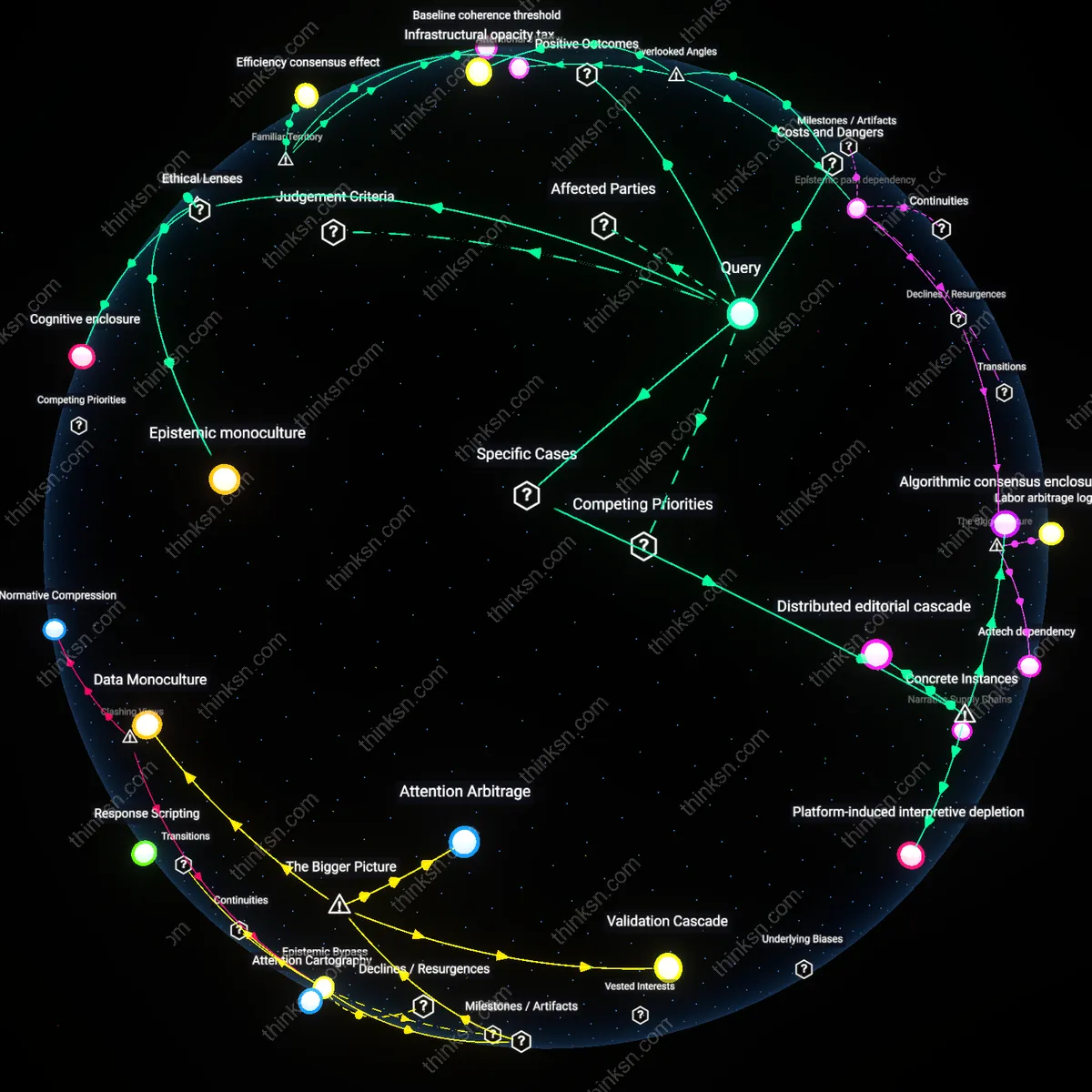

Efficiency consensus effect

Standardizing on a single AI summarization tool accelerates news production cycles across major outlets like Reuters, The Associated Press, and Bloomberg, enabling real-time synthesis of complex events such as election results or economic reports. This convergence streamlines coordination between editorial desks and wire services, reduces cognitive load on journalists, and minimizes discrepancies between organizations in the early phases of reporting. The underappreciated benefit of this familiar push for speed is not just cost savings but an implicit epistemic alignment: despite the homogenization it brings, it curbs hyper-differentiation and reduces the risk of contradictory narratives spreading during fast-breaking events.

Baseline coherence threshold

When outlets from The New York Times to local affiliates use the same AI tool to distill official statements or scientific studies, such as government press briefings or IPCC reports, they produce summaries anchored to a shared linguistic and structural template. This uniformity ensures that even ideologically diverse audiences receive a minimally consistent representation of facts, functioning as a de facto sanity check against extreme interpretive drift. The unspoken advantage, often overlooked in diversity debates, is that this baseline coherence operates as a systemic dampener on both misinformation and performative contrarianism in politically fragmented media environments.

Collective attention focusing

Widespread reliance on a dominant AI summarizer, such as one integrated into Axios or The Guardian’s briefing workflows, concentrates public discourse around a narrower set of highlighted facts, cause-effect linkages, and named actors in policy debates. This funneling effect channels audience attention toward consensus-signaled priorities, like inflation metrics or public health trends, rather than niche or outlier interpretations. What is rarely acknowledged is that this apparent reduction in content diversity can enhance democratic deliberation by synchronizing civic awareness around shared referents, enabling more coordinated public responses despite the loss of marginal perspectives.

Epistemic path dependency

Widespread reliance on a single AI summarization tool locks newsrooms into a narrowing interpretive framework that compounds over time as training data increasingly reflects prior tool outputs. This creates a feedback loop where journalistic judgments align not with ground-truth events but with the inherited epistemic defaults of the AI system, reshaping news values through invisible statistical priors rather than editorial debate. The non-obvious mechanism is not bias per se, but the gradual erosion of alternative interpretive pathways due to the systemic reinvestment in a single epistemic trajectory—what would otherwise be a contingent editorial choice becomes an unchallengeable cognitive default.
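The feedback loop described above can be illustrated with a toy simulation. This is only a sketch under stated assumptions: the four "frames", their starting shares, and the `sharpen` exponent are hypothetical, and raising each share to a power greater than 1 is a stand-in for a tool that slightly favors already-dominant framings when retrained on its own outputs. It is not a model of any real summarization system.

```python
import math

def entropy(shares):
    """Shannon entropy in bits; lower values mean less interpretive diversity."""
    return -sum(x * math.log2(x) for x in shares if x > 0)

def retrain(shares, sharpen=1.5):
    """One hypothetical retraining round on prior tool outputs.

    Raising each share to a power > 1 and renormalizing favors frames
    that were already dominant (an assumed stand-in for the loop).
    """
    powered = [x ** sharpen for x in shares]
    total = sum(powered)
    return [x / total for x in powered]

# Hypothetical starting mix of four interpretive frames.
frames = [0.4, 0.3, 0.2, 0.1]
history = [entropy(frames)]

for _ in range(5):
    frames = retrain(frames)
    history.append(entropy(frames))

for generation, bits in enumerate(history):
    print(f"generation {generation}: entropy = {bits:.3f} bits")
```

Under these assumptions the entropy falls in every round while the leading frame's share grows, which is the compounding narrowing the paragraph describes. A different update rule could behave differently, so the sketch shows the shape of the argument rather than evidence for it.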

Infrastructural opacity tax

News organizations incur an accumulating cognitive debt by outsourcing sensemaking to a black-box summarization tool whose internal logic resists audit, interpretation, or contestation by non-technical staff. This tax manifests in delayed recognition of framing distortions, as editorial teams lack access to the feature-weighting decisions that define what counts as 'relevant' or 'central' in a summary. The overlooked dynamic is that epistemic homogenization does not stem from content uniformity alone, but from the institutional inability to trace how meaning was algorithmically narrowed—concealing not just what is omitted, but why it was excluded in the first place.

Attentional atrophy

Chronic use of a single AI summarizer degrades journalists’ capacity to identify epistemic diversity in source material by systematically reducing their exposure to divergent narrative structures and evidentiary thresholds. Over time, professionals lose calibrated sensitivity to subtle contradictions or alternative causal logics because the tool pre-emptively reconciles them into a dominant frame. This cognitive erosion is not a failure of the technology but an unintended consequence of efficiency—the skill of critical synthesis atrophies precisely because the tool fulfills its function too reliably, replacing deliberative judgment with passive consumption of processed certainty.

Epistemic monoculture

Policy analysts should evaluate epistemic homogenization by applying a Rawlsian veil of ignorance to content distribution, taking as their reference point the rupture in journalistic practice circa 2023, when AI summarization tools became embedded in editorial workflows at major wire services and news aggregators. This shift displaced earlier pluralistic imperatives rooted in deliberative democracy, exemplified by mid-20th-century public service broadcasting models. Automating synthesis through opaque, scale-optimized algorithms entrenched an invisible epistemic standard that privileges coherence over contestability. The non-obvious consequence is not reduced diversity per se, but the systemic erasure of structured disagreement as a journalistic value.

Algorithmic precedent

Policy analysts must assess homogenization through the lens of legal positivism, tracking the historical shift from editorial accountability in libel law, under which individual journalists and editors were liable for content accuracy prior to the mid-2010s, to a new regime post-2025 in which AI-generated summaries serve as de facto factual baselines cited in regulatory hearings and court filings. Even when these summaries vary on the surface, they generate downstream legal effects by stabilizing interpretations of contested events, and so function as unreviewed precedent. What remains underappreciated is that the transition eroded epistemic diversity not through repetition, but through institutional adoption of AI outputs as neutral reference points.

Cognitive enclosure

Analysts should interpret epistemic homogenization through a Marxist media critique, anchoring assessment to the platformization of newsrooms between 2020 and 2026, when venture-backed AI startups integrated summarization tools directly into the content management systems used by regional newspapers under consolidated owners like Gannett and Tribune. This structural integration replaced labor-intensive editorial curation with predictive compression logic aligned with engagement metrics, transforming news from a use-value into a continuously extracted data stream. The overlooked outcome is not simply reduced diversity, but the historical displacement of journalistic cognition itself: situated interpretation gives way to an industrialized attention economy.

Algorithmic consensus enclosure

The Associated Press’s use of Automated Insights’ Wordsmith to generate earnings reports illustrates how a single AI tool can standardize journalistic output across outlets, producing structurally and linguistically homogeneous narratives even when data inputs vary. This mechanism creates an epistemic enclosure in which diversity of interpretation is constrained not by editorial policy but by the fixed rhetorical templates embedded in the tool. The dynamic is underappreciated because surface-level variation in the data masks the convergence of narrative logic.
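A minimal sketch can make the template mechanism concrete. Everything here is hypothetical: the template wording, the company names, and the figures are invented for illustration, and the code does not reproduce Wordsmith's actual templates or API. It only shows how different data can yield sentences with an identical narrative skeleton.

```python
import re

# Hypothetical template, invented for illustration only.
TEMPLATE = (
    "{company} reported quarterly earnings of ${eps:.2f} per share, "
    "{direction} analyst expectations of ${expected:.2f}."
)

def render(company: str, eps: float, expected: float) -> str:
    """Fill the fixed template from one company's figures."""
    if eps > expected:
        direction = "beating"
    elif eps < expected:
        direction = "missing"
    else:
        direction = "matching"
    return TEMPLATE.format(
        company=company, eps=eps, expected=expected, direction=direction
    )

def skeleton(sentence: str, company: str) -> str:
    """Replace data-dependent tokens so only the narrative structure remains."""
    s = sentence.replace(company, "<COMPANY>")
    s = re.sub(r"\$\d+\.\d{2}", "<AMOUNT>", s)
    s = re.sub(r"\b(beating|missing|matching)\b", "<DIRECTION>", s)
    return s

# Made-up inputs: different companies, figures, and outcomes.
a = render("Acme Corp", 1.42, 1.30)
b = render("Globex Inc", 0.87, 0.95)

print(a)
print(b)
print(skeleton(a, "Acme Corp") == skeleton(b, "Globex Inc"))
```

The two rendered sentences differ in every data-bearing token, yet their skeletons are identical. That is the sense in which variation in inputs can mask convergence in narrative logic.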

Platform-induced interpretive depletion

BuzzFeed News’ reliance on Google’s Natural Language API during the 2016–2018 election cycles to summarize social media trends systematically flattened politically nuanced discourse into predefined sentiment categories such as ‘positive’, ‘negative’, or ‘neutral’. These categories obscured context-specific meanings and reduced complex public sentiment to coarse classifications. The case reveals how epistemic homogenization occurs not through content duplication but through the design constraints of the AI platform, which render interpretive ambiguity invisible.
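The flattening effect can be sketched with a toy three-way classifier. The posts, their scores, and the 0.25 threshold below are all made up for illustration. This is not output from, or a reimplementation of, any real sentiment API.

```python
def bucket(score: float, threshold: float = 0.25) -> str:
    """Map a continuous score to one of three predefined labels.

    The threshold is an assumed value chosen for this example.
    """
    if score > threshold:
        return "positive"
    if score < -threshold:
        return "negative"
    return "neutral"

# Invented posts with invented scores; each is ambiguous in a different way.
posts = [
    ("Great, another 'historic' debate. Can't wait.", 0.10),  # sarcasm
    ("I like the policy but distrust the candidate.", 0.05),  # ambivalence
    ("Polls open at 7am tomorrow.", 0.00),                    # plain information
]

labels = [bucket(score) for _, score in posts]

for (text, _), label in zip(posts, labels):
    print(f"{label:8s} | {text}")
```

Sarcasm, ambivalence, and a neutral announcement all receive the same label. Once only the label is stored or aggregated, the difference between them cannot be recovered downstream.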

Distributed editorial cascade

The widespread adoption of Reuters’ News Tracer by newsrooms such as the Toronto Star and Le Monde to verify breaking news on Twitter during crises such as the 2017 Manchester Arena bombing led to near-identical framing of events within minutes of their emergence. The convergence arose not from coordination but because the algorithm prioritizes speed and source credibility scores over narrative diversity. This instance uncovers a form of epistemic convergence in which editorial independence is eroded not through central control but through decentralized dependence on a shared validation mechanism.