Report Hateful Posts: Personal Risk vs Societal Gain?

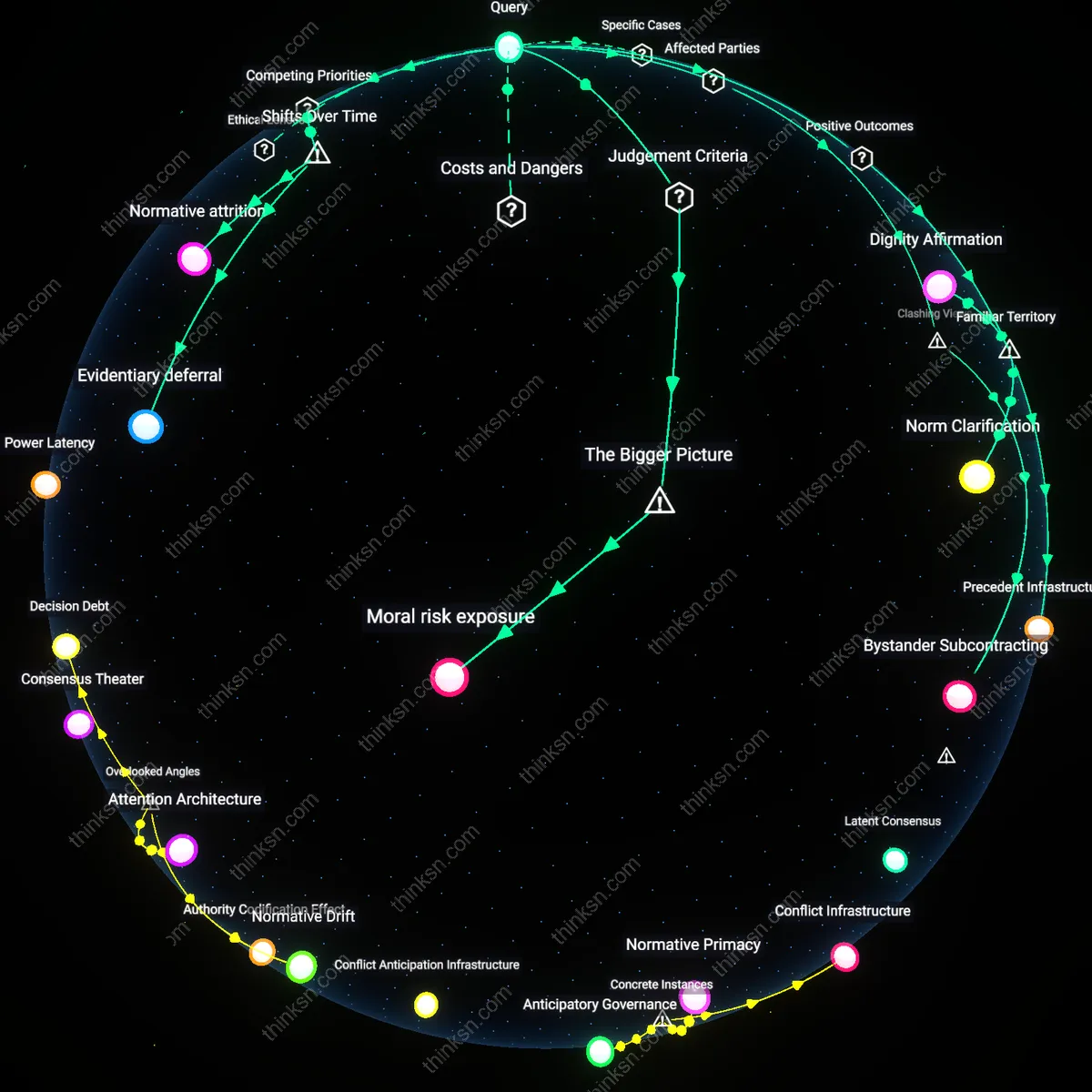

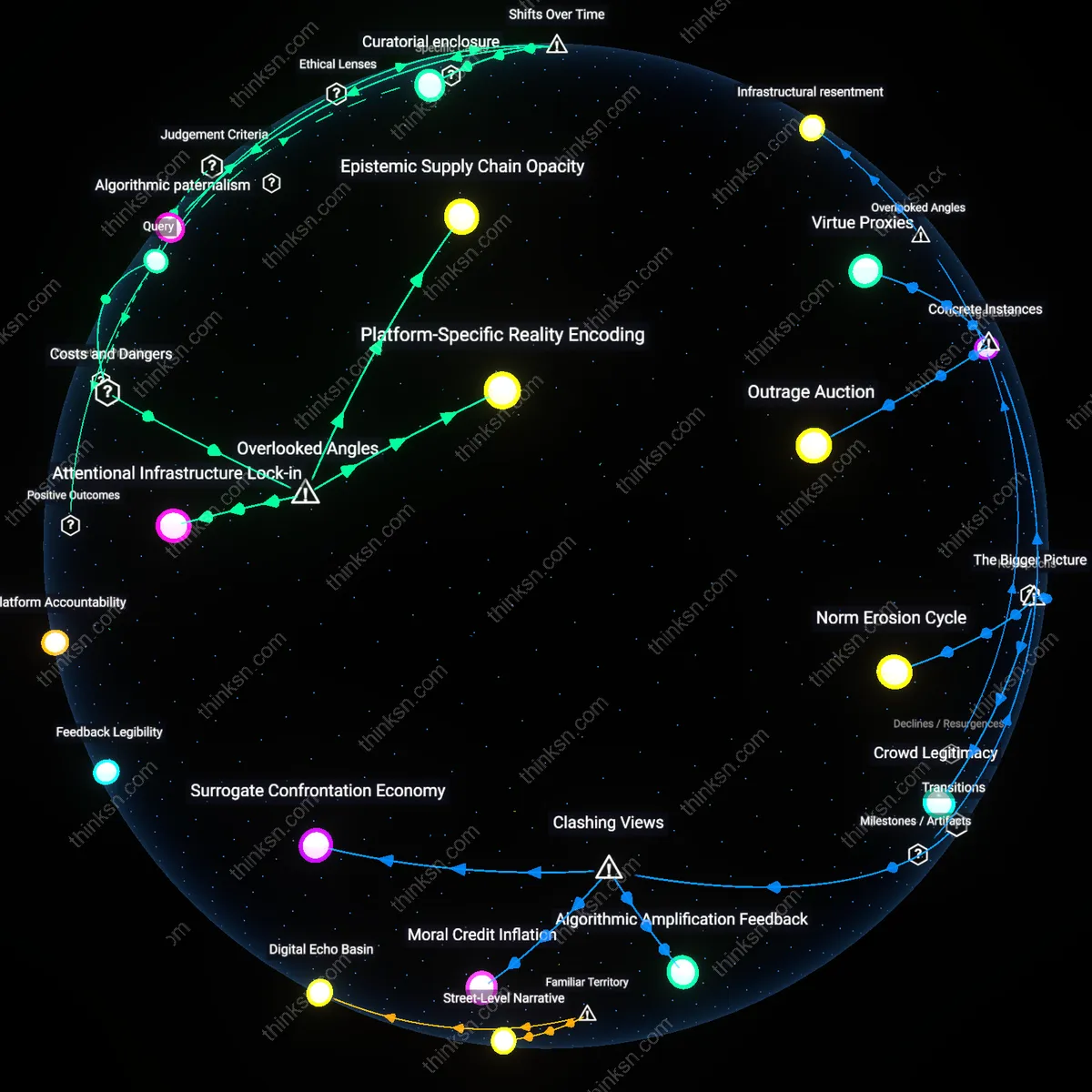

Analysis reveals 6 key thematic connections.

Key Findings

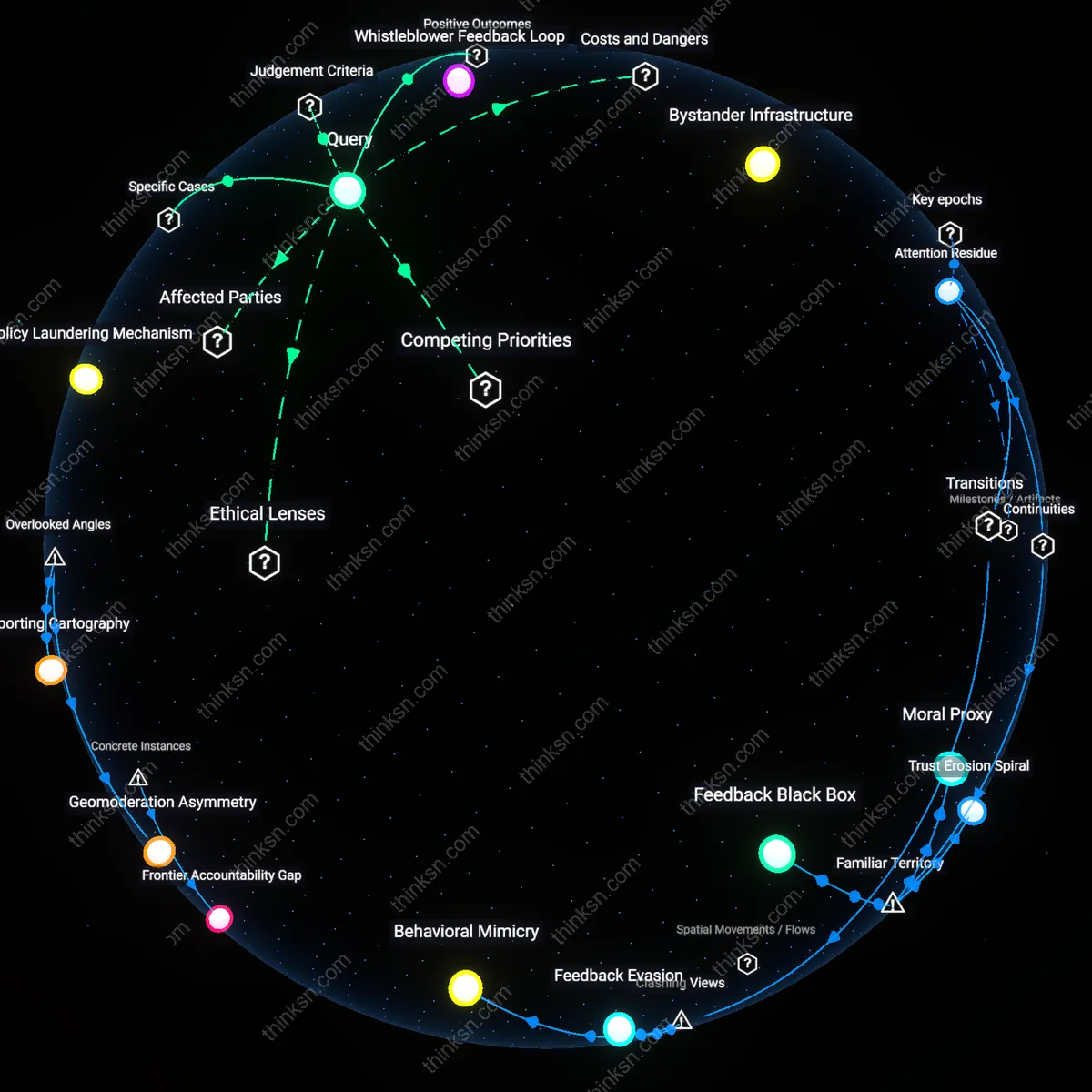

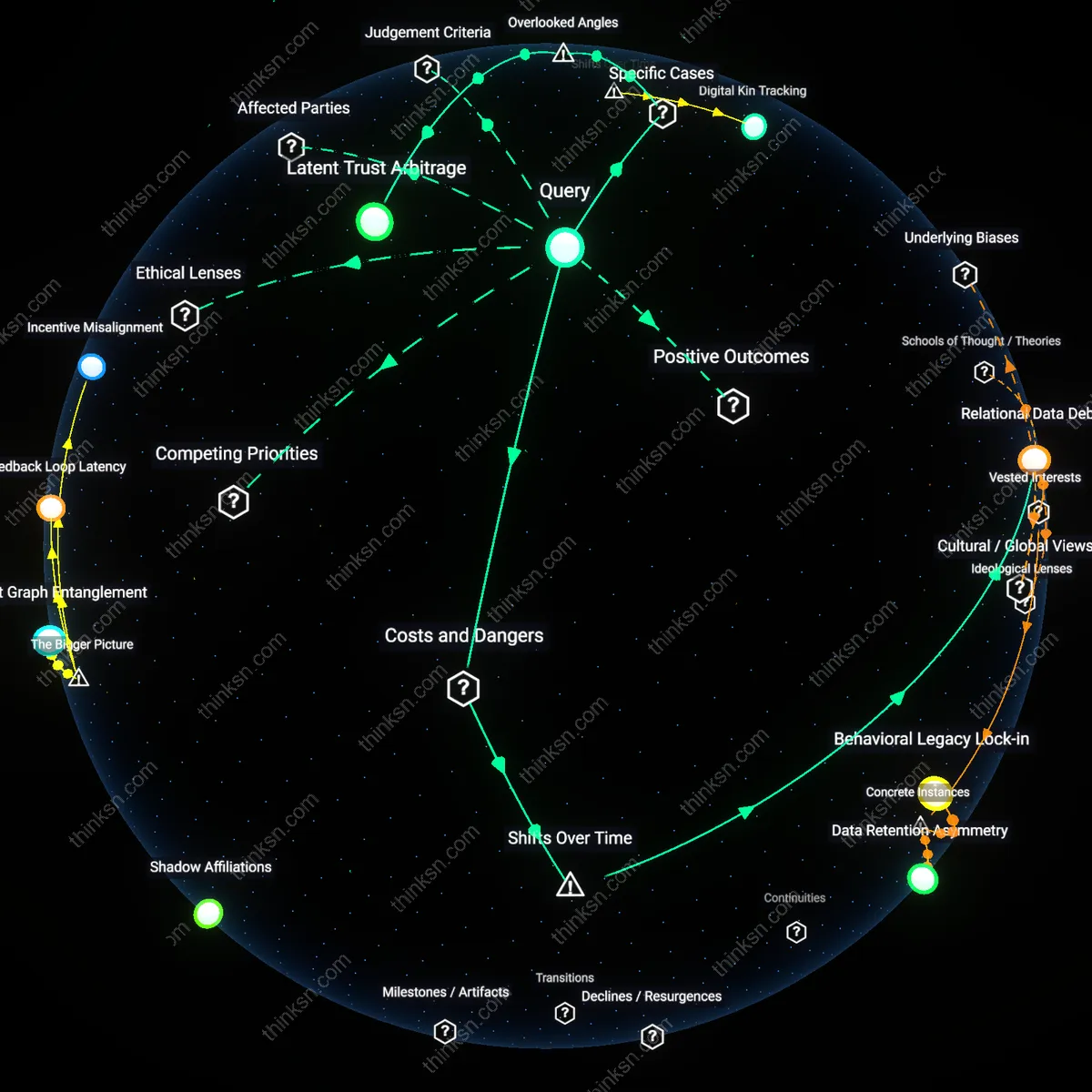

Attention Residue

Individuals who report extremist content generate positive utility by creating attention residue: behavioral traces that let platform algorithms detect anomalies in viewing patterns, not just in content. When users flag content, even under ambiguous moderation policies, their actions leave diagnostic traces in metadata streams, such as shortened dwell times or abrupt navigation exits, which machine learning systems can correlate with latent harmfulness. This overlooked dimension turns user reporting from a binary moderation trigger into a continuous sensor network for pre-moderation behavioral analytics. It challenges the standard view of reporting as merely reactive: user vigilance silently trains preventive AI systems without requiring policy clarity.
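The idea of correlating dwell times and abrupt exits with possible harm can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the `View` record, the `abrupt_exit` flag, and the scoring rule (a z-score of how far an item's mean dwell time falls below the average, plus its abrupt-exit rate) are hypothetical and do not describe any platform's actual system.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class View:
    """One viewing event (hypothetical schema, for illustration only)."""
    item_id: str
    dwell_seconds: float
    abrupt_exit: bool  # assumed flag: the user left abruptly after viewing


def anomaly_scores(views):
    """Score each item by unusually short dwell time plus abrupt-exit rate."""
    by_item = {}
    for v in views:
        by_item.setdefault(v.item_id, []).append(v)

    # Per-item averages of the two behavioral signals.
    item_dwell = {i: mean(v.dwell_seconds for v in vs) for i, vs in by_item.items()}
    item_exit = {i: mean(1.0 if v.abrupt_exit else 0.0 for v in vs)
                 for i, vs in by_item.items()}

    # Baseline across all items; a zero spread means no item stands out.
    mu = mean(item_dwell.values())
    sigma = pstdev(item_dwell.values())

    scores = {}
    for i in by_item:
        shortfall = 0.0 if sigma == 0 else (mu - item_dwell[i]) / sigma
        scores[i] = shortfall + item_exit[i]
    return scores


views = [
    View("a", 30.0, False), View("a", 30.0, False),
    View("b", 30.0, False), View("b", 30.0, False),
    View("c", 3.0, True), View("c", 3.0, True),
]
scores = anomaly_scores(views)
most_anomalous = max(scores, key=scores.get)
```

In this toy data, item `c` stands out because viewers leave it quickly and abruptly. A score like this would only flag items for closer review; it says nothing by itself about whether the content is harmful.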

Bystander Infrastructure

Reporting extremist content strengthens bystander infrastructure, a hidden system of psychological and technical preparedness that communities rely on during crisis escalation. When individuals report, even uncertainly, they reinforce norms of engagement that lower the activation threshold for future interventions, making platforms function more like civic spaces with distributed responsibility. This is non-obvious because most analyses treat reporting as a discrete act aimed at removal. The real positive utility lies in normalizing participatory governance, where each report, regardless of outcome, incrementally builds collective digital hygiene and reduces the bystander effect in real-time emergencies.

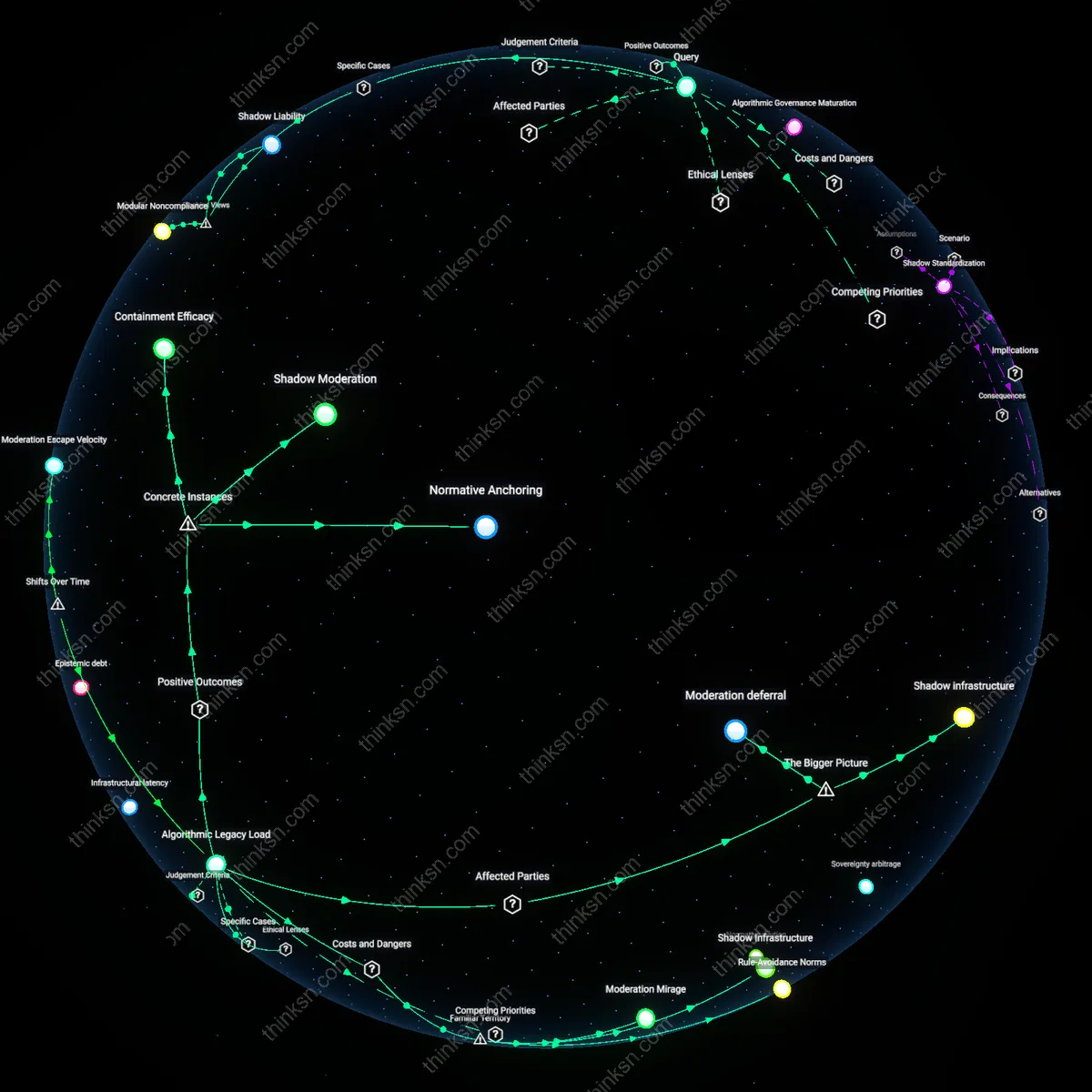

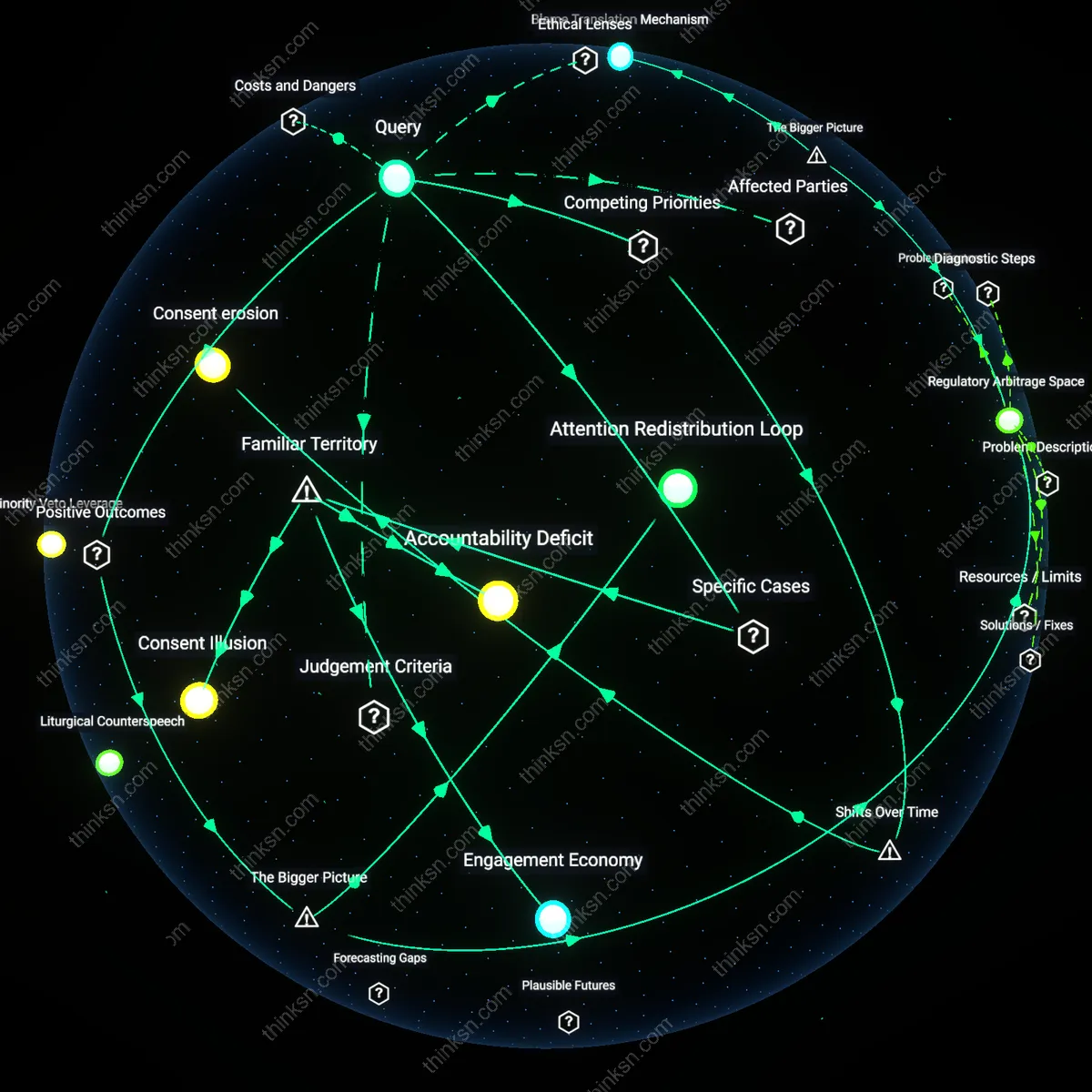

Policy Fuzz Testing

Individuals who report extremist content under unclear moderation policies perform informal fuzz testing of platform governance, exposing edge cases that reveal systemic weaknesses in enforcement logic. This creates positive utility by simulating adversarial pressure on moderation systems, akin to ethical hacking, allowing platforms to detect inconsistencies in policy application before malicious actors exploit them. The overlooked dynamic is that user reports, especially ambiguous or rejected ones, function as live probes that generate feedback loops for institutional learning. Individual risk-taking thereby becomes a form of distributed quality assurance that improves systemic resilience over time.
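One way to picture reports as probes is to group them by similar content and look for groups whose moderation outcomes disagree. The sketch below assumes a hypothetical `cluster_id` that already identifies near-identical content, and hypothetical outcome labels such as `"removed"` and `"rejected"`; neither comes from a real platform API.

```python
from collections import defaultdict


def inconsistent_clusters(reports):
    """Return cluster ids whose reports received conflicting outcomes.

    `reports` is an iterable of (cluster_id, outcome) pairs, where
    cluster_id is an assumed identifier for near-identical content.
    """
    outcomes = defaultdict(set)
    for cluster_id, outcome in reports:
        outcomes[cluster_id].add(outcome)
    # More than one distinct outcome for the same content is an edge case
    # worth reviewing: the policy was applied inconsistently.
    return sorted(c for c, seen in outcomes.items() if len(seen) > 1)


reports = [
    ("slogan-A", "removed"),
    ("slogan-A", "rejected"),
    ("meme-B", "removed"),
    ("meme-B", "removed"),
]
flagged = inconsistent_clusters(reports)
```

Here only `slogan-A` is flagged, because the same content was removed in one case and left up in another. Rejected reports are kept as input rather than discarded, which matches the point that they carry diagnostic value.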

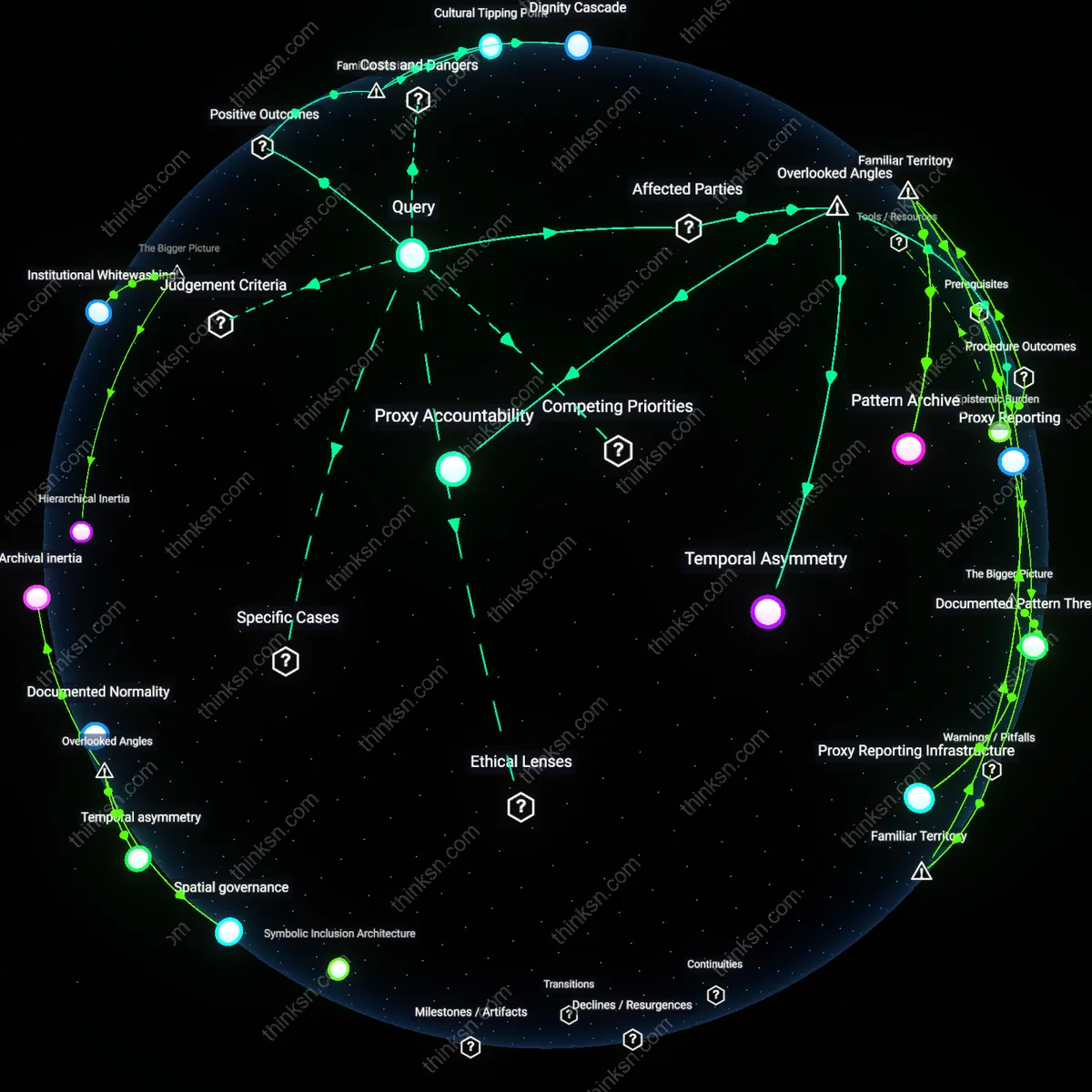

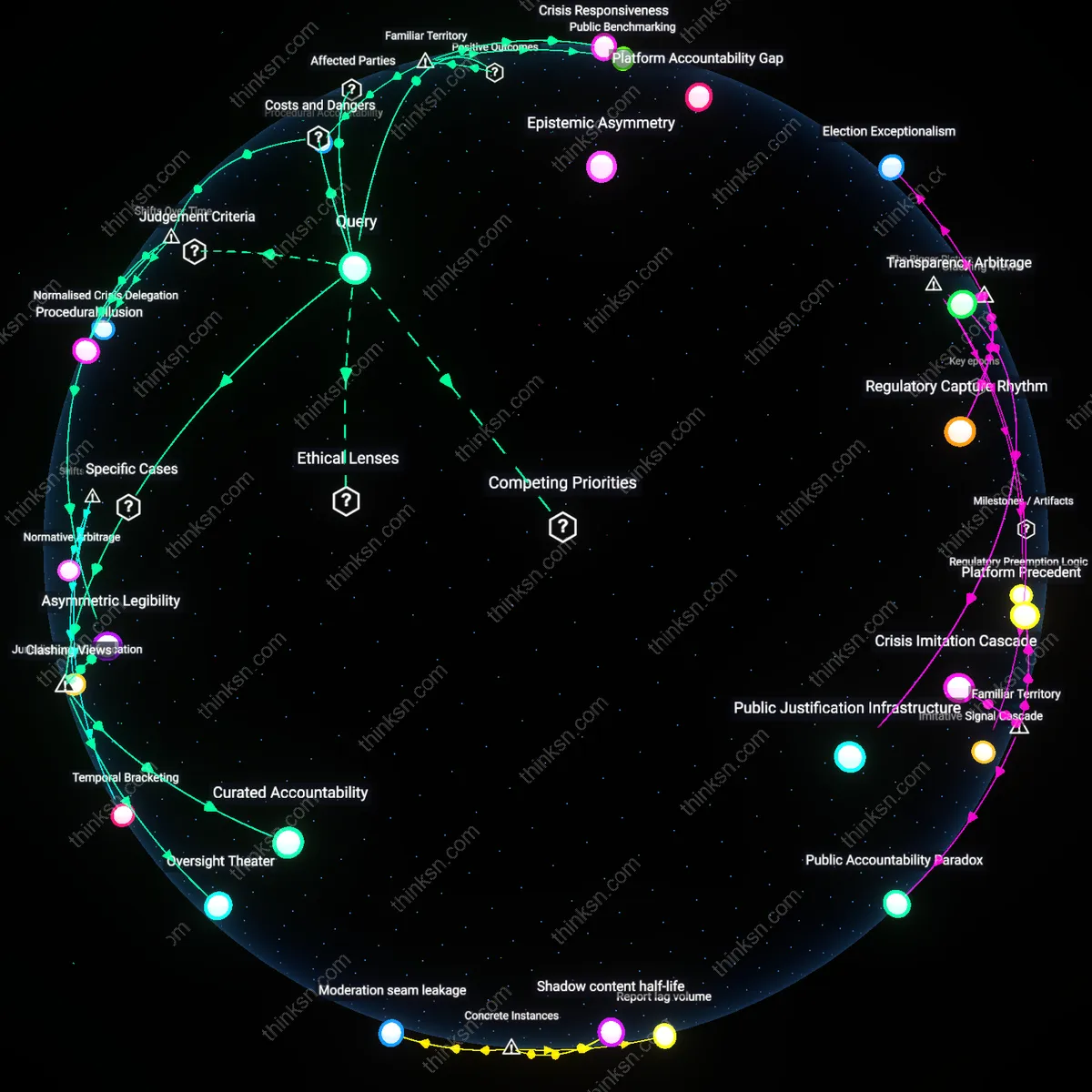

Platform Accountability Gap

Individuals must report extremist content despite personal risk because ambiguous moderation policies on platforms like Facebook in Ethiopia enable state and non-state actors to exploit enforcement delays. Without transparent escalation protocols, user reporting becomes a liability rather than a safeguard. This reveals how decentralized content governance absolves platforms of operational responsibility while externalizing harm onto local users, who bear both the social and the physical consequences.

Whistleblower Feedback Loop

In contexts like India’s WhatsApp lynchings crisis, users who reported extremist rumors faced violent reprisal because encrypted networks amplified unverifiable content faster than platform-led verification could respond. This demonstrates how individual reporting creates a feedback loop in which state authorities demand user-generated evidence while offering no protection in return. Risk is thereby institutionalized as a de facto co-production of digital moderation between platforms and vulnerable citizen-informants.

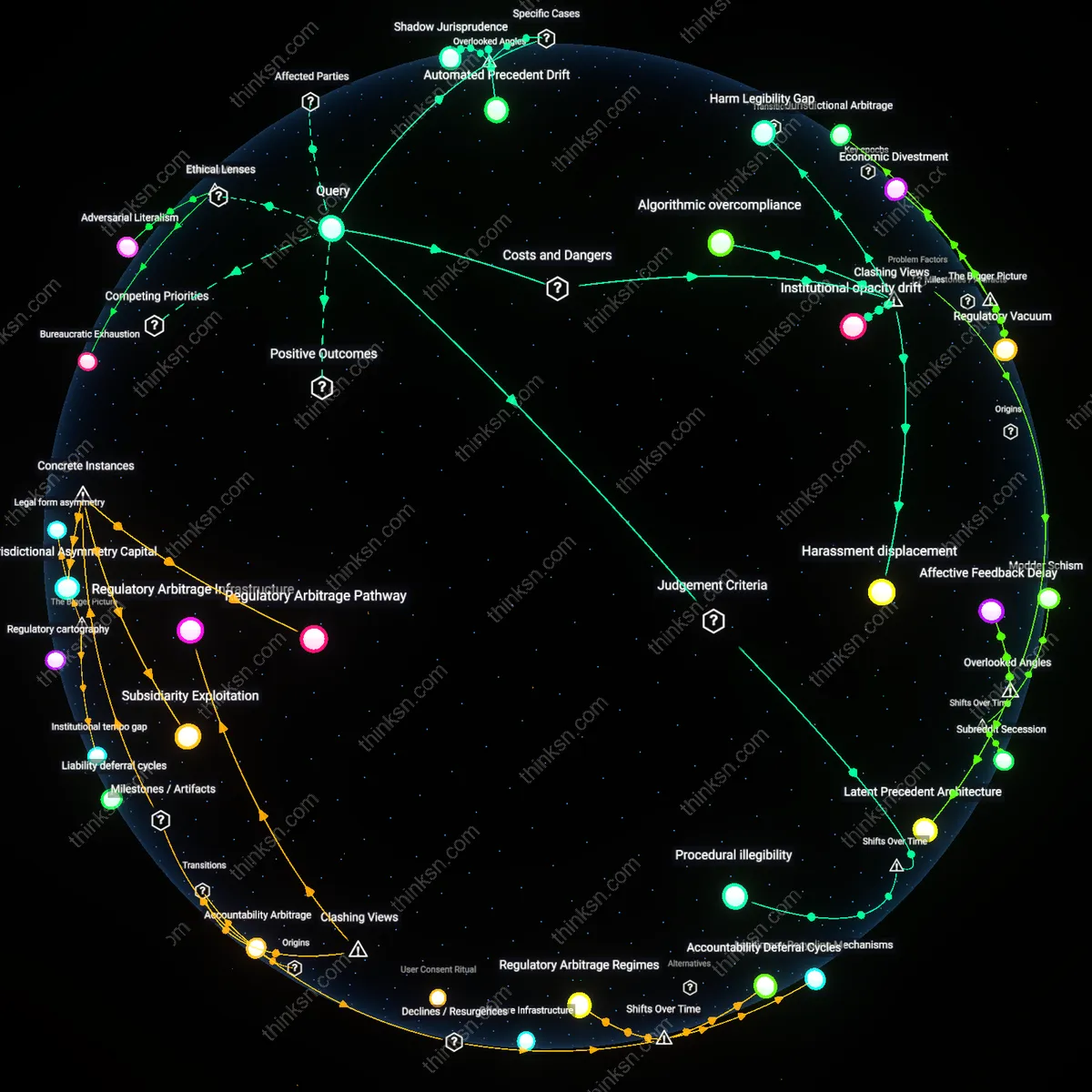

Policy Laundering Mechanism

When users report extremist content on YouTube in Indonesia, their actions inadvertently legitimize opaque algorithmic downranking by framing takedown as a public service. This allows Google to outsource normative judgment to individuals while retaining policy opacity. Personal reporting thus functions as a policy laundering mechanism: corporate liability is mitigated through the visible performance of user participation, insulating platforms from regulatory intervention despite persistent societal harm.