Is Platform Transparency Enough to Balance Power in Content Moderation?

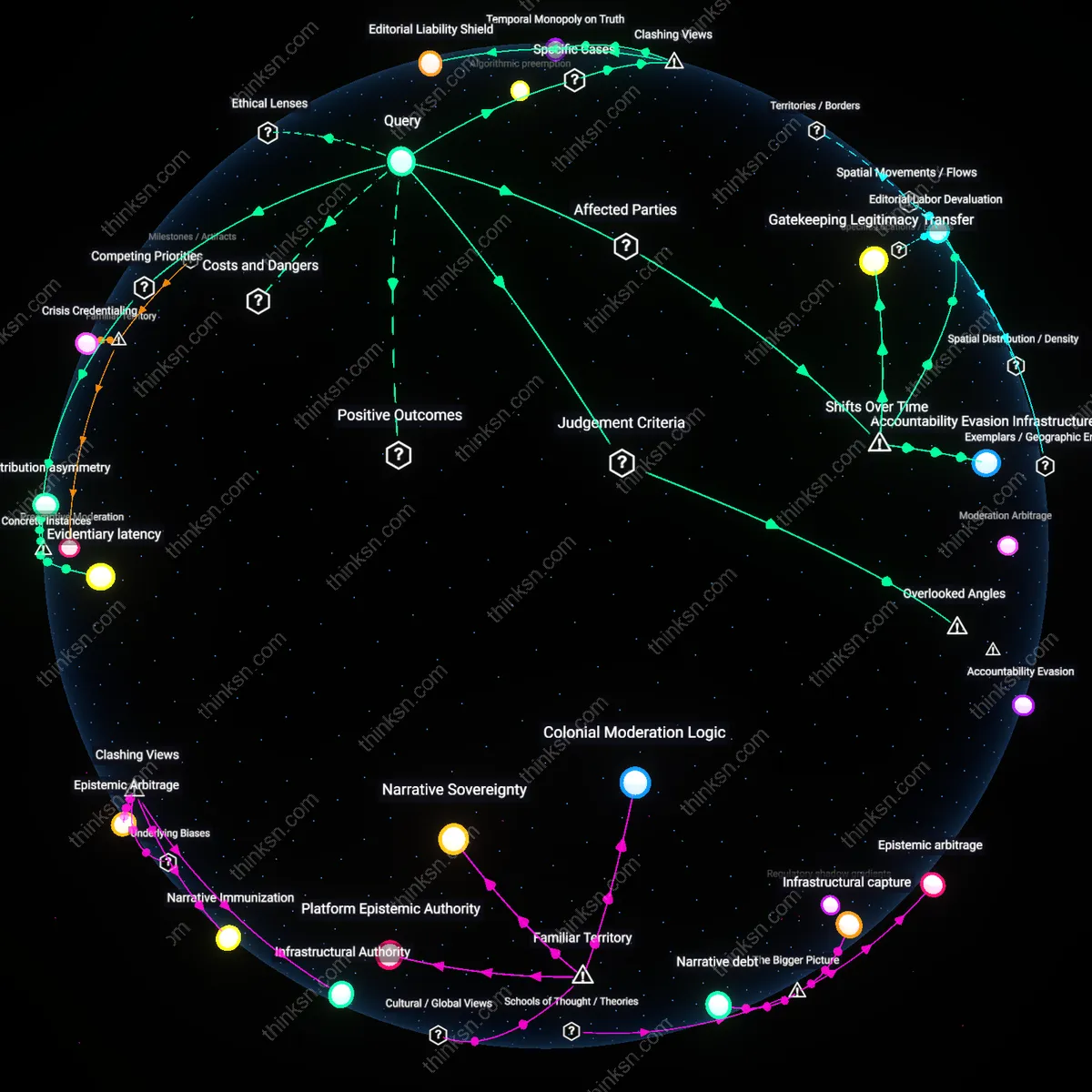

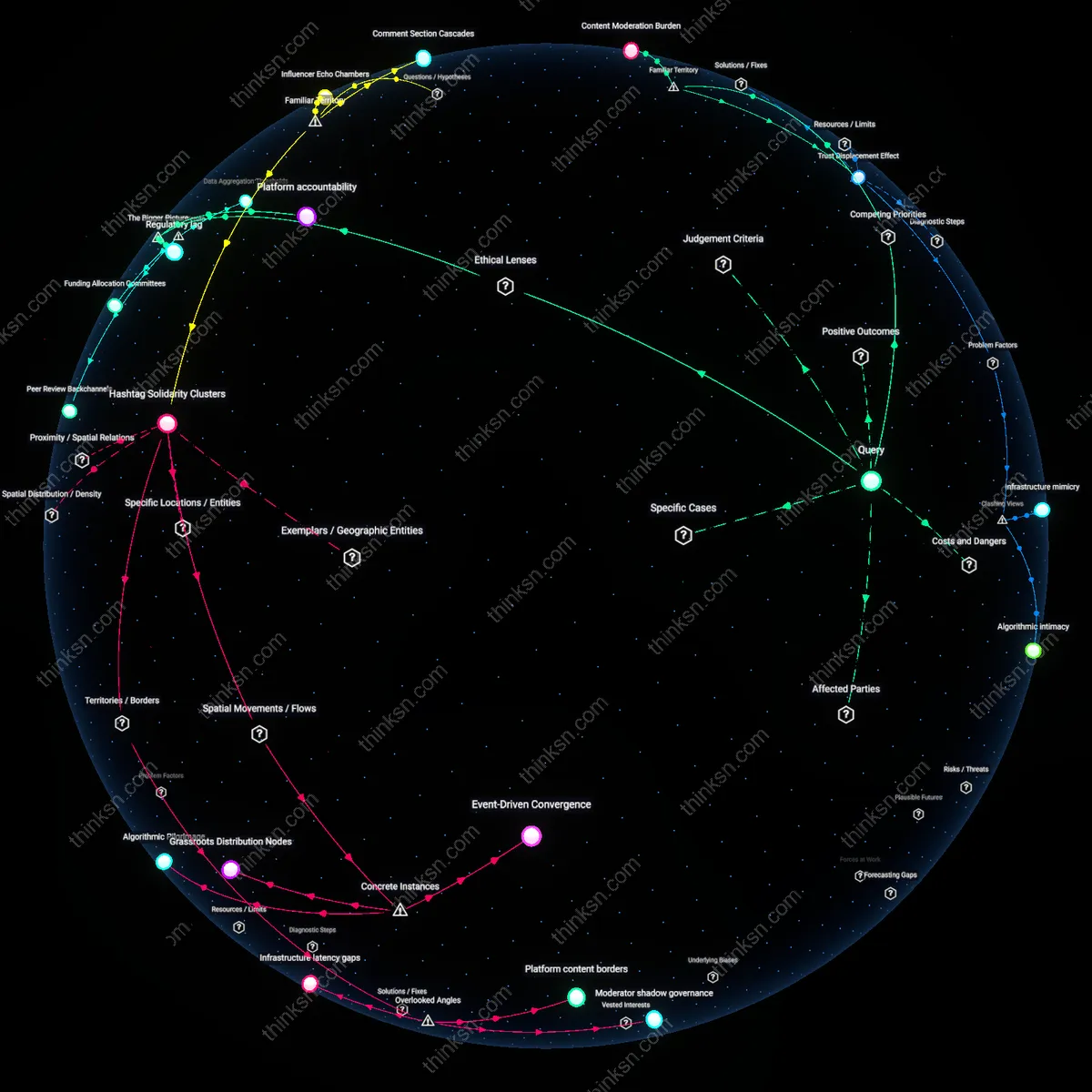

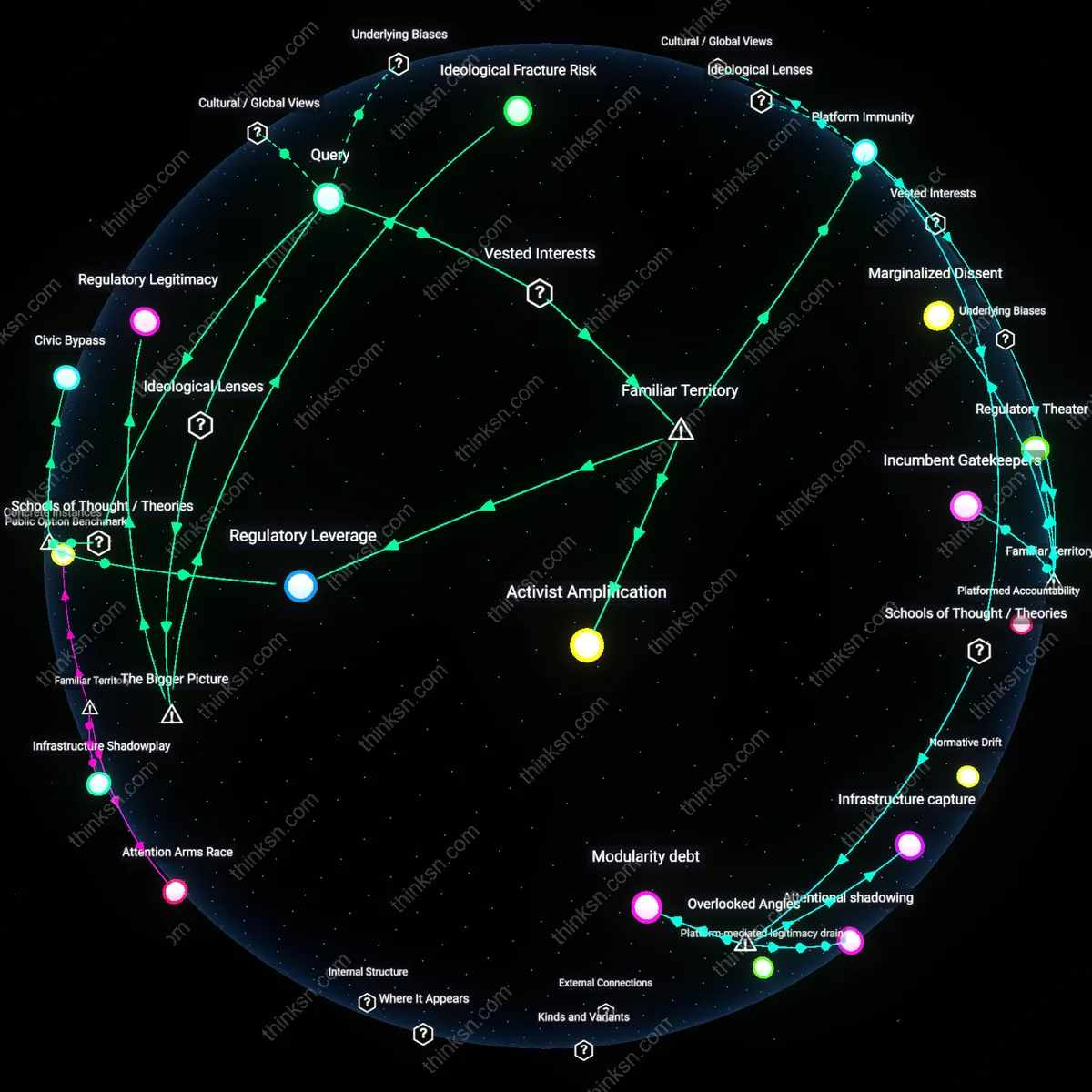

Analysis reveals 11 key thematic connections.

Key Findings

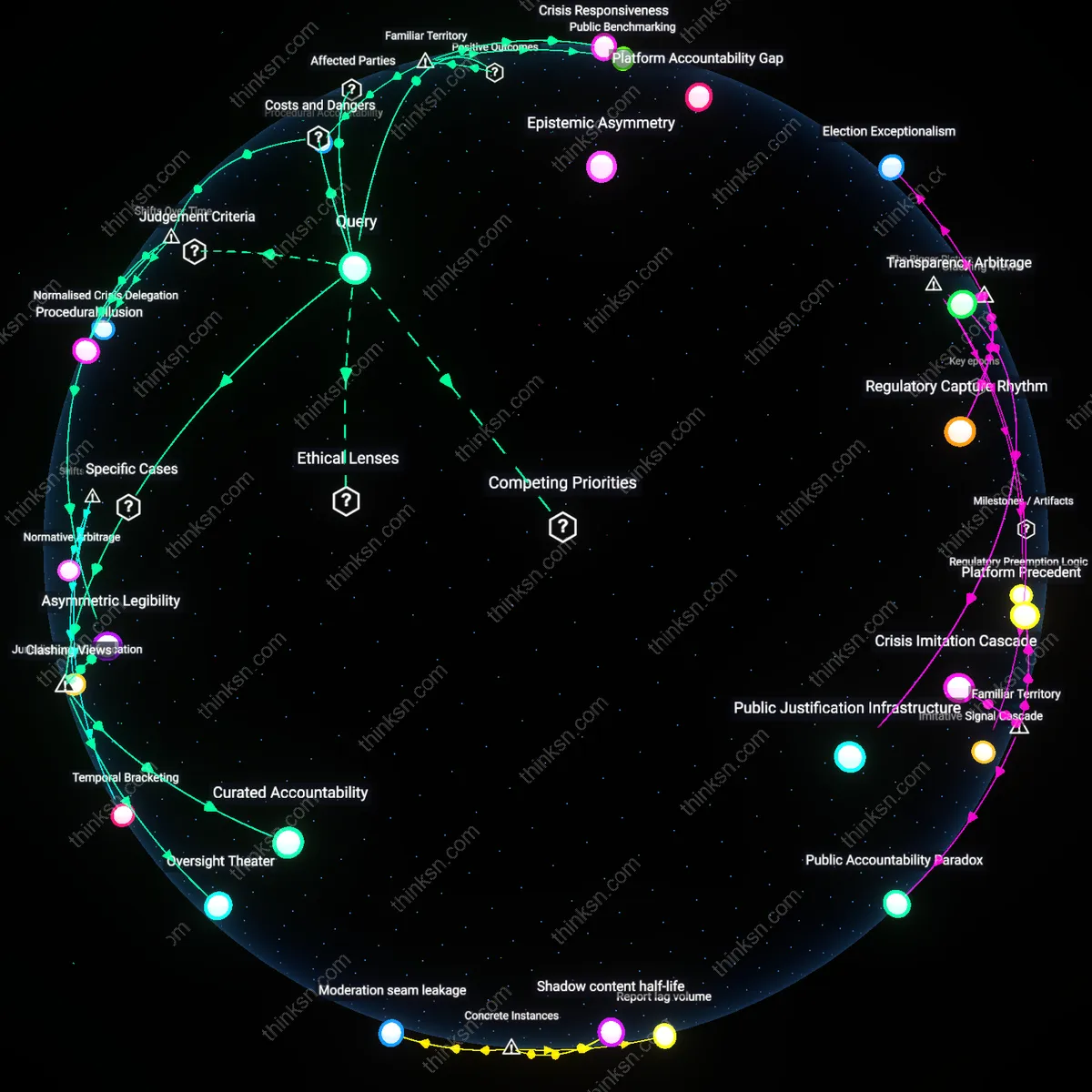

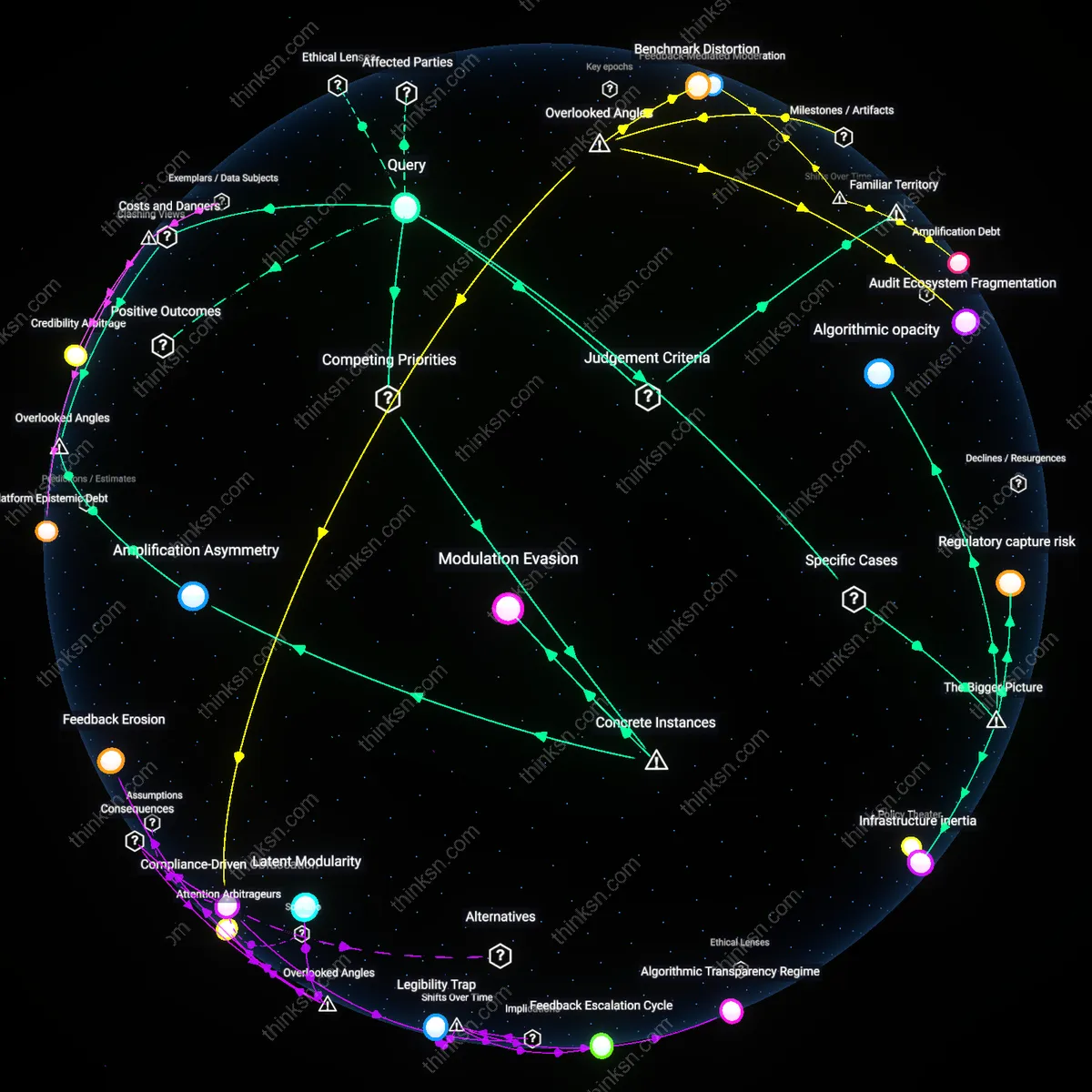

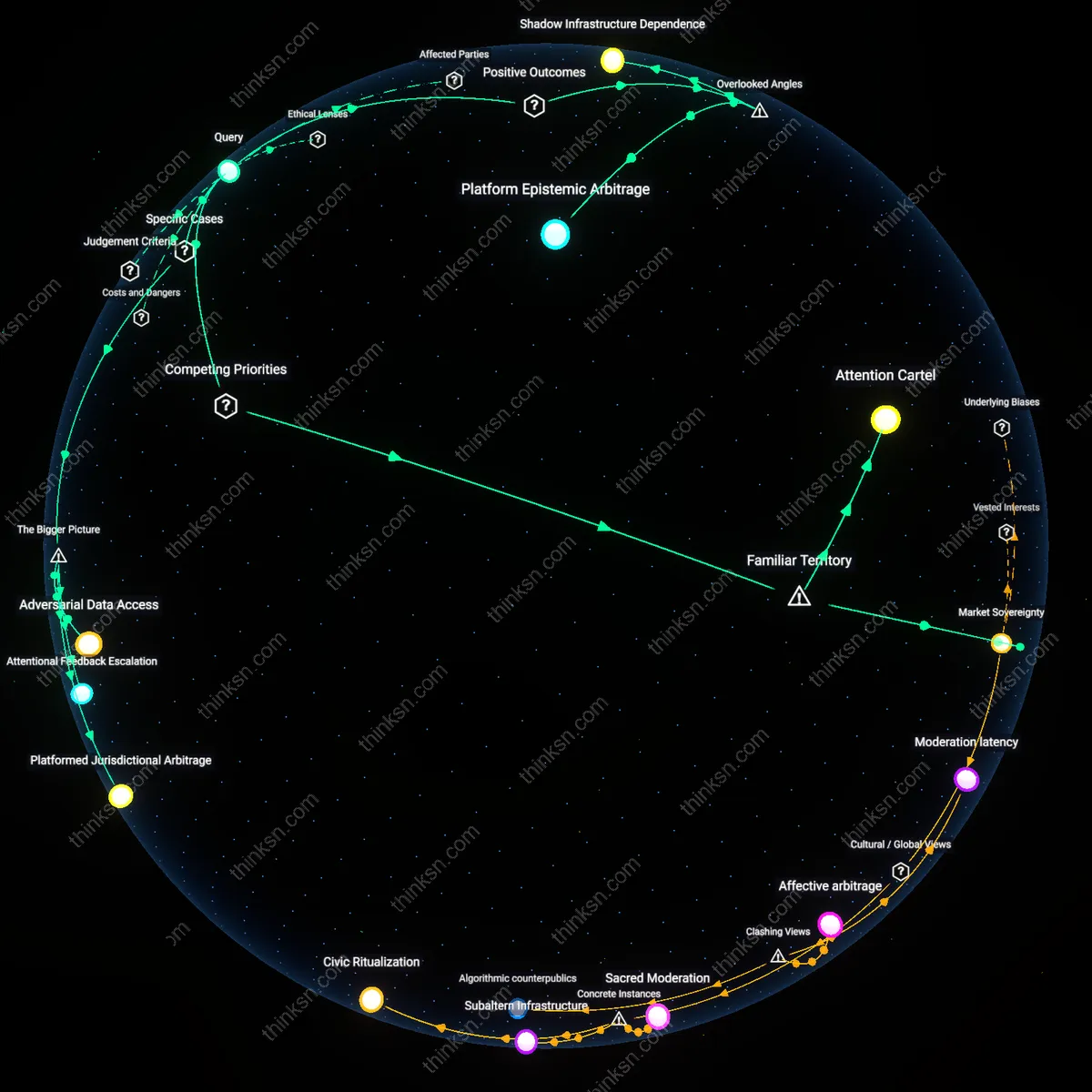

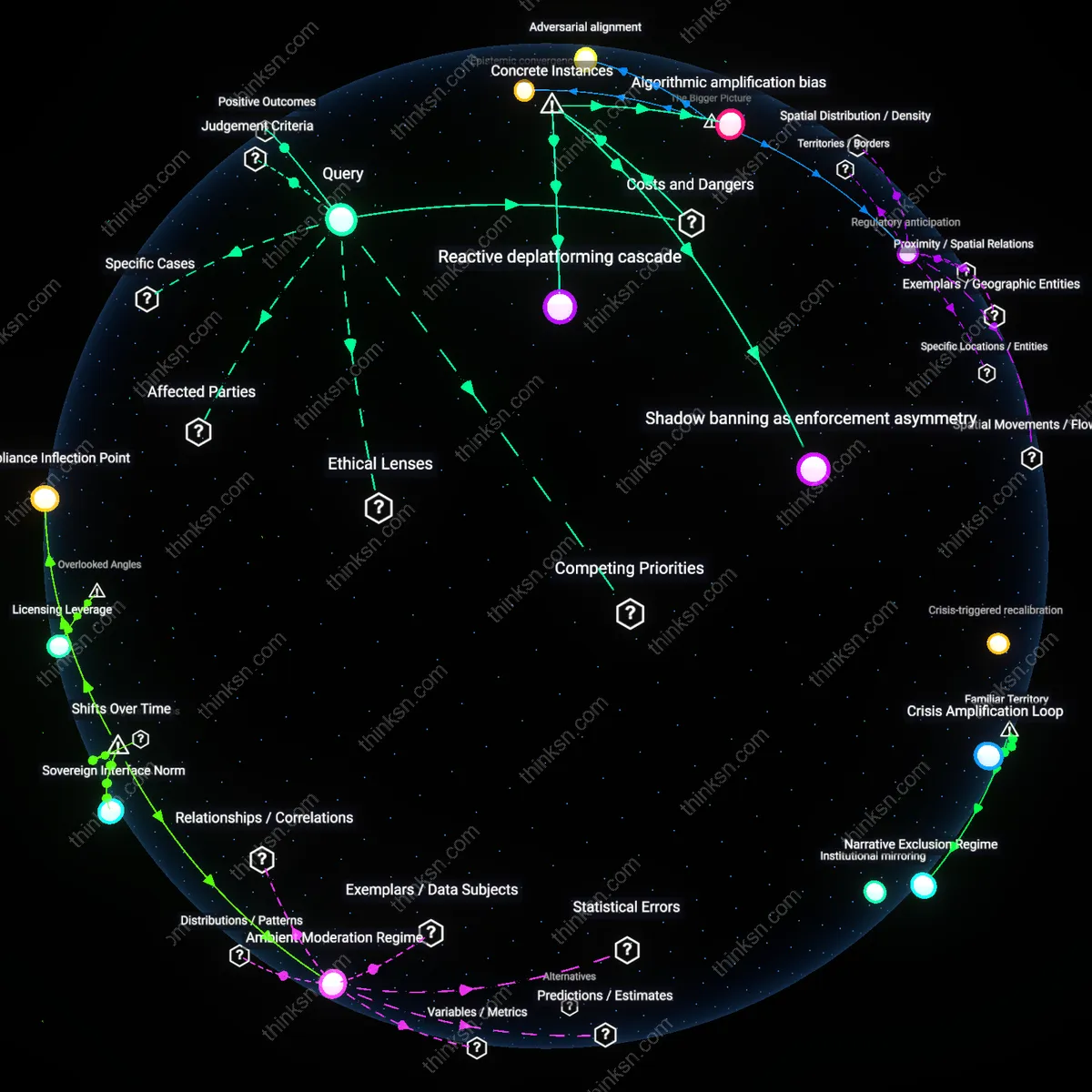

Platform Accountability Gap

Content-moderation transparency reports failed to provide adequate oversight in Myanmar: Facebook's delayed disclosure of its role in amplifying anti-Rohingya hate speech let military actors exploit its algorithms with minimal public accountability. The reports published after the 2017 ethnic cleansing offered post hoc data without revealing real-time decision-making processes or enforcement thresholds, shielding the company's internal prioritization of growth over safety. Transparency as practiced here functioned as performative documentation rather than as a mechanism of preventive control, a failure that matters most where political speech incites violence against marginalized populations.

Regulatory Arbitrage by Design

In 2022, Brazil's electoral authorities tried to enforce real-time moderation transparency on Twitter (now X) during the presidential campaign in which Bolsonaro sought re-election, but the effort was undermined by the platform's selective compliance and delayed reporting. The case illustrates how platforms can weaponize the format of transparency reports to obscure urgent political interference: by releasing aggregated, decontextualized data weeks after critical election events, the platform avoided meaningful scrutiny while technically meeting disclosure expectations. Transparency reports that are unmoored from enforceable standards for timing and granularity become instruments of legal deflection rather than democratic oversight.

Epistemic Asymmetry

During the 2020 Delhi riots in India, WhatsApp's transparency reports omitted specifics about how its forwarding limits were selectively enforced amid government pressure to suppress networks of Muslim citizens sharing protest footage, revealing that such reports serve as curated narratives rather than neutral records. The platform's refusal to disclose state-requested takedown data in real time created an information imbalance that favored state actors over vulnerable communities who depended on encrypted messaging to coordinate their safety. Transparency reports thus reinforce epistemic hierarchies by granting platforms sole authority to define what counts as 'relevant' information in a political crisis.

Procedural Accountability

Transparency reports establish procedural accountability by committing platforms such as Meta and YouTube to publish takedown statistics, appeal outcomes, and policy-enforcement trends on a regular schedule, which allows civil society groups and regulators to benchmark the consistency of content moderation. This mechanism turns opaque editorial decisions into auditable institutional behavior, enabling watchdogs to identify systemic biases or irregular enforcement patterns over time. The non-obvious aspect is that these reports do not resolve political disputes directly; instead, they reinforce normative expectations of due process in digital governance, a function more familiar in legal institutions than in tech companies.

Public Benchmarking

Transparency reports enable public benchmarking by publishing standardized metrics, such as the volume of removed posts, government takedown requests, and detection rates, which allow users, researchers, and journalists to compare platform performance across time periods and jurisdictions. This data infrastructure supports informed public discourse by grounding debates over censorship and bias in shared evidence rather than anecdote, an effect most visible during election periods, when platforms like Twitter and TikTok release real-time enforcement data. What is underappreciated is that these reports function less as oversight tools than as informational leveling mechanisms, turning asymmetric knowledge into a common reference point for democratic deliberation.

Crisis Responsiveness

Transparency reports improve crisis responsiveness by activating predefined disclosure protocols during high-risk events such as elections or civil unrest, as seen with Facebook's pre-election reports in Nigeria and India. These disclosures alert media, NGOs, and international observers to emerging moderation patterns, enabling faster intervention when suppression or disinformation spikes. Although control over political speech remains concentrated, the timed release of data turns transparency into an early-warning system, one that uses public scrutiny not to redistribute power but to compress reaction cycles during democratic stress tests.

Procedural Illusion

Transparency reports exacerbate democratic erosion by simulating accountability without redistributing power, a dynamic that crystallized after 2016 as platforms replaced editorial discretion with algorithmic moderation at scale. State actors and civil society now depend on sanitized data feeds that obscure real-time content manipulation and mask how automated systems selectively silence marginalized voices under the guise of neutrality, particularly during electoral cycles. This procedural facade has its roots in the post-Snowden era, when public pressure pushed platforms to disclose government data requests, and later moderation metrics, but never decision-making authority. The result is a ritualized performance of oversight that neutralizes dissent while appearing responsive. The non-obvious consequence is that standardizing reporting formats across companies has locked in a definition of ‘transparency’ that precludes structural critique.

Asymmetric Legibility

Corporate transparency regimes generate dangerous knowledge imbalances that systematically disadvantage public-interest actors, a rupture that deepened after 2020 as governments began outsourcing disinformation governance to private trust-and-safety teams. While platforms log granular data on removals and appeals, they restrict access to the training data, decision logic, and escalation protocols that govern the suppression of political speech, leaving researchers and regulators fluent in symptoms but blind to mechanisms. This shift from public regulation to proprietary governance created a path dependency in which state oversight mimics corporate report structures instead of enforcing public standards, making asymmetric visibility a feature rather than a flaw. The underappreciated risk is that this entrenched legibility gap provides plausible deniability for state-adjacent takedowns, especially in hybrid regimes that use platforms as de facto censors.

Normalised Crisis Delegation

Transparency reports function as crisis-management tools that legitimise emergency powers as routine governance, a transformation cemented during the 2020–2021 U.S. election cycle, when platforms preemptively suspended norms of political neutrality to curb election-related misinformation. In that period, ad hoc moderation policies were institutionalised through report templates that present exceptional interventions as standard metrics, erasing the distinction between peacetime and emergency speech control. The mechanism operates through quarterly reporting cycles that recode political instability as operational data, absorbing public scrutiny into technical discourse while insulating platforms from democratic renegotiation of their authority. The overlooked cost is that this trajectory treats recurring electoral violence not as a signal to return power to public institutions but as justification for permanent private guardianship over political discourse.

Curated Accountability

No: content-moderation transparency reports cannot be considered adequate oversight, because platforms frame them selectively to emphasize compliance and volume metrics rather than political equity. Meta's periodic Transparency Reports, for example, detail removals of extremist content but obscure the algorithmic amplification of partisan speech. This curated disclosure functions as a legitimacy technology, allowing dominant platforms to define the terms of scrutiny while evading binding external evaluation. The mechanism lies in standardizing only those metrics that are defensible or politically neutral, such as terrorism-related takedowns, while omitting data on shadow-banning, recommendation patterns, or differential enforcement across ideological groups, thereby simulating oversight without ceding interpretive control. Transparency, in this light, is weaponized not as a check on power but as a procedural alibi within asymmetric governance systems.

Oversight Theater

No: transparency reports do not constitute meaningful oversight, because they operate as ritualized performances of accountability disconnected from remedial power. YouTube, for example, consistently publishes detailed content-moderation statistics while maintaining opaque appeals processes and unresponsive human review, allowing the sustained suppression of marginalized but lawful political voices, such as those in Palestinian advocacy networks. The ritual lies in the repetition of standardized disclosures (appeals upheld, videos removed) that neither anticipate nor rectify systemic biases in enforcement, creating a façade of responsiveness without altering the imbalance in whose speech is ultimately governable. Transparency is thus reframed not as a regulatory conduit but as a ceremonial practice that sustains platform sovereignty by absorbing dissent into procedural displays.