Is Free Social Media Worth the Risk of Political Profiling?

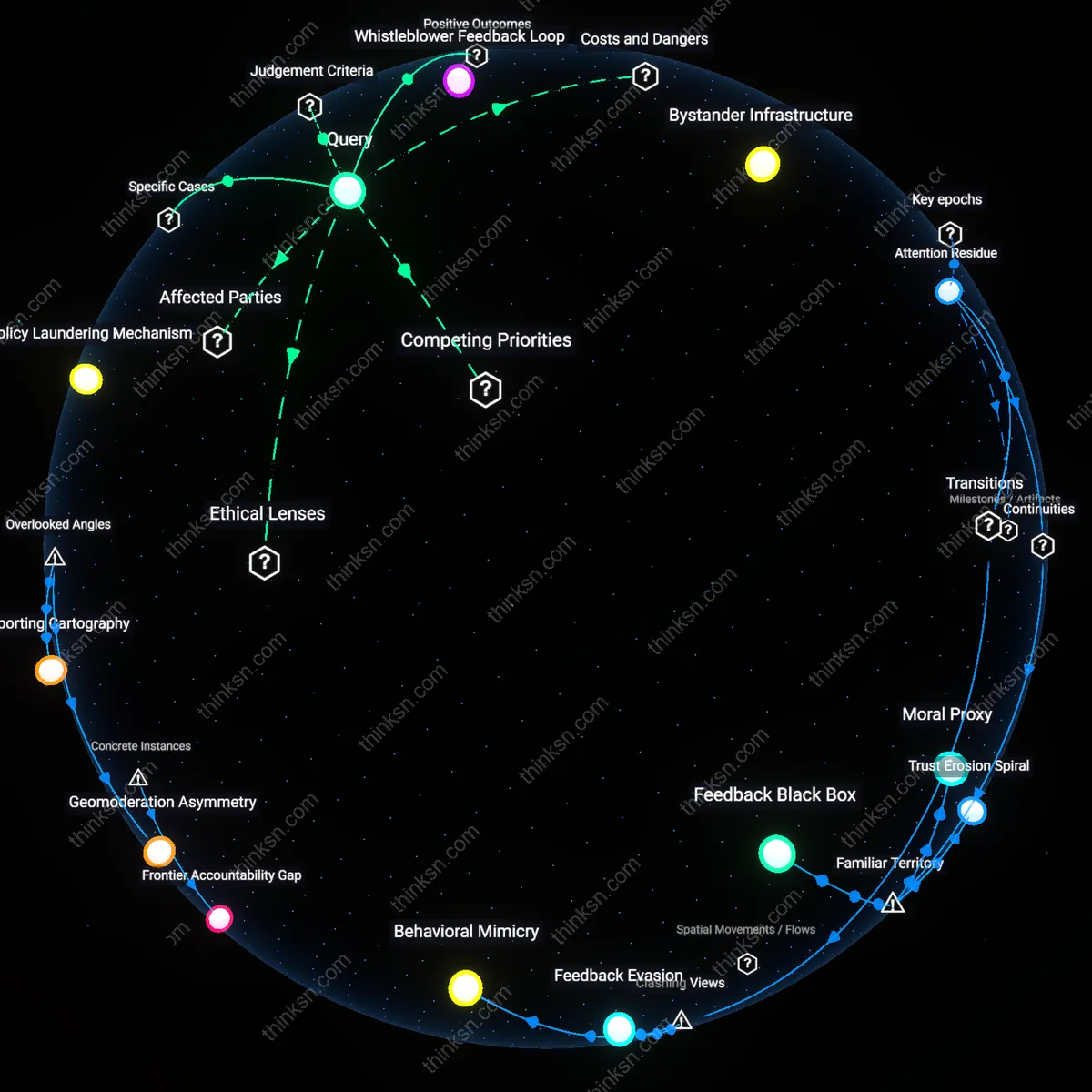

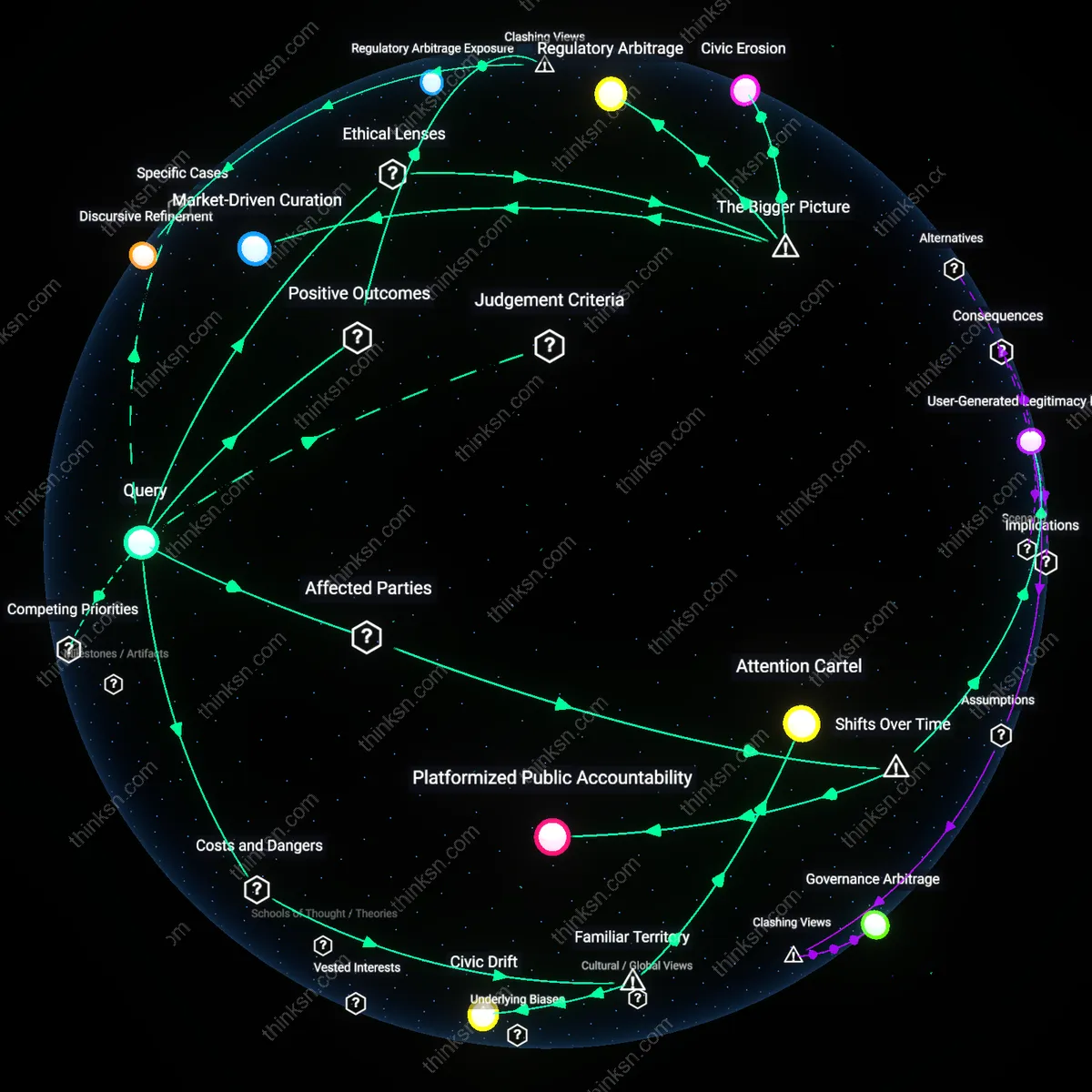

Analysis reveals 8 key thematic connections.

Key Findings

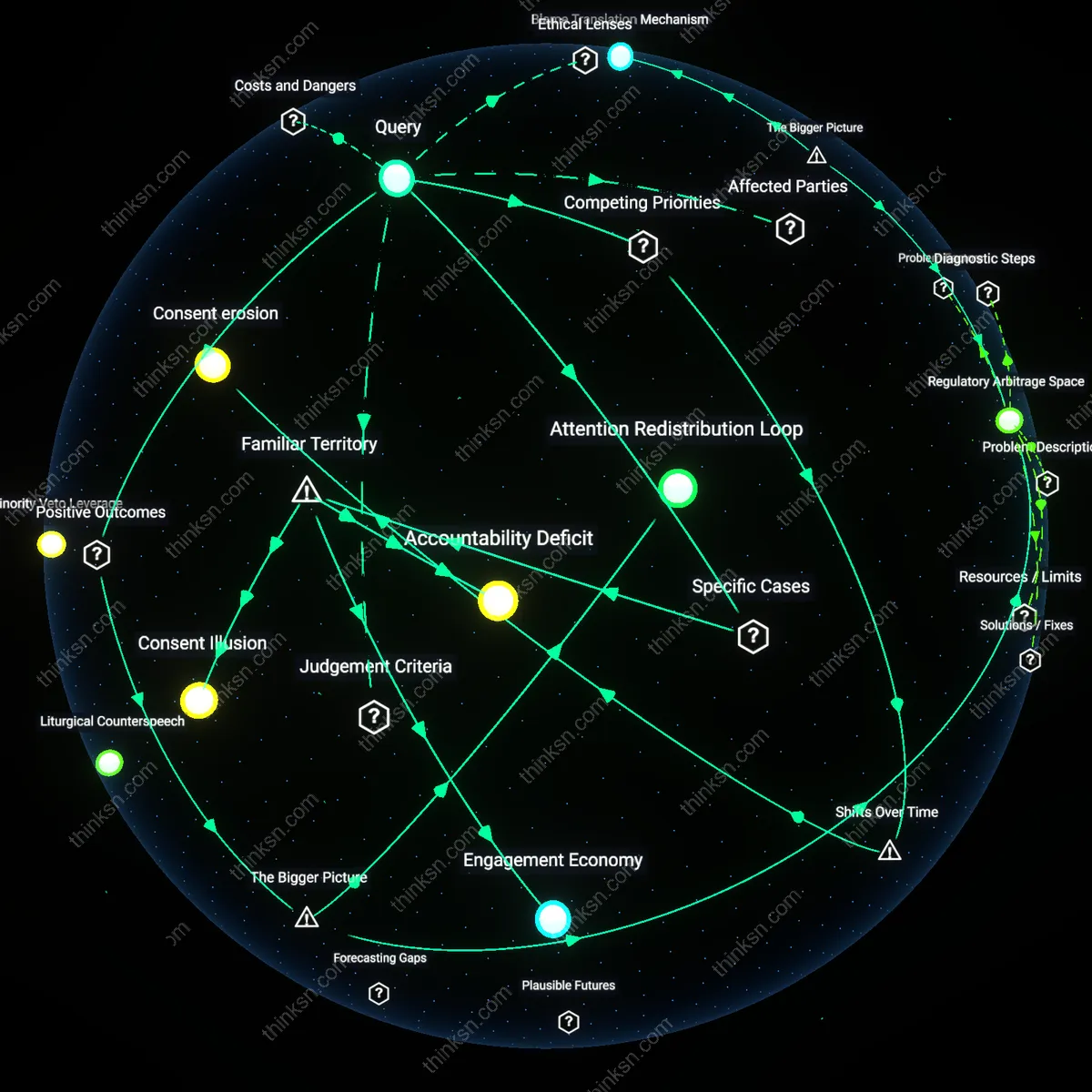

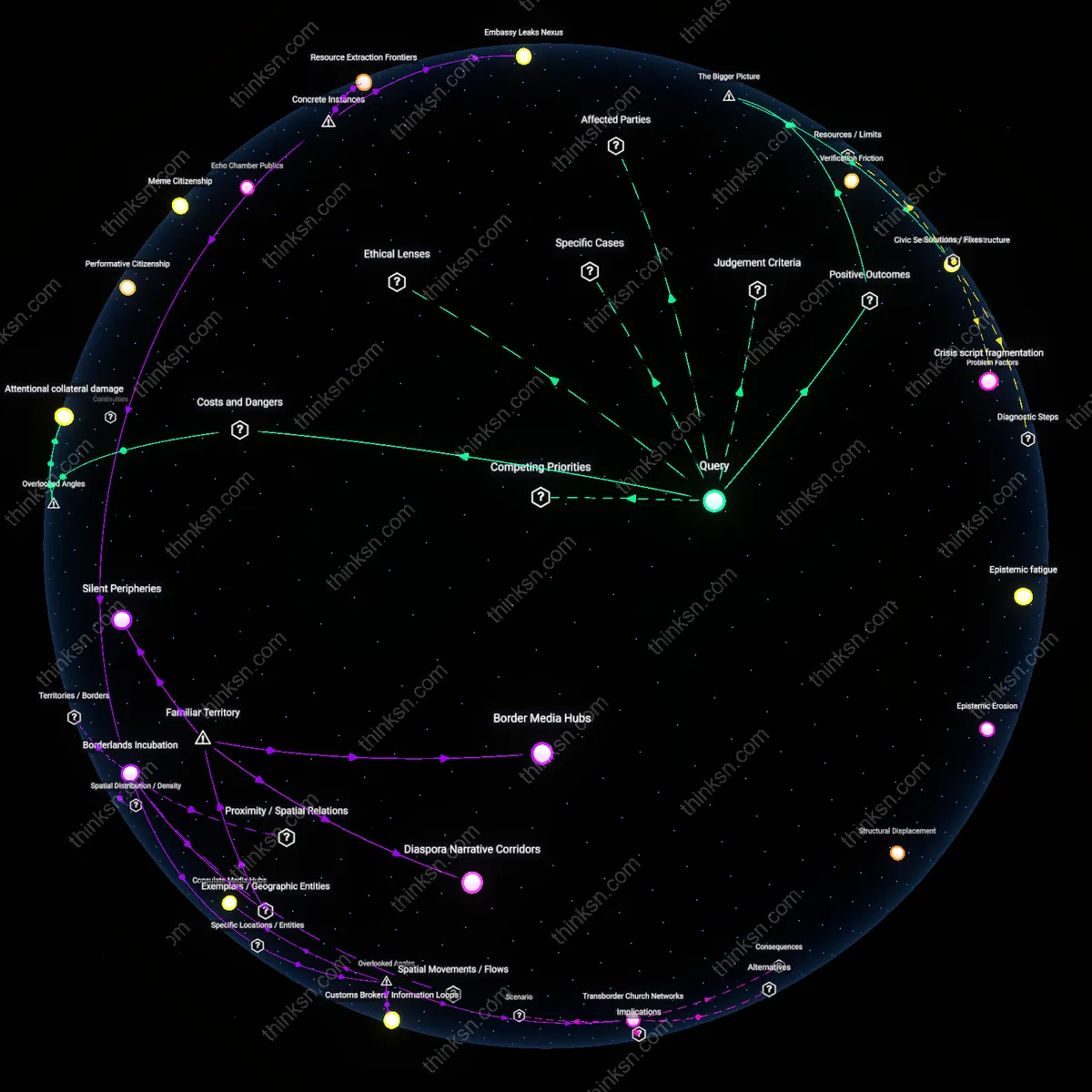

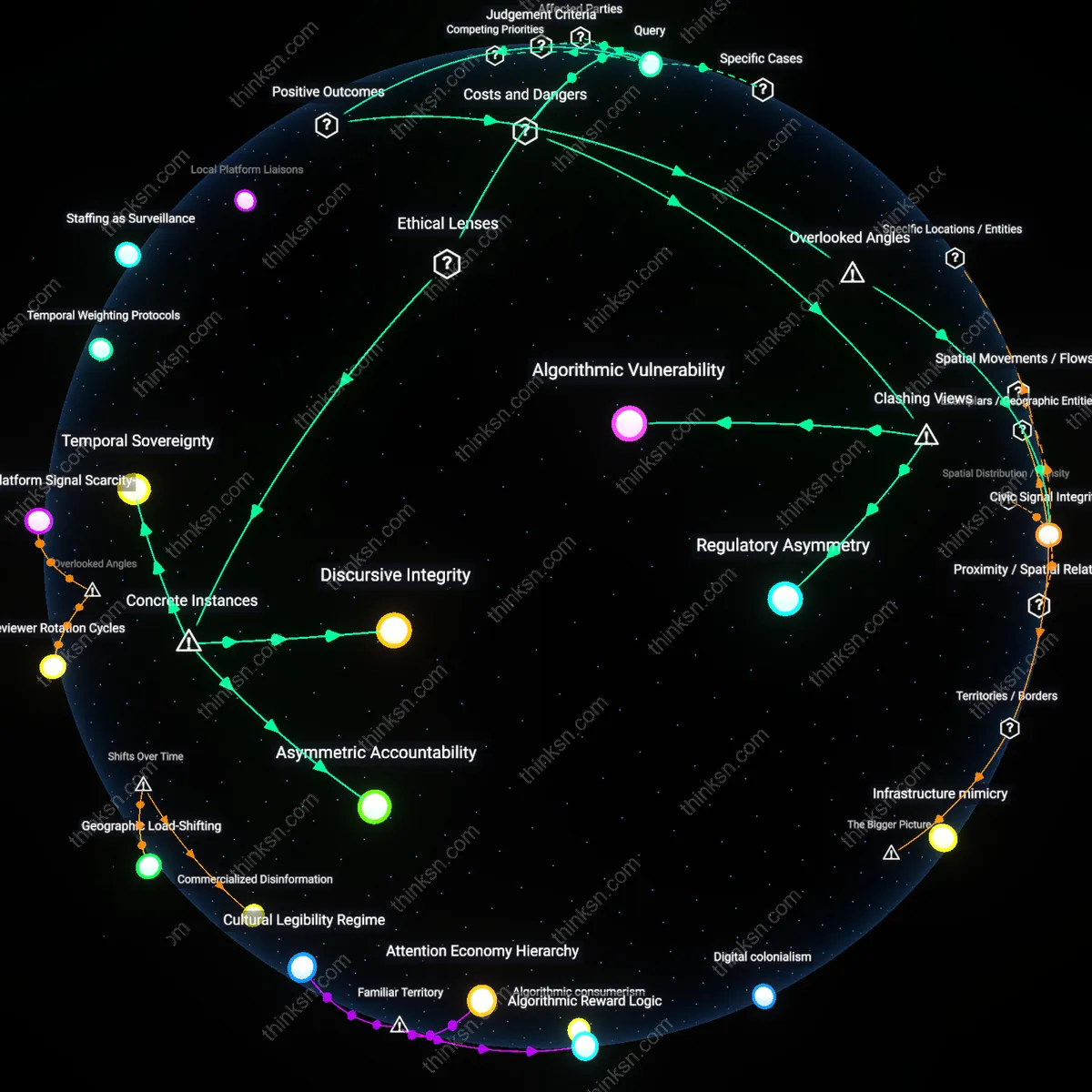

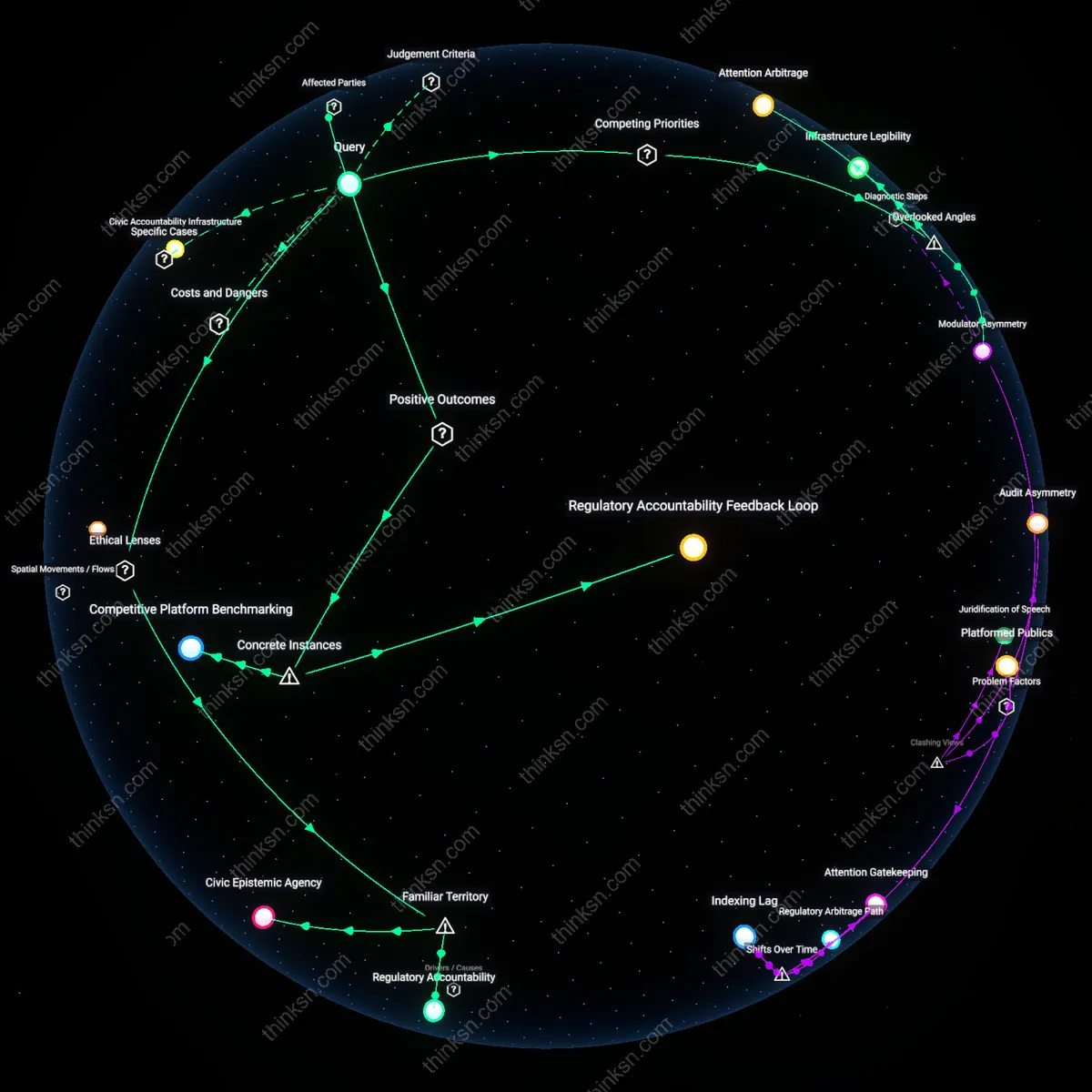

Regulatory Arbitrage Space

Professionals enhance democratic engagement by using free social media platforms to amplify marginalized political voices. Low-cost access lets grassroots organizations, such as Black Lives Matter chapters in midsize U.S. cities, bypass traditional media gatekeepers and mobilize supporters directly. The mechanism works because of a structural misalignment between platform scalability and national campaign finance regulations, which have historically privileged high-budget actors. The result is a rare equity-preserving loophole in which resource-constrained groups can achieve outsized influence through behaviorally informed targeting. This dynamic is rarely recognized because it reframes micro-targeting not as a distortion but as a corrective within uneven political systems.

Attention Redistribution Loop

Professionals generate societal value by using behavioral profiling to redirect public attention toward under-discussed policy issues. Data-driven targeting on platforms like Facebook enables public health advocates in Brazil, for example, to shift algorithmically fragmented audiences from entertainment content to vaccination literacy campaigns. The process is sustained by the feedback loop between user engagement metrics and platform-curated visibility, which repurposes surveillance infrastructure for civic education. This outcome is structurally obscured because it depends on actors exploiting the very mechanisms critics condemn. It shows that profiling systems can be hijacked for collective benefit when civil actors master their logic.

Counter-Targeting Capacity

Professionals mitigate systemic manipulation risks by developing fluency in micro-targeting tools. That fluency enables watchdog NGOs in Eastern Europe to reverse-engineer disinformation campaigns on TikTok and preemptively inoculate vulnerable demographics through counter-messaging. This defensive capability arises from the transparency asymmetry inherent in platform ecosystems, where adversarial actors innovate faster than state regulators can respond. Surveillance technology thereby becomes an early-warning civic sensor network: an underappreciated systemic pivot in which the means of control become tools of resistance through asymmetric learning dynamics.

Consent Erosion

Since the mid-2010s, the professional adoption of behavioral micro-targeting has shifted from an optional tactic to a structural necessity in political communication, as platforms like Facebook migrated from open newsfeeds to algorithmically gated content distribution. This change forced political actors to accept opaque user profiling as a condition of audience reach, transforming informed consent from a regulatory ideal into a procedural afterthought. The underappreciated shift is that consent mechanisms did not merely weaken. They became temporally irrelevant, because real-time data harvesting precedes and shapes user choices rather than following them.

Accountability Deferral

Following the Cambridge Analytica revelations of 2018, political professionals have increasingly externalized ethical responsibility for micro-targeting onto platform governance, marking a decisive institutional pivot away from self-regulation toward blame delegation. This shift reveals how the locus of ethical judgment has moved from campaign teams to third-party tech firms, creating a systemic delay in accountability that aligns with the decoupling of message design from message delivery. The overlooked dynamic is that professionals now gain plausible deniability by design, allowing them to exploit profiling tools while treating the consequences as contingent rather than inherent.

Accountability Deficit

Professionals should restrict reliance on free social media platforms when engaging in political communication because these platforms systematically obscure data usage from both users and regulators. Facebook's role in the 2016 U.S. election, where Cambridge Analytica leveraged undisclosed psychographic profiling at scale, exemplifies the problem. The mechanism, undisclosed third-party data harvesting enabled by platform design, allowed micro-targeting without meaningful consent and revealed a structural absence of oversight. While the public typically associates platform risk with privacy breaches, the deeper issue is not data theft per se but the institutional absence of accountability over who can shape political influence and how, which makes ethical compliance impossible under current conditions.

Engagement Economy

Professionals should avoid political micro-targeting on free social media platforms because their default incentive structures prioritize engagement over accuracy, as seen in YouTube's algorithmic amplification of extremist content during the 2020 U.S. election cycle. The system rewards emotionally charged content through automated recommendation, which political actors exploit to polarize audiences. Misuse is therefore not an anomaly but a predictable output of platform economics. Though public discourse fixates on specific bad actors, the non-obvious reality is that the business model itself operationalizes behavioral profiling as a tool for sustained attention capture, making responsible use technically feasible but institutionally disfavored.

Consent Illusion

Professionals should reject the premise that informed consent mitigates ethical risks in political micro-targeting, given how Instagram's interface design during the 2018 Brazil election cycle made data permissions effectively invisible to users despite legal compliance. The platform fulfilled the technical requirements for consent while structurally preventing comprehension through cognitive overload and layered opt-out mechanisms, enabling pervasive profiling under the guise of legality. Most people assume consent equates to control, but the real ethical failure lies in the manufactured gap between legal formality and behavioral reality, where user agency is preserved in theory but dissolved in practice.