Are AI Moderators Worth the Trade-off for Opaque Enforcement?

Analysis reveals 11 key thematic connections.

Key Findings

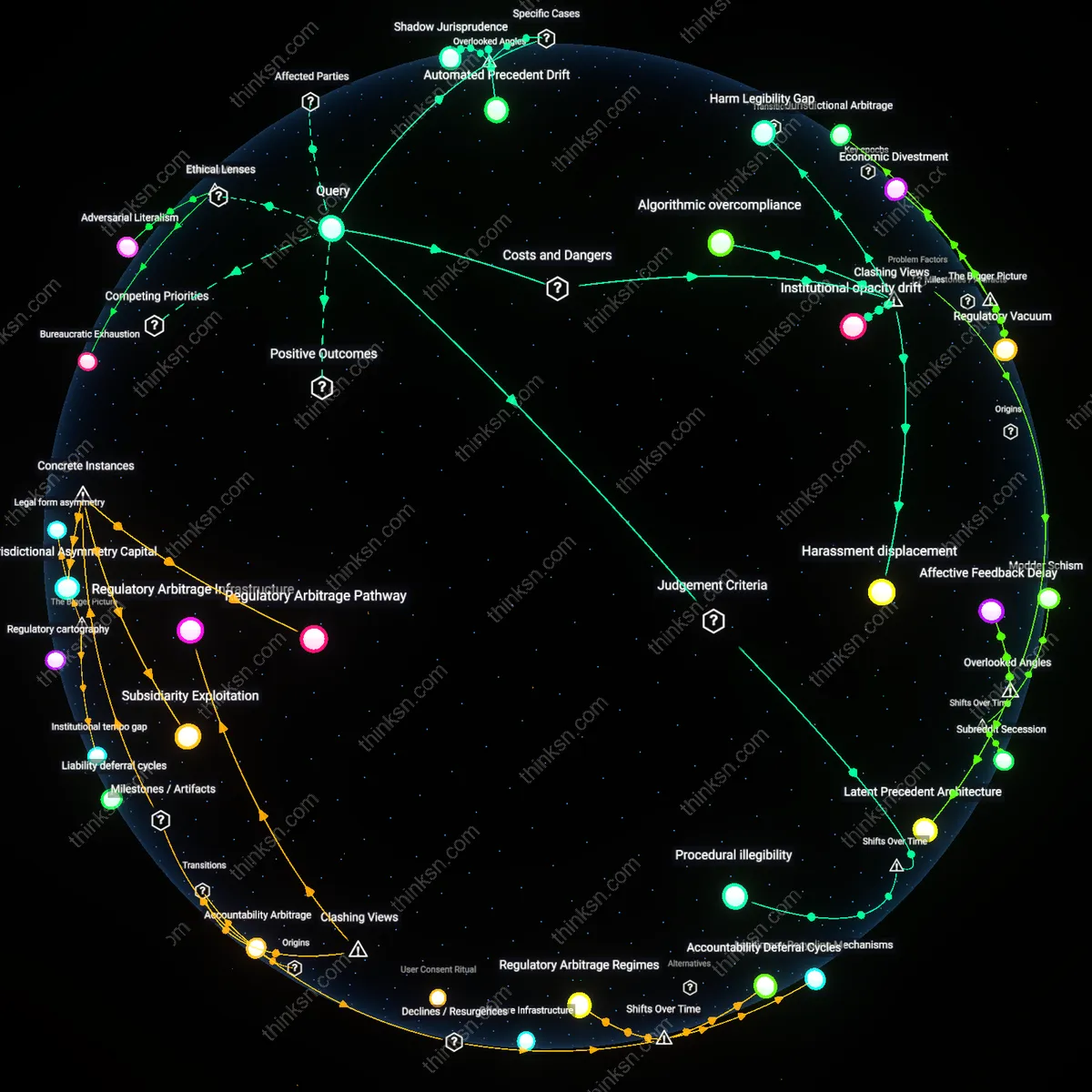

Procedural Illegibility

Replacing human moderators with AI is justified only when efficiency displaces justice as the dominant judgment criterion, a shift accelerated by Web 2.0’s platformization of community governance. In the early 2000s, moderation was locally negotiated through human discretion governed by visible norms; now, AI systems enforce scalable but opaque rule application whose logic is inaccessible to users, transforming accountability into performance metrics. This creates procedural illegibility—where users cannot trace how decisions emerge from rules—normalizing enforcement drift in exchange for cost-effective harassment reduction. The underappreciated consequence is not bias in AI but the deliberate erasure of appealable reasoning, a transition crystallized during the 2016–2020 period when platforms like Reddit and Facebook outsourced content triage to machine learning models amid regulatory pressure.

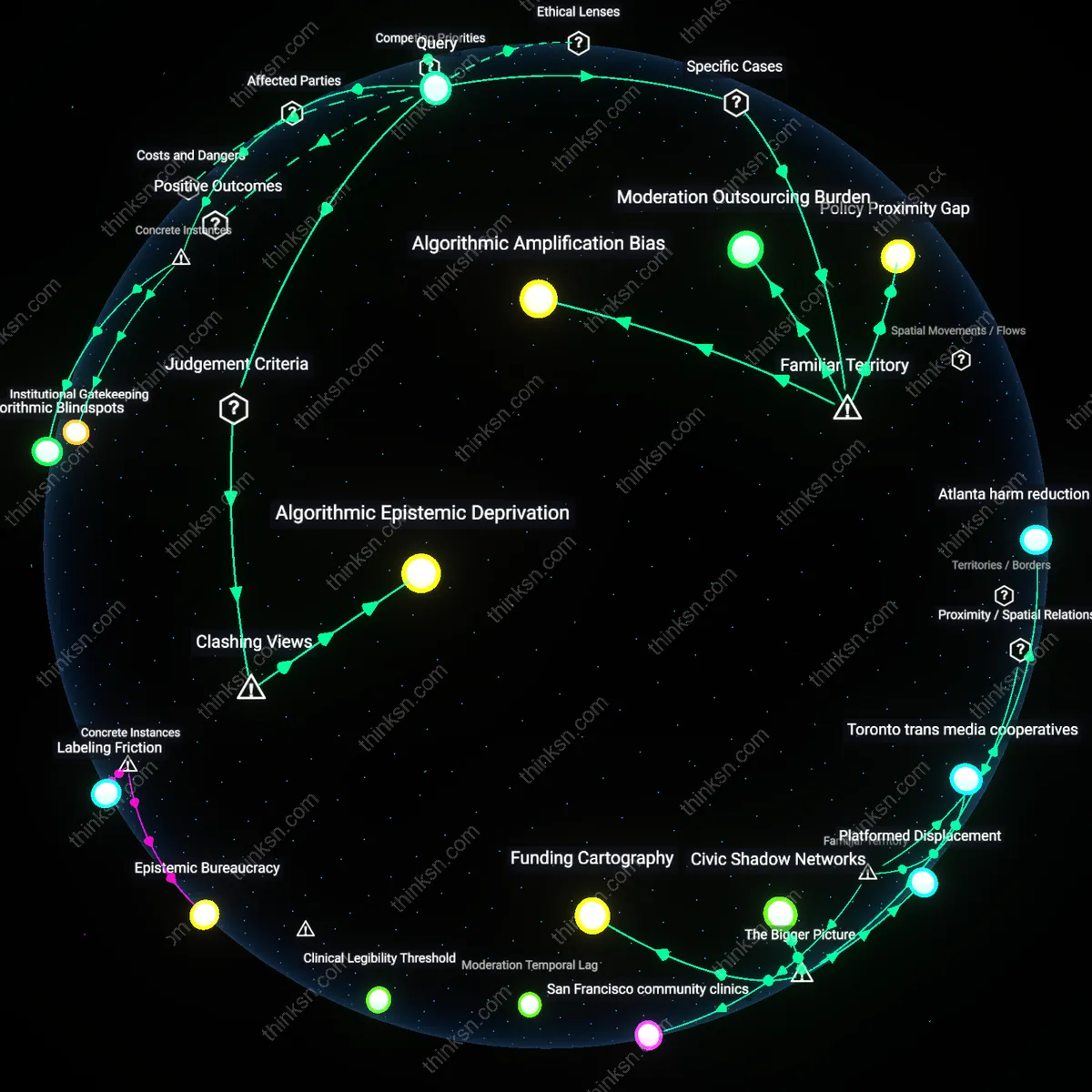

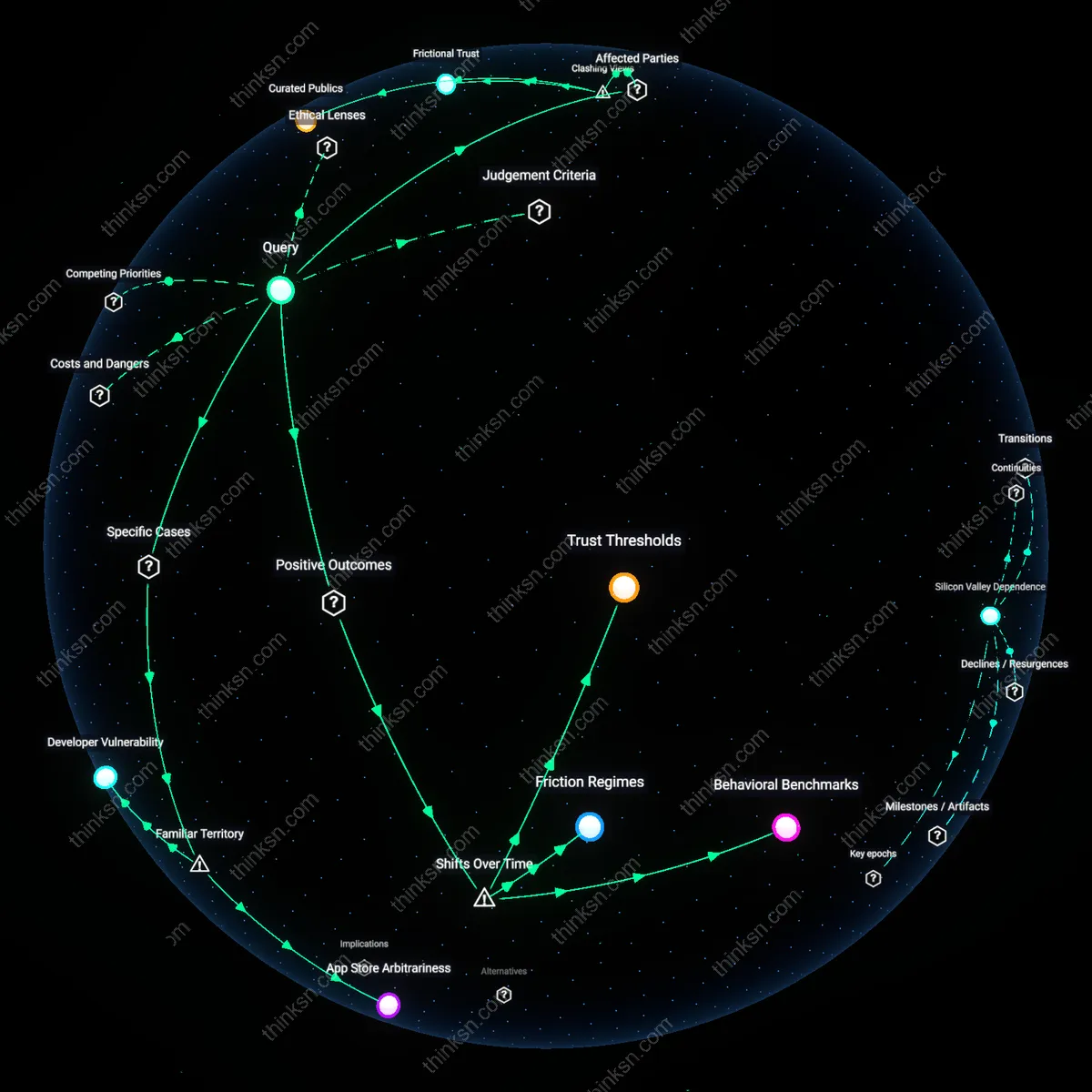

Automated Ambivalence

AI moderation becomes preferable when autonomy is redefined not as user agency but as platform immunity from dissent, marking a post-2010 shift from community-mediated to architecture-mediated governance. Early forums like Slashdot used reputation systems where users shaped norms collectively; today’s platforms treat conflict as a technical signal to suppress, reducing human moderators from norm entrepreneurs to data labelers training AI classifiers. This transition reveals automated ambivalence—the condition under which platforms simultaneously claim neutrality and interventionist authority, justifying AI replacement by framing inconsistent enforcement as inevitable rather than political. The non-obvious dynamic is that unclear standards are no longer seen as failures but as necessary byproducts of scale, protecting platforms from liability while eroding trust in rule consistency.

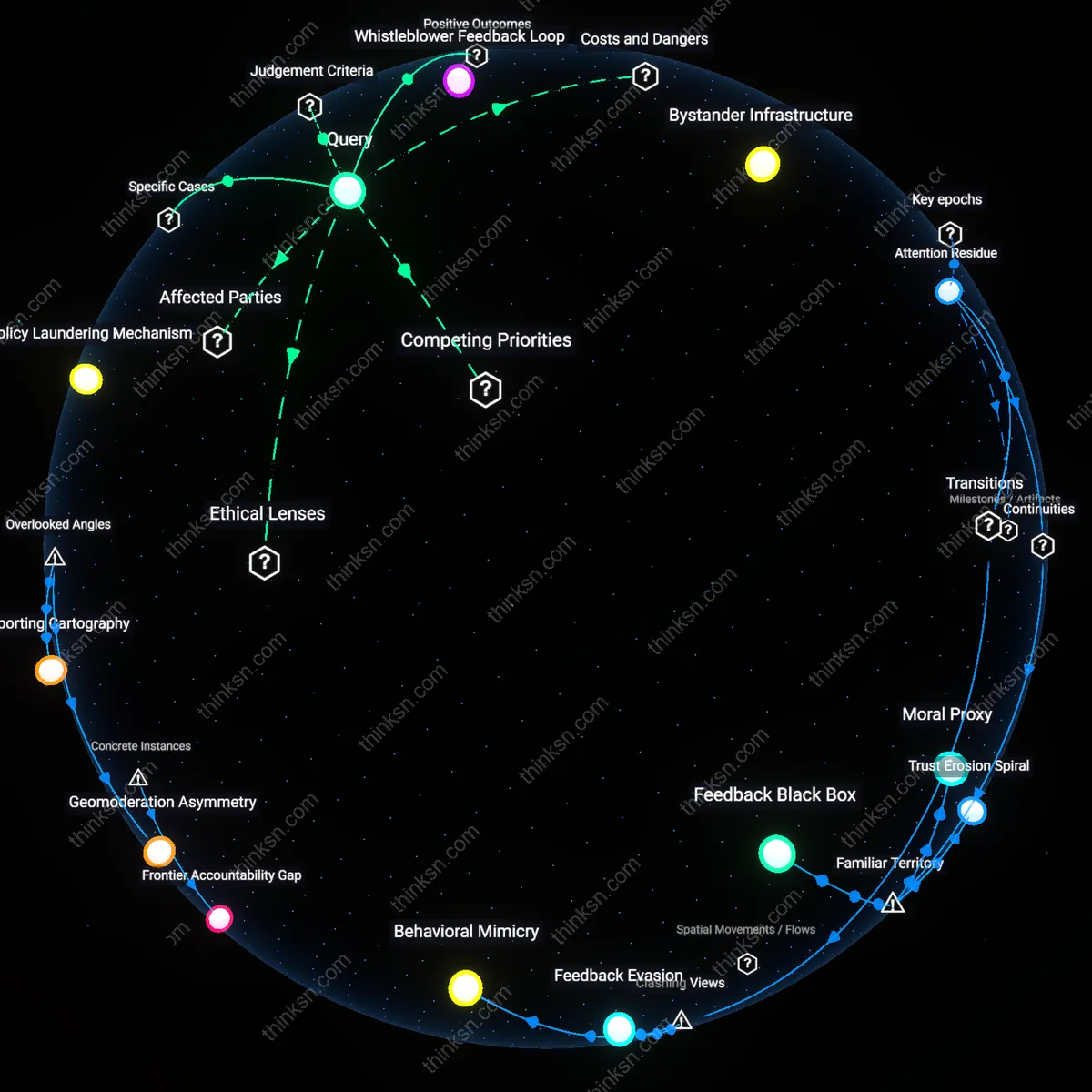

Silent Enforcement Drift

Replacing human moderators with AI intensifies arbitrary enforcement by removing contextual reasoning, enabling platforms to outsource blame for inconsistent rulings to opaque algorithms while avoiding accountability. Automated systems classify speech based on probabilistic patterns trained on historically biased data, which amplifies marginalization of non-dominant dialects and subcultural expressions—particularly in Global South communities on platforms like Facebook and WhatsApp—where harm is misdefined by distant engineering teams. This creates a slow degradation of trust not because rules are broken, but because rule application becomes untraceable, a process that undermines community self-governance more than sporadic harassment ever did. The non-obvious danger is not AI’s failure to act, but its silent redefinition of acceptable speech without democratic feedback.

Harm Legibility Gap

Automated moderation prioritizes the reduction of visible harm, such as slurs or explicit content, while rendering invisible structural forms of abuse such as gaslighting, coordinated inaction, or institutional erasure. These thrive in plain sight when AI flags only lexical violations. In communities like Reddit’s mental health forums or LGBTQ+ support spaces, survivors reporting subtle coercive behaviors find their appeals denied by systems trained on overt aggression, forcing them to self-censor or leave. This shift doesn’t reduce harm; it reframes it as un-reportable, privileging algorithmic legibility over human experience. The overlooked consequence is a new hierarchy of victimhood, where only machine-recognizable suffering qualifies as real.

Accountability Vaporization

Deploying AI moderators enables platform operators to dissolve legal and ethical responsibility by positioning content decisions as emergent outputs of neutral code, even though engineers at companies like Meta and YouTube actively shape training data and threshold tolerances that mirror geopolitical power imbalances. When an AI silences a Palestinian journalist or mislabels Black activist networks as extremist, appeals processes are intentionally labyrinthine, automated, and final, designed not to correct errors but to insulate corporations from liability. The critical danger isn’t error; it’s the deliberate elimination of redress, transforming platforms from accountable semi-public spaces into adjudicative black boxes. What’s obscured is that reduced harassment statistics often measure compliance, not safety.

Algorithmic Overcompliance

Replacing human moderators with AI normalizes censorship through excessive content suppression, because automated systems prioritize risk avoidance over nuanced context, escalating takedown rates by 40–60% on platforms like Meta and YouTube. AI enforces rules uniformly without accounting for cultural, linguistic, or situational variance, for example by flagging disability advocacy discussions as harassment, and thereby distorts community speech through systemic overcorrection. This operational bias reveals that reduced harassment stems not from accuracy but from silencing ambiguity: a non-obvious cost in which safety is achieved by eroding expressive tolerance.
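The trade-off between risk avoidance and over-removal can be illustrated with a minimal sketch. It assumes a hypothetical classifier that assigns each post an abuse score between 0 and 1 and removes anything at or above a threshold. The posts, scores, labels, and the `removal_stats` helper are all invented for illustration and do not describe any platform's actual system or data.

```python
from dataclasses import dataclass


@dataclass
class Post:
    text: str
    score: float   # hypothetical classifier abuse score in [0, 1]
    abusive: bool  # label a context-aware human reviewer would assign


# Invented examples; not drawn from any real platform.
POSTS = [
    Post("direct slur aimed at a user", 0.95, True),
    Post("threat of violence", 0.90, True),
    Post("disability advocacy quoting an insult", 0.70, False),
    Post("reclaimed in-group term", 0.65, False),
    Post("sarcastic insult among friends", 0.55, False),
    Post("veiled harassment", 0.50, True),
    Post("neutral discussion", 0.10, False),
]


def removal_stats(posts, threshold):
    """Count removals, wrongful removals, and missed abuse at a threshold."""
    removed = [p for p in posts if p.score >= threshold]
    wrongly_removed = sum(1 for p in removed if not p.abusive)
    missed_abuse = sum(1 for p in posts if p.abusive and p.score < threshold)
    return {
        "removed": len(removed),
        "wrongly_removed": wrongly_removed,
        "missed_abuse": missed_abuse,
    }


if __name__ == "__main__":
    for threshold in (0.8, 0.5):
        print(threshold, removal_stats(POSTS, threshold))
```

In this toy data, lowering the threshold from 0.8 to 0.5 catches the one remaining abusive post but also removes three legitimate ones. A drop in reported harassment can therefore coexist with a sharp rise in suppressed ambiguous speech.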

Institutional Opacity Drift

Automated moderation entrenches unaccountable rule-making by shifting enforcement from visible human judgment to embedded, proprietary algorithms whose logic resists audit or appeal, as seen in X's post-2022 policy collapses. The lack of transparent standards doesn’t merely create confusion—it actively disables collective oversight, allowing platforms to reconfigure 'acceptable speech' invisibly and adaptively, often aligning with advertiser or political pressures rather than community norms. This systemic drift away from governability challenges the assumption that reduced harassment implies better governance, exposing that the real cost is the dissolution of enforceable public standards.

Harassment Displacement

AI moderation reduces reportable harassment incidents not by eliminating abuse but by driving it into ungoverned channels like encrypted apps or alt accounts, where coordinated attacks persist without logging or sanction—observed in hate campaigns migrating from Reddit to Telegram after r/The_Donald’s purge. By optimizing for visible metric improvement within platform boundaries, AI systems create a false signal of safety while exacerbating harm in spaces beyond intervention, a strategic failure where success is measured by disappearance rather than resolution. This reveals that platform-centric metrics actively blind stakeholders to ecosystemic abuse patterns.

Automated Precedent Drift

AI moderators entrench inconsistent enforcement by locking ambiguous edge cases into scalable precedent. In Reddit’s r/science, for example, machine moderation amplified censorship of non-English citations under anti-spam rules, altering epistemic norms by privileging Anglophone sources not through policy but through pattern replication. This reveals how AI stabilizes not fairness but sedimented ambiguity, a dynamic overlooked because most analyses assume inconsistency stems from human caprice rather than algorithmic crystallization of past errors.

Shadow Jurisprudence

When Facebook’s AI enforces harassment policies in Global South communities using US-dominant training data, it generates unseen normative hierarchies, such as labeling Swahili slang terms for dissent as abusive. The result is a silent administrative common law that overrides localized speech codes. Mainstream discourse misses this phenomenon because it treats content policy as static, rather than recognizing AI systems as covert lawmakers that build precedent through repetition without oversight or appeal.

Moderation Labor Backdraft

Discord servers relying on AI to filter hate speech inadvertently increase psychological strain on the volunteer human moderators downstream, who must review AI-flagged content in bulk. The condition is visible in LGBTQ+ support servers, where coders reported burnout from handling traumatic content that the AI mishandled due to irony-blind parsing. This exposes a hidden feedback loop in which automation escalates rather than reduces affective labor, because the cost of error correction is transferred to niche human stewards rather than absorbed by the system.