Do Opaque Algorithms in Social Media Hide or Shift Regulatory Accountability?

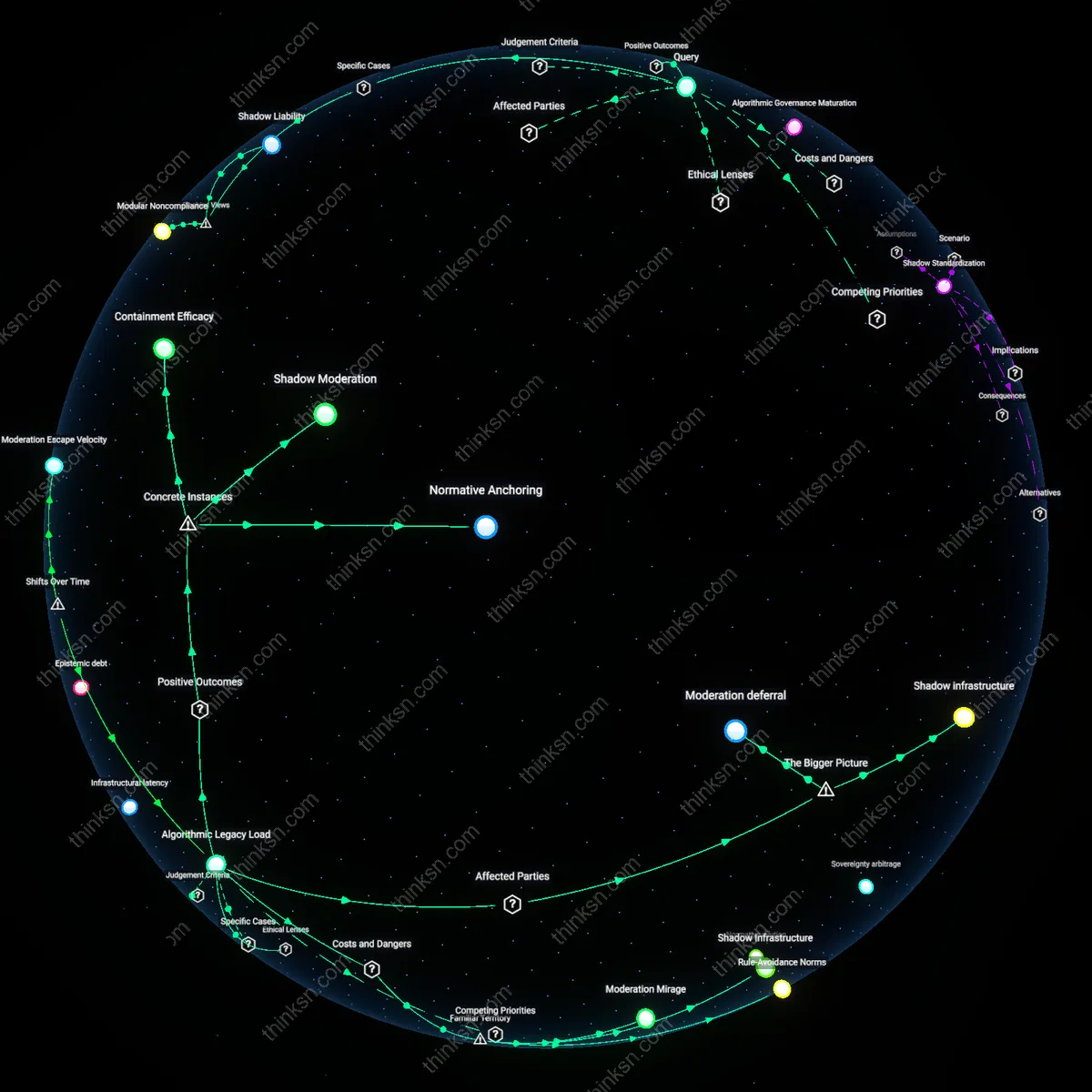

The analysis reveals six key thematic connections.

Key Findings

Algorithmic Governance Maturation

The shift from reactive content moderation to proactive self-regulation via machine learning systems after 2016 enabled platforms like Facebook and YouTube to scale trust and safety operations, reducing reliance on user-flagging and governmental pressure. This transition embedded institutional responsibility within platform infrastructure, replacing fragmented human review with consistent, rule-based automated enforcement calibrated to evolving norms. What was once ad hoc and geographically variable became a centralized, time-sensitive operational logic that preempts harm through patterned intervention. The non-obvious outcome is that regulatory consistency improved not through external mandate but through internal engineering imperatives driven by crisis response and scale demands.

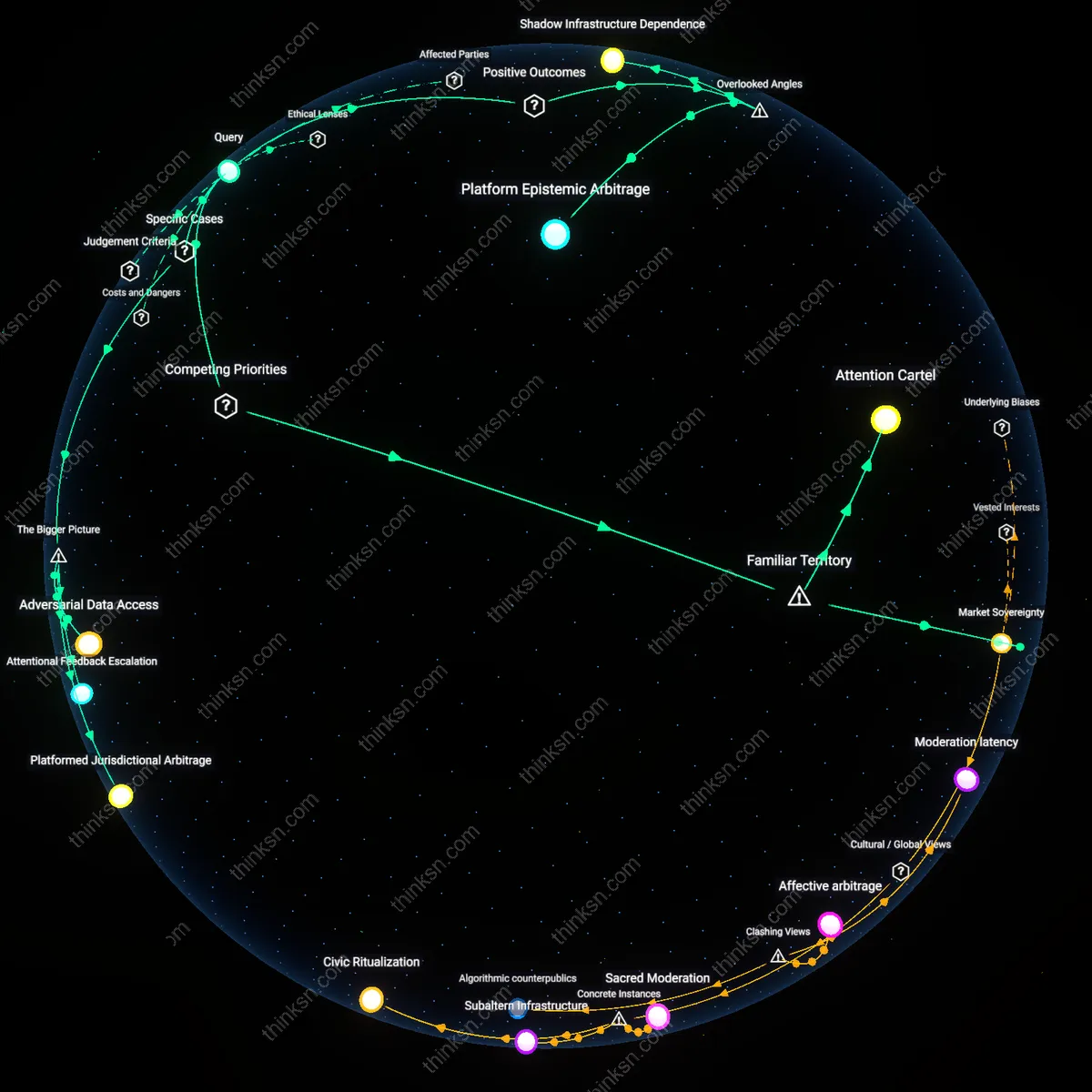

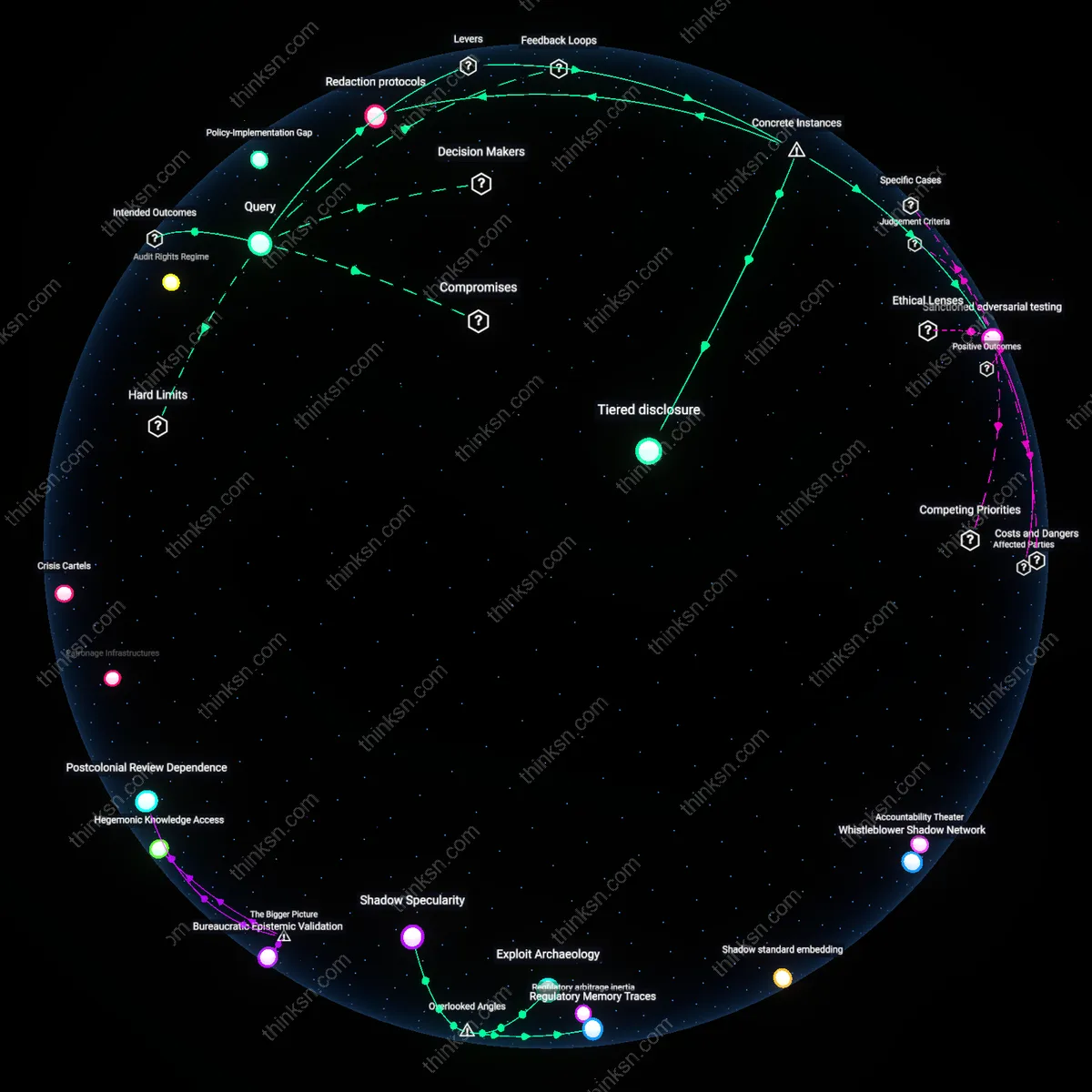

Corporate Accountability Substitution

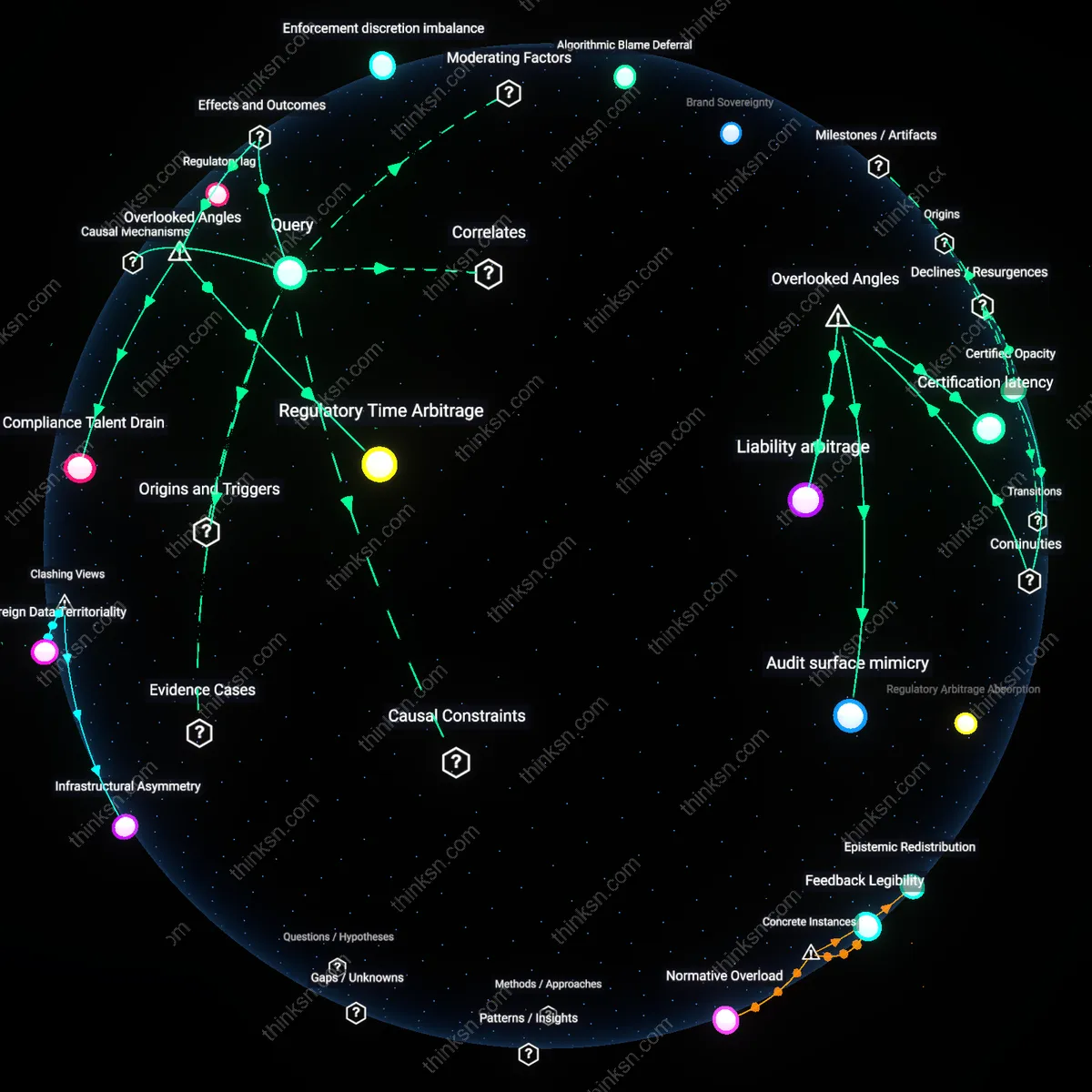

Following the 2018 Cambridge Analytica revelations, major platforms began formalizing algorithmic transparency reports and internal review boards, marking a shift from dependence on public regulators to private standard-setting. In this era, accountability mechanisms that were once the domain of legislative hearings and FCC-style oversight were replaced by corporate-designed audit trails accessible only to privileged third parties. The key mechanism is the enclosure of oversight within proprietary frameworks, where accountability is performed rather than enacted, satisfying stakeholder expectations without ceding control. The underappreciated dynamic is that this shift did not evade regulation but redefined it as a deferred, internalized process that mimics public governance while insulating decision-making.

Shadow Standardization

Between 2020 and 2023, coordinated disinformation campaigns during elections worldwide prompted platforms to harmonize their opaque algorithms across jurisdictions, effectively creating a de facto global speech regime without legislative consensus. This convergence was driven not by public law but by shared risk models and inter-platform data-sharing pacts under the guise of 'election integrity alliances.' The mechanism operates through the quiet alignment of recommendation systems to suppress similar content patterns, producing uniform outcomes despite diverse legal environments. The non-obvious result is that regulatory intent, originally rooted in national sovereignty, has been temporally displaced into a forward-leaning, anticipatory compliance model that prioritizes systemic stability over democratic pluralism.

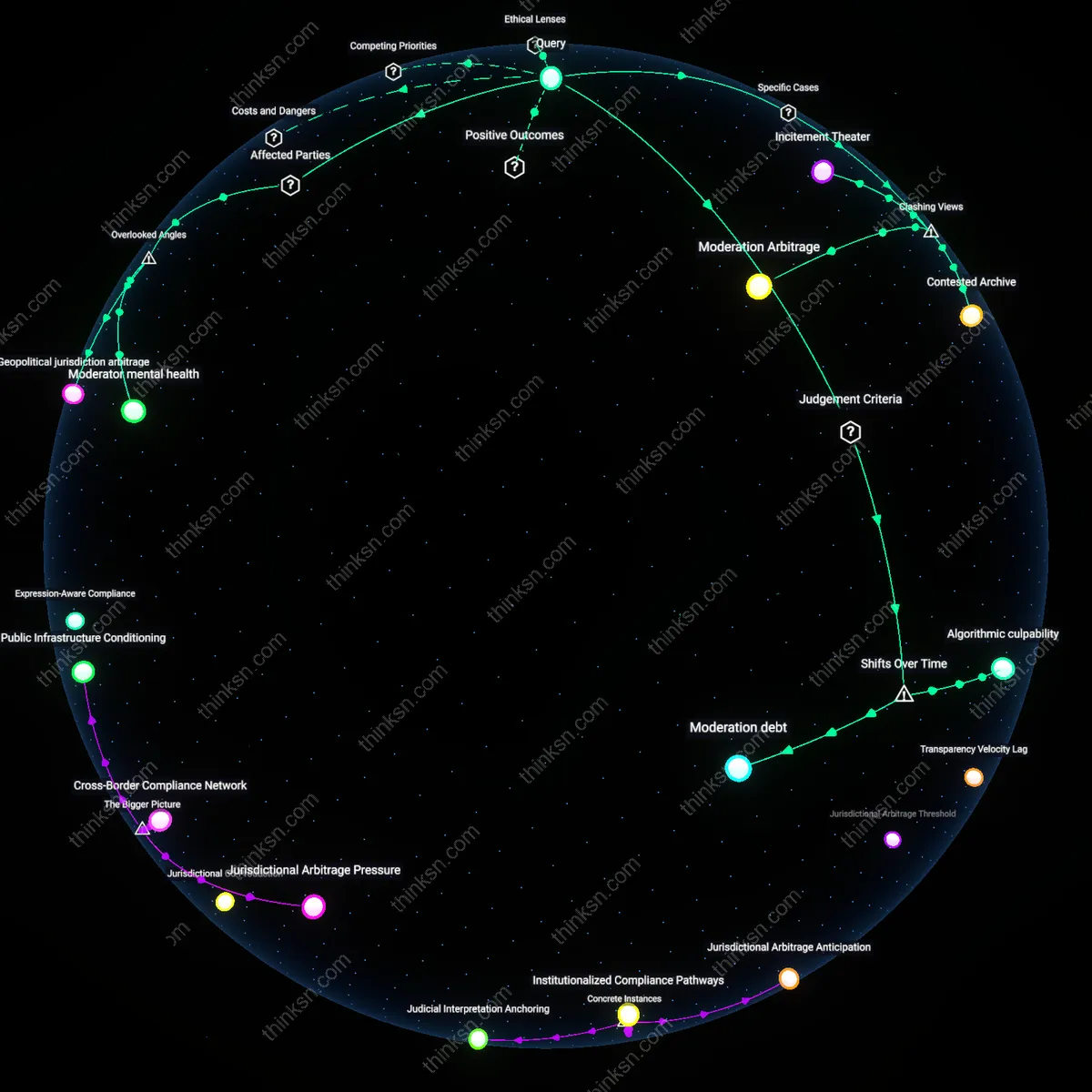

Algorithmic Theater

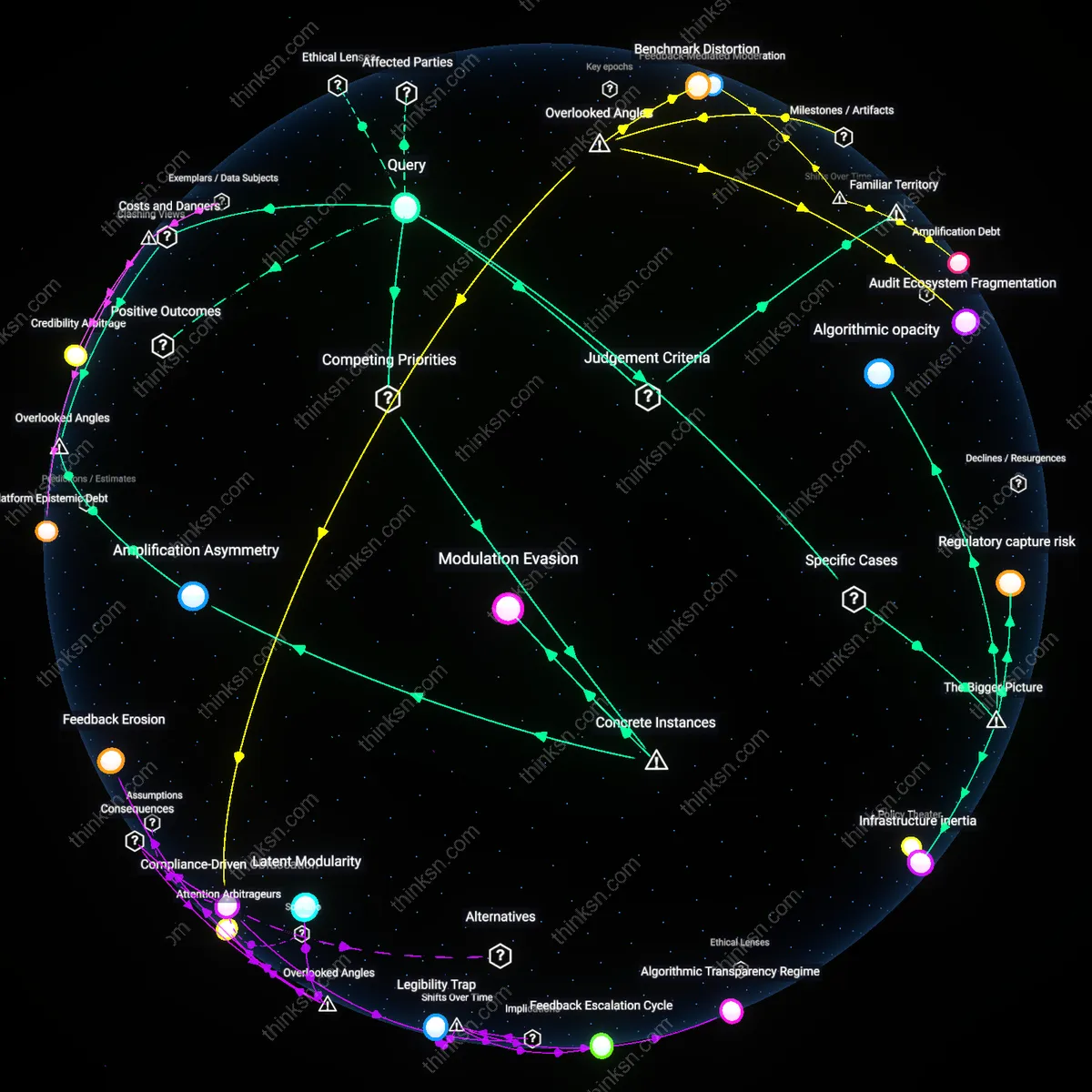

During the 2020 U.S. election, Facebook's self-regulation via opaque algorithms fulfilled regulatory intentions only ritually. The platform staged visible content moderation crackdowns while shielding its decision-making criteria inside proprietary systems. This performance of accountability, evident in the inconsistent enforcement around political fact-checking and the delayed removal of viral misinformation, allowed the company (since renamed Meta) to appear responsive to public pressure without ceding control to external oversight. The episode reveals that the primary function of algorithmic governance is not regulation but legitimation through selective visibility.

Shadow Liability

YouTube’s recommendation algorithm remains protected under Section 230 of the Communications Decency Act despite internal documentation showing that it amplifies harmful content. The protection holds precisely because the system's opacity prevents attribution of intent, allowing Alphabet to outsource accountability to users and creators while retaining design sovereignty. This deliberate obscurity, exposed in the 2018 Senate hearings and reinforced by restricted researcher access, demonstrates that corporate self-regulation does not fail accountability so much as reengineer it into an undetectable form, where harm can be acknowledged without culpability being assignable.

Modular Noncompliance

Twitter’s implementation of algorithmic amplification filters under its ‘Healthy Conversations’ initiative in 2022 did not align with regulatory goals of transparency or equity. Instead, it functioned as a modular defense: discrete, testable changes could be showcased to regulators while the core engagement-driven ranking mechanisms remained concealed and unaltered. This tactical compartmentalization, visible in the selective release of metrics on reply quality while viral content pathways were withheld, exposes how self-regulation enables platforms to satisfy procedural expectations without structural adherence, treating compliance as a feature to be toggled rather than a framework to be internalized.