Friend Suggestions: Privacy Risk or Social Gain?

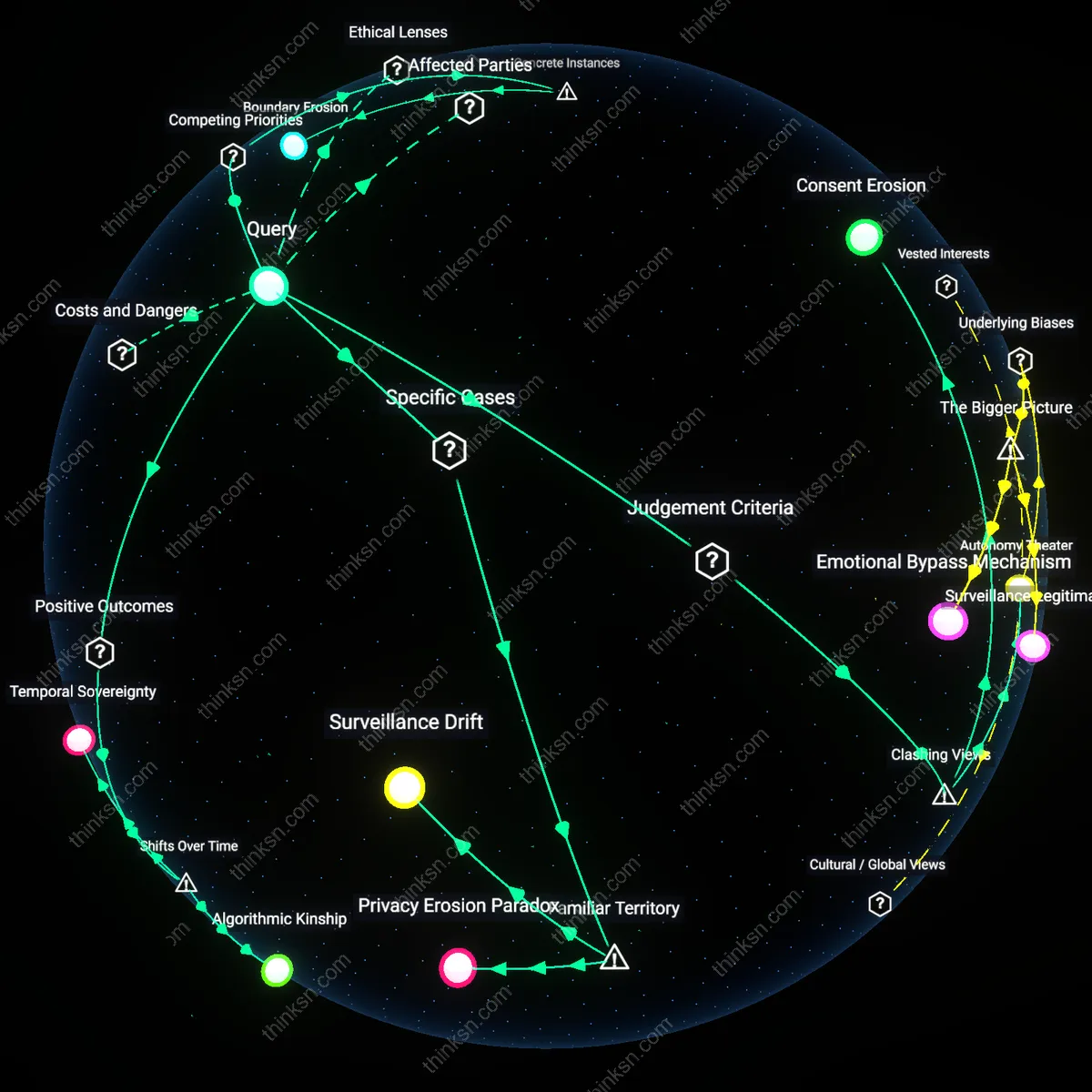

The analysis identifies four key thematic connections.

Key Findings

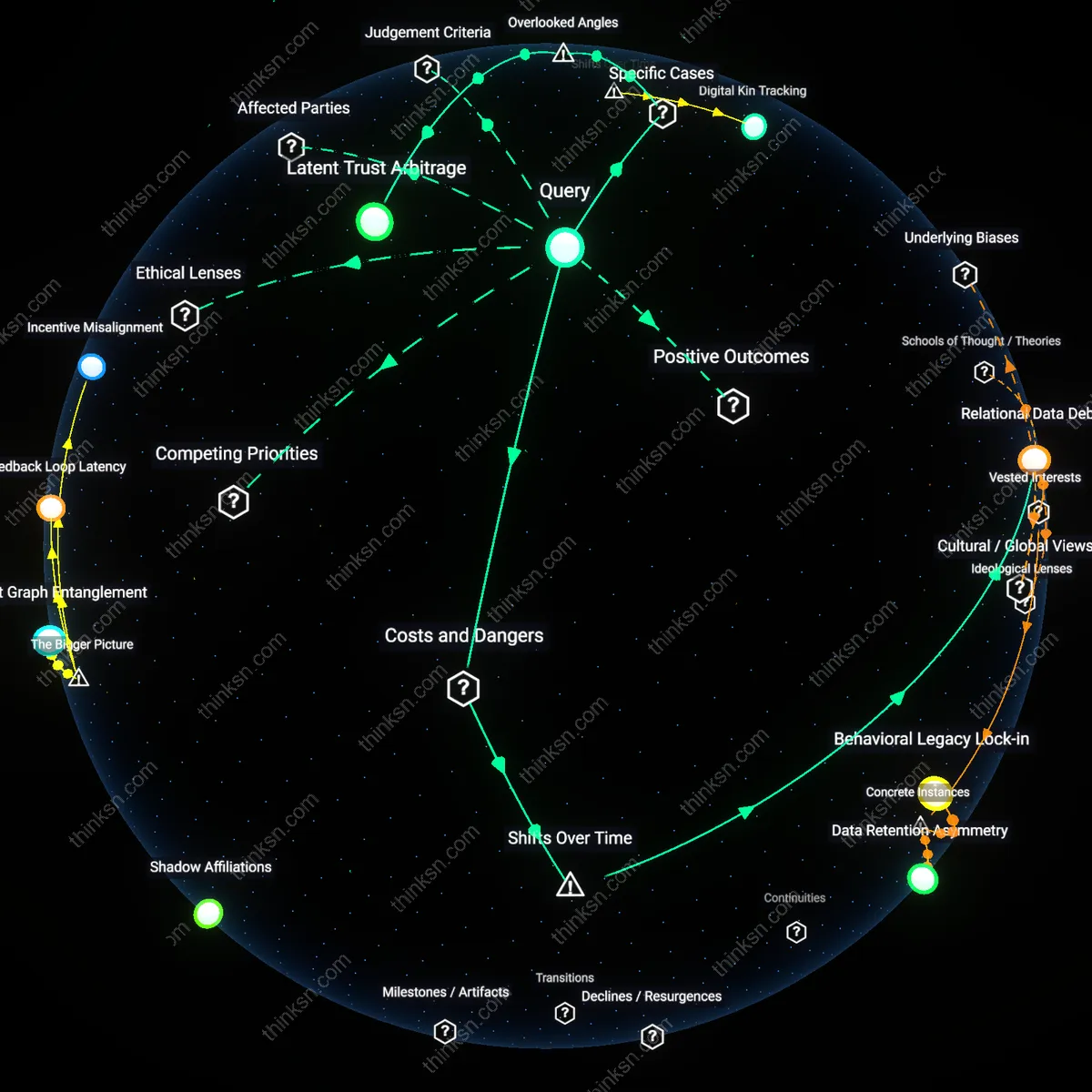

Relational Data Debt

Since the early 2010s, the normalization of network-based friend suggestions has entrenched a systemic liability: users unknowingly accrue long-term exposure through ephemeral social gestures, as personal communication turns into infrastructural data seeding. Early social media relied on explicit friend links, but the rise of passive behavioral tracking (time spent, mouse hovers, message read receipts) turned every interaction into relational metadata, feeding models that map hidden networks across accounts and platforms. This data is rarely purged and becomes embedded in cross-platform profiles, so a casual five-second interaction in 2016 can still generate accurate intimacy inferences in 2030. The critical transition occurred when platforms stopped viewing connections as user-defined states and began treating behavior as a permanently analyzable record, creating a growing backlog of relational exposures that users cannot audit or erase. The consequence is not a series of isolated breaches but a compounding, intergenerational data burden, or 'relational debt', that accrues silently and outlives both the users and the original context.

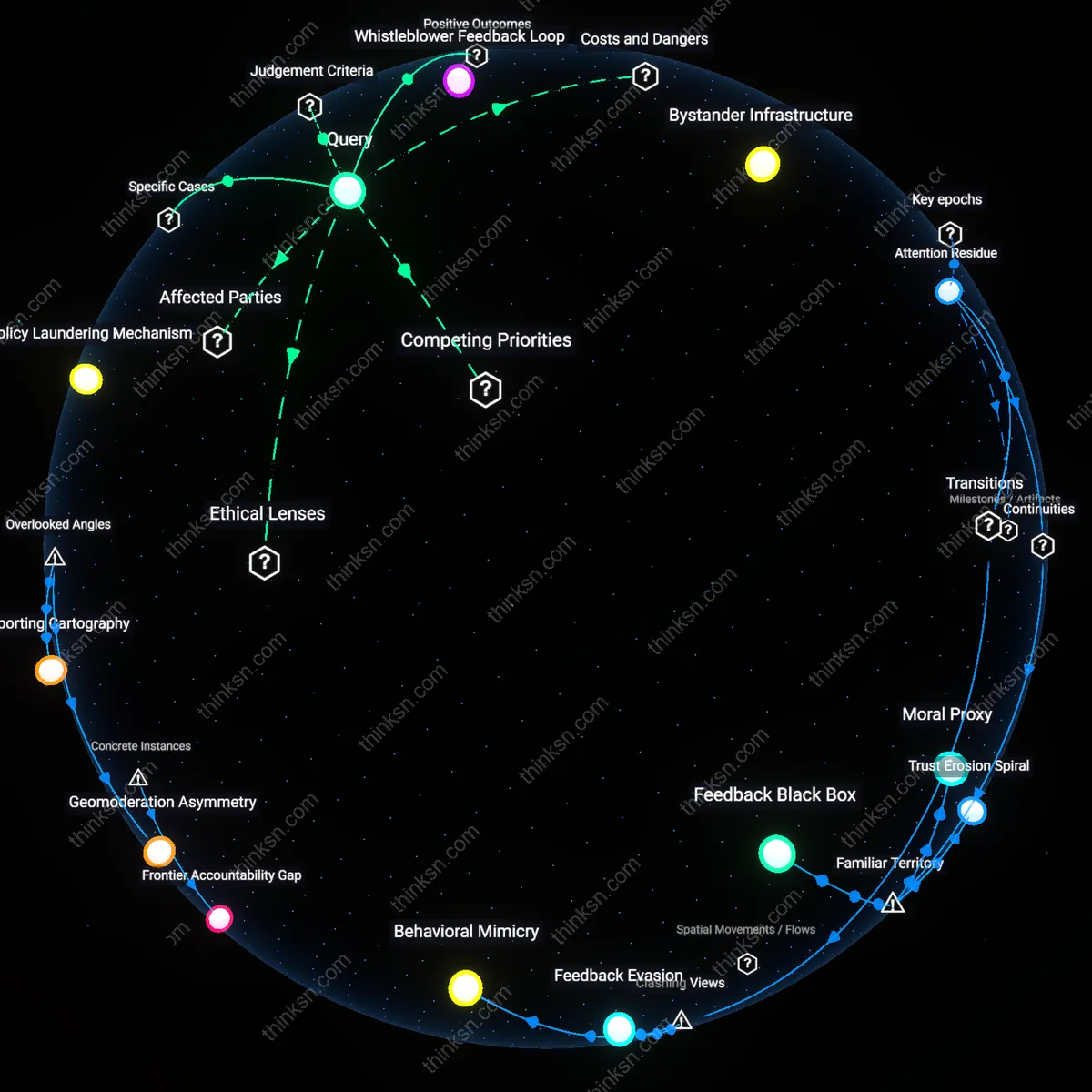

Affinity Redundancy Loops

Since the late 2010s, friend suggestion systems have amplified the risk of exposure by institutionalizing feedback loops that reify and escalate inferred intimacy signals across platforms. Repeated re-suggestion turns probabilistic guesses into operational truths. When Instagram, owned by Meta, repurposes Facebook's inferred kinship scores to suggest 'Close Friends' or potential family members, each suggestion, whether accepted or ignored, generates behavioral feedback that reinforces the algorithm's confidence in the relationship's existence and depth. This marks a shift from static network analysis to dynamic relational entrenchment, where the act of suggesting a bond strengthens its statistical reality within the system, regardless of its actual social validity. What is rarely acknowledged is that these loops pre-emptively expose intimate patterns not by leaking data but by forcing them into repeated algorithmic visibility. The suggestion itself becomes a mode of operational exposure, each prompt a potential betrayal disguised as convenience.
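The loop described above can be illustrated with a toy simulation. This is a minimal sketch under stated assumptions, not any platform's actual algorithm: the function name `simulate_resuggestion`, the threshold, and the boost values are all hypothetical, chosen only to show how a score can ratchet upward when every surfaced suggestion counts as evidence, even if the user ignores it.

```python
def simulate_resuggestion(initial_score, rounds, threshold=0.5,
                          ignore_boost=0.05, accept_boost=0.30,
                          accepted_rounds=frozenset()):
    """Toy model of a re-suggestion feedback loop (all parameters hypothetical).

    A pair is surfaced whenever its inferred-affinity score is at or above
    `threshold`. Every surfaced suggestion raises the score: slightly when
    ignored, more when accepted. Returns the score after each round,
    starting with the initial value.
    """
    score = initial_score
    history = [score]
    for round_index in range(rounds):
        if score >= threshold:
            # The suggestion is shown; the system logs the exposure as signal.
            boost = accept_boost if round_index in accepted_rounds else ignore_boost
            # Move the score toward 1.0 by a fraction of the remaining gap.
            score = min(1.0, score + boost * (1.0 - score))
        # Below the threshold nothing is shown, so nothing changes.
        history.append(score)
    return history


# A pair that starts just above the threshold and is never accepted.
ignored_only = simulate_resuggestion(0.6, rounds=10)

# A pair that starts below the threshold is never surfaced.
never_shown = simulate_resuggestion(0.4, rounds=10)
```

In this sketch `ignored_only` rises every round although the user never accepts, while `never_shown` stays flat. The asymmetry is the point: under these assumed rules, being suggested is itself what makes a relationship look more certain to the system.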

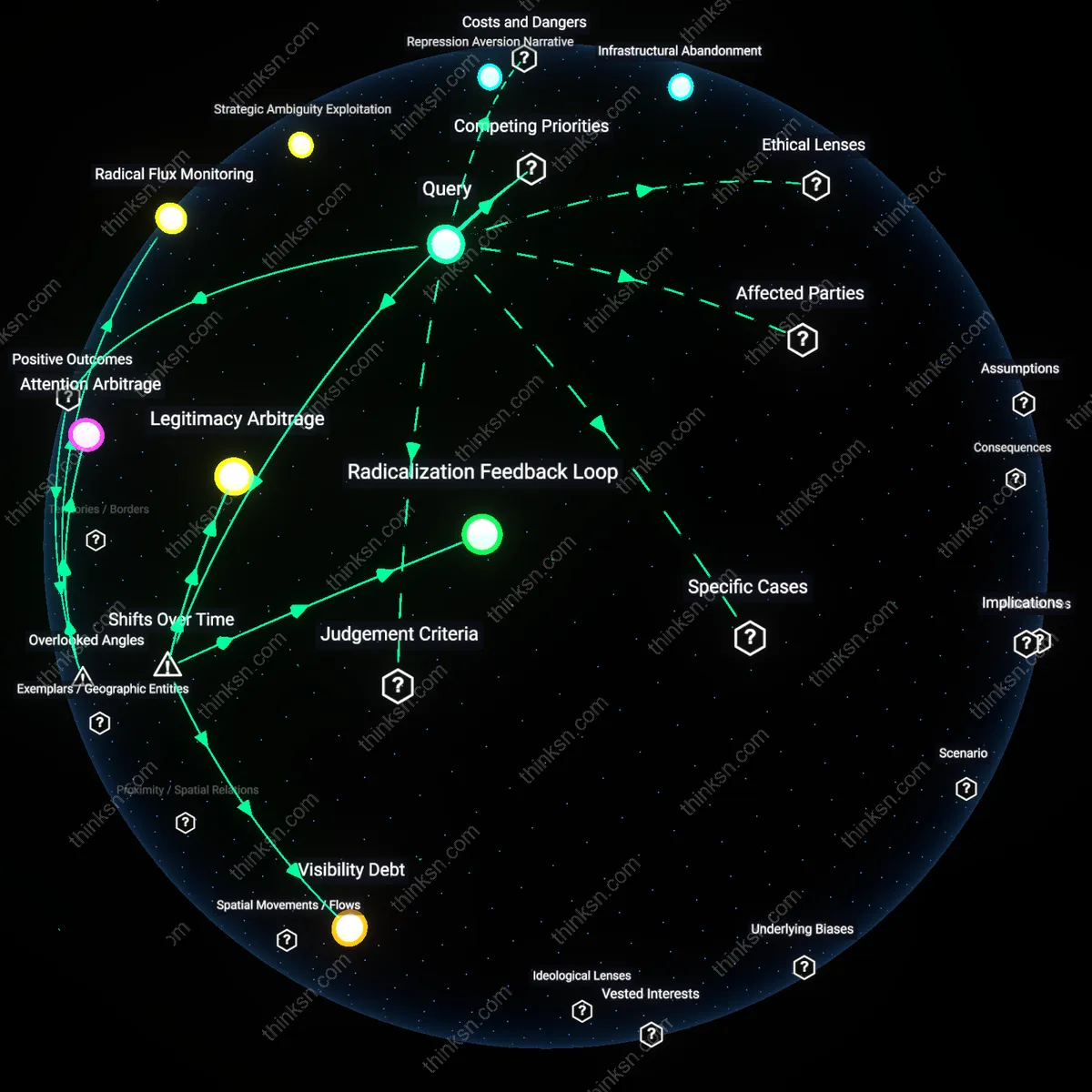

Algorithmic Kinship Mapping

Friend suggestion systems on Facebook in Lebanon have exposed covert marital networks among Shia communities, where religious endogamy and familial alliances are socially expected but politically sensitive. The risk here lies not in exposure to strangers but in algorithmic inference by state or sectarian actors who repurpose kinship patterns for surveillance. This mechanism is overlooked because most privacy debates focus on individual data leaks rather than on the structural reconstruction of collective social topologies from aggregated relational signals.

Latent Trust Arbitrage

In Brazil’s favelas, WhatsApp contact recommendations based on network proximity have been exploited by local militias, who infiltrate community groups by leveraging algorithmically suggested 'friends of friends' and turn relational density into a vector for coercive governance. This illustrates how trust gradients in high-surveillance environments become exploitable infrastructure. Mainstream discourse misses this dimension because it frames algorithmic suggestions as socially neutral rather than as embedded in asymmetric power economies.
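The 'friends of friends' mechanism can be sketched as a mutual-contact count over a contact graph. This is a generic illustration, not a description of how WhatsApp or any other service works: the function `suggest_friends_of_friends`, the graph layout, and the names in it are hypothetical. It shows why a dense community is easy to enter, since a newcomer who connects to only two members already ranks as a suggestion for the others.

```python
from collections import Counter


def suggest_friends_of_friends(graph, user, top_n=3):
    """Rank non-contacts of `user` by number of mutual contacts.

    `graph` maps each person to the set of their direct contacts
    (a hypothetical, undirected contact graph).
    """
    direct = graph.get(user, set())
    mutual_counts = Counter()
    for friend in direct:
        for candidate in graph.get(friend, set()):
            # Skip the user and anyone they are already connected to.
            if candidate != user and candidate not in direct:
                mutual_counts[candidate] += 1
    # Most mutual contacts first; ties broken alphabetically for stable output.
    ranked = sorted(mutual_counts.items(), key=lambda item: (-item[1], item[0]))
    return ranked[:top_n]


# Hypothetical tight-knit group plus one outsider who added two members.
community = {
    "ana": {"bia", "caio"},
    "bia": {"ana", "caio", "newcomer"},
    "caio": {"ana", "bia", "newcomer"},
    "newcomer": {"bia", "caio"},
}

suggestions_for_ana = suggest_friends_of_friends(community, "ana")
# suggestions_for_ana == [("newcomer", 2)]
```

Under this assumed ranking rule, the outsider surfaces to "ana" with two mutual contacts, which is the same signal a genuine neighbor would produce. The score measures structural proximity only and carries no information about intent.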