Lenient Data Privacy: Tech Firms Prioritize Convenience Over Future Rights?

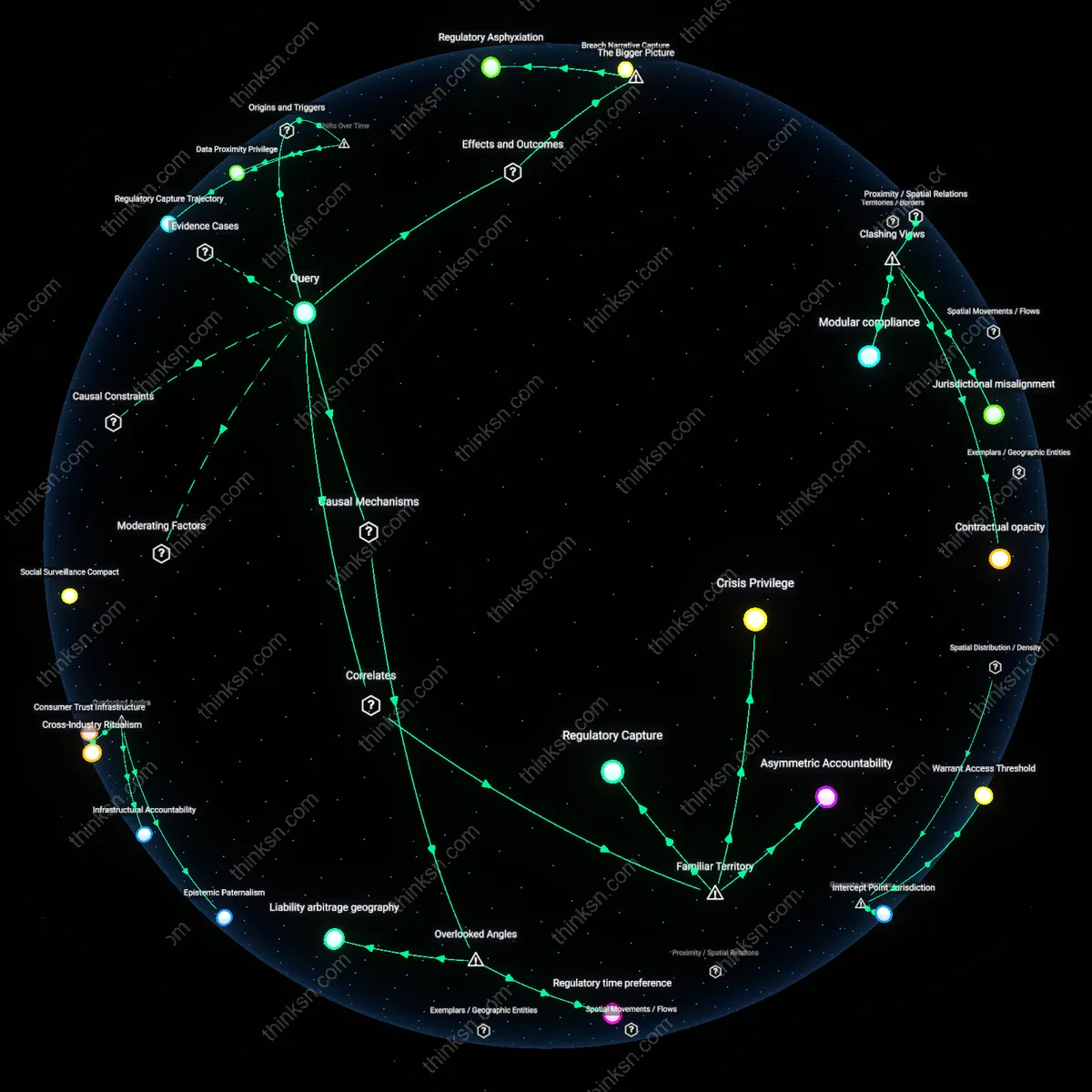

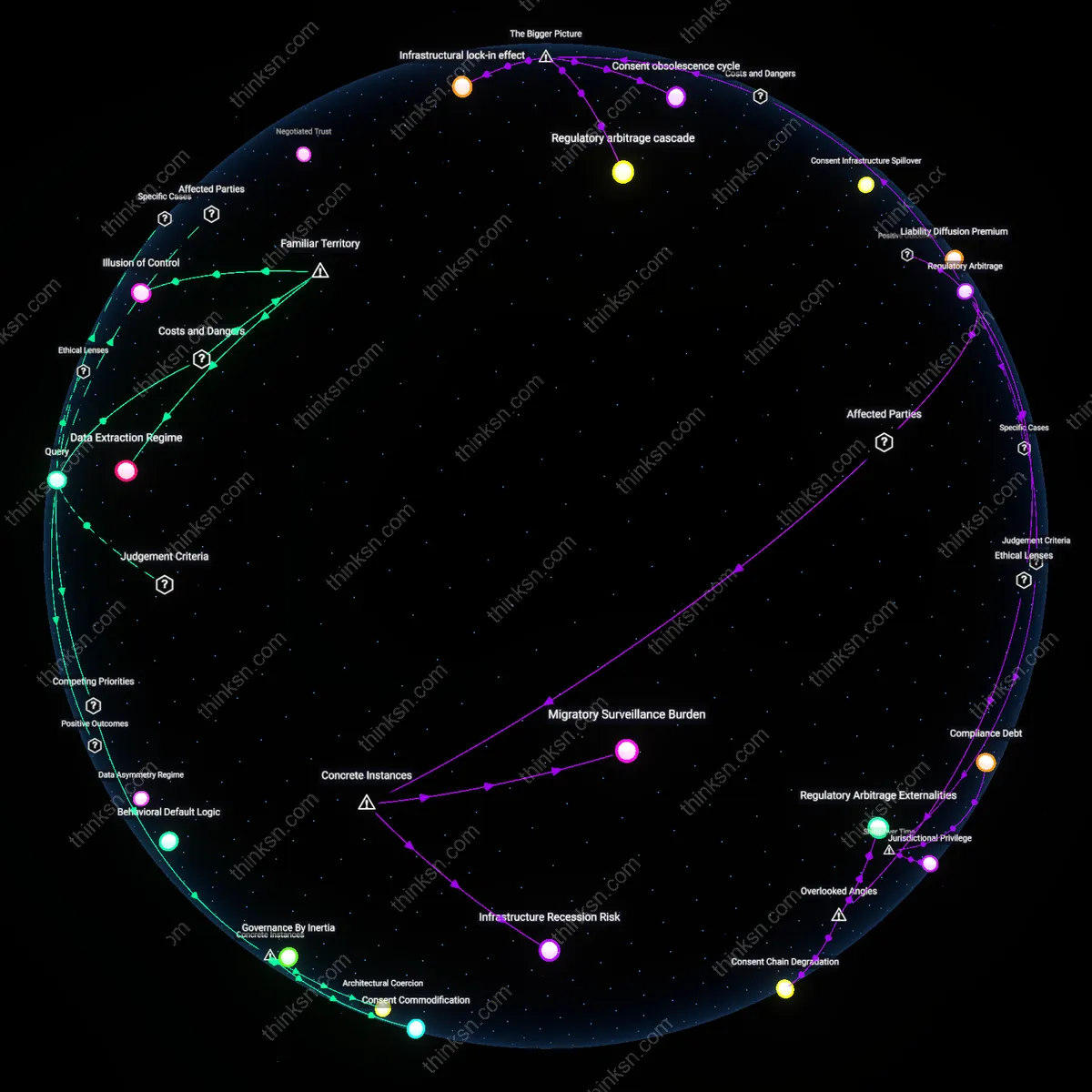

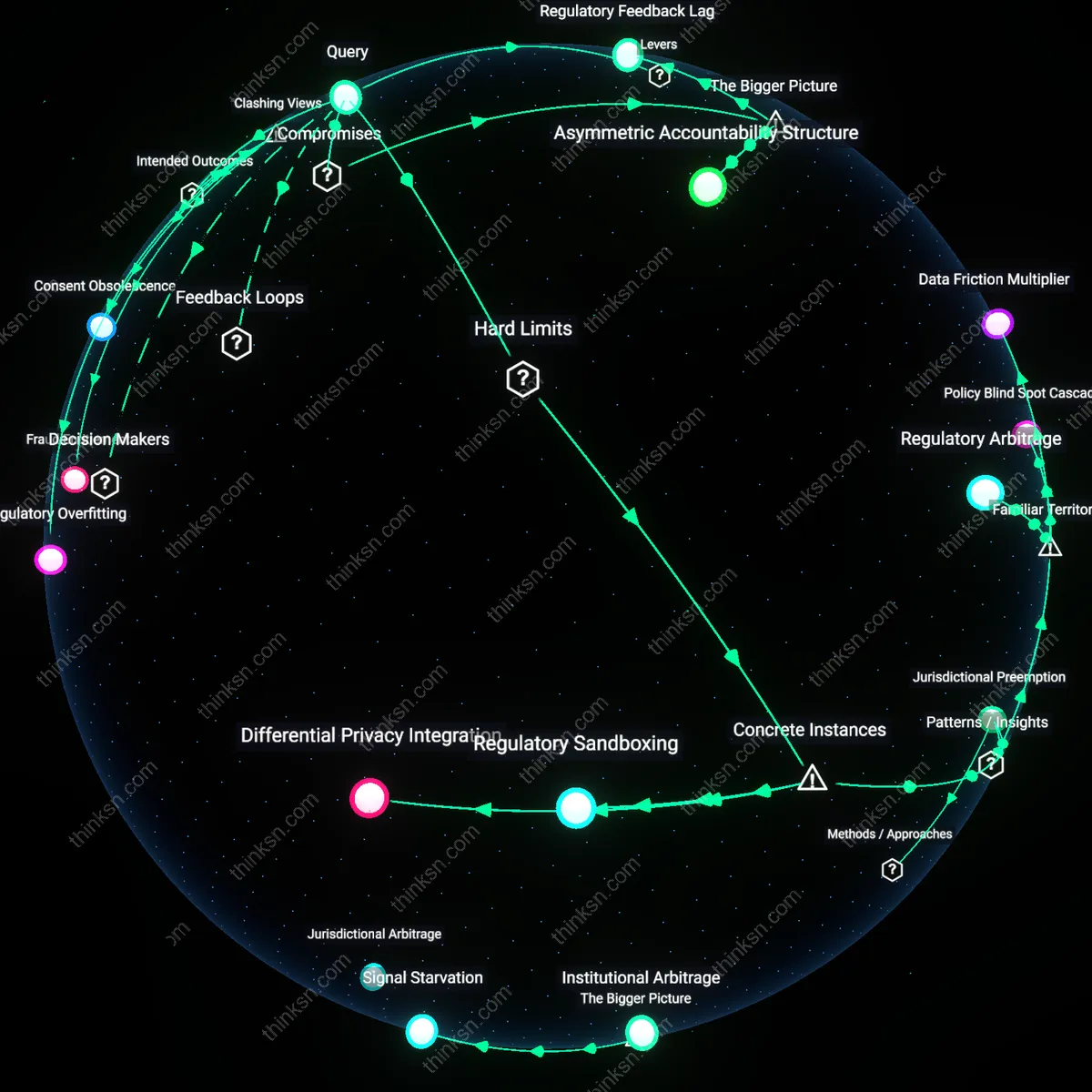

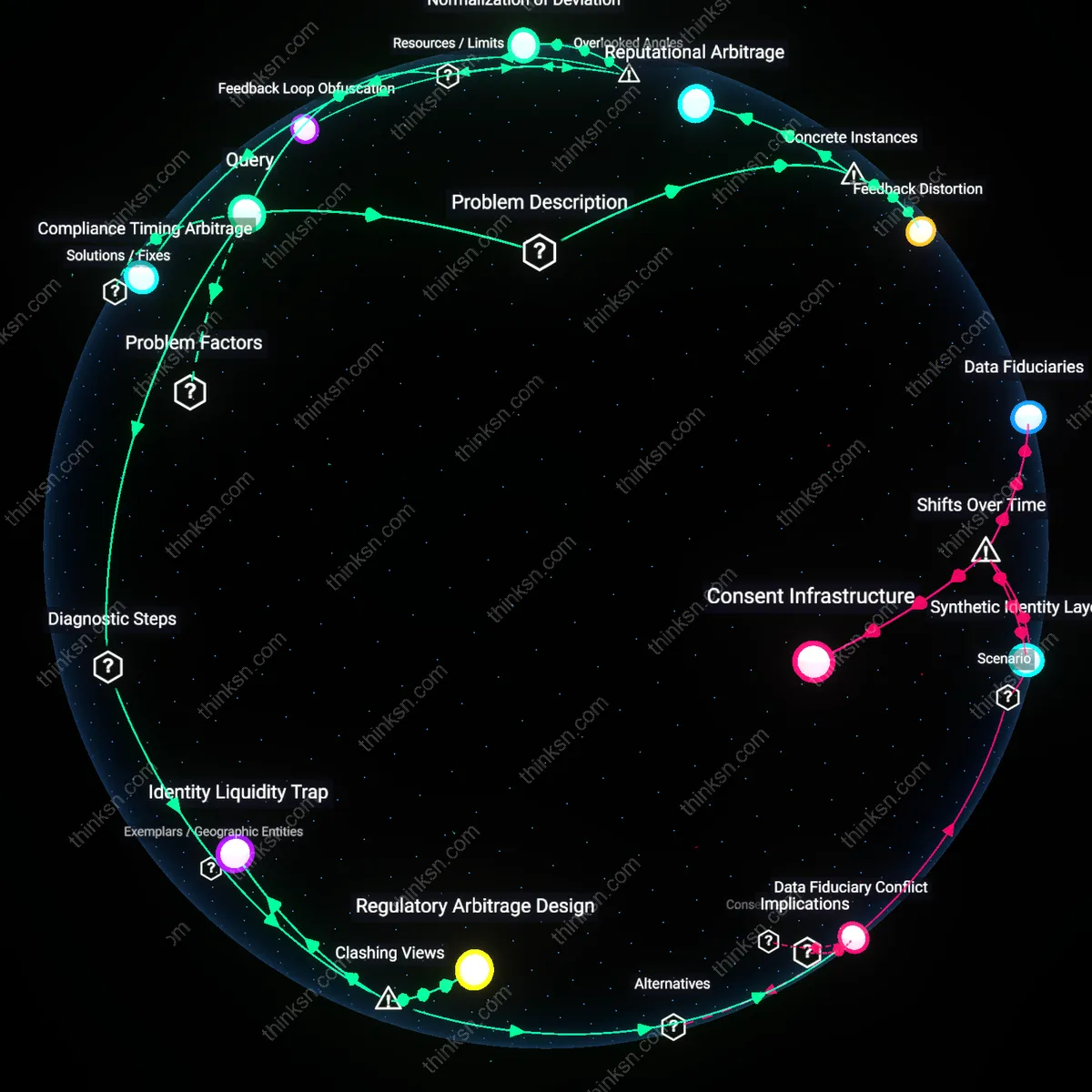

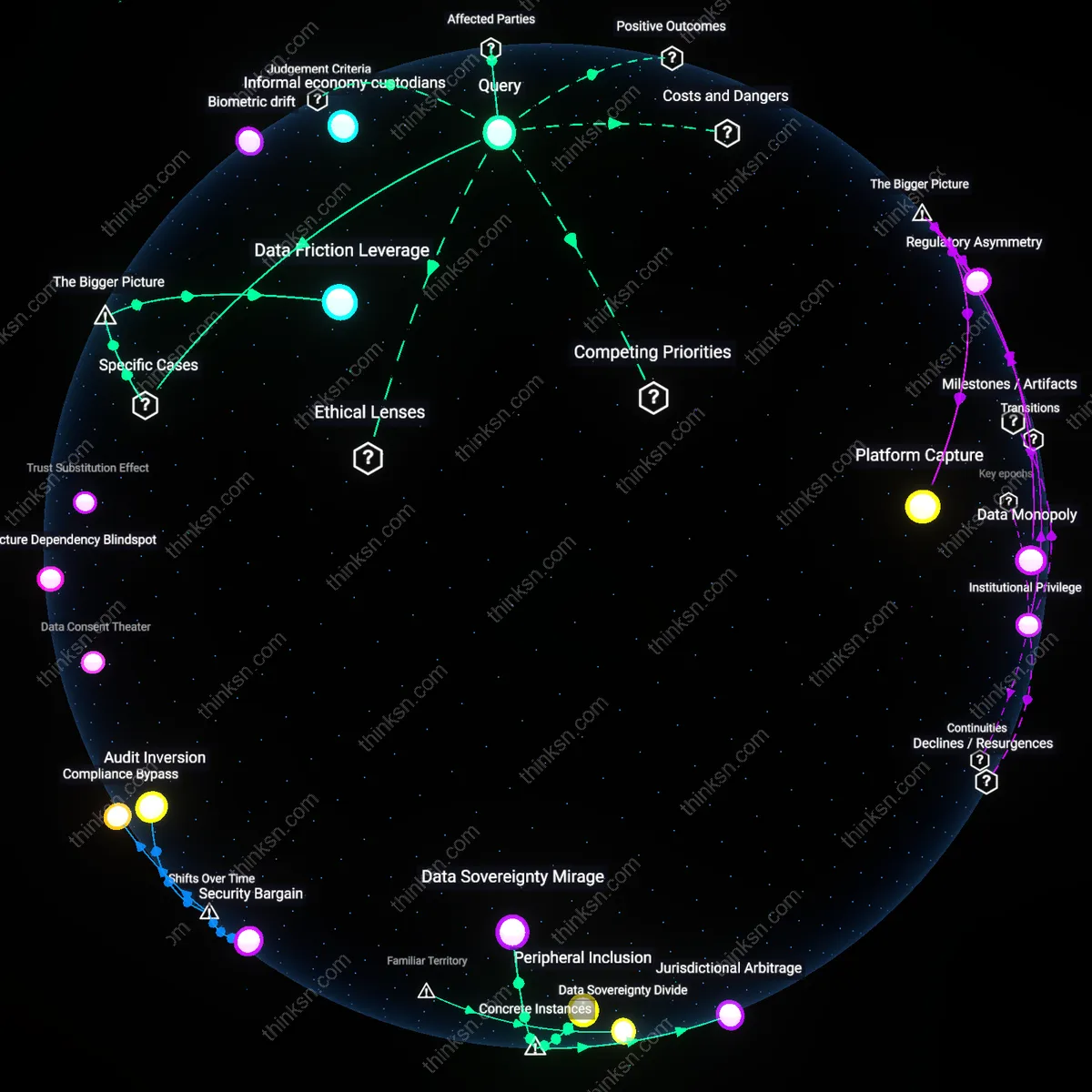

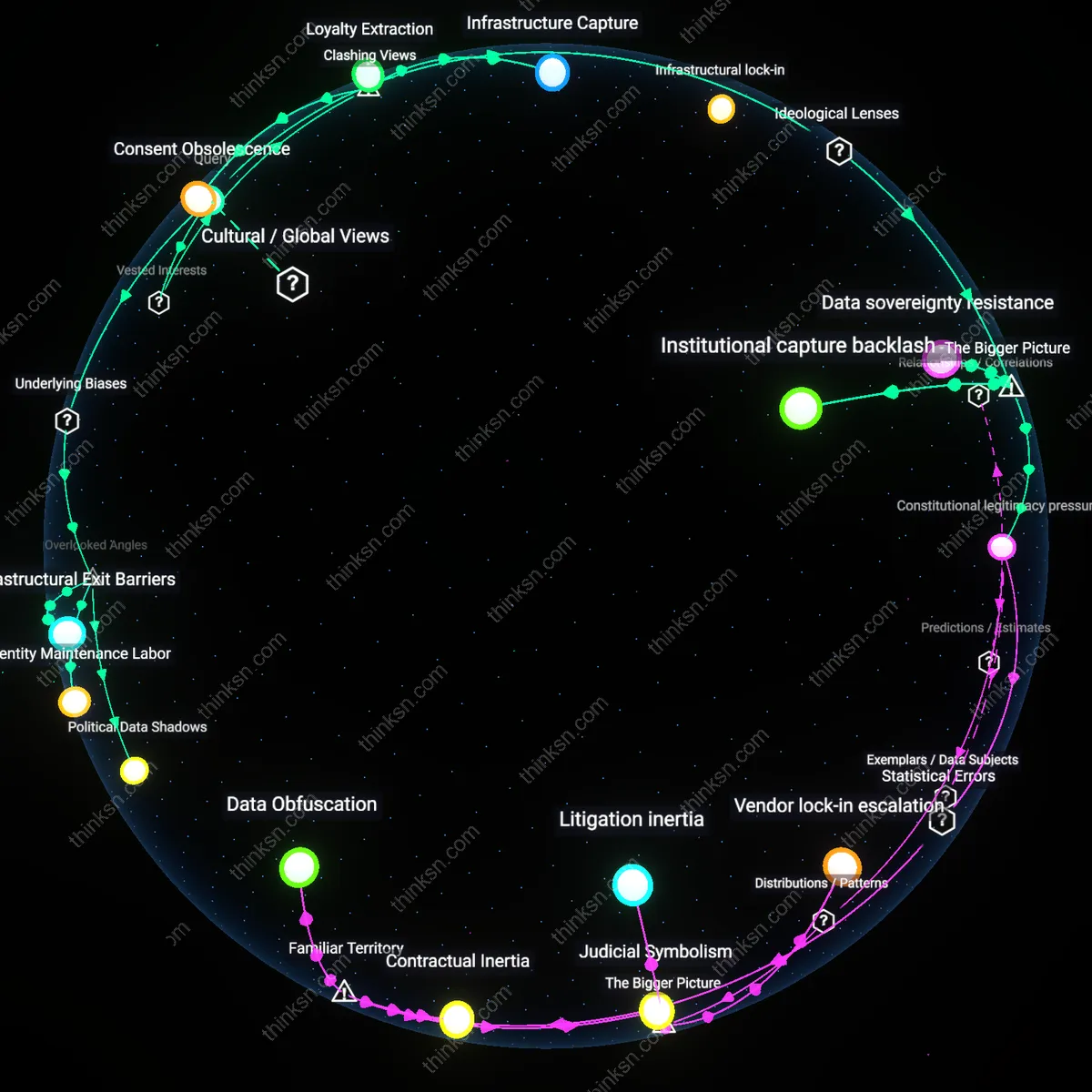

Analysis reveals 9 key thematic connections.

Key Findings

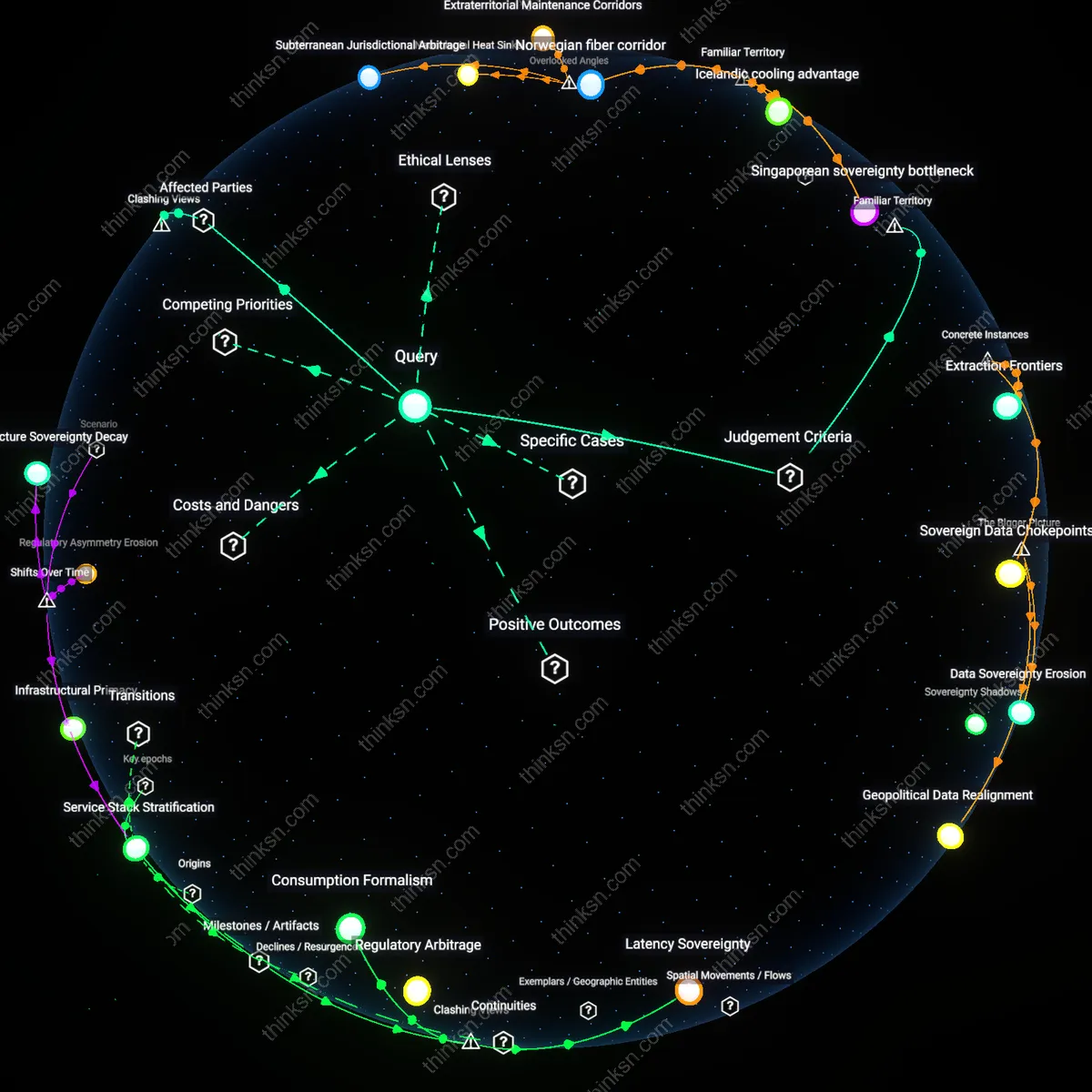

Regulatory Arbitrage Incentive

Tech industry lobbying for weaker data-privacy rules reflects a systemic preference for regulatory arbitrage, in which economic efficiency is valued above individual autonomy. Firms like Meta and Google push for lenient regulations in fragmented jurisdictions, such as U.S. states or emerging digital markets, not to eliminate oversight entirely but to exploit jurisdictional misalignment that permits low-compliance operations while they scale globally. The dynamic persists because privacy enforcement remains nationally bounded while data infrastructures are transnational, which makes uneven regulation a predictable enabler of cost-minimizing strategies. The non-obvious insight is that these lobbying efforts are not merely about immediate convenience but about locking in a permanent asymmetry that rewards jurisdictional fragmentation.

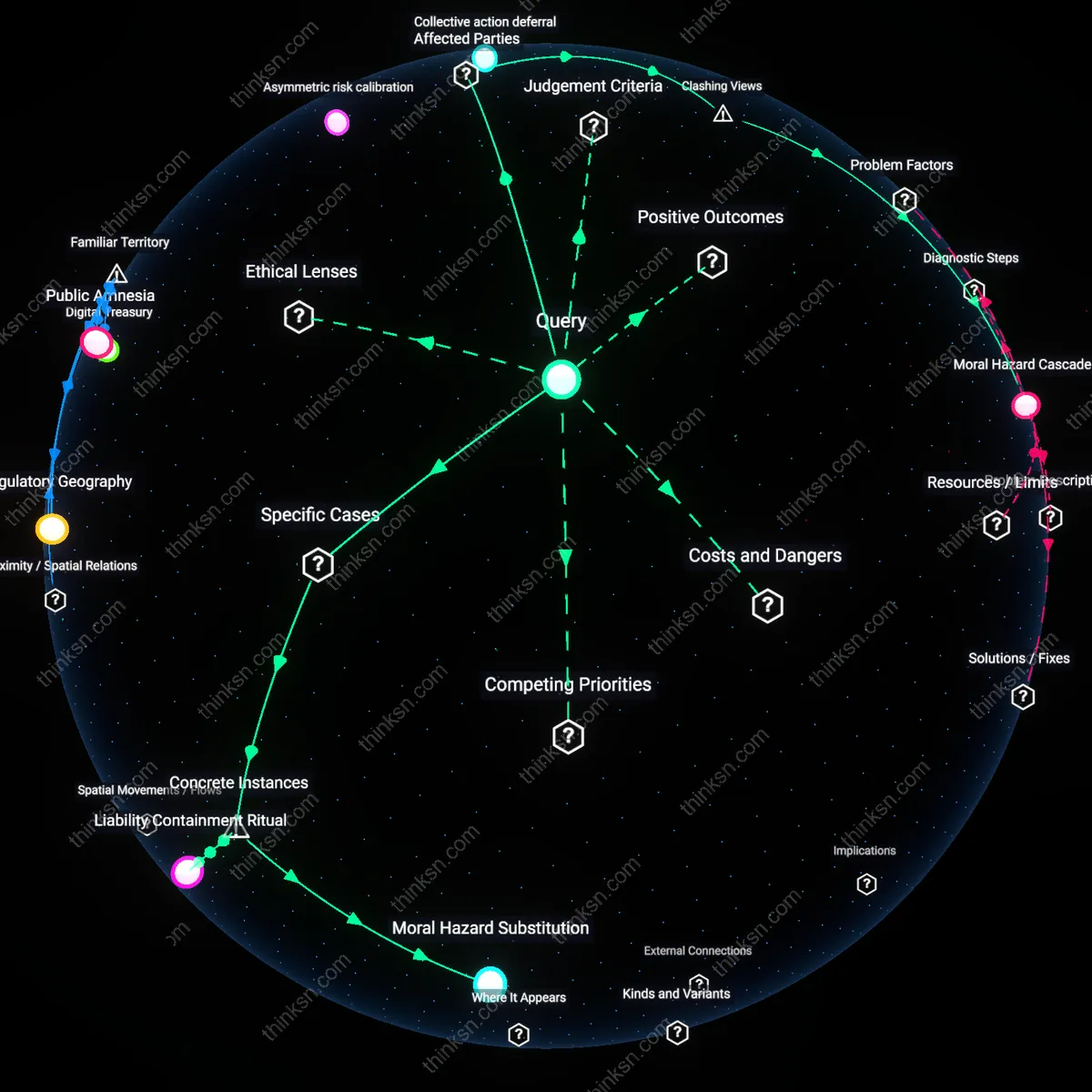

Consent Infrastructure Erosion

Lobbying for weaker data-privacy rules undermines long-term digital rights by systematically degrading the infrastructure of informed consent, judged against the moral principle of individual autonomy. Platforms shape policy outcomes—such as opposing default opt-in consent or weakening 'right to explanation' clauses—not just to ease data collection, but to normalize irreversible user dependency on opaque algorithmic systems. This occurs through feedback loops where weakened rules reduce transparency, which in turn diminishes public expectation and demand for control, making future re-regulation politically harder. The underappreciated consequence is that convenience becomes coercive not through design alone, but through the deliberate atrophy of civic capacity to resist surveillance norms.

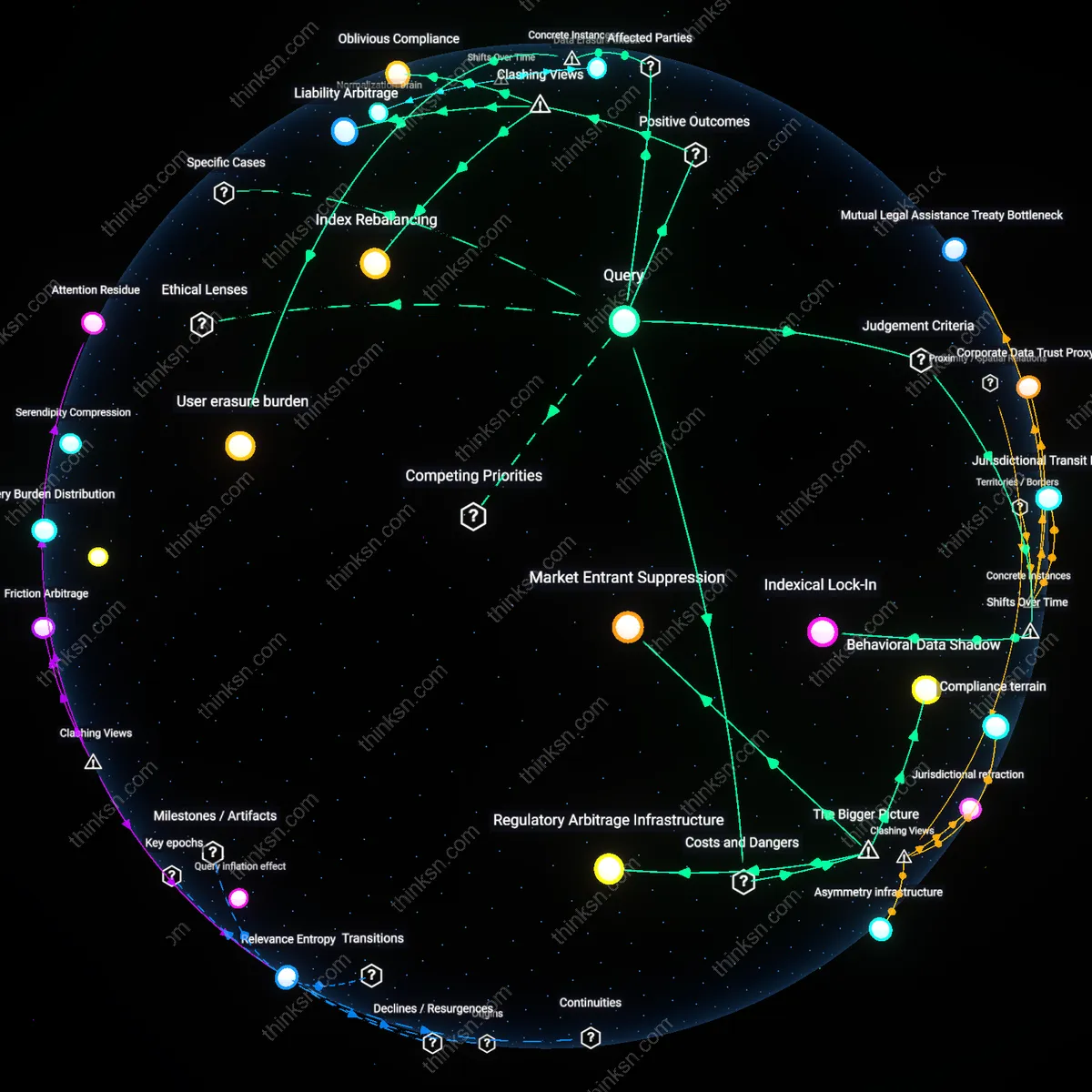

Innovation Deflection Mechanism

The push for lax privacy rules functions as an innovation deflection mechanism, evaluated through the practical principle of adaptive governance. Major tech firms use lobbying to reframe privacy as a barrier to 'innovation,' thereby redirecting public and regulatory attention toward short-term consumer features—like personalized recommendations—while suppressing regulatory momentum around structurally transformative alternatives, such as decentralized identity or user-owned data vaults. This works because the discourse of convenience is politically tractable, whereas discussions of systemic control require complex coordination among users, regulators, and technologists. The non-obvious effect is that the industry doesn’t just resist privacy—it actively shapes what counts as 'progress' in digital markets, entrenching centralized data models as inevitable.

Surveillance Entrenchment

Tech industry lobbying for weaker data-privacy rules locks in mass surveillance as the default architecture of digital life. Major firms like Meta and Google have funneled resources through trade groups, such as the Internet Association before it dissolved at the end of 2021, to shape legislation before it takes form, positioning data extraction as necessary for innovation and free services. This is not a temporary trade of privacy for convenience: it hardwires surveillance into infrastructure, makes opting out nearly impossible, and recasts public expectations of privacy as obsolete. The non-obvious consequence is that people accept surveillance not because they consent but because alternatives are systematically erased from design and policy.

Regulatory Capture by Default

Lobbying for weaker privacy rules lets tech firms shape regulations in their own image, turning oversight bodies into extensions of corporate strategy. The FTC and EU policymakers face information asymmetries and staffing gaps, which allow companies to flood rulemaking processes with self-serving risk assessments and economic projections. As a result, privacy regulations end up protecting business models rather than citizens, with compliance theater replacing enforceable rights. What is overlooked is that the very form of regulation becomes a tool of legitimization, presenting corporate dominance as public-interest governance.

Normalization of Data Extraction

Weaker data-privacy rules, pushed by tech lobbyists, normalize constant personal data harvesting as the price of participation in digital society. When platforms make features like personalized ads or smart recommendations synonymous with usability, users internalize data surrender as unavoidable. This isn’t just about targeted ads—it reshapes identity in commercial terms, where behavior is perpetually monitored, scored, and sold. The subtle harm is that people stop imagining digital spaces where they aren’t products, eroding collective ambition for alternatives.

Institutionalized Surveillance Drift

Tech industry lobbying for weaker data-privacy rules reflects a shift from the opt-in data accountability of the early 2000s to the systemic normalization of passive consent. During the smartphone boom (2007–2014), platforms used rapid product innovation to embed data extraction into user-experience defaults, turning regulatory leniency into structural inevitability. Venture capital’s prioritization of growth metrics over ethical design enabled this transition by making comprehensive privacy protections look economically regressive in emerging digital markets. The non-obvious outcome is not increased consumer harm per se but the erosion of regulatory imagination: policymakers increasingly frame privacy as a user-choice problem rather than a market-power issue, which locks in surveillance logic as infrastructural rather than contingent.

Regulatory Arbitrage Temporality

Tech industry lobbying for weaker data-privacy rules prioritizes immediate market expansion over durable rights protections by exploiting mismatched timelines: fast-moving data infrastructures outpace the slow accretion of legal doctrine. The mechanism is clearest in U.S. federalism, where tech firms lobby state legislatures to preempt comprehensive privacy regimes with weaker, fragmented laws that serve as interim defaults. The overlooked dynamic is that the ‘long term’ is not a future abstraction but something actively eroded by deliberate legal fragmentation in the present. That fragmentation locks in path dependencies favoring industry control under the banner of flexibility. The move is justified in the name of liberal economic efficiency, yet it systematically degrades democratic accountability over the pace and sequencing of law-making.

Infrastructural Path Entrenchment

Persistent investment in data-extraction architectures, such as real-time bidding systems in digital advertising, creates a material dependency on weak privacy rules and makes reversal structurally improbable regardless of future ethical or legal shifts. Few analyses recognize that the trade-off is not merely between convenience and rights. It is also between preserving already-embedded technical systems that financially reward surveillance logic and bearing the cost of dismantling them. That cost shows up in developer incentives, proprietary API designs, and cloud vendor contracts that predate, and effectively pre-empt, the enforcement of rights-based regulations such as the GDPR and CCPA. This infrastructural inertia acts as a silent veto on meaningful reform.