Should You Share Your Voiceprint When Privacy Policies Lag?

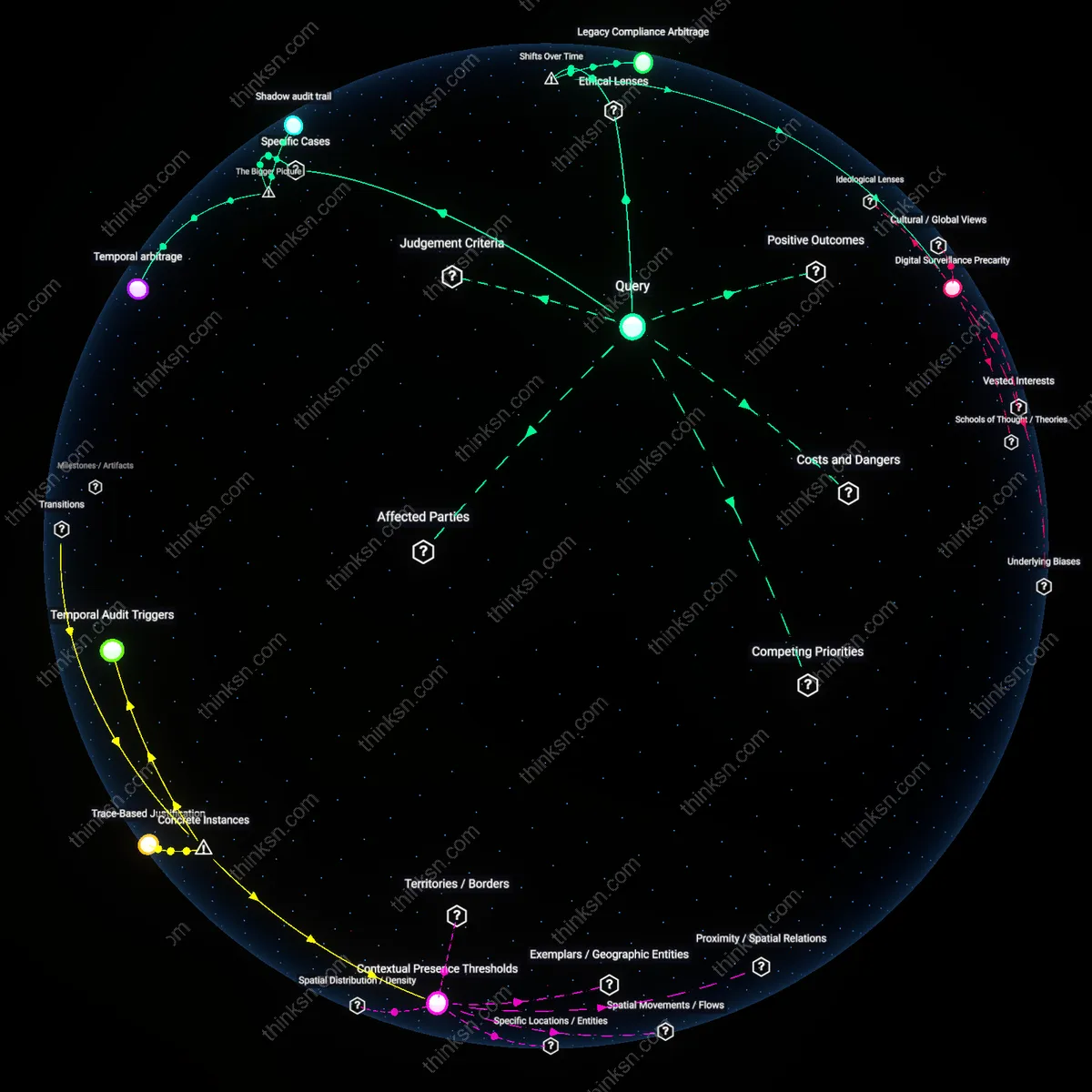

Analysis reveals 11 key thematic connections.

Key Findings

Voiceprint Exploitation Gap

Consumers should assess biometric voiceprint risks by demanding transparency about data handover protocols between tech firms and federal agencies such as the FBI or DHS. Standard user agreements rarely disclose whether voiceprints are treated as protected under the Fourth Amendment or can be reached through National Security Letters, and this gap enables legally ambiguous access by law enforcement without probable cause. The underappreciated reality is that most voice data collected by smart speakers or banking IVR (interactive voice response) systems is stored in cloud repositories with weaker privacy safeguards than physical biometrics like fingerprints.

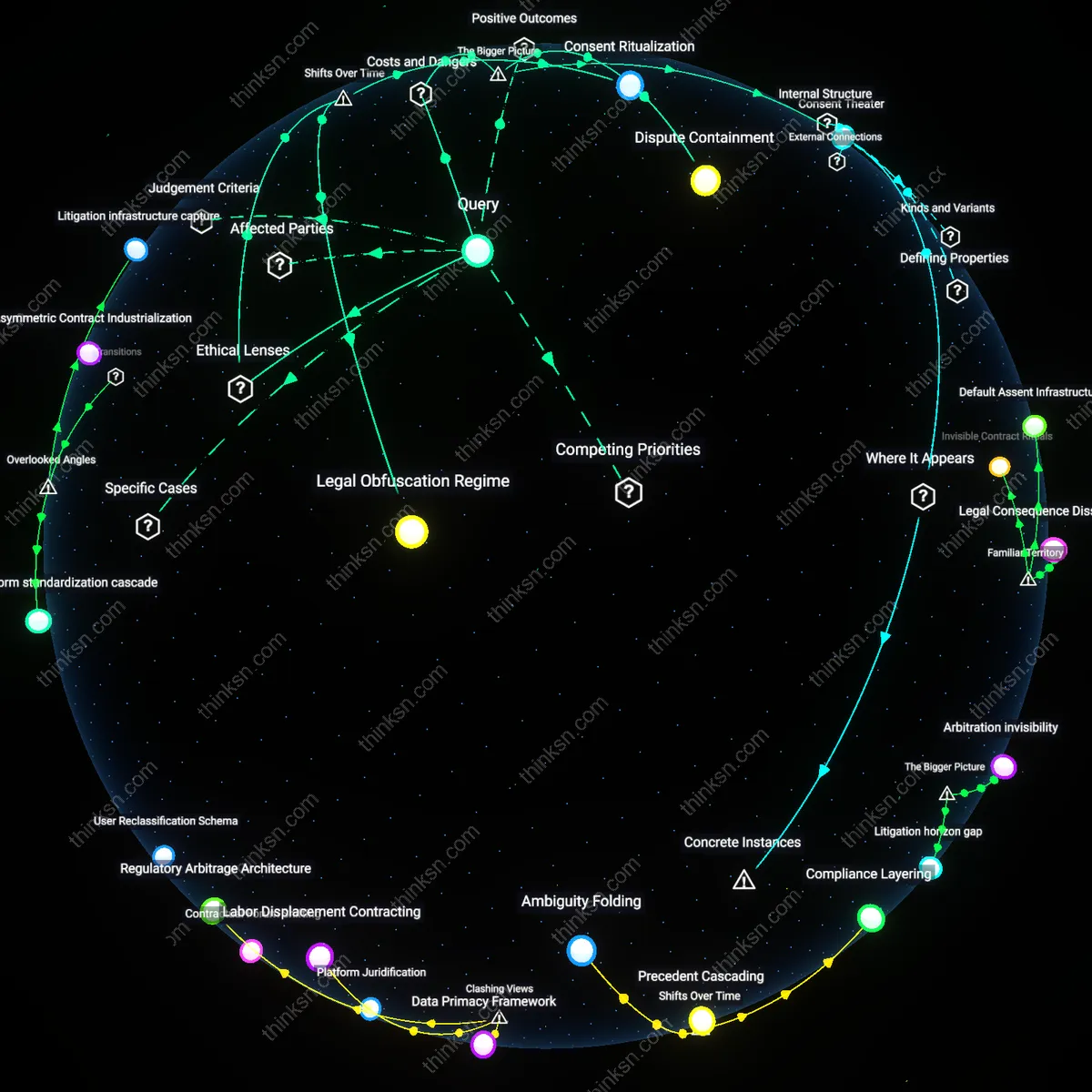

Consent Illusion

Consumers must recognize that agreeing to voiceprint collection through digital terms of service falsely implies control over future use. These interfaces simulate informed consent while operating within opaque corporate data practices, where revocation is technically impossible and legal recourse is preempted by arbitration clauses. This mismatch between perceived agency and actual enforceability reveals how the familiar ritual of ‘accepting terms’ masks irreversible data surrender, particularly when voiceprints are repurposed by third-party contractors in intelligence fusion centers.

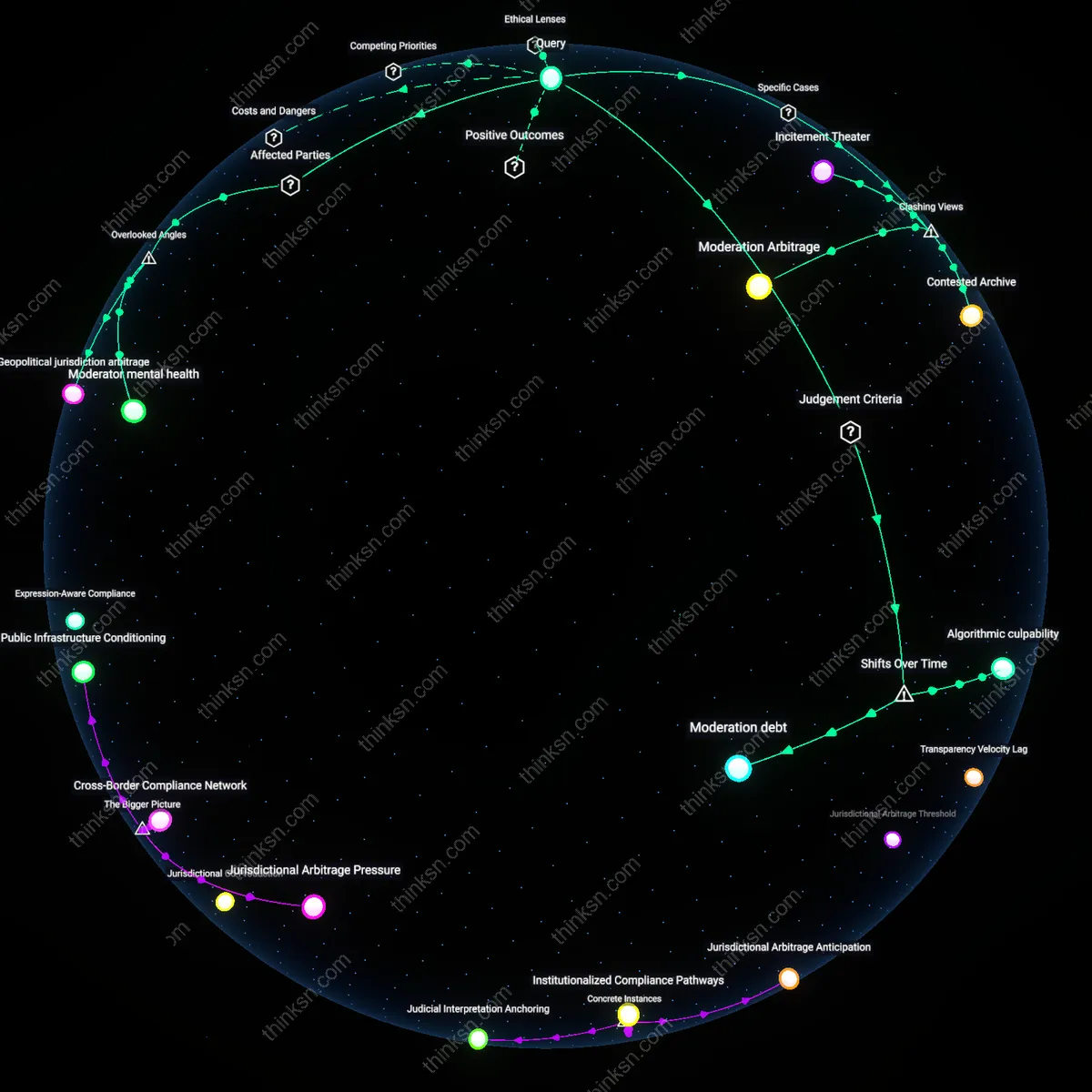

Surveillance Drift

Consumers should evaluate voiceprint sharing by tracking documented cases where biometric systems deployed for commercial authentication, such as voice ID in banking or ride-sharing apps, are later leveraged by local police through mutual aid agreements or shared law enforcement databases. The migration of data from private to public surveillance occurs incrementally and without legislative debate. The unnoticed mechanism is interoperability designed into backend APIs, which allows cross-sector data pooling under emergency exceptions.

Behavioral Compliance Nudge

Consumers gain indirect protection from misuse of biometric voiceprints when ambiguity about law enforcement access acts as a behavioral nudge toward corporate accountability. Companies like Amazon or Google limit internal access to voice data not because of legal mandates but to preempt regulatory backlash and maintain the user trust essential for ecosystem lock-in. The opacity around police access becomes a reputational liability that firms actively manage by self-imposing stricter data handling than required, meaning users benefit from de facto privacy enhancements despite weak legal safeguards. This dynamic suggests that uncertainty in state power can incentivize corporate self-restraint more effectively than clear rules would, flipping the assumption that policy clarity is always protective.

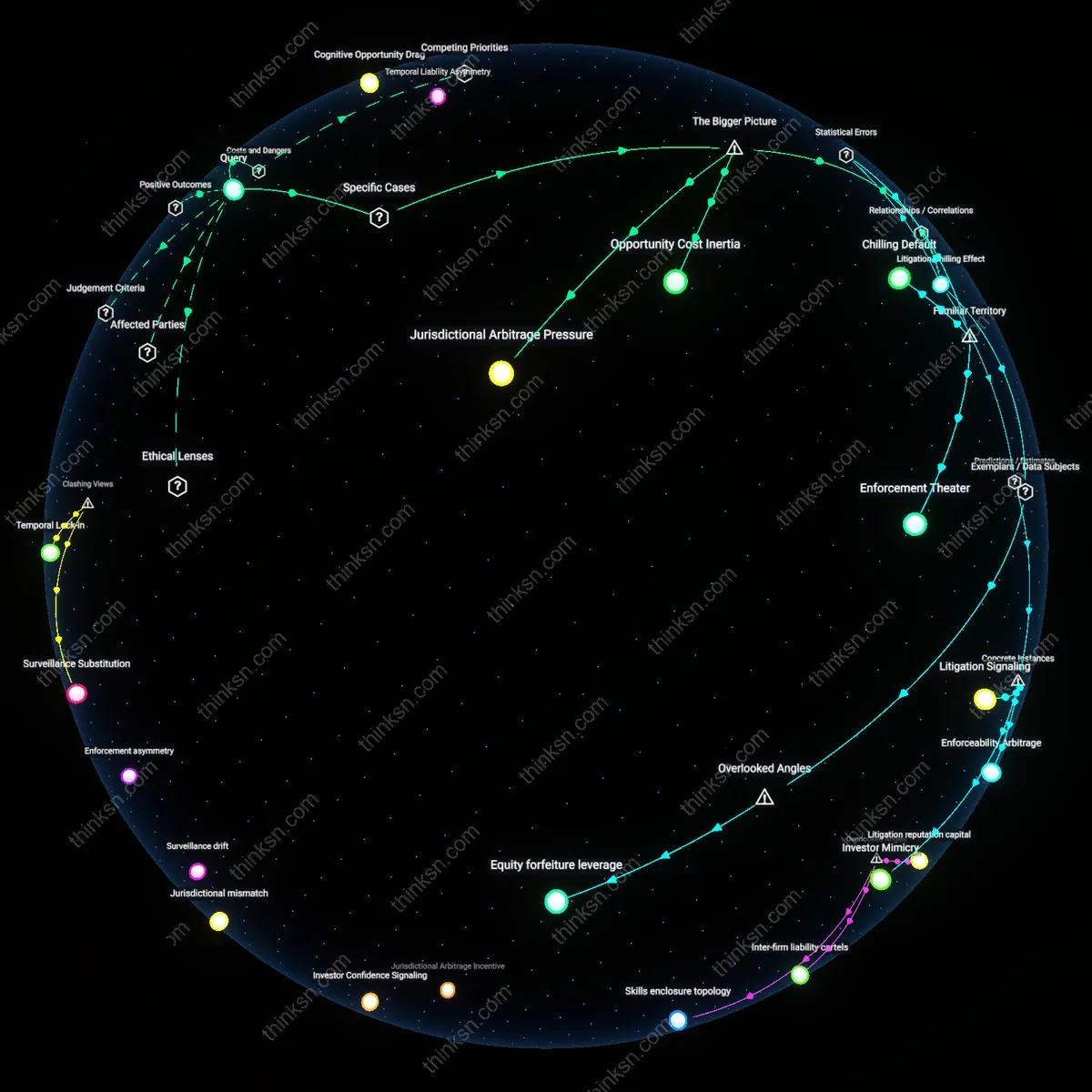

Data Jurisdiction Arbitrage

Consumers of transnational digital platforms such as Apple’s Siri or Microsoft’s Azure Cognitive Services can benefit from fragmented and unclear law enforcement access policies, because these ambiguities create jurisdictional friction that delays or prevents state data grabs, for example when U.S. agencies request voiceprint data stored on servers in Ireland and governed by GDPR. The lack of clear bilateral protocols forces governments into protracted legal negotiations, during which consumer data remains shielded by procedural inertia and institutional misalignment. This illustrates that regulatory incoherence across borders can function not as a vulnerability but as a de facto privacy buffer, contradicting the view that harmonized policies are inherently superior for user protection.

Voiceprint Utility Scaling

Sharing biometric voiceprints accelerates the development of inclusive speech technologies that serve marginalized populations, such as voice-driven AAC (Augmentative and Alternative Communication) devices for ALS patients. Large, diverse datasets improve recognition accuracy across dialects, speech impairments, and non-native speakers, and speech recognition developers such as Nuance, or OpenAI with its Whisper model, depend on large speech corpora to train their systems even though law enforcement access rules remain unclear. The perceived risk of surveillance is counterbalanced by rapid advances in assistive technology that depend on widespread data donation. Personal data exposure can therefore be a form of civic contribution to medical and accessibility innovation, which challenges the dominant narrative that voiceprint sharing is inherently exploitative.

Surveillance Substitution

As conventional forensic methods lose effectiveness to encryption and anonymization, law enforcement increasingly depends on biometric voiceprints accessed through private-sector platforms, making consumer consent a de facto proxy for warrantless surveillance expansion. This shift is institutionalized through mutualized data-sharing routines, such as automated API gateways between voice assistant providers and federal agencies under extensions of the Communications Assistance for Law Enforcement Act (CALEA), that convert everyday user interactions into searchable biometric databases. The underappreciated mechanism is that privacy compromises are no longer one-off trade-offs but recurring replenishment points for state surveillance capacity, cloaked in commercial service agreements.

Trust Deferral

Consumers defer risk evaluation of voiceprint sharing to platform reputation and brand trust—such as reliance on Apple or Amazon’s stated privacy policies—because the operational opacity of backend data routing makes technical due diligence impossible for individuals. This deferral is systemically reinforced by standardized terms-of-service architectures that disperse legal responsibility across third-party contractors, cloud hosts, and AI training consortia, effectively dissolving locatable accountability. The overlooked outcome is that trust functions not as a safeguard but as an institutional displacement mechanism, allowing both corporations and governments to outsource legitimacy to perceived brand neutrality.

Judicial Precedent Asymmetry

Consumers should evaluate voiceprint risks by recognizing that voice data collected under corporate privacy policies can be compelled by law enforcement without the user’s consent. In the Bentonville, Arkansas murder prosecution of James Bates (*State v. Bates*), investigators obtained a search warrant for recordings from the defendant’s Amazon Echo. Amazon initially resisted and moved to quash in early 2017, then produced the data after the defendant consented, so the court never ruled on the dispute. The episode nonetheless shows that commercially stored voice data is exposed to legal process under the Stored Communications Act and the Fourth Amendment’s third-party doctrine. The legal distinction between corporate custodianship and government access tends to collapse in practice, leaving consumer-facing privacy policies with little force once law enforcement invokes investigatory authority: what matters is not the stated policy but the law governing data production in that jurisdiction. The non-obvious insight here is that ethical frameworks like deontological privacy rights fail to constrain state power when biometric data is held by third parties, making legal realism a more predictive lens than normative ideals.

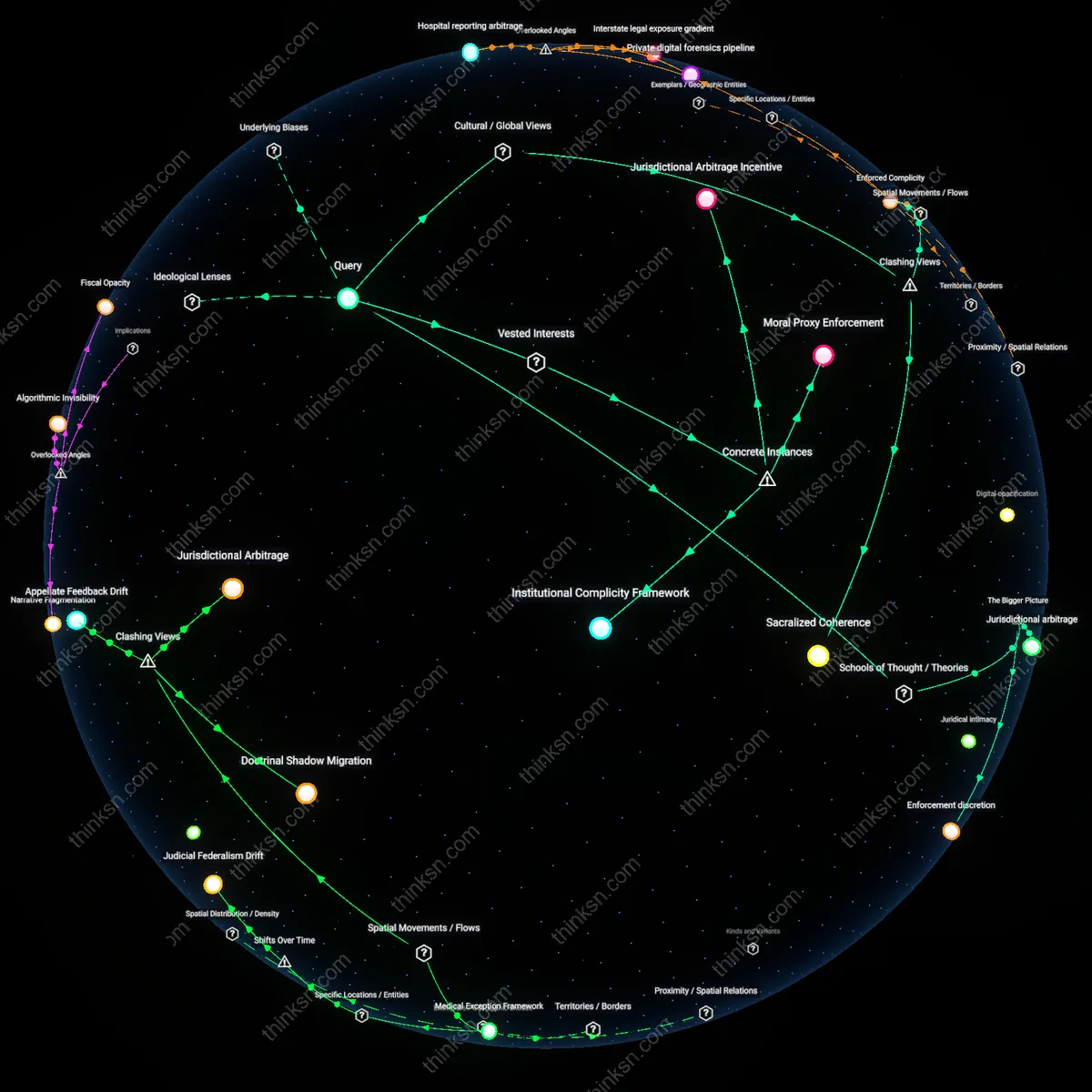

Function Creep Pathway

Consumers must assess voiceprint risks by understanding that biometric systems initially deployed for commercial convenience can be retrofitted for state surveillance. One reported illustration is the rollout of Nuance Communications’ voice recognition technology in call centers across the U.S. banking sector, which was reportedly adapted by U.S. Immigration and Customs Enforcement (ICE) in 2018 to identify non-English speakers in intercepted communications. On this account, private-sector biometric databases become de facto public surveillance infrastructure through procurement linkages and interoperable standards. The shift occurs without new legislation or public debate, exploiting the absence of data reuse restrictions in consumer consent agreements, and it renders individual autonomy illusory when biometric data is encoded for one purpose but activated for another. The underappreciated reality is that utilitarian justifications for efficiency in private applications create latent surveillance capacities that bypass democratic scrutiny, enabling function creep through technical compatibility rather than legal mandate.

Sovereignty Arbitrage

Consumers should treat voiceprint sharing as inherently exposed to transnational law enforcement access, particularly when data is processed in jurisdictions with weak privacy safeguards. In 2018 Apple transferred operation of iCloud services for users in mainland China to Guizhou-Cloud Big Data (GCBD), a state-linked provider. That move placed data stored in those accounts, including any voice data held there, under China’s Cybersecurity Law, in force since 2017, which obliges network operators to provide technical support and assistance to public security organs. Corporate compliance with data localization rules thus effectively outsources biometric control to authoritarian legal regimes, regardless of the user’s nationality or privacy expectations. This arrangement illustrates how liberal democratic assumptions about due process fail when infrastructure is jurisdictionally fragmented, and how consumer trust in brand ethics is structurally undermined by geopolitical compliance demands. The critical insight is that libertarian appeals to personal choice in data markets collapse when sovereignty itself is asymmetrically leveraged to bypass procedural safeguards through extraterritorial infrastructure control.