Does Right to Be Forgotten Protect from Search Engine Profiling?

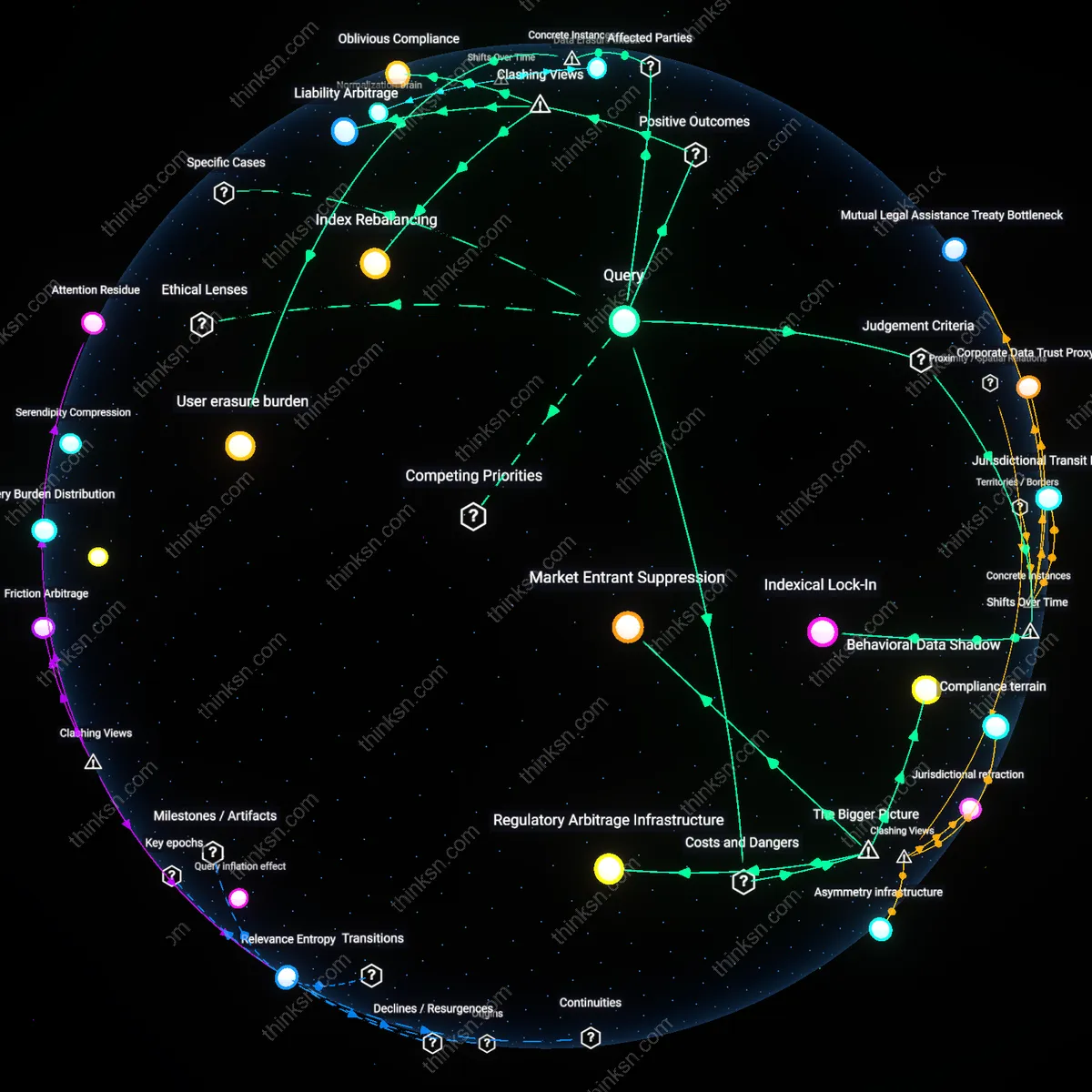

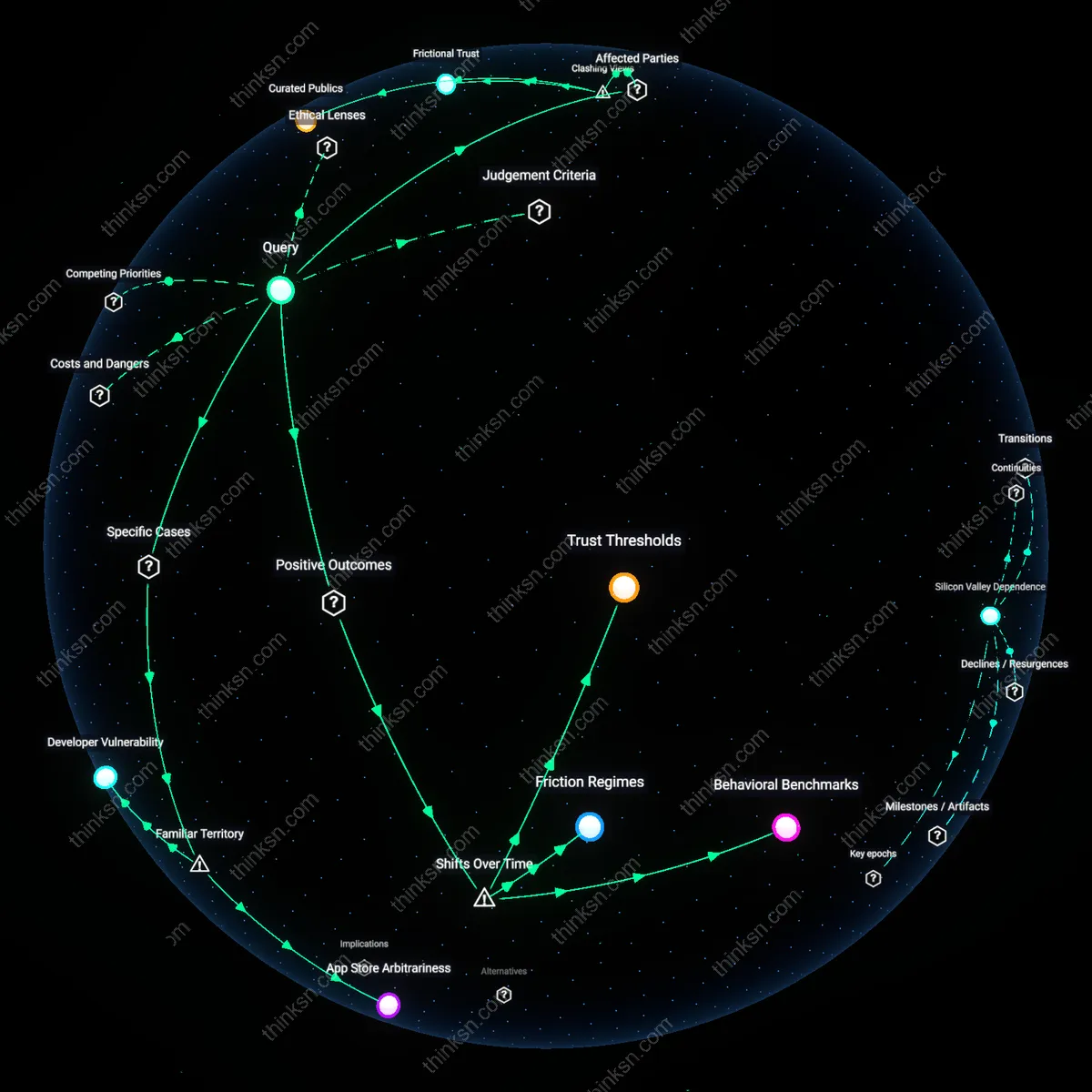

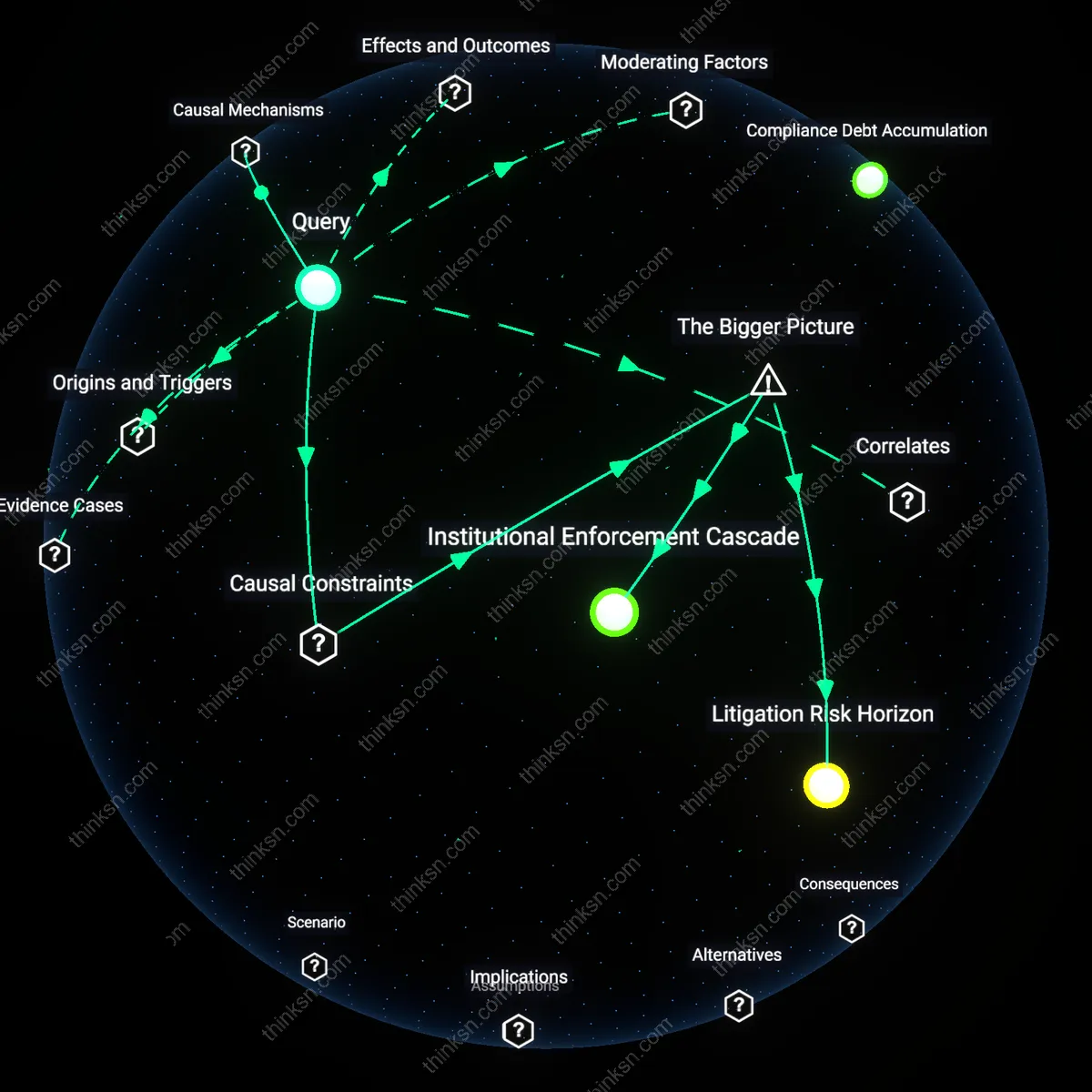

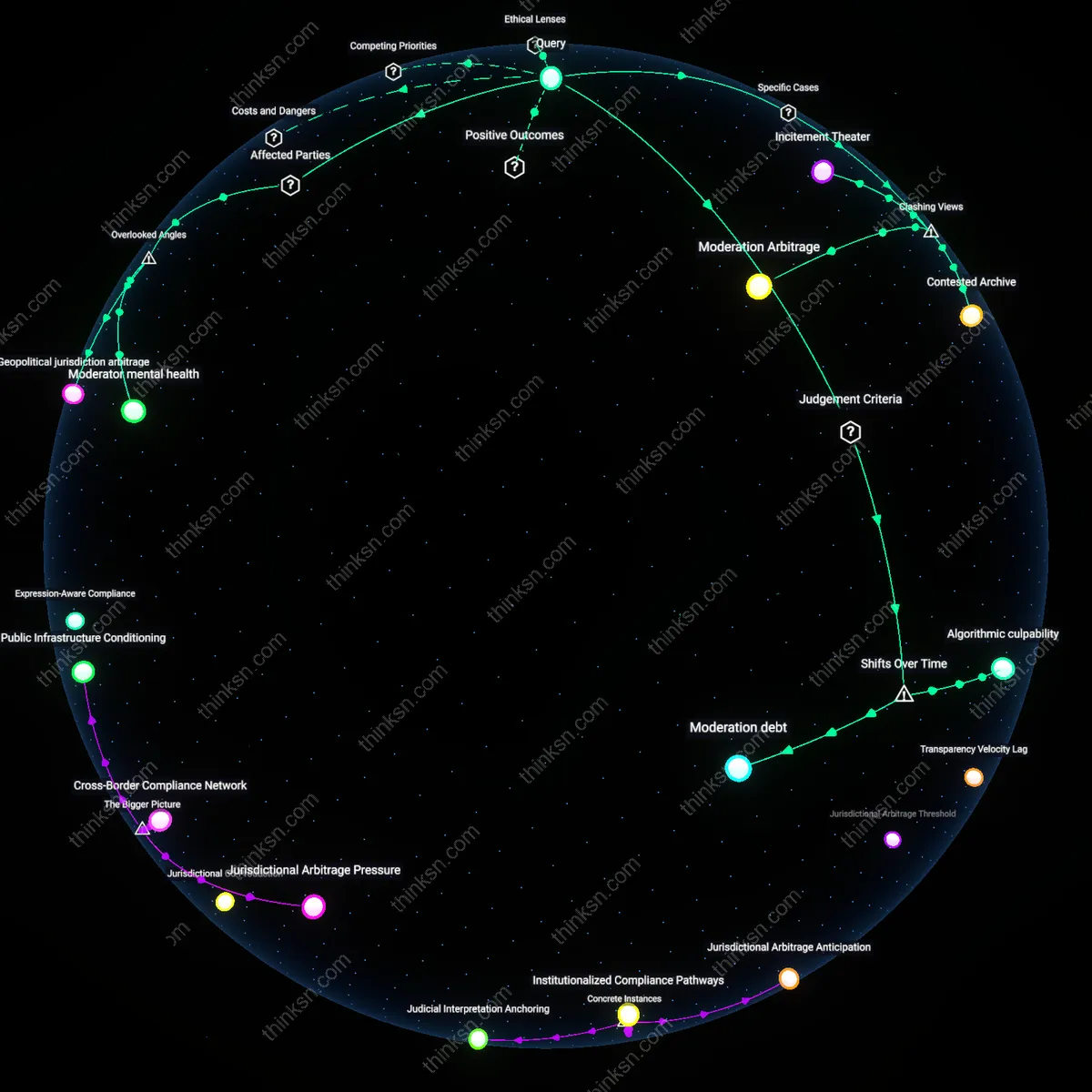

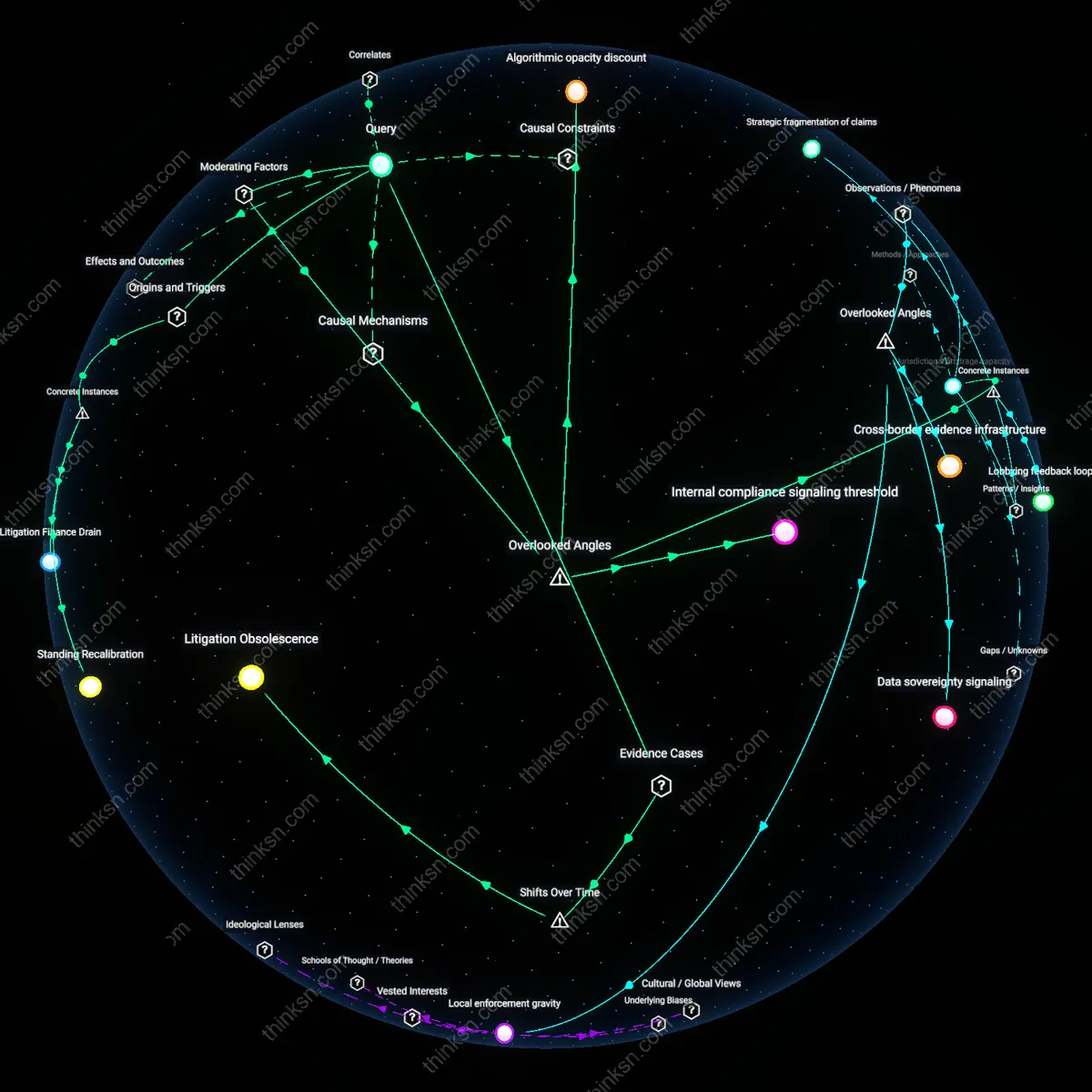

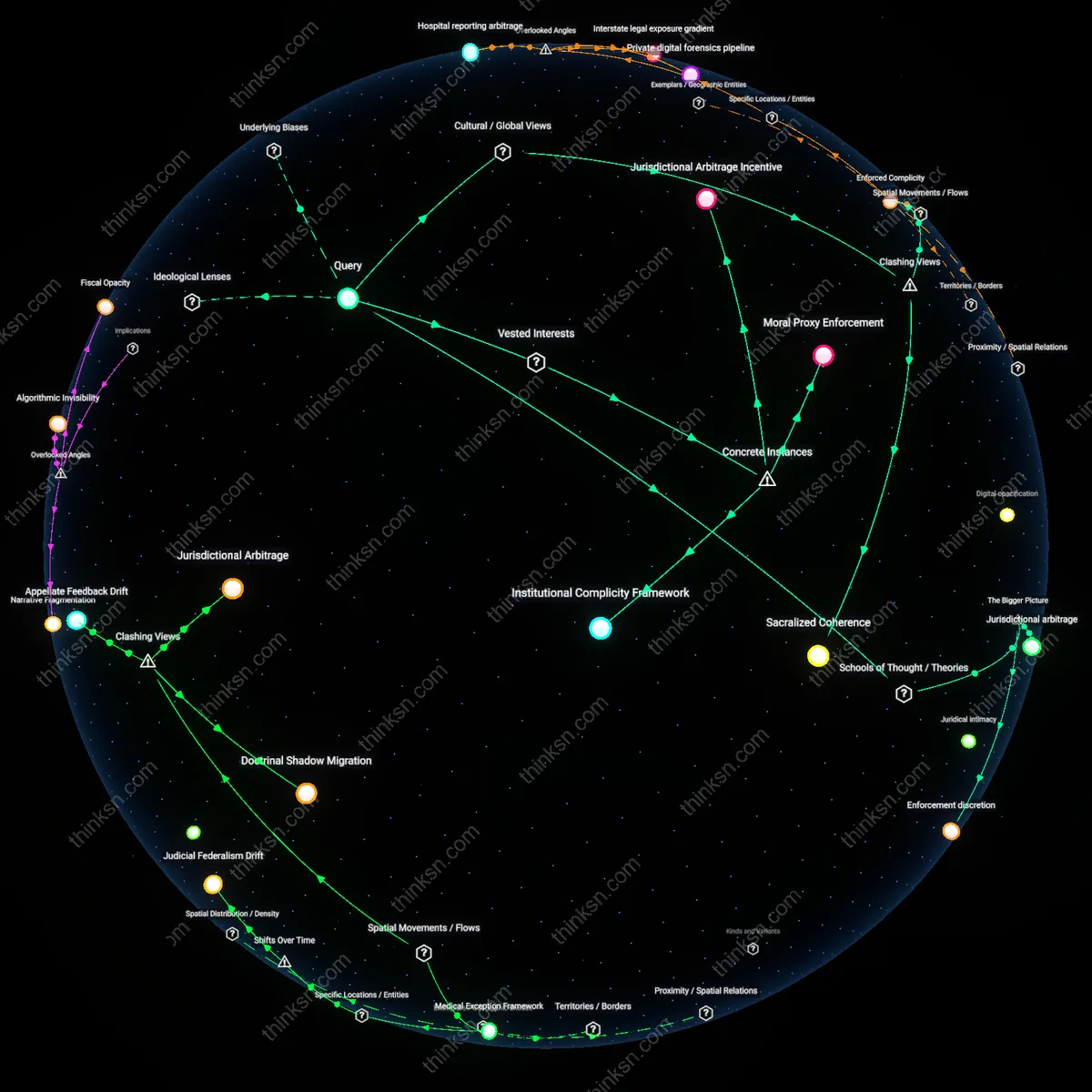

Analysis reveals 10 key thematic connections.

Key Findings

Search oligopoly inertia

Dominant search engines like Google maintain long-term user profiles through structural control over data infrastructure, making the GDPR’s right to be forgotten ineffective at halting algorithmic profiling. The 2014 Costeja ruling, which established the right to de-index certain personal data, did not prevent Google from continuing to infer behavioral profiles from residual, non-deleted data points across its ecosystem. Because deletion requests only remove specific links and not the underlying profiling logic, Google’s advertising-driven business model persists unchecked through data redundancy and cross-service integration, particularly across Gmail, YouTube, and Search. This reveals how market concentration enables functional immunity to individual data erasure.

User erasure burden

Individuals in marginalized communities, such as Roma populations in Eastern Europe, face disproportionate difficulty invoking the right to be forgotten due to procedural complexity and lack of institutional support, allowing search engine profiling to persist unchecked. In 2020, a Slovak advocacy group documented cases where Roma individuals were unable to navigate Google’s de-indexing forms without legal assistance, and even then, re-profiling occurred through algorithmic re-association of partial identifiers. The structural coercion lies in the GDPR’s reliance on individual action within a system designed to maximize data retention, shifting the cost of privacy onto the most vulnerable. This exposes a coercive design where rights exist only for those with literacy, time, and access.

Judicial Asymmetry

Structural coercion undermines the GDPR’s right to be forgotten because asymmetric enforcement capacities between EU regulators and U.S.-based search engines replicate colonial-era imbalances in legal jurisdiction, where domestic rulings cannot compel extraterritorial compliance. National data protection authorities like France’s CNIL can order Google to delink search results, but Google’s operational base in California and its algorithmic opacity allow it to delay, narrow, or technically circumvent de-referencing—especially for cross-border search domains like google.com. This gap between localized legal authority and distributed digital infrastructure reveals a condition not of mere noncompliance but of judicial asymmetry, where regulatory power is formally asserted yet materially constrained by historical imbalances in technological sovereignty.

Indexical Lock-In

The right to be forgotten is structurally weakened by the shift from document-based archives to algorithmic indexing, which emerged definitively after 2010 when search engines began prioritizing behavioral prediction over information retrieval—a transformation codified in Google’s Knowledge Graph integration. Profiling now depends less on stored personal data than on ephemeral, recomputed inferences derived from indexical relationships between user queries, ad networks, and third-party trackers, making deletion of specific URLs irrelevant to the persistence of profile shadows. This shift reveals that the GDPR targets a documentary past while search engines operate in a regime of indexical lock-in, where memory is not retained through storage but regenerated through relational computation.

Index Rebalancing

Structural coercion actually enhances the effectiveness of the GDPR's right to be forgotten by forcing search engines like Google to restructure their indexing logic in ways that reduce long-term profiling risks. Because compliance requires timely delinkings at scale, companies must automate decision systems that deprioritize stale or contested personal data, which inadvertently weakens the persistence of outdated information in algorithmic profiles. This operational burden—often seen as a cost—introduces a corrective mechanism that rebalances the index toward temporal relevance, a shift rarely acknowledged because the debate focuses on evasion rather than systemic recalibration.

Liability Arbitrage

The right to be forgotten increases the political visibility of data subjects not by empowering individuals directly, but by enabling civil society organizations and public defenders to exploit structural coercion as a procedural weapon against dominant platforms. NGOs such as noyb or the EDRI network use repeated, strategic requests not only to remove specific data but to generate litigation pressure that forces search engines to preemptively alter internal data retention protocols across jurisdictions. This form of liability arbitrage turns a supposedly individual right into a collective regulatory instrument—challenging the assumption that the right is ineffective due to scale, when instead it has become a trigger for systemic policy drift.

Oblivious Compliance

Search engines like Bing and Google comply with GDPR delinking orders so predictably and completely that the very transparency of enforcement undermines long-term profiling, not despite structural coercion but because of it. The visibility of takedown patterns creates a feedback loop where data brokers and third-party scrapers learn which content is legally fragile and therefore avoid integrating it into persistent profiles, resulting in a de facto chilling effect on data reuse. This oblivious compliance—where adherence to law indirectly hollows out profiling ecosystems through market anticipation rather than direct enforcement—refutes the idea that structural coercion inherently favors incumbents.

Market Entrant Suppression

Structural coercion undermines the GDPR’s right to be forgotten by entrenching dominant search engines, as smaller competitors cannot absorb the legal and operational costs of complying with deletion requests at scale. Incumbents like Google possess the capital and legal infrastructure to manage GDPR compliance as a fixed cost, while new market entrants face prohibitive barriers when attempting to offer privacy-centric alternatives, effectively freezing innovation in search. This dynamic is driven not by explicit regulation but by the economic inelasticity of compliance infrastructure, which transforms data protection into a tool that reinforces rather than restrains surveillance capitalism—highlighting how regulatory burdens can become filters for competitive exclusion.

Behavioral Data Shadow

The right to be forgotten fails to prevent long-term profiling because structural coercion in digital labor markets compels individuals to continuously re-engage with free search platforms despite privacy concerns, generating inevitable data resurfacing. Gig economy workers, job seekers, and welfare applicants—who depend on Google for employment, housing, or social services—are effectively coerced into perpetual data exposure, ensuring that deleted information is quickly regenerated through renewed usage. This creates a shadow data trail that negates erasure, sustained by socioeconomic precarity rather than technical loopholes, revealing how privacy rights collapse when legal autonomy is undermined by material dependency.

Regulatory Arbitrage Infrastructure

Structural coercion enables search engines to neutralize the right to be forgotten by externalizing enforcement costs onto users and decentralized data brokers, who absorb the burden of deletion verification across complex data supply chains. Google leverages its position to shift compliance labor onto ad-tech ecosystems and third-party publishers, where deletion requests are delayed, ignored, or obstructed due to weak contractual enforcement and jurisdictional fragmentation. This creates a regulatory gray zone where erasure is legally fulfilled at the core but functionally incomplete in practice, exposing how multilayered data capitalism insulates central platforms from the redistributive intent of regulation through systemic obfuscation.