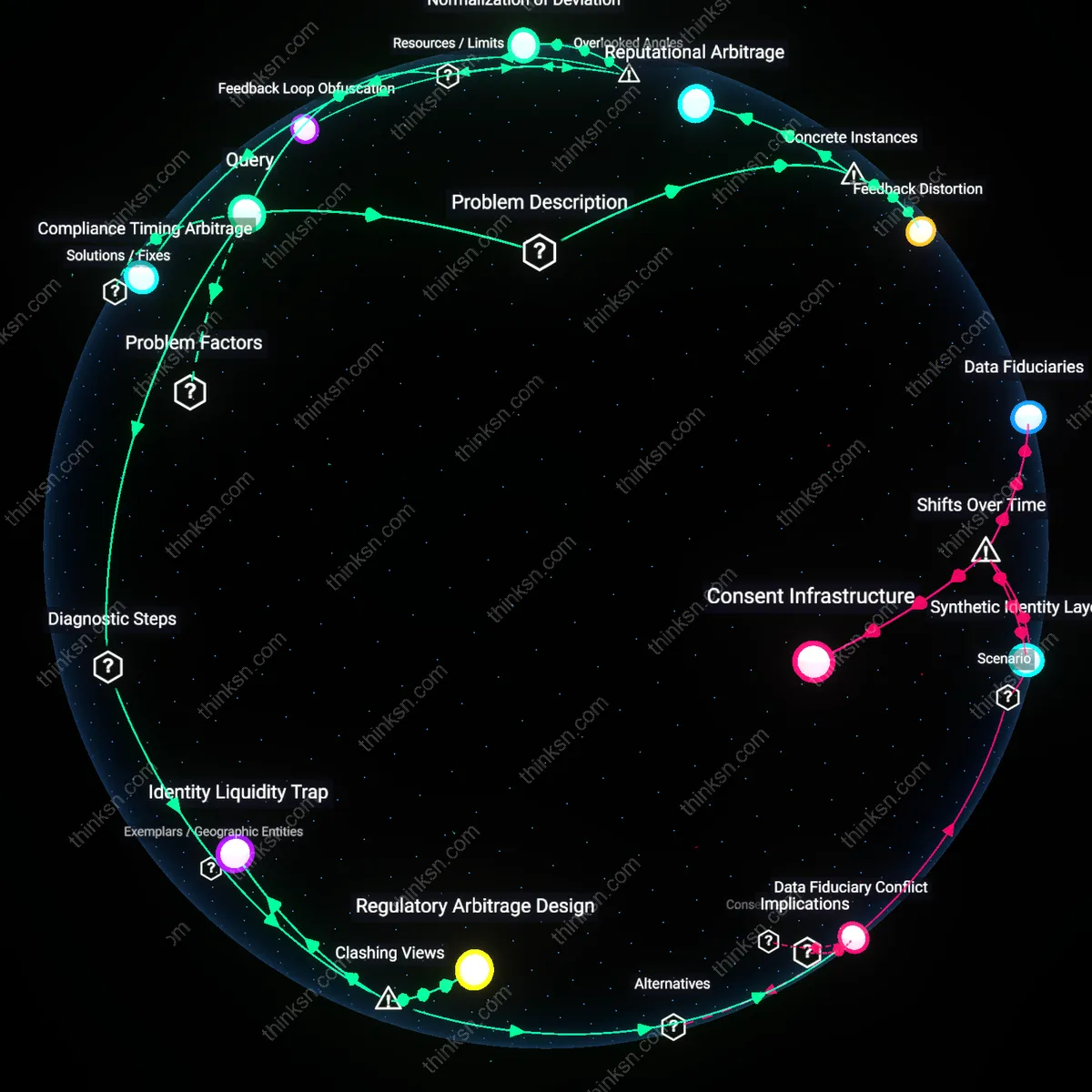

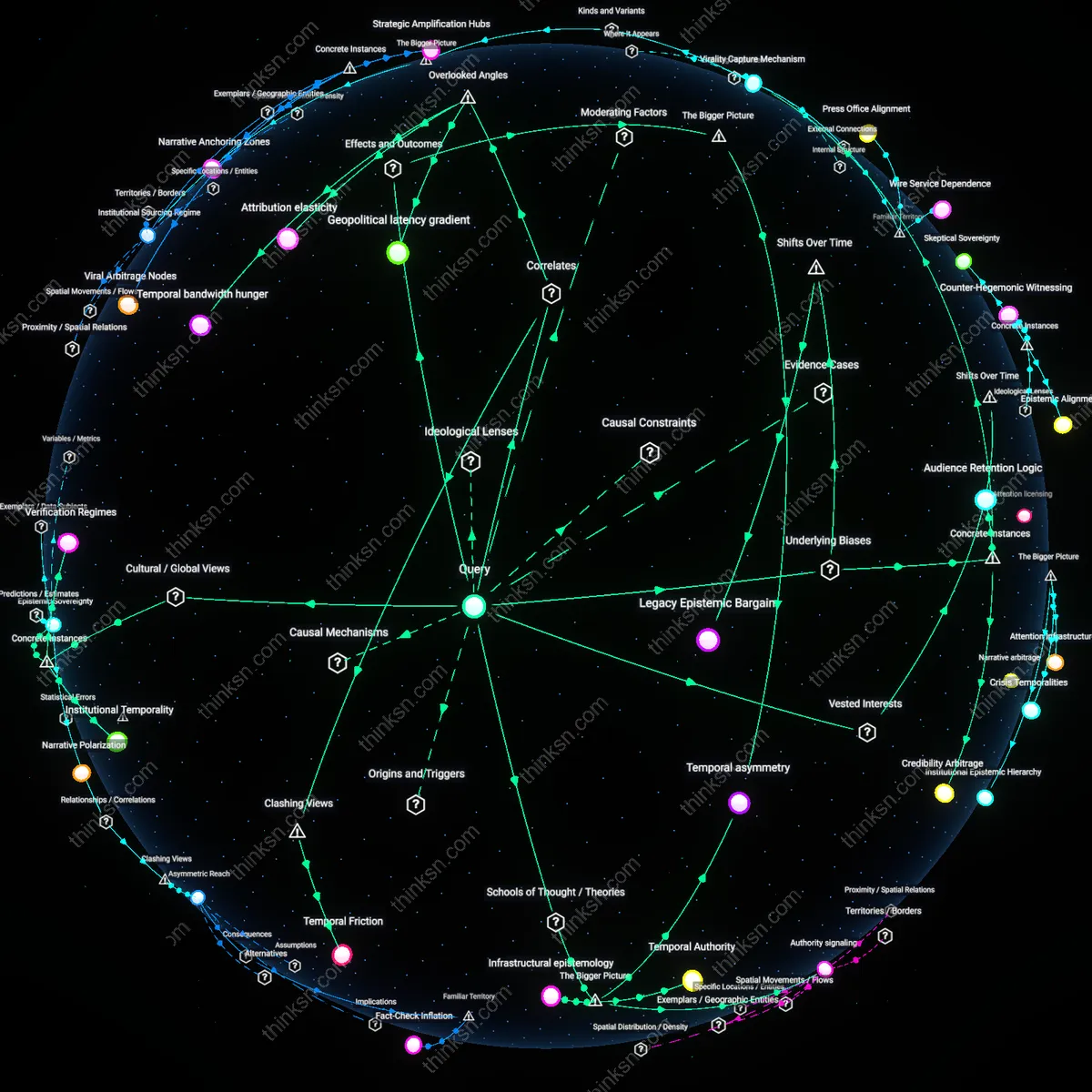

Does Identity Correction Fail When Profit Motives Prevail?

Analysis reveals 9 key thematic connections.

Key Findings

Incentive Misalignment

Profit-driven credit reporting agencies such as Equifax have systematically delayed or denied corrections to inaccurate credit reports, because correcting errors reduces opportunities to sell credit monitoring services. The mechanism operates through a revenue model tied to perceived data risk: ambiguous or erroneous records increase the conversion of consumers into paying customers. The financial incentive to preserve imperfect data therefore conflicts directly with the accuracy goals of correction rights, exposing a structural disincentive embedded in hybrid governance models.

Reputational Arbitrage

When moderating user identities during election periods in India, Facebook (Meta) has selectively upheld or rejected identity corrections based on the political influence of the parties involved. During the 2019 general elections, pages aligned with the ruling party received accelerated verification while opposition corrections languished. The platform uses its control over identity as a tool to manage governmental relationships and avoid regulatory scrutiny, treating accuracy not as a right but as a negotiable asset. This exposes how hybrid entities apply corrective power asymmetrically to accumulate political goodwill, turning identity integrity into a currency for regulatory survival.

Feedback Distortion

On Amazon Mechanical Turk, worker identity corrections, such as name or nationality updates required for payment compliance, are processed slowly or rejected without appeal. The cause is not technical limits: high rejection rates suppress worker visibility, and downstream requesters optimize for 'proven' profiles. The system’s algorithmic trust metrics, which reward low-change histories, create a performance bias against corrected identities, effectively penalizing accuracy. Automated evaluation systems can thus render correction rights meaningless when identity stability, rather than truthfulness, becomes the proxy for reliability.
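The bias described above can be illustrated with a minimal sketch. It assumes a hypothetical trust score that discounts a worker's approval rate for every identity change on record. The names `WorkerProfile`, `trust_score`, and `change_penalty` are illustrative assumptions, not Amazon Mechanical Turk's actual metric.

```python
from dataclasses import dataclass


@dataclass
class WorkerProfile:
    approval_rate: float   # fraction of approved tasks, between 0.0 and 1.0
    identity_changes: int  # number of identity corrections on record


def trust_score(profile: WorkerProfile, change_penalty: float = 0.1) -> float:
    """Hypothetical metric: approval rate discounted once per identity change."""
    return profile.approval_rate * (1.0 - change_penalty) ** profile.identity_changes


# Two workers with identical work quality; one corrected their identity twice.
stable = WorkerProfile(approval_rate=0.95, identity_changes=0)
corrected = WorkerProfile(approval_rate=0.95, identity_changes=2)

print(trust_score(stable) > trust_score(corrected))  # the corrected profile ranks lower
```

Under these assumptions, the worker with the more accurate record scores lower purely because the record changed, which is the sense in which stability stands in for reliability.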

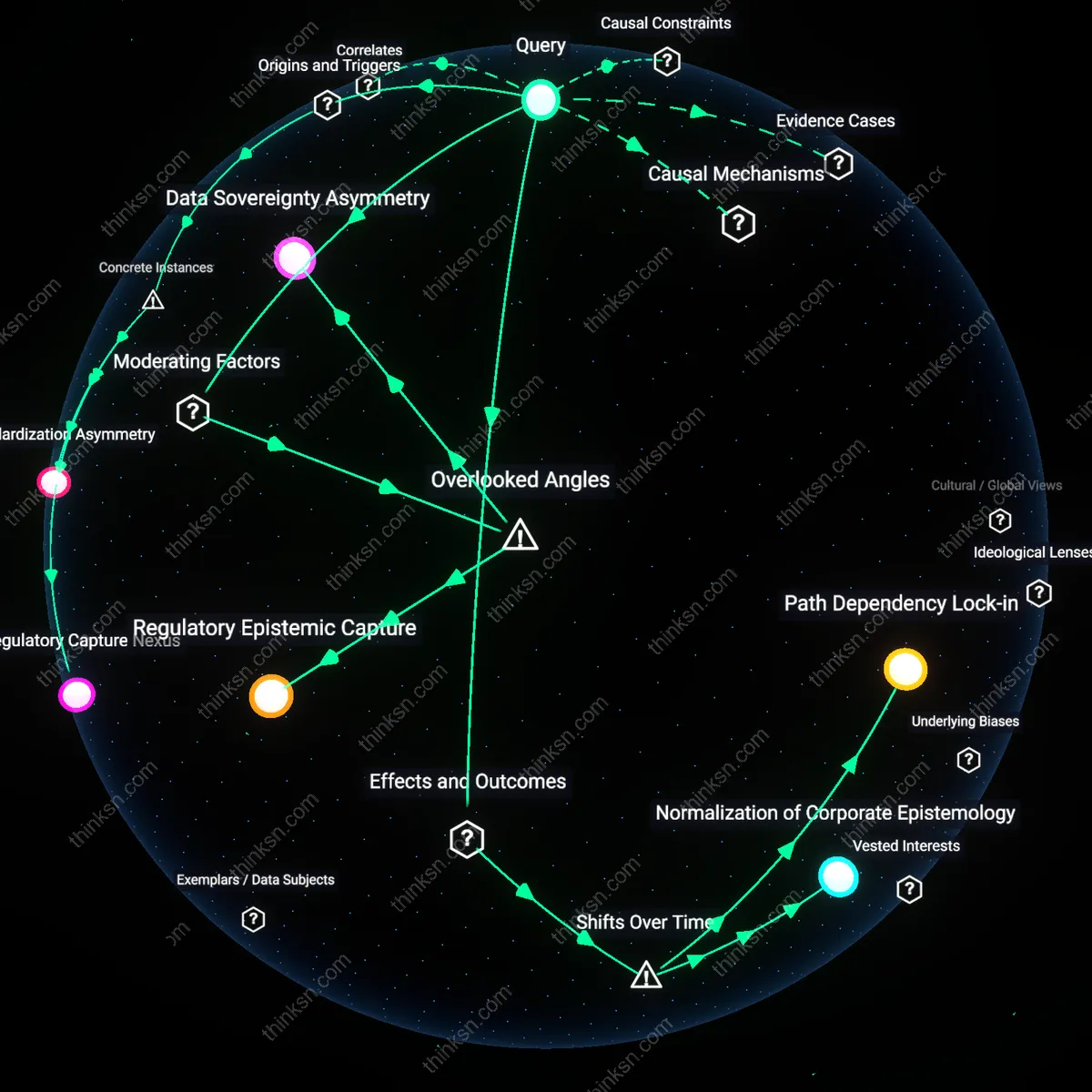

Data Fiduciary Conflict

A profit-driven hybrid entity’s financial dependence on data exploitation creates a structural disincentive to accurate identity correction, because verified corrections reduce the volume and continuity of behavioral data used for targeting. This mechanism operates through the entity’s dual role as both service provider and data aggregator, where correcting identity attributes—such as user preferences or demographic classifications—disrupts established profiling trajectories that underpin advertising revenue. The non-obvious insight is that correction is not merely a technical update but a threat to data asset integrity, revealing how fiduciary loyalty is split between user rights and platform economics.

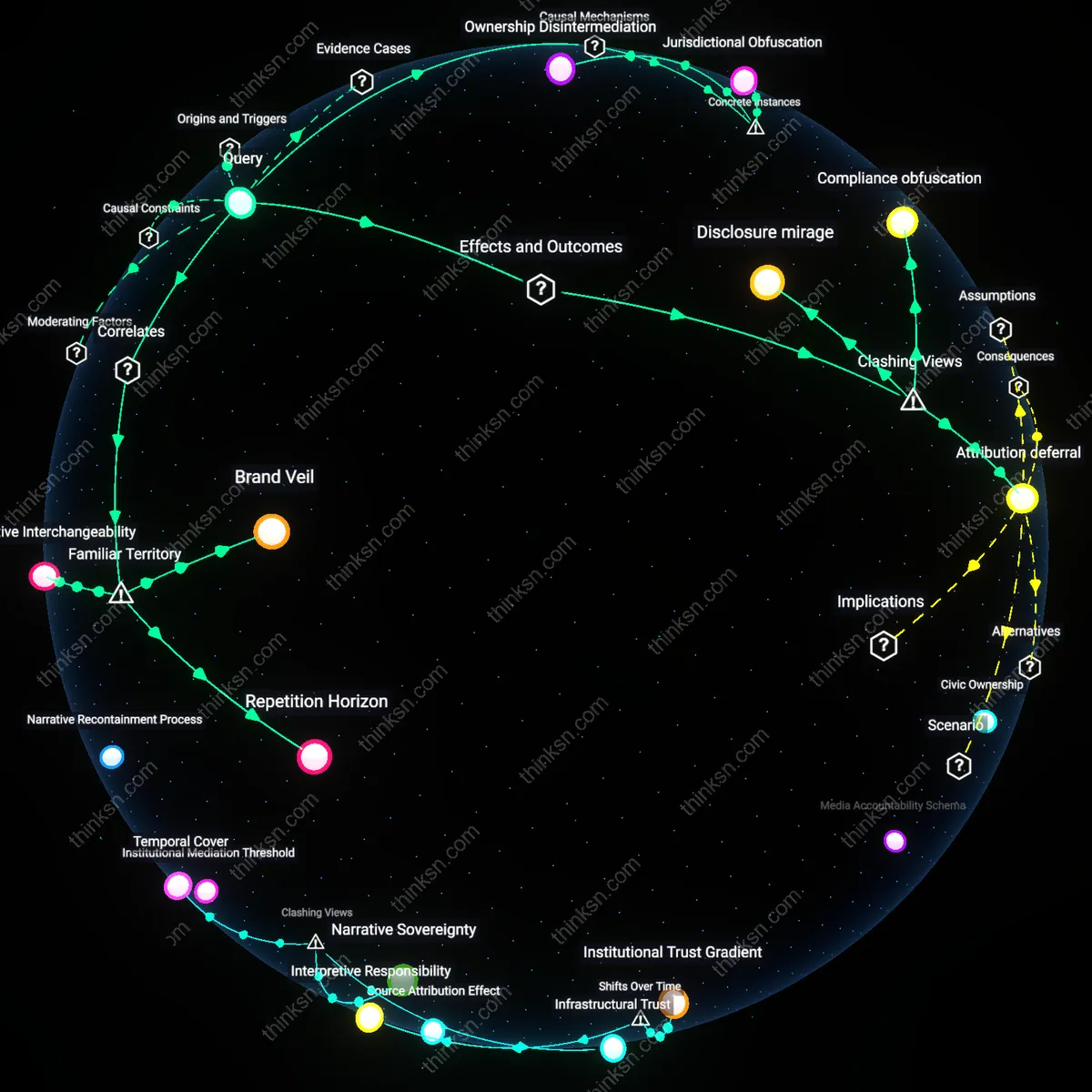

Regulatory Arbitrage Design

Hybrid entities manipulate the procedural complexity of correction mechanisms to preserve de facto control over identity data, exploiting regulatory loopholes that validate procedural compliance over substantive accuracy. This occurs through deliberately fragmented identity architectures—such as siloed consent databases or delayed sync cycles—that satisfy audit requirements while maintaining divergent internal records for operational use. The dissonance lies in treating ‘correction’ as a formal ritual rather than a functional update, exposing how regulatory adherence can be weaponized to negate the right’s real-world effect.

Identity Liquidity Trap

The right to correction fails when the economic value of fluid, speculative identity models outweighs the static truth of corrected data, as seen in platforms where user identity is continuously reassembled for dynamic pricing, credit scoring, or content moderation. Here, correction interrupts high-frequency algorithms that rely on probabilistic inference rather than verified facts, making fixed identities functionally obsolete within systems designed for perpetual uncertainty. This challenges the intuitive belief that truth enhances control, revealing instead that in fast-data markets, instability is a feature, not a flaw.

Compliance Timing Arbitrage

A profit-driven hybrid entity can exploit regulatory latency by delaying identity corrections just long enough to maximize data exploitation before compliance. This occurs because automated systems prioritize high-frequency, revenue-generating data flows over manual or low-priority correction queues, creating a deliberate lag window where inaccurate identity data continues to inform monetized interactions—such as targeted advertising or credit scoring—despite formal acknowledgment of inaccuracy. The non-obvious dimension is not deliberate non-compliance but the strategic use of permissible procedural delays, which few regulatory frameworks penalize. This reveals how temporal precision, not just accuracy, governs identity efficacy in commercial ecosystems.
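The lag window can be sketched as a strict-priority queue in which correction requests are served only when no revenue-generating job is waiting. This is a toy model under stated assumptions: the job kinds, the `PRIORITY` table, and the `correction_lag` function are hypothetical and do not describe any particular entity's system.

```python
import heapq
from typing import List, Tuple

# Assumed priority table: a lower number is served first.
PRIORITY = {"revenue": 0, "correction": 1}


def correction_lag(arrivals: List[List[str]], capacity: int) -> List[int]:
    """Return, for each correction served, the number of ticks it waited.

    arrivals[t] lists the job kinds arriving at tick t. Up to `capacity`
    jobs are served per tick, always in priority order.
    """
    if capacity < 1:
        raise ValueError("capacity must be at least 1")
    queue: List[Tuple[int, int, int]] = []  # (priority, arrival_tick, sequence)
    lags: List[int] = []
    seq = 0
    tick = 0
    while tick < len(arrivals) or queue:
        if tick < len(arrivals):
            for kind in arrivals[tick]:
                heapq.heappush(queue, (PRIORITY[kind], tick, seq))
                seq += 1
        for _ in range(min(capacity, len(queue))):
            priority, arrived, _seq = heapq.heappop(queue)
            if priority == PRIORITY["correction"]:
                lags.append(tick - arrived)
        tick += 1
    return lags


# One correction arrives at tick 0 alongside a steady stream of revenue jobs.
arrivals = [["correction", "revenue", "revenue"],
            ["revenue", "revenue"],
            ["revenue", "revenue"],
            []]
print(correction_lag(arrivals, capacity=2))
```

In this model the correction is never refused; it simply waits until the revenue stream pauses, and the inaccurate record stays in use for every tick of that wait.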

Feedback Loop Obfuscation

When hybrid entities control both identity verification and correction channels, they can obscure downstream usage feedback that would otherwise signal urgent need for rectification, particularly in algorithmic lending or content moderation systems. Because these entities do not share internal usage logs with data subjects or auditors, individuals cannot provide contextual evidence—like denied insurance applications or shadow-banned content—that could validate a correction request. The overlooked mechanism is the absence of traceable identity impact trails, which severs the causal link between error and harm. This changes the standard understanding by showing that correction efficacy depends not on appeal systems but on reverse visibility into automated decision chains.

Normalization of Deviation

Profit-driven systems incentivize retaining slightly inaccurate identity data when corrections reduce model predictability in recommendation or risk-assessment engines, leading to active deprioritization of precision in favor of statistical coherence. For instance, social media platforms may resist updating a user's declared gender identity if it disrupts engagement models trained on historical behavioral clusters. The hidden dependency is the tension between individual data rights and system-level performance metrics, where identity 'accuracy' is subordinated to platform stability. This dimension is rarely acknowledged because regulation assumes identity facts are neutral, not destabilizing inputs to predictive architectures.