Do Opt-Out Mechanisms Empower Users or Exploit Trust in Health Apps?

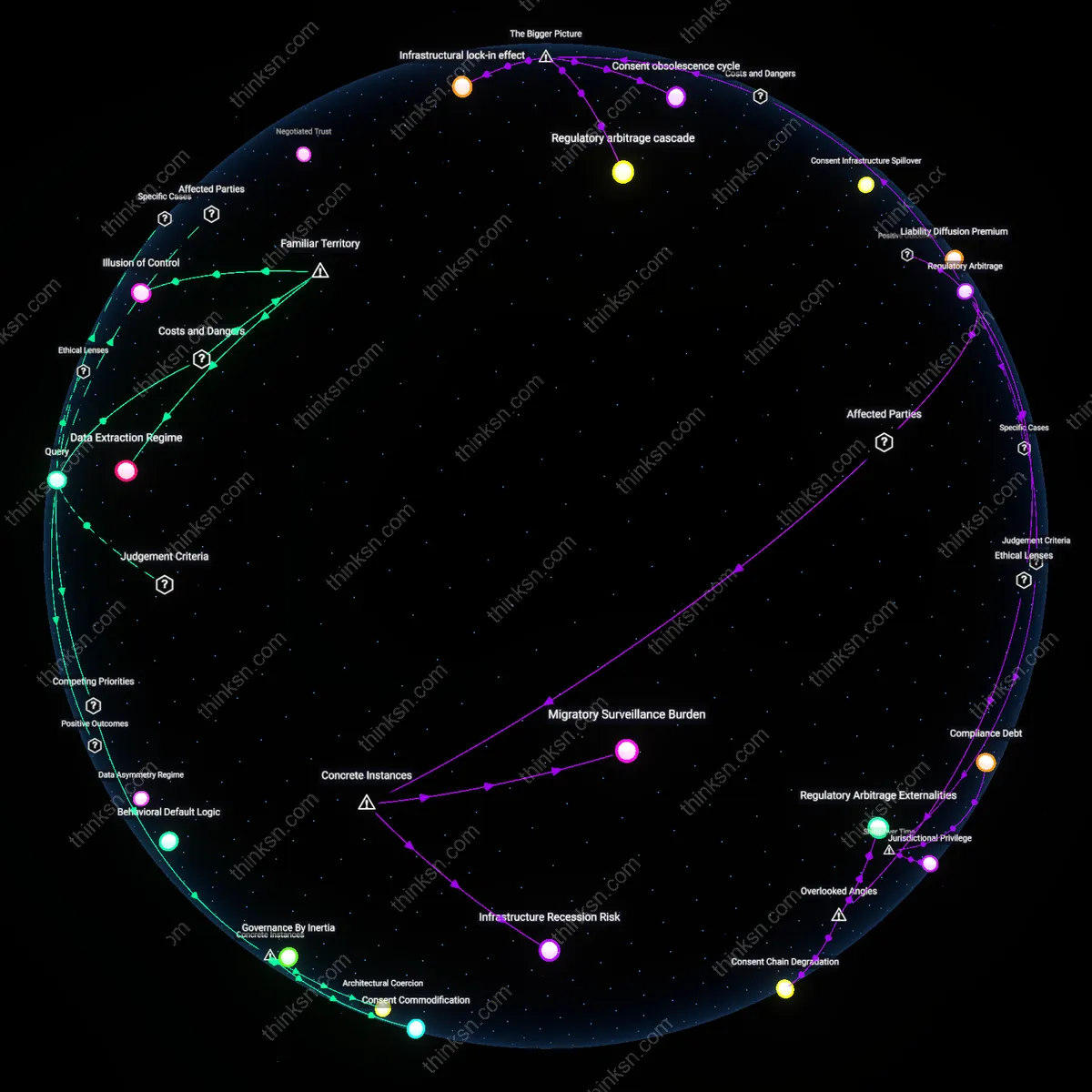

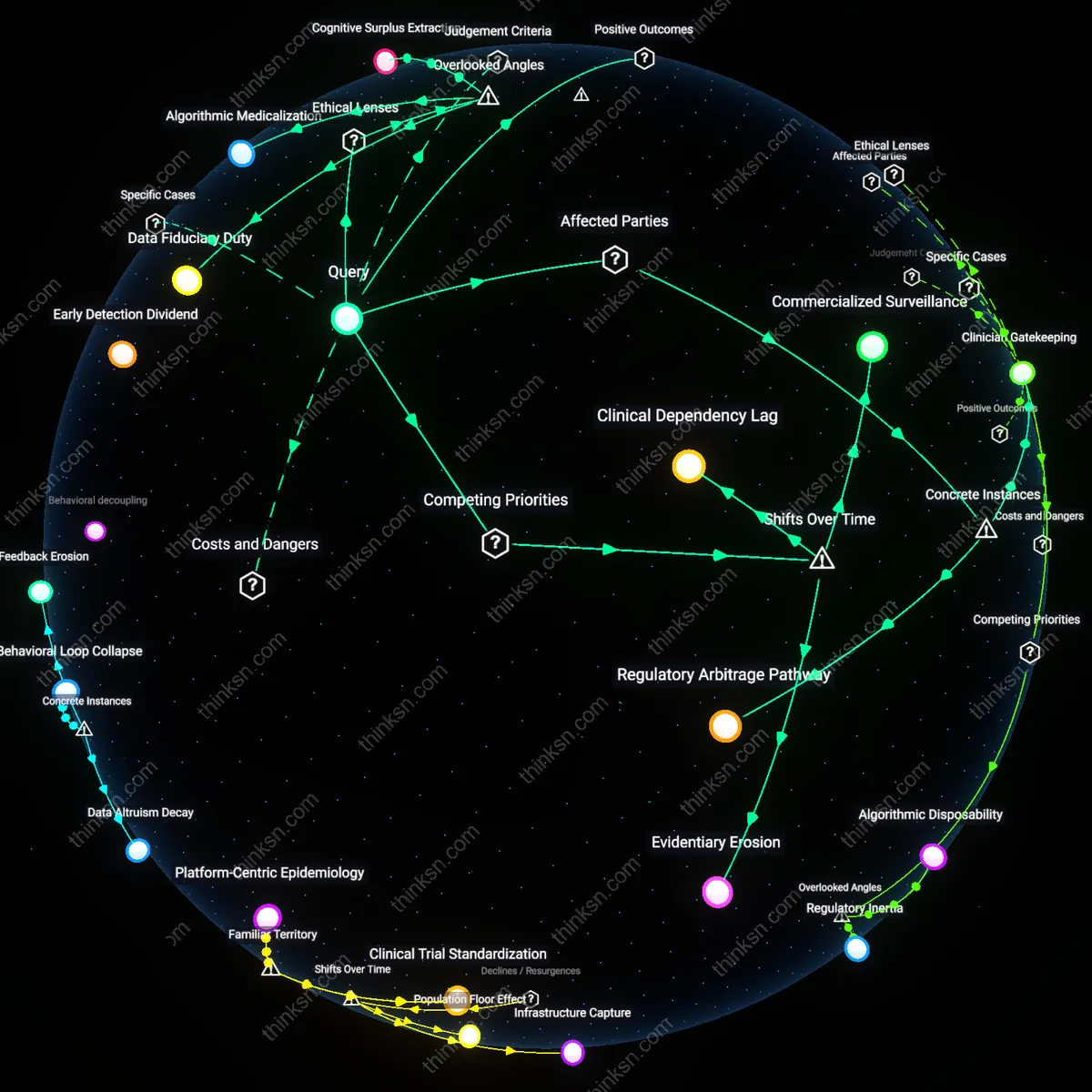

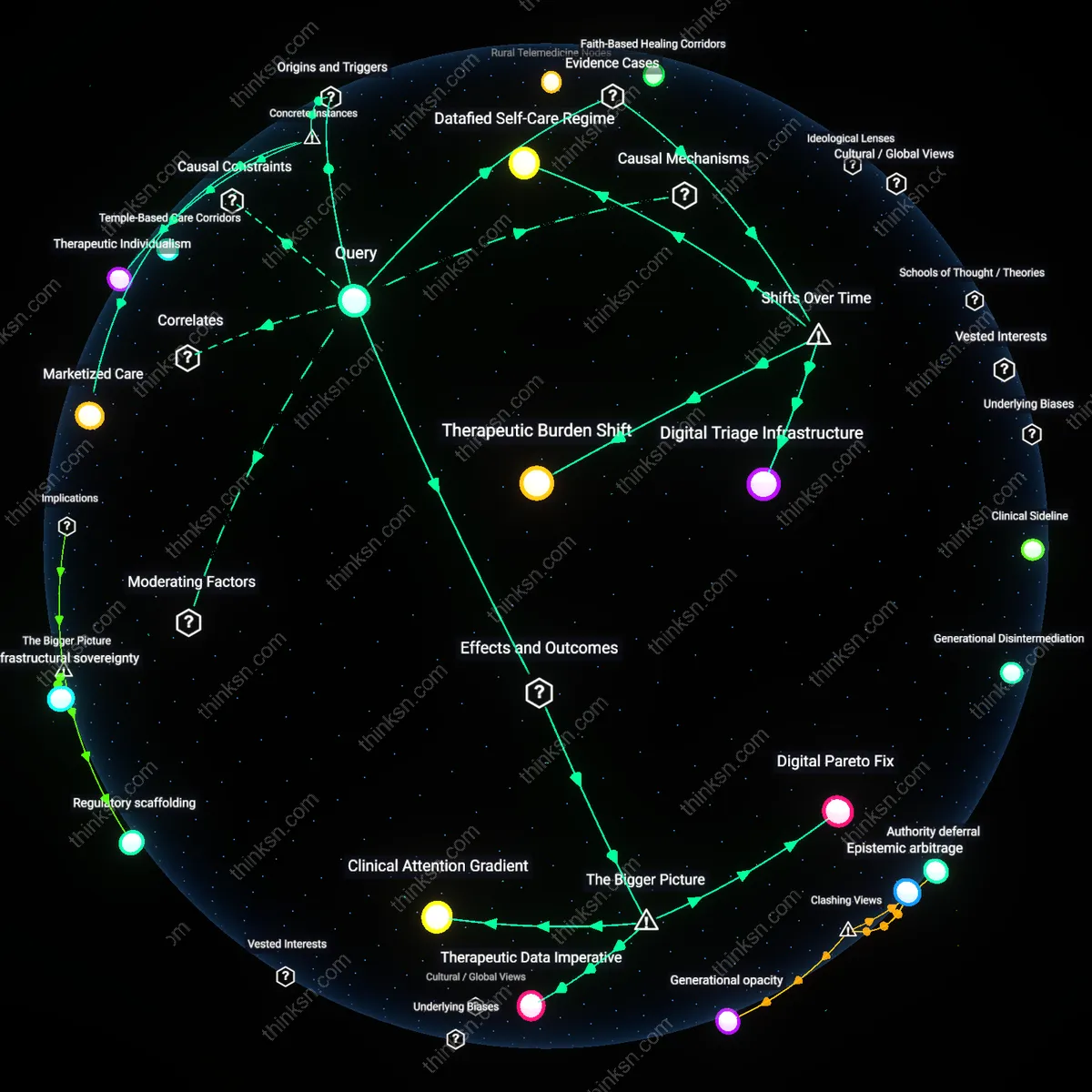

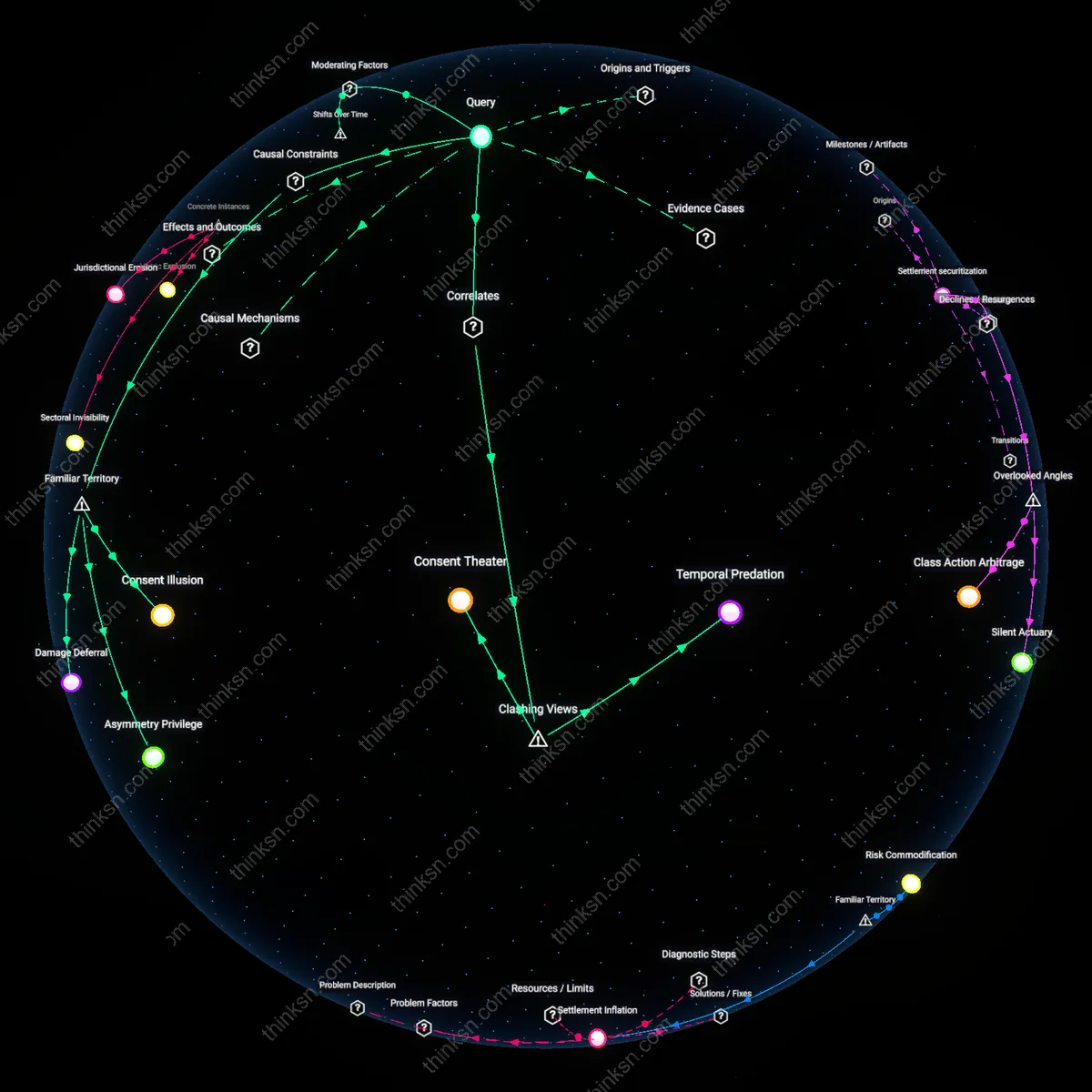

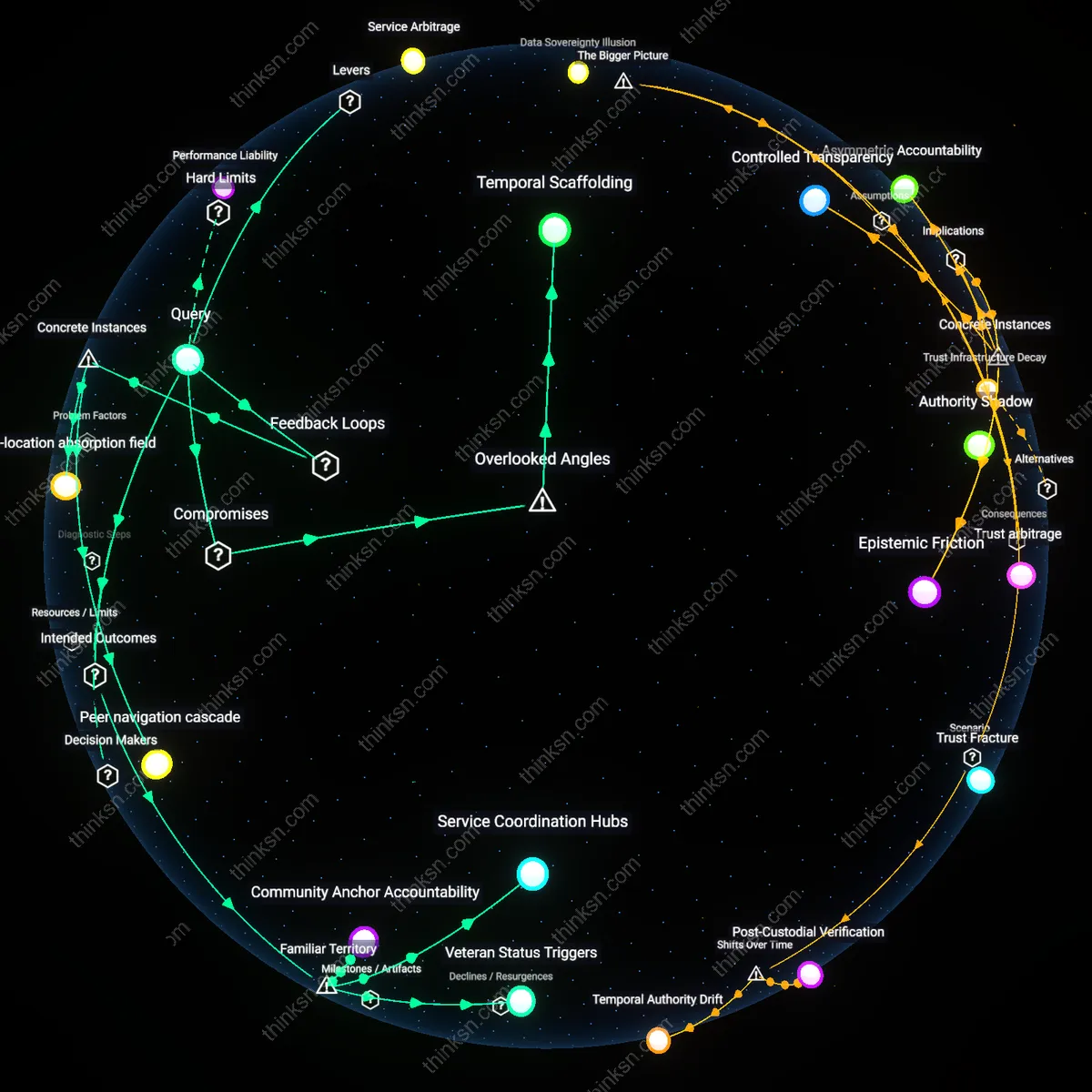

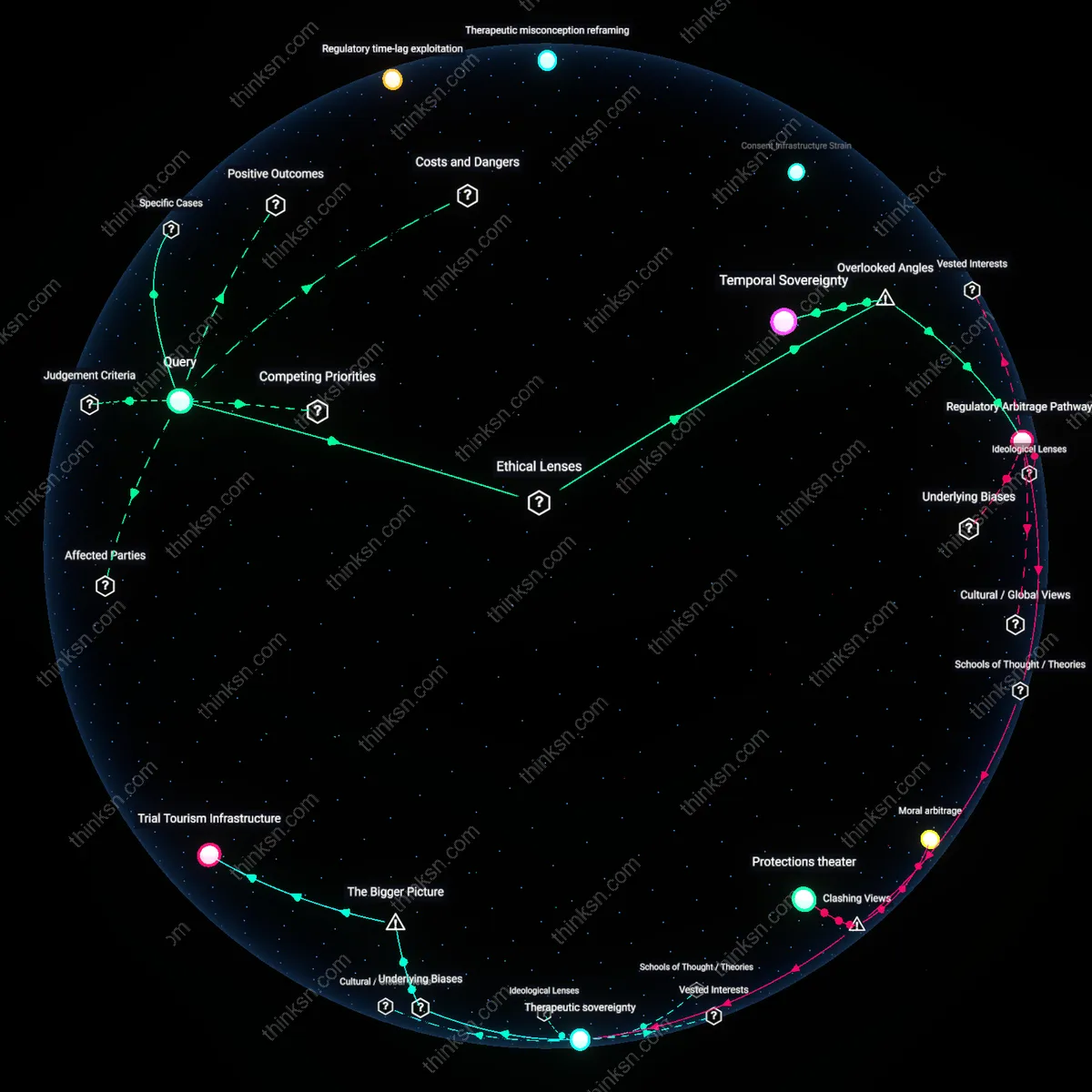

Analysis reveals 8 key thematic connections.

Key Findings

Consent Infrastructure

Opt-out consent in free health apps reflects a historical shift from clinician-mediated data practices to platform-driven data extraction, where users are positioned as passive contributors rather than active participants. This transition, accelerated after 2010 with the rise of consumer-facing digital health platforms like MyFitnessPal and Fitbit, replaced informed-consent norms rooted in medical ethics with scalable, automated enrollment systems designed for venture-backed data accumulation. The mechanism—embedding consent within app onboarding flows—reduces provider liability while expanding data liquidity for third-party monetization, revealing how post-2010 digital health platforms repurposed clinical trust into a technical governance feature. What’s underappreciated is that this shift didn’t merely erode autonomy but actively constructed a new operational layer—standardized, invisible, and systemic—through which personal health data could be continuously harvested without repeated negotiation.

Data Asymmetry Regime

The adoption of opt-out consent marks a decisive transition from pre-2000s regulatory models, where health data control was legally centralized in insurers and hospitals, to a post-2015 ecosystem where app developers and data brokers operate beyond HIPAA’s reach. In this new regime, providers exploit a jurisdictional gap: most consumer health apps are neither HIPAA covered entities nor business associates, so they fall under the FTC’s general consumer-protection authority rather than health privacy law, and they deploy default-sharing settings that treat user data as fungible assets. This shift enables continuous data aggregation at scale, favoring platform profitability over patient agency, and institutionalizes an imbalance not through overt coercion but through engineered inattention. The non-obvious insight is that power here is no longer held statically by institutions but dynamically produced through evolving regulatory arbitrage between health law and consumer tech policy.

Behavioral Default Logic

The normalization of opt-out consent in free health apps emerged decisively after 2018, when behavioral design principles from Silicon Valley were fully integrated into digital health onboarding, transforming passive agreement into a scalable data procurement engine. Unlike earlier eras when consent required deliberate action—such as signing a paper form—today’s apps exploit cognitive biases like status quo preference and choice overload to nudge users toward data sharing as the path of least resistance. This mechanism, operationalized through A/B tested interfaces and dark patterns, shifts power toward providers not by denying access but by structuring decisions in ways that make non-consent effortful. The underappreciated consequence is that user ‘choice’ has been redefined not as autonomy but as friction tolerance, producing a system where compliance is behaviorally enforced rather than ethically negotiated.
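The default effect described above can be made concrete with a toy calculation. The sketch below is illustrative only: `ConsentFlow`, `expected_sharing_rate`, and the 25% override rate are hypothetical names and numbers chosen for the example, not measurements from any app. It assumes the same fraction of users is willing to change a default in either direction, and shows how the default alone then determines how many end up sharing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConsentFlow:
    """A hypothetical onboarding flow; every field is an illustrative assumption."""
    sharing_on_by_default: bool  # True = opt-out design, False = opt-in design
    override_rate: float         # assumed fraction of users who change the default


def expected_sharing_rate(flow: ConsentFlow) -> float:
    """Return the fraction of users who end up sharing data under this flow."""
    if not 0.0 <= flow.override_rate <= 1.0:
        raise ValueError("override_rate must be between 0 and 1")
    if flow.sharing_on_by_default:
        # Opt-out: everyone shares except those who bother to switch it off.
        return 1.0 - flow.override_rate
    # Opt-in: nobody shares except those who bother to switch it on.
    return flow.override_rate


# Same (assumed) willingness to act, opposite defaults.
opt_out = ConsentFlow(sharing_on_by_default=True, override_rate=0.25)
opt_in = ConsentFlow(sharing_on_by_default=False, override_rate=0.25)

print(expected_sharing_rate(opt_out))  # 0.75
print(expected_sharing_rate(opt_in))   # 0.25
```

With identical user behavior, the opt-out flow yields three times the sharing rate of the opt-in flow in this toy setup, which is the sense in which "choice" becomes a measure of friction tolerance rather than preference.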

Data Extraction Regime

Opt-out consent in free health apps enables companies to automatically harvest user health data without active permission, locking individuals into passive contributor roles. This default enrollment mechanism exploits user inertia and low health literacy, allowing platforms like fitness trackers and symptom checkers to amass vast biometric datasets under the guise of convenience. The systemic cost is the normalization of surveillance as a condition of care access, where the real service provided is not health support but data capture—turning intimate bodily information into corporate assets while users remain unaware of scale or use. What’s underappreciated is that this isn’t merely poor consent design, but a deliberate infrastructure for invisible extraction.

Illusion of Control

Opt-out settings create the appearance of user agency while ensuring most will never deactivate data sharing, due to confusing interfaces and psychological default bias. This dynamic plays out in apps like calorie counters or meditation platforms, where privacy toggles are buried under layers of menus, reinforcing the myth that consent is a choice. The system leverages familiar digital habits—swiping, skipping, trusting defaults—to mask powerlessness. What people don’t recognize is that the interface itself is the coercion, designed not to inform but to neutralize resistance under the guise of autonomy.
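One rough way to quantify how deeply a privacy toggle is buried is to count the taps needed to reach it. The sketch below uses an invented menu tree; `SETTINGS_MENU`, its labels, and `taps_to_reach` are hypothetical and do not describe any real app. It treats each level of nesting as one tap.

```python
from typing import Optional

# Hypothetical settings menu: nested dicts are submenus, None marks a leaf toggle.
SETTINGS_MENU = {
    "Profile": {},
    "Notifications": {},
    "More": {
        "Legal": {
            "Privacy": {
                "Share data with partners": None,
            },
        },
    },
}


def taps_to_reach(menu: dict, target: str, depth: int = 1) -> Optional[int]:
    """Return the number of taps needed to reach `target`, or None if it is absent."""
    for label, submenu in menu.items():
        if label == target:
            return depth
        if isinstance(submenu, dict):
            # Descend one level; each level costs one additional tap.
            found = taps_to_reach(submenu, target, depth + 1)
            if found is not None:
                return found
    return None


print(taps_to_reach(SETTINGS_MENU, "Share data with partners"))  # 4
print(taps_to_reach(SETTINGS_MENU, "Profile"))                   # 1
```

In this made-up layout the sharing toggle sits four taps deep while everyday settings sit one tap away. An audit could use a depth count like this as a crude proxy for the effort that opting out demands.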

Consent Commodification

The deployment of opt-out consent in the NHS-backed Babylon Health app reduced informed agreement to a procedural hurdle that users bypassed to access free symptom-checking services, transforming consent into a transactional artifact rather than a mechanism of control. Within the UK’s public health ecosystem, where demand for digital triage surged during post-2010 austerity-driven service reductions, Babylon leveraged NHS endorsement to naturalize the collection of data on user behavior, medical history, and device usage, and that collection persisted by default unless actively refused. This mechanism reveals how public-sector partnership with private vendors shifts the burden of data sovereignty onto overstretched patients. Because those patients prioritize immediate access to care, they have little practical room to exercise their privacy rights, and consent is commodified as a byproduct of service necessity rather than a safeguard against exploitation.

Architectural Coercion

In India’s Ayushman Bharat Digital Mission, third-party health apps integrated with the national health ID system employ opt-out defaults to automatically enroll users in data-sharing networks linking private clinics, insurers, and wellness platforms, justified as necessary for seamless care coordination. Given patchy digital literacy in rural rollout regions like Uttar Pradesh and the perceived non-negotiability of registration for subsidized treatment, users encounter consent interfaces embedded in technical workflows they cannot meaningfully navigate, which renders refusal practically invisible. This reveals how infrastructure-scale digitization weaponizes usability norms to coerce acquiescence, privileging system interoperability and administrative efficiency over individual agency, thereby institutionalizing asymmetry through design rather than overt policy.

Governance By Inertia

Akili Interactive’s EndeavorRx, an FDA-authorized prescription video game for pediatric ADHD delivered with app-based monitoring, illustrates how opt-out data clauses can become buried within clinical software used under therapeutic obligation, particularly for pediatric populations. Parents enrolling children in prescribed digital therapy face no meaningful alternative delivery method, making data-sharing consents de facto medical compliance requirements rather than discretionary choices. This case reveals how clinical validation loopholes allow health tech firms to position data extraction as care continuity, leveraging medical authority to freeze governance challenges in place through user dependence on treatment-adjacent services, thus normalizing surveillance as therapeutic adherence.