Regulating AI Fraud Detection: Weighing Privacy Against Loss Prevention

Analysis reveals 10 key thematic connections.

Key Findings

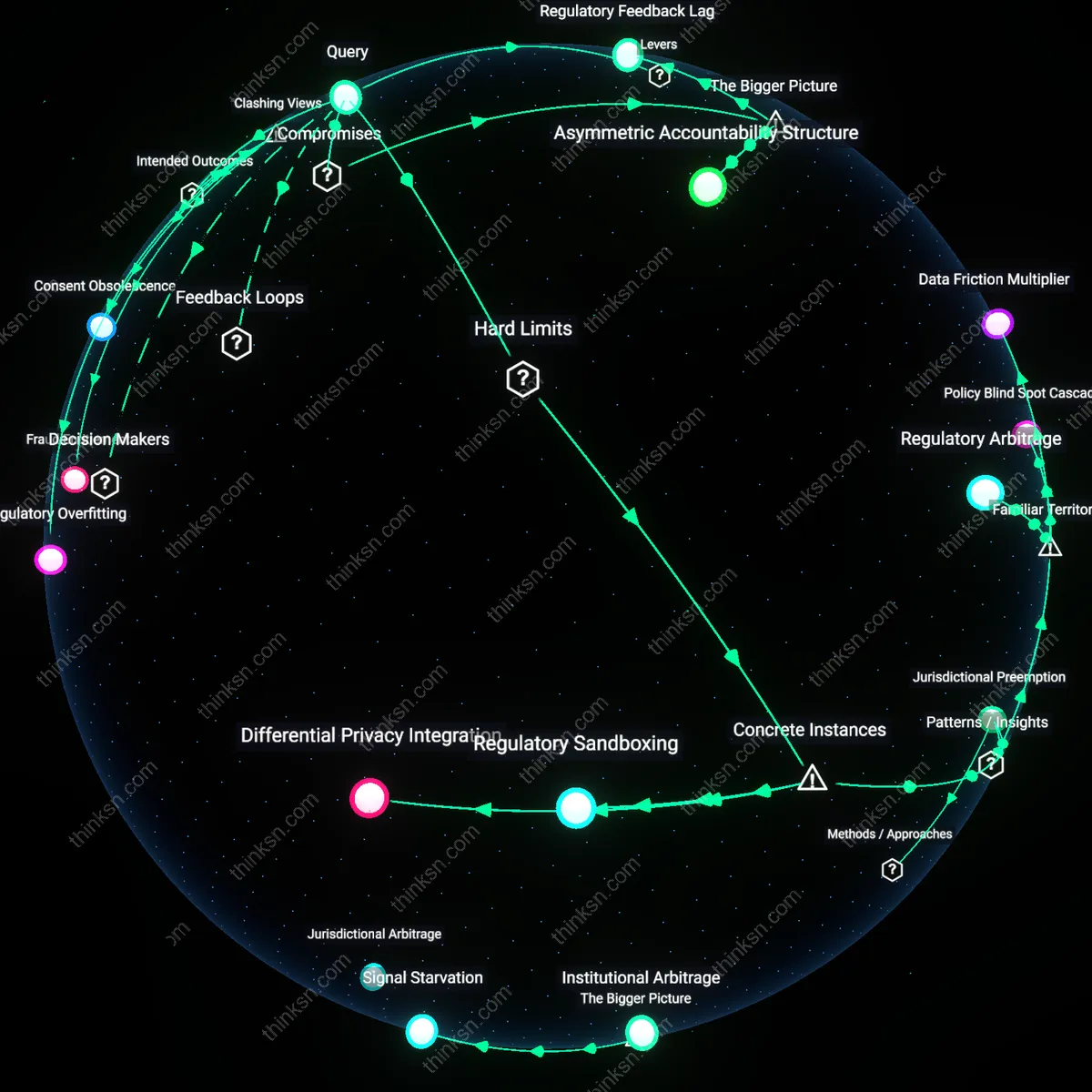

Regulatory Sandboxing

Regulators can balance AI fraud detection against privacy by confining new systems to supervised test environments where data use is strictly monitored, as in the UK Financial Conduct Authority's regulatory sandbox for fintech firms. Within such a framework, companies deploy AI fraud detection tools on limited consumer datasets under supervisory oversight, so performance can be evaluated without uncontrolled data exposure. The mechanism relies on iterative feedback between firms and regulators, which supports GDPR compliance while leaving room for innovation, a nuance often overlooked in debates that frame regulation as inherently obstructive. Controlled experimentation thus lets innovation proceed inside fixed privacy boundaries.

Differential Privacy Integration

Integrating differential privacy into AI fraud detection systems preserves much of the data's analytical utility while sharply limiting the risk of re-identification. Apple has adopted the technique for data collected from its devices. By adding calibrated random noise to query results or model updates, the system keeps aggregate statistics accurate enough for fraud pattern recognition while making any single individual's contribution statistically indistinguishable. The strength of the guarantee is set by a privacy parameter, usually written ε: smaller values mean more noise and stronger privacy. This technical boundary enforces privacy as a computational constraint rather than a policy overlay, a distinction rarely emphasized in regulatory discourse focused on consent and data minimization. The case illustrates how algorithmic design can embed privacy limits that are far harder to override institutionally than a written policy.
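To make the noise-adding step concrete, here is a minimal sketch of the Laplace mechanism, one standard way to achieve differential privacy for counting queries. It is an illustration and not a description of any vendor's system. The function names, the sample data, and the assumption that each consumer contributes at most one transaction (which gives the count a sensitivity of 1) are all hypothetical.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_fraud_count(flags: list[bool], epsilon: float, rng: random.Random) -> float:
    """Release the number of flagged transactions with epsilon-differential privacy.

    Assumption: adding or removing one consumer changes the count by at most 1
    (sensitivity 1), so Laplace noise with scale 1/epsilon is sufficient.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    return sum(flags) + laplace_noise(1.0 / epsilon, rng)


if __name__ == "__main__":
    rng = random.Random(7)  # fixed seed only so the demo is repeatable
    flags = [i % 20 == 0 for i in range(1000)]  # hypothetical data: 50 flagged
    for eps in (0.1, 1.0, 10.0):
        # Smaller epsilon -> larger noise -> stronger privacy, lower accuracy.
        print(eps, round(private_fraud_count(flags, eps, rng), 2))
```

The released count stays close to the true value on average, but no single output reveals with confidence whether a particular consumer's transaction was in the data. Choosing ε is a policy decision as much as an engineering one.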

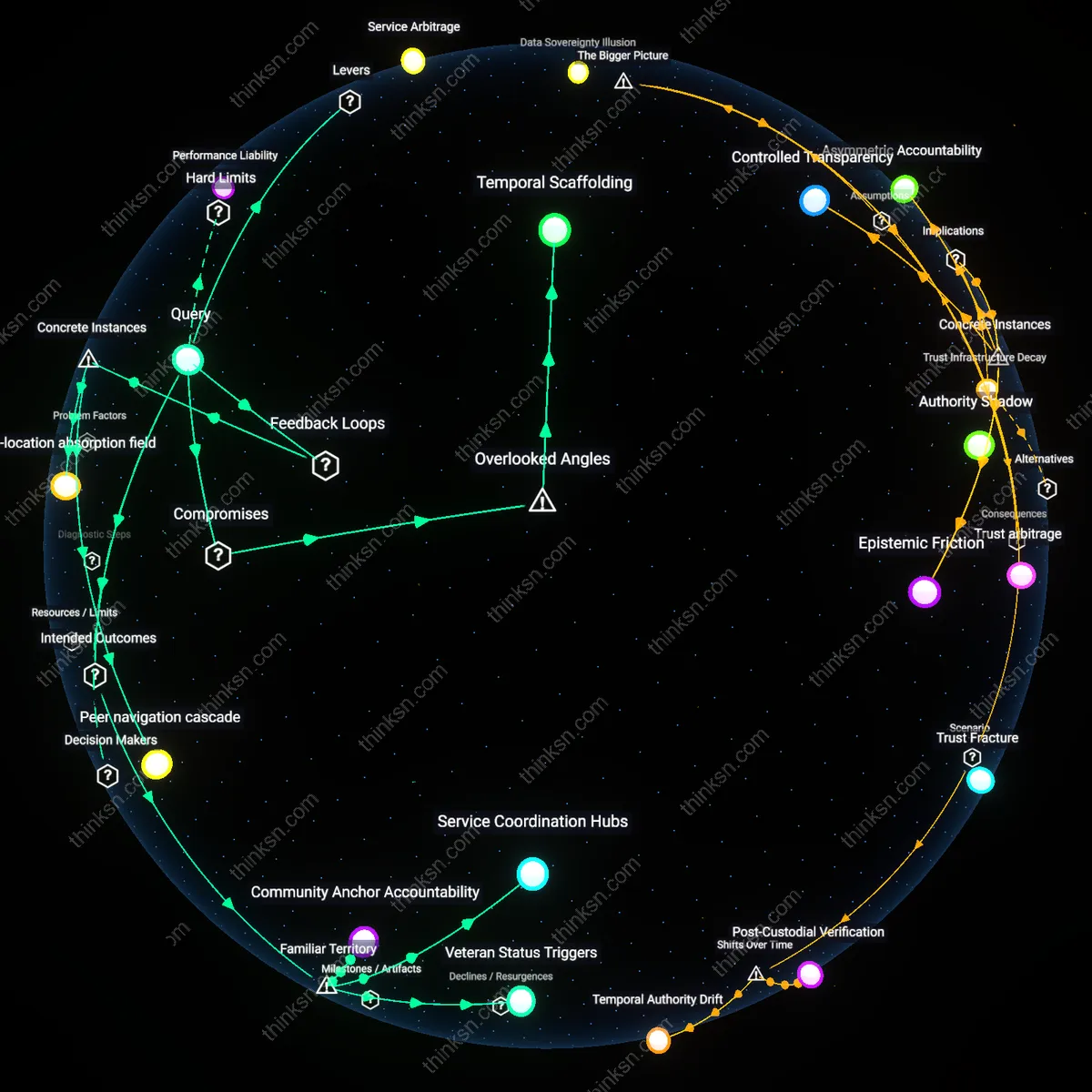

Jurisdictional Preemption

State-level AI fraud applications must comply with federal privacy standards such as the U.S. Privacy Act of 1974, which limits how government agencies can retain and use personal data. The constraint was visible when Illinois' AI-driven unemployment fraud algorithm was curtailed by federal auditors over unauthorized data sharing. Enforcing the federal boundary prevented the state from scaling its system without privacy safeguards, demonstrating how higher-tier legal frameworks can constrain AI deployment regardless of how well the system detects fraud. Regulatory balance is therefore often achieved not through compromise but through hierarchical legal invalidation of non-compliant systems.

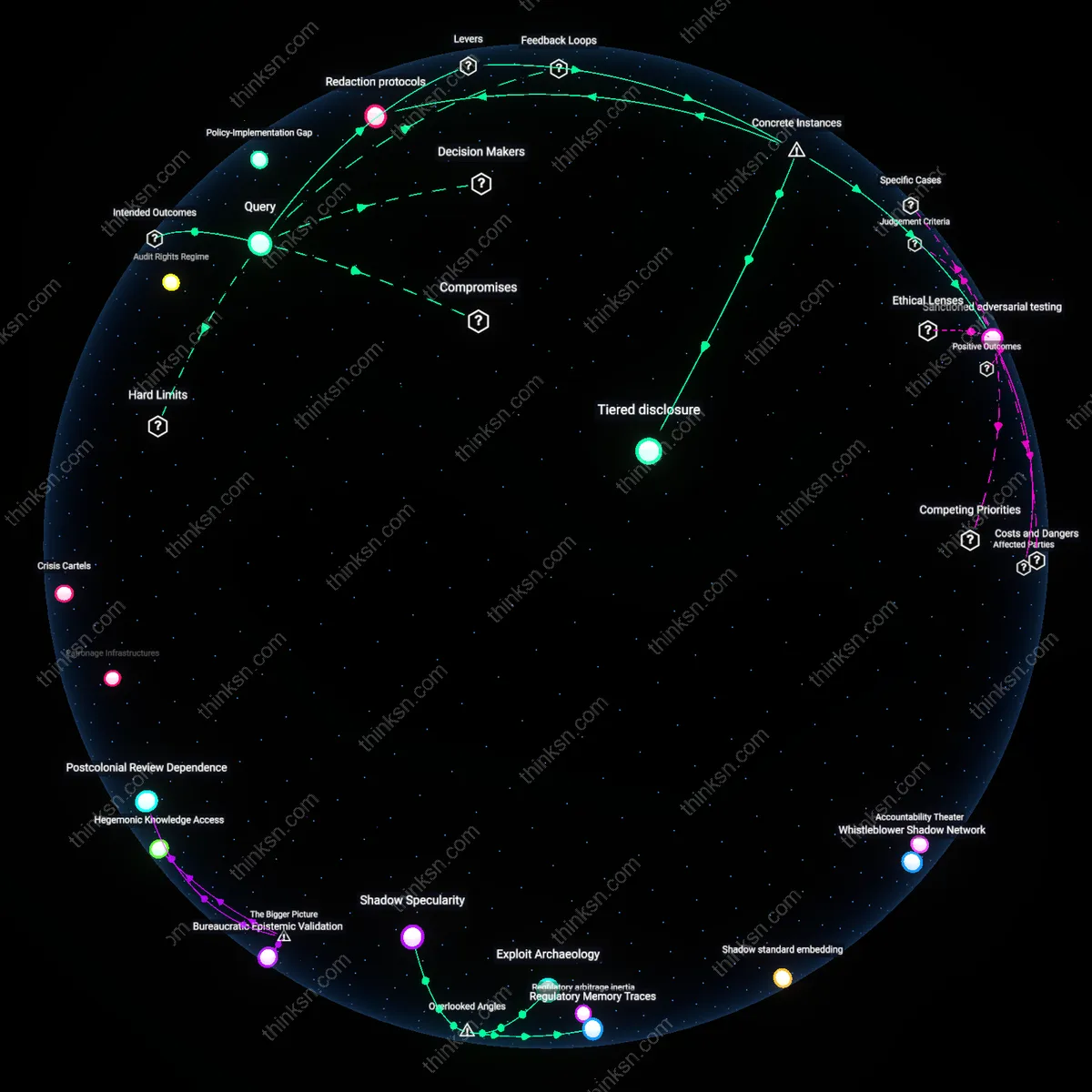

Consent Erosion

Regulators can limit third-party data sharing for AI fraud detection by strictly interpreting opt-in consent requirements under frameworks such as GDPR or the California Privacy Rights Act. Enforcing granular user consent as a gatekeeper changes the economic calculus for firms that rely on broad data pools to train detection models, compelling them either to reduce data intake or to rely more on synthetic data. This marks a reversal from the 1990s–2000s era of implicit consent and data aggregation, when fraud detection justified expansive data use under 'legitimate interest' claims. Today's requirement for meaningful consent exposes how privacy erosion was institutionalized through assumed user acquiescence, an assumption now challenged by legal insistence on user agency. The underappreciated dynamic is that consent functions less as empowerment than as a procedural chokepoint, one that reveals the fragility of AI systems dependent on unconsented data.
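A small sketch can show how a granular consent gate shrinks a training pool. The record layout, the purpose label `fraud_model_training`, and the function names are hypothetical and are not drawn from any statute or product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Transaction:
    user_id: str
    amount: float
    consented_purposes: frozenset  # purposes the user explicitly opted into


def training_pool(records, purpose):
    """Keep only records whose owner opted in to this exact purpose."""
    return [r for r in records if purpose in r.consented_purposes]


def retention_rate(records, purpose):
    """Share of the original data pool that survives the consent gate."""
    return len(training_pool(records, purpose)) / len(records) if records else 0.0


if __name__ == "__main__":
    records = [
        Transaction("u1", 40.0, frozenset({"fraud_model_training"})),
        Transaction("u2", 15.5, frozenset()),  # no opt-in at all
        Transaction("u3", 980.0, frozenset({"marketing"})),  # opted in, wrong purpose
        Transaction("u4", 12.0, frozenset({"fraud_model_training", "marketing"})),
    ]
    print(retention_rate(records, "fraud_model_training"))  # 0.5
```

Consent given for one purpose does not carry over to another, so only half of the records remain usable for model training. This is the "procedural chokepoint" effect in miniature.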

Surveillance Drift

Regulators can impose statutory sunset clauses on AI fraud detection models, requiring legislative or judicial renewal after fixed intervals to prevent function creep into broader surveillance. This tool, exemplified by renewability conditions in French AI legislation and in proposed U.S. algorithmic accountability bills, forces periodic reassessment of whether detection systems still serve narrowly defined purposes. It disrupts the historical trajectory in which fraud tools expand into behavioral monitoring (e.g., credit scoring, insurance underwriting) through incremental risk-mitigation justifications. The shift from targeted intervention to systemic monitoring, evident in financial technology ecosystems after 2010, shows that privacy risks accumulate not only from single breaches but from sustained, unreviewed operation. The non-obvious insight is that time itself becomes a regulatory instrument, interrupting the default drift toward normalized surveillance.
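One way such a clause might be enforced in software is sketched below: an authorization that names a single purpose and lapses after a fixed term unless it is renewed. The class, field names, and the three-year term in the demo are hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from datetime import date


def add_years(d: date, years: int) -> date:
    """Shift a date by whole years, mapping Feb 29 to Feb 28 when needed."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:
        return d.replace(year=d.year + years, day=28)


@dataclass(frozen=True)
class Authorization:
    purpose: str       # the narrowly defined use that was approved
    granted_on: date
    term_years: int    # fixed interval before renewal is required

    @property
    def expires_on(self) -> date:
        return add_years(self.granted_on, self.term_years)

    def renew(self, renewed_on: date) -> "Authorization":
        """Model a successful renewal: same purpose, fresh term."""
        return Authorization(self.purpose, renewed_on, self.term_years)


def may_score(auth: Authorization, requested_purpose: str, today: date) -> bool:
    """Allow scoring only for the approved purpose and only before expiry."""
    in_term = auth.granted_on <= today < auth.expires_on
    return in_term and requested_purpose == auth.purpose


if __name__ == "__main__":
    auth = Authorization("payment_fraud_detection", date(2024, 3, 1), 3)
    print(may_score(auth, "payment_fraud_detection", date(2026, 12, 31)))  # True
    print(may_score(auth, "credit_scoring", date(2025, 1, 1)))             # False
    print(may_score(auth, "payment_fraud_detection", date(2027, 3, 1)))    # False
```

The check combines the two conditions the sunset argument depends on: the purpose must match the original grant, and the grant must still be within its term. Inaction therefore leads to shutdown instead of silent continuation.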

Regulatory Feedback Lag

Regulators must institutionalize iterative rulemaking that treats AI fraud detection policies as provisional and adjusts them as new privacy harms emerge from real-world deployment. This approach acknowledges that static regulations cannot keep pace with the evolving tactics of both fraudsters and AI systems. The result is a gap in which privacy violations accumulate before legal remedies catch up. The gap is evident in cross-jurisdictional fintech expansions, where models trained with broad data access in one region affect user privacy in another with weaker protections. The non-obvious insight is that regulatory timing, not just content, becomes a primary determinant of privacy preservation, because slow feedback loops let invasive practices become normal before oversight can respond.

Asymmetric Accountability Structure

Regulators should assign liability for AI system outcomes in proportion to organizational control over data flows, making core infrastructure providers such as payment processors and cloud platforms responsible for ensuring that downstream fraud models meet privacy thresholds. This shifts enforcement from reactive penalties on end-user companies to proactive governance at architectural chokepoints where data aggregation is technically centralized, as seen in the SWIFT network's influence on anti-fraud protocols across banks. The critical but hidden factor is that consumer privacy erosion often stems not from individual model decisions but from the concentration of data processing power in a few enabling institutions. Constraining those institutions is more efficient for regulators than monitoring many dispersed firms.

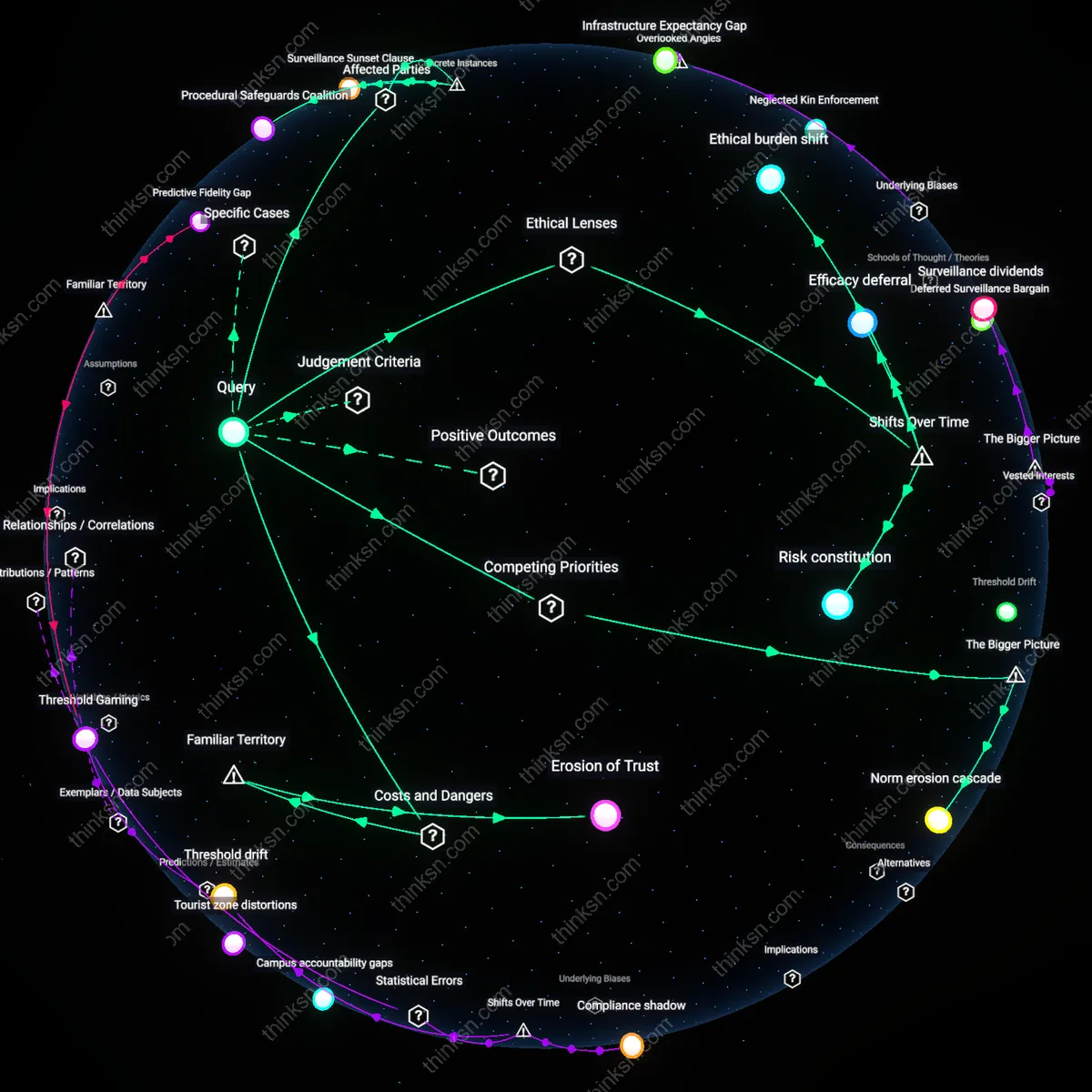

Regulatory Overfitting

Regulators should require AI fraud detection systems to undergo jurisdiction-specific privacy stress tests that simulate adversarial data leaks. Compliance with general data protection rules such as GDPR or CCPA can create an illusion of privacy safety while failing to account for how machine learning models memorize and re-emit sensitive consumer patterns. Research on inference attacks indicates that models trained on anonymized data can still leak information about individuals, a risk that grows in financial services ecosystems where model access is shared across third-party vendors. The requirement exposes how reliance on static privacy frameworks neglects the dynamic risk of models overfitting to personal data, and how it falsely equates procedural compliance with actual consumer protection. The non-obvious insight is that tighter regulation of model architecture, not just data handling, is what ultimately determines privacy integrity in AI fraud systems.
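A stress test of this kind could, for instance, measure how well a simple membership inference attack separates training records from unseen ones. The sketch below is a toy version under stated assumptions: the "model" is a deliberately overfitted memorizer, the transactions are random (amount, hour) pairs, and the 0.99 threshold is arbitrary. None of these names or values come from an actual regulatory test.

```python
import math
import random


def nearest_distance(training_rows, row):
    """Distance from `row` to the closest memorized training row."""
    return min(math.dist(row, t) for t in training_rows)


def memorizer_score(training_rows):
    """Toy stand-in for an overfitted model: confidence peaks on rows it has seen."""
    return lambda row: 1.0 / (1.0 + nearest_distance(training_rows, row))


def membership_advantage(score, members, non_members, threshold):
    """True-positive rate minus false-positive rate of a threshold attack.

    The attacker guesses "was in the training set" whenever score >= threshold.
    0.0 means the attacker learns nothing; 1.0 means membership is fully exposed.
    """
    tpr = sum(score(r) >= threshold for r in members) / len(members)
    fpr = sum(score(r) >= threshold for r in non_members) / len(non_members)
    return tpr - fpr


def sample_rows(rng, n):
    """Hypothetical transactions as (amount, hour-of-day) pairs."""
    return [(rng.uniform(0, 1000), rng.uniform(0, 24)) for _ in range(n)]


if __name__ == "__main__":
    rng = random.Random(1)
    members, outsiders = sample_rows(rng, 200), sample_rows(rng, 200)
    leaky = memorizer_score(members)
    print(membership_advantage(leaky, members, outsiders, threshold=0.99))
```

A regulator-defined test might fail any model whose advantage exceeds an agreed ceiling, even when the training data itself was handled in full compliance with data protection rules.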

Fraud Entitlement

Regulators must impose a sunset clause on the use of consumer biometric data in AI fraud detection, ending that use after three years unless it is renewed through public evidentiary hearings. Current practice treats fraud reduction as an unchallengeable public good that justifies perpetual surveillance creep. Biometric systems for payment verification and identity authentication, deployed widely by fintech firms under regulatory tolerance, increasingly use behavioral data such as typing rhythm and voice cadence, which often fall outside traditional definitions of personally identifiable information. This loophole allows firms to bypass informed consent requirements while building detailed consumer profiles under the cover of security. The non-obvious consequence is that regulators have implicitly adopted a 'fraud entitlement' framework, in which preventing financial harm takes moral precedence over protecting privacy, and invasive data practices become default tools rather than contested interventions.

Consent Obsolescence

Regulators should prohibit AI fraud detection models from operating on data obtained through implied consent mechanisms such as terms-of-service agreements. These constructs are functionally meaningless where consumers cannot opt out of financial services without being excluded from essential economic participation. Research reportedly shows that over 90% of U.S. banking customers do not read or understand permission clauses related to AI-driven monitoring, yet they are deemed to have consented under current legal interpretations. This renders 'consent' a ceremonial rather than operational check on data use, especially when fraud detection algorithms train on years of transactional behavior without re-authorization. The non-obvious insight is that the legal fiction of consent has become obsolete under embedded, continuous AI monitoring, leaving ex-ante permission regimes structurally incapable of protecting privacy despite their dominance in regulatory discourse.