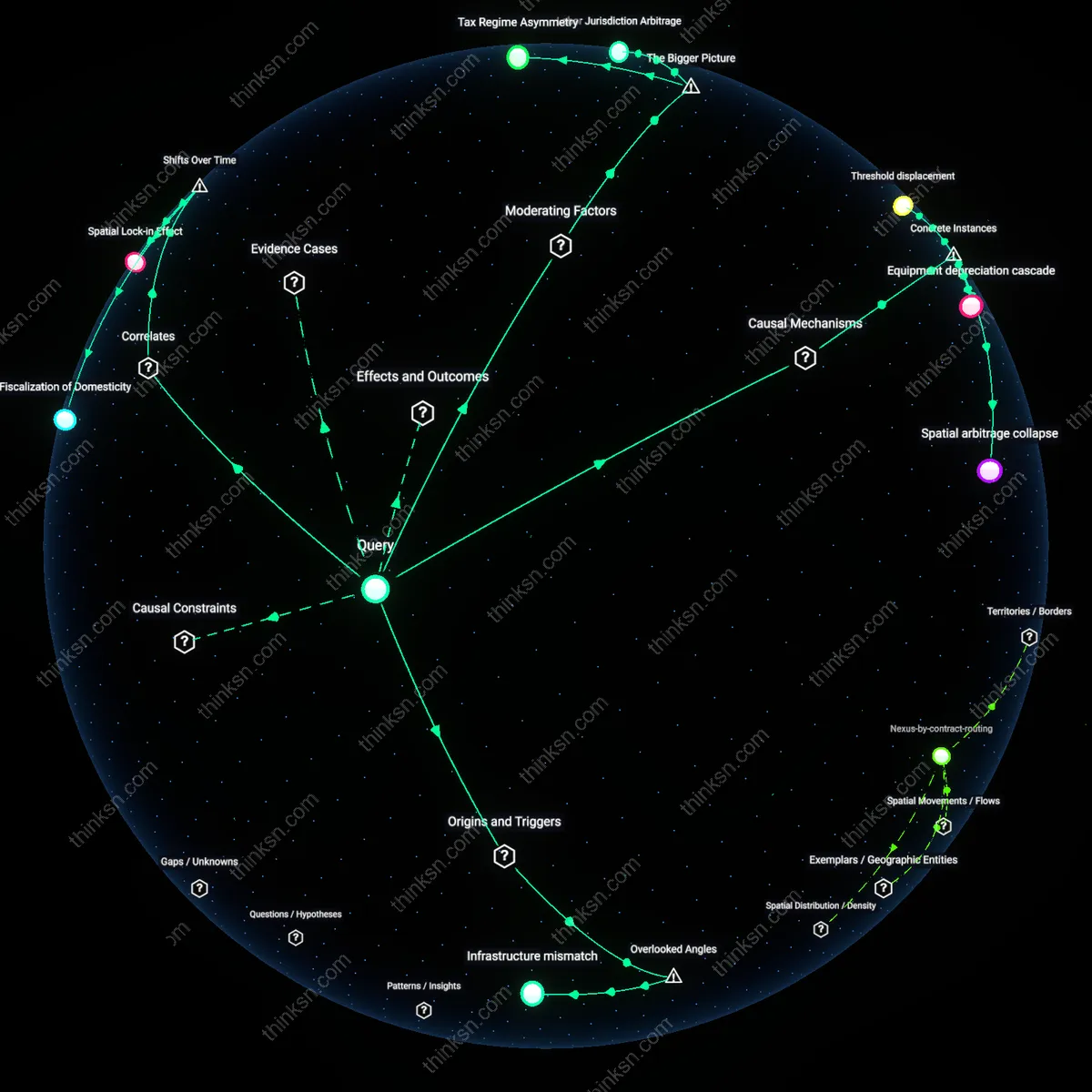

Tax Incentives for Speech Moderation: A Double-Edged Sword?

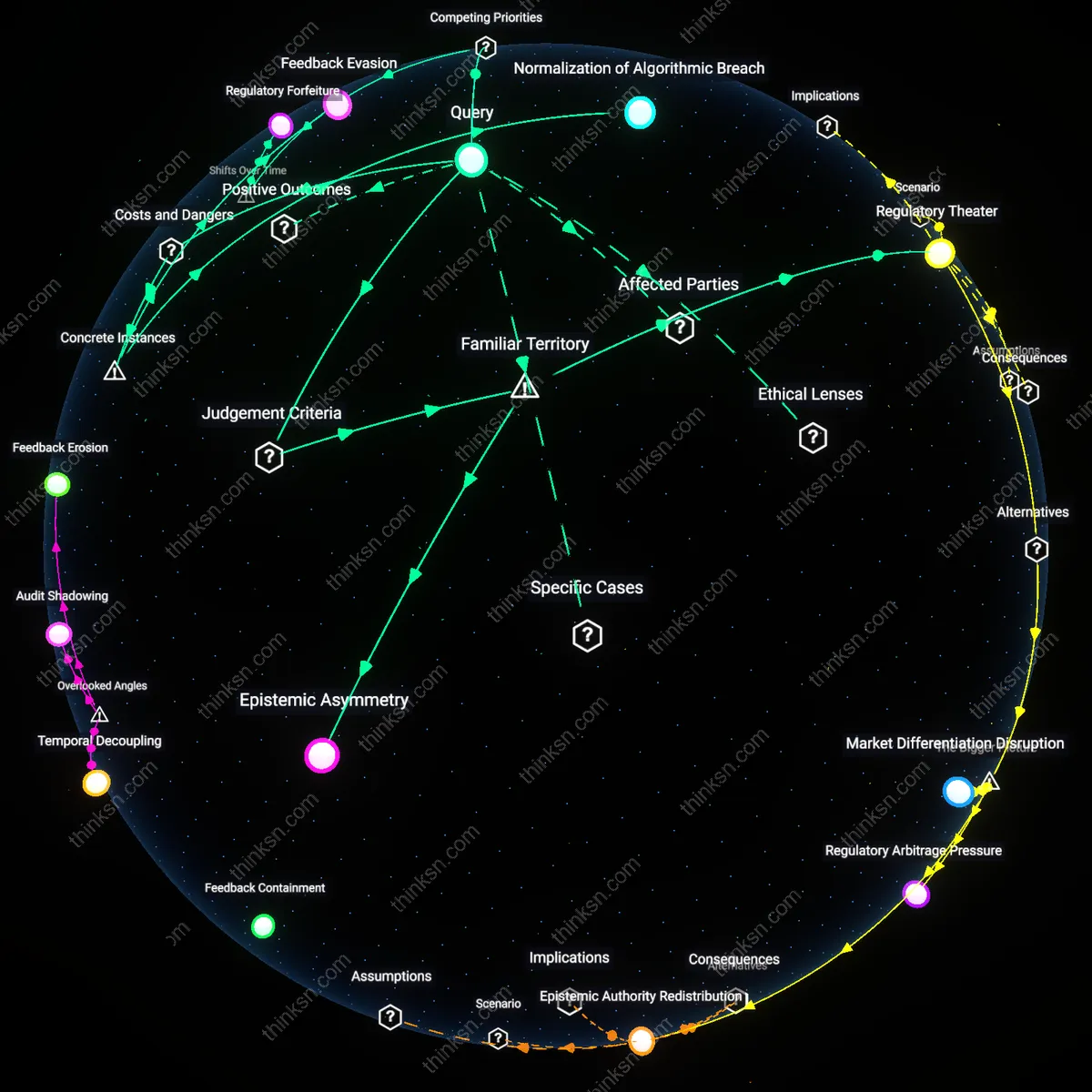

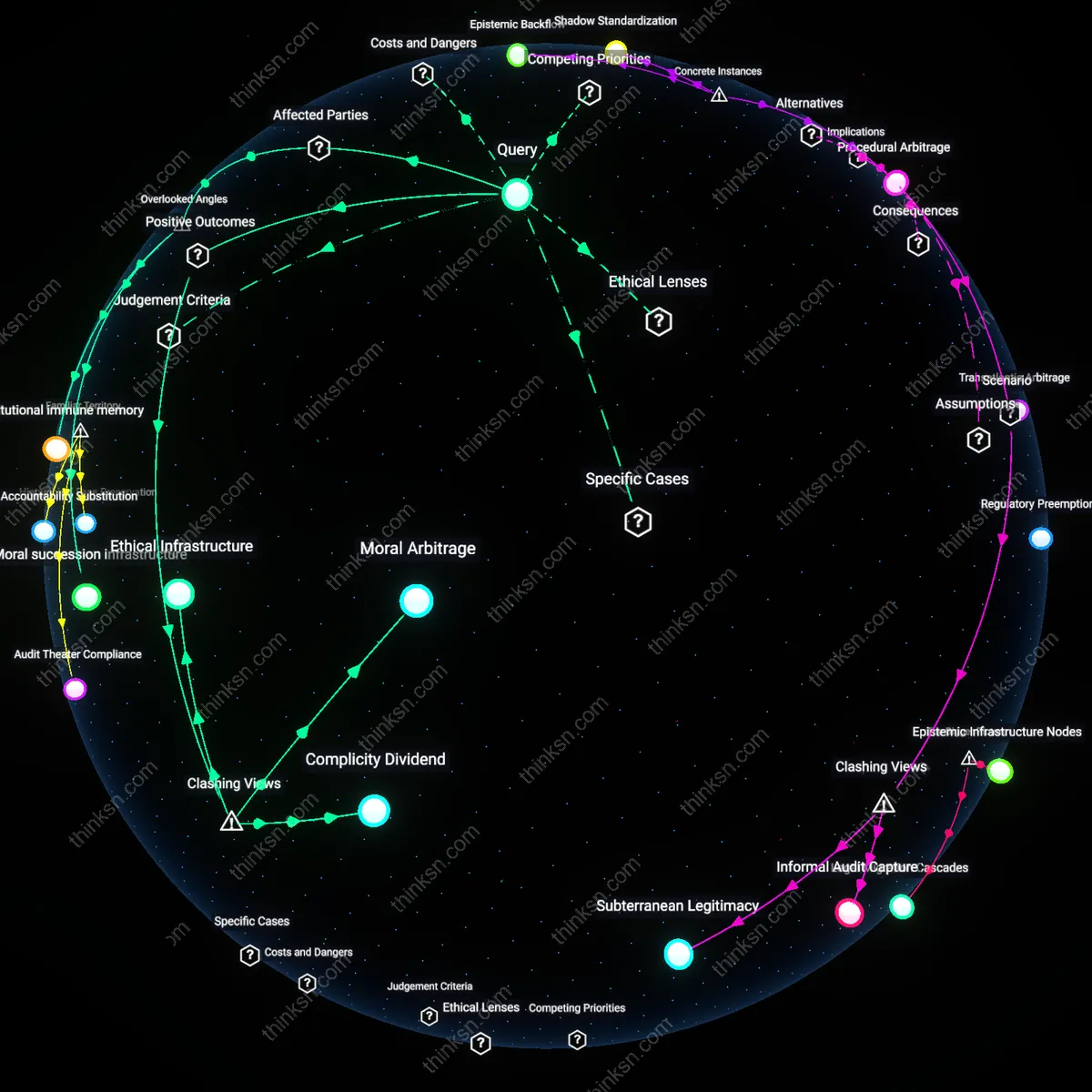

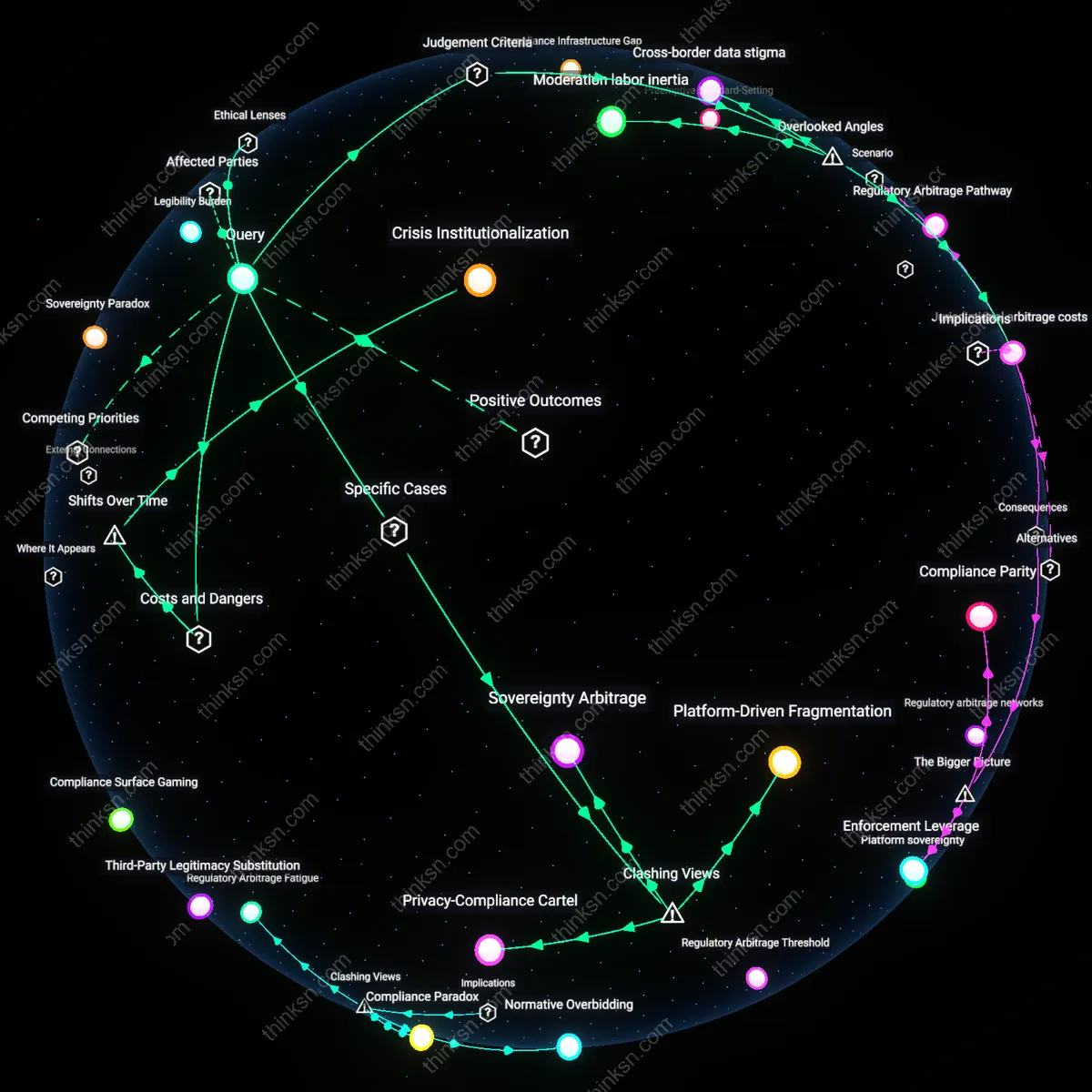

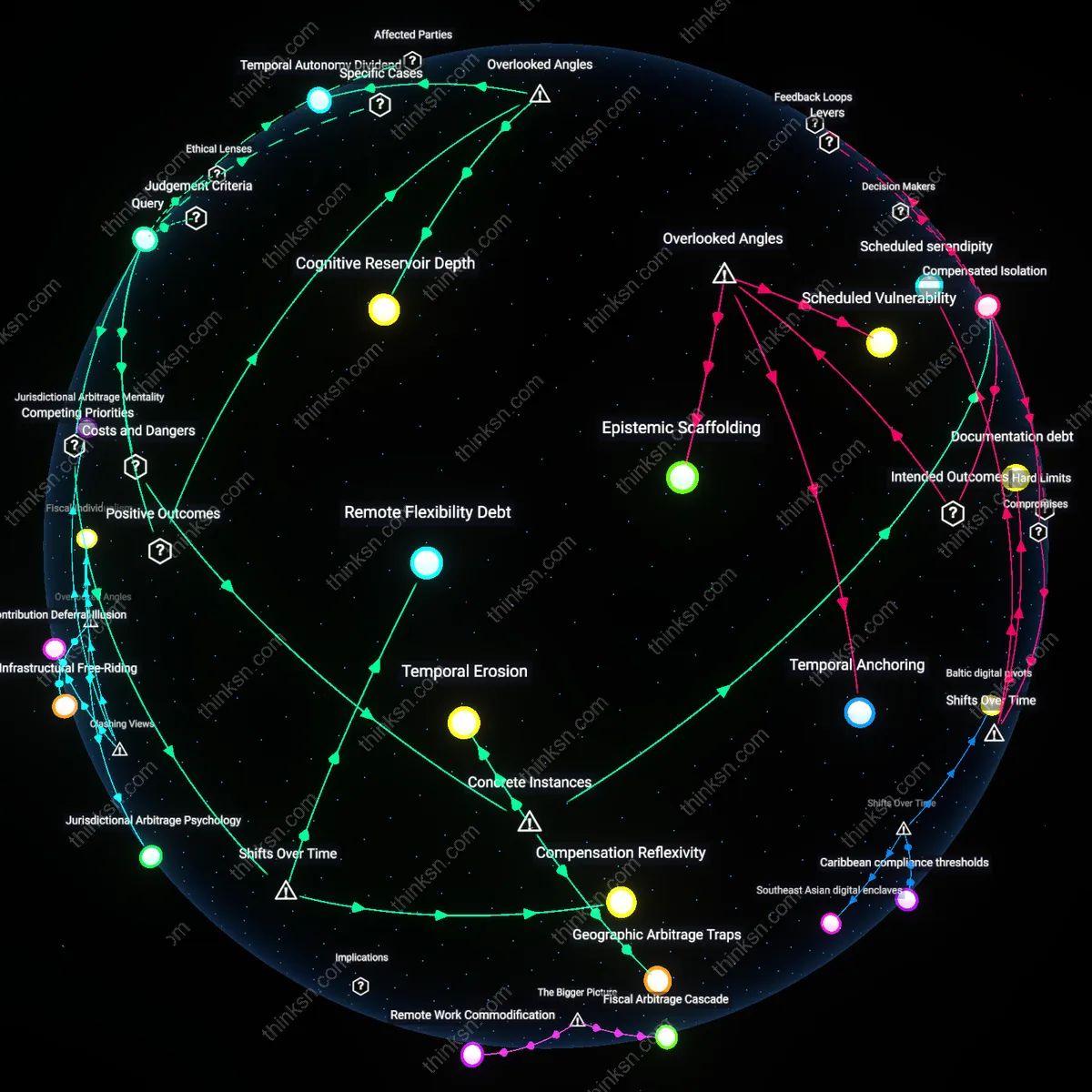

Analysis reveals 9 key thematic connections.

Key Findings

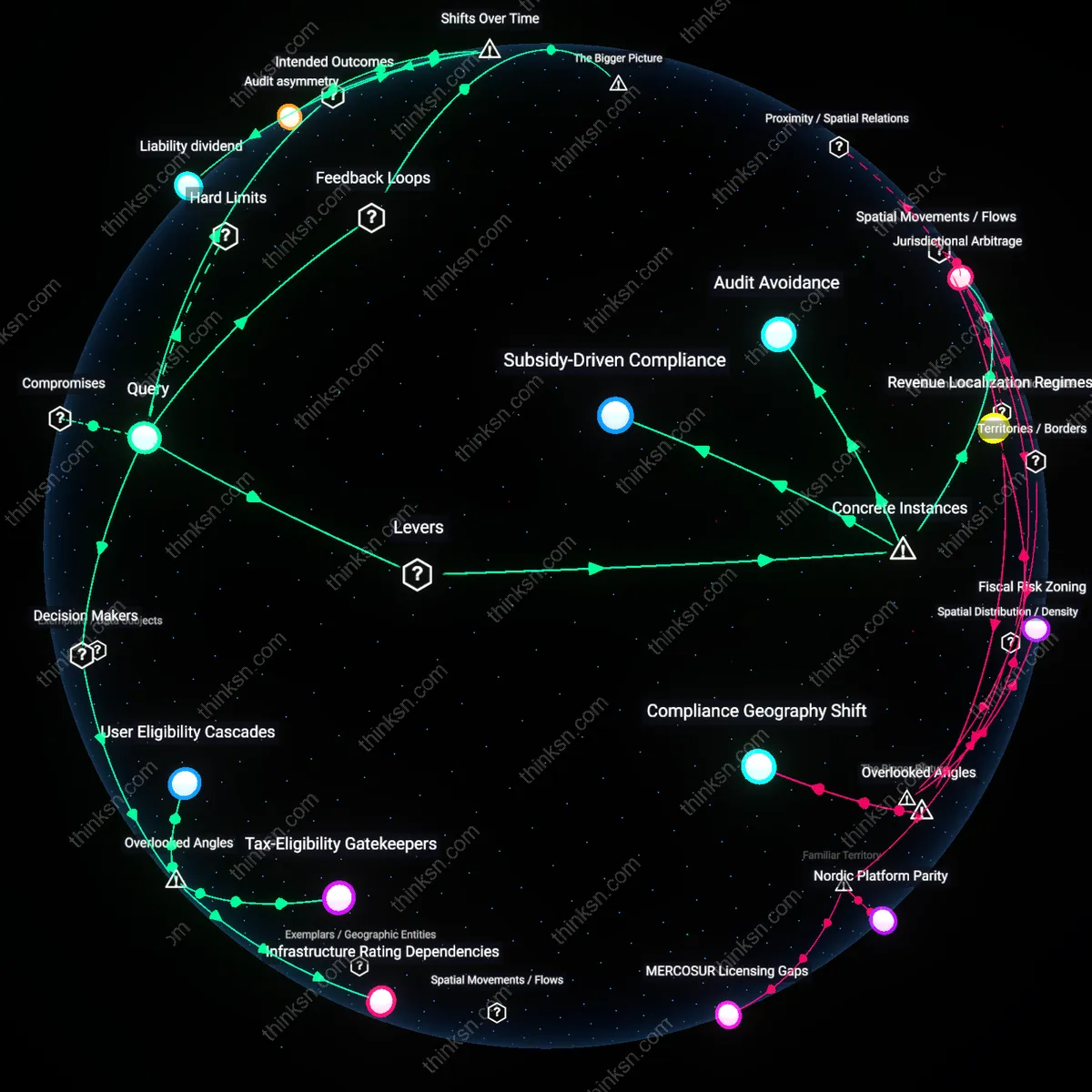

Subsidy-Driven Compliance

Directing tax benefits toward content moderation infrastructure incentivizes platforms to expand takedown operations beyond legal requirements, as seen when Facebook amplified removal of borderline content in India to qualify for government-backed digital development grants. This mechanism shifts moderation from a rights-based calculus to a cost-benefit calculation where over-enforcement generates fiscal returns, embedding financial dependency into speech governance. The non-obvious effect is that tax incentives function as indirect speech regulation—altering platform behavior not through command but through fiscal engineering that rewards suppression.
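The cost-benefit shift described above can be illustrated with a toy model. This is a minimal sketch, not an empirical estimate: the function names, the linear subsidy, the quadratic cost of over-removal, and every number are hypothetical assumptions introduced only for illustration.

```python
# Toy model of subsidy-driven over-enforcement.
# All names and numbers are hypothetical; nothing here is drawn from real data.

def platform_payoff(removal_rate, subsidy_per_point, harm_per_point, legal_minimum):
    """Net payoff of removing `removal_rate` percent of borderline content."""
    # Assumption: the subsidy accrues only on removals beyond the legal minimum.
    excess = max(0, removal_rate - legal_minimum)
    subsidy = subsidy_per_point * excess
    # Assumption: the cost of over-removal (lost users, reputational harm)
    # grows quadratically with the excess.
    cost = harm_per_point * excess ** 2
    return subsidy - cost


def best_removal_rate(subsidy_per_point, harm_per_point, legal_minimum=10):
    """Removal rate (in whole percent) that maximizes the platform's payoff."""
    rates = range(legal_minimum, 101)
    return max(
        rates,
        key=lambda r: platform_payoff(r, subsidy_per_point, harm_per_point, legal_minimum),
    )


if __name__ == "__main__":
    print(best_removal_rate(subsidy_per_point=0, harm_per_point=1))   # 10
    print(best_removal_rate(subsidy_per_point=40, harm_per_point=1))  # 30
```

With no subsidy, the payoff-maximizing rate in this sketch sits at the legal minimum. Once removals earn a fiscal return, the optimum moves above it, even though no rule requires the extra takedowns.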

Audit Avoidance

YouTube’s systematic over-removal of LGBTQ+ content between 2017 and 2019 is traceable to its use of automated filters optimized to evade potential tax liability under EU-wide digital services tax proposals that would have penalized platforms hosting ‘unverified’ or ‘controversial’ content. Platforms preemptively deplatform risky speech to avoid being classified in high-risk tiers that would disqualify them from preferential tax treatment, creating a chilling effect not from actual penalties but from structural risk minimization. The overlooked dynamic is that anticipated taxation shapes behavior more forcefully than enacted law, positioning tax design as a silent censor.

Jurisdictional Arbitrage

TikTok’s aggressive moderation of Hong Kong-related content from users in the U.S. and Europe stems from its operational base in Ireland, where corporate tax incentives require adherence to the EU’s Digital Services Act, which is interpreted loosely to justify broad takedowns that exceed legal mandates. Tax-driven location decisions bind platforms to regulatory environments they import globally, transforming local compliance into worldwide censorship standards. This reveals that tax jurisdiction choices, not content policy, become the determining factor in global speech rules: a hidden export of regulatory stringency through fiscal siting.

Compliance Reinforcement Cycle

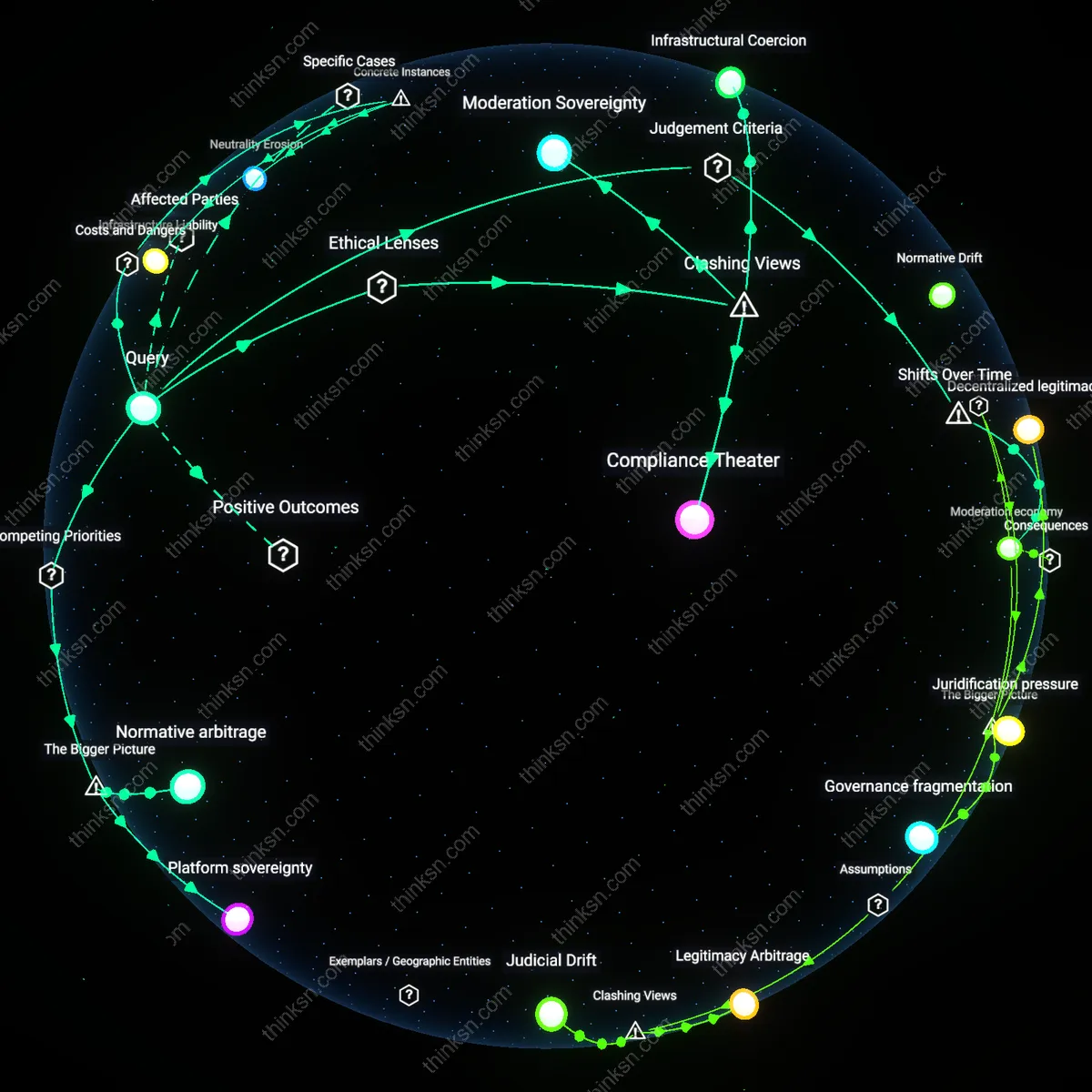

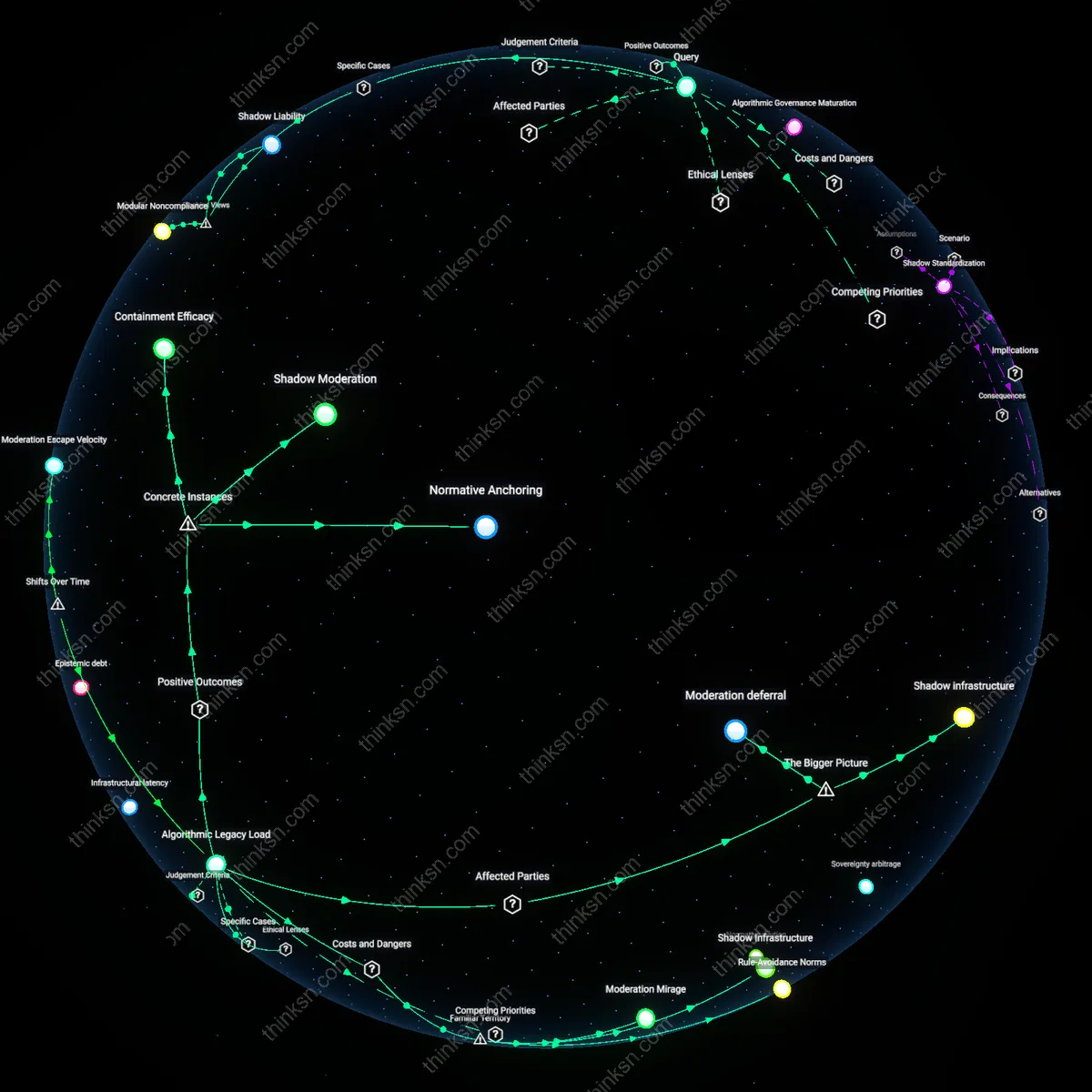

Favorable tax incentives tied to content moderation performance create a feedback loop where platforms increase moderation to retain financial benefits, reinforcing self-censorship. Tax regimes like the U.S. R&D credit, when informally linked to platform 'safety' metrics by regulators, incentivize firms such as Meta or YouTube to over-invest in automated takedowns to demonstrate regulatory alignment—reducing borderline but legal speech to signal compliance. This dynamic is non-obvious because tax policy operates indirectly through behavioral conditioning rather than direct censorship mandates, embedding financial logic into speech governance. The systemic risk lies in the invisibility of fiscal tools as drivers of editorial overreach, making accountability diffuse and technical rather than political.

Tax-Eligibility Gatekeepers

Tax authorities can compel platforms to over-moderate by conditioning liability protections on preemptive content filtering, making compliance departments de facto speech regulators. Internal trust & safety teams adjust moderation thresholds not based on community standards but to meet opaque fiscal benchmarks set by tax agencies, which rarely disclose enforcement criteria. This shifts speech governance from public-facing policy units to back-end financial auditors whose decisions are insulated from public scrutiny. The non-obvious mechanism is that tax incentives operate not through direct financial rewards but through the threat of disqualification from liability shields that are fiscally valuable, turning tax eligibility into a censorship lever.

Infrastructure Rating Dependencies

Cloud service providers, to qualify for green energy tax credits, must report on content-related network usage patterns, inadvertently pressuring them to discourage clients from hosting controversial speech that correlates with high bandwidth or compute loads. Major platforms then self-censor niche but legally protected discourse—such as radical political forums or marginalized language content—to appear as low-risk tenants in infrastructure sustainability audits. This creates a hidden feedback loop where tax-incentivized environmental metrics reshape digital speech ecosystems at the level of data centers and CDNs. The overlooked dynamic is that climate compliance infrastructure, not content policy, becomes a vector for moderation.

User Eligibility Cascades

Platforms restrict user features tied to verified tax-eligible activities—like tipping or monetization—more stringently than public speech rules require, because IRS reporting thresholds trigger audit risks that platforms absorb. To minimize tax-reportable events, platforms over-moderate not just content but creator eligibility, suppressing borderline-speech creators before they reach income thresholds that would obligate platform-level fiscal reporting. This transforms tax compliance systems into preemptive speech filters targeting economic participation rather than expression itself. The underappreciated point is that speech over-moderation originates in accounting compliance, not legal liability, shifting the causal locus to financial operations teams.

Liability Dividend

Since the 1996 Telecommunications Act, favorable tax treatment has combined with platform immunity to reward proactive content moderation, leading firms to over-police speech to preserve their legal and fiscal advantages. Section 230's liability shield, which protects good-faith moderation rather than requiring it, was paired with IRS classifications of platform infrastructure as tax-deductible investments. Together they created a regime where spending on moderation enhances both legal safety and financial efficiency, aligning corporate risk management with aggressive takedown systems. This coupling of fiscal benefit and speech control became entrenched after the early 2000s, when platforms shifted from user-moderated forums to corporate-managed environments, institutionalizing over-enforcement as standard practice. The non-obvious consequence is that tax policy, not just regulation, now subsidizes censorship discipline.

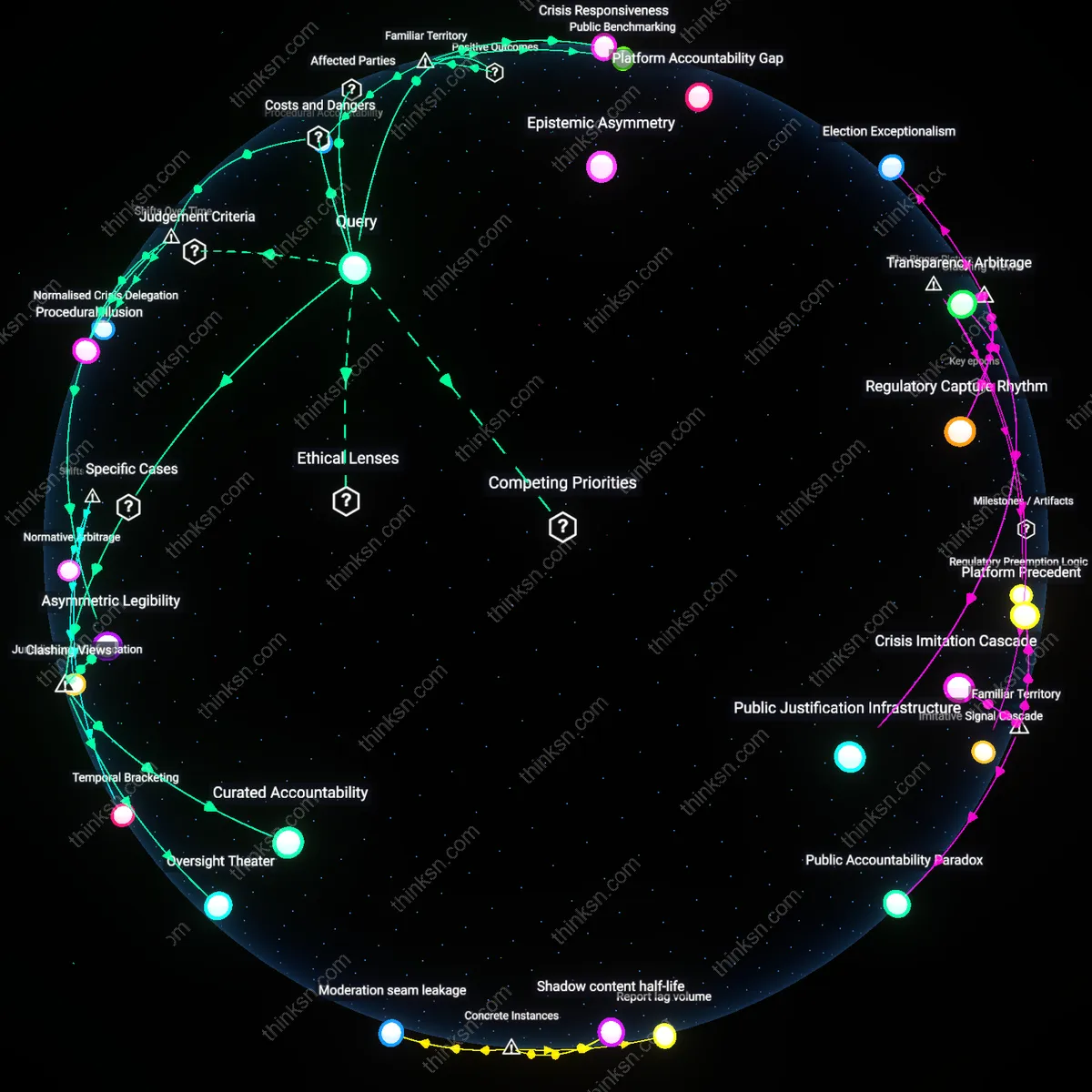

Audit Asymmetry

The expansion of third-party audits and ESG reporting since 2021 has made moderation metrics, such as takedown volume and response time, visible proxies for corporate responsibility. Those proxies in turn influence eligibility for green tax credits and low-interest regulatory loans, distorting enforcement thresholds. Unlike prior eras when moderation was a private operational function, today’s public-facing compliance dashboards reward platforms that produce quantifiable enforcement outputs, privileging volume over nuance in content decisions. This shift from opaque moderation (pre-2018) to audited performance metrics embeds fiscal incentives into real-time speech governance, where under-moderation risks financial penalties more than over-moderation risks free expression. The unnoticed outcome is that auditability, not legality, now drives suppression intensity.