Is Social Media Speech Regulation a Public Utility?

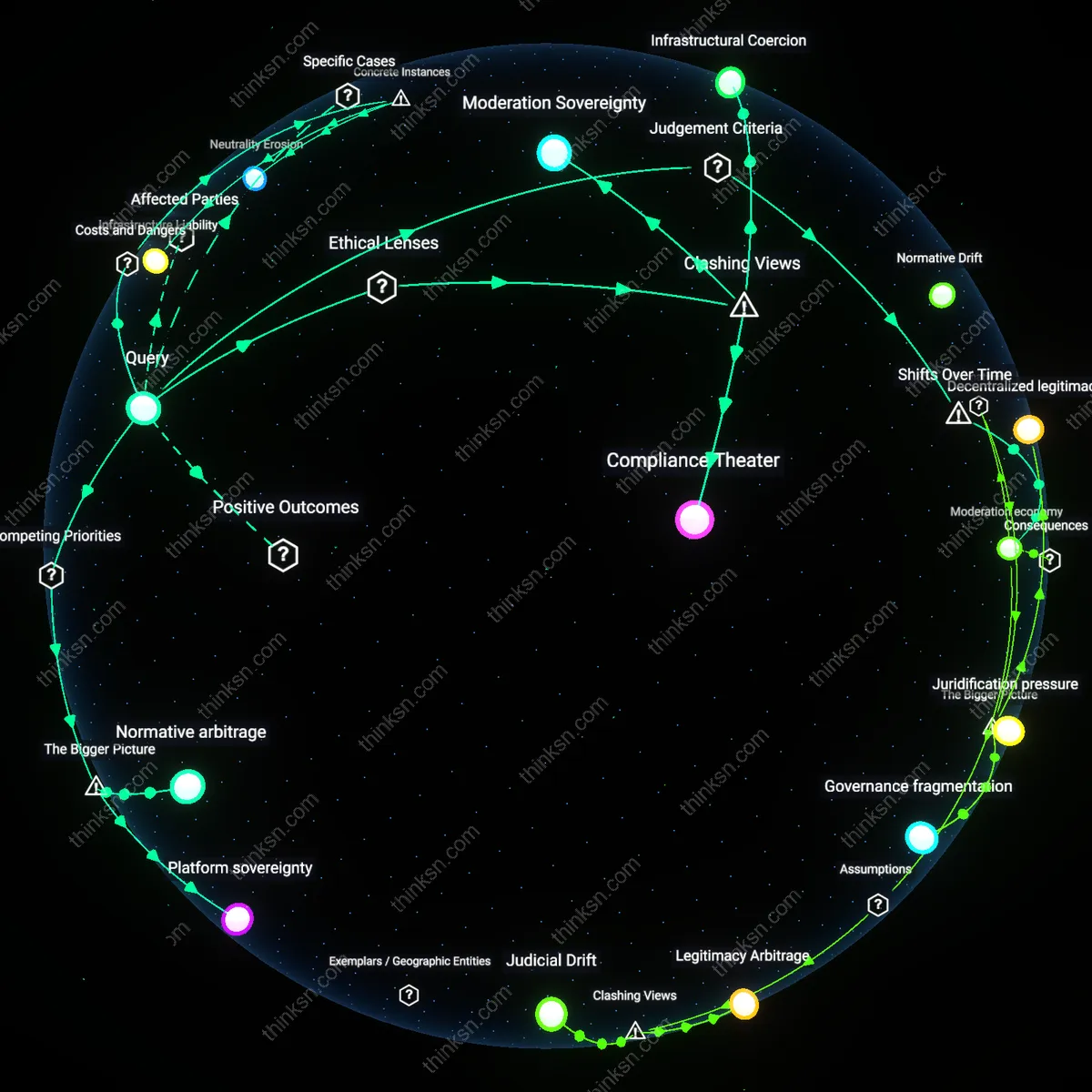

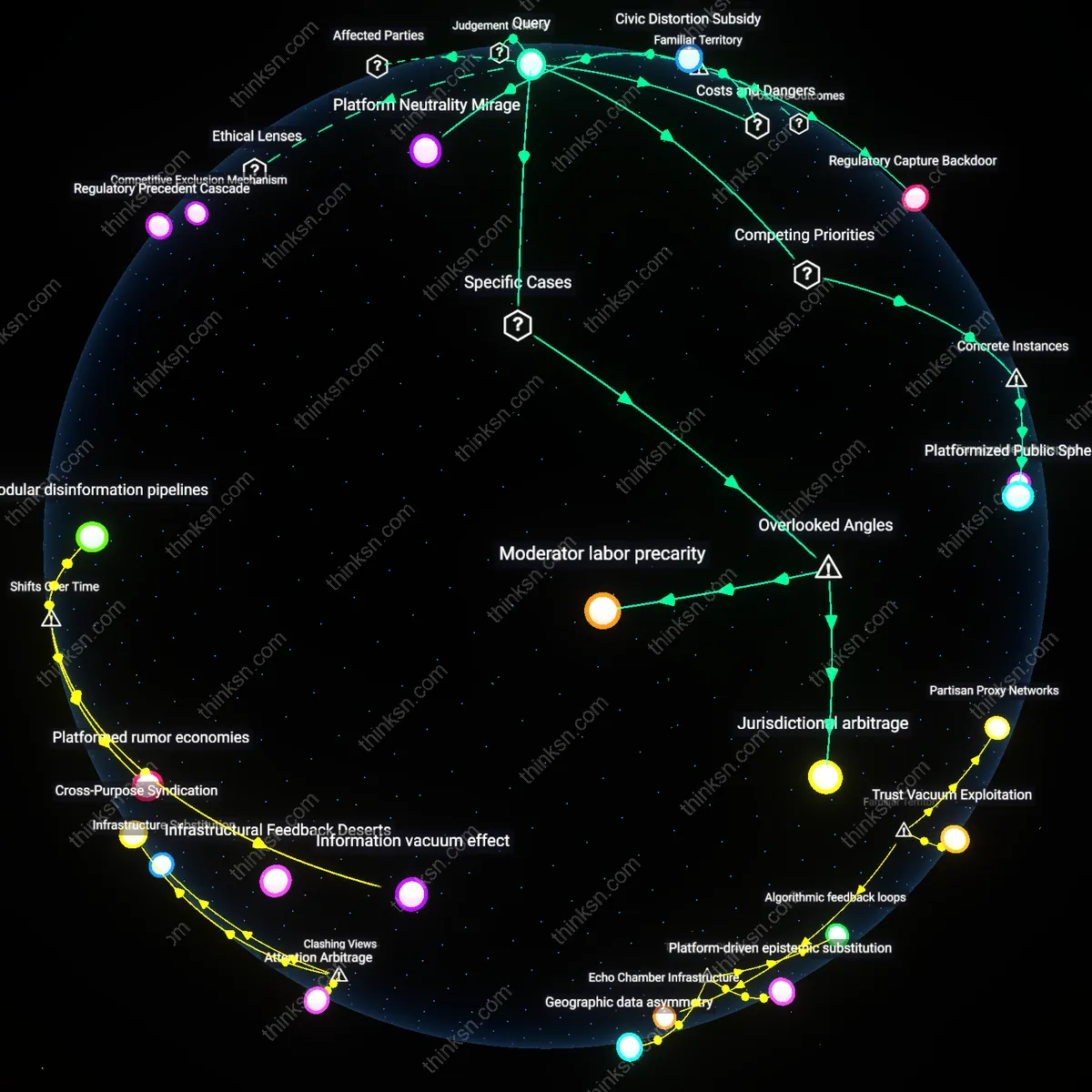

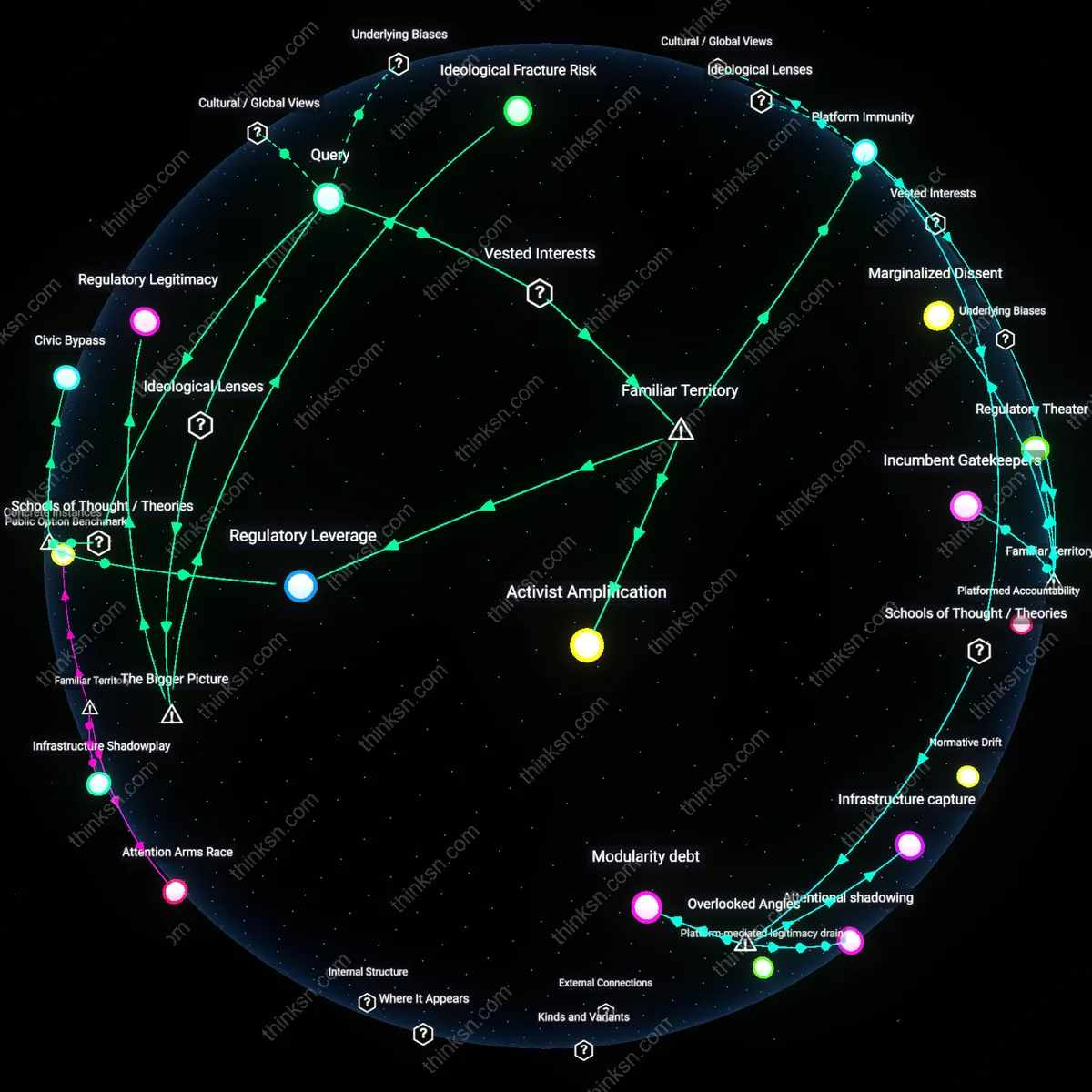

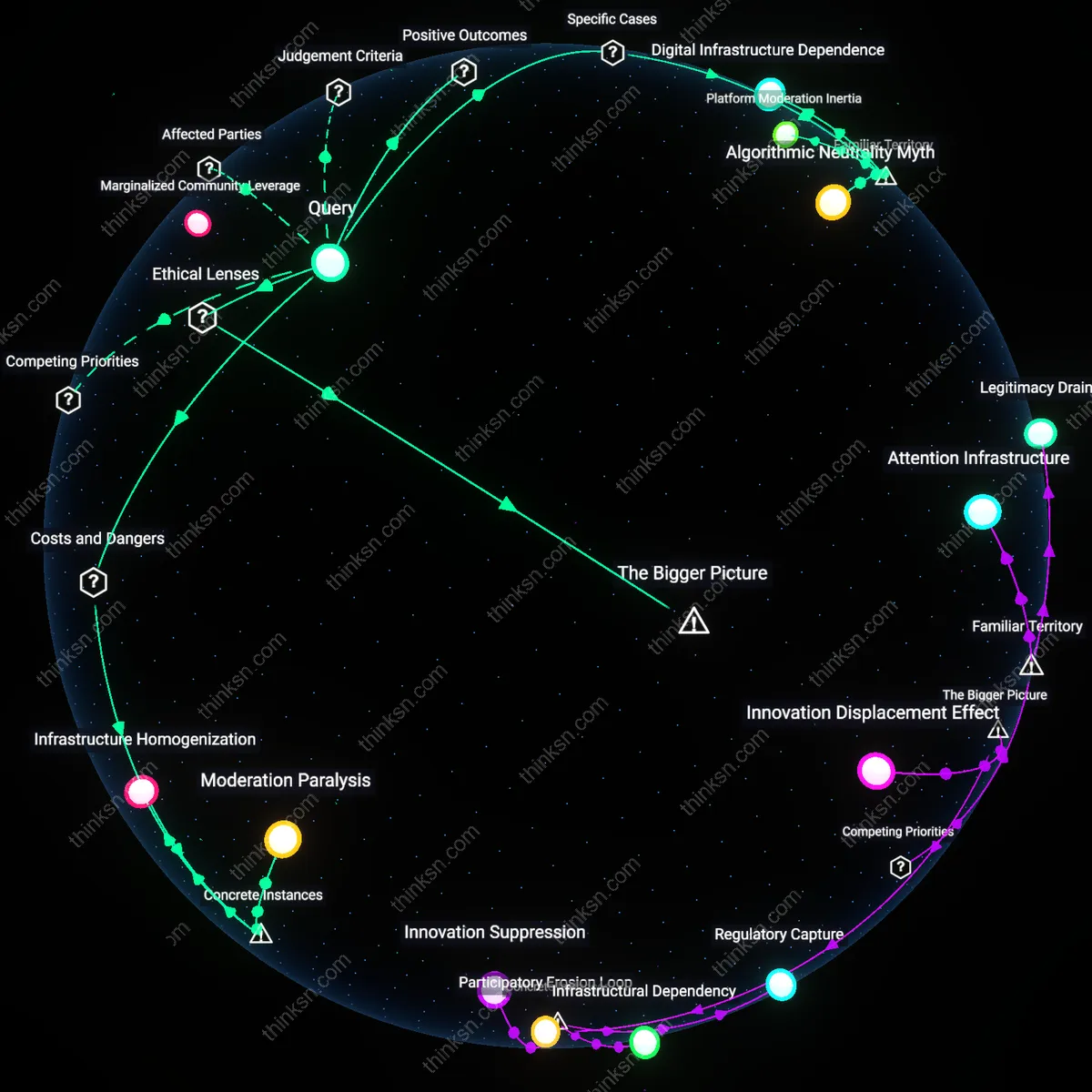

Analysis reveals 9 key thematic connections.

Key Findings

Moderation Economy

Yes, dominant social media platforms should be treated as public utilities, because content moderation now functions as a de facto regulatory system that allocates speech rights. This marks a shift from state-controlled censorship to privately administered, rule-based exclusion, a mechanism once reserved for public institutions. Tech firms operate standardized enforcement infrastructures (e.g., automated takedowns, appeals panels, community guidelines) that mimic judicial processes yet lack transparency and due process, even though their decisions on lawful speech are economically and socially binding. The transition from ad hoc moderation to institutionalized governance in the post-2016 era, triggered by elections, disinformation crises, and global platformization, shows that private actors now perform public functions and have normalized a market-driven speech regime in which access is contingent on compliance with corporate rules rather than constitutional principles.

Moderation Capture

Treating dominant social media platforms as public utilities risks institutionalizing state-aligned content moderation, as when the Indian government pressured Twitter in 2021 to suppress posts related to the farmer protests. The mechanism is legal compulsion that leverages utility-style compliance obligations: it turns nominally neutral platforms into conduits for politically selective censorship under the guise of lawful order. Public utility designation can thus enable systemic suppression through formalized coercion rather than market impulse.

Infrastructure Liability

Classifying platforms like Facebook as public utilities would expose them to tort liability for failing to prevent harm during crises, such as the amplification of mob violence in Ethiopia between 2018 and 2022, in which Meta’s algorithms played a role. The unacknowledged danger is that utility status could incentivize over-censorship as a way to avoid legal penalties, distorting free expression through risk-averse content filtering that is automated at scale under regulatory duress.

Neutrality Erosion

When Brazilian lower-court judges ordered nationwide WhatsApp blocks in 2015 and 2016 in disputes over user data and encryption, treating the service as essential infrastructure invited state interference on security and law-enforcement grounds. The case shows that public utility designation can facilitate governmental disruption of lawful speech under emergency powers, turning platform neutrality into a negotiable condition rather than a structural safeguard.

Platform Sovereignty

Dominant social media platforms should not be treated as public utilities, because doing so would collapse the distinction between state regulation of speech and corporate control over digital infrastructure, enabling governments to exploit content moderation regimes as de facto speech licensing systems. The U.S. government, under pressure to combat disinformation and hate speech, increasingly relies on platforms’ private enforcement of speech norms to avoid constitutional constraints on direct censorship. This public-private entanglement gives regulators a systemic incentive to outsource speech governance while maintaining plausible deniability. What is underappreciated is that treating platforms as utilities would formalize this arrangement: ad hoc moderation would become a regulated standard, and platforms would hold quasi-judicial authority over lawful expression under state mandate, yet without the accountability mechanisms inherent in the judiciary. The push for utility status is therefore less about access than about codifying platform sovereignty, the residual regulatory space where corporate discretion replaces both market competition and democratic oversight.

Normative Arbitrage

Dominant social media platforms should not be treated as public utilities, because their global operation depends on exploiting discrepancies between national speech regimes, and utility designation in one jurisdiction would undermine their capacity for this normative arbitrage. Platforms like Meta and X often align content moderation policies with the strictest standard among their major markets (e.g., enforcing EU hate speech standards globally to avoid fragmentation), creating a de facto global baseline that preempts local democratic deliberation. The non-obvious consequence is that utility regulation in the U.S. would anchor platforms to American free speech norms, forcing them either to withdraw from stricter jurisdictions or to impose U.S.-style permissiveness abroad, thereby exporting First Amendment logic without a democratic mandate. Normative arbitrage is thus the systemic mechanism through which platforms preserve operational coherence by gaming jurisdictional pluralism, turning legal fragmentation into a strategic resource rather than a constraint.

Moderation Sovereignty

Dominant social media platforms should not be treated as public utilities, because their content moderation functions are exercises of private editorial judgment, protected under the First Amendment by extension of cases like Miami Herald v. Tornillo, which recognized the editorial discretion of newspapers. This reframing resists the assumption that ubiquity necessitates public status and instead treats algorithmic curation and takedown decisions as expressive acts by corporate entities, akin to newspaper editors choosing headlines. The non-obvious implication is that holding platforms accountable as utilities would not correct private control but would dismantle the legitimacy of platform speech rights. The core tension lies not in access but in who holds expressive authority in networked discourse.

Infrastructural Coercion

Dominant social media platforms should be treated as public utilities not because of speech rights, but because their structural position enables coercive control over participation in civic life, analogous to the way electricity and telephone networks were regulated under public utility and common carrier doctrines in the 20th century. Once platforms become unavoidable conduits for political candidacy, job seeking, and emergency communication, as in rural Kenya’s reliance on WhatsApp for governance announcements, denial of access functions as de facto disenfranchisement. This reframing challenges the liberal narrative of platforms as mere speakers by showing that infrastructural indispensability, not editorial intent, creates systemic vulnerability to private power.

Compliance Theater

Treating dominant platforms as public utilities would legitimize state-aligned content governance under the guise of neutrality, benefiting not users but regimes seeking to normalize surveillance and compelled amplification of official narratives, as evidenced by India’s enforcement of its 2021 IT Rules through government oversight committees. Framing moderation as a utility service implies a standardizable, auditable pipeline, which requires platforms to align with state-defined legality and weakens their ability to resist authoritarian legal creep. The underappreciated risk is that utility status does not constrain power but institutionalizes platforms as administrative arms of the state. What emerges is not equity but a performance of due process without judicial independence.