Do EU Social Media Rules Protect Privacy or Entrench Giants?

Analysis reveals 11 key thematic connections.

Key Findings

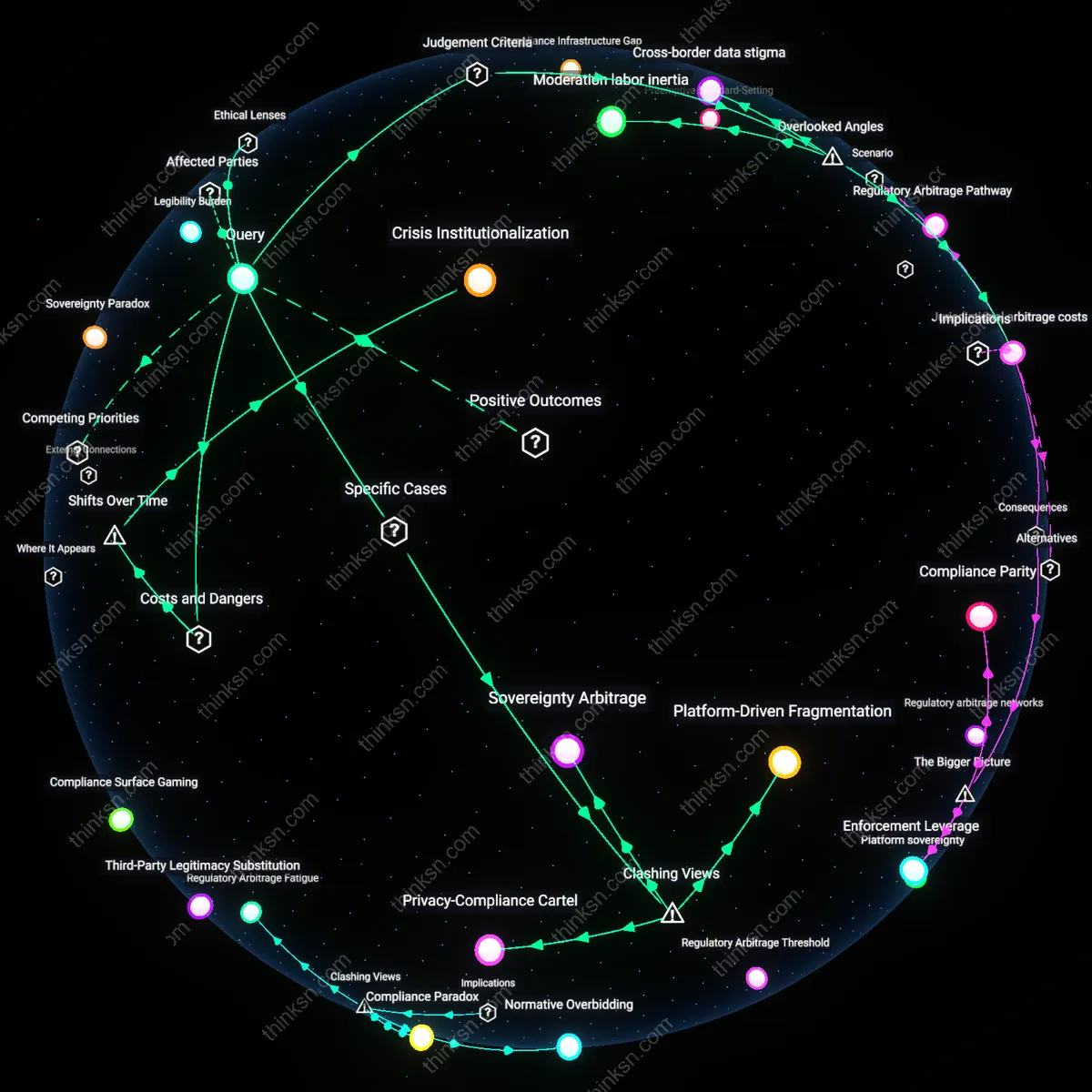

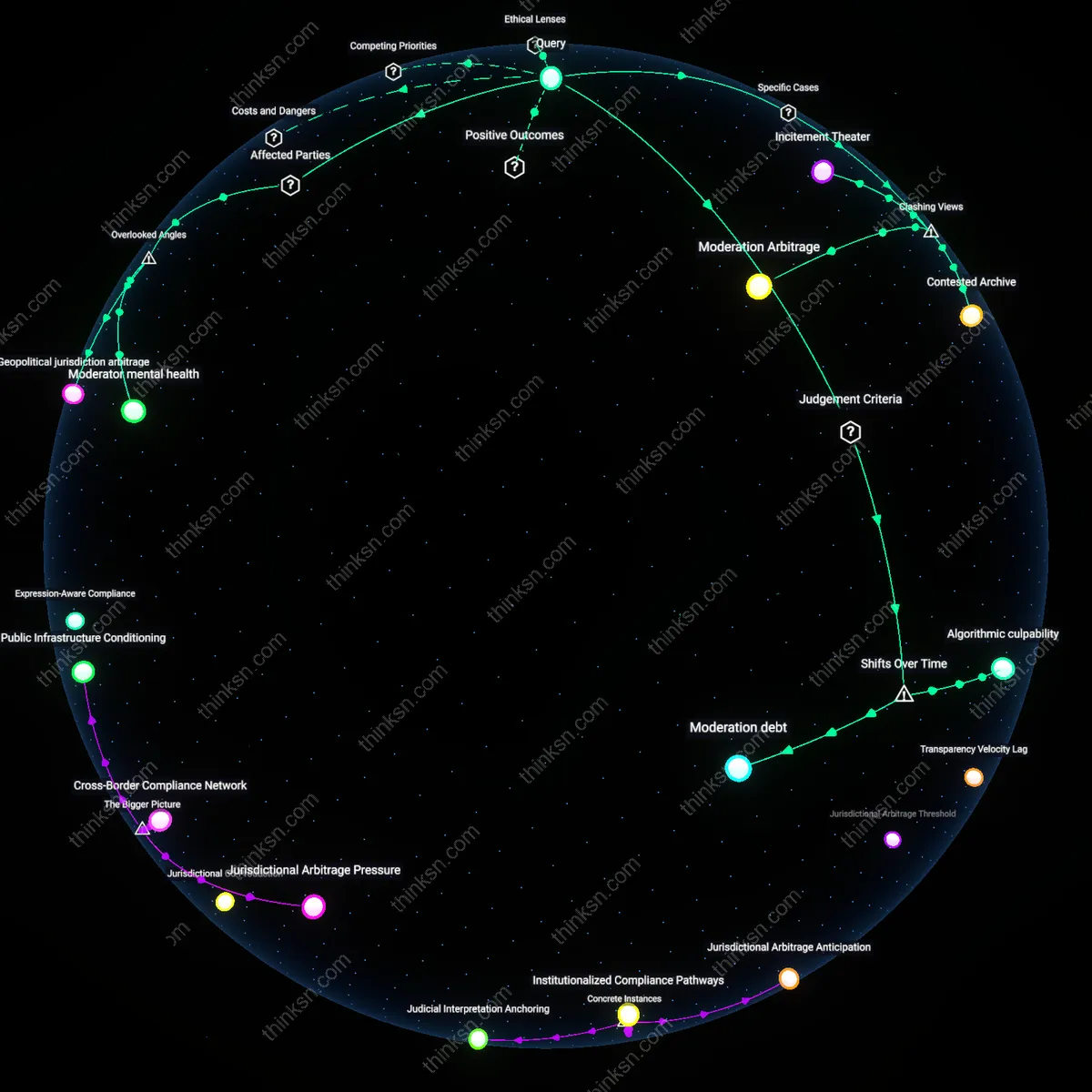

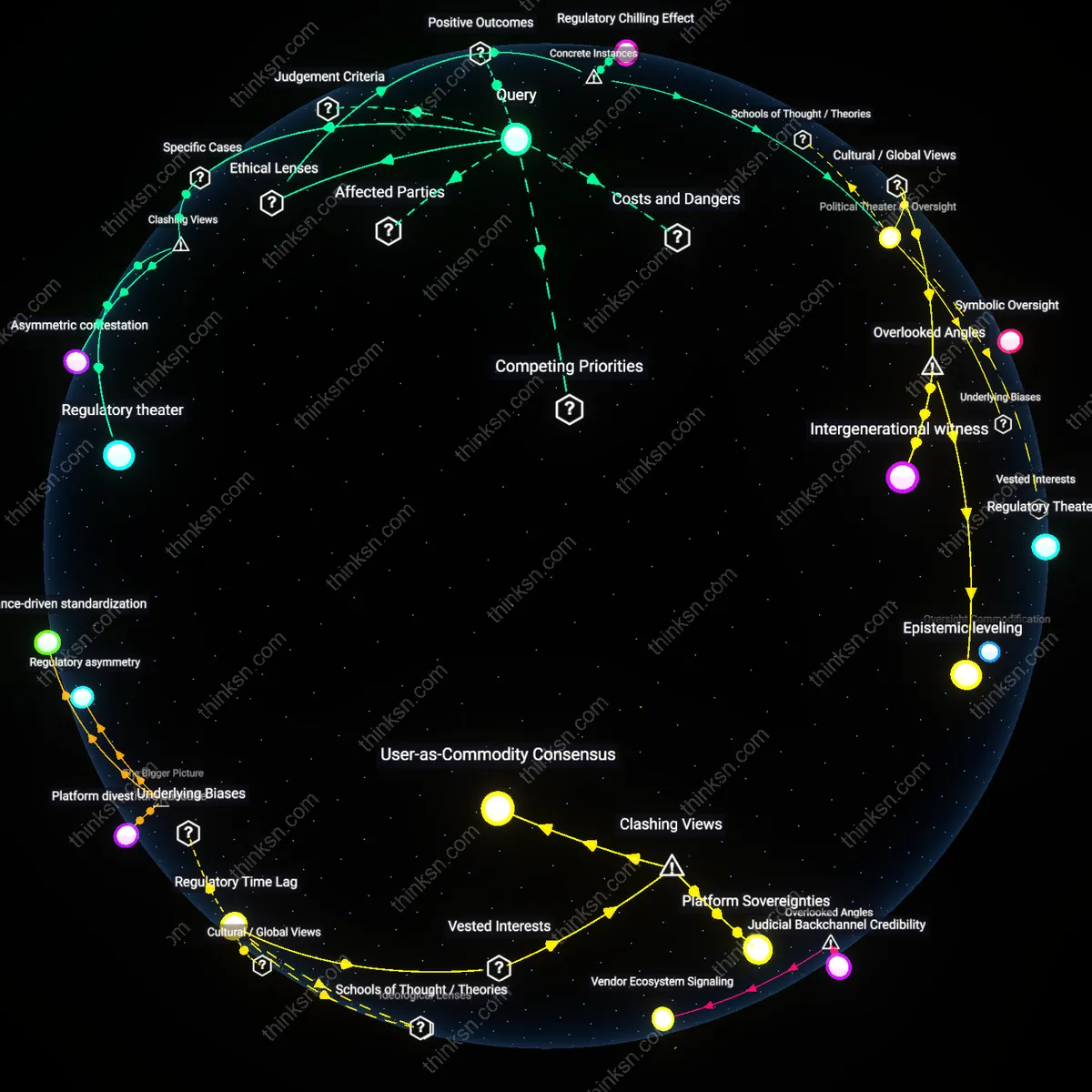

Jurisdictional Arbitrage Costs

EU-wide content moderation rules unintentionally raise the operational cost of regulatory evasion in a way that favors dominant platforms: non-EU competitors must replicate Brussels-approved systems locally, which reduces their ability to exploit jurisdictional fragmentation. Major firms like Meta or TikTok can absorb the expense of aligning with strict EU norms across markets, while smaller rivals without global scale must either incur disproportionate compliance overhead or exit EU-adjacent regions, quietly accelerating market concentration despite privacy gains. This dynamic is overlooked because most analyses focus on fines or enforcement power rather than the hidden economic barrier formed by institutional mimicry requirements, a cost that only becomes visible when examining how regulatory templates spill across borders through infrastructure lock-in.

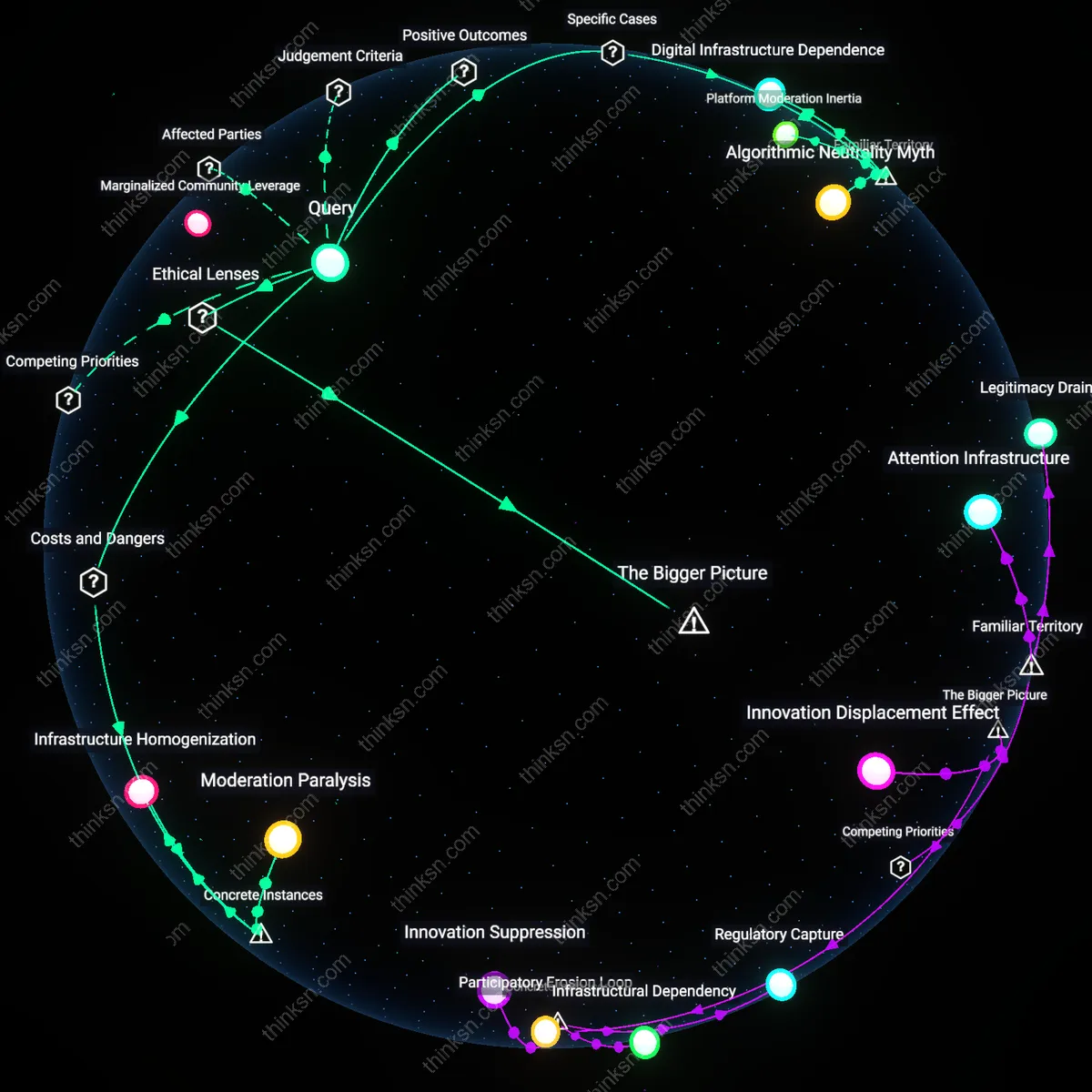

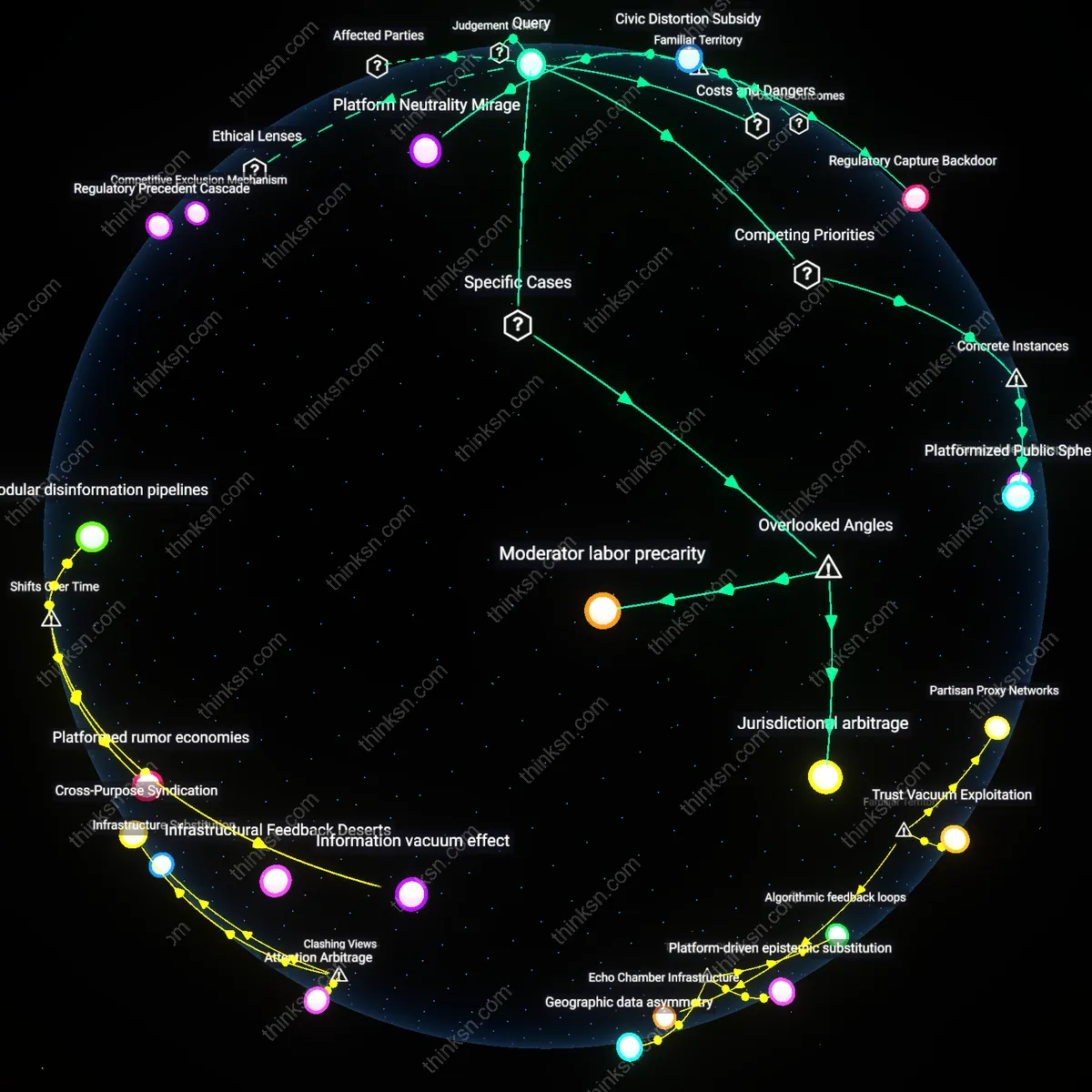

Moderation Labor Inertia

Strict EU content rules increase reliance on centralized moderation workforces in Dublin and Vilnius, entrenching platforms’ dependence on a few large contractor hubs that resist changes in oversight mandates because of retraining and coordination costs. When new privacy-preserving moderation tools emerge, such as local AI classifiers that avoid data pooling, these established human-machine workflows delay adoption because restructuring labor processes threatens short-term compliance reliability. Analysts typically assess privacy and competition through data flows or market share and rarely consider the inertia of large-scale moderation labor systems as a bottleneck for innovation, even though that inertia determines how quickly platforms can shift toward less centralized, more privacy-friendly architectures.

Cross-Border Data Stigma

EU content moderation rules amplify reputational risks for platforms that transfer user data outside the bloc, even for non-personal content, because any data movement has become politically sensitive since GDPR and Schrems II. Companies have responded by limiting internal data sharing between regional teams to avoid scrutiny. This caution fragments internal knowledge systems, prevents efficient scaling of local moderation practices, and inadvertently favors large firms that can afford isolated regional silos over agile startups that need data fluidity. The effect is typically ignored because competition policy assumes data access drives dominance; the underappreciated force here is a cultural and bureaucratic aversion to cross-border data handling, even of anonymized content logs, that turns privacy norms into stealth consolidation mechanisms.

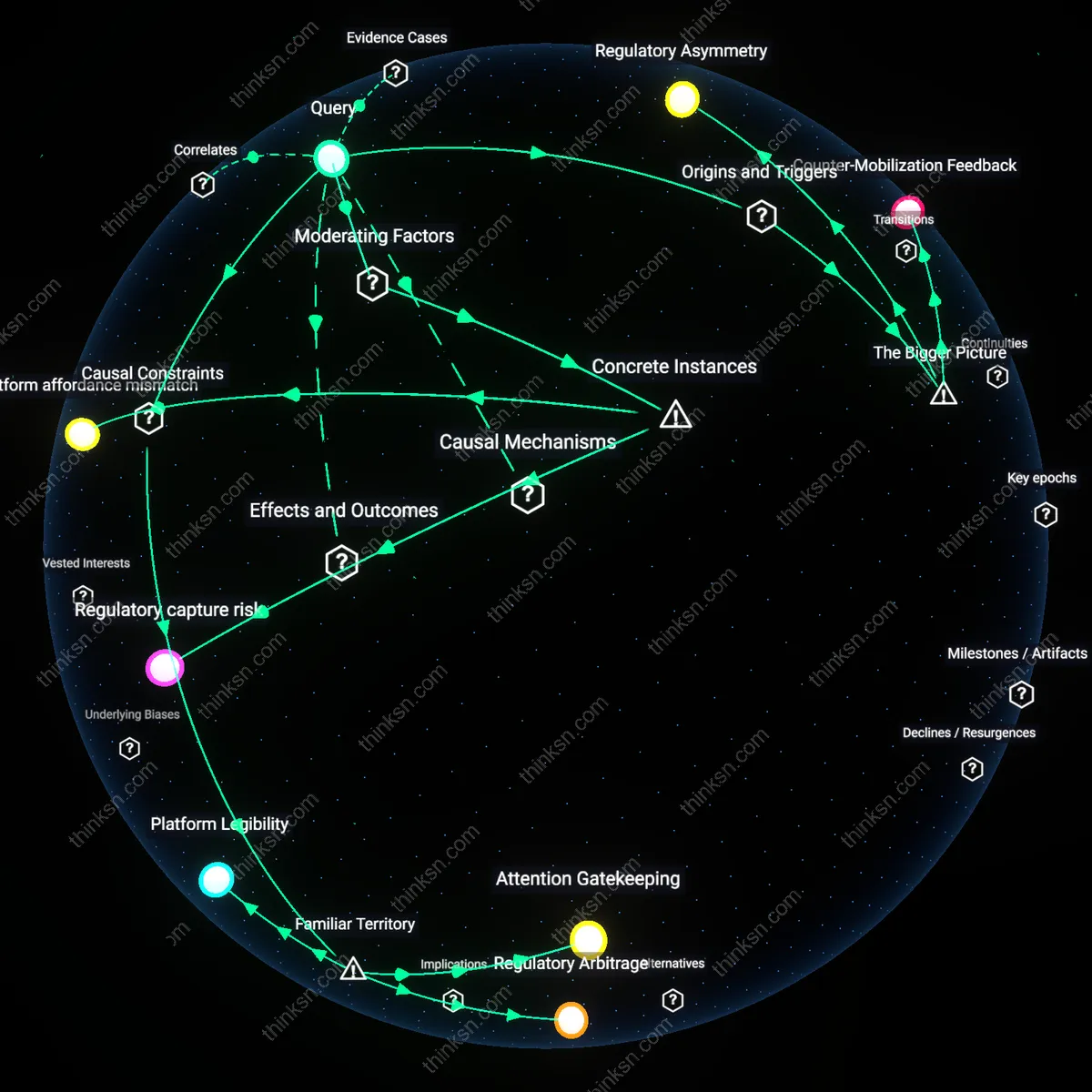

Surveillance Drift

EU content moderation rules have transformed privacy from a procedural safeguard into a latent vector for centralized data aggregation, as seen in the shift from the 2002 ePrivacy Directive’s consent-based model to the Digital Services Act, adopted in 2022 and applied to the largest platforms from 2023. Although the Act stops short of imposing a general monitoring obligation, its notice-and-action and risk-mitigation duties push platforms toward AI-driven monitoring to meet takedown requirements, normalizing continuous user surveillance under the guise of compliance. This inversion means privacy protections are leveraged to justify deeper data processing rather than to limit it. The non-obvious consequence is that a regulatory intent to protect public discourse has instead institutionalized preemptive tracking, turning privacy regimes into infrastructure for systemic exposure.

Crisis Institutionalization

Following the 2015 refugee crisis and the subsequent counterterrorism focus, EU content governance evolved from addressing copyright or spam to framing all unmoderated speech as an emergent threat, embedding emergency logic into permanent regulation. This securitization trajectory culminated in the 2022–2023 rollout of crisis response protocols under the Digital Services Act, where temporary measures became templates for routine enforcement and harmful content was conflated with systemic instability. The result is a feedback loop in which each invocation of crisis legitimizes both expanded surveillance and centralized control, normalizing disproportionate enforcement in ways that erode civil liberties and competitive market structures at the same time.

Attention Economy Entrenchment

EU-wide content moderation rules strengthen dominant platforms by imposing compliance costs that smaller competitors cannot absorb, entrenching firms like Meta and Google. These rules require sophisticated monitoring infrastructure and legal teams that only incumbents possess, transforming privacy safeguards into barriers to entry. The public tends to see content regulation as a shield for personal data; the non-obvious effect is that such shields are metabolized by the attention economy itself, reinforcing the centralization of digital influence under the guise of protection.

Sovereignty Paradox

By enforcing strict privacy-respecting content moderation, the EU inadvertently elevates its regulatory model as a global benchmark, compelling foreign platforms to adopt EU standards to access its market. This projects European political sovereignty outward but simultaneously invites backlash from democratic allies and authoritarian states alike, who frame it as normative overreach. The familiar association of EU regulation with consumer empowerment masks the less-discussed dynamic where ethical governance becomes a form of soft power, producing a sovereignty paradox in which the defense of privacy risks triggering geopolitical fragmentation in internet governance.

Legibility Burden

Standardized content moderation across the EU increases the legibility of user behavior to both platforms and regulators, enabling better privacy enforcement through consistent oversight mechanisms like the Digital Services Act. Yet this legibility requires centralized data collection and classification protocols, which consolidate analytical control within a few technically capable institutions. The public commonly links transparency with accountability, but the underappreciated consequence is that making user conduct legible in order to protect privacy also makes it governable at scale. The result is a legibility burden in which surveillance logics are re-legitimized through ethical content governance.

Platform-Driven Fragmentation

EU content moderation rules strengthen dominant platforms by imposing compliance costs that smaller rivals cannot absorb, thereby consolidating market power under the guise of privacy protection. The Digital Services Act’s layered obligations, which culminate in mandatory risk assessments and independent audits for the very largest services, favor firms like Meta and TikTok that possess the legal and technical infrastructure to adapt, while independent platforms such as Mastodon instances or smaller EU-based social startups face existential burdens if they try to scale. This creates a regulatory moat where only the largest players can operate across the bloc, contradicting the intuitive belief that privacy regulation inherently democratizes digital markets. The non-obvious outcome is that privacy enforcement, when procedurally complex, becomes a vehicle for platform-driven fragmentation of the competitive landscape.

Privacy-Compliance Cartel

Strict EU content moderation rules incentivize data minimization practices that paradoxically reduce consumer privacy by pushing users toward unregulated, encrypted private networks. As seen with the rise of WhatsApp broadcast channels in Germany and Poland following increased platform liability for disinformation, users migrate from transparent, auditable public forums to opaque peer-to-peer ecosystems where moderation is impossible and metadata harvesting by telecom intermediaries increases. This counters the dominant narrative that stronger moderation rules yield better privacy outcomes, revealing instead how compliance pressures can foster a privacy-compliance cartel in which state-enforced public oversight unintentionally accelerates the shift to less accountable, commercially entrenched private communication monopolies.

Sovereignty Arbitrage

The EU’s enforcement of uniform content standards across member states enables large platforms to rely on centralized AI moderation systems that override regional cultural norms, reducing both market entry opportunities and local linguistic nuance in moderation outcomes. Algorithmic enforcement of EU-wide rules run from Ireland, where Meta hosts its European headquarters, has led to disproportionate content takedowns in minority language contexts like Catalan and Sámi, while German or French content is processed with greater contextual sensitivity because of regulatory proximity and legal capacity. This undercuts the assumption that uniform rules enhance rights protection. Centralized compliance instead creates sovereignty arbitrage, in which geopolitical centrality within the EU determines the quality of digital rights enforcement and entrenches structural inequalities within the single market.