Is Voluntary Code Enough to Safeguard AI from Harm?

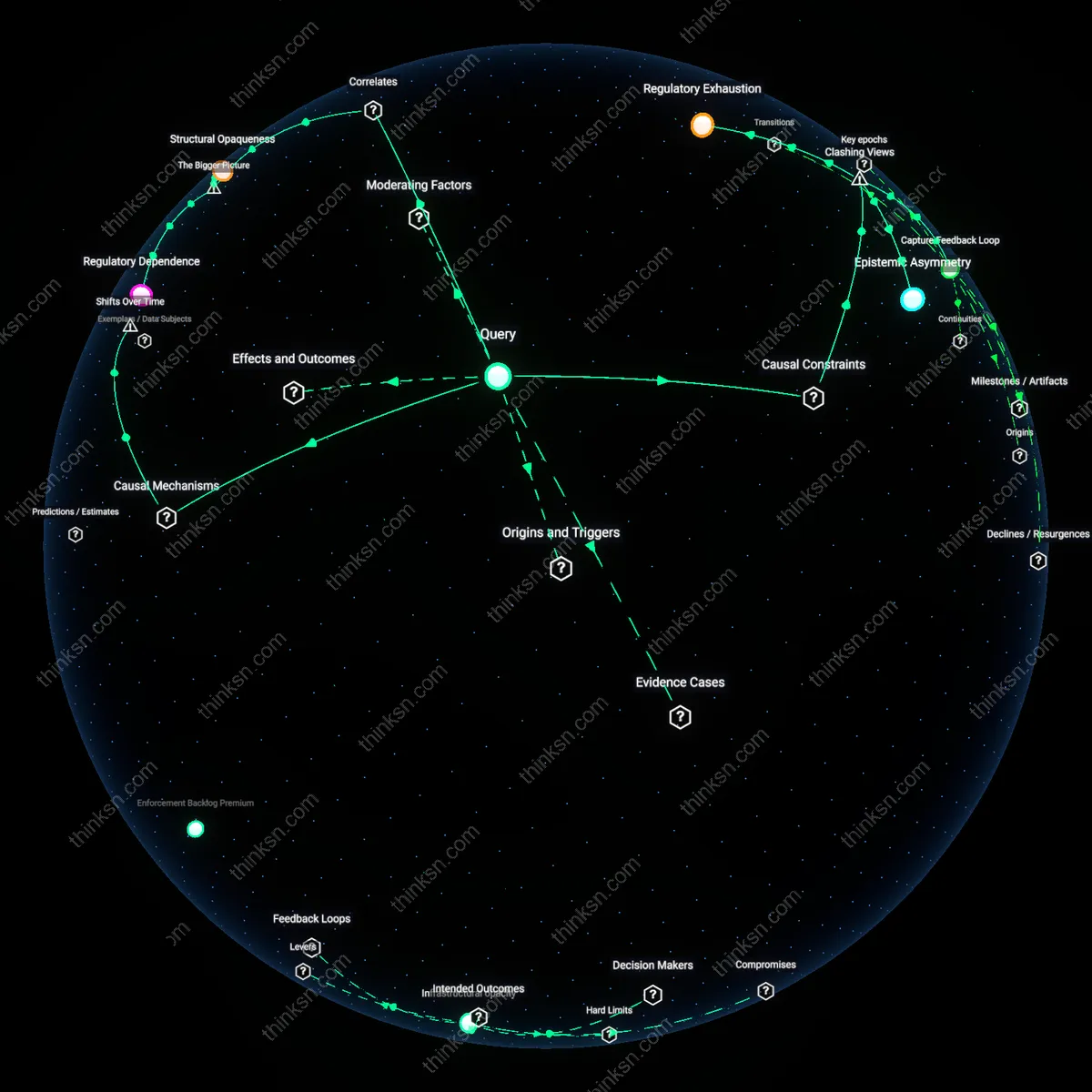

Analysis reveals 7 key thematic connections.

Key Findings

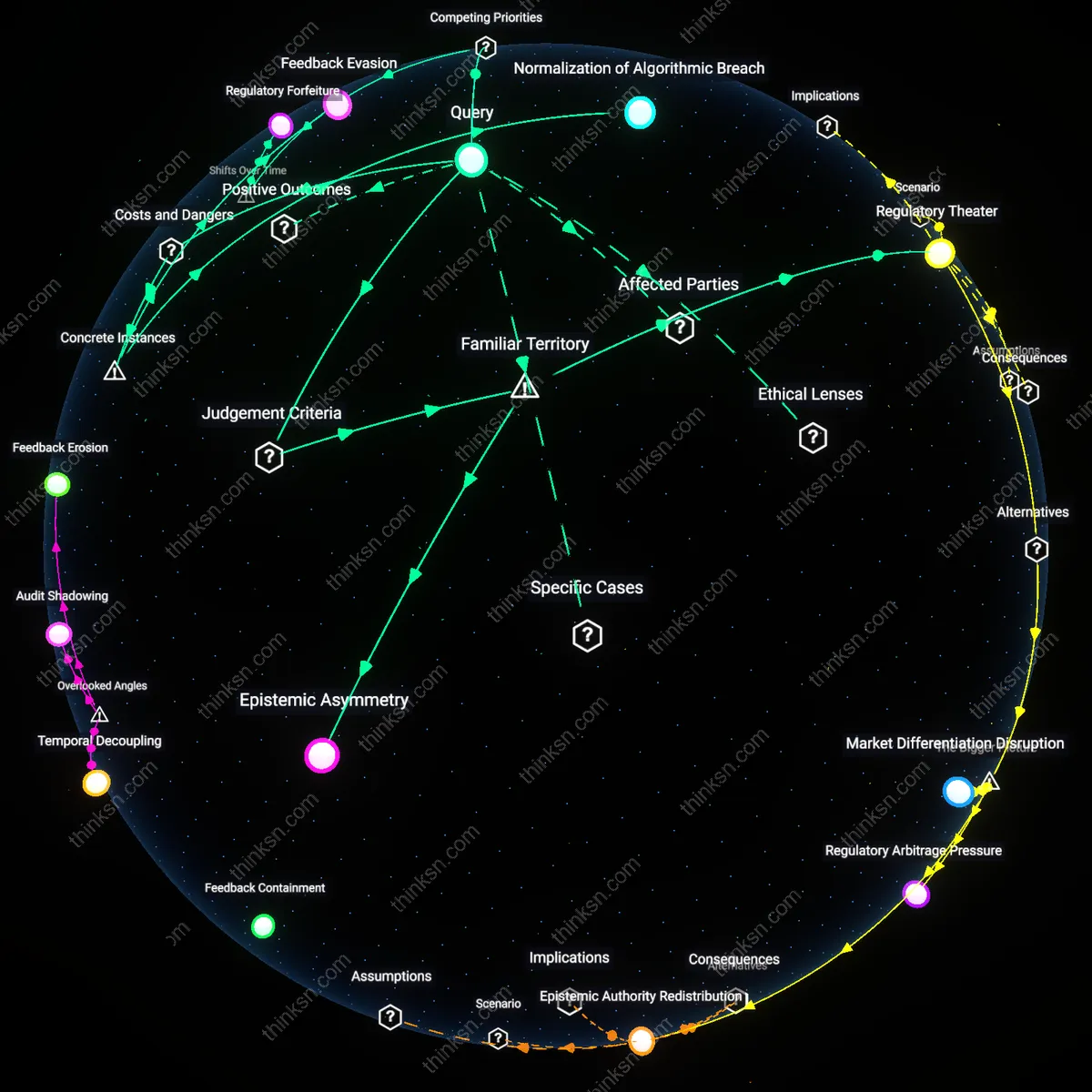

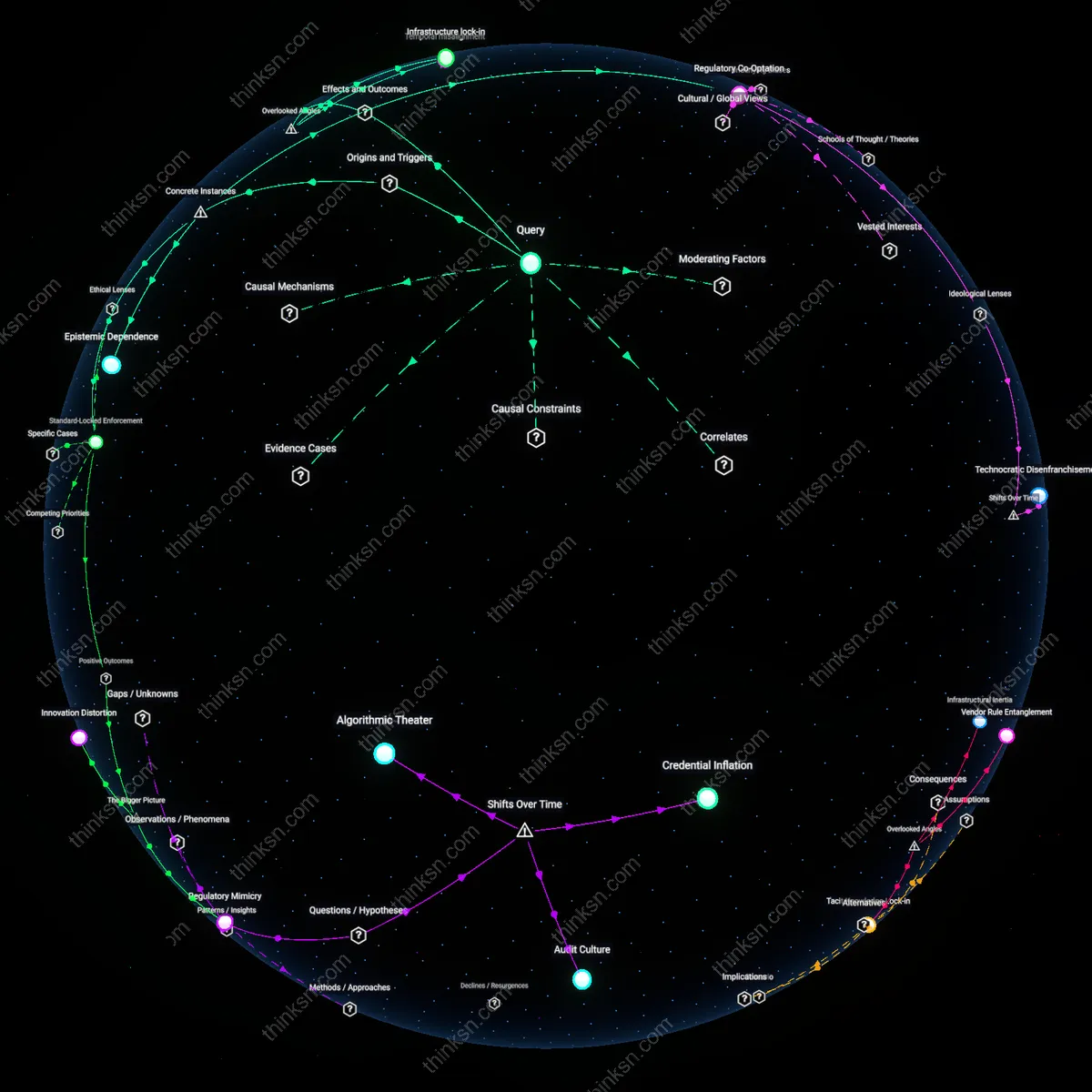

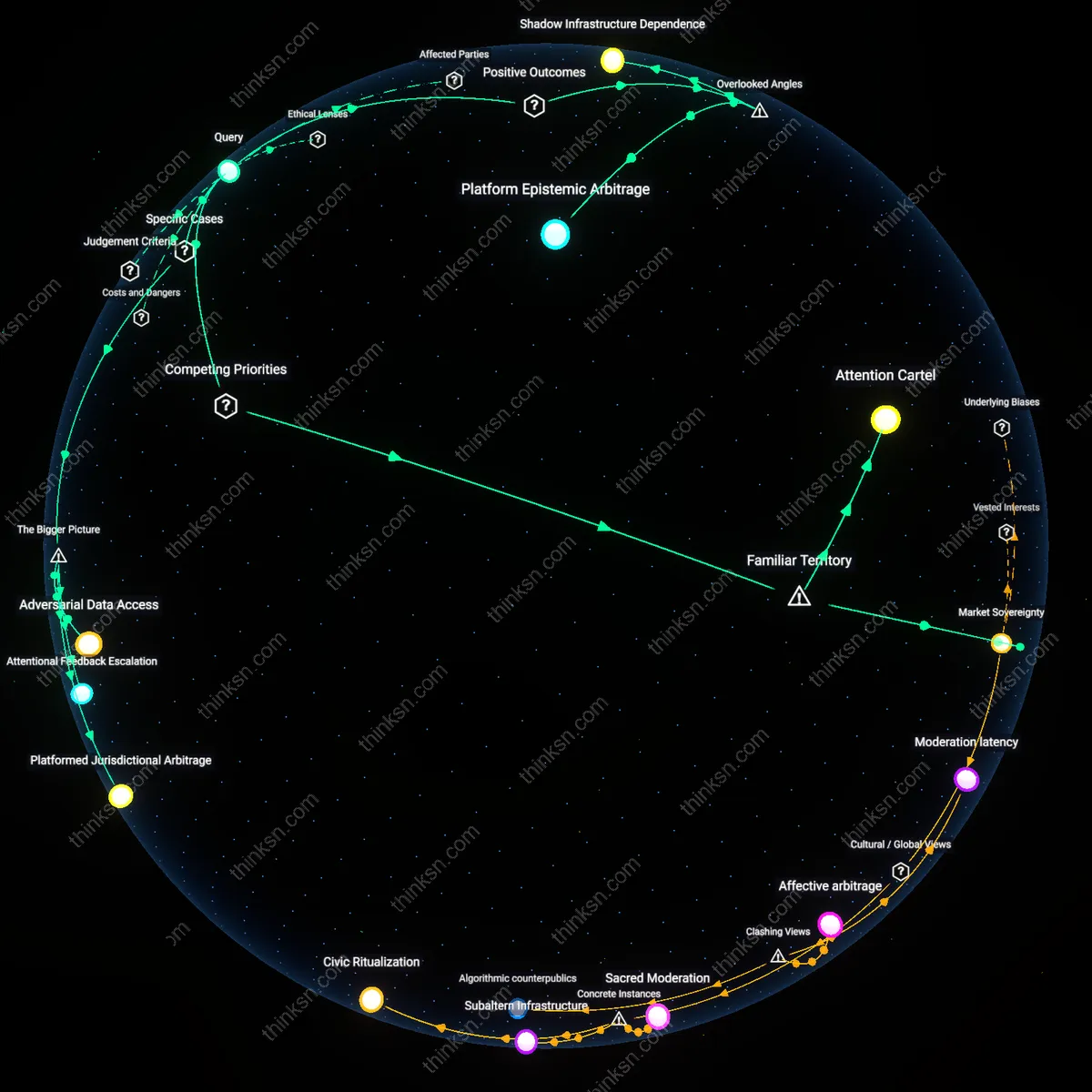

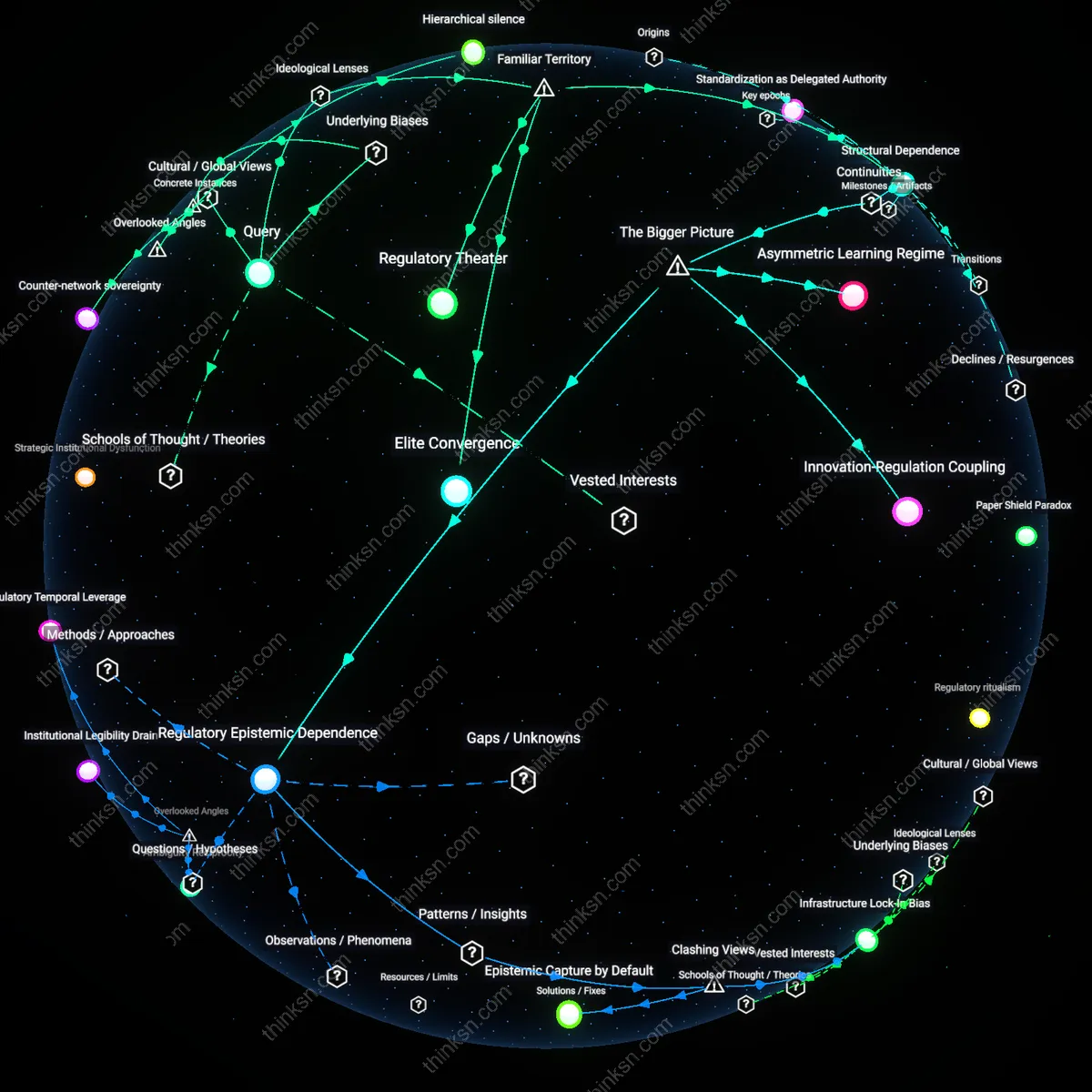

Regulatory Theater

No. U.S. reliance on voluntary AI safety codes is unjustifiable because it replicates the structural failure of self-policing seen in the financial and environmental sectors, where industry actors design standards that minimize compliance costs and maximize public trust without reducing systemic risk. The mechanism is institutional isomorphism: agencies like NIST or the White House AI Initiative legitimize frameworks that firms then implement selectively, creating the appearance of action without enforceable thresholds. What’s underappreciated is that the harm lies not only in ineffectiveness but in the codes’ success at preempting binding regulation by satisfying political demand for ‘responsiveness.’ In that sense they function as regulatory theater.

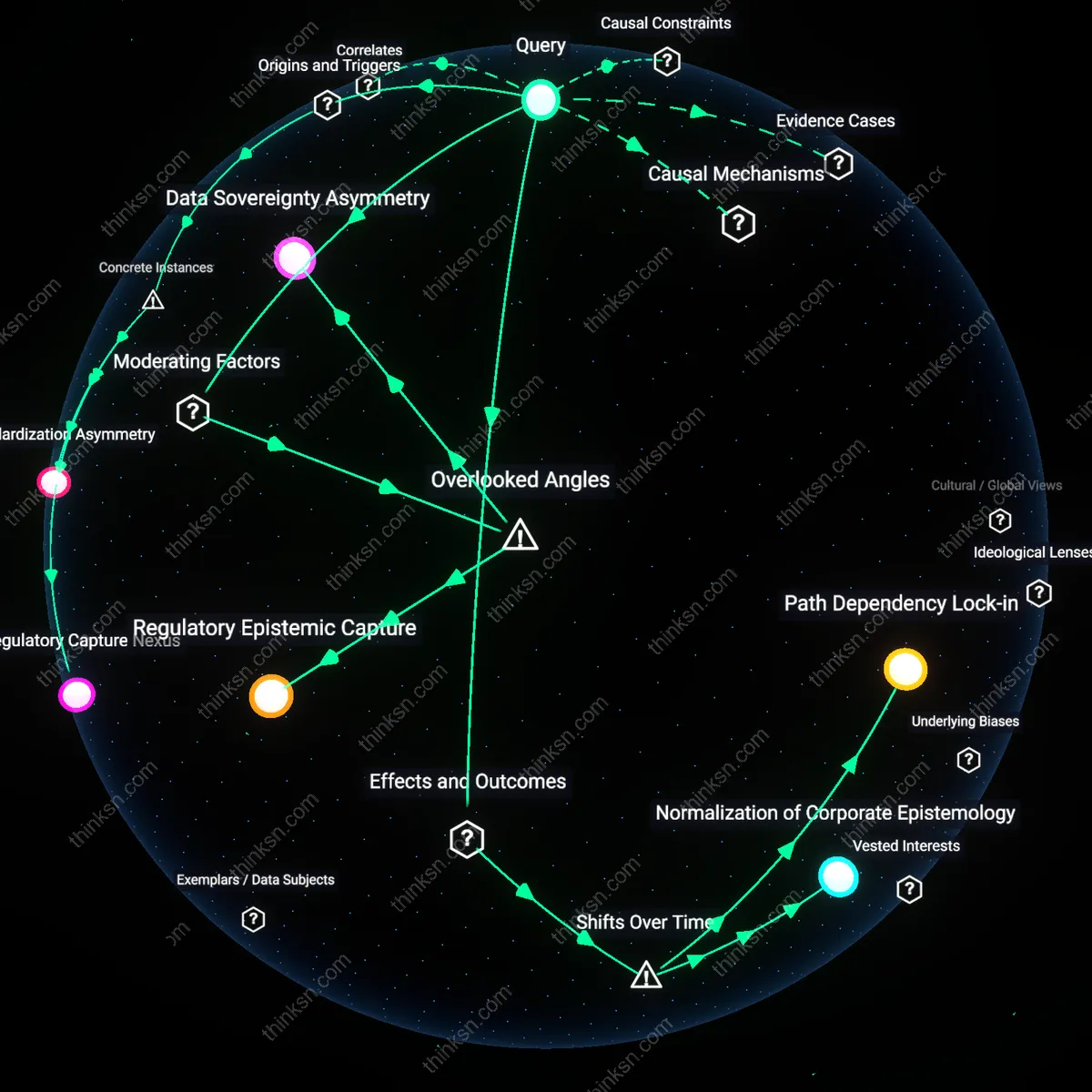

Epistemic Asymmetry

No, voluntary codes are unjustifiable because they institutionalize epistemic asymmetry: developers at firms like OpenAI or Anthropic possess granular knowledge of model behavior that auditors, regulators, and affected communities cannot access under current transparency limits. This imbalance disables meaningful oversight, as seen in algorithmic bias cases involving hiring or lending tools, where impact assessments rely on proxies rather than ground-truth data. The overlooked reality is that self-reporting doesn’t just fail to prevent harm; it actively distorts public understanding of risk by substituting narrative accountability for empirical verification.

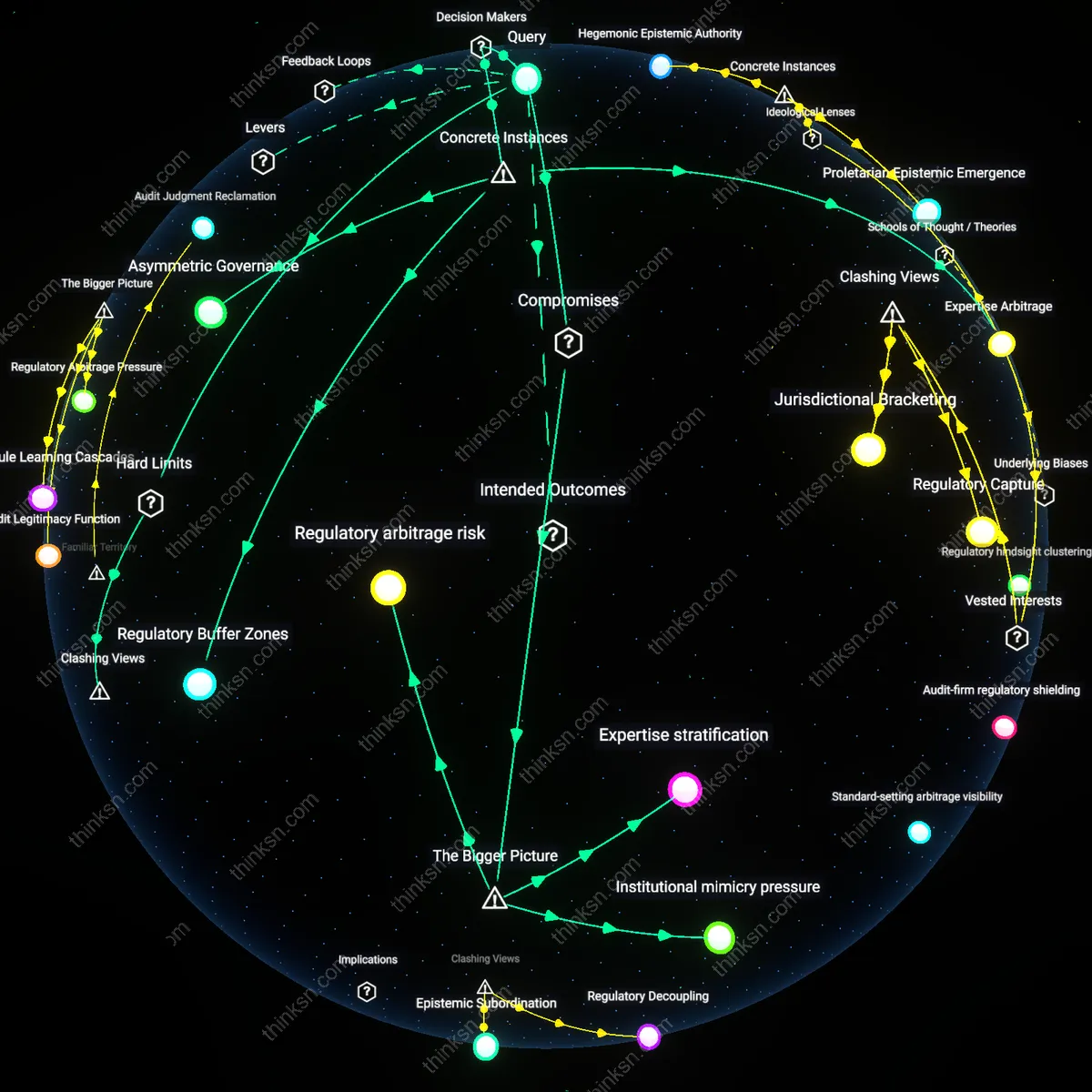

Regulatory Capture by Design

The Federal Trade Commission’s enforcement of Facebook’s 2011 consent decree failed to constrain Cambridge Analytica’s exploitation of up to 87 million user profiles because Facebook (now Meta) retained control over audit mechanisms and data access, allowing it to disclose compliance selectively while expanding its surveillance infrastructure. This mechanism, in which regulators delegate oversight to the regulated entity’s internal processes, enabled documented manipulation by third-party actors embedded in the platform’s architecture. It shows that self-regulation in digital systems functions not as oversight but as institutionalized opacity. What is underappreciated is that the failure was not due to lax enforcement alone: the structure of the agreement embedded the company’s own governance logic into regulatory enforcement, making violations invisible by design.

Feedback Evasion

Google’s voluntary AI Principles, adopted in 2018, did not prevent the firing of AI ethicists Timnit Gebru in 2020 and Margaret Mitchell in 2021, because the code lacked external review mechanisms and enabled leadership to classify ethical dissent as a policy violation. The internal review process for AI fairness became a tool to suppress research on language model biases, particularly those implicating the search and advertising systems central to Google’s revenue. This reveals that voluntary codes can create a legitimacy shield, publicly signaling responsibility while operationally insulating core products from critique. Under such a shield, harm is not an accident but a preserved output of systems designed to evade corrective feedback.

Normalization of Algorithmic Breach

The IRS Free File program, created in 2002 through a voluntary agreement with tax preparation companies including Intuit, allowed Intuit to use the government-endorsed platform to steer low-income filers toward paid TurboTax products. As a result, millions of eligible taxpayers paid for filing they could have done for free, because the agreement lacked monitoring, enforcement triggers, or penalties. The system normalized the conversion of a public service into a lead-generation funnel by permitting the regulated to define compliance boundaries, illustrating that self-regulation in algorithmic systems institutionalizes harm through incremental attrition rather than sudden failure. The underappreciated dynamic is that the breach was not a violation of the code but its intended operation: public-private partnerships become conduits for transferring public value into private profit under the guise of voluntary cooperation.

Regulatory Forfeiture

U.S. reliance on voluntary AI safety codes is a strategic deferral of binding oversight, enabled by deregulatory momentum after the 2008 financial crisis that reframed corporate self-policing as agile innovation stewardship. As federal agencies faced budgetary constraints and political pressure to reduce rulemaking burdens, Silicon Valley positioned algorithmic self-regulation as a faster, more adaptive alternative to bureaucratic oversight, despite accumulating evidence of discriminatory algorithms in mortgage lending and hiring tools. This shift replaced pre-emptive harm mitigation with reactive accountability and normalized the exchange of public accountability for private control over how risk is defined. That outcome reflects not technological necessity but a deliberate recalibration of governance authority after the crash weakened trust in state capacity.

Legibility Deflection

The turn to voluntary standards after 2016 coincided with the rising public visibility of algorithmic bias in criminal justice and social media, exposing a strategic shift in which tech firms reframed safety as an inscrutable technical challenge rather than a governance failure. By advancing codes of conduct rooted in proprietary risk matrices and internal review boards, a practice amplified during the 2020 content moderation debates, companies transformed algorithmic accountability into a problem of engineering precision rather than democratic oversight. This reconfiguration made harms harder to litigate or regulate by embedding responsibility within closed epistemic communities, effectively replacing transparency with technical ritual. Only in hindsight is the transformation legible as a deliberate obfuscation strategy that evolved in response to post-2016 accountability demands.