AI Oversight or Molecular Expertise: The Pathologist’s Dilemma in Diagnostic Errors

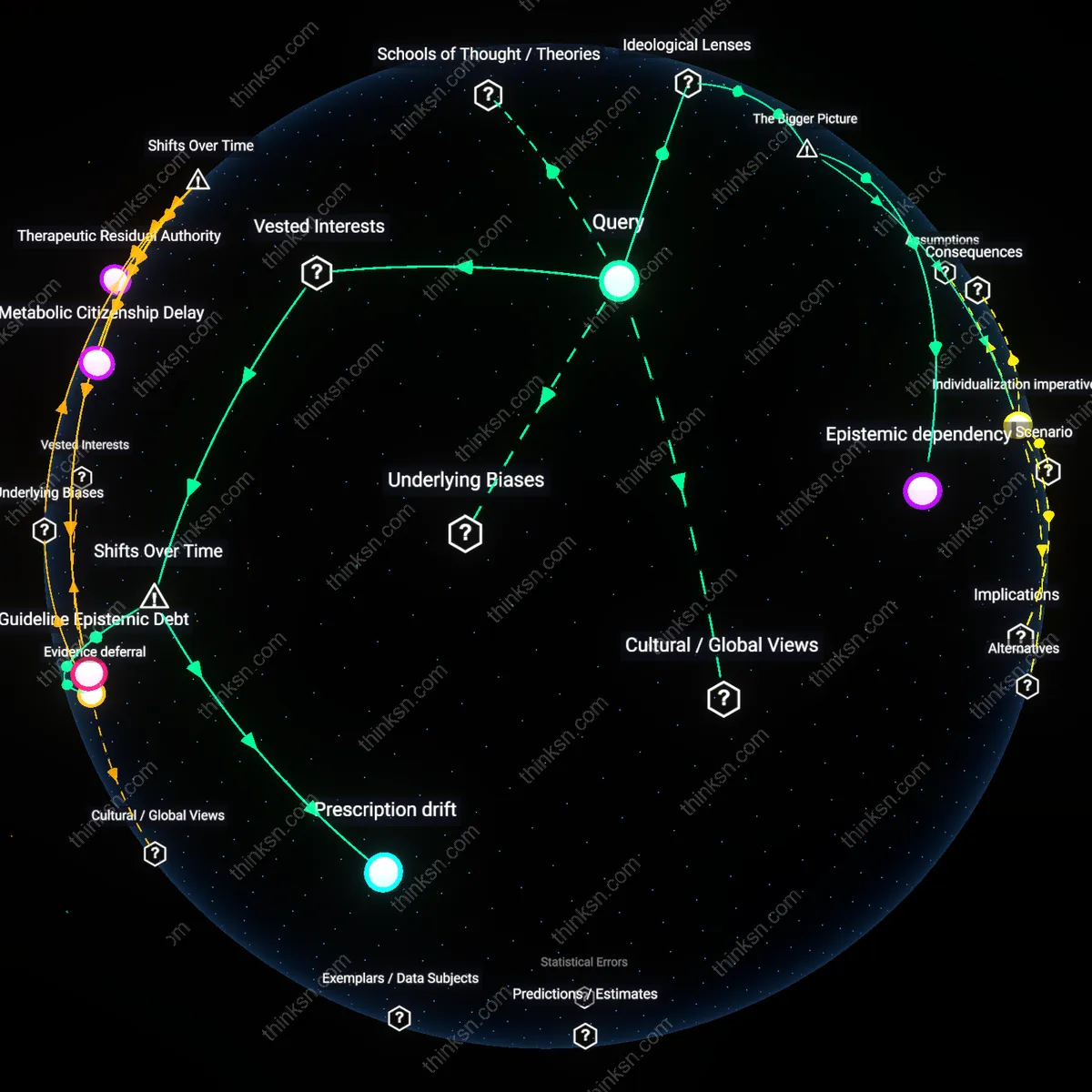

Analysis reveals 12 key thematic connections.

Key Findings

Methodological Debt

A pathologist should prioritize molecular pathology expertise to counterbalance AI’s historical reliance on pattern-matching over mechanistic validation, a shift accelerated by the post-2015 deep learning boom that privileged algorithmic performance on static datasets over biological interpretability. This imbalance produces diagnostic models that replicate morphological correlations without interrogating molecular causality, placing pathologists in the role of retroactively validating AI outputs against ground-truth biomarkers—work that exposes how decades of underinvestment in computational biology infrastructure now manifest as unverified assumptions in AI training sets. The non-obvious risk is not AI inaccuracy per se, but that oversight becomes reactive triage rather than proactive design, perpetuating a cycle where molecular data is used only to clean up after black-box predictions.

Temporal Misalignment

Pathologists must allocate career development toward AI oversight because the clinical integration of AI since the early 2020s has created a regulatory and operational lag, where validation protocols remain rooted in pre-digital histopathology paradigms that assume human-perceivable features as gold standards. As AI systems detect subvisual patterns from whole-slide images—patterns that emerged as actionable only after the TCGA-era molecular cataloging of tumor heterogeneity—the gap widens between what algorithms identify and what pathologists are trained to confirm, shifting diagnostic authority toward software with unmonitored drift. The underappreciated dynamic is that molecular pathology, once seen as the future of precision, is now being pulled backward to verify AI findings rather than drive discovery, revealing a timeline inversion in diagnostic logic.

Validation Burden

The pathologist should strategically engage in AI oversight roles because the post-2018 transition to FDA-cleared AI tools in surgical pathology has decentralized diagnostic risk, shifting the responsibility for ongoing performance monitoring from developers to individual laboratories—where molecular testing becomes the default mechanism for discordant case resolution. This institutionalizes a feedback loop in which molecular pathology resources are consumed not for primary classification, but to audit AI outputs, effectively turning molecular labs into verification engines for machine predictions that were trained on datasets lacking genomic correlates. The overlooked consequence is that molecular expertise is being structurally depleted from research and innovation pipelines, as its utility is reduced to a corrective afterthought, thereby entrenching dependence on algorithms with opaque validation histories.
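The audit loop described above can be made concrete with a small sketch. It assumes a hypothetical record format in which each case carries an AI call and, where molecular testing was run, a molecular-confirmed call. The `Case` fields and the `discordance_report` helper are illustrative names, not part of any vendor system or laboratory standard.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Case:
    case_id: str
    ai_call: str                   # label produced by the AI tool (hypothetical field)
    molecular_call: Optional[str]  # molecular-confirmed label, None if not tested


def discordance_report(cases):
    """Compare AI calls with molecular-confirmed calls on the tested subset."""
    tested = [c for c in cases if c.molecular_call is not None]
    discordant = [c.case_id for c in tested if c.ai_call != c.molecular_call]
    rate = len(discordant) / len(tested) if tested else 0.0
    return {"tested": len(tested), "discordant_ids": discordant, "rate": rate}


# Illustrative data only: four cases, one of which never went to molecular testing.
cases = [
    Case("A1", "malignant", "malignant"),
    Case("A2", "benign", "malignant"),
    Case("A3", "benign", None),
    Case("A4", "malignant", "malignant"),
]
report = discordance_report(cases)
print(report)
```

The rate is computed only over cases that reached molecular testing, so it inherits whatever selection rule sends cases to the molecular lab. If only suspected discordances are tested, the figure overstates the error rate of the tool across all cases.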

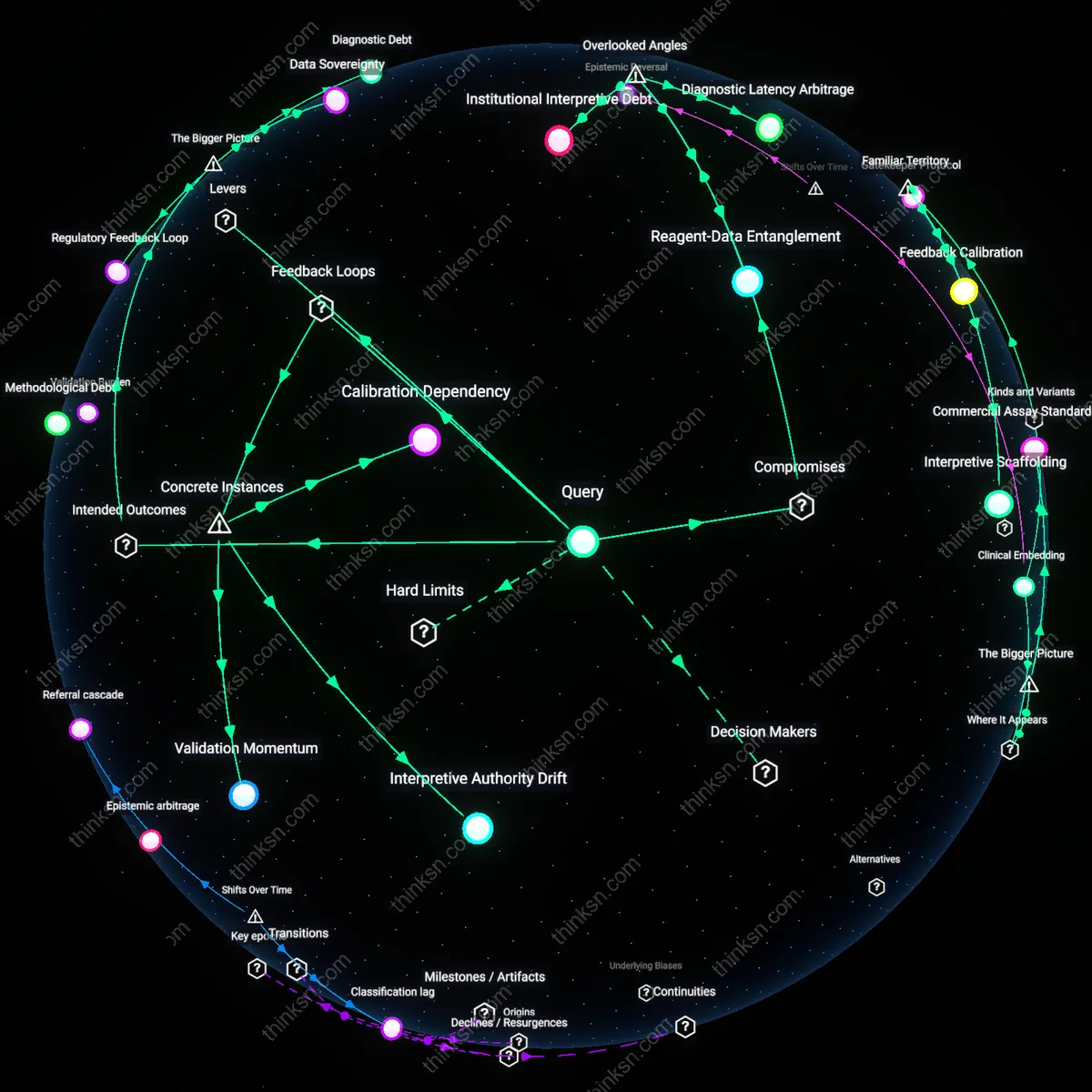

Calibration Dependency

A pathologist should prioritize molecular pathology expertise to serve as a biological ground-truth anchor against which AI diagnostic outputs are iteratively calibrated. The case of IBM Watson for Oncology, trained at Memorial Sloan Kettering Cancer Center, is instructive: its recommendations were reported to deviate from clinical reality because the system had been trained on synthetic, non-validated case data. The episode suggests that AI oversight fails without continuous recalibration against wet-lab-confirmed molecular profiles. Such recalibration creates a balancing loop in which molecular findings correct AI overreach and keep diagnoses stable, a dynamic rarely acknowledged in AI integration policies that assume algorithmic competence after deployment.
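One way to picture calibration against molecular ground truth is an expected calibration error (ECE) check. This is a minimal sketch, assuming the AI tool exposes a confidence score per case and that "correct" means the AI call matched the wet-lab-confirmed result. The function name, the bin count, and the sample numbers are illustrative assumptions.

```python
def expected_calibration_error(confidences, correct, n_bins=5):
    """Weighted mean gap between stated confidence and observed accuracy per bin."""
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # Clamp so that a confidence of exactly 1.0 falls in the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        mean_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / total) * abs(mean_conf - accuracy)
    return ece


# Illustrative data only: the tool reports 0.9 confidence on four cases,
# but molecular confirmation agrees with just two of them.
ece = expected_calibration_error([0.9, 0.9, 0.9, 0.9], [True, True, False, False])
print(round(ece, 3))
```

A large gap on molecularly confirmed cases is the kind of signal that would prompt recalibration. A small gap says nothing about cases that were never confirmed.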

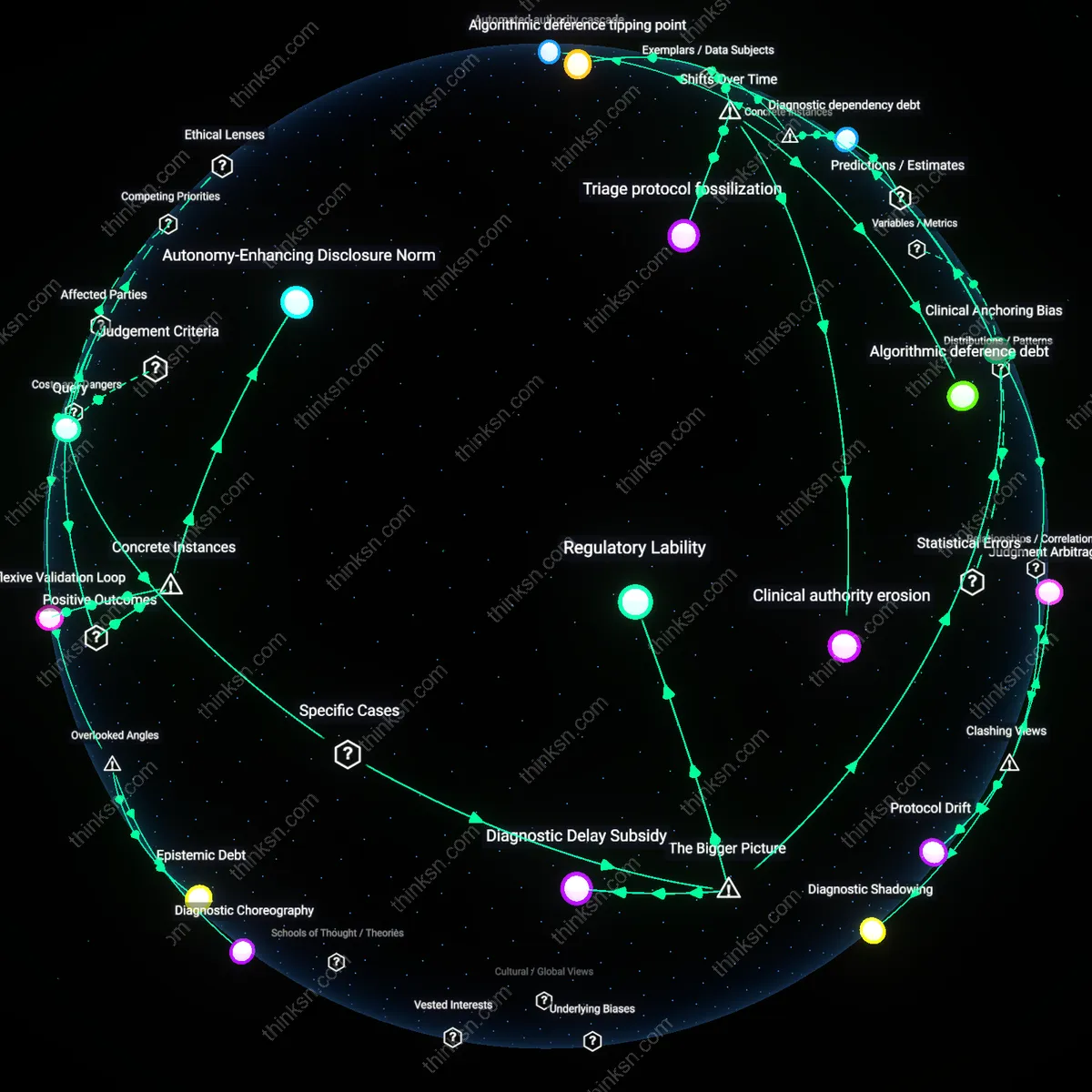

Interpretive Authority Drift

Pathologists must expand oversight roles in AI systems to prevent erosion of diagnostic accountability. The 2017 deployment of automated Pap smear analysis in Sweden’s national cervical screening program exemplifies the risk: AI reduced pathologist workload but concentrated interpretive authority in opaque algorithmic decisions. This triggered a reinforcing loop in which reduced human case exposure degraded rare-morphology recognition skills over time, which in turn increased reliance on AI. The feedback cycle shows how technical efficiency can silently transfer clinical authority from clinicians to machines unless it is actively counterbalanced.

Validation Momentum

Investing in molecular pathology generates self-sustaining diagnostic credibility when it is used to audit AI performance. The Molecular Tumor Board at the University of California, San Diego, demonstrates this: it mandated variants confirmed by next-generation sequencing (NGS) as discordance checks for AI-driven tumor profiling. The result was a reinforcing feedback loop in which molecular validation successes increased institutional trust in human-led review, which in turn expanded funding and staffing for molecular labs. Technical rigor in biology can thus generate organizational inertia that resists premature automation.

Diagnostic Latency Arbitrage

A pathologist should prioritize molecular pathology over AI oversight because, in time-sensitive therapies, slow molecular diagnostics open a covert window in which faster but imperfect AI interpretations can degrade actionable insight. This dynamic operates through tumor board decision timelines in academic medical centers, where treatment plans are often locked before AI analyses have been validated. What is overlooked is that AI’s speed advantage is not neutral: it actively distorts therapeutic urgency, so investing in molecular depth is a quiet trade in favor of clinical coherence over algorithmic throughput.

Reagent-Data Entanglement

A pathologist should anchor their career in molecular pathology because molecular workflows generate proprietary, reagent-dependent data streams that constrain future AI model retraining, tying diagnostic autonomy to supply chains dominated by a few biotech firms such as Roche and Illumina. This dependency creates a hidden governance role for pathologists who control tissue allocation, an influence rarely acknowledged in AI ethics debates. Most oversight discussions assume data is freely malleable. In reality, the chemical origin of molecular data dictates its digital destiny, which makes bench-level choices structurally strategic.

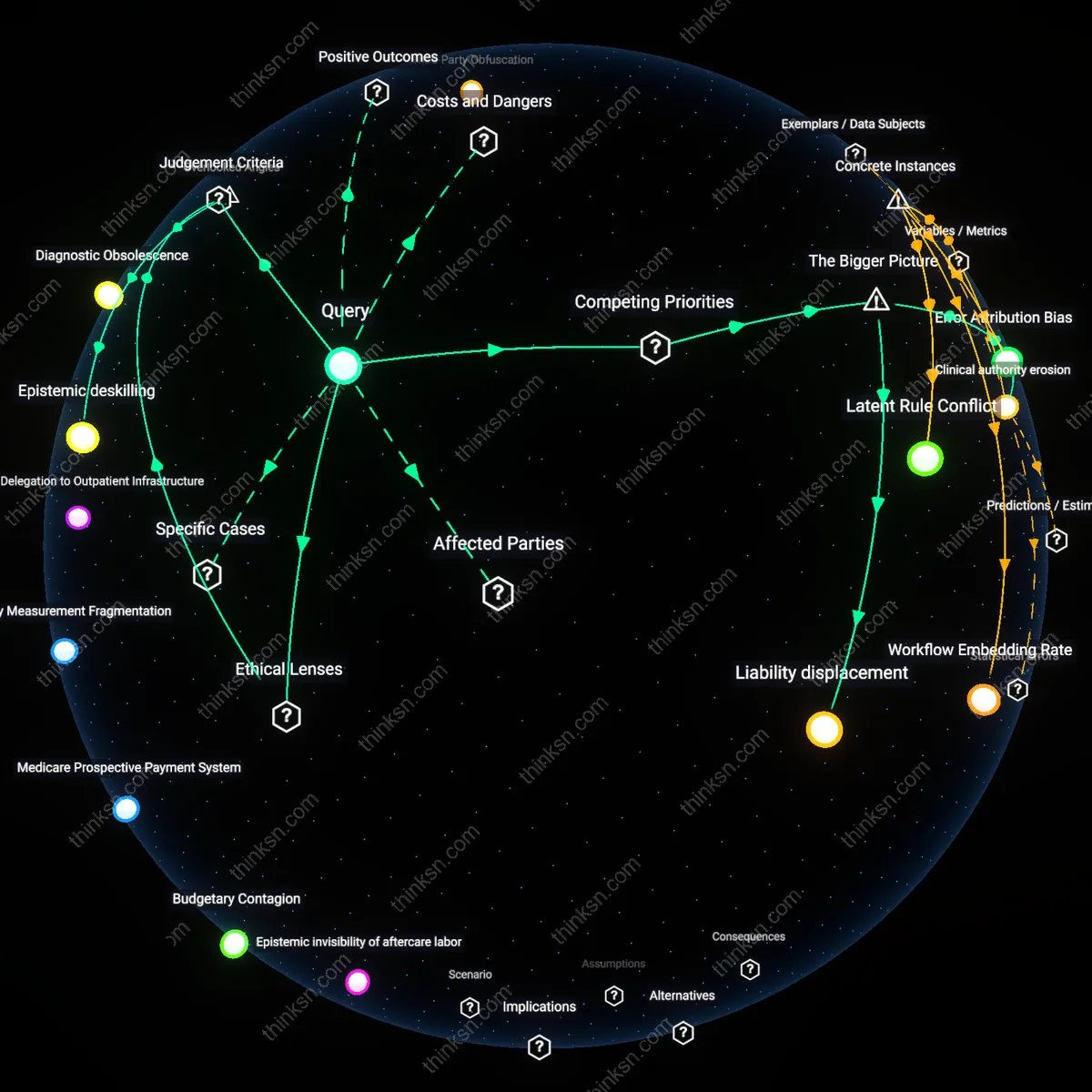

Institutional Interpretive Debt

A pathologist should invest in molecular pathology to mitigate institutional interpretive debt: the accumulating cost of retraining clinicians to trust AI-generated conclusions that conflict with long-standing morphologic intuition. This plays out in community hospitals, where senior oncologists rely on pathologist narratives based on H&E stains and each AI disruption forces diagnostic consensus to be renegotiated at systemic cost. The overlooked mechanism is that AI oversight does not just evaluate algorithms but also erodes experiential epistemology, making molecular expertise a stabilizing collateral that grounds trust in measurable biology rather than probabilistic outputs.

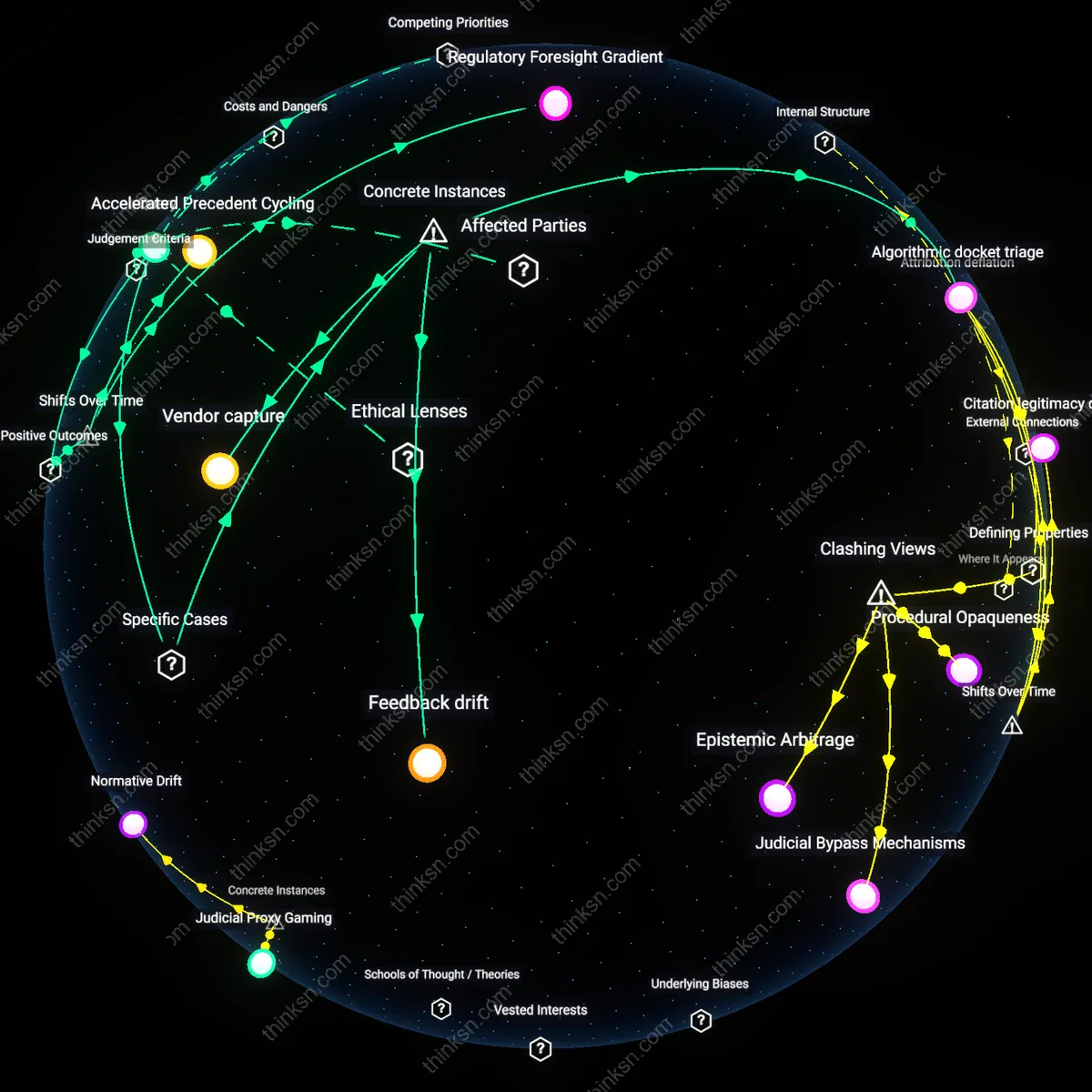

Regulatory Feedback Loop

A pathologist should prioritize molecular pathology expertise now to shape emergent AI validation standards within clinical laboratories, because senior pathologists with domain-specific knowledge are being recruited by the College of American Pathologists and FDA to define what counts as clinically acceptable AI performance in oncologic diagnostics. This positions molecular pathologists as arbiters of the evidence that regulators will require for AI clearance, turning technical specialization into institutional influence over how accuracy claims are verified and enforced. The non-obvious consequence is that early-career investment in molecular training creates indirect leverage over AI’s integration pace and reliability thresholds—making scientific credibility a regulatory gatekeeping tool.

Diagnostic Debt

A pathologist should weight AI oversight as a long-term leadership opportunity because health systems adopting AI in histopathology without robust internal audit mechanisms will accumulate undetected diagnostic discrepancies that only pathologists can identify and remediate. As enterprise AI vendors push deployment timelines under pressure from hospital CFOs to reduce turnaround costs, pathologists who develop oversight competence become critical absorbers of technical risk, preventing downstream liability from false-negative cancer calls masked by algorithmic overconfidence. This reveals that AI oversight is not just an interpretive task but an infrastructural role, one in which pathologists function as institutional shock absorbers for systemic inaccuracy, delaying or accelerating trust based on their capacity to detect silent failures.
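An internal audit mechanism of the kind described here could take the form of a rolling monitor over audited AI-negative cases. The sketch below is one possible shape, assuming a laboratory re-reviews a sample of AI-negative cases and records whether the review found malignancy. The class name, window size, and alert threshold are hypothetical choices, not recommended values.

```python
from collections import deque


class FalseOmissionMonitor:
    """Rolling share of AI-negative cases that an audit found to be positive."""

    def __init__(self, window=50, alert_rate=0.05):
        self.results = deque(maxlen=window)  # True = AI said negative, audit said positive
        self.alert_rate = alert_rate

    def record(self, ai_negative, audit_positive):
        # Only AI-negative cases can reveal a missed cancer call.
        if ai_negative:
            self.results.append(bool(audit_positive))

    def rate(self):
        return sum(self.results) / len(self.results) if self.results else 0.0

    def alert(self):
        # Wait for a full window so a single early miss does not trigger an alarm.
        window_full = len(self.results) == self.results.maxlen
        return window_full and self.rate() > self.alert_rate


# Illustrative data only: five audited cases, one of which the AI called positive.
monitor = FalseOmissionMonitor(window=4, alert_rate=0.25)
for ai_negative, audit_positive in [(True, False), (True, True), (False, True),
                                    (True, False), (True, True)]:
    monitor.record(ai_negative, audit_positive)
print(monitor.rate(), monitor.alert())
```

The monitor tracks the false omission rate (missed positives among AI-negative calls), not sensitivity, because a laboratory auditing negatives sees only that slice. The rolling window is what makes silent degradation visible: a rate that was acceptable at validation can rise later without any single case looking alarming.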

Data Sovereignty

A pathologist should strategically balance molecular pathology with AI oversight by securing control over local tumor sequencing and imaging data pipelines, because academic medical centers with annotated, high-grade biospecimen datasets are becoming the de facto validators of AI model generalizability across diverse populations. When AI developers rely on institutionally siloed data to train classifiers, pathologists who steward biorepositories and integrated genomic databases gain leverage to demand transparency and co-development rights, turning data access into a bargaining chip for accuracy improvement. This shifts power from algorithm creators to data custodians, making pathology departments not just end-users but co-determinants of AI reliability through selective data sharing.