Do Dark Patterns in Health Apps Undermine User Autonomy for Corporate Gain?

This analysis identifies six key thematic connections.

Key Findings

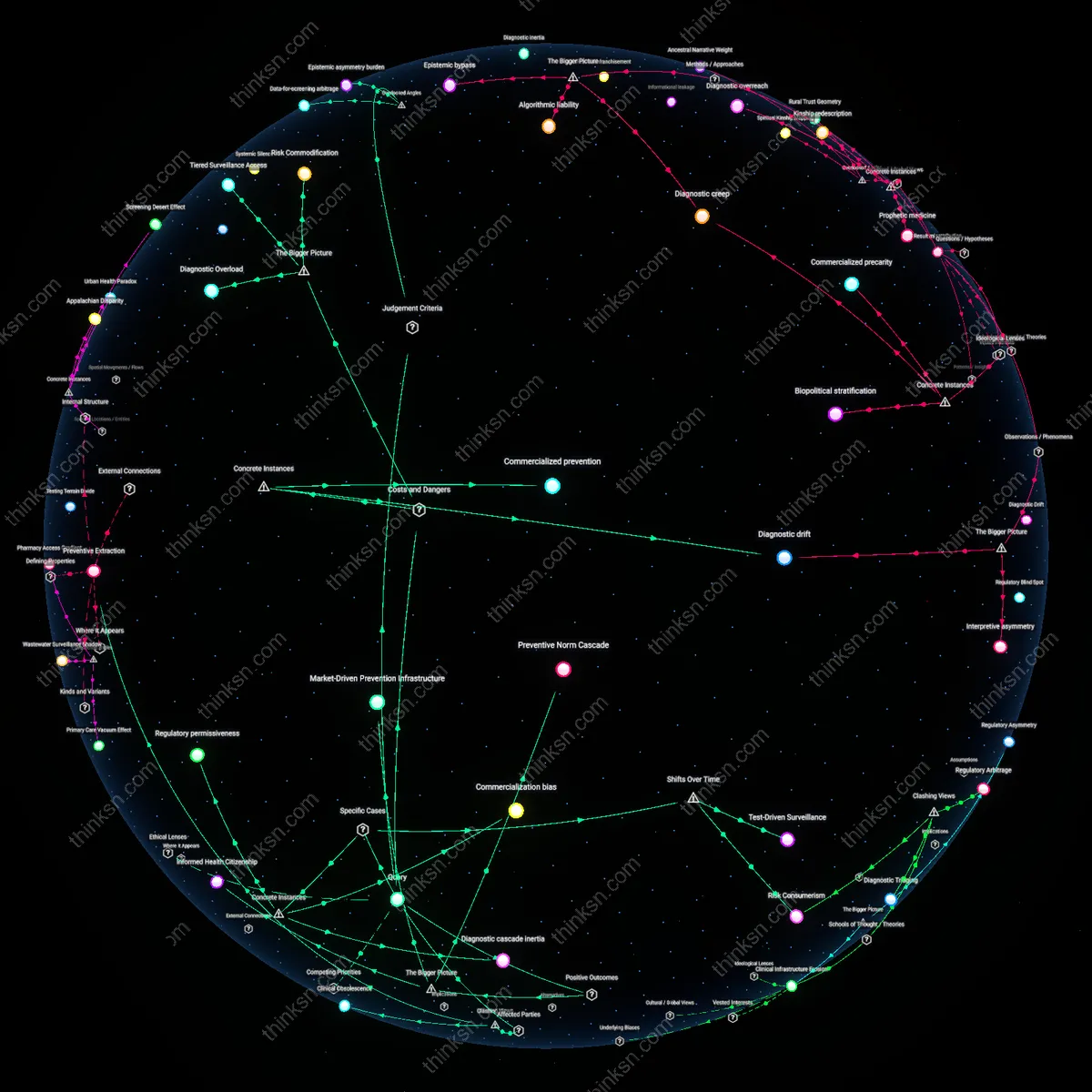

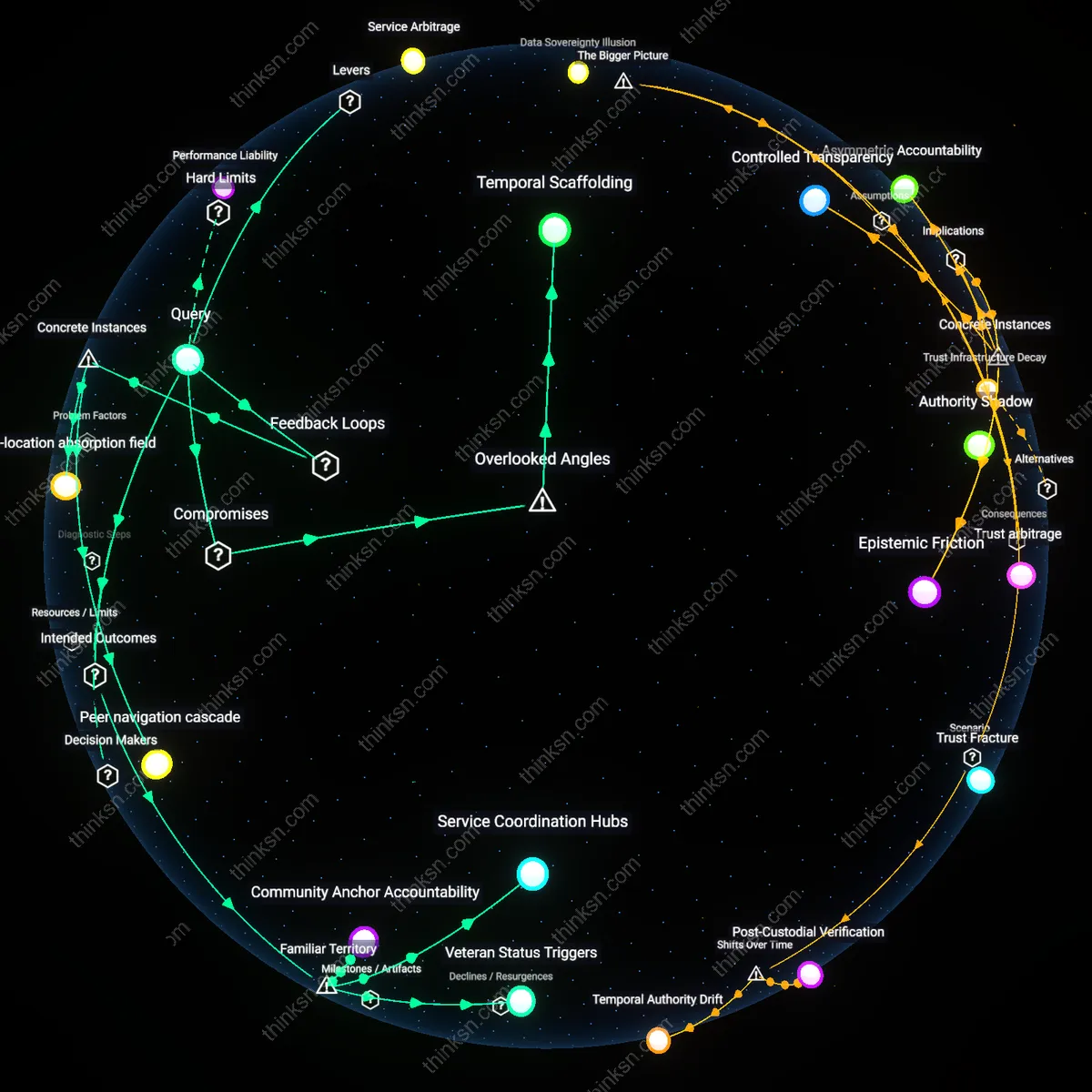

Behavioral Compliance Infrastructure

The widespread use of dark-pattern consent interfaces in health-tracking apps reveals that oversight bodies, such as medical ethics boards and digital privacy agencies, are inadvertently complicit in normalizing user coercion. They approve apps that meet procedural requirements, such as displaying a consent form, while ignoring interface designs that undermine informed choice. This happens because accreditation processes treat data consent as a checkbox rather than a cognitive experience, privileging legal defensibility over actual user understanding and shifting the burden of resistance onto people who are already managing health vulnerabilities. What remains overlooked is how institutional risk management in public health digitization creates a behavioral compliance infrastructure: user autonomy is not actively suppressed but systematically disengaged through accepted UX standards.

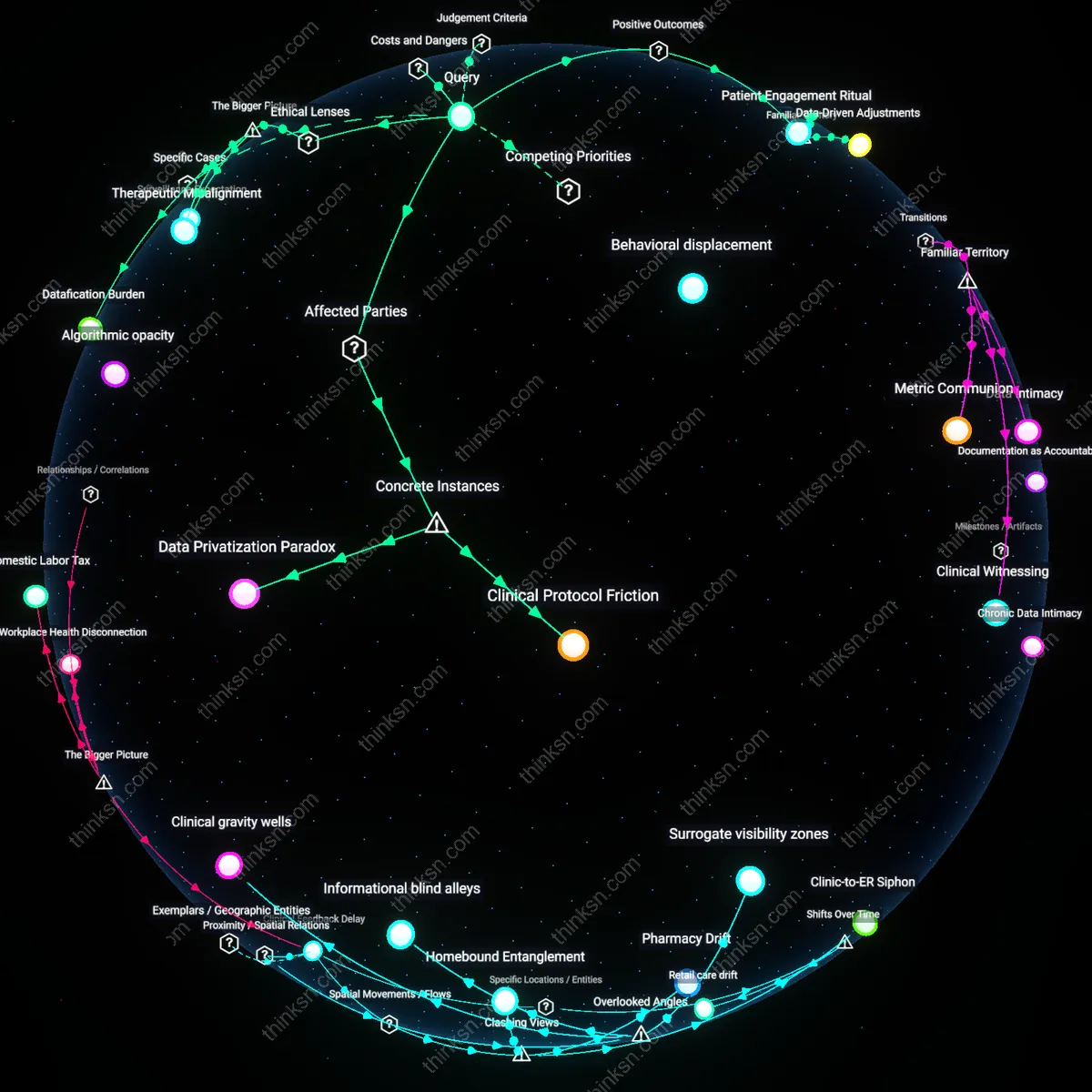

Affective Labor of Trust

Patients with chronic illnesses, particularly those who rely on continuous health monitoring, bear the affective labor of trust when navigating dark-pattern consent interfaces: their medical continuity depends on the app working, even when they know their data may be exploited. These users often disable privacy protections or accept opaque terms not out of ignorance but as a tacit survival strategy, trading future data risks for immediate care utility, a dynamic amplified in populations with low digital literacy. The overlooked mechanism is that exercising autonomy becomes a form of emotional and cognitive labor, because maintaining one's health requires a sustained effort to manage distrust. Corporate data collection is therefore sustained not only by deception but also by extracting affective labor from vulnerable patient groups.

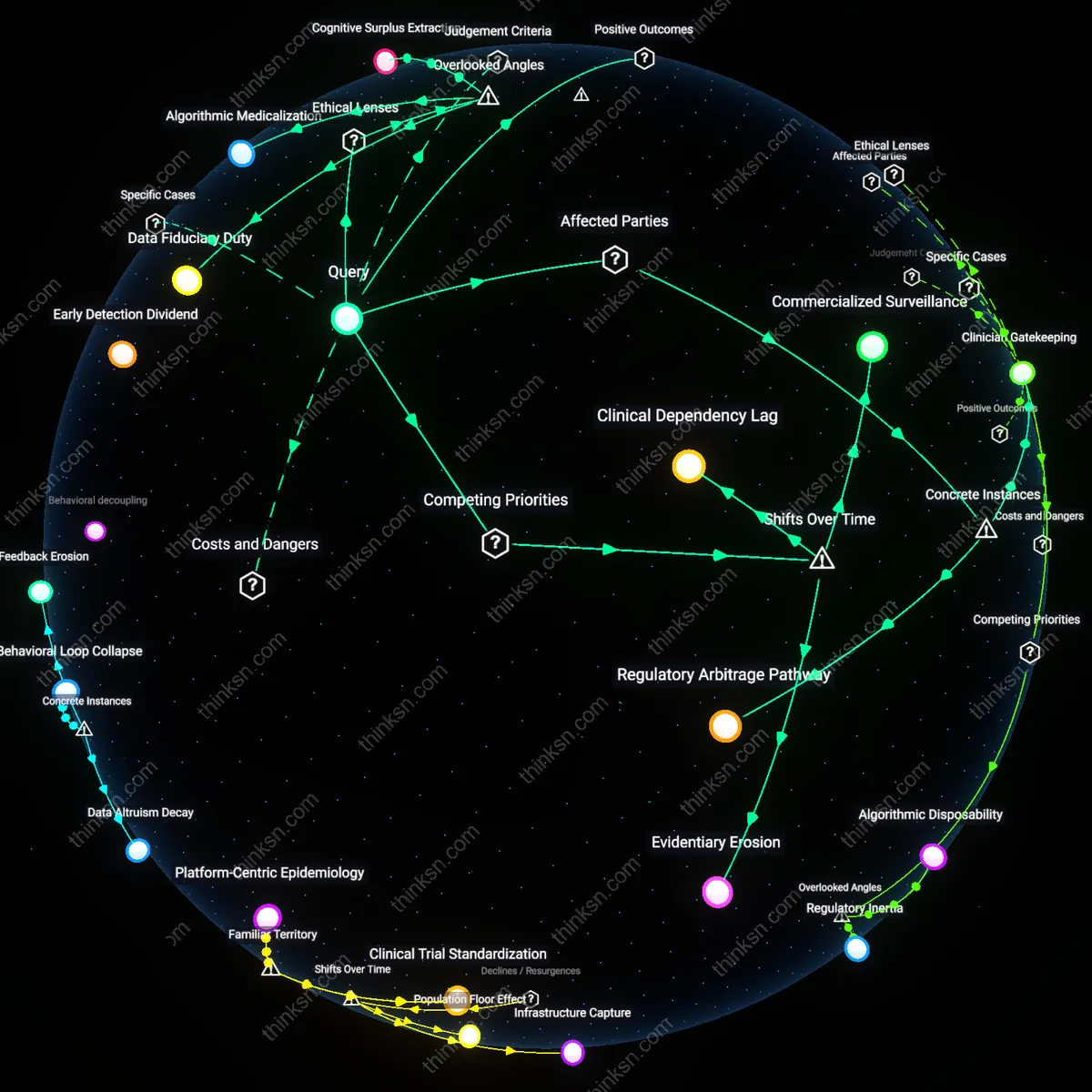

Institutional Data Dependency Chains

Healthcare institutions that integrate third-party app data into clinical decision-making (for example, hospital networks using Apple Health or Fitbit data for preventive care) unwittingly reinforce corporate data collection by creating institutional demand for continuous user data flows, even when those flows originate in manipulative consent designs. Because these institutions prioritize data accessibility over data provenance, they form dependency chains that reward app developers for maximizing data extraction, regardless of how ethically users were onboarded. The hidden dynamic is that clinical validation of consumer health data acts as an indirect subsidy for surveillance architectures. User autonomy thus becomes a casualty not of corporate malice alone but of the institutional normalization of ethically compromised data pipelines.

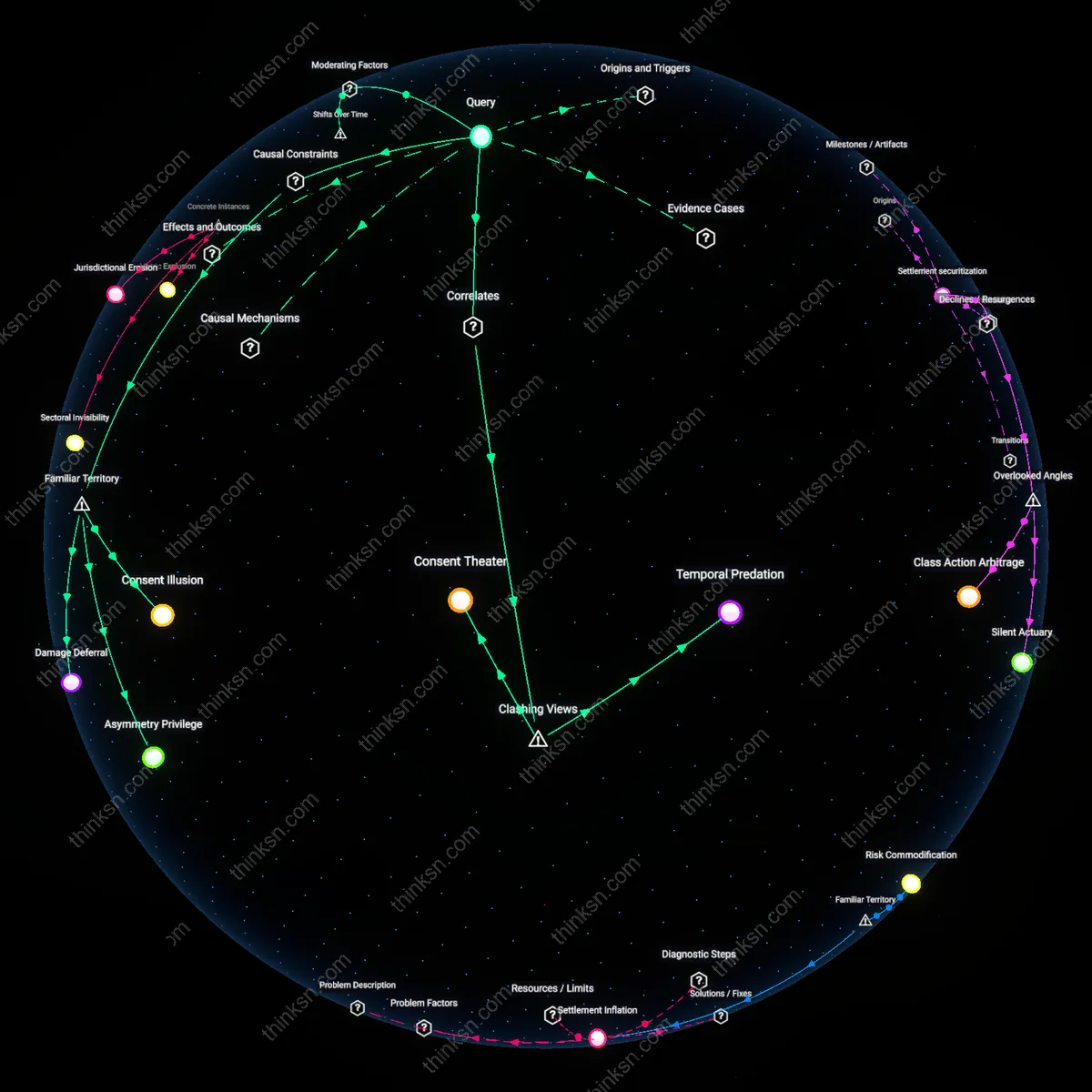

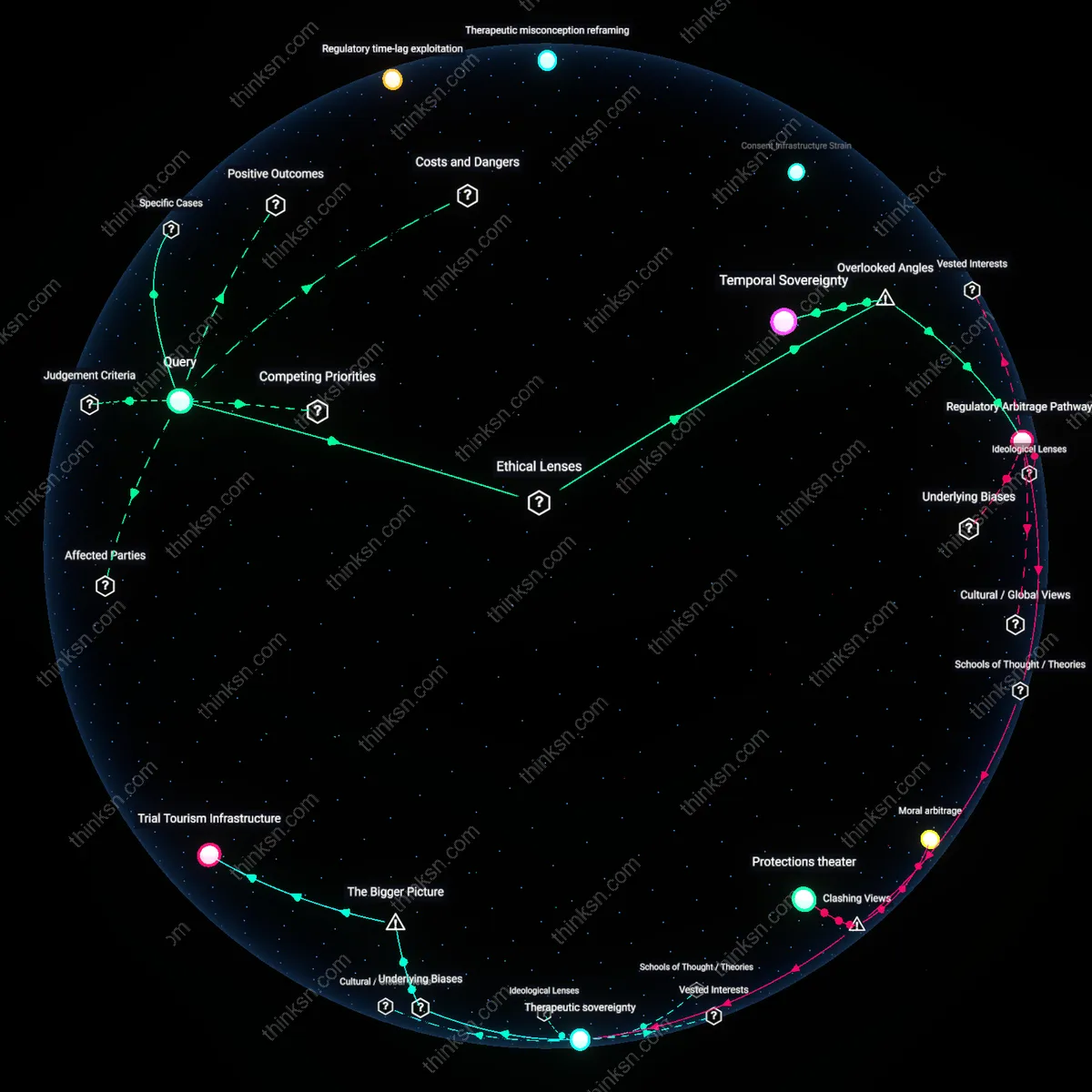

Regulatory Arbitrage Pressure

The widespread use of dark-pattern consent interfaces in health-tracking apps lets tech firms maximize data extraction while appearing compliant with privacy regulations, reducing legal risk without sacrificing commercial utility. Firms design interfaces that exploit gaps in the law and in its enforcement. Under the GDPR, pre-ticked boxes do not constitute valid consent, yet pre-selected options and confusing opt-out flows persist because they are rarely audited. HIPAA, meanwhile, generally does not apply to consumer apps that operate outside covered entities and their business associates. Either way, firms can claim that consent was obtained even when autonomy is materially undermined. The mechanism hinges on regulatory divergence and weak enforcement capacity: oversight bodies often lack the technical fluency or resources to evaluate interface design as a compliance issue. What is non-obvious is that the persistence of dark patterns reflects not regulatory failure alone but a systematic incentive structure that rewards firms for mimicking compliance rather than enacting it.

Data Volume Imperative

Corporate reliance on large health datasets to train predictive algorithms and refine targeted services drives the normalization of coercive consent design in health apps. Firms such as fitness platform providers and digital therapeutics developers depend on continuous, granular user input (sleep cycles, heart rate, activity levels) to improve the machine learning models that differentiate their subscription products. Because model accuracy generally improves with data scale and diversity, product teams face internal pressure to minimize user drop-off at consent stages, which leads to interface designs that subtly discourage refusal. The non-obvious insight is that dark patterns here emerge not necessarily from malice but from a systemic need to feed algorithmic engines, showing how infrastructure demands can reshape ethical boundaries in UX design.

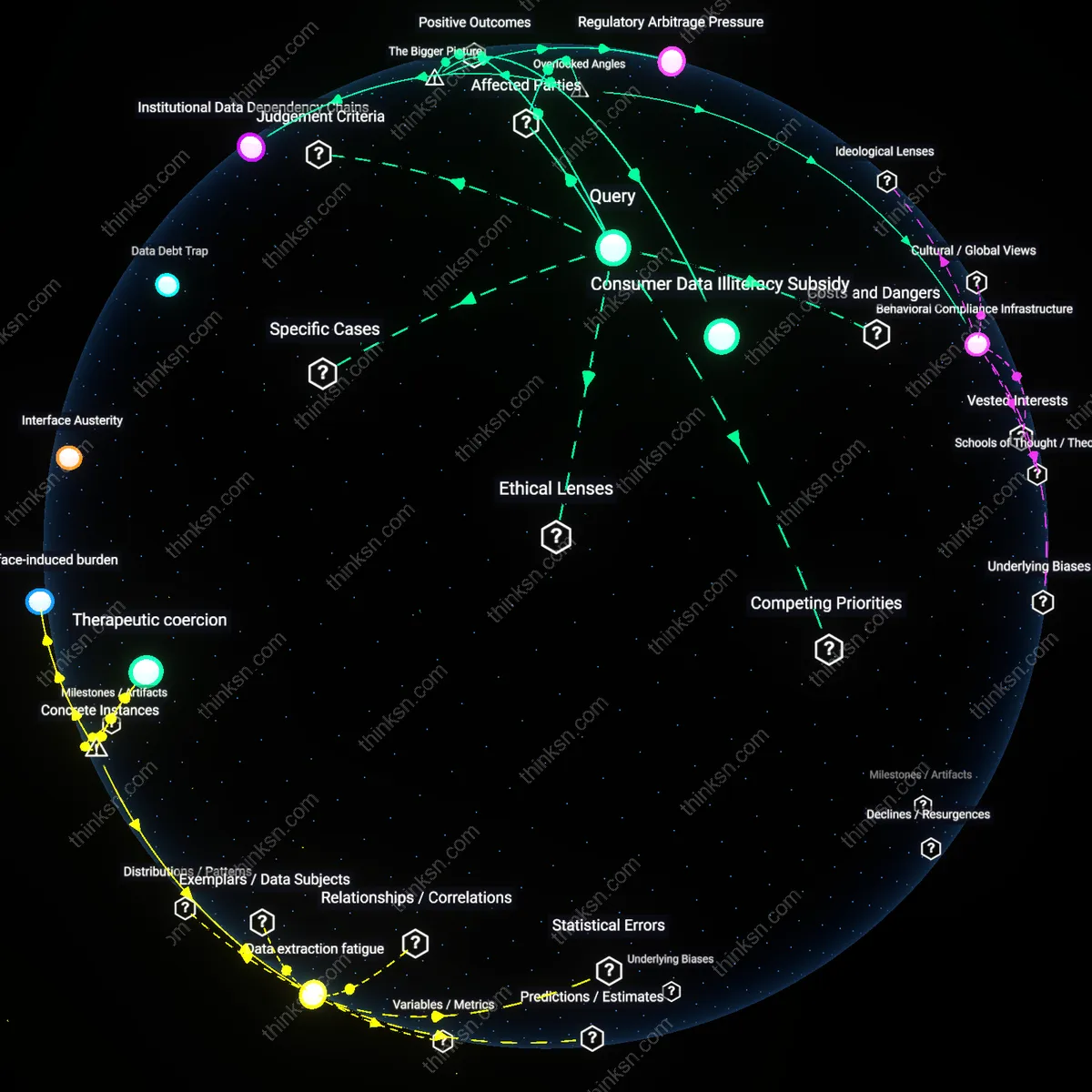

Consumer Data Illiteracy Subsidy

The effectiveness of dark-pattern consent interfaces in health-tracking apps depends on, and reinforces, widespread public misunderstanding of data rights and digital privacy trade-offs, allowing corporations to extract behavioral surplus with minimal resistance. Most users lack both the technical knowledge to interpret data-sharing implications and the cognitive bandwidth to scrutinize every prompt, particularly in health contexts, where perceived personal benefit (e.g., wellness insights) overrides caution. This dynamic is amplified by the absence of standardized public digital literacy programs in countries like the U.S., where health technology operates in a privatized, market-driven ecosystem. The underappreciated point is that corporate data strategies are structurally dependent on this knowledge asymmetry: it is not a bug but an enabling condition for scalability.