Does Delegating Lesson Planning to AI Risk Educator Skill Atrophy?

Analysis reveals 6 key thematic connections.

Key Findings

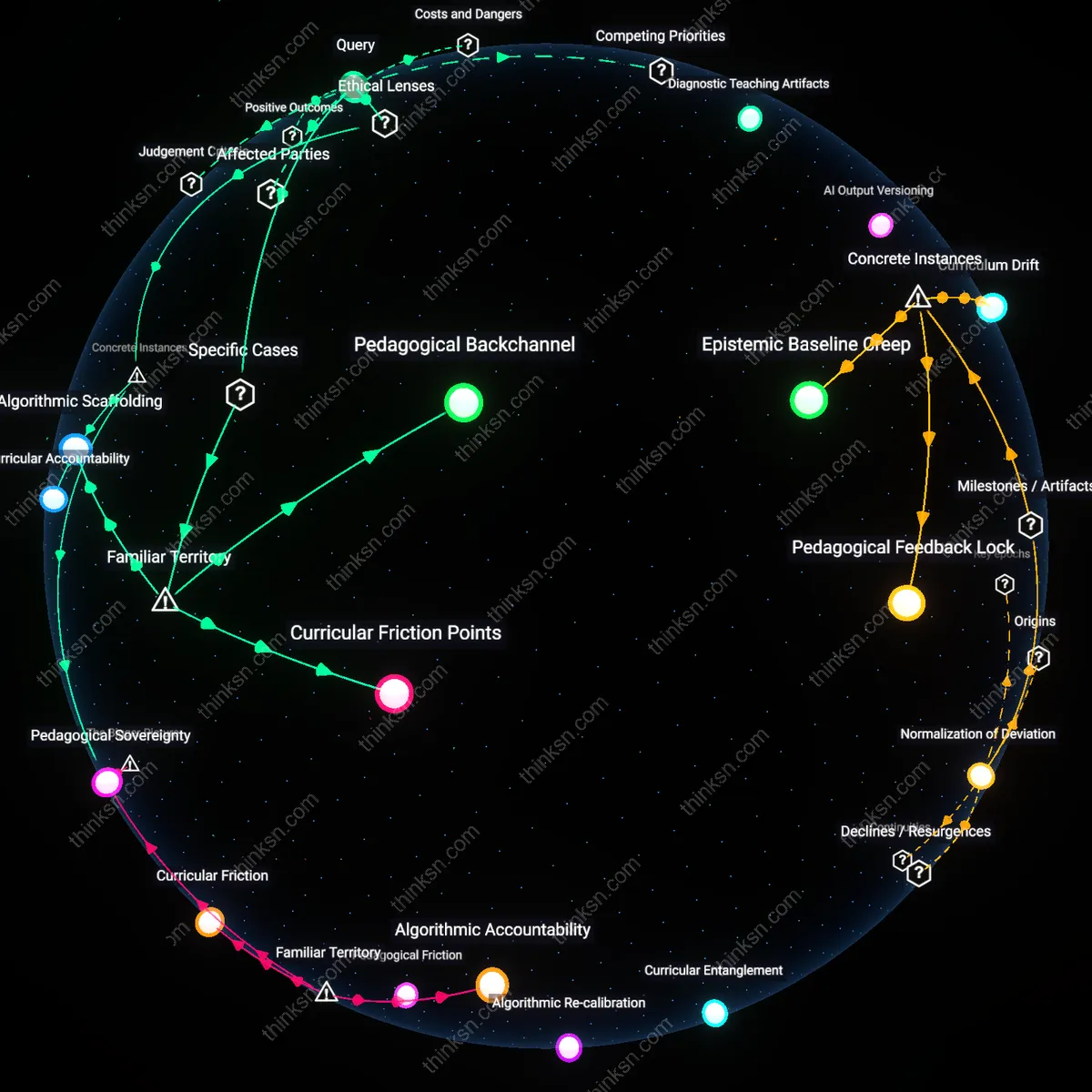

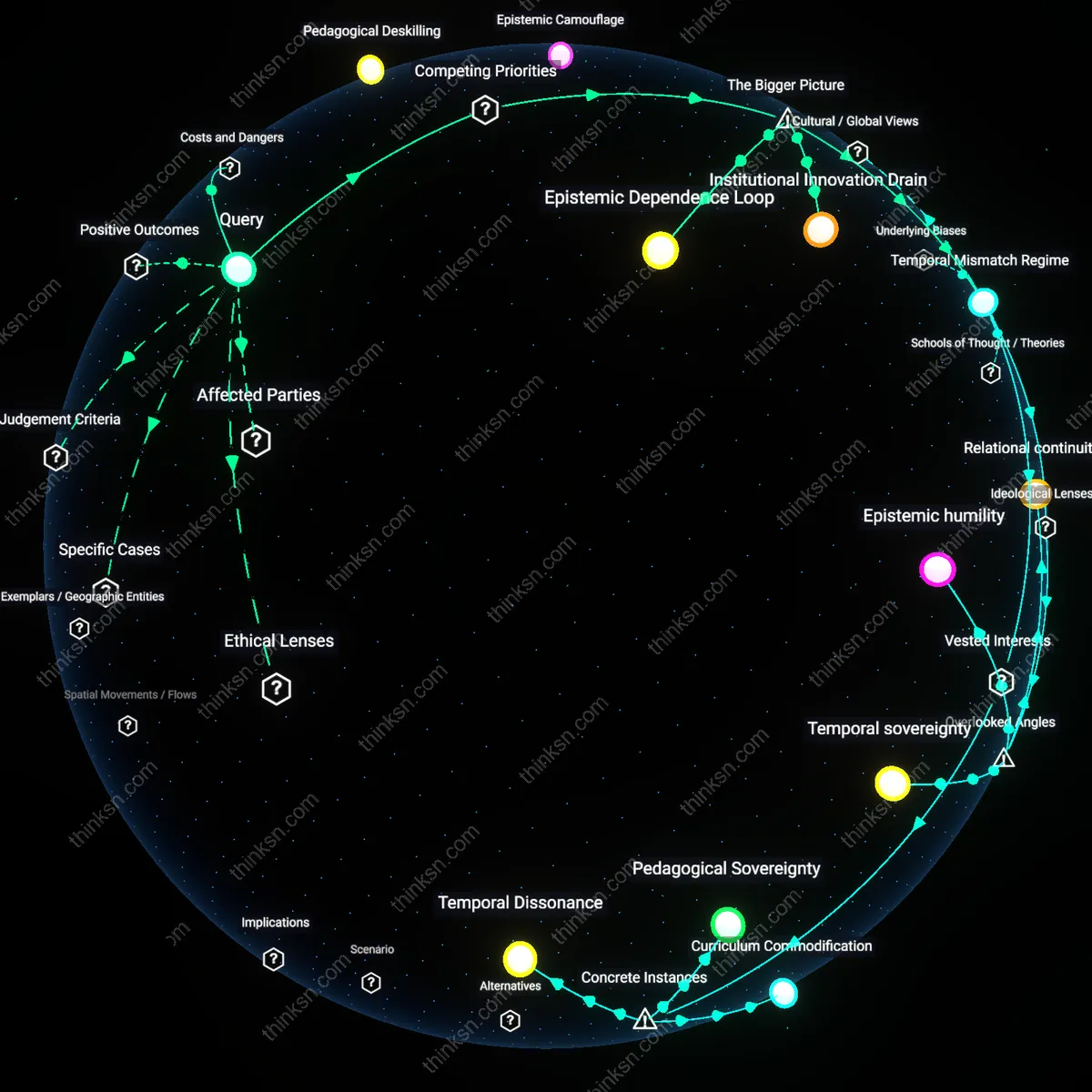

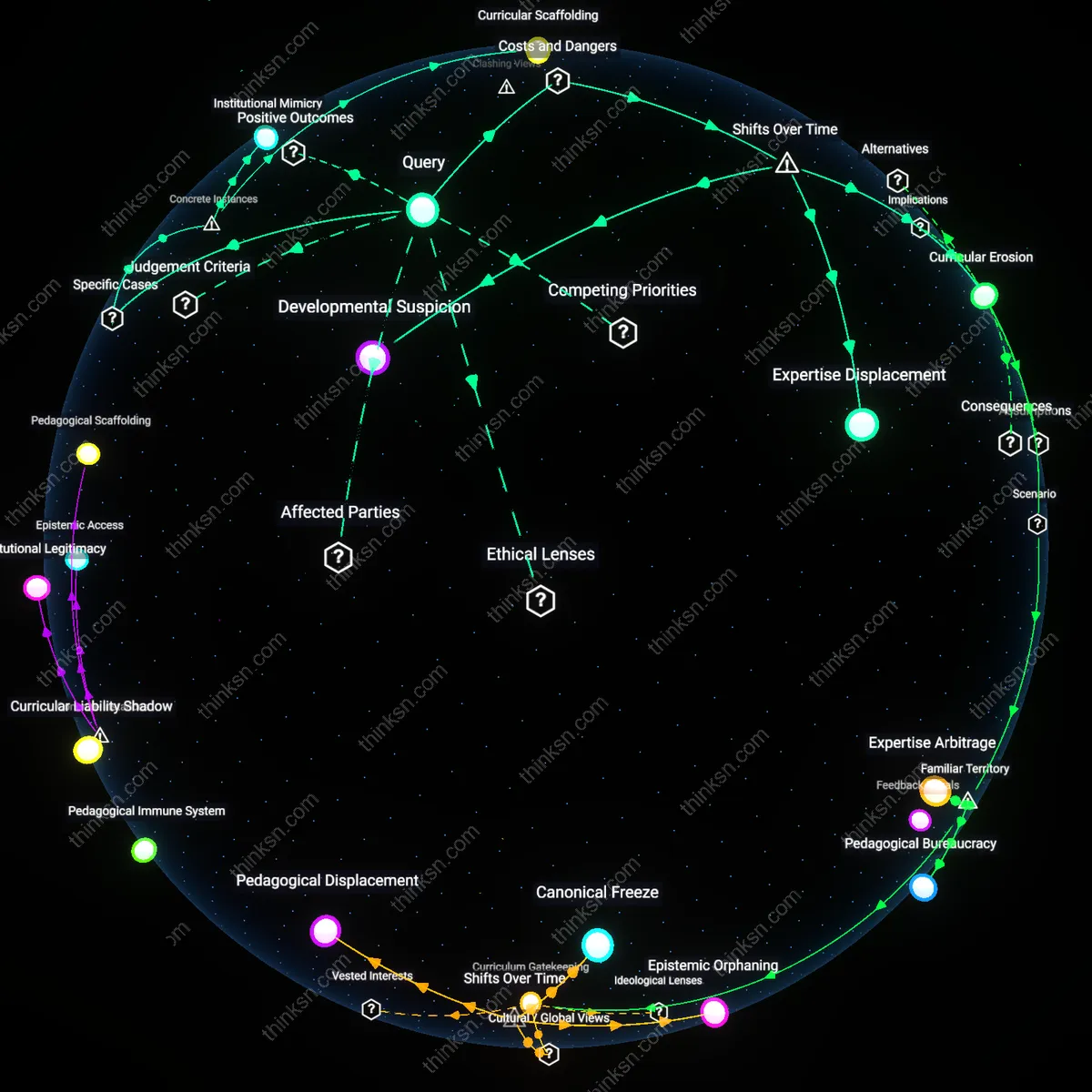

Pedagogical Sovereignty

When the Helsinki Public Library integrated AI-driven curriculum templates into its adult education programs, it preserved educator autonomy by legally mandating teacher-led modification of AI outputs, so that municipal pedagogical standards remained a matter of professional discretion rather than algorithmic prescription. This arrangement, grounded in Finland’s Public Sector Duty to Serve legislation and justified through republican civic virtue theory, institutionalized educators as the final arbiters of instructional design, maintaining their skill fluency while still capturing AI’s efficiency gains. The non-obvious insight is that AI support does not erode professional judgment when legal frameworks treat educator oversight as a constitutional-level safeguard against technocratic depersonalization rather than merely an operational preference.

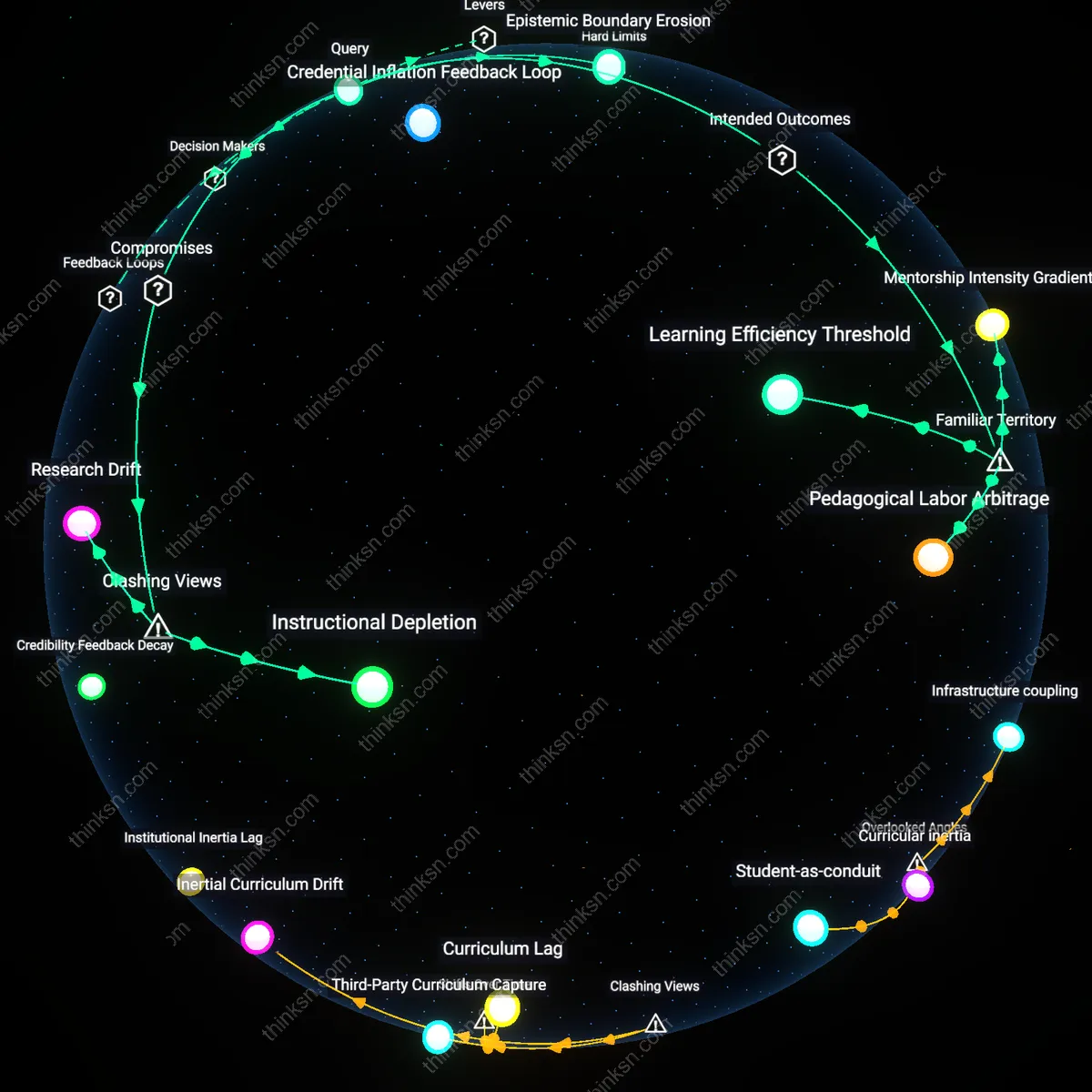

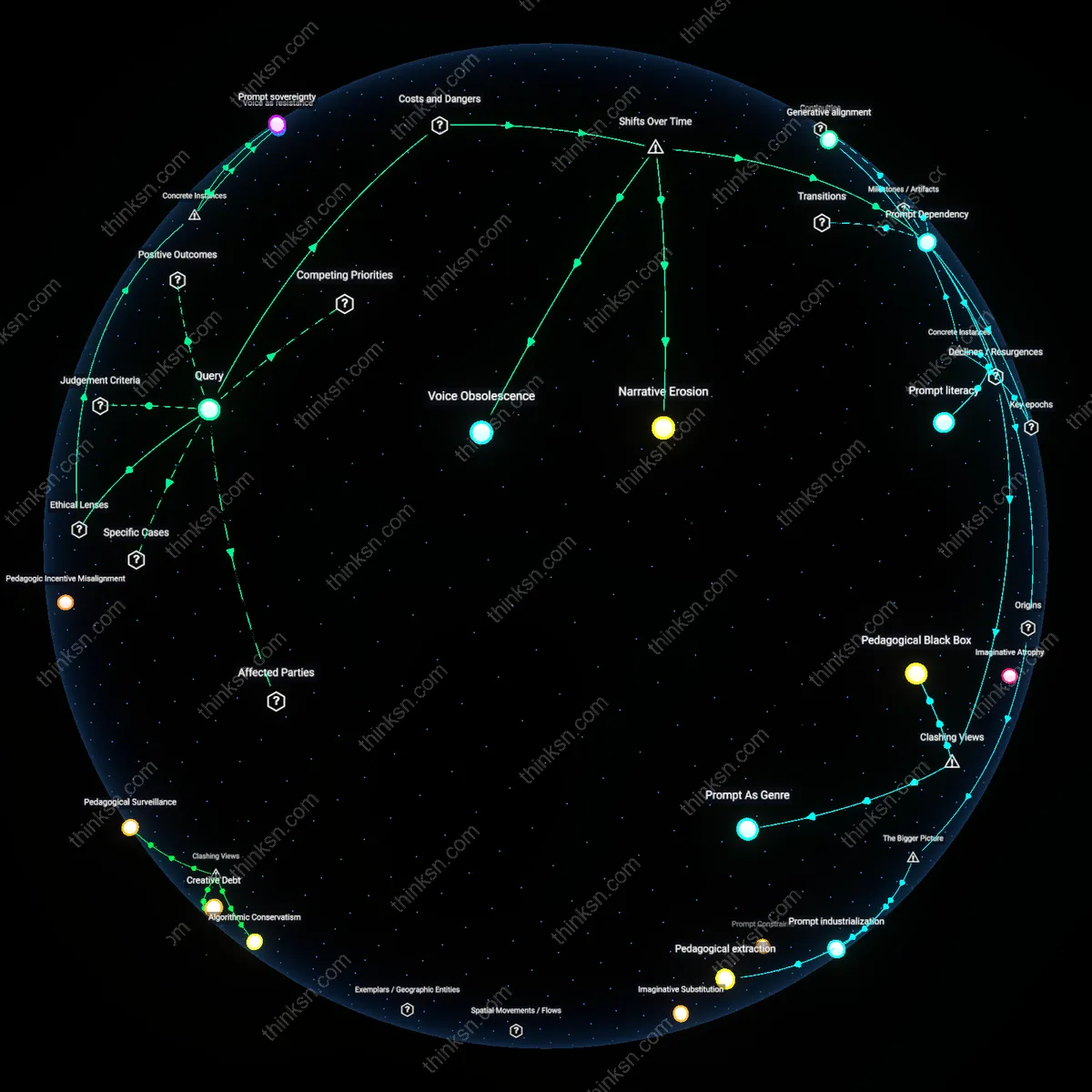

Algorithmic Apprenticeship

At High Tech High in San Diego, a pilot program embedded AI lesson planners in a three-year teacher residency in which novice educators had to reverse-engineer and critique AI-generated units before teaching them, turning algorithmic assistance into a form of dialectical training. Rooted in situated learning theory and aligned with Deweyan progressivism, the program treated AI output not as a replacement for teacher planning but as a 'straw man' curriculum that forced skill development through structured critique. The underappreciated outcome was that early-career teachers developed sharper diagnostic skills for personalizing instruction precisely because they had to confront and correct algorithmic oversimplifications, revealing AI’s latent function as a pedagogical sparring partner.

Curricular Accountability

In 2022, the Gauteng Department of Education in South Africa faced public backlash when AI-generated lesson plans for rural schools omitted culturally specific references. The Constitutional Court reaffirmed that personalized student interaction is a constitutional imperative under Section 28, which led to a policy that subjects AI outputs to a veto by community educators. Grounded in Ubuntu ethics and transformative constitutionalism, this case showed that algorithmic tools cannot fulfil the relational duty of teaching without local moral authorship, and that skill retention was enforced through political accountability rather than technical design. The overlooked insight is that ethical AI use in education emerges not from optimization but from legally codified responsibility gaps that place teachers in a fiduciary role between automation and student dignity.

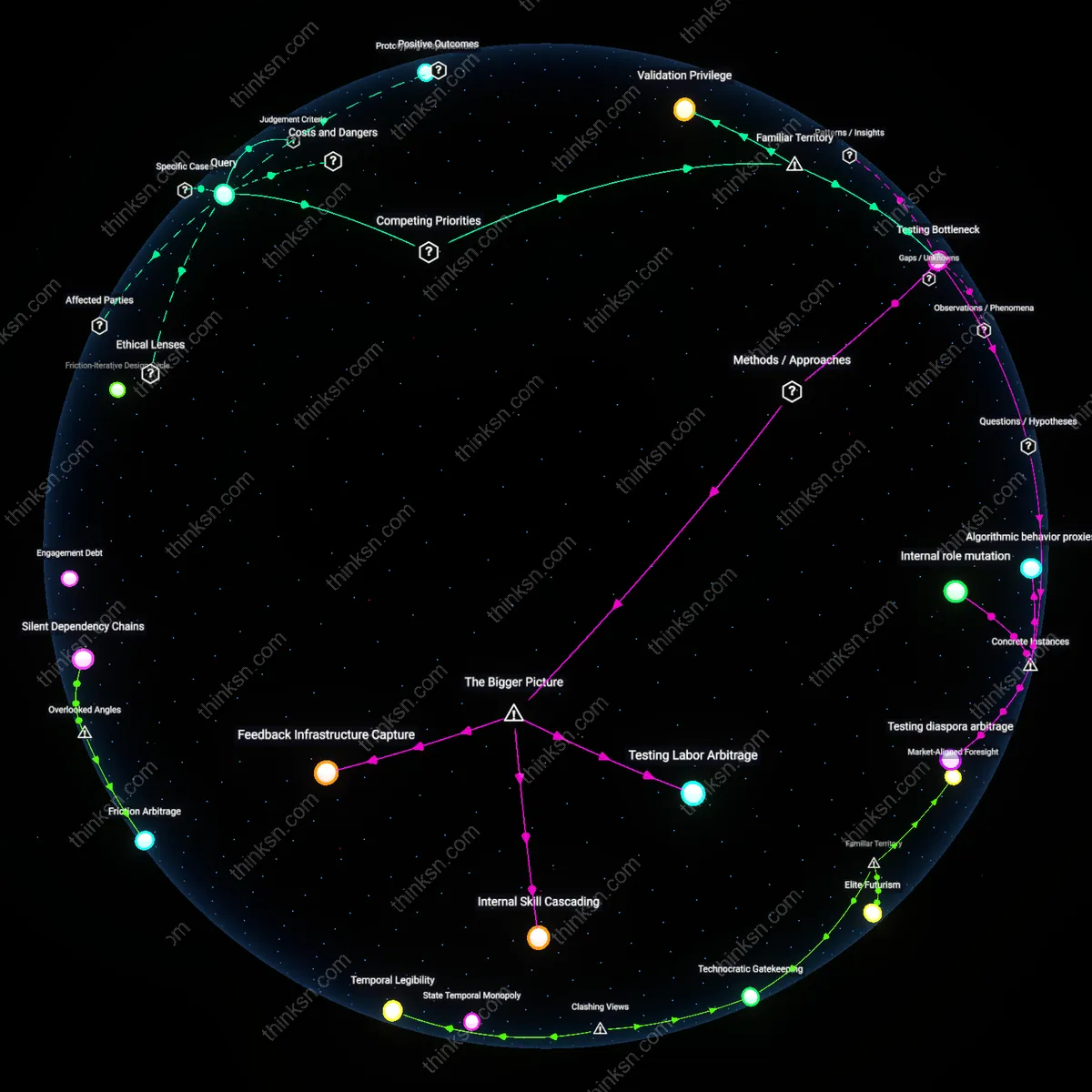

Algorithmic Scaffolding

Integrate AI lesson planners directly into teacher workflow tools such as Google Classroom or Canvas, so that educators work with AI-generated content in the same environment where they make instructional decisions. This lets teachers accept, modify, or reject AI suggestions while preserving their pedagogical agency, as in pilot programs such as the one in the Oakland Unified School District, where AI templates for differentiated math lessons are embedded in existing planning dashboards. The non-obvious insight is that keeping educators cognitively engaged with lesson design does not require avoiding AI; it requires designing AI output as a modifiable draft rather than a finished product, which turns AI from a replacement into a collaborative substrate.
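To make the "modifiable draft" idea concrete, here is a minimal sketch, assuming a planner that returns a lesson as separate sections and a plan that cannot be published until the teacher has explicitly accepted, modified, or rejected each one. It is not the API of Google Classroom, Canvas, or any Oakland Unified tool; `Suggestion`, `DraftLessonPlan`, `Decision`, and `finalize` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Decision(Enum):
    PENDING = "pending"
    ACCEPTED = "accepted"
    MODIFIED = "modified"
    REJECTED = "rejected"


@dataclass
class Suggestion:
    """One AI-generated section of a lesson plan, held as an editable draft (hypothetical model)."""
    section: str
    ai_text: str
    decision: Decision = Decision.PENDING
    final_text: Optional[str] = None

    def accept(self) -> None:
        # Teacher keeps the AI text unchanged, but the choice is still explicit.
        self.decision = Decision.ACCEPTED
        self.final_text = self.ai_text

    def modify(self, teacher_text: str) -> None:
        # Teacher rewrites the section; ai_text is kept for later comparison.
        self.decision = Decision.MODIFIED
        self.final_text = teacher_text

    def reject(self) -> None:
        # Teacher drops the section entirely.
        self.decision = Decision.REJECTED
        self.final_text = None


@dataclass
class DraftLessonPlan:
    suggestions: list[Suggestion] = field(default_factory=list)

    def pending_sections(self) -> list[str]:
        return [s.section for s in self.suggestions if s.decision is Decision.PENDING]

    def finalize(self) -> dict[str, str]:
        """Return the publishable plan; refuse while any section lacks a teacher decision."""
        pending = self.pending_sections()
        if pending:
            raise ValueError(f"Teacher decision required for: {', '.join(pending)}")
        return {
            s.section: s.final_text
            for s in self.suggestions
            if s.decision is not Decision.REJECTED and s.final_text is not None
        }


# Usage: the teacher makes one decision of each kind before publishing.
plan = DraftLessonPlan([
    Suggestion("warm-up", "Review fractions on a number line."),
    Suggestion("practice", "Worksheet of 20 fraction problems."),
    Suggestion("extension", "Fraction word problems about recipes."),
])
plan.suggestions[0].accept()
plan.suggestions[1].modify("Tiered worksheet: three levels, eight problems each.")
plan.suggestions[2].reject()
print(plan.finalize())
# {'warm-up': 'Review fractions on a number line.', 'practice': 'Tiered worksheet: three levels, eight problems each.'}
```

The design choice is that "accept" is an action rather than a default: nothing the model produced reaches students until a teacher has touched it, which is the property the paragraph above attributes to workflow-embedded planners.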

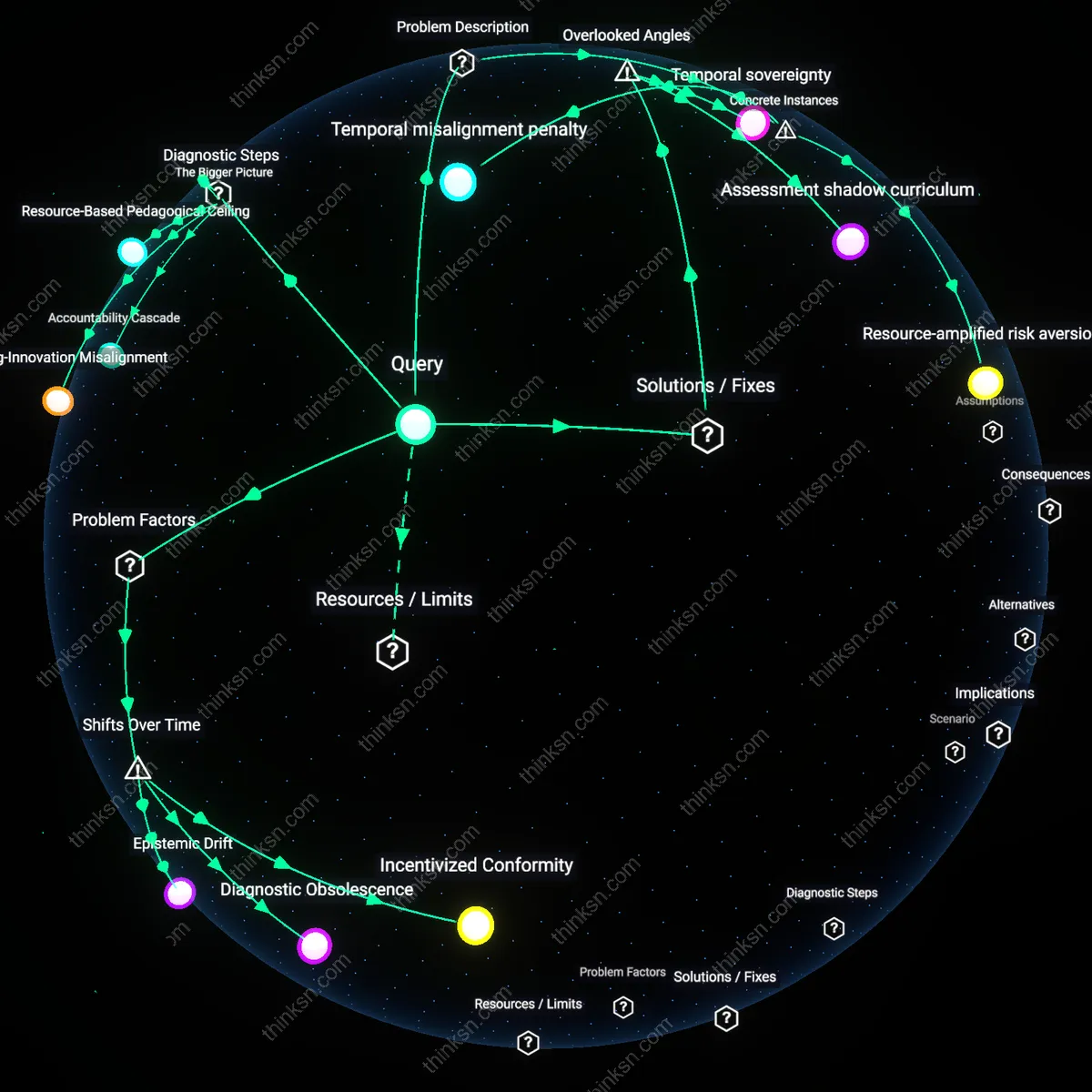

Pedagogical Backchannel

Use AI to log and visualize the instructional decisions teachers make when they override automated lesson suggestions, as implemented in the New York City Department of Education’s AI coaching initiative with the Relay Graduate School of Education. By capturing why a special education teacher in Queens modifies an AI-generated reading plan for a dyslexic student, the system creates a feedback loop that strengthens professional judgment while supplying data for refining the AI. The overlooked dynamic is that routine interaction with AI can amplify educator expertise when every correction becomes a deliberate, documented teaching decision: not friction to be minimized but a signal to be leveraged.
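The feedback loop described above can be sketched as an override log that refuses undocumented corrections and aggregates them two ways: per teacher (for coaching and reflection) and per plan field (as input for refining the planner). The source does not describe how the New York City system stores overrides; the schema and names below (`OverrideRecord`, `OverrideLog`, `reason_code`, `model_feedback`) are hypothetical, and the example entries are invented for illustration.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class OverrideRecord:
    """One teacher override of an AI lesson suggestion (hypothetical schema)."""
    teacher_id: str
    field_name: str      # which part of the AI plan was changed
    ai_value: str
    teacher_value: str
    reason_code: str     # short category used for aggregation
    rationale: str       # the teacher's own explanation
    logged_at: datetime


class OverrideLog:
    def __init__(self) -> None:
        self._records: list[OverrideRecord] = []

    def record(self, teacher_id: str, field_name: str, ai_value: str,
               teacher_value: str, reason_code: str, rationale: str) -> OverrideRecord:
        # Require a rationale so each correction is a documented decision, not silent friction.
        if not rationale.strip():
            raise ValueError("An override must include the teacher's rationale.")
        rec = OverrideRecord(teacher_id, field_name, ai_value, teacher_value,
                             reason_code, rationale, datetime.now(timezone.utc))
        self._records.append(rec)
        return rec

    def teacher_summary(self, teacher_id: str) -> Counter:
        """Reason codes for one teacher: input for a coaching or reflection view."""
        return Counter(r.reason_code for r in self._records if r.teacher_id == teacher_id)

    def model_feedback(self) -> dict[str, Counter]:
        """Reason codes per plan field across all teachers: input for refining the planner."""
        by_field: dict[str, Counter] = defaultdict(Counter)
        for r in self._records:
            by_field[r.field_name][r.reason_code] += 1
        return dict(by_field)


# Usage with invented example entries.
log = OverrideLog()
log.record("t-queens-01", "reading_passage", "Grade-level passage, 900 words",
           "Decodable passage, 300 words, larger font", "accessibility",
           "Student has dyslexia; long dense text blocks comprehension practice.")
log.record("t-queens-01", "assessment", "Timed written quiz",
           "Oral retell with a checklist", "accessibility",
           "Timed writing measures decoding speed, not comprehension.")
print(log.teacher_summary("t-queens-01"))  # Counter({'accessibility': 2})
```

A dashboard could chart either summary; the point of the sketch is that the same record serves both the teacher's professional reflection and the model's refinement.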

Curricular Friction Points

Design AI lesson planners to introduce controlled gaps in personalization, such as leaving student interest surveys incomplete or omitting scaffolding for certain learning styles, that teachers must fill themselves, as tested on the Summit Learning Program’s platform in high schools across Washington state. This forces teachers to re-engage with individual student needs and ensures that personalization is never fully automated, reserving specific decision rights for educators. The counterintuitive mechanism is that intentional imperfection in AI outputs can promote deeper teacher-student interaction by preserving moments of non-delegable judgment in the planning process.
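One way to implement controlled gaps is to wrap the model's output so that a fixed set of sections is always blanked, and to block publishing until a teacher fills them. This is a minimal sketch under that assumption; it does not reproduce the Summit Learning platform, and `TEACHER_RESERVED`, `LessonDraft`, `make_draft`, and `publish` are hypothetical names, with invented example content.

```python
from dataclasses import dataclass, field

# Hypothetical configuration: sections the planner must never auto-complete.
TEACHER_RESERVED = ("student_interests", "scaffolding_notes")


@dataclass
class LessonDraft:
    student: str
    sections: dict[str, str] = field(default_factory=dict)

    def open_gaps(self) -> list[str]:
        """Reserved sections the teacher has not yet filled in."""
        return [name for name in TEACHER_RESERVED if not self.sections.get(name, "").strip()]

    def fill(self, name: str, text: str) -> None:
        # Only reserved sections are filled this way; others are edited as ordinary drafts.
        if name not in TEACHER_RESERVED:
            raise KeyError(f"{name!r} is not a teacher-reserved section")
        self.sections[name] = text

    def publish(self) -> dict[str, str]:
        """Release the plan only after every intentional gap is filled."""
        gaps = self.open_gaps()
        if gaps:
            raise RuntimeError(f"Teacher input required before publishing: {', '.join(gaps)}")
        return dict(self.sections)


def make_draft(student: str, ai_output: dict[str, str]) -> LessonDraft:
    """Wrap model output, deliberately blanking the teacher-reserved sections."""
    sections = dict(ai_output)
    for name in TEACHER_RESERVED:
        sections[name] = ""  # intentional gap, even if the model produced text here
    return LessonDraft(student, sections)


# Usage: the model's guess about interests is discarded, and the teacher supplies both gaps.
draft = make_draft("student-a", {
    "objective": "Compare two informational texts on the same topic.",
    "student_interests": "Probably likes sports.",
})
print(draft.open_gaps())  # ['student_interests', 'scaffolding_notes']
draft.fill("student_interests", "Interested in marine biology; choose ocean-themed texts.")
draft.fill("scaffolding_notes", "Provide a Venn diagram template and sentence starters.")
final_plan = draft.publish()
```

Discarding the model's text for reserved sections, rather than merely flagging it, is what makes the gap a real decision point: the teacher cannot simply approve a guess.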