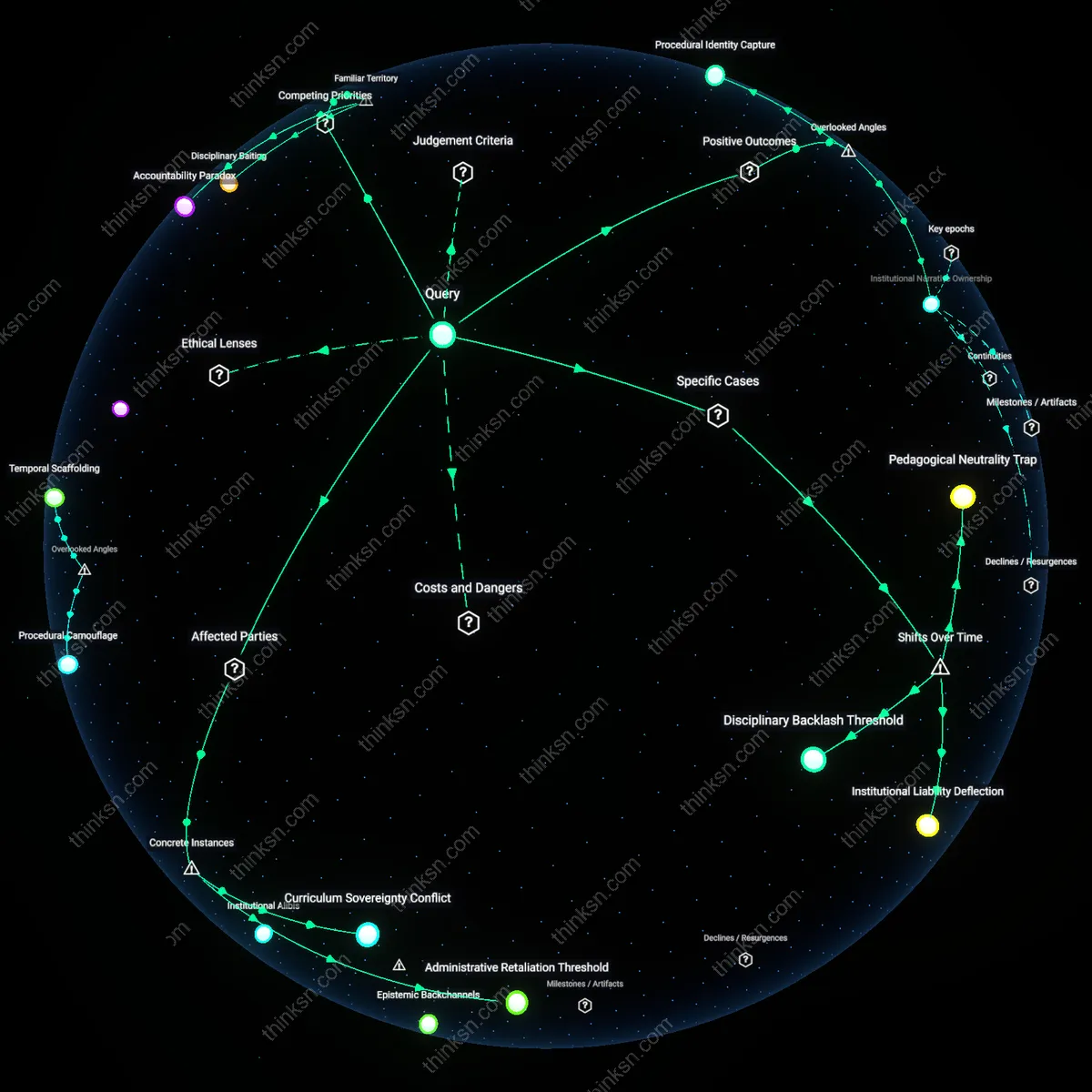

Do Standardized Tests Stifle Innovative Teaching in Underfunded Schools?

Analysis reveals 11 key thematic connections.

Key Findings

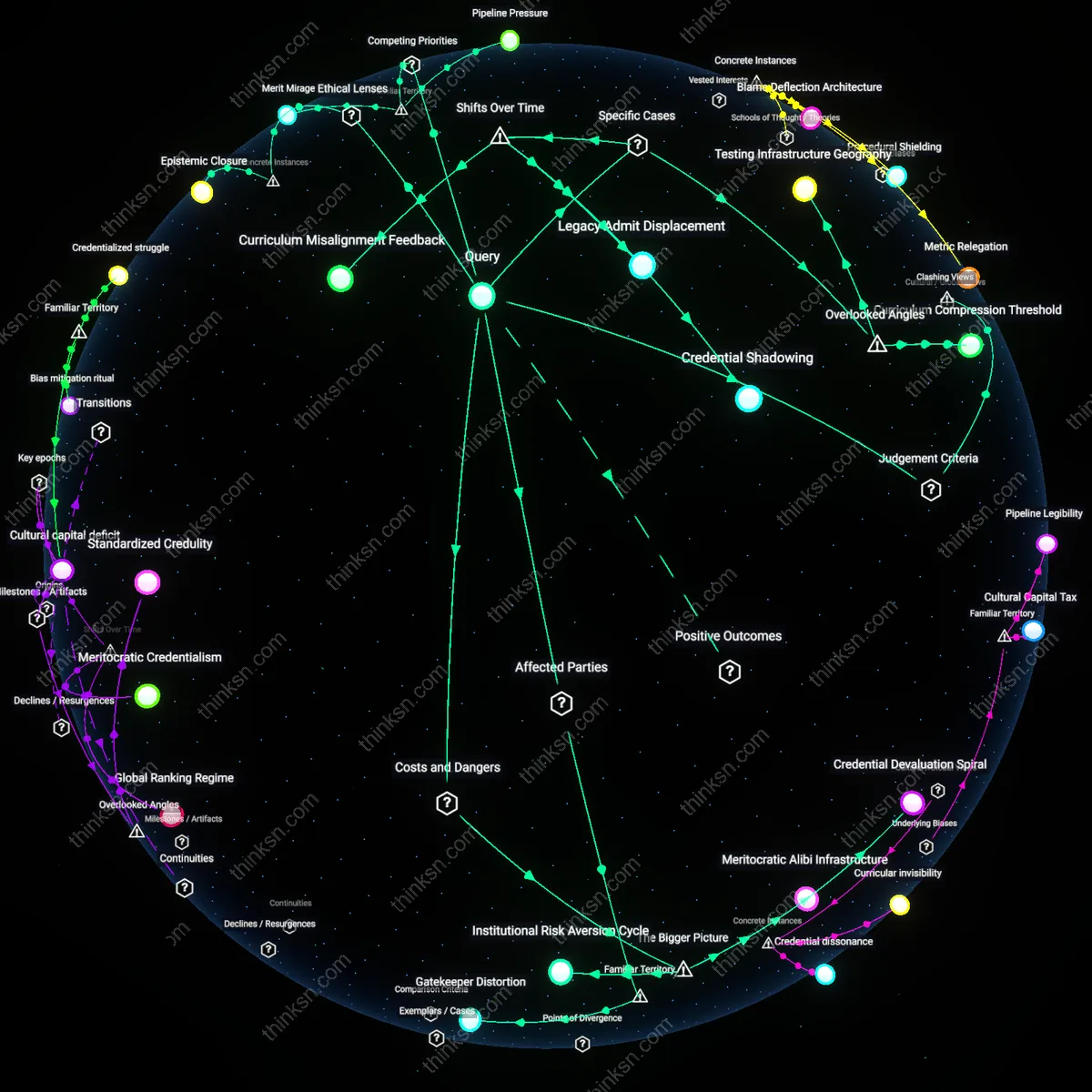

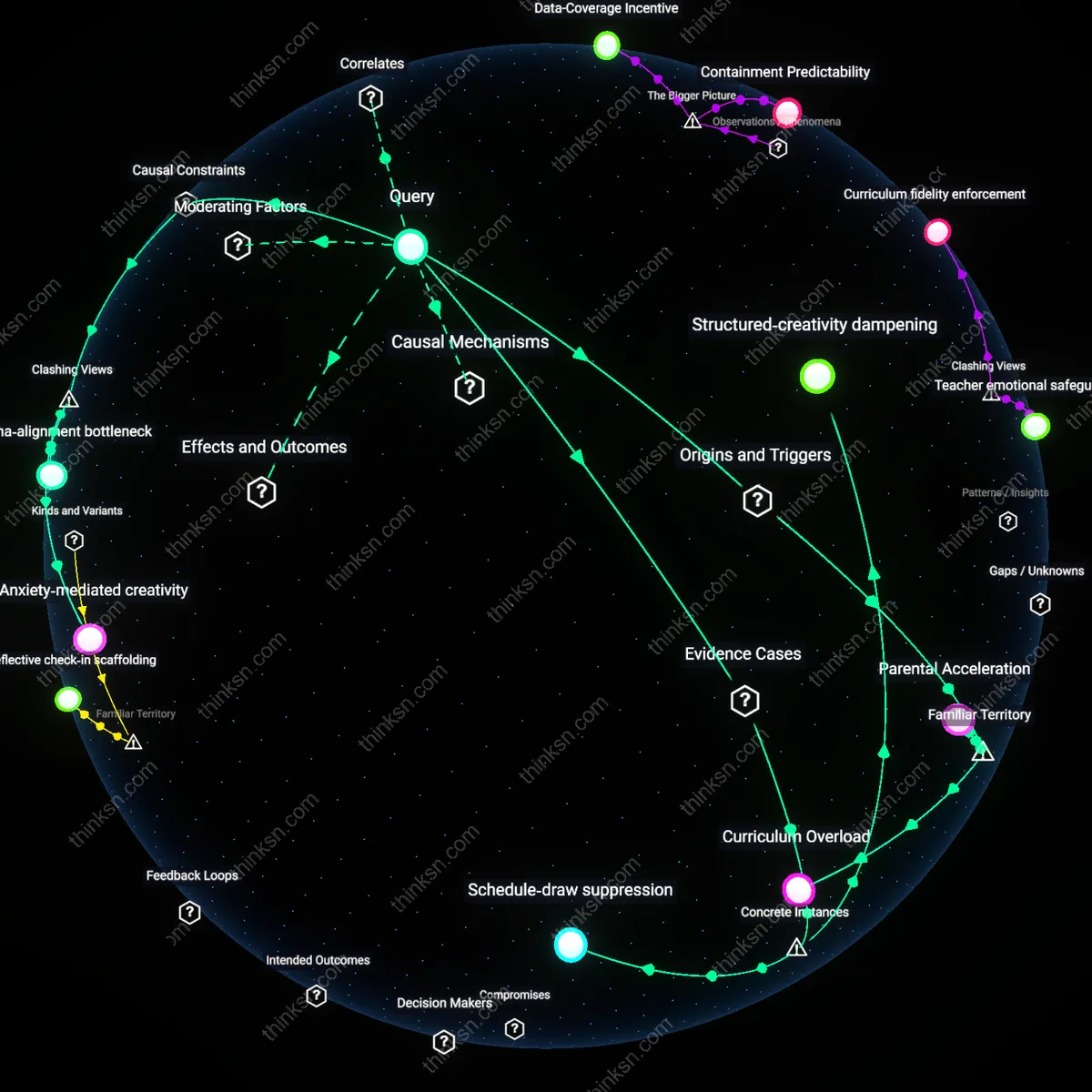

Incentivized curriculum narrowing

In Atlanta Public Schools during the 2009 cheating scandal, teachers and administrators systematically altered student test answers. This was not solely a matter of individual misconduct: the evaluation system tied teacher advancement and school funding directly to standardized test scores, creating a structural incentive to prioritize test prep over exploratory or project-based learning. That incentive was particularly acute in under-resourced schools, where supplemental support was absent. The case reveals how high-stakes accountability regimes compress pedagogical scope into repetitive drilling, actively suppressing innovative methods that don’t yield immediate score gains.

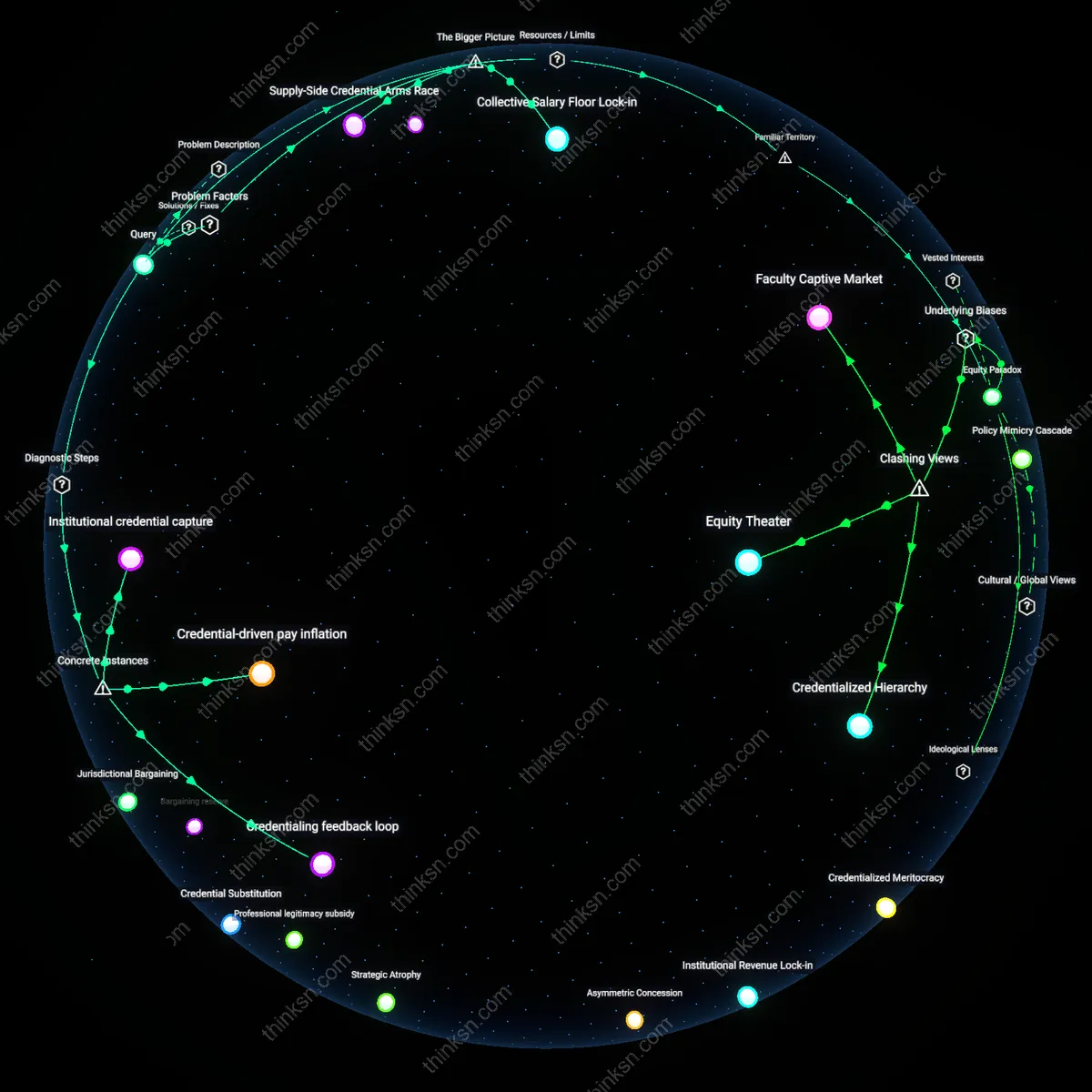

Resource-amplified risk aversion

In Detroit Public Schools between 2015 and 2017, teachers in underfunded schools reported abandoning interdisciplinary units and experiential learning after state-imposed evaluations linked 50% of their effectiveness ratings to student growth percentiles on standardized tests. Without teaching assistants, updated materials, or small-group supports, experimental methods became too risky given the potential evaluation penalties. The same policy thus yields very different degrees of pedagogical freedom depending on resource availability, turning innovation into a privilege of well-equipped schools.
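To make the stakes concrete, here is a minimal sketch of how a 50% growth weight can move a composite rating. Only the 50% weight comes from the account above. The 0-100 scale, the observation score, the growth percentiles, and the function name `composite_rating` are hypothetical illustrations, not the state's actual formula.

```python
def composite_rating(observation_score: float, growth_percentile: float,
                     growth_weight: float = 0.5) -> float:
    """Blend an observation score and a growth percentile (both assumed 0-100)."""
    if not 0.0 <= growth_weight <= 1.0:
        raise ValueError("growth_weight must be between 0 and 1")
    return (1.0 - growth_weight) * observation_score + growth_weight * growth_percentile


# Hypothetical teacher with a strong classroom observation score.
observation = 80.0

# Hypothetical growth percentiles: a stable drill-based year versus a
# first year of trying an experimental unit, when scores may dip.
drill_year = composite_rating(observation, growth_percentile=50.0)
trial_year = composite_rating(observation, growth_percentile=30.0)

print(drill_year)               # 65.0
print(trial_year)               # 55.0
print(drill_year - trial_year)  # 10.0
```

Under these assumed numbers, a 20-point dip in growth percentile costs 10 rating points regardless of observed classroom quality, which is the kind of exposure that can make an untested unit look too risky without supports to cushion the dip.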

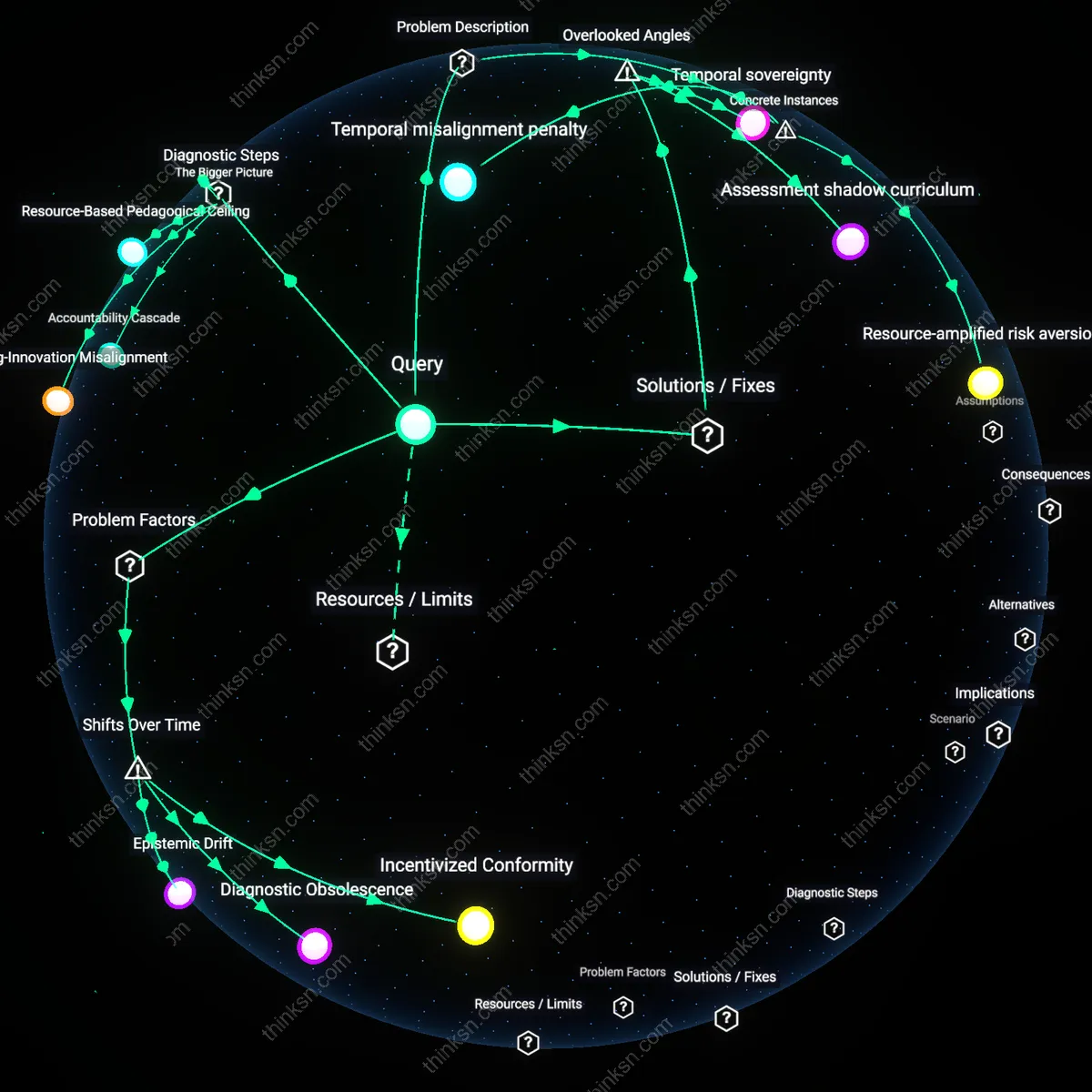

Temporal misalignment penalty

In New Orleans’ Recovery School District after Hurricane Katrina, charter school teachers who attempted inquiry-based science instruction in low-income middle schools saw their value-added scores depressed in the initial years. Conceptual understanding developed through open-ended labs took longer to translate into test performance than rote memorization did. School leaders responded by demanding a shift toward drill-based models, despite observed student engagement and cognitive growth. The case exposes how standardized testing regimes systematically penalize teaching methods with a delayed academic payoff, distorting innovation toward short-term measurable outcomes.

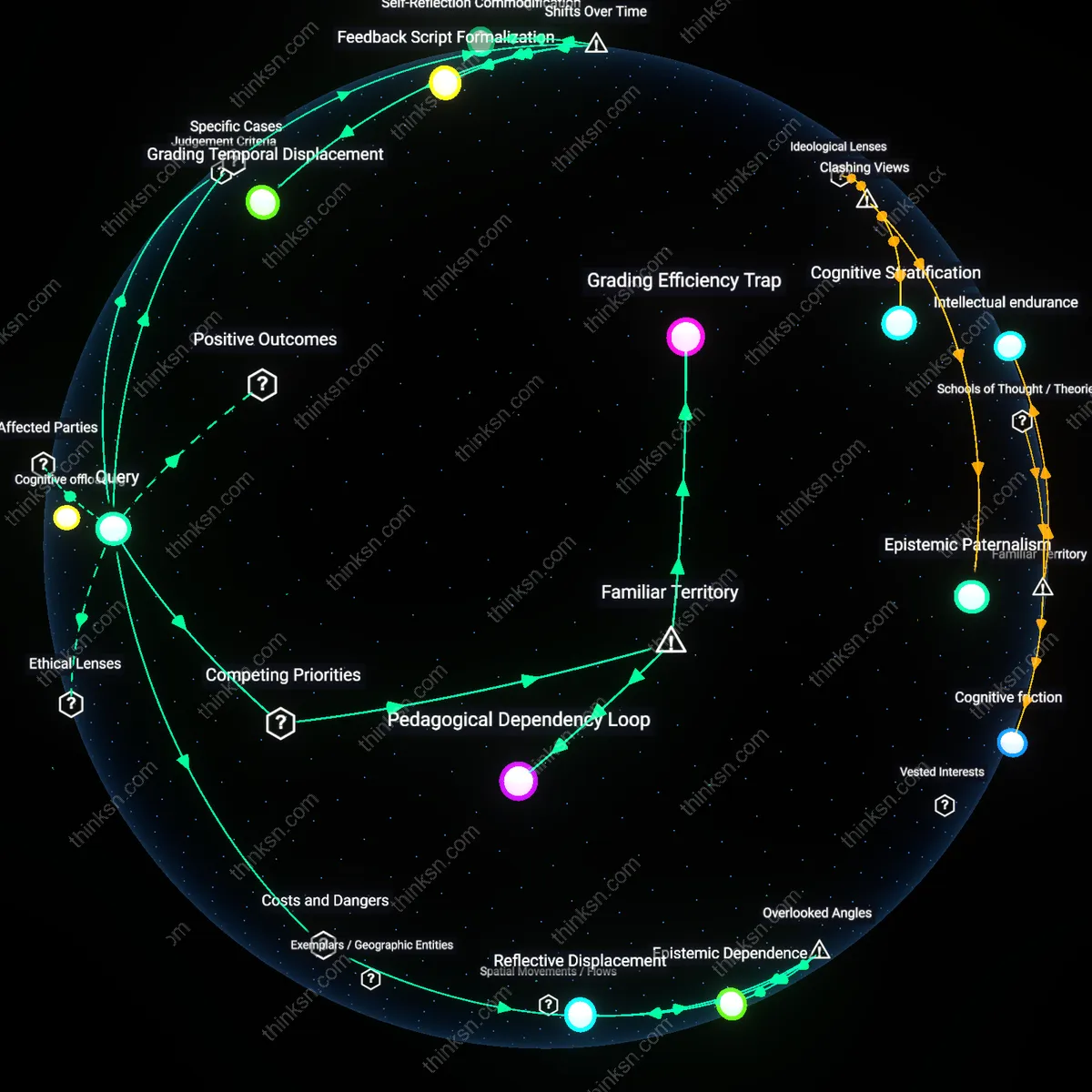

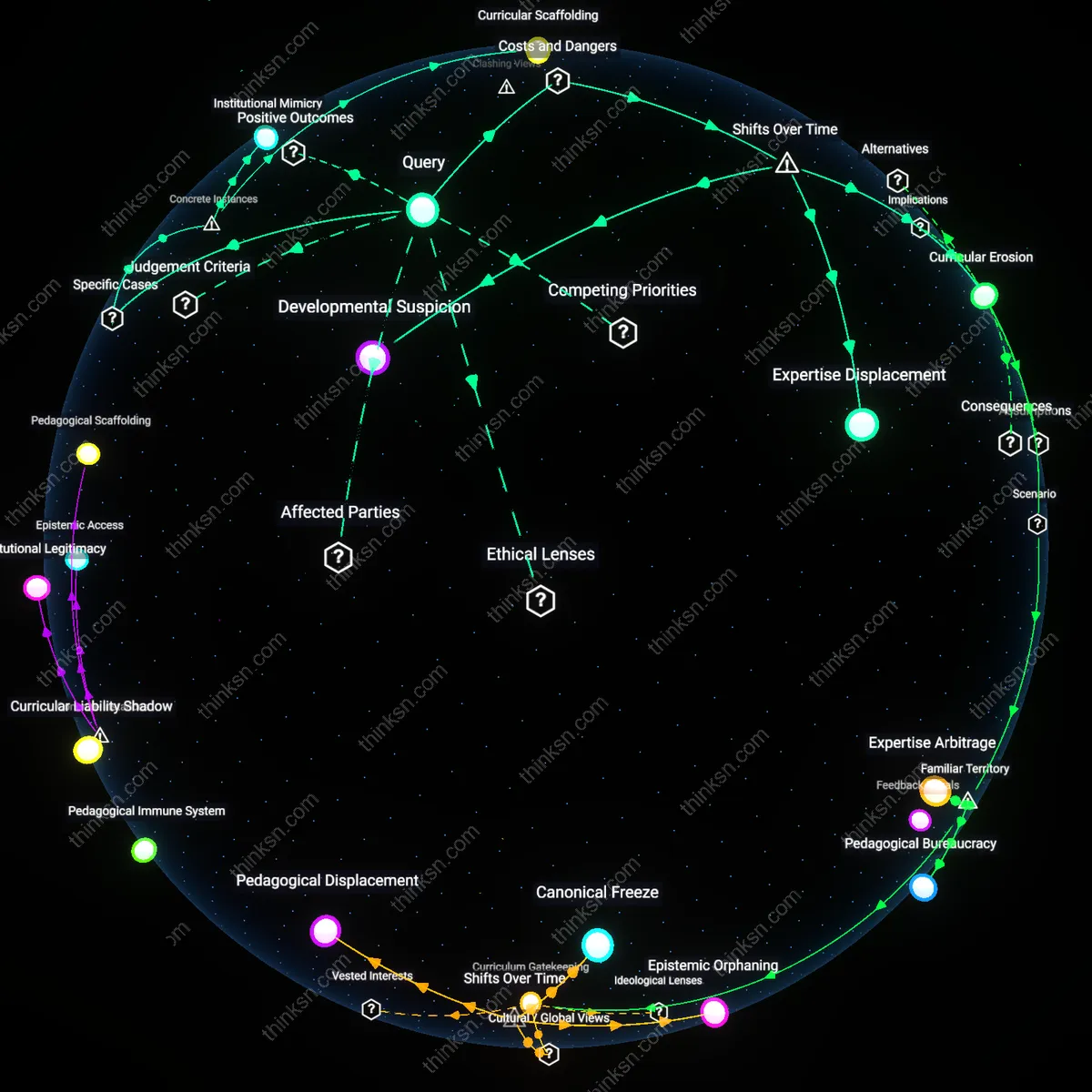

Incentivized Conformity

Standardized test-based teacher evaluations, entrenched after No Child Left Behind (2001), made measurable, repeatable instruction more valuable than exploratory or student-led pedagogy, particularly in under-resourced schools reliant on federal funding. This shift tied school survival directly to test outcomes, leading administrators and teachers to prioritize curriculum alignment and test prep over methods that risked poor scores despite their potential long-term learning gains. The mechanism, funding-contingent performance metrics, transformed instructional innovation from an educational goal into an institutional risk, especially where resources couldn’t buffer against failure. What’s underappreciated is that this didn’t eliminate innovation but displaced it into covert, unmeasured spaces, producing a system in which effective but non-compliant teaching must be hidden.

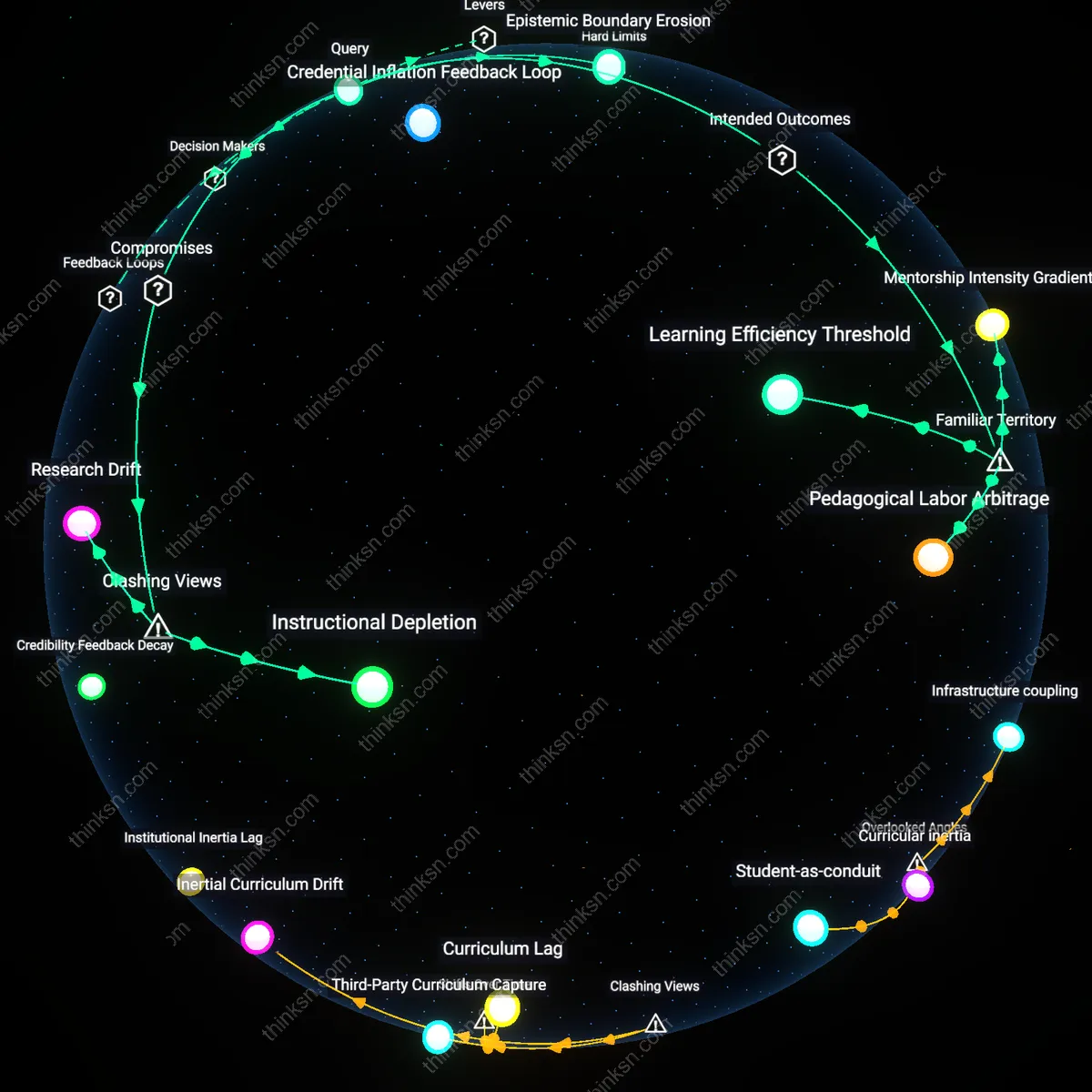

Diagnostic Obsolescence

As high-stakes testing regimes matured in the 2010s, the tests used to evaluate teachers became increasingly static while classroom needs and societal demands shifted toward critical thinking and digital literacy, capabilities poorly captured by the multiple-choice formats dominant since the 1980s. In under-resourced schools, where external validation is most sought, the persistence of outdated assessment tools opened a growing rift between evaluated performance and actual pedagogical relevance. The technical stagnation of the tests, locked into scalable, low-cost models, thus retroactively invalidated the innovation they claimed to incentivize. The overlooked consequence is that the metric didn’t just misalign with innovation; it fossilized a version of teaching suited to a pre-digital, industrial-era classroom.

Epistemic Drift

Following the expansion of value-added modeling (VAM) in teacher evaluation during the 2010s, statistical proxies for teacher effectiveness began displacing local knowledge of pedagogy, especially in districts with unstable leadership and high teacher turnover. In under-resourced schools, where teaching methods are often adapted collectively to community needs, the imposition of standardized metrics detached evaluation from contextual practice, rendering culturally responsive or experimental methods statistically 'noisy' and therefore discouraged. This shift, from judgment rooted in community and professional observation to abstracted data, transformed innovation from a shared pedagogical project into a career liability. The critical but unseen outcome is a gradual hollowing out of professional judgment, in which teachers internalize data norms not as tools but as constraints.

Testing-Innovation Misalignment

Standardized test metrics suppress teaching innovation when curriculum designers in high-poverty schools prioritize test-prep alignment over pedagogical experimentation. State-mandated accountability systems pressure district administrators and principals to reduce instructional variance, leading them to discourage methods not proven to raise scores, such as project-based learning, even when those methods improve engagement or critical thinking. This dynamic is most acute in under-resourced schools, where external funding and political legitimacy depend on measurable performance gains. The non-obvious consequence is not just a constraint on teachers but the systemic erasure of context-sensitive pedagogy that could address deeper inequities in learning conditions.

Accountability Cascade

Teacher evaluation tied to test scores activates a top-down compliance culture that disrupts autonomous professional judgment in urban school networks reliant on public oversight. When school boards and state education agencies use value-added models to rate teachers, principals respond by standardizing lesson plans and pacing guides to minimize risk, particularly in schools facing sanctions for low performance. This institutional risk aversion spreads through middle management, where instructional coaches are repurposed as compliance monitors rather than innovation facilitators. The overlooked mechanism is how accountability, intended to improve equity, becomes a force for instructional homogenization precisely where differentiated teaching is most needed.

Resource-Based Pedagogical Ceiling

In under-resourced schools, standardized testing entrenches a low ceiling on instructional ambition that pre-empts innovation, because teachers lack the bandwidth to design beyond basic skill remediation. Chronic underfunding in districts like Detroit or Cleveland means educators face overcrowded classrooms, absent materials, and trauma-affected students, conditions that make experimental teaching methods logistically unfeasible. When test scores become the primary measure of teacher efficacy, administrators rationally allocate scarce professional development time to drill-based strategies with short-term score gains rather than to exploratory models requiring sustained investment. The unacknowledged outcome is that testing doesn’t merely discourage innovation; it codifies an inequitable ceiling on what pedagogy can exist in underfunded contexts.

Temporal sovereignty

Establish teacher-controlled innovation time banks that let educators in under-resourced schools accrue and trade protected hours for non-tested pedagogical trials, with evaluation systems adjusted to recognize these efforts. Standard reform models assume time is neutral, but in high-poverty schools, leadership often allocates time reactively, toward remediation, discipline, and test prep, eroding what little autonomy teachers have to experiment. The non-obvious issue is that time isn’t just scarce; its distribution is politically governed, often reinforcing compliance over creativity. By formalizing tradable innovation time as a recognized professional currency, this intervention surfaces time not as a logistical variable but as a form of sovereignty: control over scheduling is a prerequisite for pedagogical agency, a dimension typically ignored in test-based accountability structures.
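As a rough illustration of what "accrue and trade" could mean in practice, the sketch below models a time bank as a simple ledger. Everything in it is an assumption for illustration: the class name `InnovationTimeBank`, the method names, the teacher identifiers, and the rule that a teacher cannot trade away more hours than they have banked. The proposal itself does not specify any of these details.

```python
from collections import defaultdict


class InnovationTimeBank:
    """Hypothetical ledger of protected innovation hours per teacher."""

    def __init__(self) -> None:
        self._hours: defaultdict[str, float] = defaultdict(float)

    def accrue(self, teacher: str, hours: float) -> None:
        """Credit protected hours to a teacher's balance."""
        if hours <= 0:
            raise ValueError("hours must be positive")
        self._hours[teacher] += hours

    def trade(self, giver: str, receiver: str, hours: float) -> None:
        """Move banked hours from one teacher to another."""
        if hours <= 0:
            raise ValueError("hours must be positive")
        if self.balance(giver) < hours:
            raise ValueError(f"{giver} does not have {hours} hours banked")
        self._hours[giver] -= hours
        self._hours[receiver] += hours

    def balance(self, teacher: str) -> float:
        """Return banked hours without creating an entry for unknown teachers."""
        return self._hours.get(teacher, 0.0)


bank = InnovationTimeBank()
bank.accrue("teacher_a", 6.0)
bank.accrue("teacher_b", 2.0)
bank.trade("teacher_a", "teacher_b", 3.0)

print(bank.balance("teacher_a"))  # 3.0
print(bank.balance("teacher_b"))  # 5.0
```

The key design choice in this sketch is that balances belong to teachers rather than to administrators, which mirrors the argument that control over scheduling is what the proposal is meant to transfer.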

Assessment shadow curriculum

Mandate that evaluation systems in under-resourced schools include a documented counter-curriculum of non-tested student outputs, such as dialogue logs, project prototypes, or peer feedback records, reviewed by external pedagogical auditors. The hidden dependency here is that standardized testing doesn’t merely measure learning; it produces an assessment shadow curriculum, an invisible layer of instructional behaviors generated in anticipation of testing protocols, which displaces exploratory, discussion-based, or arts-integrated teaching even when such teaching is formally permitted. Most reforms target test weightings or cutoffs but miss how anticipated evaluation practices structurally colonize classroom culture long before test day. Capturing non-tested artifacts restores legitimacy to unmeasured forms of intellectual engagement, exposing the covert curriculum of compliance that standardized evaluation silently institutionalizes.