Do AI Prototyping Tools Enhance or Undermine User Testing Expertise?

This analysis identifies four key thematic connections.

Key Findings

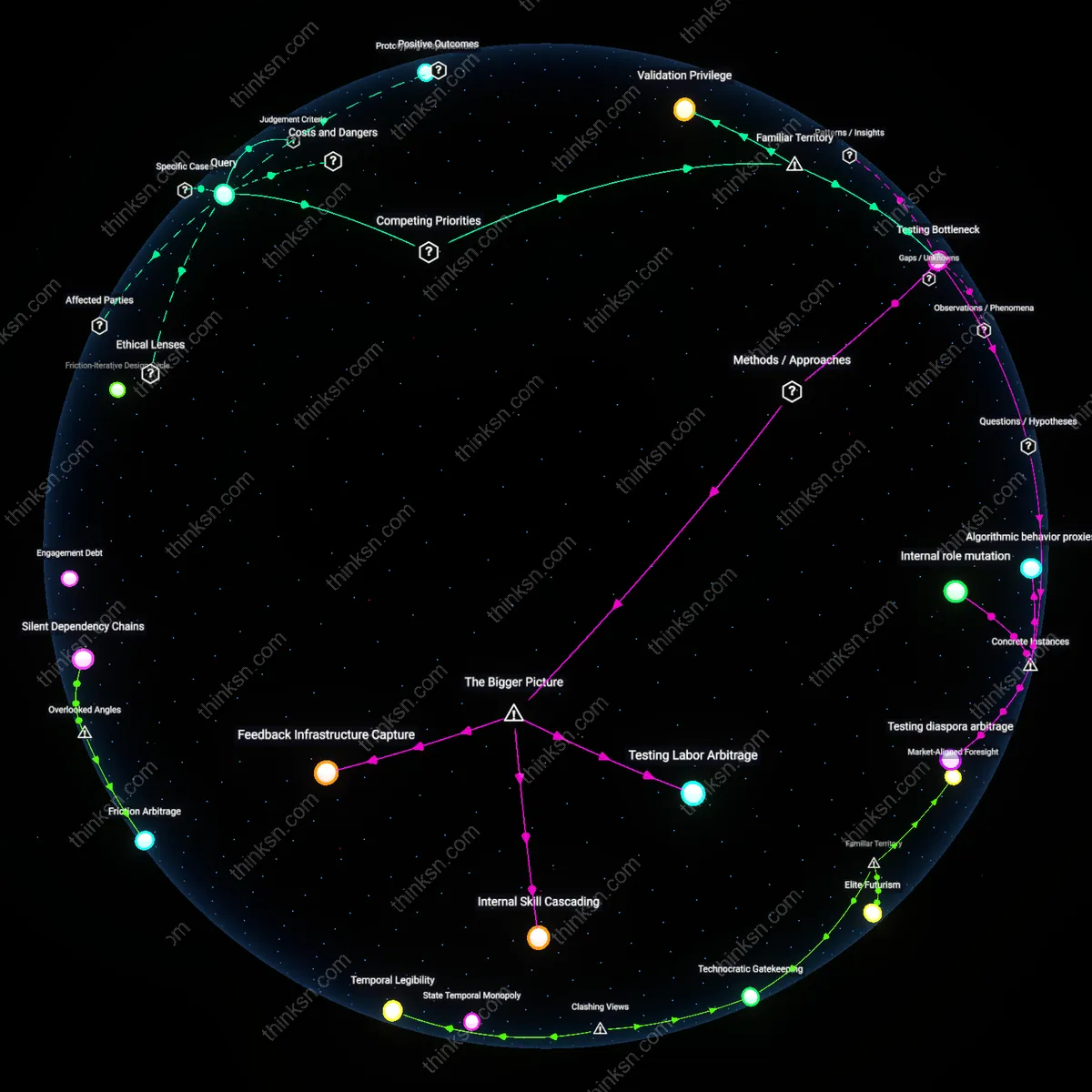

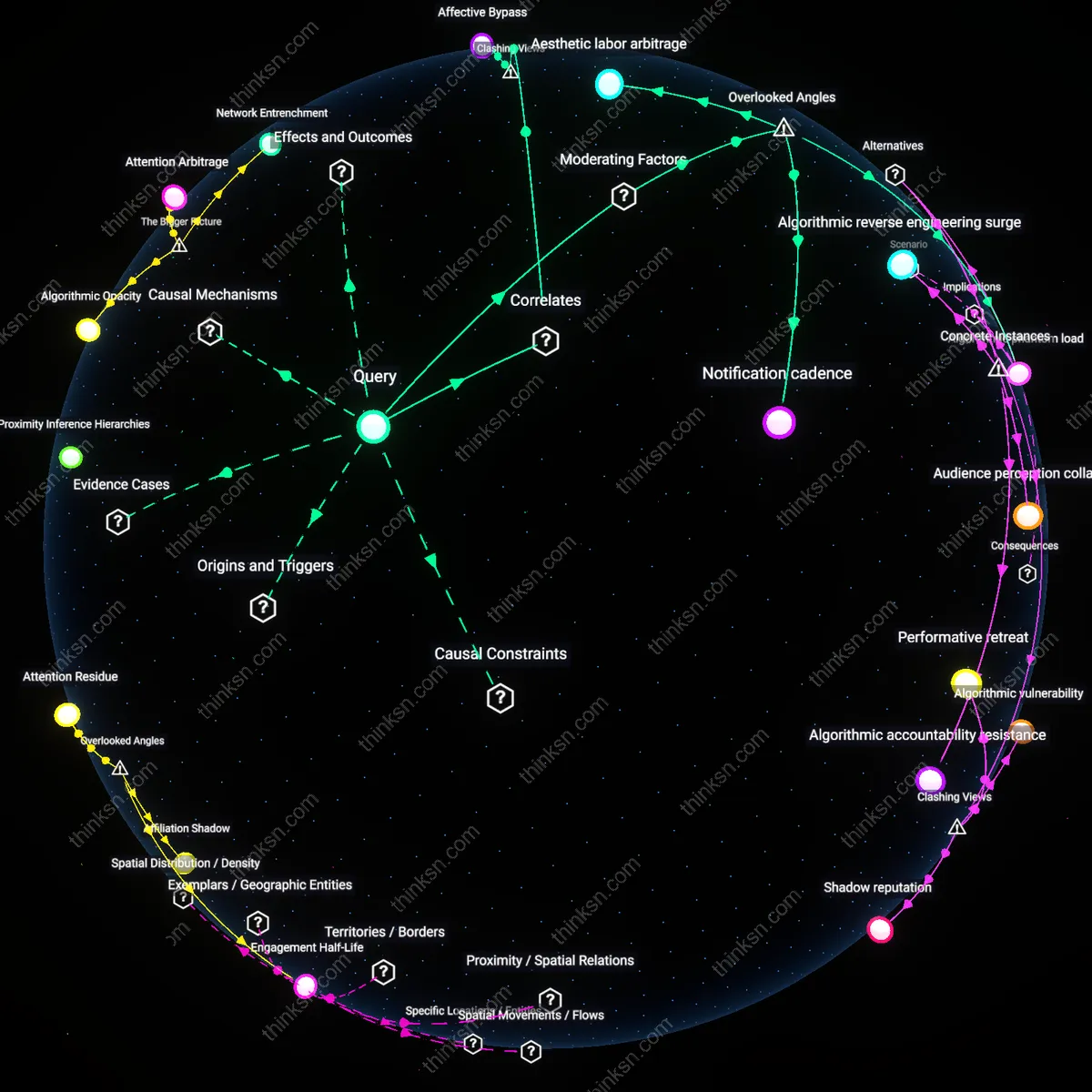

Prototyping Displacement

The rising value of deep user-testing expertise does not counterbalance AI-driven job losses in prototyping, because efficiency in early-stage design now favors computational speed over human insight. Since the 2010s, digital product development has shifted from iterative physical mockups that required human testers to AI-generated prototypes that are rapidly validated through synthetic data and behavioral modeling, reducing the need for live user-testing cohorts. This transition, anchored in Silicon Valley’s adoption of generative design tools after 2018, shows that user-testing expertise has been demoted from a core input to a late-stage audit function: its value is absorbed and minimized rather than compensated. The non-obvious consequence is that user-testing roles are not eliminated outright but folded into automated feedback loops, eroding their centrality in the labor market.

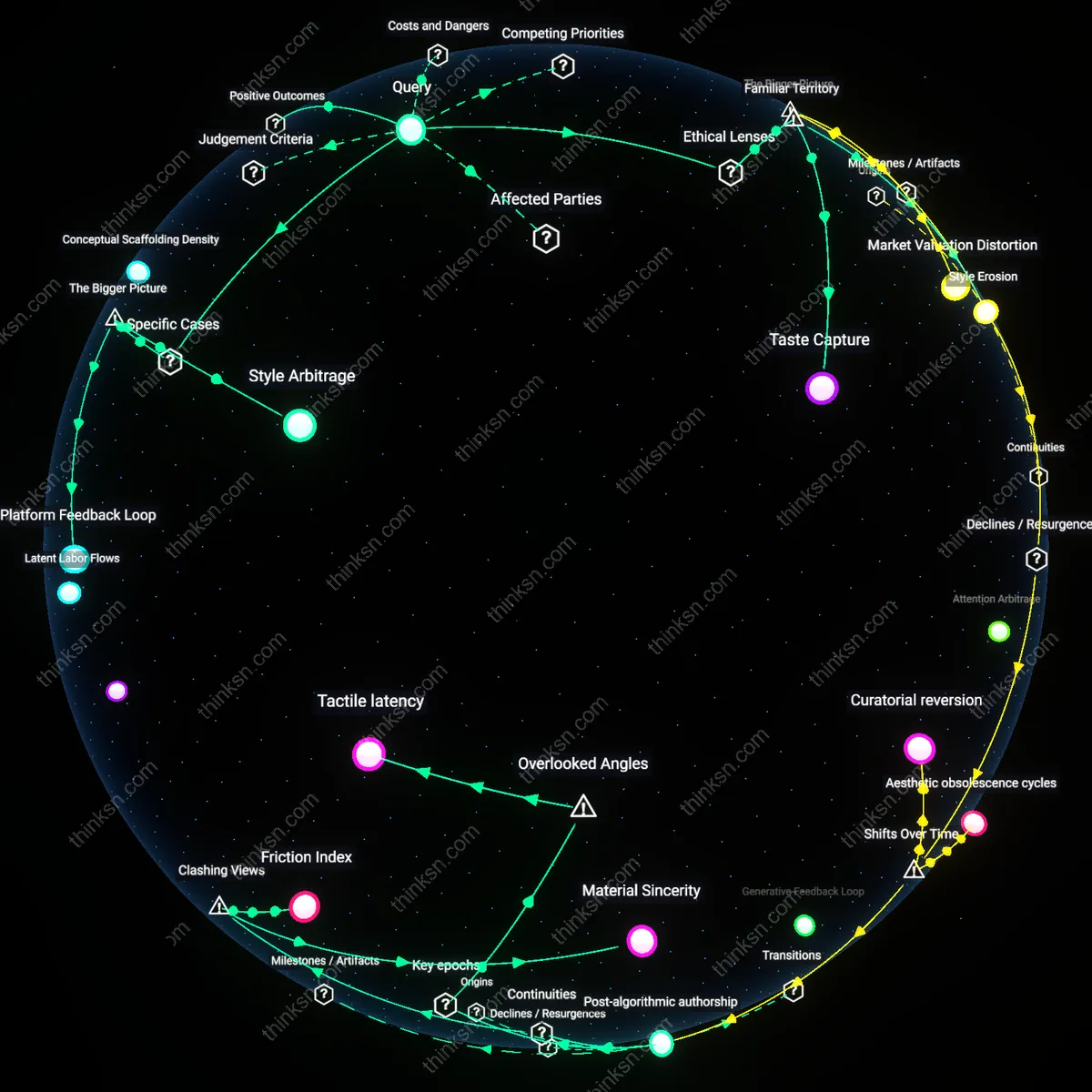

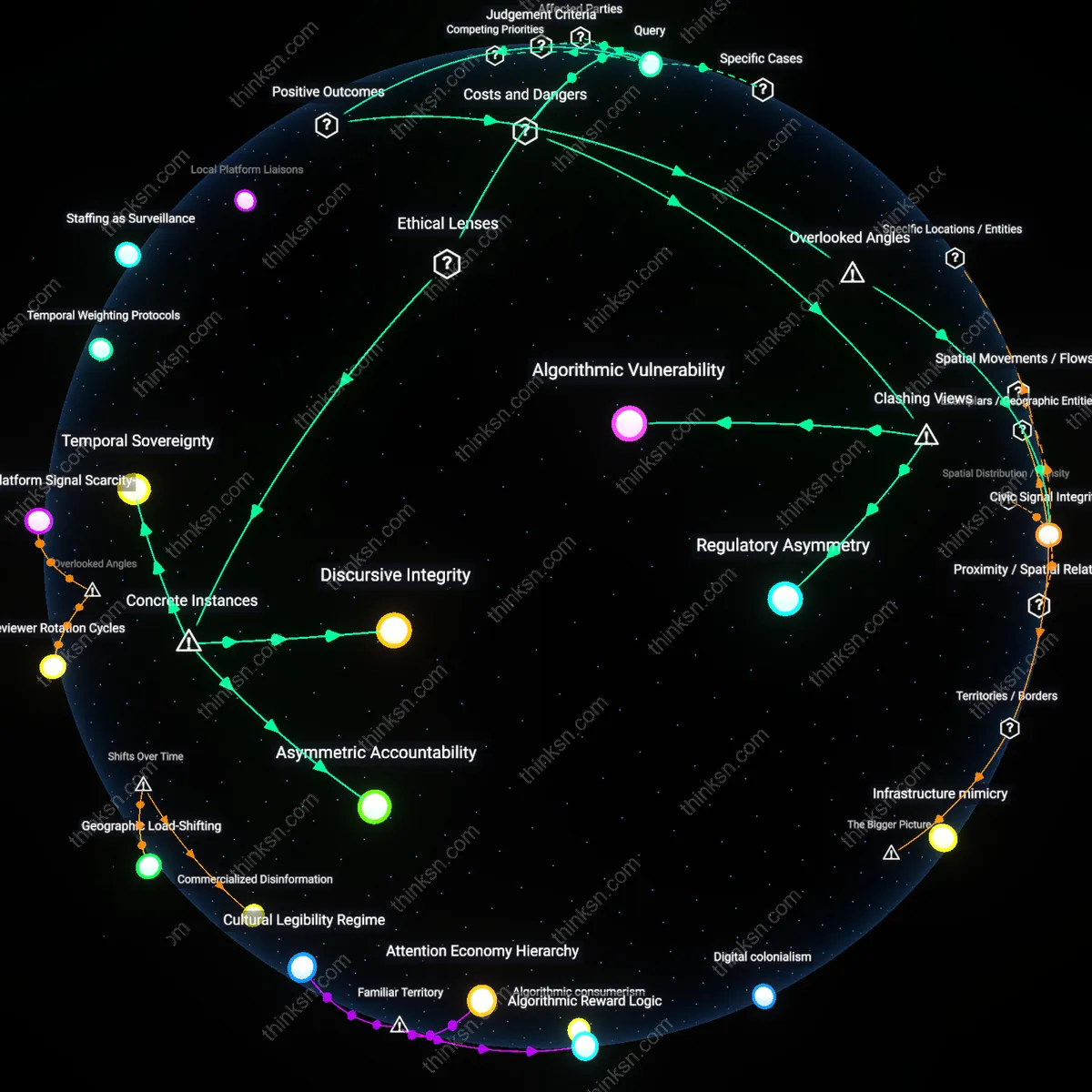

Judgment Arbitrage

Deep user-testing expertise gains moral and practical leverage against AI-driven prototyping job losses by asserting autonomy in defining 'valid use' against algorithmically optimized designs. In the pre-2010 industrial design era, user testing was evaluative and episodic. After the smartphone era expanded UX into a form of ethical gatekeeping, and especially amid post-2015 regulatory scrutiny of addictive interfaces, human testers became custodians of normative boundaries. This shift, catalyzed by EU and California privacy reforms, elevated testers from passive observers to arbiters of acceptable engagement. By invoking dignity and consent as benchmarks that resist codification, they create a counterweight to AI’s efficiency gains. The underappreciated dynamic is that such expertise now functions less as feedback than as veto infrastructure.

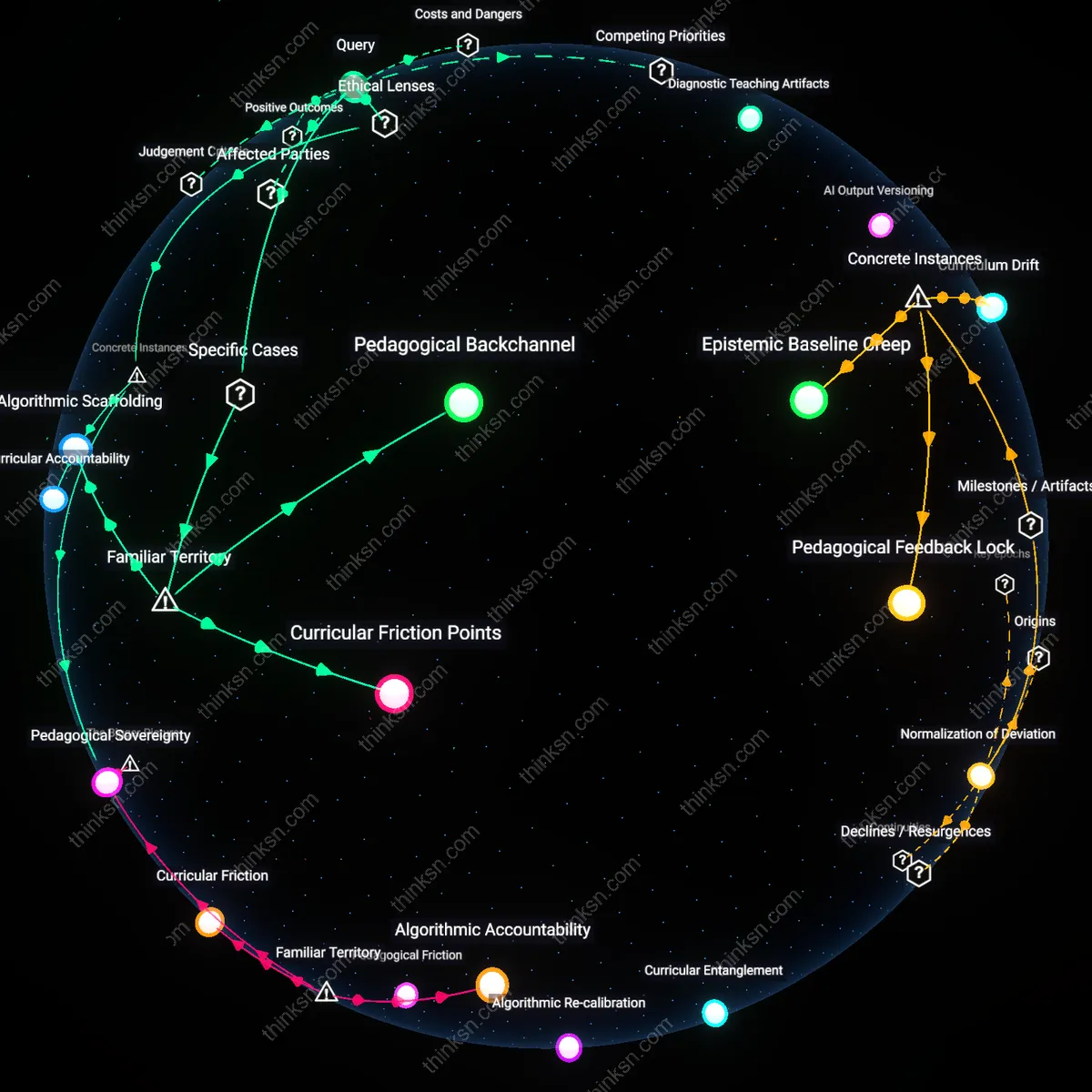

Testing Bottleneck

The rising value of deep user-testing expertise intensifies competition for a limited pool of skilled evaluators, creating a bottleneck that slows deployment even as AI speeds up prototyping. As companies use AI to generate prototypes at scale, especially in software, consumer tech, and UX design, the need to validate these rapidly produced iterations against real human behavior grows disproportionately. The bottleneck stems not from a lack of prototypes but from the irreducible time required to recruit participants and to observe and interpret their responses in context-sensitive settings such as healthcare or education. The non-obvious insight is that in familiar innovation pipelines, where faster individual stages are assumed to speed up the whole process, human evaluation becomes the new rate-limiting step rather than ideation or prototyping.
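The rate-limiting argument can be made concrete with the standard bottleneck relation for a serial pipeline. This is a simplified sketch, not a model drawn from the analysis. It assumes that every prototype must pass through human validation and that each stage runs at a fixed rate. The symbols $r_p$ (prototypes produced per week), $r_v$ (prototypes validated per week), and $R$ (end-to-end output), along with the numbers, are hypothetical and chosen only for illustration.

$$
\begin{aligned}
R &= \min(r_p,\ r_v) \\
r_p = 50,\ r_v = 5 &\;\Rightarrow\; R = \min(50,\ 5) = 5 \\
r_p = 500,\ r_v = 5 &\;\Rightarrow\; R = \min(500,\ 5) = 5
\end{aligned}
$$

Under these assumptions, a tenfold speedup in AI prototyping leaves end-to-end output unchanged. Only raising $r_v$, that is, evaluator capacity, increases $R$.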

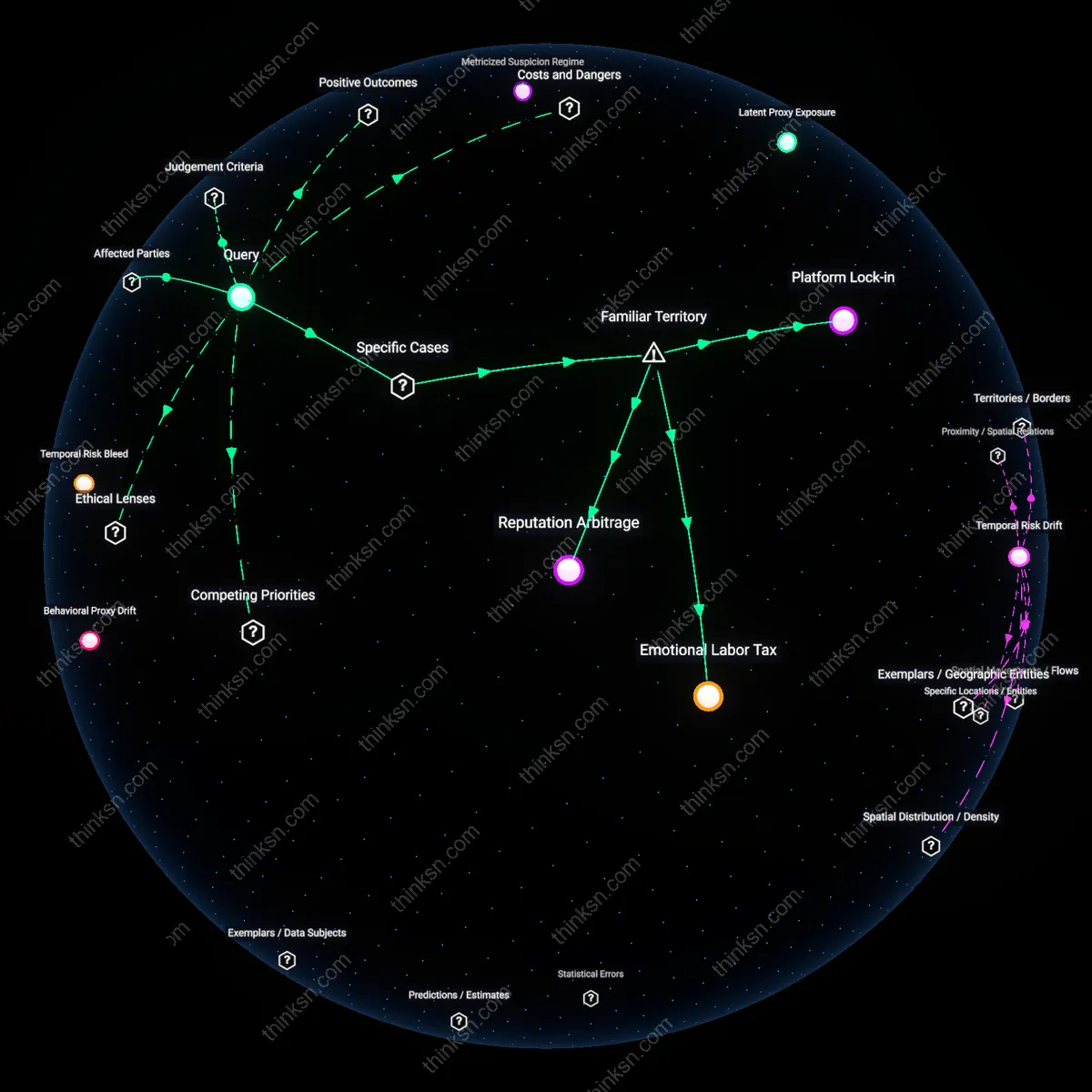

Validation Privilege

Firms with established access to diverse user communities and longitudinal behavioral data gain a structural advantage, turning deep user-testing expertise into a gatekept asset rather than a democratized counterbalance. As AI accelerates prototyping, organizations that lack real-world user access, such as startups or public-sector teams, cannot validate designs effectively, even when they generate compelling concepts. This dynamic reinforces a hierarchy in which value accrues not to those who innovate fastest but to those who can best substantiate impact through trusted user insights, typically large tech firms or well-funded consultancies. What common narratives of AI democratization overlook is that validation, not ideation, becomes the new site of exclusion.