Is AI Worth the Cost for Educators Over Mentorship and Research?

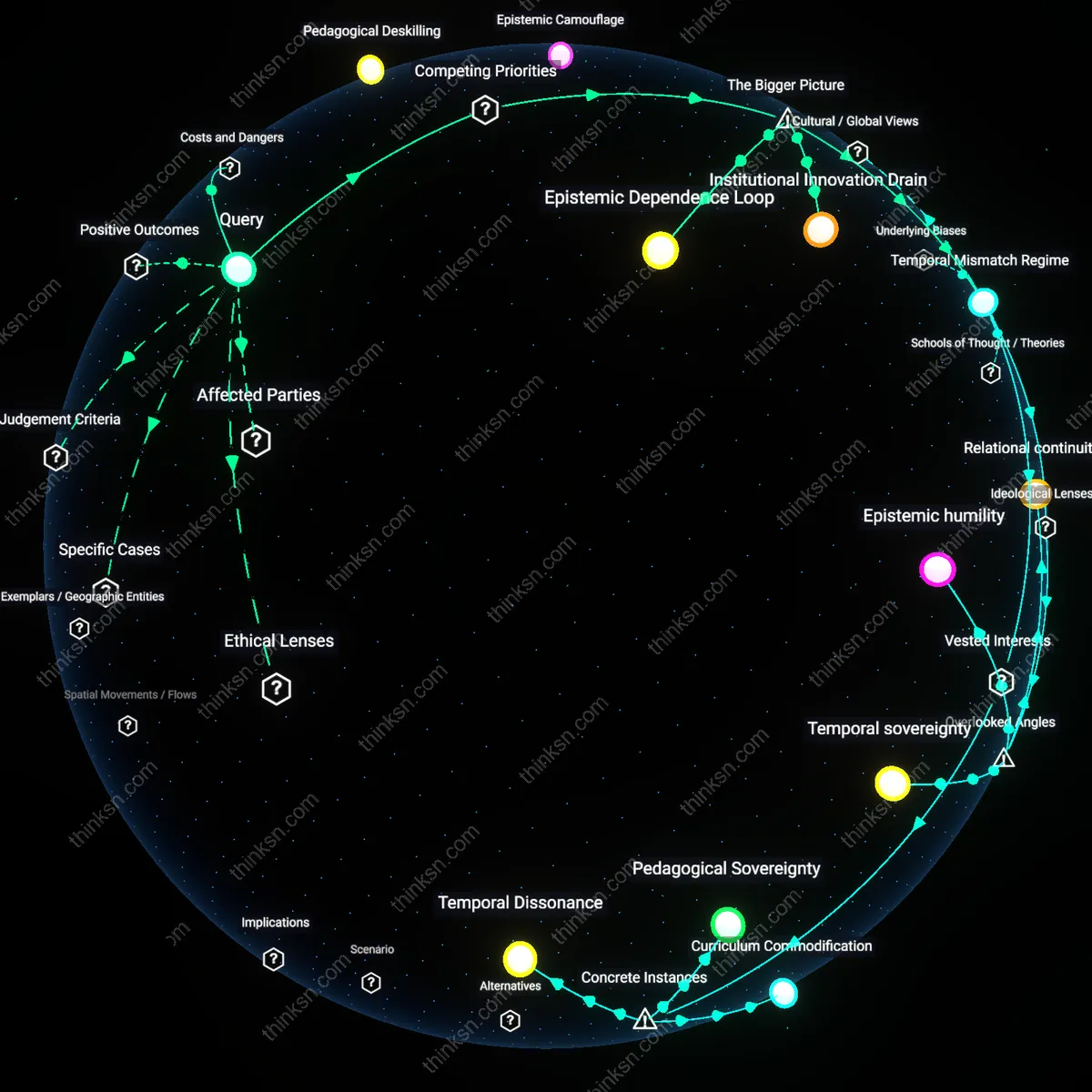

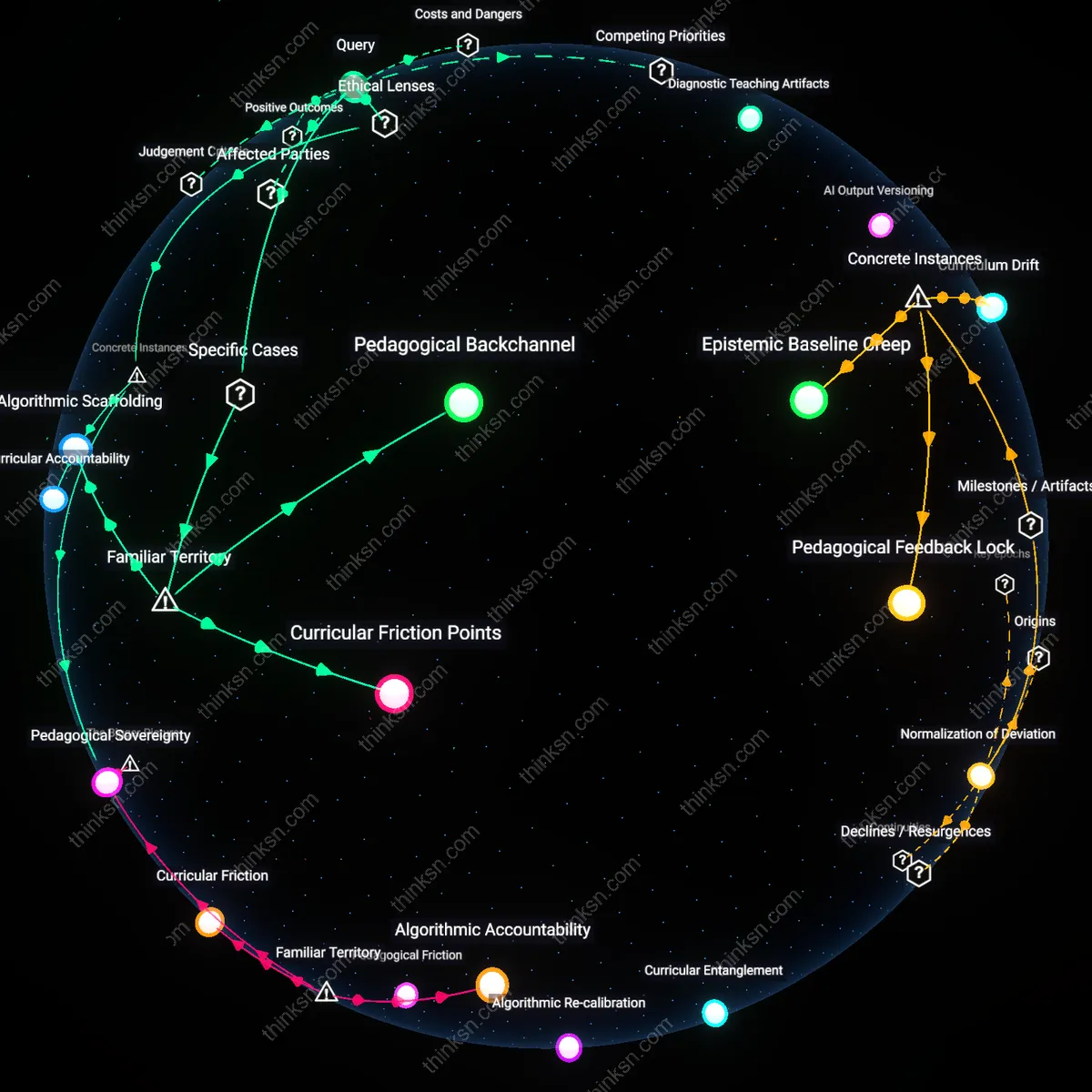

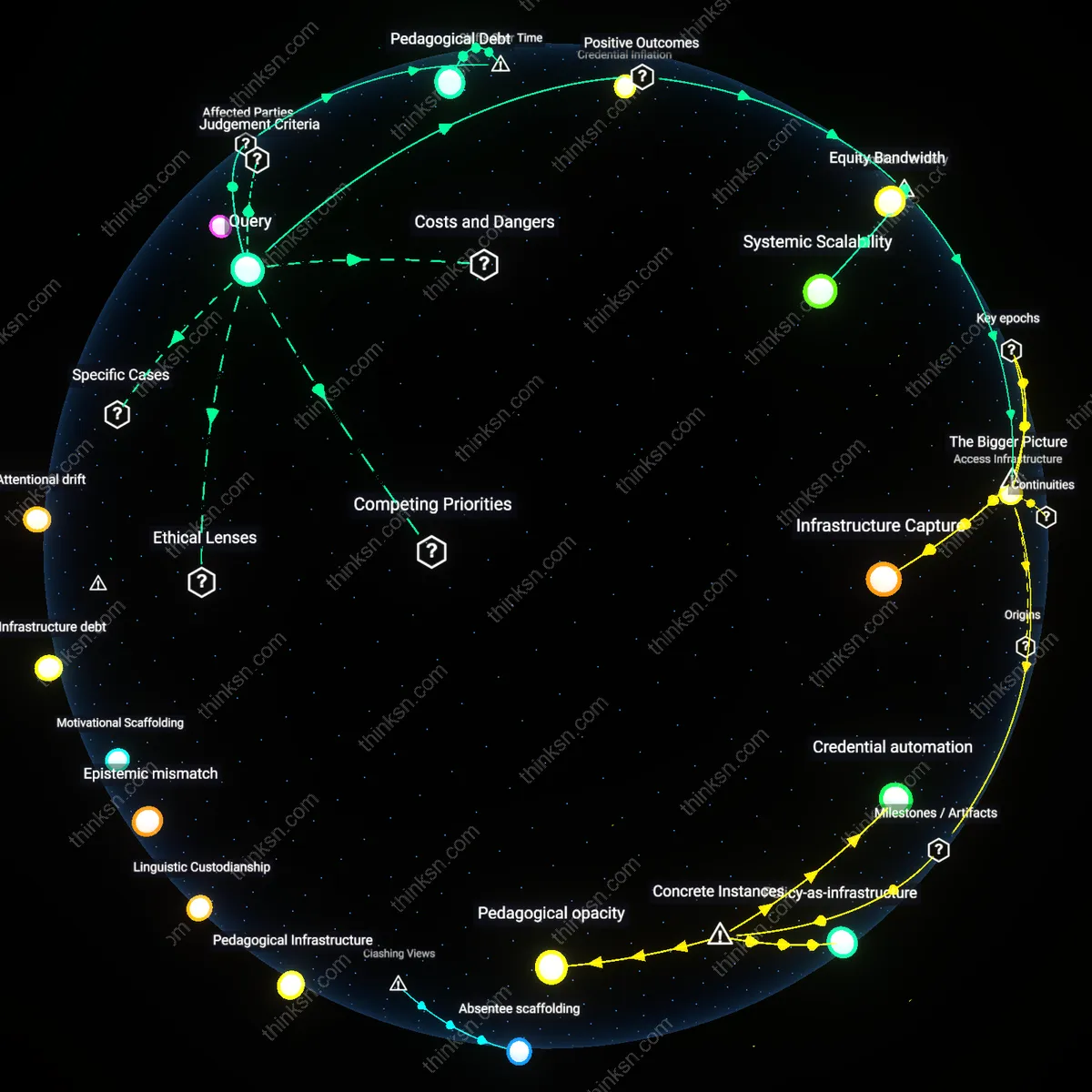

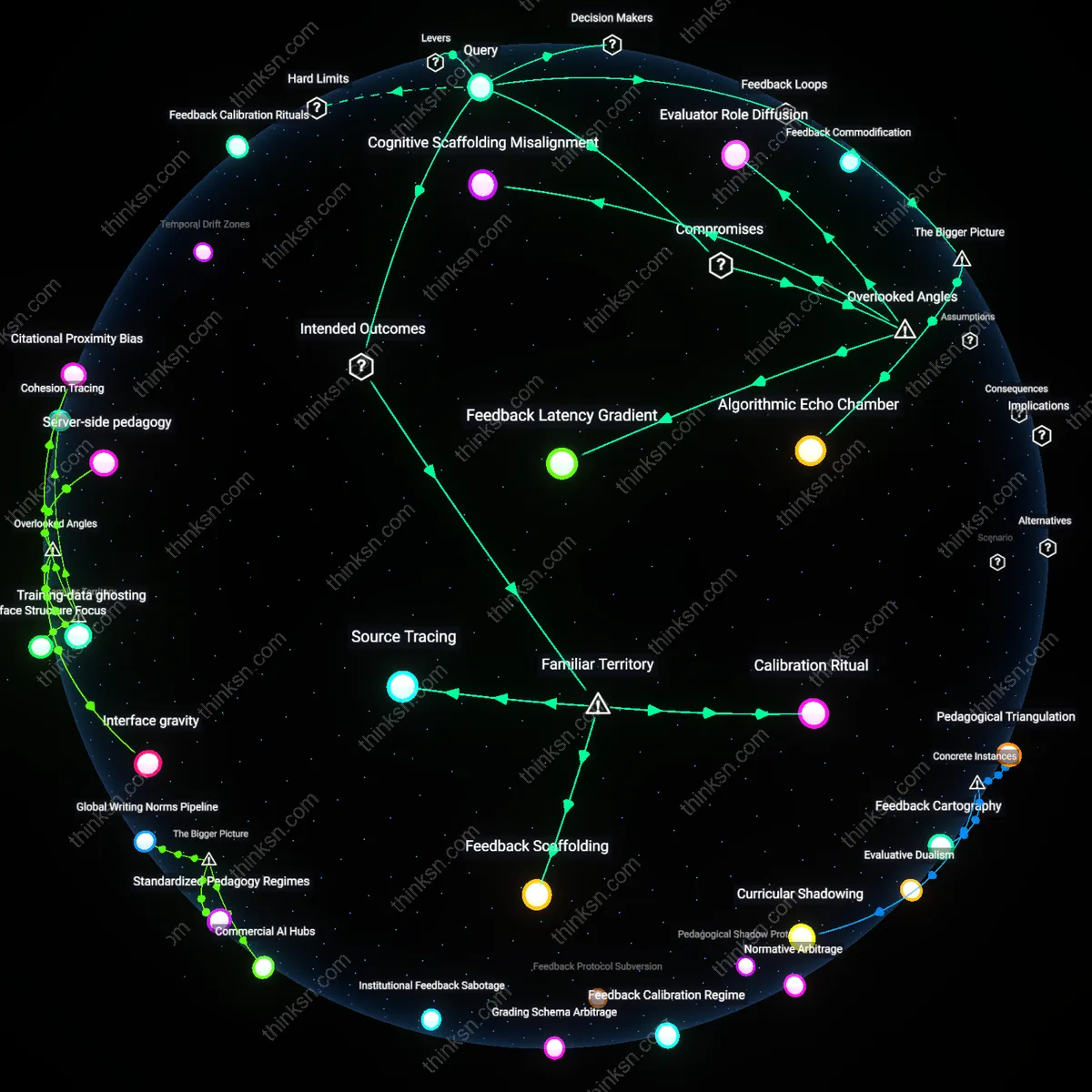

Analysis reveals 6 key thematic connections.

Key Findings

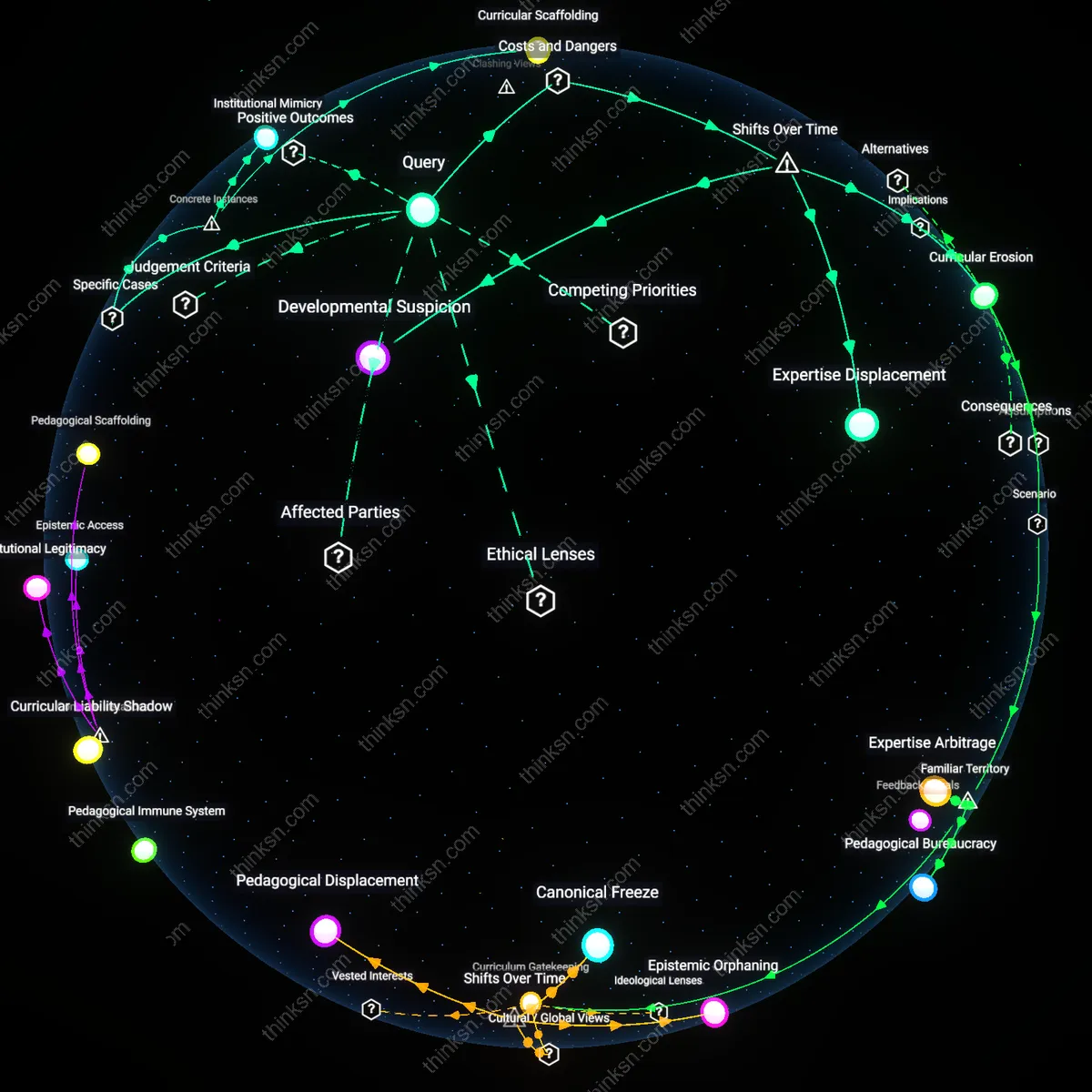

Pedagogical Deskilling

A senior educator who prioritizes AI-generated lesson plans over mentorship and pedagogical research risks normalizing a dependency on algorithmic instruction that systematically devalues contextual classroom judgment. That judgment is replaced with scalable but reductive templates shaped by data-driven design logics rather than educational insight, which erases the lived experience of both teachers and students. By conflating efficiency with efficacy, the shift quietly dismantles decades of hard-won advances in responsive teaching and ultimately transfers curricular authority from educators to technocratic engineers whose incentives are misaligned with student development. What is non-obvious is that this is not a passive adoption but an active surrender of professional agency to systems designed to minimize human deviation.

Research Substitution

Investing primarily in AI-generated lesson plans creates a structural disincentive for knowledge transfer between generations of educators. Administrative reward systems increasingly favor speed and standardization over the slow, iterative work of mentoring, which turns experienced teachers into mere validators of pre-packaged content rather than guides who shape practice through lived wisdom. This dynamic collapses the intergenerational scaffolding that sustains pedagogical evolution and replaces it with a plug-and-play model that cannot adapt to local cultural or emotional contexts. The underappreciated danger is that mentorship, once weakened, cannot be restored through technology: it requires sustained relational time, which AI actively displaces by redefining productivity as output, not depth.

Epistemic Camouflage

When AI-generated lesson plans are treated as empirically grounded innovations, they obscure the absence of peer-reviewed validation and position machine outputs as equivalent to pedagogical research. Institutions can then claim evidence-based practice while bypassing actual inquiry, creating a simulation of professional rigor without the cost or challenge of real research. This counterfeit epistemology protects administrators from accountability by mimicking scholarly structure through citation-like formatting and data visualization, but it hollows out the critical function of research, which is to question assumptions. The greatest danger is therefore not inaccuracy but the illusion of legitimacy, which neutralizes dissent and entrenches top-down control under academic camouflage.

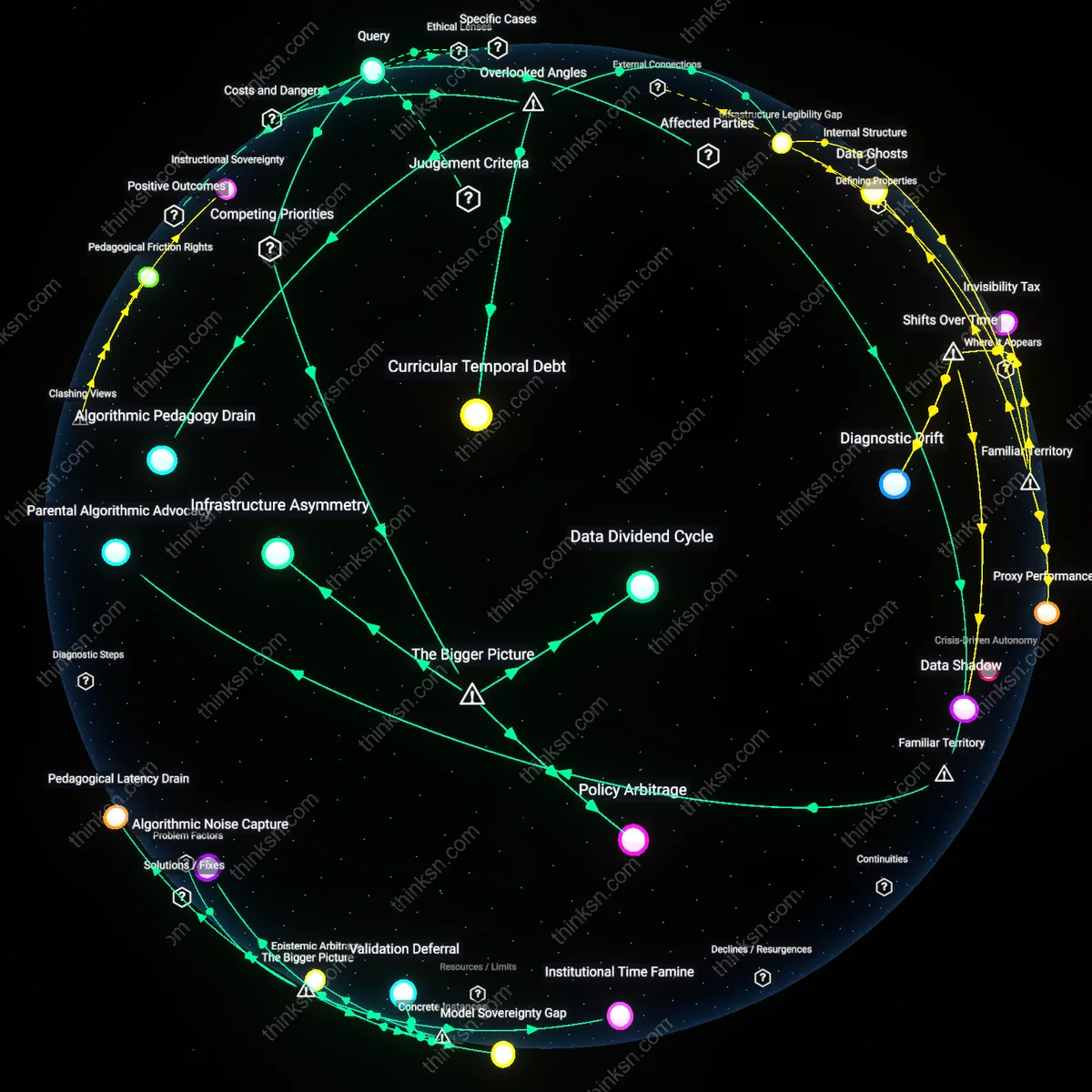

Institutional Innovation Drain

A senior educator must prioritize mentorship and pedagogical research over AI-generated lesson plans because reliance on algorithmic content risks offloading curricular innovation to tech vendors, thereby weakening the institution’s capacity to develop context-sensitive teaching practices. This shift occurs as school districts increasingly outsource curriculum development to AI platforms under pressure to demonstrate rapid digital transformation, which redirects funding and attention from internal professional learning communities to third-party tools. The mechanism, vendor-led standardization, privileges scalability over pedagogical nuance, eroding the very expertise educators are expected to steward. What is underappreciated is that this represents not a technological upgrade but a silent reallocation of intellectual authority from educators to edtech firms, a systemic transfer embedded in procurement incentives and policy benchmarks for 'modernization.'

Temporal Mismatch Regime

Senior educators preserve professional value by resisting the immediate productivity promises of AI-generated lesson plans in favor of long-term investments in mentorship and research, because the time horizons of AI efficiency gains are structurally incompatible with the slow, relational work of pedagogical development. School accountability systems, driven by annual test scores and short fiscal cycles, reward quick implementation over sustained growth, creating a misalignment where AI tools flourish while mentorship programs languish for lack of measurable short-term ROI. This dynamic is sustained by policymakers and district administrators who respond to political pressures for visible results, thereby institutionalizing a temporal regime that devalues developmental time. The non-obvious insight is that the conflict is not between tools and people, but between competing conceptions of time embedded in governance structures that make long-term professional learning systematically invisible.

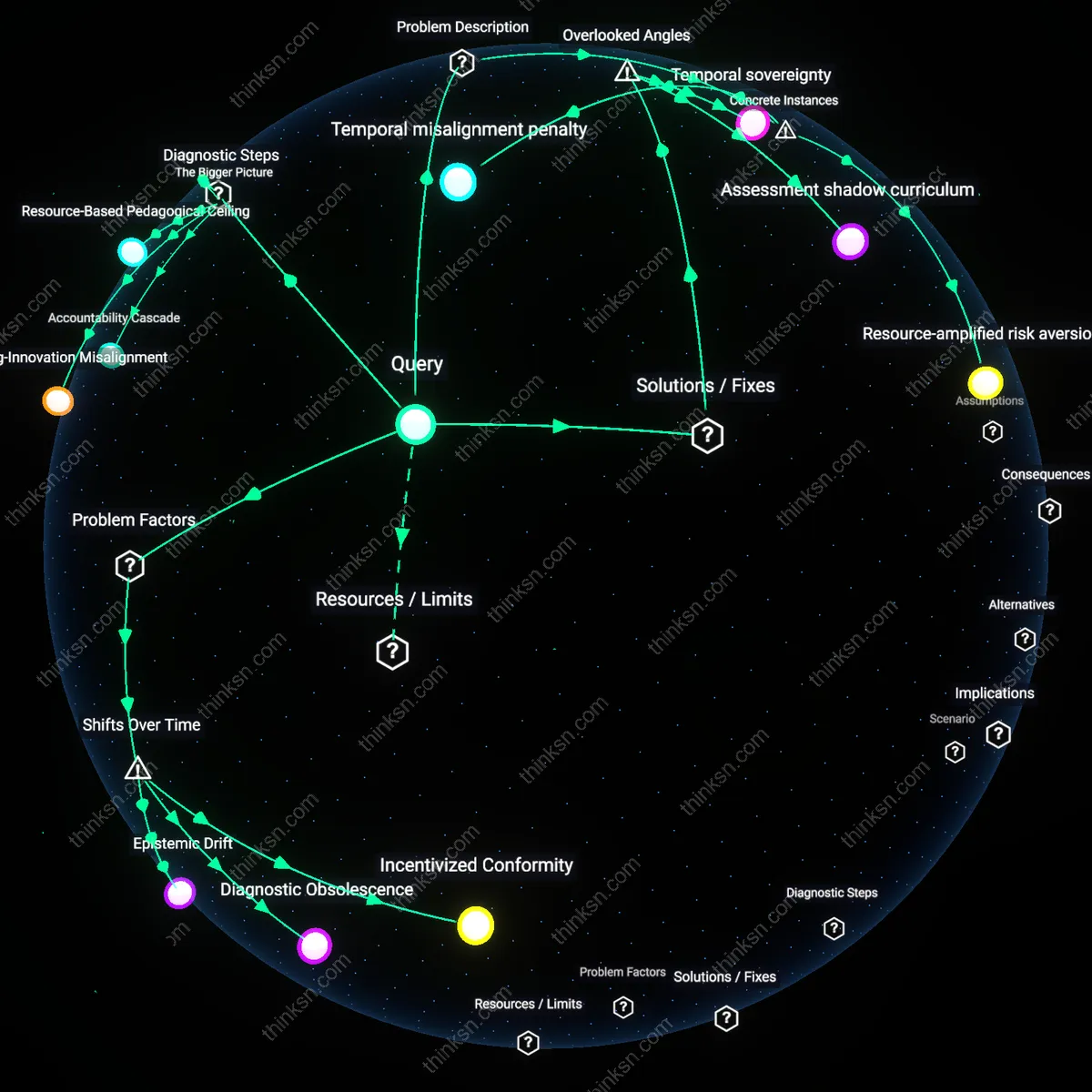

Epistemic Dependence Loop

By favoring mentorship and research over AI-generated plans, senior educators disrupt an emerging cycle in which repeated use of algorithmic content redefines professional judgment as secondary to data-driven outputs, ultimately narrowing the scope of what counts as valid pedagogical knowledge. This loop is activated when AI systems, trained on aggregated teaching data, reproduce standardized practices that marginalize context-specific innovations, pushing educators to conform to algorithmic norms to meet evaluation criteria shaped by those same systems. The cycle is upheld by centralized assessment regimes and AI vendors who jointly define 'effectiveness' through quantifiable engagement metrics, displacing qualitative, experience-based insight. The critical yet overlooked force here is that epistemic authority is not simply lost but actively reconstructed through feedback between AI design and institutional evaluation, making dependence self-reinforcing.