Trusting Climate AI: Prediction or Precaution with Ambiguous Data?

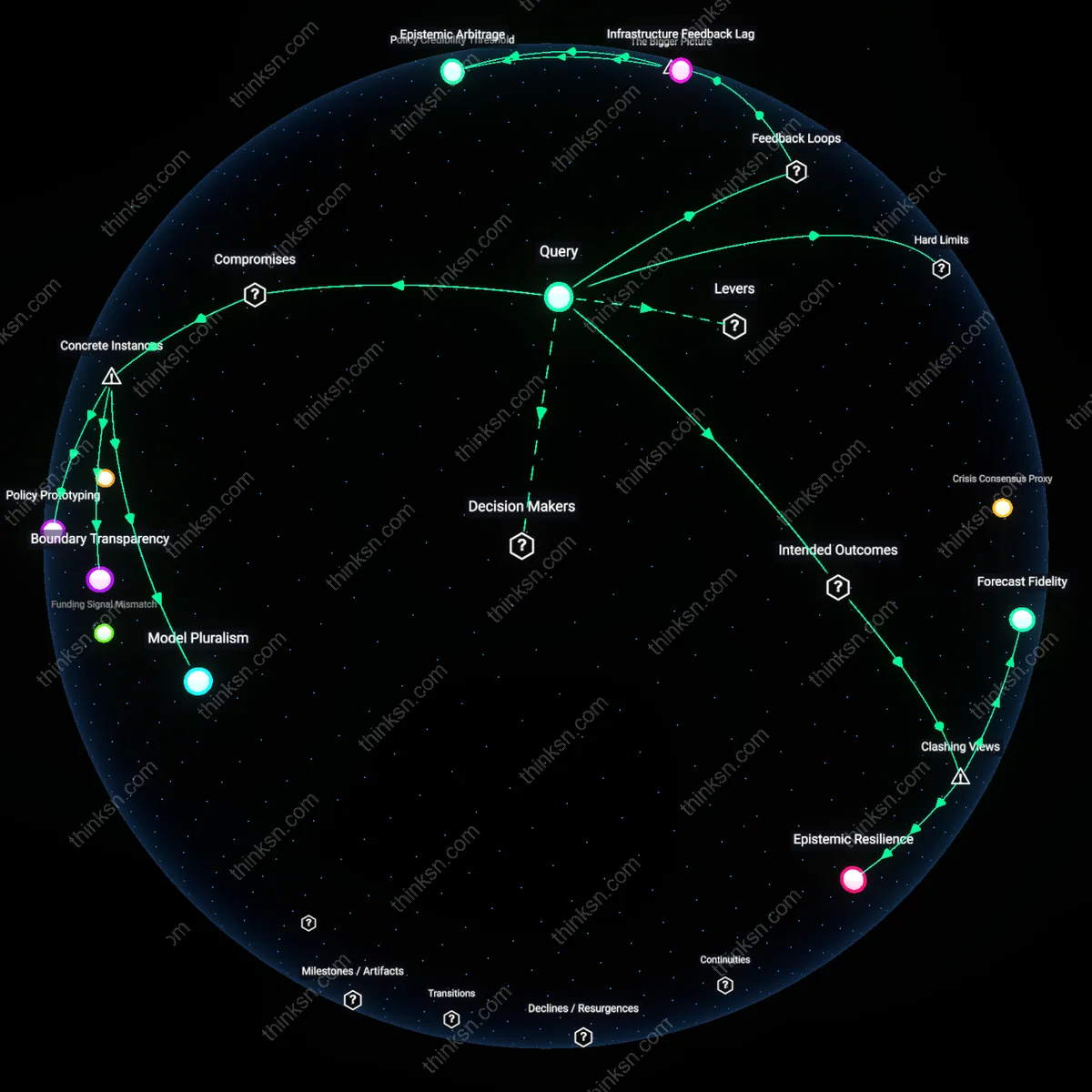

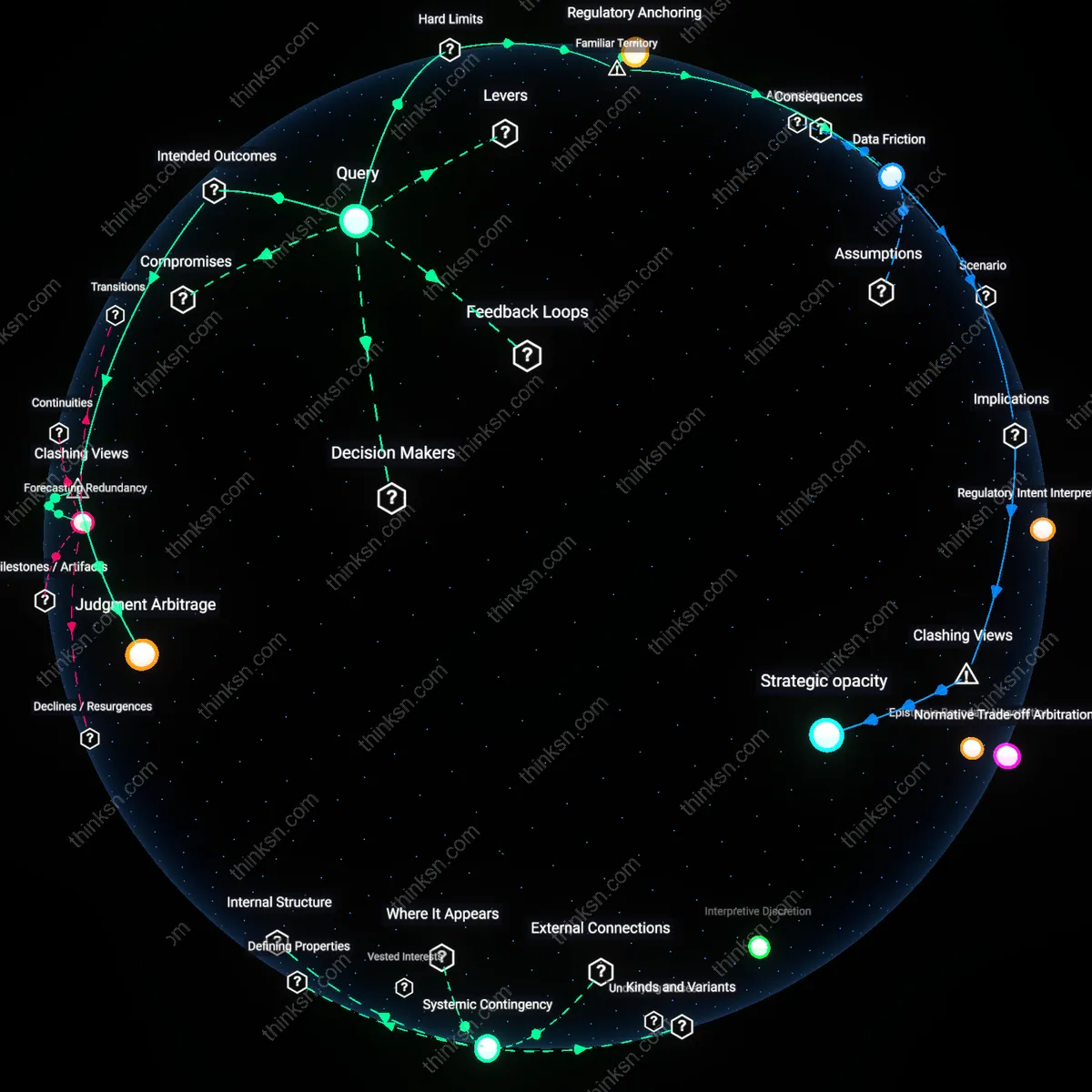

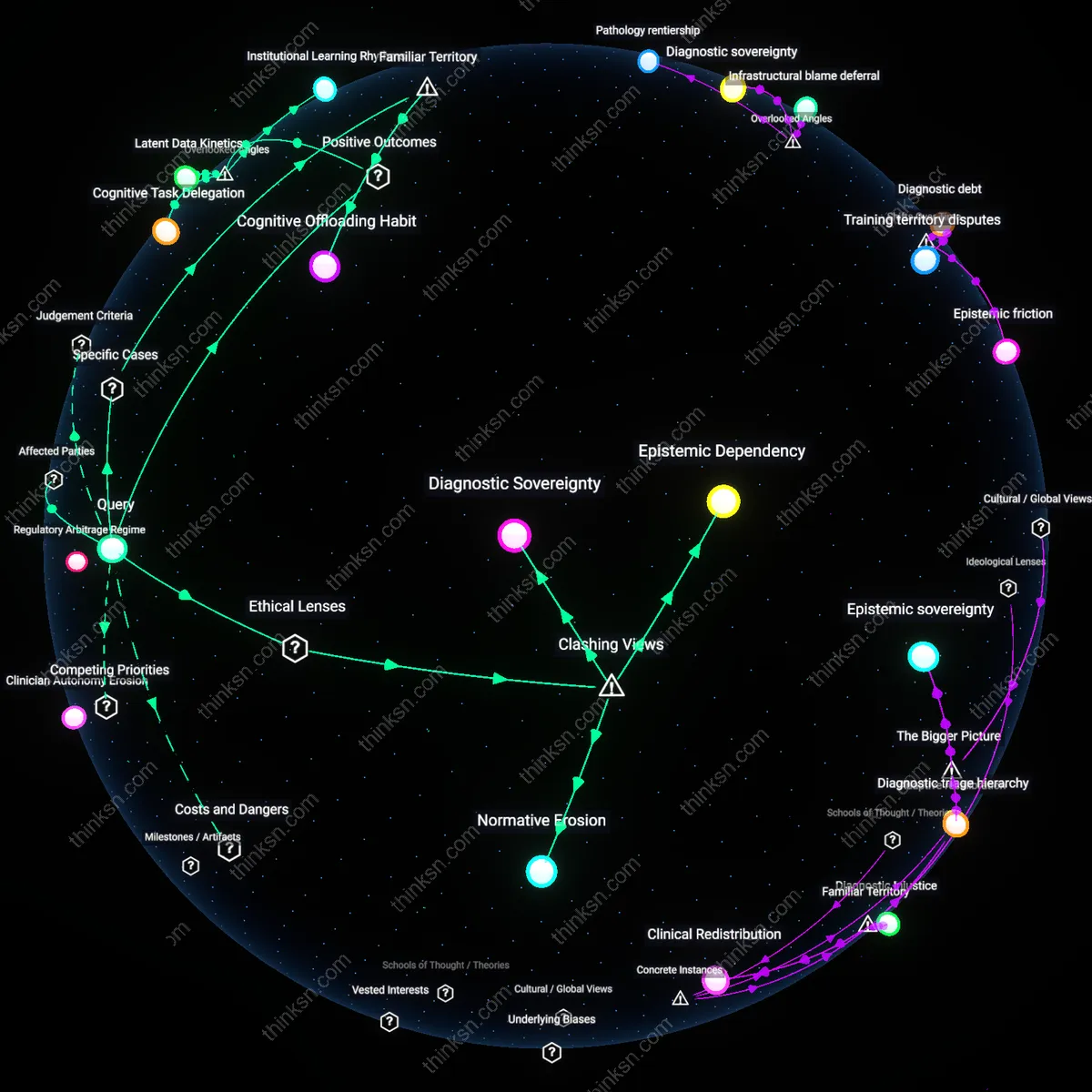

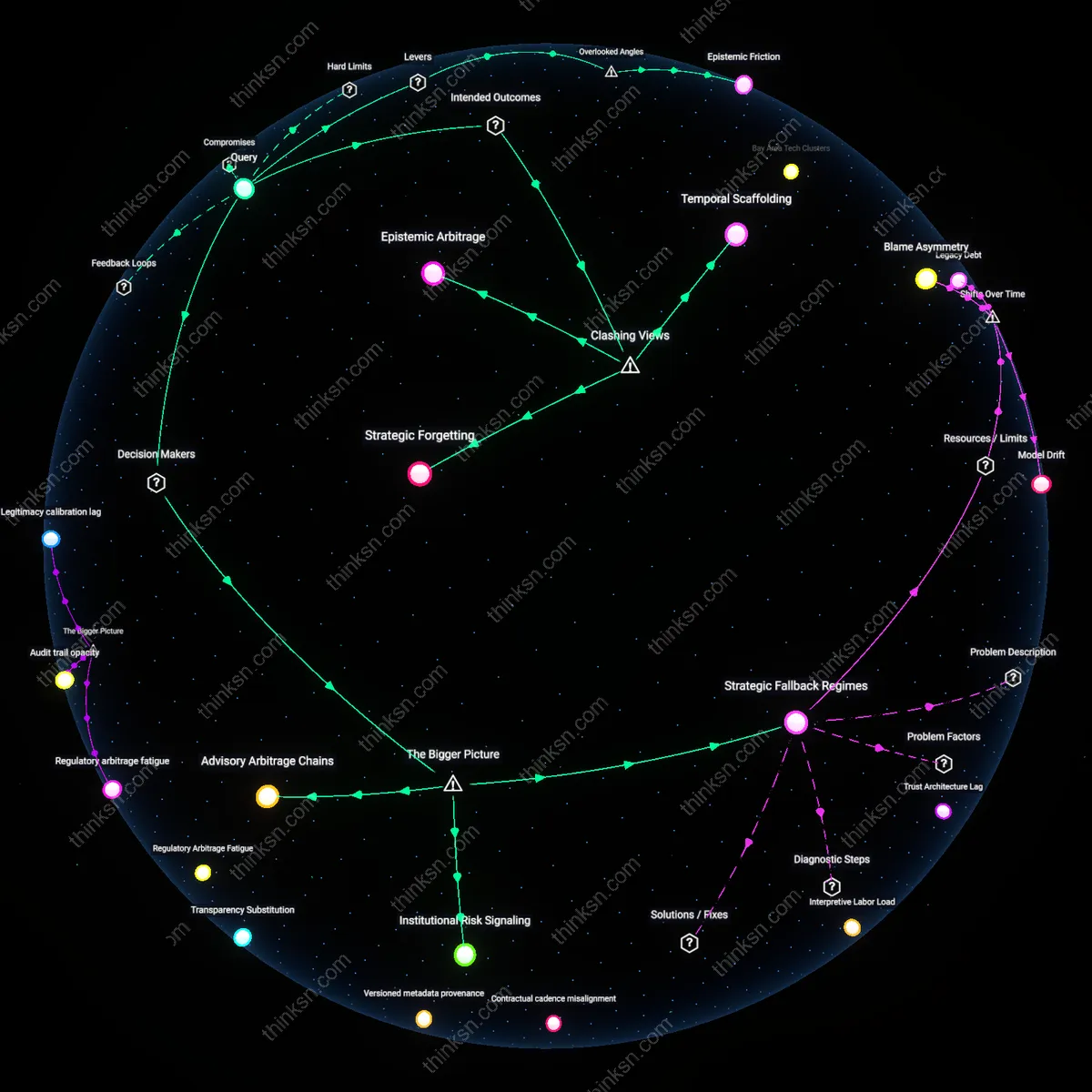

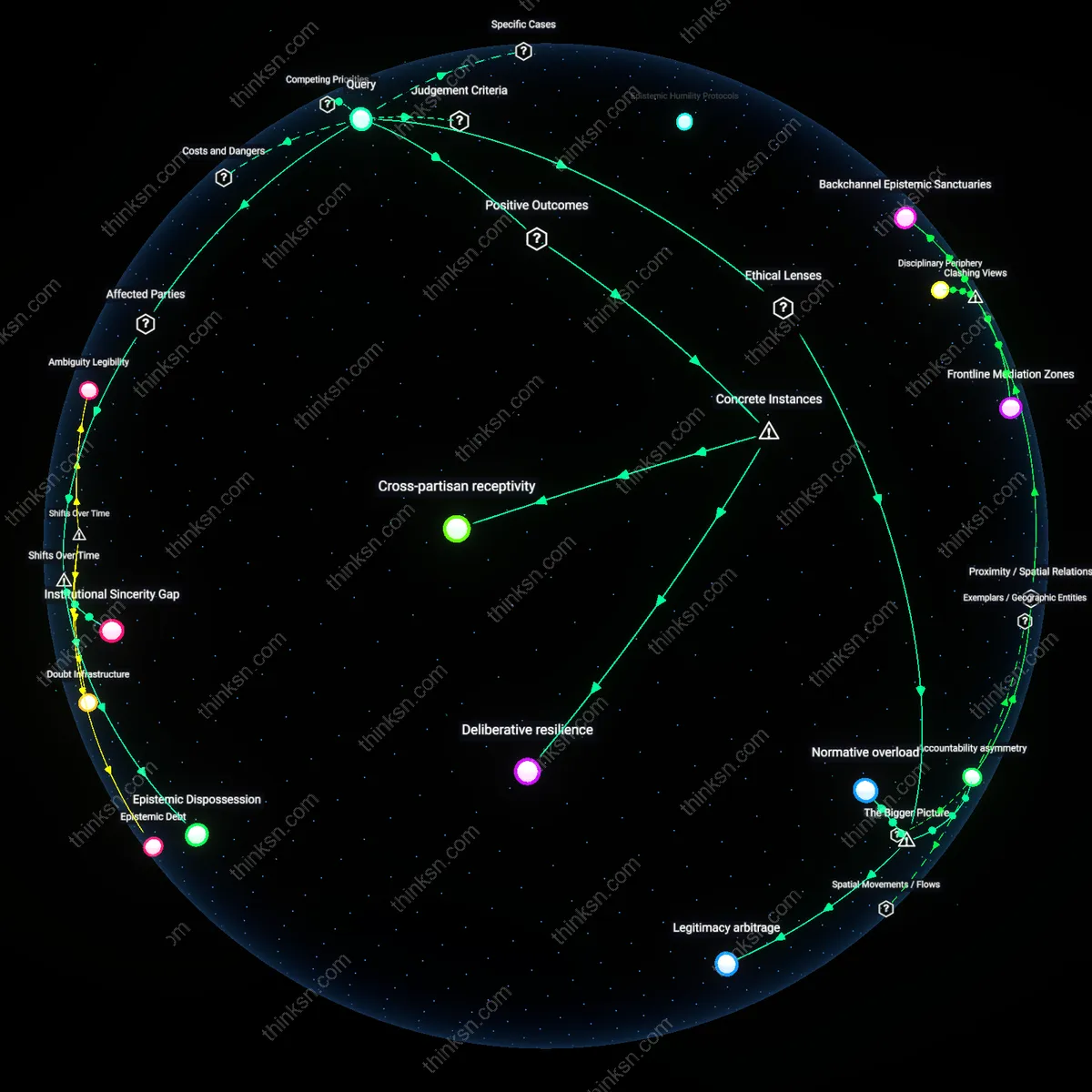

Analysis reveals 10 key thematic connections.

Key Findings

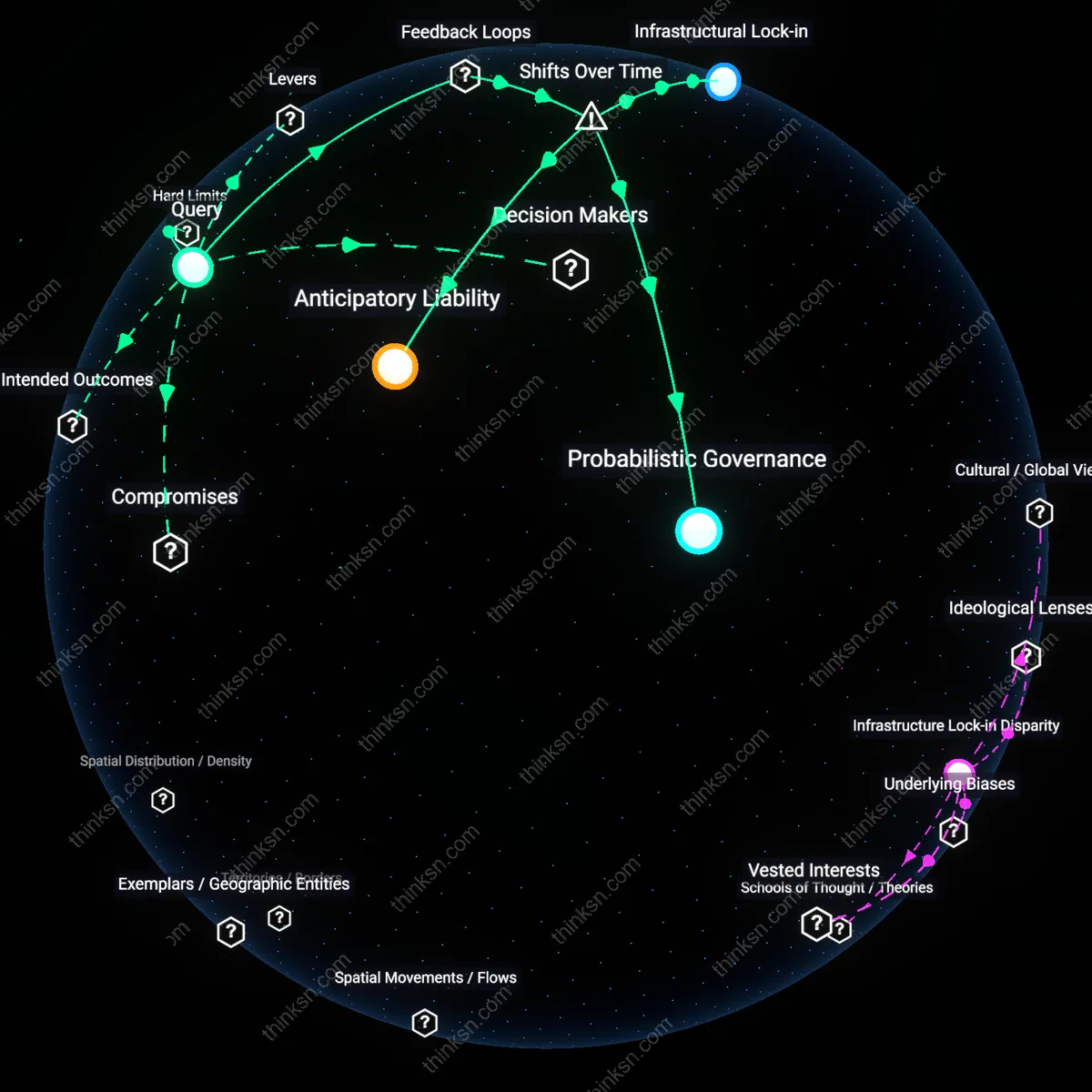

Regulatory Ground Truth

A data scientist must anchor model credibility in formally codified standards such as IPCC inventory guidelines and national emissions inventories, because these form the baseline against which climate predictions are ultimately judged in policy forums such as UNFCCC negotiations and national climate action plans. These frameworks limit what counts as valid evidence, which constrains model freedom but also offers a stable reference point when empirical validation lags. The non-obvious insight is that trust derives not from predictive accuracy alone but from institutional alignment: models gain authority by inheriting the legitimacy of the regulatory machinery they serve, a point practitioners often overlook when they prioritize statistical sophistication over procedural conformity.

Crisis Consensus Proxy

A data scientist should treat historical crisis responses, such as post-hurricane rebuilding expenditures or drought-triggered water rationing laws, as observable proxies for societal trust in climate forecasts. These actions reveal de facto acceptance of model-driven risk assessments by municipal governments and federal agencies like FEMA or the USGS. Such decisions express trust through resource allocation under uncertainty, forming a feedback loop in which past model influence legitimizes future predictions even when direct validation fails. The underappreciated point is that trust is not solely epistemic but also behavioral: what policymakers fund or regulate becomes the real-world referent for forecast credibility, more than peer-reviewed accuracy metrics do.

Policy Credibility Threshold

A data scientist can stabilize trust in AI climate forecasts by aligning model uncertainty with the policy credibility threshold: the level of predictive reliability on which government legitimacy hinges in high-stakes decisions. When climate models inform infrastructure or relocation policies, public compliance depends not on accuracy alone but on whether predictions consistently avoid catastrophic underestimation. This creates a reinforcing loop in which model conservatism strengthens institutional trust, which in turn increases data access and model refinement. The loop is sustained by policymakers' need to avoid blame for underpreparedness, which pressures scientists to prioritize conservative scenarios and so entrenches policy dependence on worst-case projections. The non-obvious insight is that trust emerges not from validation against ground truth, which may take decades, but from the model's ability to preserve policy legitimacy in the face of uncertain outcomes.
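One way to make "avoiding catastrophic underestimation" concrete is an asymmetric scoring rule. The sketch below uses the quantile (pinball) loss, a standard technique that the text above does not itself prescribe. The function name `pinball_loss`, the quantile level `tau = 0.9`, and the sample flood depths are illustrative assumptions.

```python
def pinball_loss(observed, predicted, tau=0.9):
    """Mean quantile (pinball) loss.

    With tau > 0.5, under-prediction (observed > predicted) costs more
    than over-prediction of the same size. tau = 0.9 is an assumed value.
    """
    if not observed or len(observed) != len(predicted):
        raise ValueError("inputs must be non-empty and of equal length")
    total = 0.0
    for y, q in zip(observed, predicted):
        diff = y - q
        # diff >= 0: the model under-predicted; diff < 0: it over-predicted.
        total += tau * diff if diff >= 0 else (tau - 1.0) * diff
    return total / len(observed)


# Hypothetical flood depths in metres; both forecasts miss by 1 m.
under_loss = pinball_loss([2.0], [1.0])  # forecast too low
over_loss = pinball_loss([2.0], [3.0])   # forecast too high
print(under_loss, over_loss)
```

With `tau = 0.9`, a forecast that is too low is penalized about nine times as heavily as one that is too high by the same amount, which is one possible encoding of the conservatism described above.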

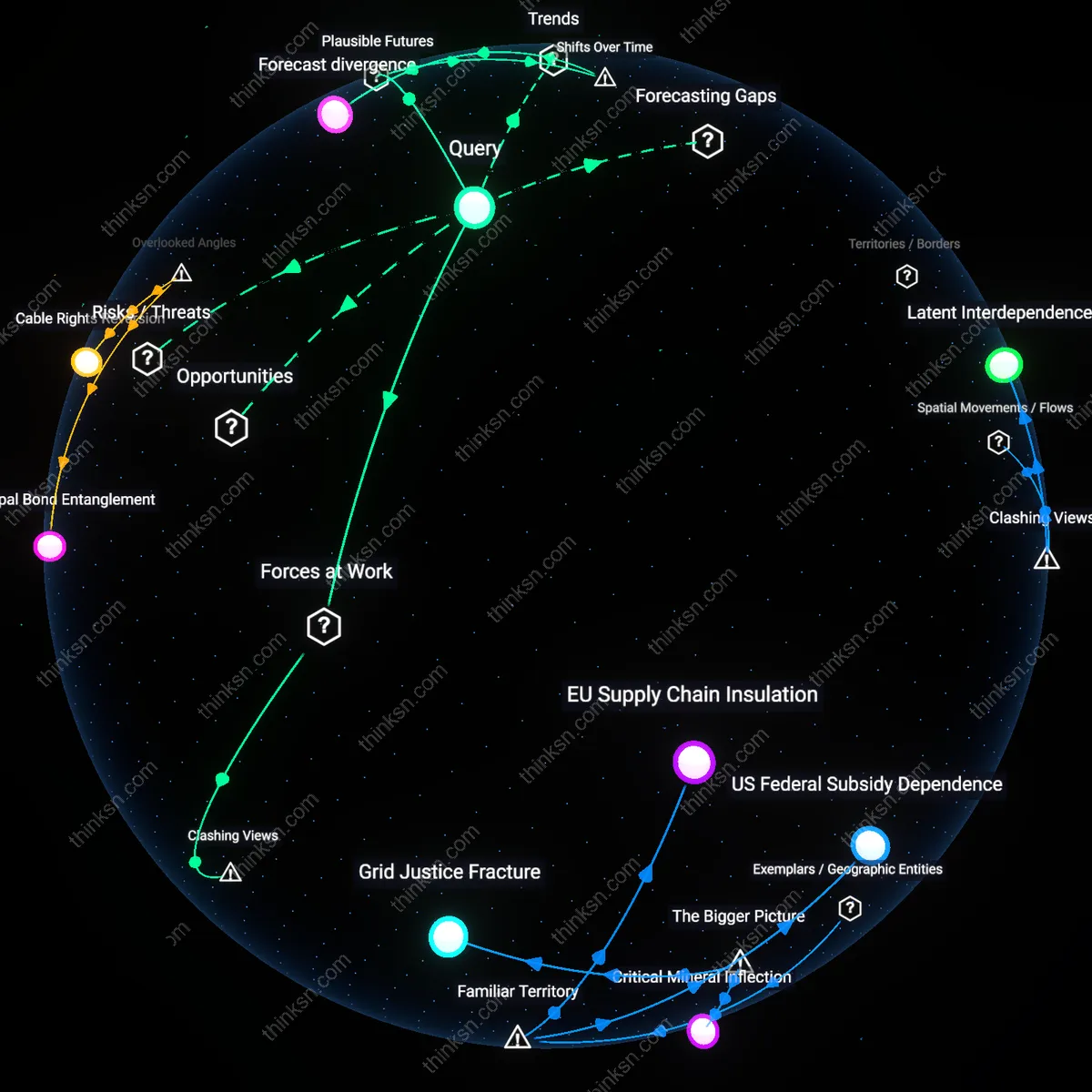

Epistemic Arbitrage

A data scientist can exploit epistemic arbitrage by treating AI model ambiguity as a strategic resource for negotiation among scientists, insurers, and infrastructure planners, who operate on different time horizons. Climate insurance markets require probabilistic inputs for pricing risk, while municipal planners demand categorical guidance for zoning. The model's inconclusive validation therefore becomes a flexible boundary object that lets each group interpret uncertainty in ways that preserve its own operational logic, creating a balancing loop in which no single actor bears full responsibility for prediction failure. This stability emerges through repeated reinterpretation of the model in forums like FEMA risk assessment panels or IPCC working groups, where the model's limitations become a substrate for distributed accountability. The underappreciated reality is that trust in AI predictions is maintained not through consensus on truth but through the functional utility of ambiguity in preserving system-wide coordination.

Infrastructure Feedback Lag

A data scientist can modulate trust in AI climate forecasts by explicitly quantifying the infrastructure feedback lag, the delay between model deployment and built-environment response, which decouples short-term predictive failure from long-term systemic consequence. Because seawalls, grids, and transit systems take decades to build, early adoption of marginally accurate models creates a reinforcing loop: even weak signals shape trillion-dollar investments, which later appear to validate the original predictions by altering exposure and vulnerability. In coastal cities like Jakarta or Miami, this manifests as AI-informed retreat policies that reduce population density in flood zones, decreasing observed damage over time and retrospectively legitimizing the initial model even if its projections were speculative. The critical insight is that trust is constructed retroactively, through engineered conditions that make the model appear prescient, rather than through ex ante validation.
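The feedback lag can be illustrated with a deliberately simple toy simulation. Everything here is an assumption made for illustration: damage is modeled as hazard times exposure, and the function name, the 10-year lag, and the 5% annual retreat rate are hypothetical, not drawn from any real city.

```python
def simulate_observed_damage(years, lag_years, hazard=1.0,
                             initial_exposure=100.0, retreat_rate=0.05):
    """Toy model: annual damage = hazard * exposure.

    Exposure stays constant until the adaptation lag has elapsed, then
    shrinks by retreat_rate each year. All parameters are hypothetical.
    """
    exposure = initial_exposure
    damages = []
    for year in range(years):
        if year >= lag_years:
            # Retreat policy has taken effect: fewer assets remain exposed.
            exposure *= 1.0 - retreat_rate
        damages.append(hazard * exposure)
    return damages


damages = simulate_observed_damage(years=30, lag_years=10)
print(damages[9], damages[10], damages[29])
```

In this sketch the hazard never changes, yet observed damage falls once the lag has passed. Damage records alone therefore cannot separate the forecast's skill from the effect of the policy it triggered.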

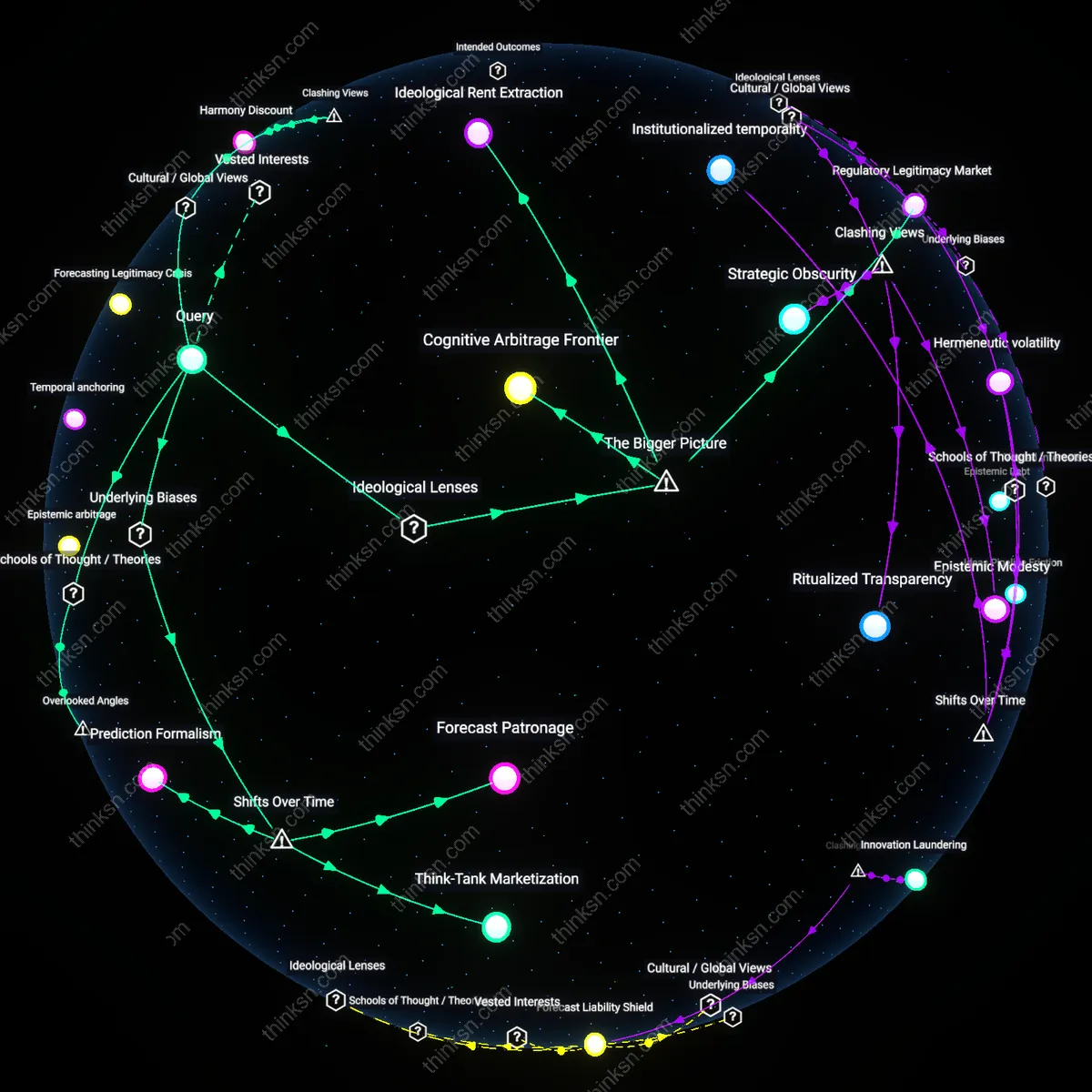

Model Pluralism

In the 2013–2015 California drought forecasting efforts, the Department of Water Resources enhanced trust in AI-driven climate predictions by triangulating outputs across physically constrained models, statistical emulators, and stakeholder-informed scenario models, even though unprecedented arid conditions left validation inconclusive. By designing inter-model comparison protocols that emphasized structural diversity over individual accuracy, the department maintained credibility in high-stakes water rationing decisions. The case suggests that resilience to uncertainty emerges not from model consensus but from deliberate heterogeneity in modeling assumptions. The strategy traded computational efficiency and policy agility for greater epistemic robustness, which indicates that trust in forecasting is less about predictive fidelity than about robust disagreement among representations.
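A minimal sketch of what triangulating across model families might look like in code follows. It is not the department's actual protocol: the function `triangulate`, the family names, and the projection values are all made up for illustration.

```python
from statistics import mean


def triangulate(forecasts_by_family):
    """Summarize agreement between structurally different model families.

    forecasts_by_family maps a family name to a list of projections of
    the same quantity. Returns per-family means, the mean of those means,
    and the range between the highest and lowest family mean.
    """
    if not forecasts_by_family:
        raise ValueError("at least one model family is required")
    family_means = {name: mean(values)
                    for name, values in forecasts_by_family.items()}
    centers = list(family_means.values())
    return {
        "family_means": family_means,
        "ensemble_mean": mean(centers),
        "between_family_range": max(centers) - min(centers),
    }


# Made-up projections of reservoir storage as a fraction of normal.
summary = triangulate({
    "physical": [0.60, 0.70],
    "statistical": [0.80, 0.90],
    "scenario": [0.50, 0.60],
})
print(summary)
```

Reporting the between-family range alongside the ensemble mean keeps structural disagreement visible instead of averaging it away, which is the design choice the paragraph above describes.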

Boundary Transparency

For the sea-level rise projections in the 2021 IPCC AR6 report, the Hadley Centre and the Potsdam Institute prioritized public documentation of model boundary conditions, such as ice-sheet collapse thresholds and emissions lock-in periods, over consolidating a single authoritative forecast. This allowed policymakers to assess which assumptions drove divergent outcomes while observational validation lagged. The approach institutionalized the disclosure of unresolved physical processes as a trust-building mechanism, sacrificing decisive policy signals in favor of explicit scientific contingency. It illustrates that trust in high-stakes forecasts often depends more on the visibility of epistemic limits than on the precision of the prediction itself.

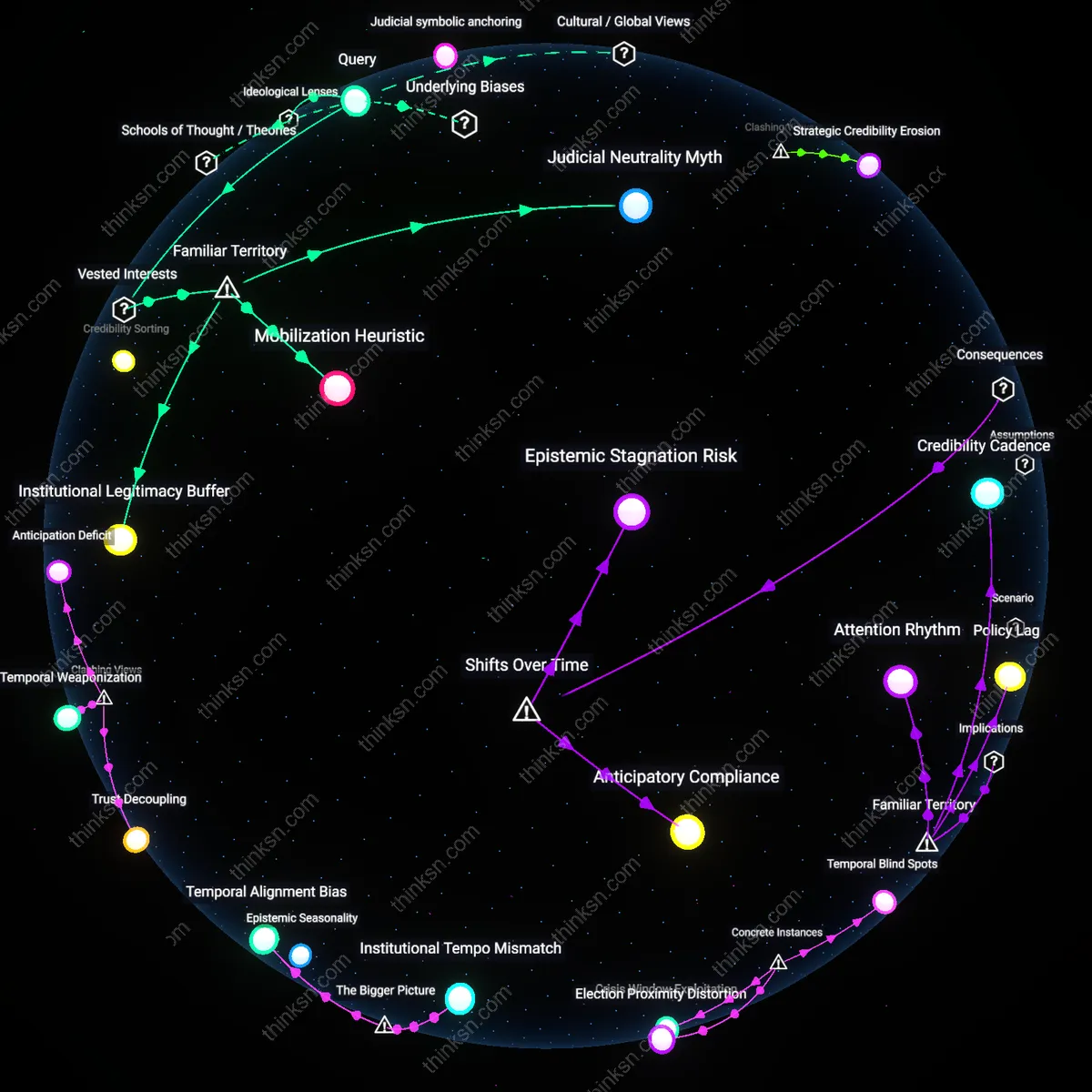

Policy Prototyping

In Bangladesh’s 2020–2022 coastal salinity intrusion forecasting initiative, the government and the International Water Management Institute (IWMI) deployed AI models not to determine final policy but to run iterative simulations with local farmers, testing the acceptability of agricultural adaptation measures under uncertain climate projections. By treating model outputs as inputs to participatory governance rather than as settled facts, they traded predictive authority for social validation. The case suggests that trust is built not through validation alone but through shared experiential rehearsal of model consequences: where evidence is ambiguous, legitimacy emerges from repeated social testing of model implications rather than from technical correctness.

Forecast Fidelity

A data scientist can assess trust by auditing model behavior under extreme counterfactual climates rather than through historical validation alone, shifting focus from accuracy on past data to logical coherence in uncharted conditions, such as a simulated +4°C world with collapsed Atlantic circulation. This involves stress-testing model responses against known physical thresholds using Earth system models such as DOE’s E3SM, so that trust emerges not from outcome verification but from mechanistic plausibility under duress. Unlike conventional validation, which assumes continuity between past and future, this approach treats climate forecasting as an anticipatory governance tool rather than a statistical extrapolation, and locates confidence in structural integrity under disruption.
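The idea of checking mechanistic plausibility rather than historical fit can be sketched as a small constraint audit. This example does not use E3SM or any real model interface: `toy_emulator` is a hypothetical stand-in for a trained model, and the three constraints (finite, non-negative, non-decreasing with warming) are assumed for illustration only.

```python
import math


def toy_emulator(warming_c):
    """Hypothetical stand-in for a trained model.

    Maps global warming in deg C to sea-level rise in metres.
    """
    return 0.2 * warming_c + 0.05 * warming_c ** 2


def stress_test(model, warming_levels):
    """Return (warming, reason) pairs where the model breaks a constraint.

    Assumed constraints: outputs are finite, non-negative, and do not
    decrease as warming increases.
    """
    levels = sorted(warming_levels)
    outputs = [model(w) for w in levels]
    failures = []
    for w, y in zip(levels, outputs):
        if not math.isfinite(y):
            failures.append((w, "non-finite output"))
        elif y < 0:
            failures.append((w, "negative sea-level rise"))
    for i in range(1, len(levels)):
        if outputs[i] < outputs[i - 1]:
            failures.append((levels[i], "response decreases with warming"))
    return failures


scenarios = [1.0, 2.0, 4.0, 6.0]  # includes levels beyond historical data
failures_ok = stress_test(toy_emulator, scenarios)
failures_bad = stress_test(lambda w: 1.0 - 0.1 * w ** 2, scenarios)
print(failures_ok)
print(failures_bad)
```

The second call passes in a deliberately implausible model to show what a failed audit looks like. A real audit would replace the assumed constraints with thresholds drawn from the physical literature.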

Epistemic Resilience

A data scientist must measure trust by the model's capacity to absorb and integrate contradictory evidence without collapsing into incoherence, as when regional climate models conflict with observed monsoon shifts in South Asia. When models are embedded within adaptive learning systems like the World Climate Research Programme’s Grand Challenges, where contradictions trigger formal recalibration protocols rather than rejection, trust becomes a function of evolutionary robustness. This challenges the default assumption that inconclusive validation undermines credibility and suggests instead that survivability amid epistemic conflict is the true benchmark for high-stakes forecasting.
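Recalibration rather than rejection can be sketched as a Bayesian reweighting of competing models. This is one possible stand-in, not the recalibration protocol of any named programme: the function `update_weights`, the Gaussian error assumption, the value of `sigma`, and the sample numbers are all hypothetical.

```python
import math


def update_weights(weights, predictions, observation, sigma):
    """Reweight models by how well each predicted a new observation.

    Assumes Gaussian observation error with standard deviation sigma.
    A contradicted model loses weight but is not discarded outright.
    """
    unnormalized = {}
    for name, prior in weights.items():
        error = (observation - predictions[name]) / sigma
        likelihood = math.exp(-0.5 * error ** 2)
        unnormalized[name] = prior * likelihood
    total = sum(unnormalized.values())
    if total == 0.0:
        raise ValueError("observation is implausible under every model")
    return {name: value / total for name, value in unnormalized.items()}


# Hypothetical monsoon rainfall anomalies in standardized units.
prior = {"regional_model_a": 0.5, "regional_model_b": 0.5}
predicted = {"regional_model_a": 1.0, "regional_model_b": 3.0}
posterior = update_weights(prior, predicted, observation=1.0, sigma=1.0)
print(posterior)
```

The model that missed the observation keeps a reduced but non-zero weight, so later evidence can still restore its standing.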