Regulatory Legitimacy

Human analysts must certify algorithmic decisions in financial regulation to maintain institutional accountability. Regulators like the SEC or ECB require documented human oversight for enforcement actions because legal frameworks presume that responsibility must reside with a person, not a model. This creates a procedural chokepoint: even superior AI outputs must be ratified by a compliance officer or risk manager. The non-obvious reality is that the requirement is not about accuracy but about preserving the legitimacy of state authority in adjudicating financial misconduct.

Interpretive Discretion

Human analysts are required when financial rules demand contextual judgment beyond pattern recognition, such as determining 'market abuse' under the EU Market Abuse Regulation (MAR). These rules turn on intent, motive, and circumstantial nuance, elements AI cannot access directly, so regulators mandate analyst intervention to interpret behavior within broader market narratives. The overlooked point is that the law treats certain financial concepts as inherently contestable, requiring human reasoning not because it is better, but because the category itself resists algorithmic closure.

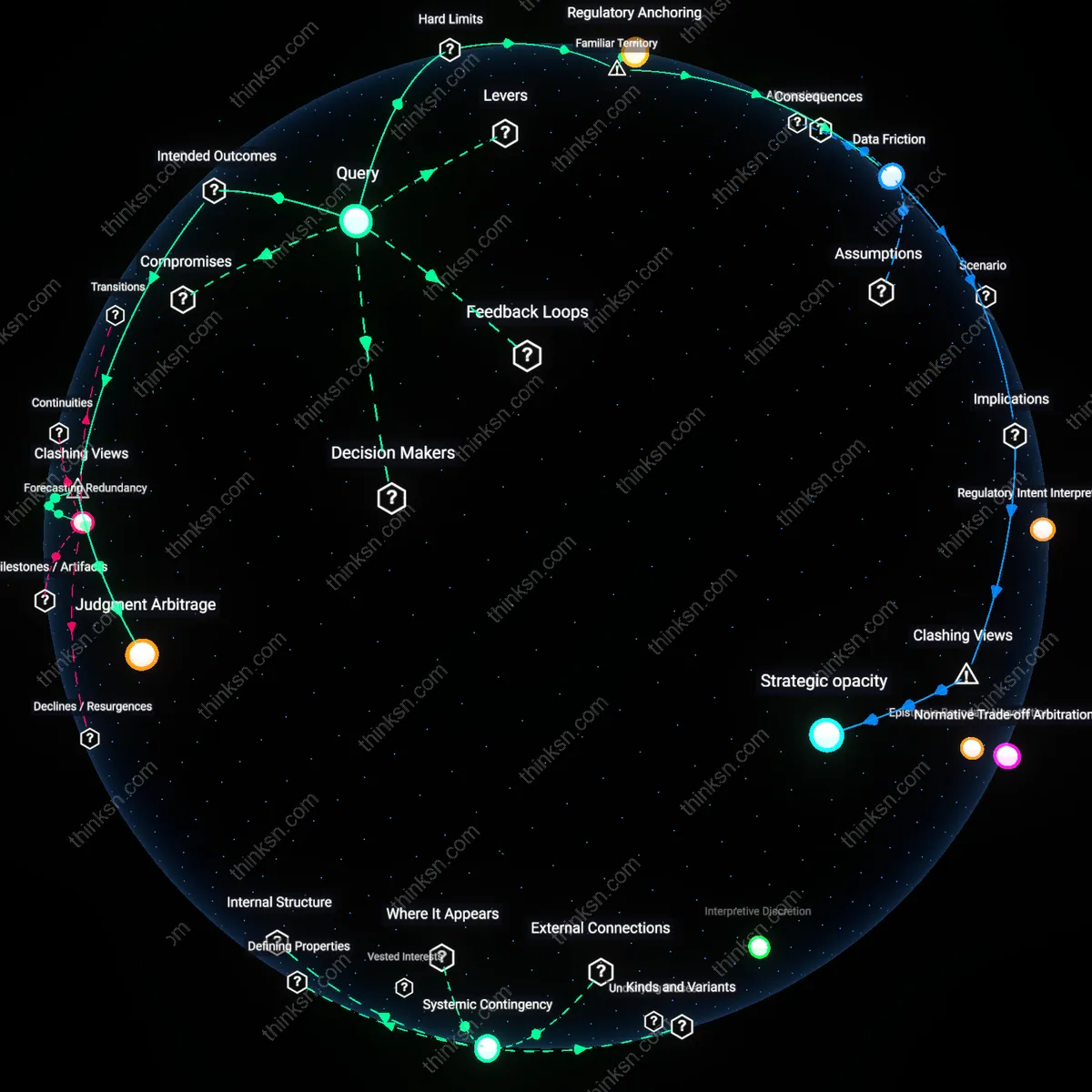

Systemic Contingency

During market crises such as a flash crash or liquidity freeze, human analysts override AI-driven regulatory monitors because novel systemic events lack the historical precedents needed for reliable training data. Bodies like the Federal Reserve or FINRA activate emergency protocols that suspend automated surveillance in favor of expert discretion, recognizing that novel instability requires adaptive reasoning. What’s underappreciated is that the financial system delegates crisis authority to humans not because of superior skill, but because its own continuity depends on assignable agency in unscripted events.

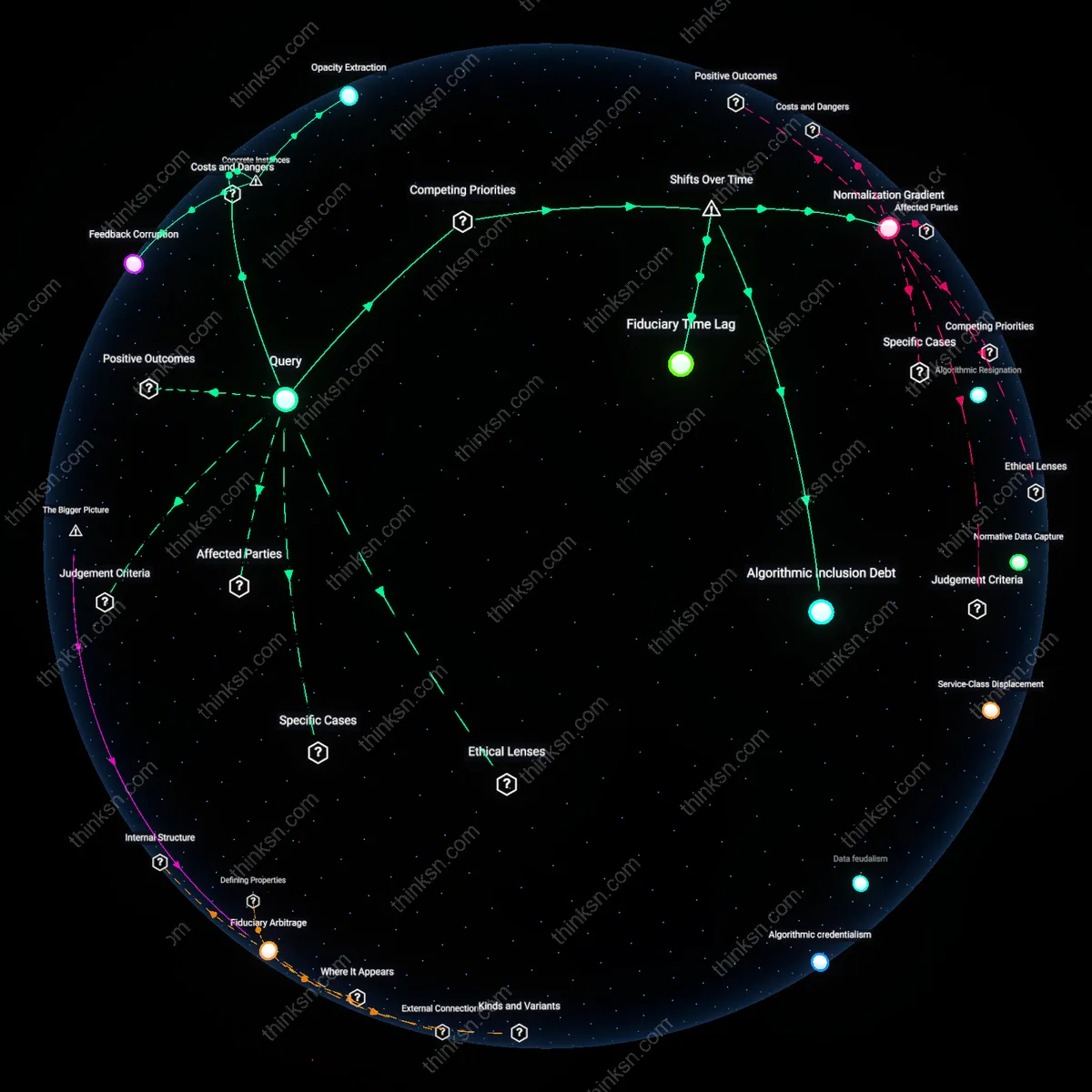

Regulatory Epistemic Inertia

Human analysts must intervene when AI-generated risk assessments contradict entrenched regulatory assumptions, because institutions like the U.S. Securities and Exchange Commission rely on historically codified models of market behavior that prioritize interpretability over predictive accuracy. This creates a systemic dependency: AI outputs, despite superior performance, are routed to human analysts who act as translators, reconciling algorithmic insights with legacy epistemological frameworks that demand causal legibility, particularly during stress-testing cycles or Basel III compliance reviews. What is overlooked is that the bottleneck is not AI explainability per se but institutional resistance to updating the epistemic foundations of regulation itself. The perceived legitimacy of a decision depends on alignment with established theoretical narratives, not on empirical correctness.

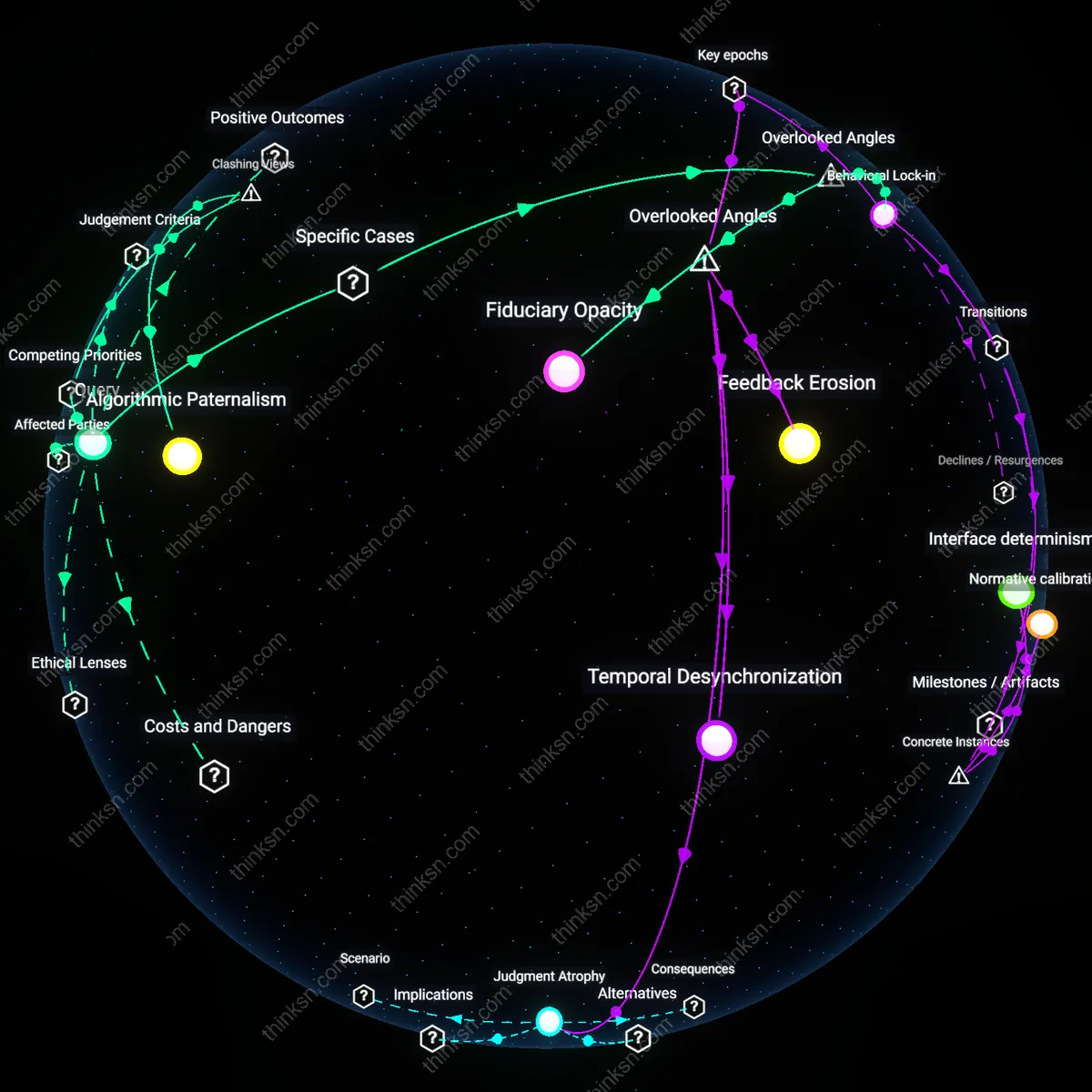

Moral Anchoring Points

Human analysts are required when AI identifies optimal but ethically destabilizing regulatory pathways, for example when machine learning models suggest relaxing capital requirements for high-risk fintechs based on novel behavioral data. Such recommendations create a legitimacy gap that only human judgment can resolve under scrutiny from bodies like the Financial Stability Board. These junctures function as moral anchoring points where regulators must visibly attribute responsibility to accountable agents, not algorithms, to sustain public trust in moments of systemic ambiguity, such as post-crisis oversight or crypto-asset classification. The overlooked dimension is that human intervention is not a corrective to AI error but a ritualized performance of accountability: it insulates institutions from reputational contagion by preserving the fiction of discretionary oversight, even when AI outperforms across all technical metrics.

Inter-Jurisdictional Ambiguity Buffers

Human analysts become indispensable when AI systems produce divergent compliance recommendations across overlapping jurisdictions. An AI model that optimizes capital reserves under both the EU’s CRD VI and U.S. Dodd-Frank rules, for instance, can generate conflicting outcomes because each regime implicitly weights risk factors according to different norms. In such cases, regulators from bodies like the Basel Committee observe that only human analysts can navigate the uncodified 'soft law' practices and political sensitivities that govern cross-border enforcement tolerance. They effectively act as ambiguity buffers who absorb diplomatic risk by making discretionary alignment calls. The non-obvious reality is that AI’s precision becomes a liability in fragmented regulatory ecosystems, where inconsistency is strategically tolerated and human judgment functions as a covert coordination mechanism to prevent jurisdictional conflict.

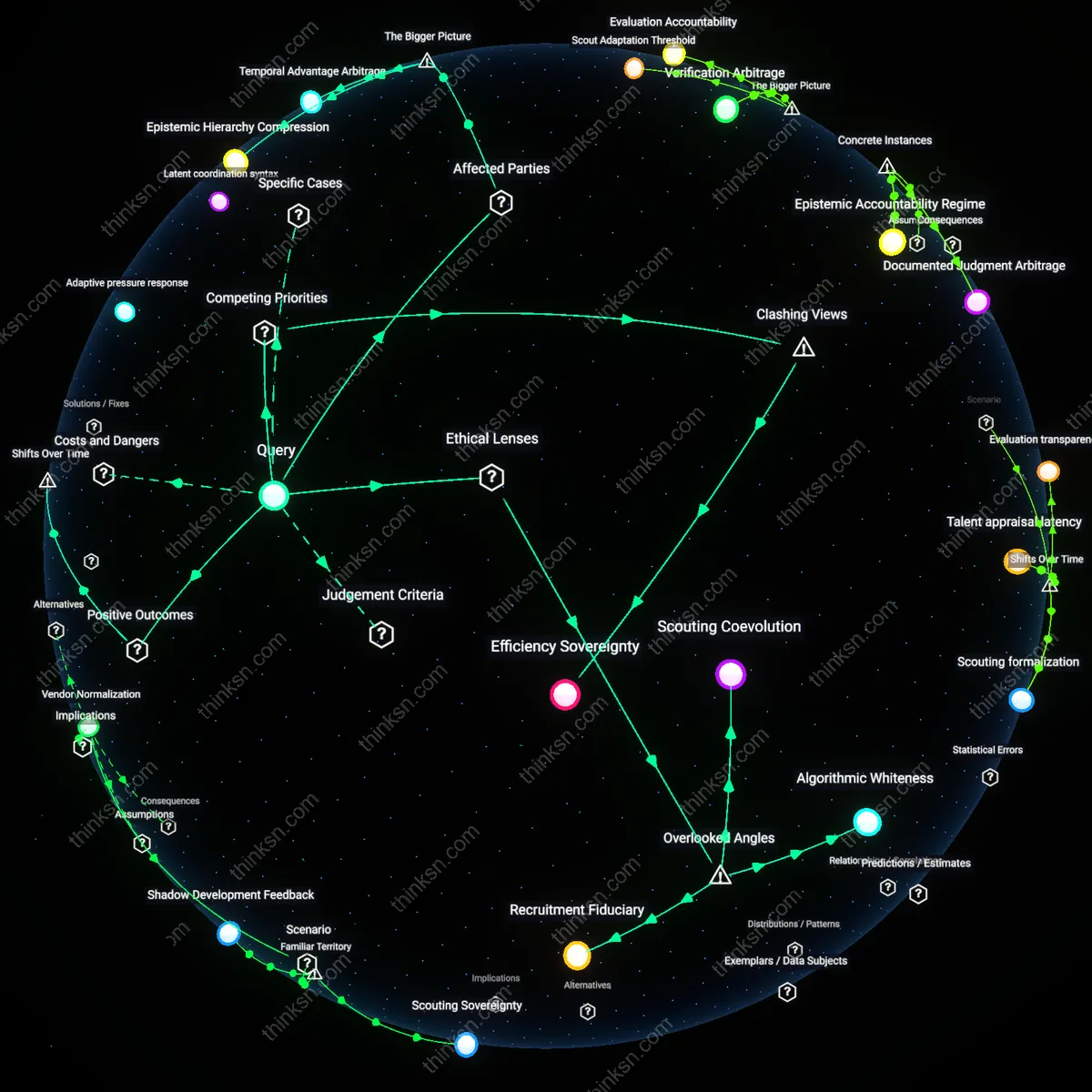

Regulatory Intent Interpretation

At the U.S. Securities and Exchange Commission’s Division of Corporation Finance, human analysts must review AI-generated risk assessments in IPO filings because algorithms cannot reliably discern the strategic omissions or rhetorical framing that signal material misrepresentation. In the 2021 review of Rivian Automotive’s Form S-1, staff flagged understated supply chain dependencies as intentional risk dilution rather than data gaps. The case shows that legal materiality often turns on contextual judgment that AI lacks.

Normative Trade-off Arbitration

During the European Central Bank’s 2020 stress-testing cycle, human supervisors overruled AI models that recommended uniform capital surcharges on banks with similar risk profiles, because the models failed to account for national economic stability externalities. Bundesbank officials, for example, argued for differentiated treatment of German Landesbanken on the basis of their regional fiscal roles, despite AI-identified weaknesses. The episode demonstrates that regulatory outcomes often require balancing systemic fairness against macroprudential priorities that lie beyond algorithmic optimization.

Epistemic Boundary Negotiation

In the UK Financial Conduct Authority’s 2022 probe into AI-driven credit scoring by Monzo Bank, human analysts intervened to redefine the boundary of acceptable model opacity when the system could not explain why rural applicants received lower scores despite strong repayment histories. Investigators rejected statistical accuracy as sufficient justification and insisted on socially intelligible causality. The probe exposed that regulatory legitimacy sometimes depends on contestable knowledge standards rather than performance metrics.

Regulatory Legitimacy Threshold

Human analysts are required at points of enforcement finality in financial regulation, such as the U.S. Securities and Exchange Commission's review of AI-generated suspicious activity reports, because automated systems lack the institutional authority to impose legal sanctions. The SEC’s Division of Enforcement must corroborate AI outputs with human-reviewed evidence before initiating actions, as seen in the 2021 scrutiny of Robinhood’s payment for order flow practices, where algorithmic red flags required qualitative validation. This juncture exists not because of AI’s technical limits, but because legal legitimacy in U.S. administrative law depends on traceable human judgment to satisfy due process and accountability standards. The non-obvious insight is that AI can outperform humans in detection accuracy, yet systemic trust in regulatory outcomes still requires human sign-off as a procedural anchor.

Interpretive Discretion Bottleneck

Human analysts must intervene when AI systems encounter novel financial instruments subject to ambiguous regulatory classification, such as the European Central Bank’s assessment of algorithmically traded green bonds under the EU’s Sustainable Finance Disclosure Regulation (SFDR). In Luxembourg, the EU’s largest fund domicile, compliance officers are required to manually interpret whether AI-categorized ESG exposures meet ‘do no significant harm’ criteria, because rule-based models cannot resolve jurisdictional gray zones during market innovation. This bottleneck emerges not from AI’s inability to process data, but from the mismatch between fast-evolving financial engineering and slow-moving legislative frameworks, which makes human discretion essential to prevent regulatory arbitrage. The underappreciated dynamic is that superior AI performance in pattern recognition becomes irrelevant when the governing rules themselves are indeterminate.

Systemic Narrative Construction

Human analysts are indispensable in crafting post-crisis narratives that shape macroprudential policy, such as the Bank of England’s 2023 stress test response to AI-identified vulnerabilities in commercial real estate lending. While machine learning models outperformed humans in flagging early default risks across 12 UK banks, the Prudential Regulation Authority relied on senior analysts to synthesize AI outputs into a coherent causal story for Parliament and financial institutions. This narrative construction is required because policymakers and the public reject black-box justifications for systemic interventions, even accurate ones, and that rejection can trigger political and behavioral feedback loops. The key insight is that AI’s analytical superiority fails to generate institutional buy-in without human-mediated sense-making, revealing that regulatory decisions depend on interpretability ecosystems, not just detection fidelity.