Balancing Free Speech and Safety in Online Controversy

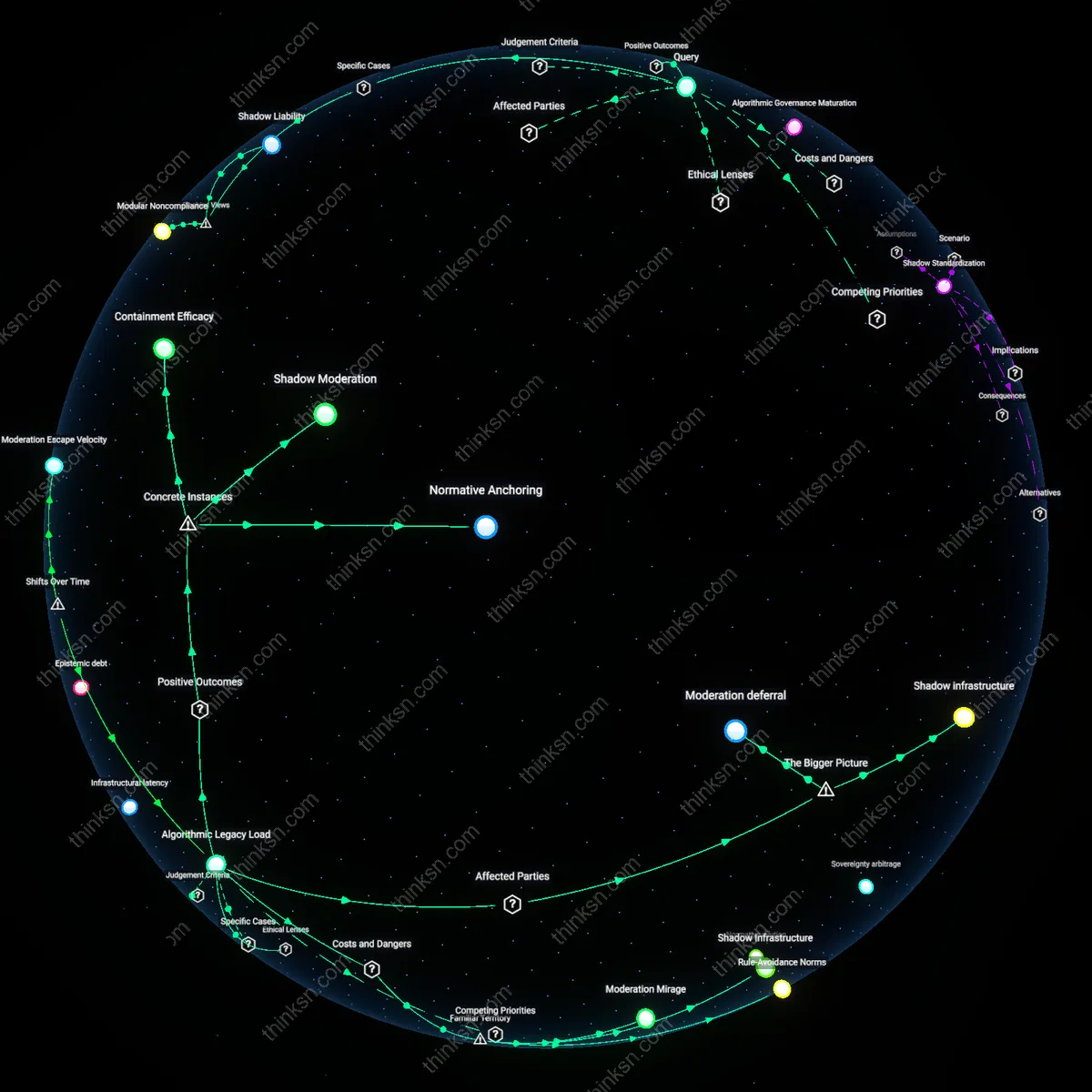

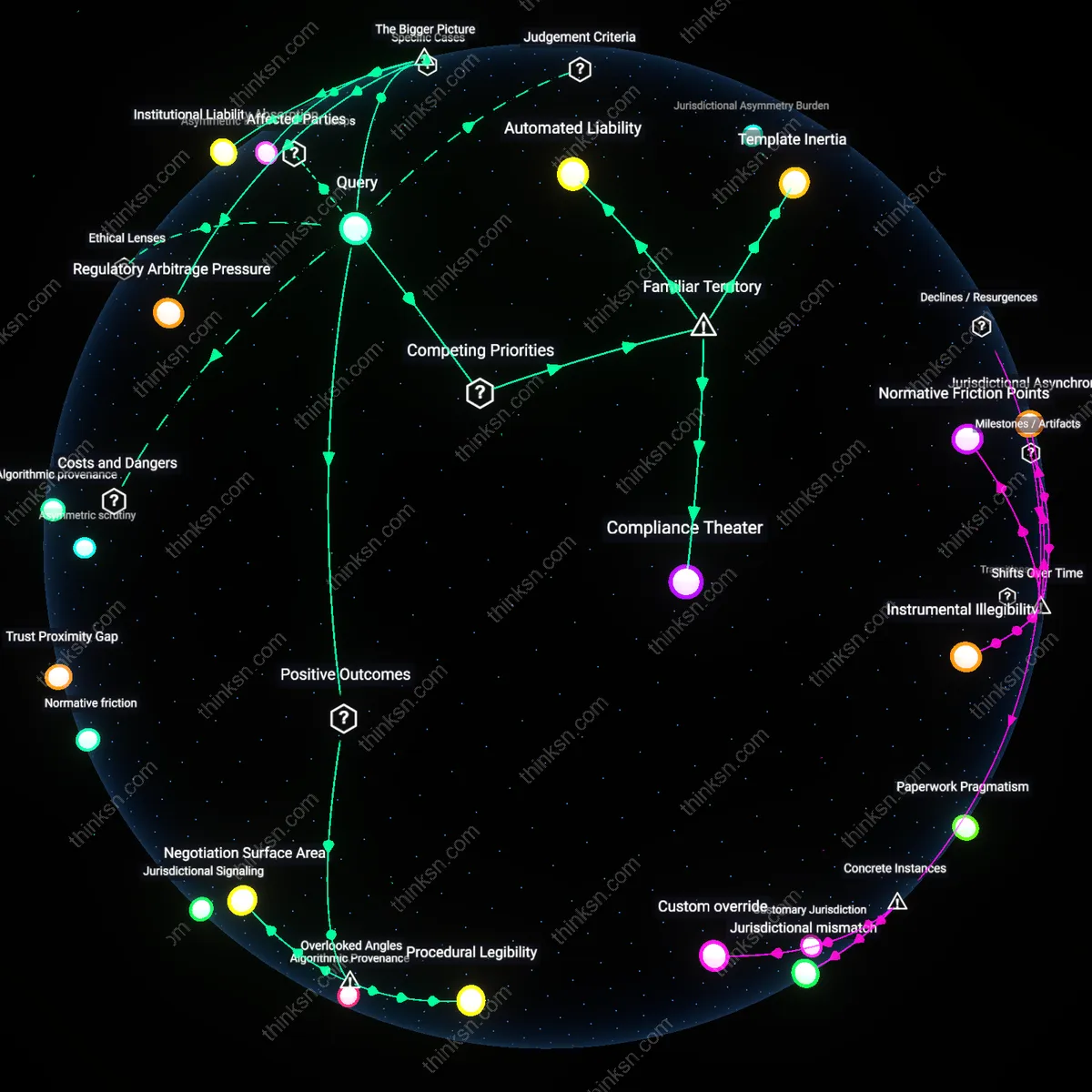

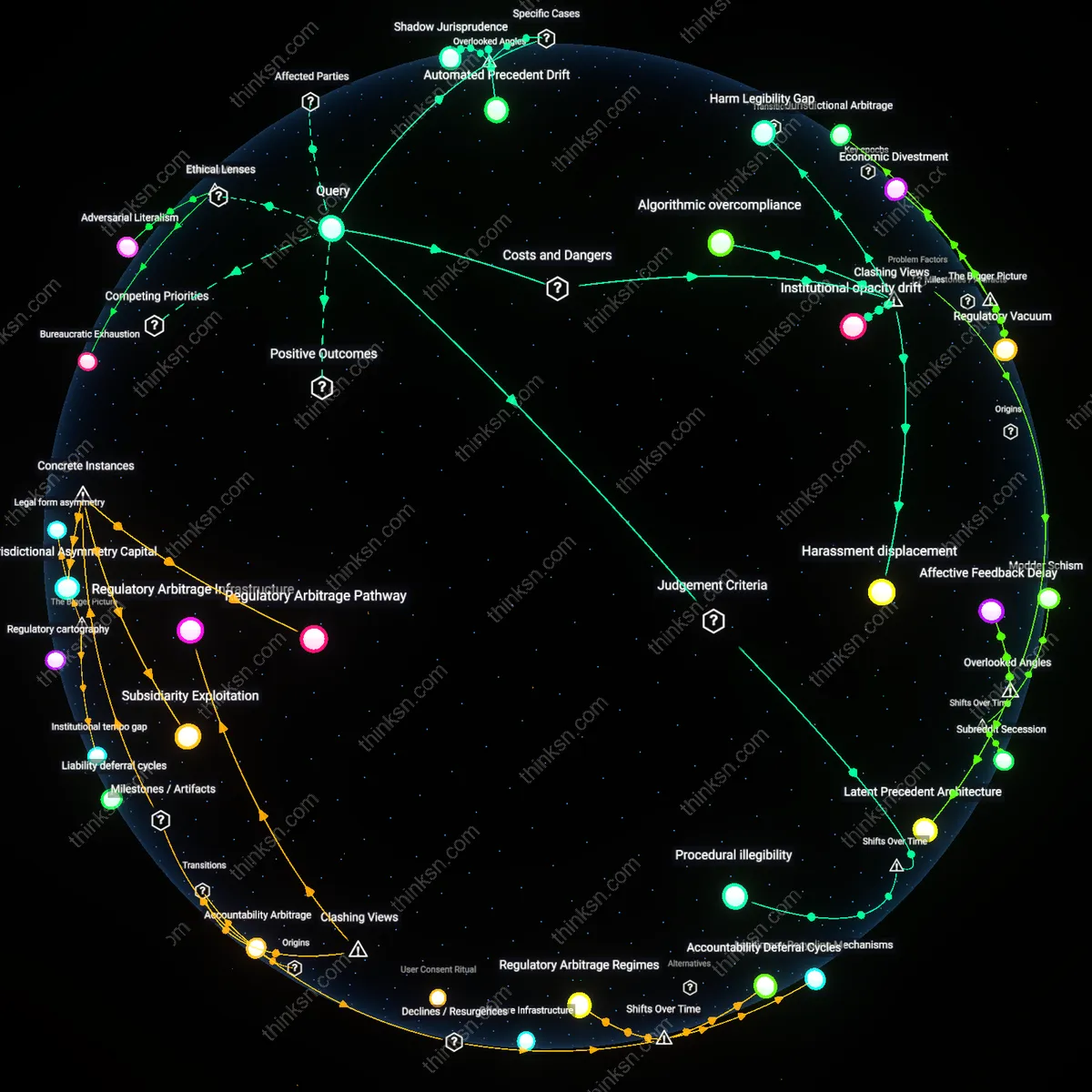

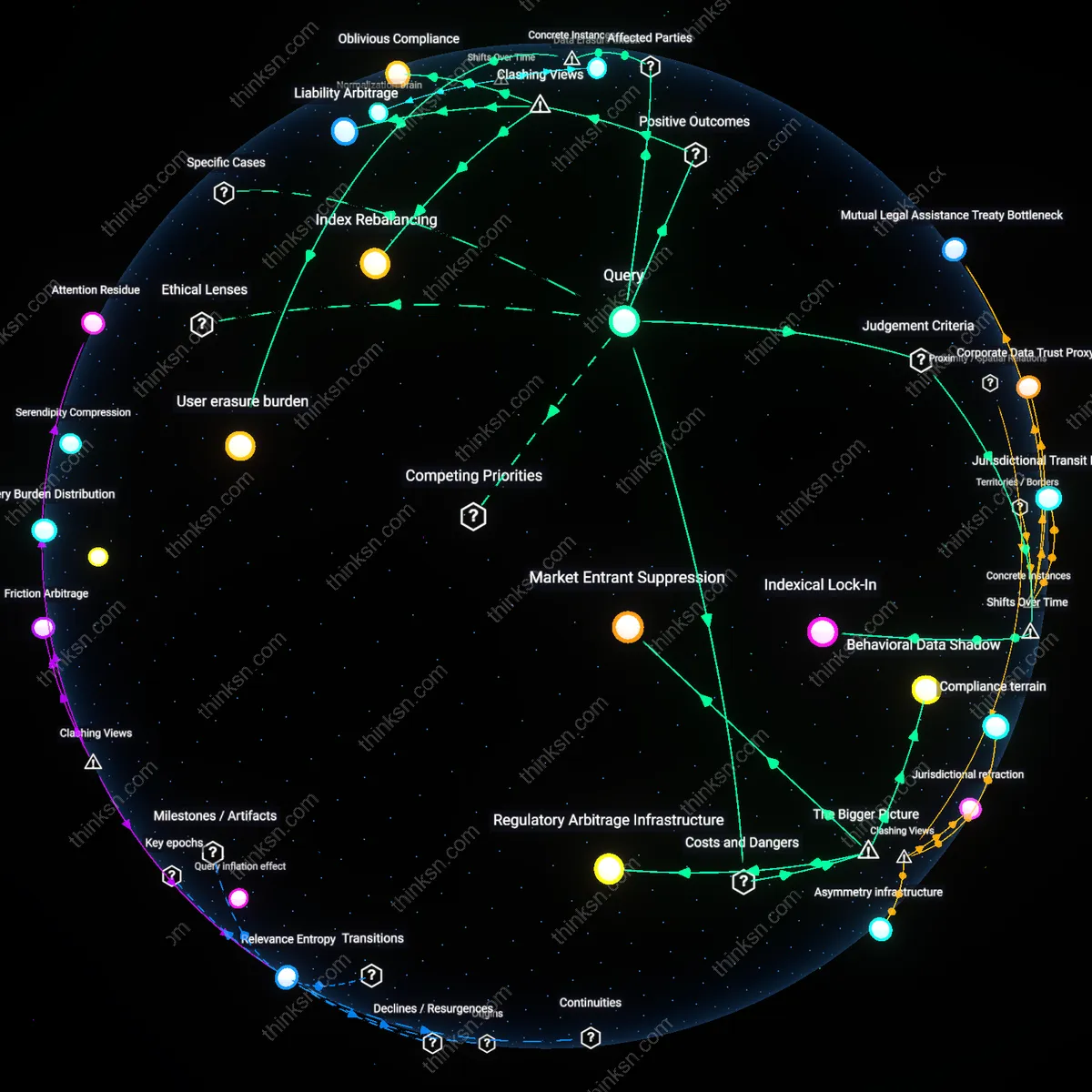

Analysis reveals 8 key thematic connections.

Key Findings

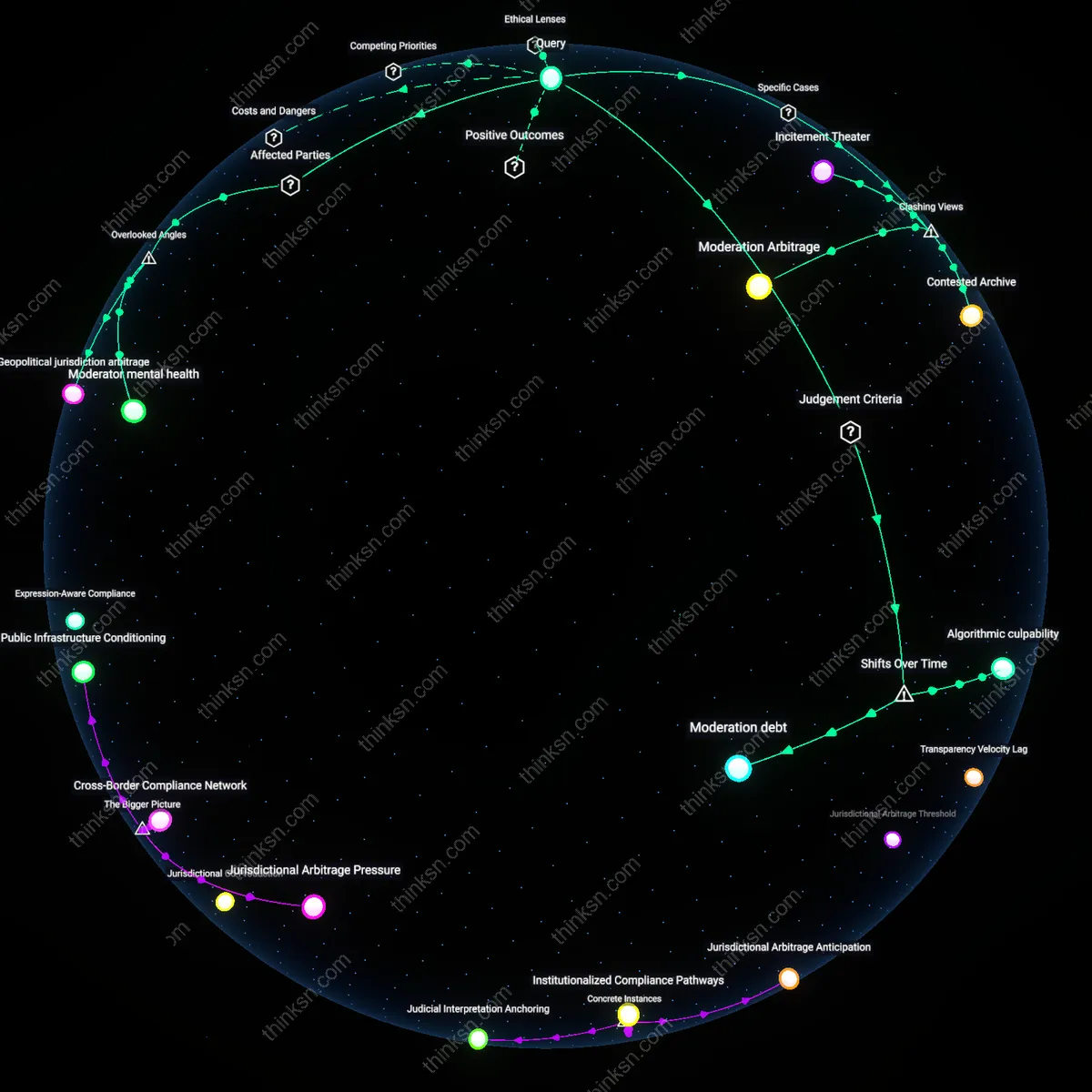

Moderator mental health

Online platforms should institutionalize mental health support for content moderators as a foundational component of equitable moderation policy, because these workers absorb cumulative psychological harm from repeated exposure to violent and politically charged material while making real-time decisions under ambiguous guidelines. This hidden labor cost shapes the consistency and fairness of content decisions—moderators experiencing burnout are more likely to over-enforce or under-enforce rules, disproportionately affecting marginalized political voices. The mental toll on this workforce is a systemic pressure point that destabilizes both safety and free expression, yet it remains unaccounted for in nearly all policy debates, which treat moderation as a technical or legal challenge rather than a human endurance task.

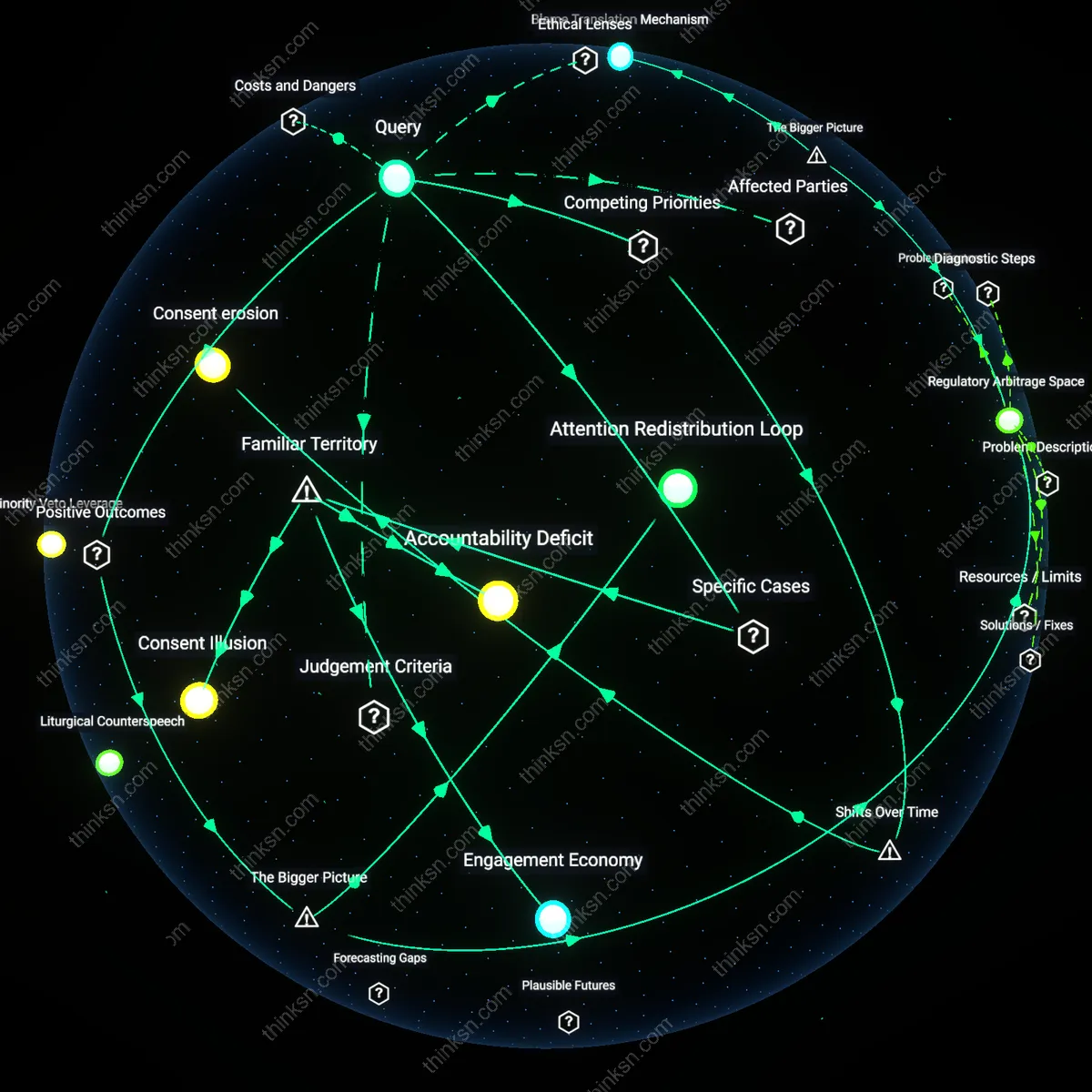

Algorithmic opacity debt

Regulatory frameworks must require platforms to disclose the provenance of training data for recommendation algorithms, because undisclosed ideological skew in training corpora systematically marks certain forms of political analysis as incitement while passing over others of similar rhetorical intensity. This hidden bias, embedded during development rather than deployment, creates a structural drift in enforcement that disadvantages anti-establishment or radical critiques, even when they remain non-violent. The lack of auditability of these foundational data layers constitutes an unacknowledged debt in platform accountability, one that distorts the balance between safety and expression long before any human moderator intervenes.

Geopolitical jurisdiction arbitrage

States with strong free press traditions should form reciprocal legal safe harbors for politically controversial analysis published in countries where moderation policies are weaponized to suppress dissent, because transnational publishers increasingly route speech through jurisdictions with stronger protections to evade local censorship disguised as violence prevention. This creates a de facto tiered global speech regime where the ability to publish controversial political analysis depends not on content but on the server location and diplomatic alliances of the platform. The strategic movement of digital speech infrastructure—often invisible in platform transparency reports—alters the real-world impact of moderation policies and shifts responsibility from corporate actors to the diplomatic architecture of data sovereignty.

Moderation debt

Platforms must default to preserving contested political speech unless incitement meets a jurisdictionally specific, legally adjudicated threshold. The erosion of intermediary liability protections since the early 2000s, particularly after the 2016 electoral interference, has shifted content moderation from user-driven, community-moderated norms to reactive, centralized enforcement that prioritizes regulatory optics over due process. The result is a systemic backlog of ambiguous takedown decisions that platforms delay or resolve inconsistently, disproportionately silencing dissident voices under the guise of safety. The non-obvious consequence is not censorship per se but the accumulation of unaddressed moderation rationales: platforms must justify past, present, and future takedowns without a stable legal or ethical framework. Moderation debt, in this sense, is the deferred cost of unresolved normative conflict in private governance.

Algorithmic culpability

The balance should be enforced through third-party audit rights over recommendation algorithms. The shift from chronological feeds to engagement-optimized ranking systems after 2012 fundamentally altered the distribution of incendiary content, not by creating new speech but by amplifying marginal voices with disproportionate velocity when they trigger outrage. The practical mechanism lies in audits mandated by the EU Digital Services Act (DSA) and in select U.S. state laws, under which technical assessors can trace whether a platform’s architecture converted controversial analysis into de facto incitement by boosting it. The underappreciated dynamic is that responsibility has migrated from the speaker to the amplifier, leaving traditional free expression frameworks blind to how distributional power redefines harm. This transition crystallizes algorithmic culpability: the condition in which procedural neutrality in publishing is negated by the operational bias of automated dissemination.

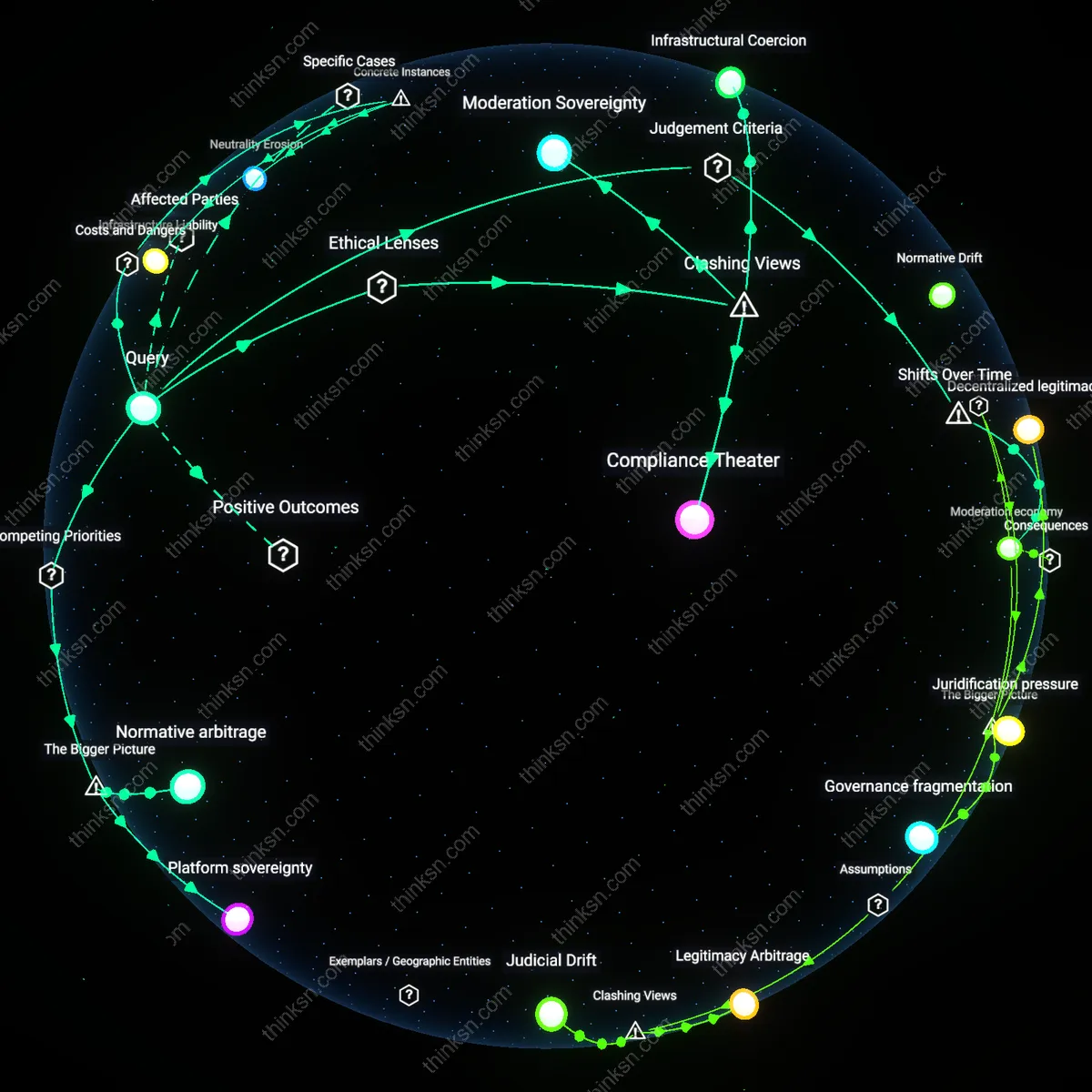

Moderation arbitrage

Platforms should prioritize jurisdictional inconsistency in content rules to expose how political speech is selectively suppressed under anti-incitement pretexts. In Myanmar, Facebook’s delayed response to military-backed hate speech, at a time when it enforced strict norms in European markets, revealed not a failure of policy but a calculated use of regulatory fragmentation, in which enforcement gaps become operational features. Uneven platform governance is therefore not a flaw but an exploited condition. This challenges the assumption that uniform moderation would better protect free expression and underscores how corporations leverage legal pluralism to avoid accountability.

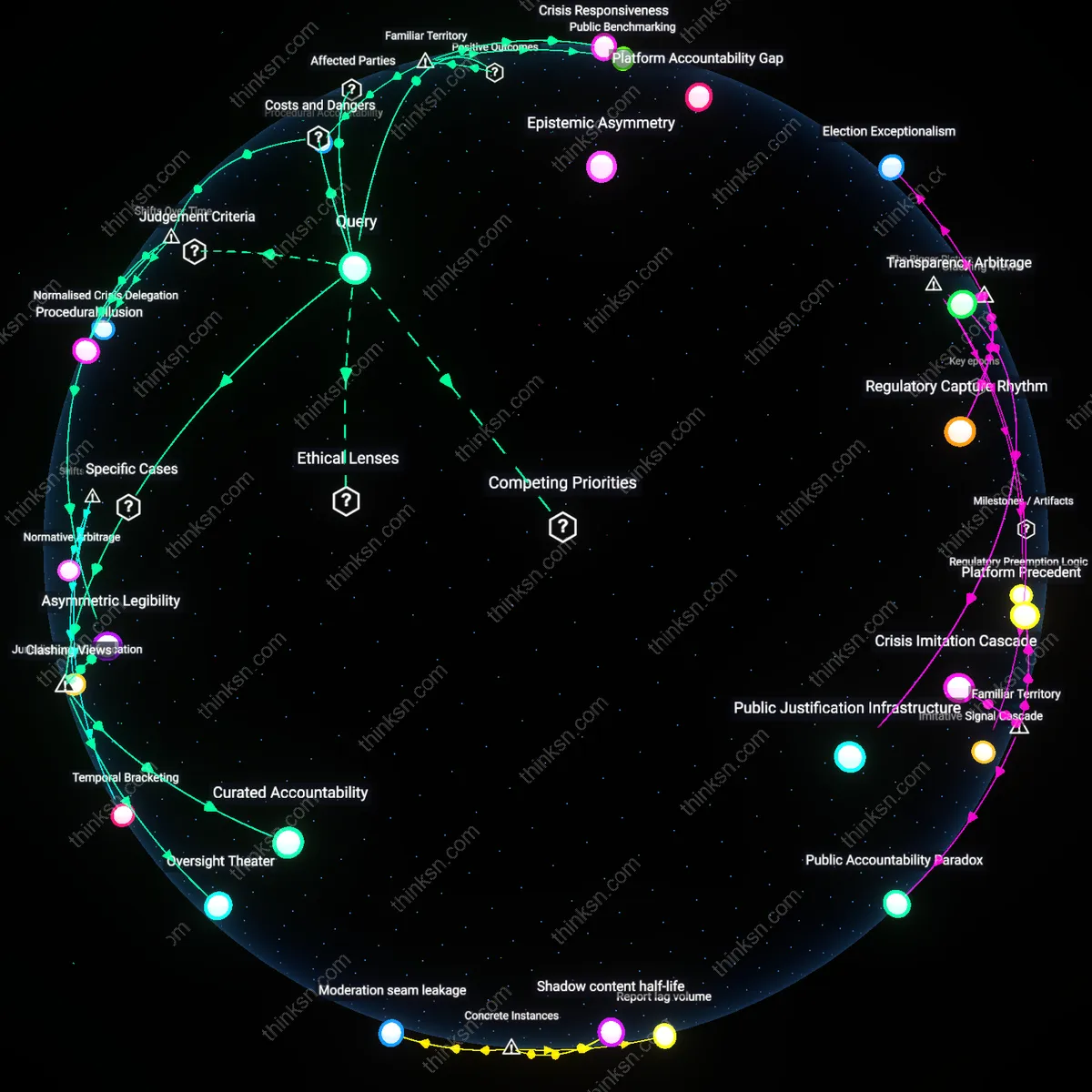

Incitement theater

The balance is already skewed toward symbolic compliance, in which platforms remove visibly extreme content while preserving systemic amplification of coded political violence. Parler’s post-January 6 moderation overhaul in the U.S. illustrates the pattern: despite purging explicit calls for violence, the platform retained algorithms that boosted conspiratorial framing from figures like Dan Bongino. The deplatforming spectacle thus risks replacing actual safety with performative hygiene. This challenges the notion that removal-based moderation meaningfully curbs incitement, exposing it instead as a public relations mechanism that legitimizes platforms as neutral arbiters while preserving ideological infrastructure.

Contested archive

The right to publish controversial political analysis is most at risk not from over-removal but from selective legitimization within platform-curated knowledge ecosystems. YouTube’s differential treatment of Palestinian and Israeli citizen journalism during the 2021 Gaza conflict is evidence of this: violent footage recorded by Palestinians under identical conditions was flagged or age-restricted while Israeli content remained accessible, uncovering a moderation logic that treats non-Western political testimony as inherently suspect. This inverts the free-speech debate by showing that preservation, not deletion, is the political act, and that visibility itself is stratified by geopolitical epistemology.