Should You Trust AI for Legal Contracts? Error Rates vs. Convenience

Analysis reveals 9 key thematic connections.

Key Findings

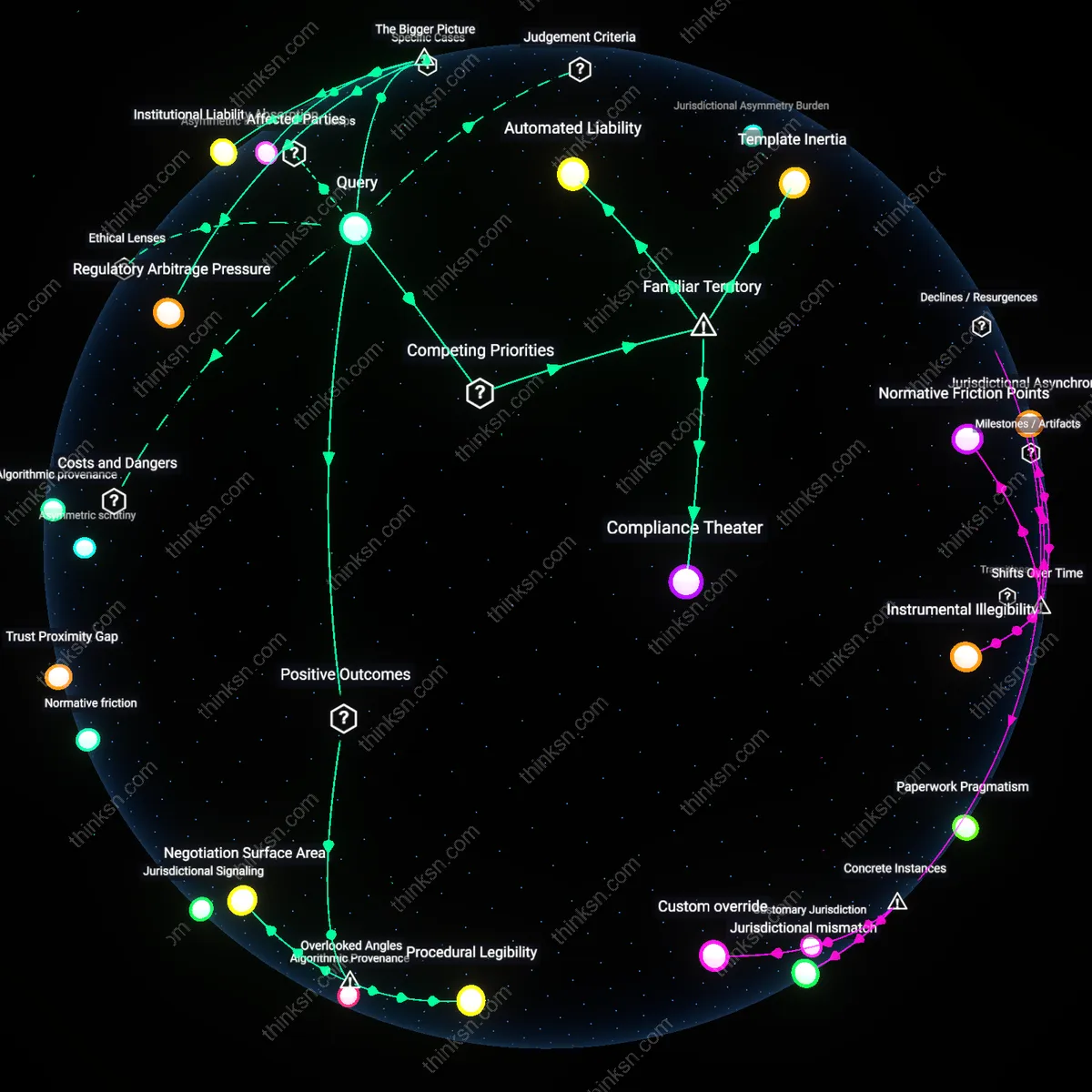

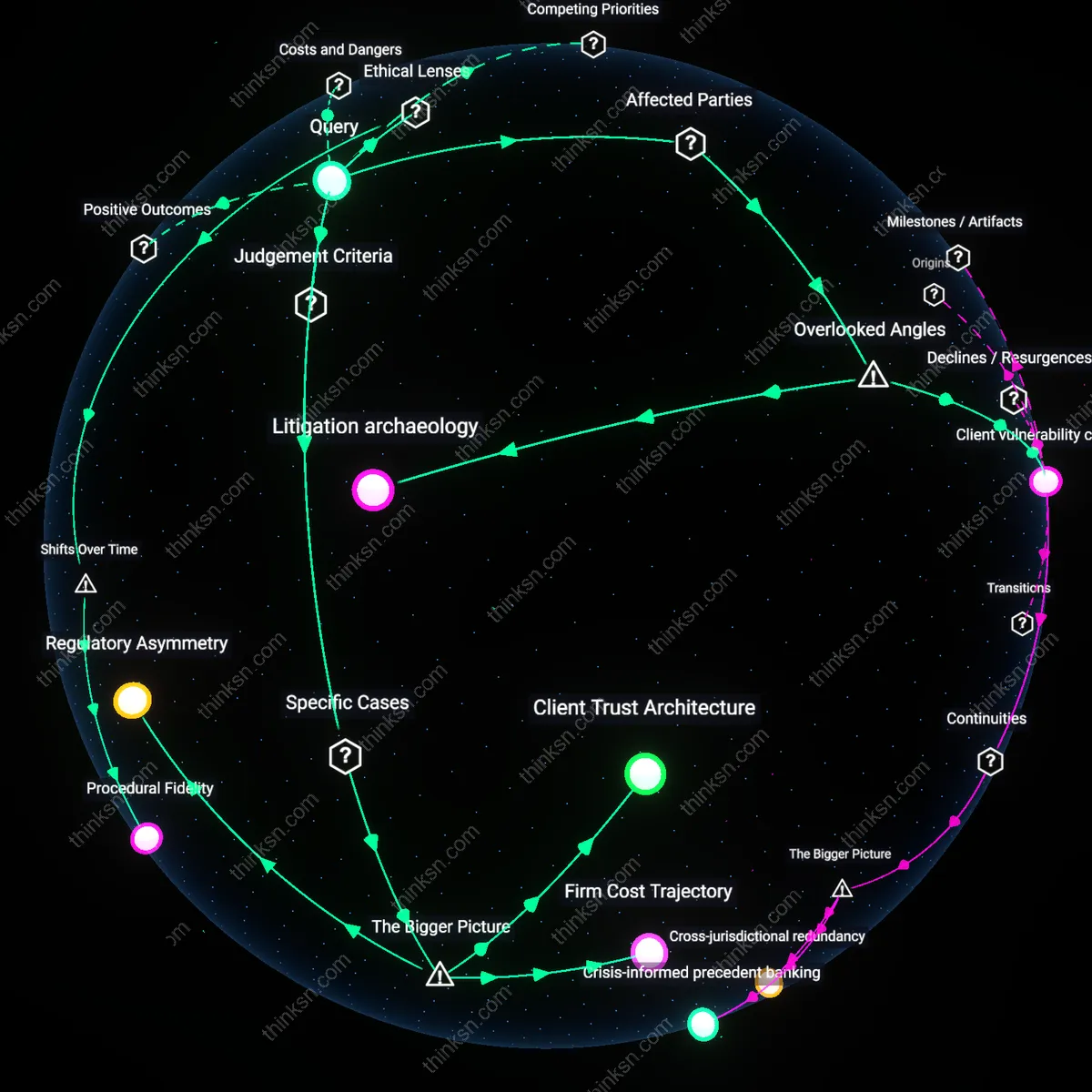

Procedural Legibility

One should evaluate AI-generated legal contracts by measuring how far they improve procedural transparency for non-lawyer stakeholders during contract execution. When small business owners can trace how specific clauses were generated, for instance through version logs tied to jurisdictional updates in real time, they gain actionable insight into compliance pathways and rely less on reactive legal intervention. This shifts trust from outcome accuracy alone to the visibility of rule application, a dimension usually ignored in favor of error-counting benchmarks. The overlooked dynamic is that trust in legal tools often stems not from perceived perfection but from the ability to audit decisions mid-process, which AI systems with embedded regulatory metadata are well placed to enable.
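To make the idea of a clause-level audit trail concrete, here is a minimal Python sketch. It assumes each generated clause is stored with the rule it was derived from and the effective date of the rule version used; the names `ClauseRecord`, `ProvenanceLog`, and `stale_clauses`, and the placeholder rule identifiers, are hypothetical and do not describe any existing product's schema.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class ClauseRecord:
    """One generated clause plus the metadata needed to audit it (hypothetical schema)."""
    clause_id: str
    text: str
    jurisdiction: str      # jurisdiction the clause is meant to satisfy, e.g. "TX"
    source_rule: str       # identifier of the rule the clause was derived from
    source_version: date   # effective date of the rule version used at generation time


@dataclass
class ProvenanceLog:
    """Log of generated clauses and the rule versions behind them."""
    records: list[ClauseRecord] = field(default_factory=list)

    def add(self, record: ClauseRecord) -> None:
        self.records.append(record)

    def stale_clauses(self, current_versions: dict[str, date]) -> list[str]:
        """Return IDs of clauses whose source rule has a newer version than the one used."""
        stale = []
        for r in self.records:
            latest = current_versions.get(r.source_rule)
            if latest is not None and latest > r.source_version:
                stale.append(r.clause_id)
        return stale


# Usage with placeholder rule identifiers and dates.
log = ProvenanceLog()
log.add(ClauseRecord("c1", "...", "TX", "rule-A", date(2022, 1, 1)))
log.add(ClauseRecord("c2", "...", "TX", "rule-B", date(2023, 6, 1)))
print(log.stale_clauses({"rule-A": date(2024, 1, 1), "rule-B": date(2023, 6, 1)}))  # ['c1']
```

Flagging stale clauses is one way such a log could support mid-process auditing: the owner sees which terms predate a rule change without needing to read the underlying statute.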

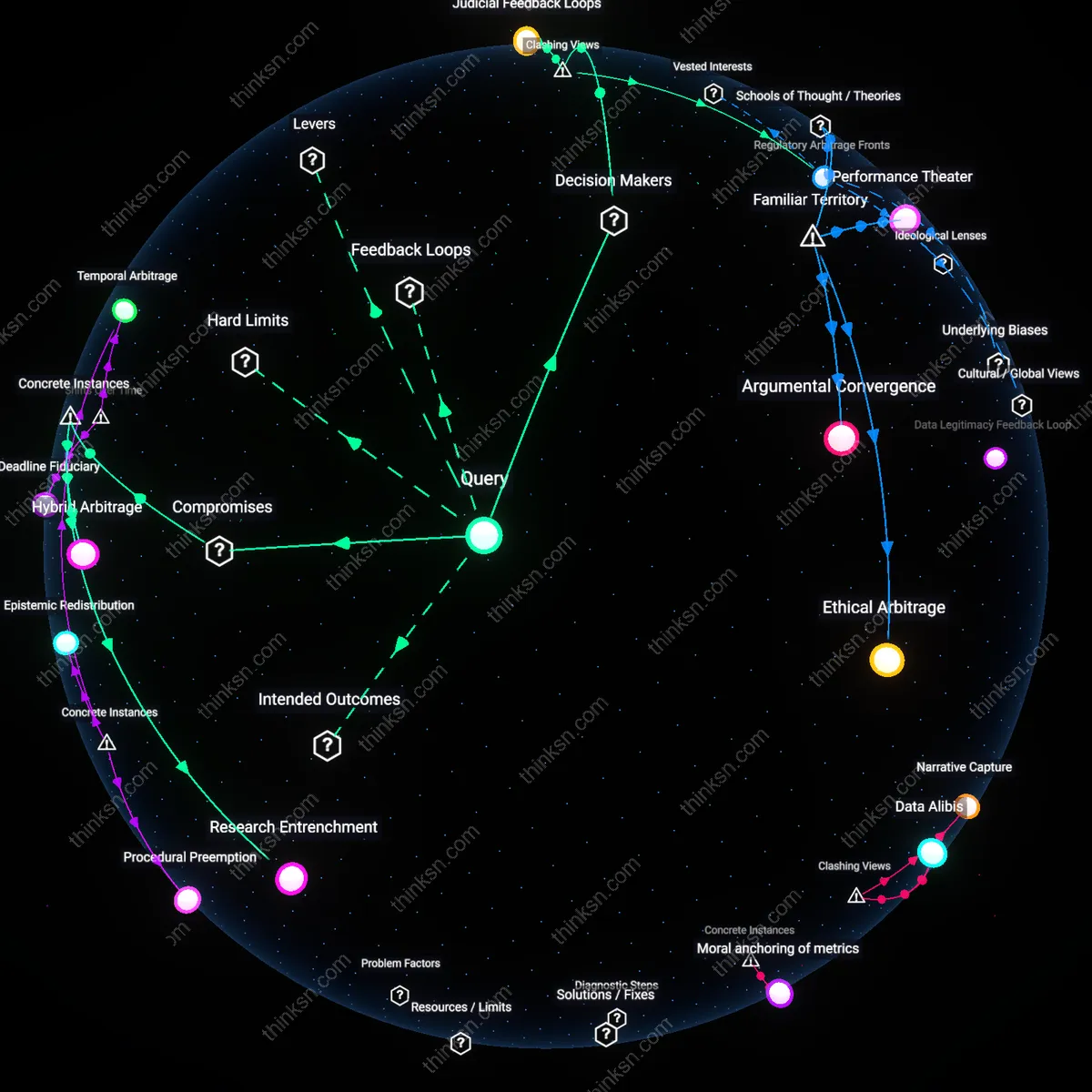

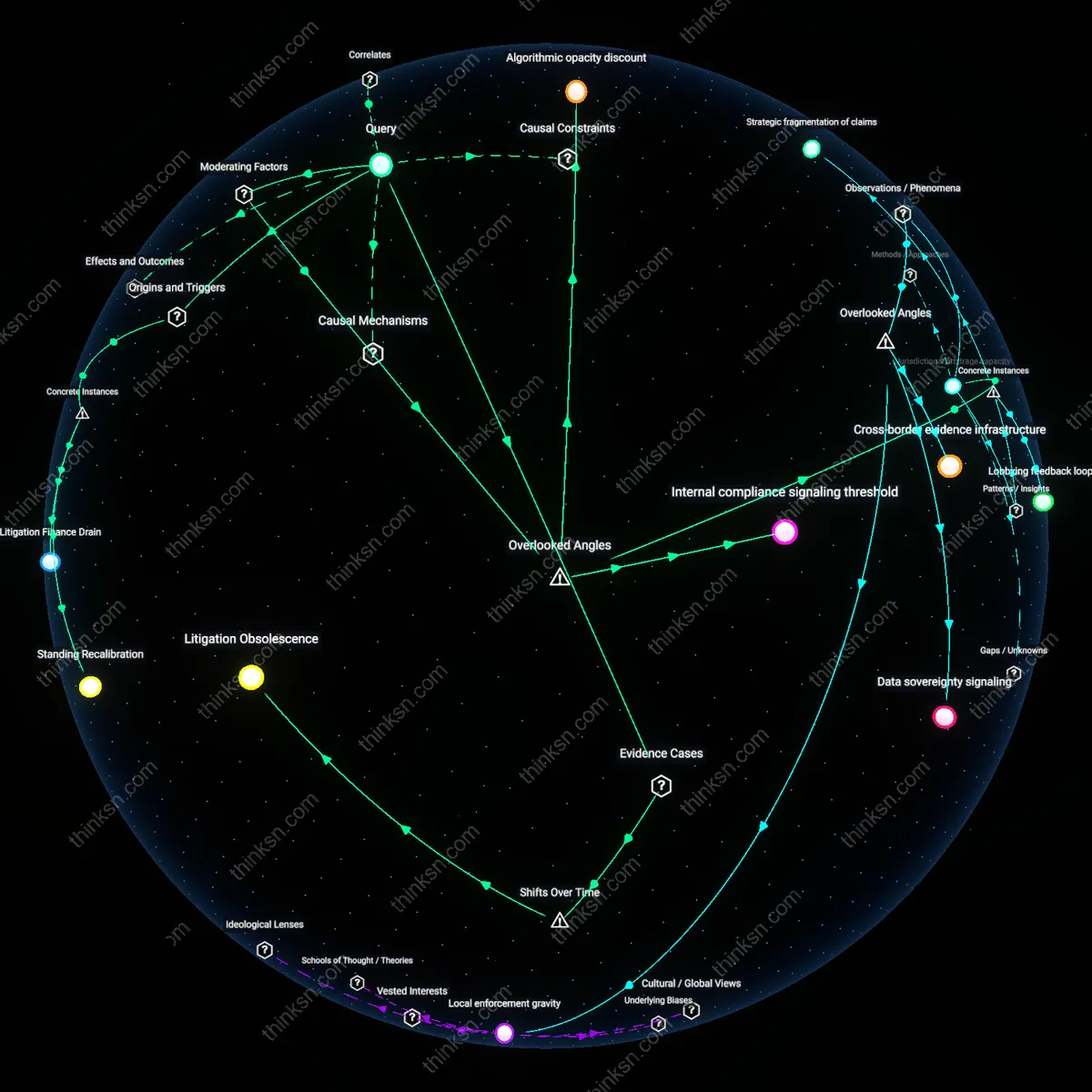

Regulatory Arbitrage Cost

One should assess trustworthiness by estimating how AI contract tools expose small businesses to hidden liabilities in cross-jurisdictional operations, particularly when default templates reflect favorable but inapplicable statutes. For example, a Texas-based LLC using an operating agreement generated by a tool trained predominantly on California norms may inadvertently adopt fiduciary standards that heighten its litigation exposure. The critical but unexamined factor is that AI systems do not neutralize jurisdictional risk; they redistribute it through subtle lexical alignment with high-prevalence legal cultures, making trust a function of misalignment cost rather than internal consistency. This reframes evaluation from syntactic correctness to geographic risk profiling.
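Geographic risk profiling can be sketched as a simple comparison between where each clause's norms come from and where the business actually operates. This assumes the drafting tool records a source jurisdiction per clause and that the user supplies an exposure estimate; `SourcedClause`, `misalignment_profile`, and the dollar figures are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass
class SourcedClause:
    """A clause tagged with the jurisdiction whose norms its wording reflects (hypothetical)."""
    clause_id: str
    source_jurisdiction: str    # assumed to be recorded by the drafting tool
    estimated_exposure: float   # user-supplied estimate of cost if the clause is misapplied


def misalignment_profile(
    clauses: list[SourcedClause], operating_jurisdiction: str
) -> tuple[list[str], float]:
    """Flag clauses drawn from other jurisdictions and total their estimated exposure."""
    flagged = [c for c in clauses if c.source_jurisdiction != operating_jurisdiction]
    return [c.clause_id for c in flagged], sum(c.estimated_exposure for c in flagged)


# Placeholder figures mirroring the Texas/California example; not real cost data.
clauses = [
    SourcedClause("fiduciary_duties", "CA", 50_000.0),
    SourcedClause("dissolution", "TX", 10_000.0),
]
print(misalignment_profile(clauses, "TX"))  # (['fiduciary_duties'], 50000.0)
```

The total is only as good as the exposure estimates fed into it; the point of the sketch is that the metric counts jurisdictional mismatch, not internal consistency.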

Negotiation Surface Area

One should judge trustworthiness by how far AI-generated contracts widen the scope for bilateral adjustment between parties, especially when clauses are tagged with amendable rationale fields that explain the trade-offs behind standard terms. Platforms like Co-Counsel for Law Clerk embed editable presets with references to ABA model norms, enabling vendor-client pairs to co-modify clauses with shared understanding and turning contracts into collaborative interfaces rather than static declarations. The underappreciated shift is from viewing contracts as final products to treating them as negotiation scaffolds, where trust is built through mutual agency and not just legal enforceability. This changes the core metric from error rate to potential for interactive refinement.
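A clause with an amendable rationale field and two-party sign-off might be modeled as below. This is a minimal sketch; `NegotiableClause` and its methods are hypothetical and are not taken from Co-Counsel for Law Clerk or any other platform.

```python
from dataclasses import dataclass, field


@dataclass
class NegotiableClause:
    """A clause carrying an editable rationale field (hypothetical structure)."""
    clause_id: str
    text: str
    rationale: str  # why the current term reads as it does
    approvals: set[str] = field(default_factory=set)
    history: list[tuple[str, str, str]] = field(default_factory=list)  # (party, old, new)

    def propose(self, party: str, new_text: str, new_rationale: str) -> None:
        """Record an amendment; earlier approvals are discarded because the text changed."""
        self.history.append((party, self.text, new_text))
        self.text = new_text
        self.rationale = new_rationale
        self.approvals = {party}  # the proposer implicitly approves their own change

    def approve(self, party: str) -> None:
        self.approvals.add(party)

    def is_agreed(self, parties: set[str]) -> bool:
        """True once every listed party has approved the current wording."""
        return parties <= self.approvals


clause = NegotiableClause("payment_terms", "Net 30", "Template default")
clause.propose("vendor", "Net 45", "Vendor requests a longer collection window")
print(clause.is_agreed({"vendor", "client"}))  # False
clause.approve("client")
print(clause.is_agreed({"vendor", "client"}))  # True
```

Resetting approvals whenever the text changes is a deliberate choice: agreement attaches to a specific wording, so neither party can be held to a term it has not seen.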

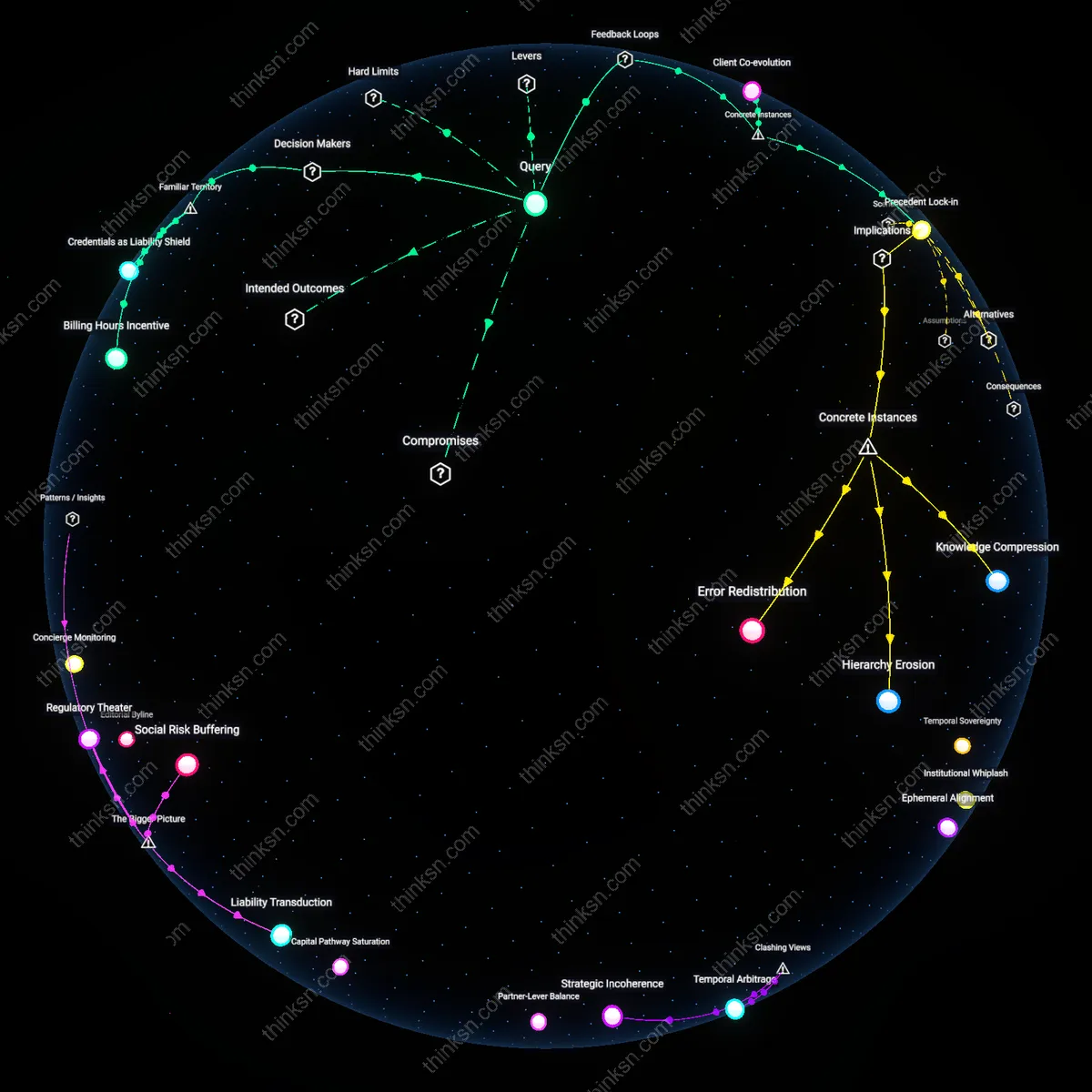

Automated Liability

One must delegate legal validation to licensed attorneys because AI-generated contracts, while fast and cheap, carry no professional assurance when contested in court: no licensed attorney has vouched for their terms. This creates a dependency in which small businesses absorb legal risk to preserve affordability, revealing that automation's cost savings are effectively exchanged for vulnerability in dispute resolution. The non-obvious reality is that many users assume AI tools are legally vetted by design, when in fact no jurisdiction currently recognizes an AI system as a licensed legal advisor or an authoritative interpreter of the law, which makes attorney review a necessary circuit-breaker.

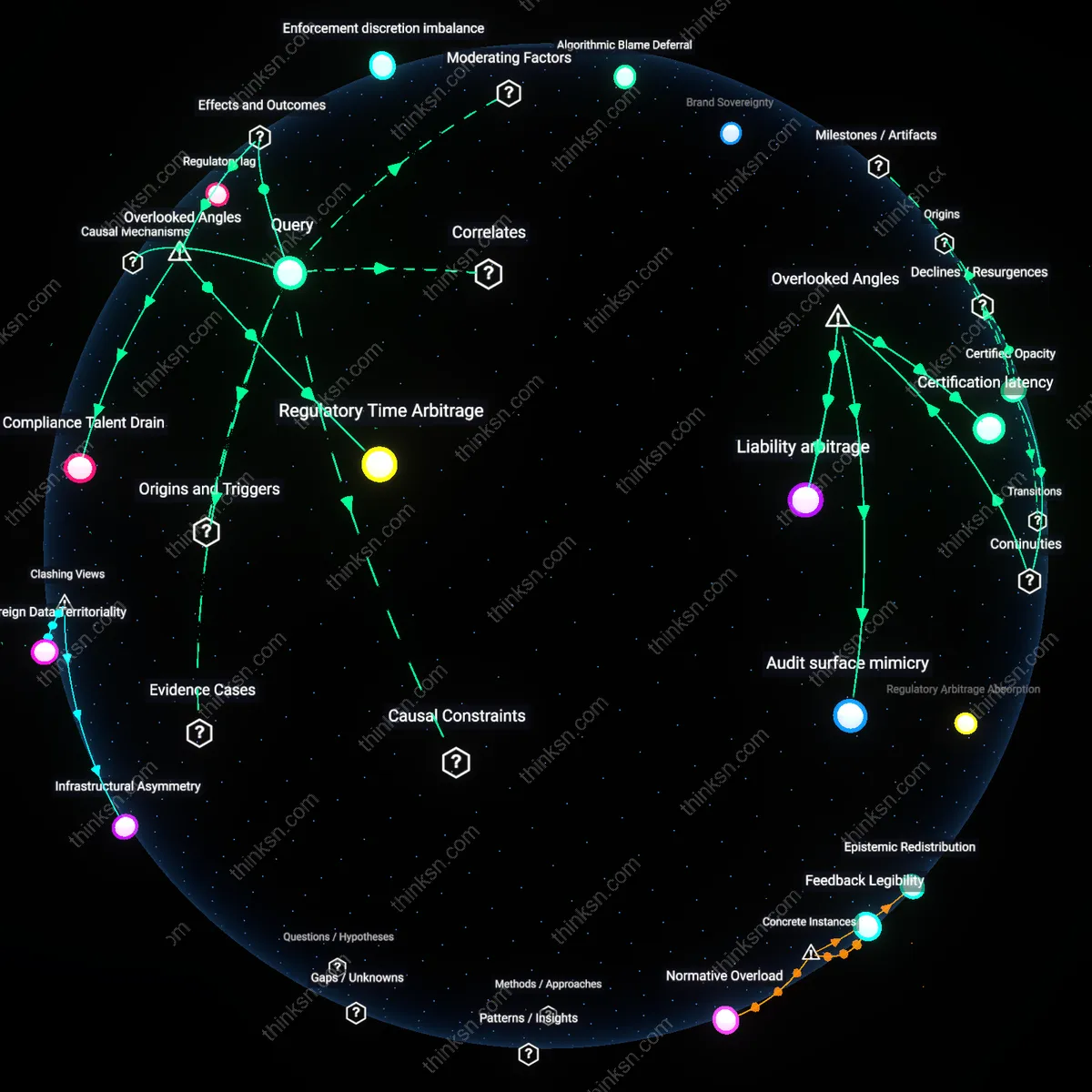

Template Inertia

One should audit contract templates against state-specific statutes because AI systems are predominantly trained on federal or high-frequency legal language and systematically underrepresent the local amendments that govern small business operations. This produces documents that appear compliant but fail in enforcement because of jurisdictional misalignment, a gap users rarely detect because polished formatting mimics professional drafting. The underappreciated mechanism is that AI replicates the most common patterns rather than the most accurate ones, privileging familiarity over precision in legal writing.
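A state-level template audit can be approximated as a set difference between the provisions a jurisdiction requires and those the template contains. The requirement table, its state codes, and the provision tags below are hypothetical placeholders, not a summary of any state's statutes.

```python
# Hypothetical requirement table: state code -> provision tags a template must contain.
# The entries are placeholders, not statements of any state's actual law.
REQUIRED_PROVISIONS: dict[str, set[str]] = {
    "STATE_A": {"governing_law", "registered_agent"},
    "STATE_B": {"governing_law", "member_voting"},
}


def audit_template(template_provisions: set[str], state: str) -> set[str]:
    """Return the provision tags required in `state` that the template lacks."""
    required = REQUIRED_PROVISIONS.get(state)
    if required is None:
        # No table entry means no automated check is possible; escalate to a human.
        raise KeyError(f"no requirement list for {state!r}; route to manual review")
    return required - template_provisions


print(audit_template({"governing_law", "member_voting"}, "STATE_A"))  # {'registered_agent'}
```

Because the check only tests for the presence of tagged provisions, it can catch omissions but not wording that is present yet wrong, which is the failure mode polished formatting tends to hide.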

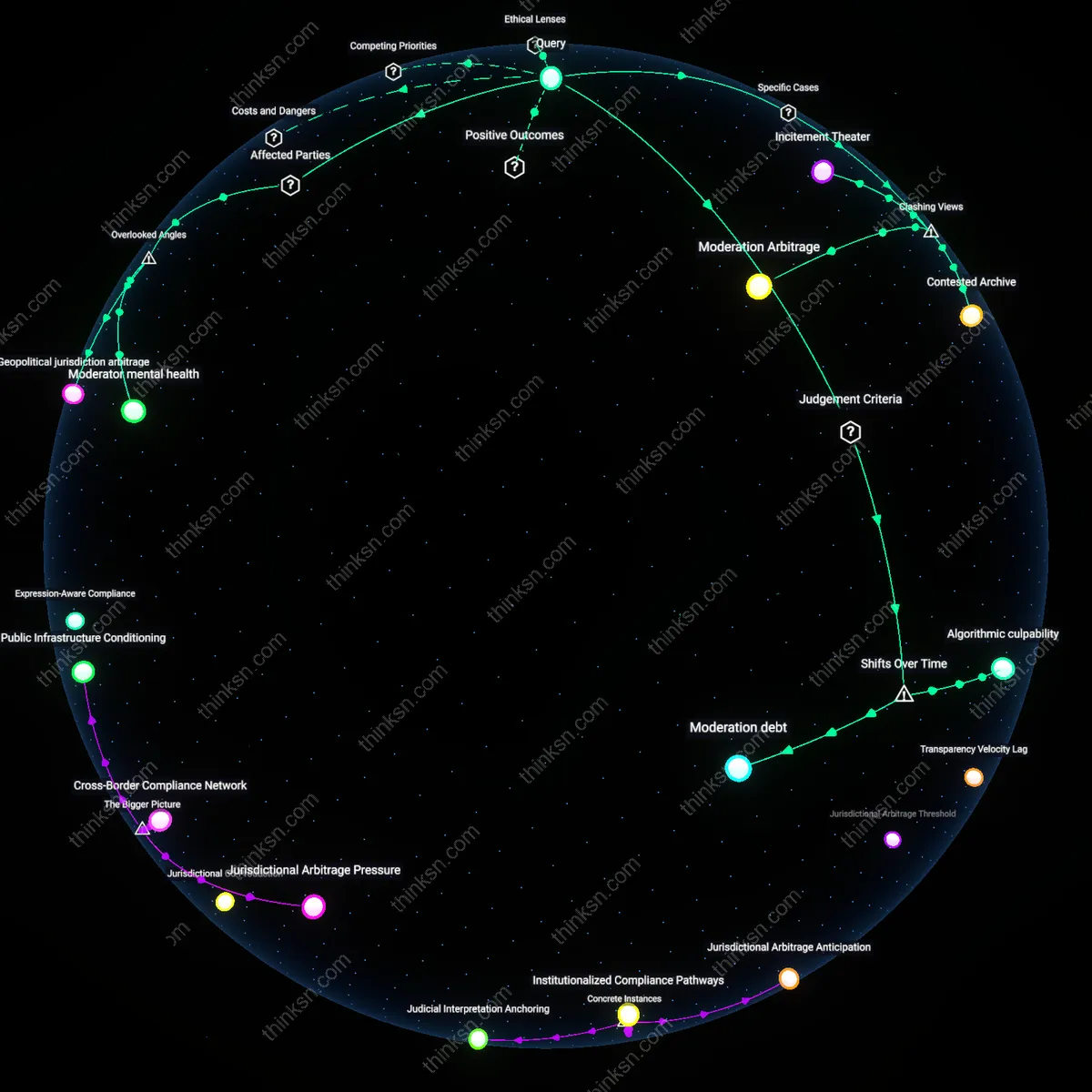

Compliance Theater

One must assume that AI-generated contracts perform compliance to reassure users rather than to ensure enforceability, because the mere presence of legal language satisfies psychological thresholds for safety without triggering actual legal scrutiny. Small business owners, short on time and legal resources, accept these documents as sufficient because of their surface legitimacy, not because of substantive review. This trade-off between perceived diligence and actual risk protection reveals an ecosystem in which legal security is simulated because true reliability is too costly to distribute equitably.

Regulatory Arbitrage Pressure

One should evaluate AI-generated legal contracts by assessing how jurisdictions like Wyoming, which offer fast-track business incorporation with minimal oversight, create incentives for legal tech startups to prioritize speed over accuracy in contract generation. This dynamic attracts small businesses seeking low-cost compliance, but the permissive regulatory environment enables AI tools to operate without mandatory validation against case law or statutory updates, shifting liability onto users. The underappreciated consequence is that legal reliability erodes not due to technological limits alone, but because state-level economic competition actively disincentivizes rigorous third-party auditing of AI outputs.

Institutional Liability Absorption

One should evaluate AI-generated legal contracts by examining how entities like LegalZoom, when acting as both AI developer and registered legal service provider, can absorb liability through indemnification clauses that insulate small businesses from the direct consequences of contract errors. This mechanism depends on state bar regulators permitting limited legal accountability for non-attorney-led platforms, enabling a hybrid model in which AI outputs are backstopped institutionally rather than technically. The non-obvious implication is that trustworthiness is socially constructed through corporate liability buffering, not derived from algorithmic precision.

Asymmetric Legal Feedback Loops

One should evaluate AI-generated legal contracts by analyzing how small businesses in Texas’s energy sector use AI-revised operating agreements that, when disputed, rarely reach formal adjudication because of mandatory arbitration clauses, thereby starving the AI training process of corrective legal feedback. Such disputes are resolved privately through industry-specific ADR forums that do not produce public rulings, so errors cannot be corrected at the model level. The systemic issue is that legal reliability cannot improve empirically when the primary source of validation, judicial precedent, is structurally excluded from the feedback infrastructure.