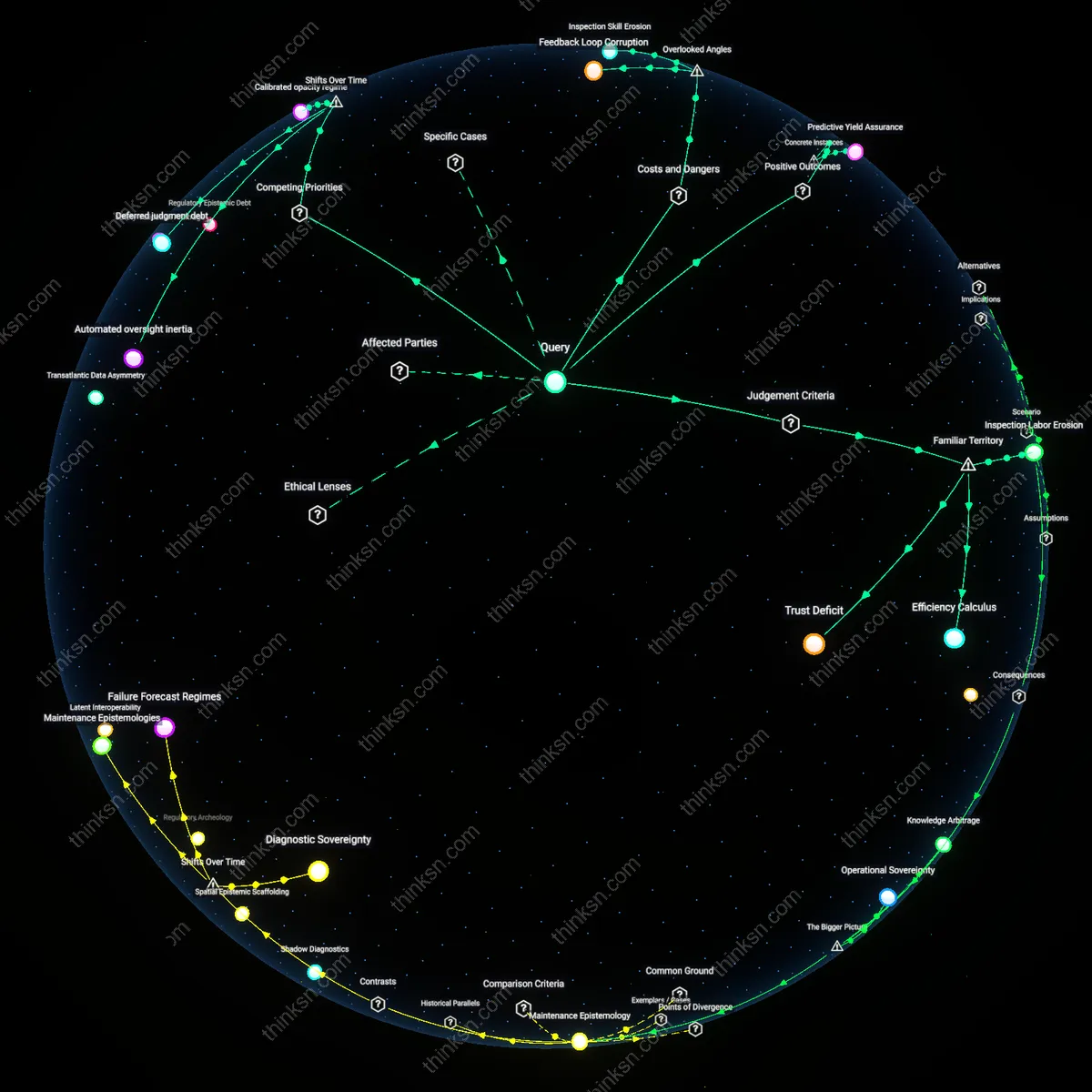

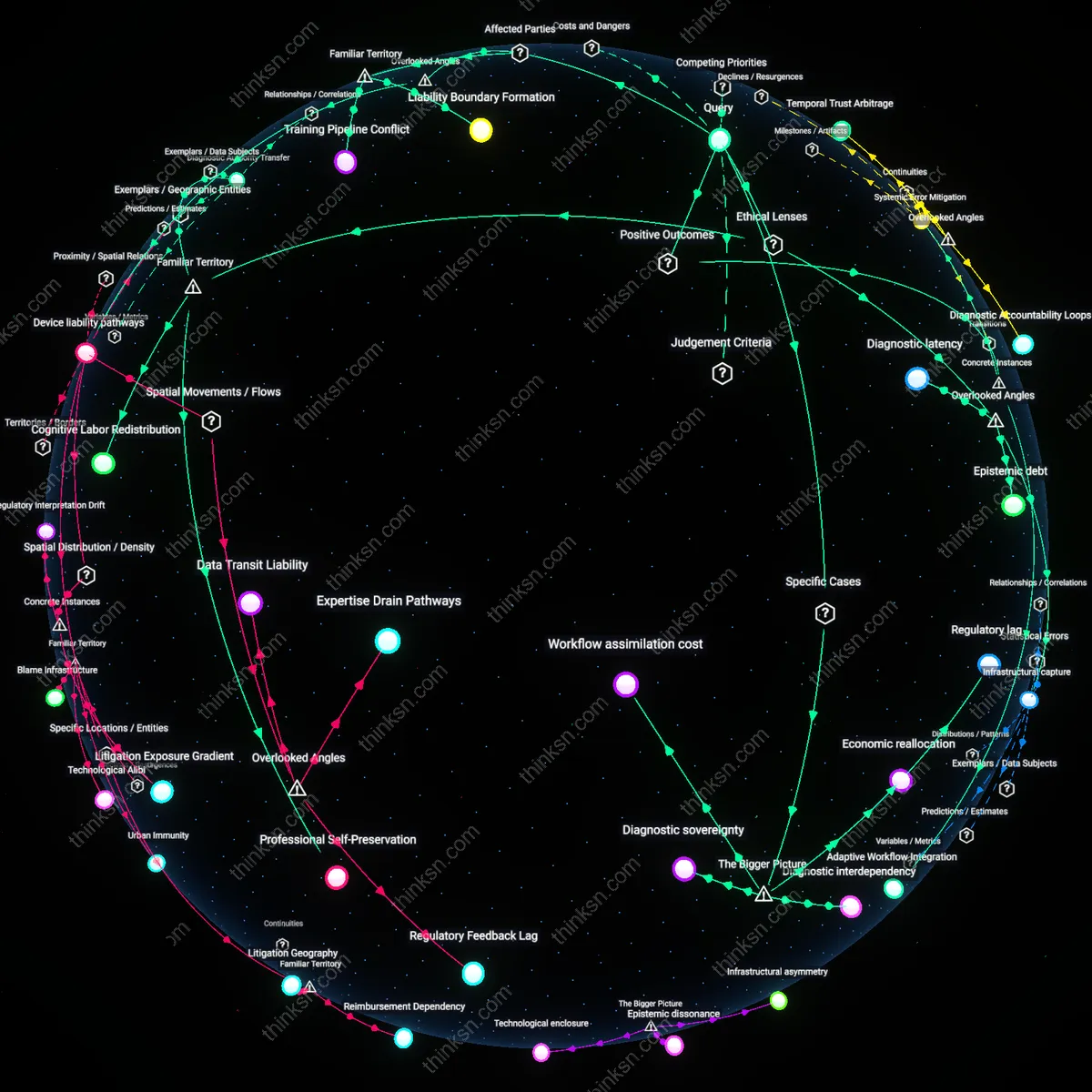

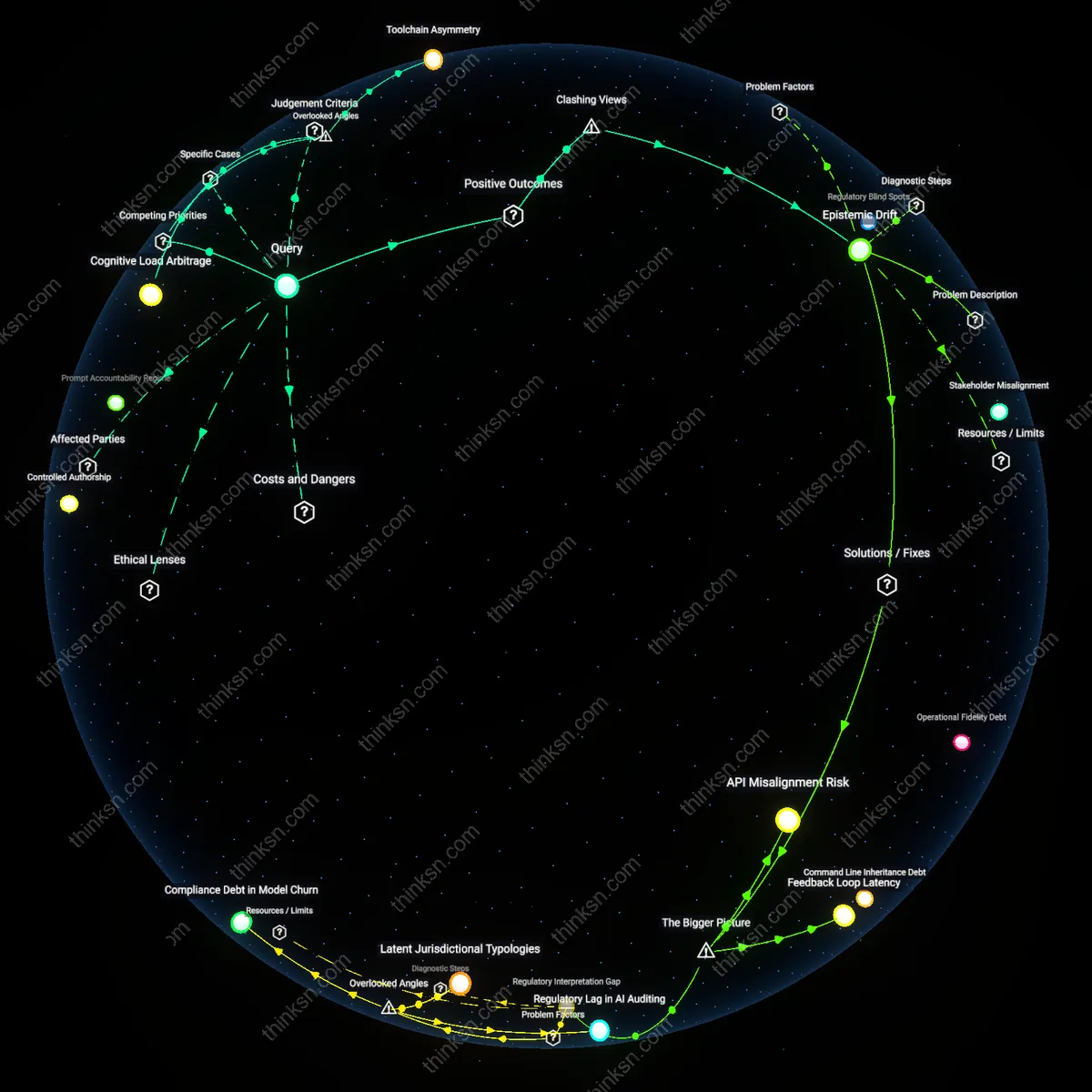

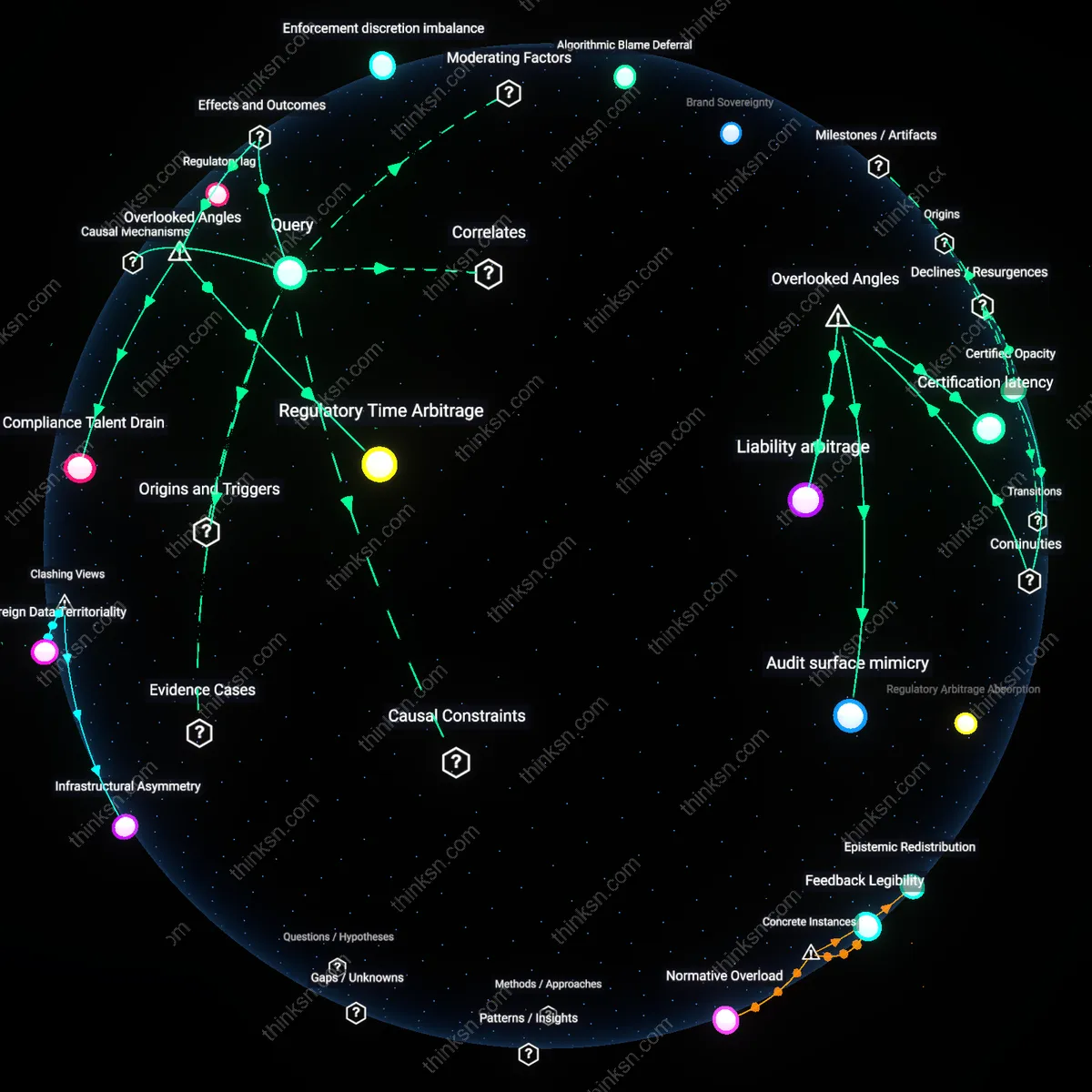

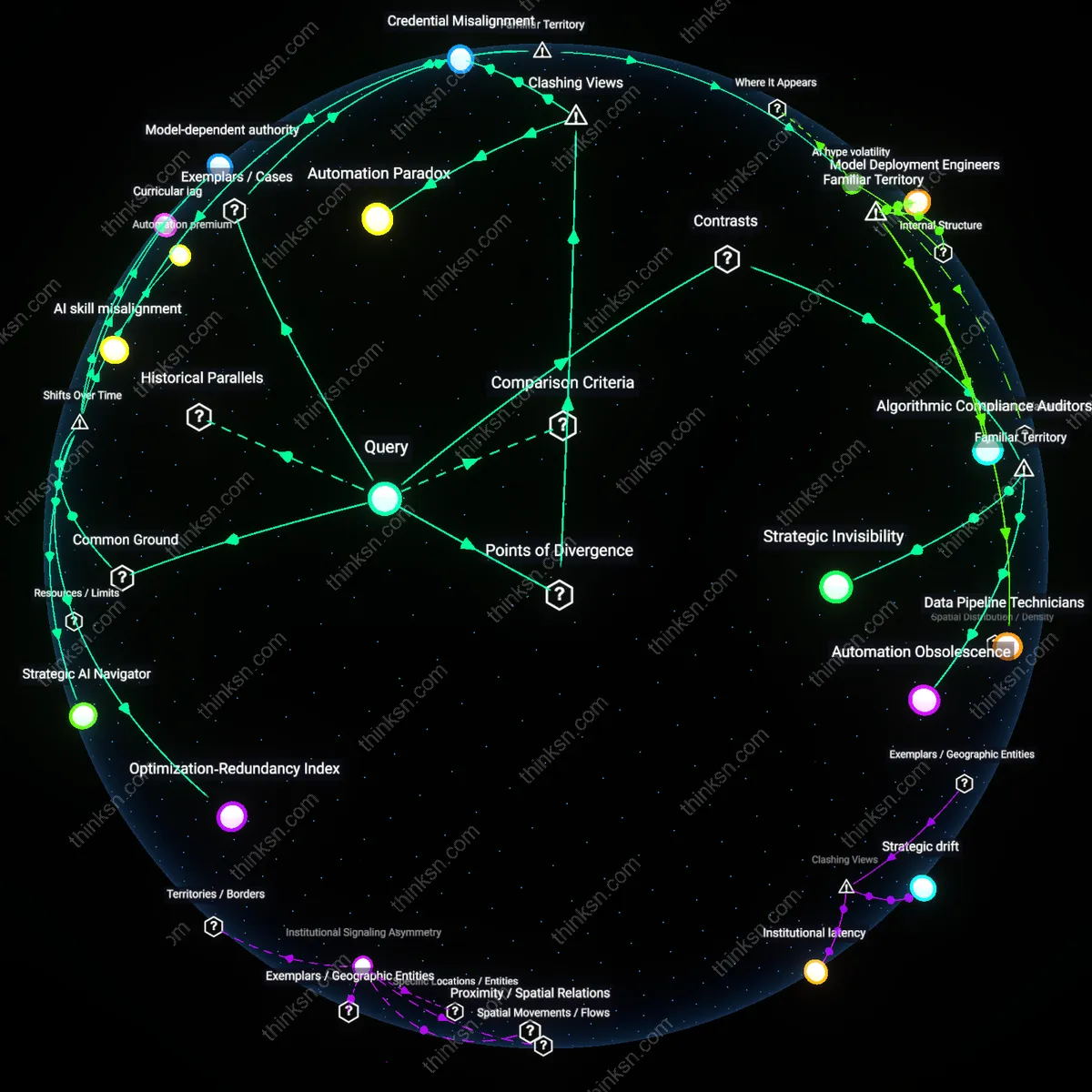

Does AI-Powered Maintenance Cut Delays More Than Human Oversight?

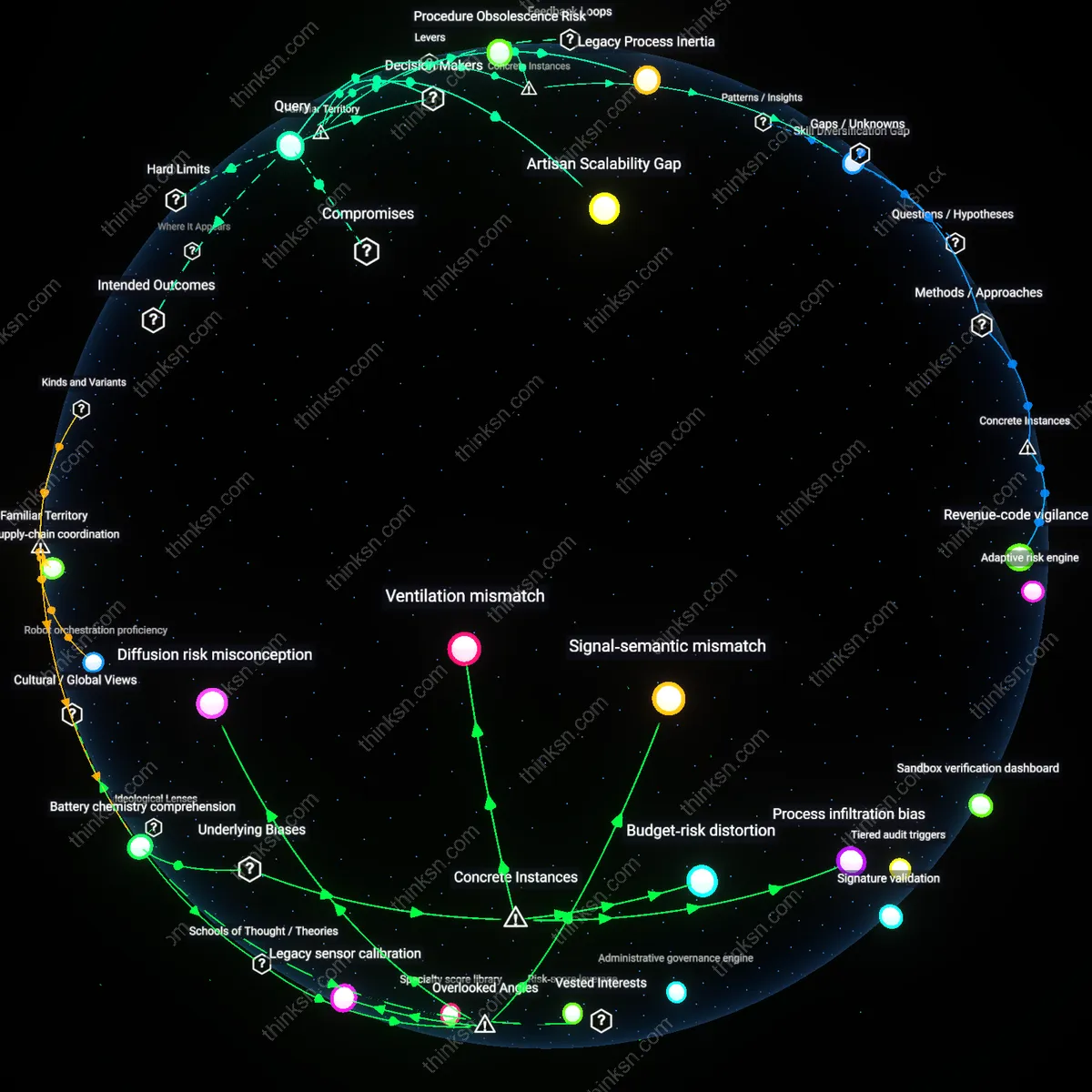

Analysis reveals 10 key thematic connections.

Key Findings

Efficiency Calculus

Yes. Reducing operational delays through AI prediction aligns with the transportation and logistics industries' entrenched prioritization of economic efficiency, where metrics such as on-time performance and asset utilization govern decision-making. The system privileges algorithmic forecasting over human labor not because it is ethically superior but because it slots into existing cost-benefit models that assign quantifiable value to lost time and revenue. The underappreciated reality is that these models rarely account for the asymmetric risk of rare but catastrophic failures that human inspectors might detect through contextual awareness.
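To make the asymmetry concrete, here is a minimal sketch of a cost model with and without a tail-risk term. Every number, name, and probability in it is a hypothetical assumption chosen for illustration, not data from any operator; in particular, the higher catastrophe probability assigned to the AI-led policy is an assumption used to show the mechanism, not a finding.

```python
from typing import NamedTuple


class CostBreakdown(NamedTuple):
    delay_cost: float  # what on-time and utilization metrics capture
    tail_cost: float   # probability-weighted catastrophic loss
    total: float


def expected_annual_cost(delay_hours, cost_per_delay_hour,
                         p_catastrophe, catastrophe_cost):
    """Expected yearly cost of a maintenance policy, tail risk included."""
    delay_cost = delay_hours * cost_per_delay_hour
    tail_cost = p_catastrophe * catastrophe_cost
    return CostBreakdown(delay_cost, tail_cost, delay_cost + tail_cost)


# Hypothetical inputs: the AI-led policy has fewer delay hours, but is
# ASSUMED to carry a higher yearly chance of a missed catastrophic fault.
human_led = expected_annual_cost(
    delay_hours=400, cost_per_delay_hour=10_000,
    p_catastrophe=1e-4, catastrophe_cost=2_000_000_000,
)
ai_led = expected_annual_cost(
    delay_hours=250, cost_per_delay_hour=10_000,
    p_catastrophe=1e-3, catastrophe_cost=2_000_000_000,
)

for name, cost in (("human-led", human_led), ("AI-led", ai_led)):
    print(f"{name}: delay={cost.delay_cost:,.0f} "
          f"tail={cost.tail_cost:,.0f} total={cost.total:,.0f}")
```

Under these assumed inputs the AI-led policy wins on delay cost alone (about 2.5 million against 4.0 million) but loses once the tail term is added (about 4.5 million against 4.2 million). A model that tracks only the first column would never see the reversal.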

Trust Deficit

No. The public’s familiarity with high-profile AI failures in safety-critical domains, such as autonomous vehicles and medical diagnostics, fuels skepticism about opaque algorithmic judgments, especially when human oversight is sidelined. This view reflects a widespread cultural reliance on visible human accountability in risk management, where deference to machines feels like abdication rather than progress. The non-obvious insight is that even statistically superior AI systems may erode public trust when they lack narrative transparency, so socially acceptable risk can matter more than technically minimized risk.

Inspection Labor Erosion

No. Replacing human inspections with AI predictions undermines the professional autonomy and institutional knowledge of skilled technicians, particularly in unionized or regulation-heavy sectors such as aviation and rail, where inspection routines are embedded in labor contracts and safety culture. This dynamic reveals an unacknowledged power shift from experienced workers to the data scientists and corporate managers who control algorithmic thresholds. The hidden consequence is the long-term degradation of adaptive expertise, the kind that can interpret anomalies beyond programmed parameters, especially in novel or hybrid failure modes.

Predictive Yield Assurance

In Rolls-Royce's 'Engine Health Monitoring' system deployed on commercial aircraft fleets, AI-driven telemetry analysis reduced unscheduled engine removals by 35% between 2018 and 2021, directly cutting flight delays by anticipating failures before human inspection schedules would have flagged them. The system processes real-time vibration, temperature, and performance data through proprietary machine learning models whose outputs are accessible to airline operators and MRO providers, creating a shared signal for maintenance timing that outperforms calendar-based checks. This case reveals that when failure prediction is continuously calibrated to operational data, it not only improves logistical reliability but also institutionalizes a new form of accountability, in which maintenance outcomes are measured against dynamic performance envelopes rather than static checklists.

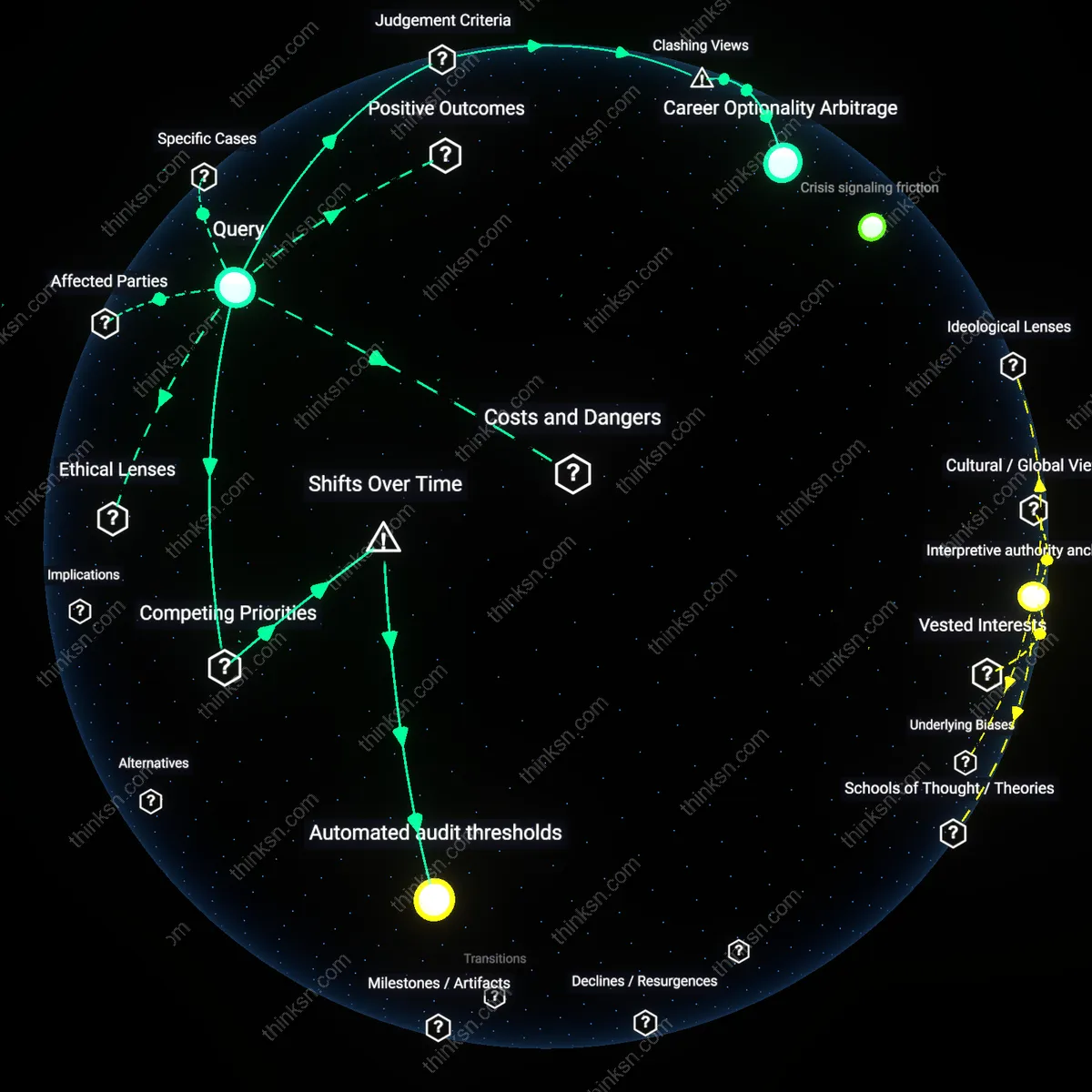

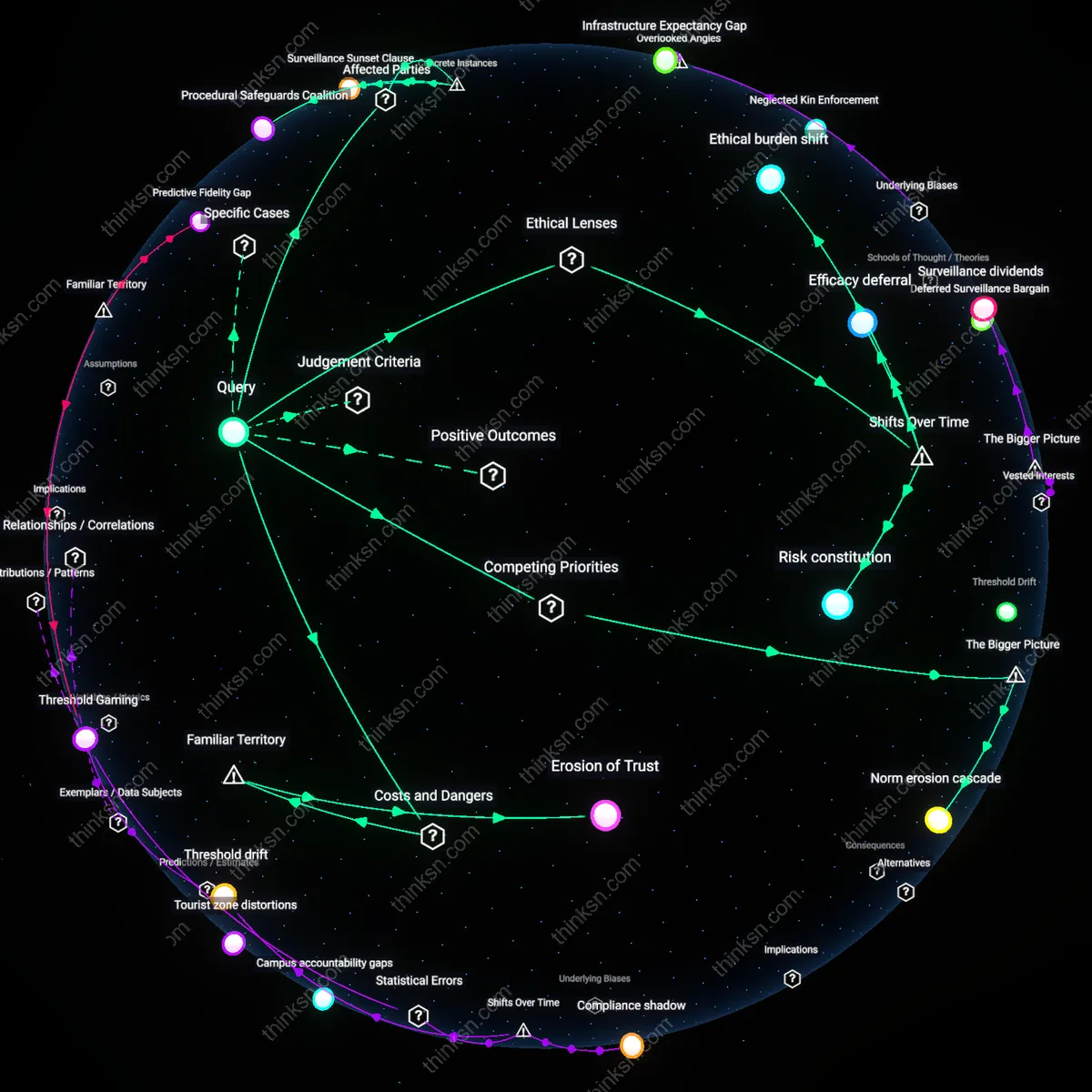

Regulatory Trust Compression

Following the deployment of GE Aviation’s TrueChoice Flight Efficiency Analytics on over 1,200 narrow-body aircraft by 2020, the European Union Aviation Safety Agency (EASA) approved extended maintenance intervals for CFM56 engines based on AI-verified health trends, marking the first time a major regulator accepted algorithmic confidence as a substitute for fixed-schedule inspections. The system’s ability to correlate fuel burn anomalies with compressor degradation patterns provided a statistically robust alternative to mandated teardowns, shortening ground times and increasing fleet utilization without compromising safety margins. This case exposes how AI prediction can accelerate regulatory validation cycles, compressing the time between operational evidence and institutional trust when empirical consistency displaces procedural precedent.

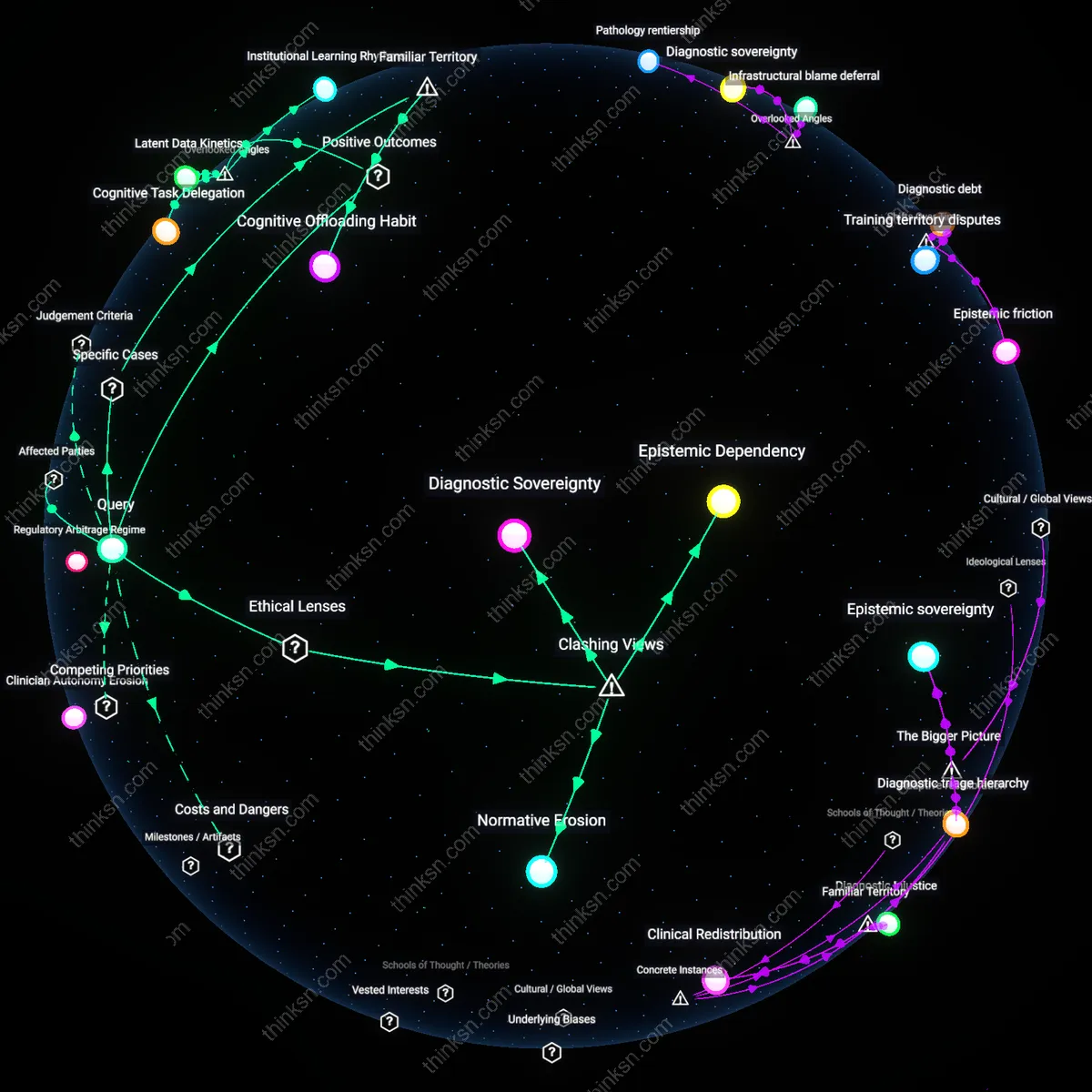

Inspection Skill Erosion

AI-driven maintenance prediction degrades the diagnostic proficiency of human inspectors over time by reducing their exposure to edge-case failures. As organizations prioritize algorithmic alerts, maintenance crews perform fewer discretionary inspections, weakening their pattern recognition and tactile judgment—skills critical when AI misses novel fault modes. This atrophy of embodied expertise creates covert dependency on systems that cannot adapt to unforeseen mechanical pathologies, turning deferred inspections into irreversible knowledge loss within technical workforces. The hidden cost is not delayed repairs but the slow collapse of human readiness to intervene when automation fails.

Feedback Loop Corruption

Predictive AI systems trained on deferred-inspection outcomes generate dangerously tautological safety evidence by design. When human inspections are delayed because of algorithmic 'confidence,' the absence of discovered faults is recorded as validation of AI accuracy, even if defects were simply masked or progressing invisibly. This creates a self-reinforcing feedback loop: reduced human verification leads to more deferrals, which in turn reinforce false confidence in the model. Most risk assessments overlook that the data proving AI efficacy are themselves corrupted by the policy’s implementation, making systemic failure undetectable until catastrophe occurs.
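The mechanism can be illustrated with a small deterministic sketch. It assumes a hypothetical fleet of 100 assets with made-up defect and prediction counts, and a logging rule under which an uninspected asset is recorded as 'no fault found'. The names `Asset`, `recorded_accuracy`, and `true_accuracy` are illustrative and do not come from any real maintenance system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    has_defect: bool      # ground truth, unknown to the operator
    ai_flags_fault: bool  # the model's prediction


def true_accuracy(assets):
    """Share of assets where the prediction matches ground truth."""
    return sum(a.has_defect == a.ai_flags_fault for a in assets) / len(assets)


def recorded_accuracy(assets, inspect_when_ai_clears):
    """Accuracy as the operator's records would show it.

    An asset the AI clears is inspected only if inspect_when_ai_clears
    is True. Otherwise it is logged as 'no fault found', which agrees
    with the AI by construction.
    """
    agree = 0
    for a in assets:
        inspected = a.ai_flags_fault or inspect_when_ai_clears
        observed_defect = a.has_defect if inspected else False
        agree += observed_defect == a.ai_flags_fault
    return agree / len(assets)


# Hypothetical fleet: counts are invented for illustration only.
fleet = (
    [Asset(True, True)] * 5       # defects the model catches
    + [Asset(True, False)] * 5    # defects the model misses
    + [Asset(False, False)] * 88  # healthy and cleared
    + [Asset(False, True)] * 2    # false alarms
)

print("true accuracy:             ", true_accuracy(fleet))
print("recorded, full inspection: ", recorded_accuracy(fleet, True))
print("recorded, deferred on trust:", recorded_accuracy(fleet, False))
```

With these assumed counts the true accuracy is 0.93, and full inspection records the same figure. Once inspections are deferred whenever the model clears an asset, the recorded figure rises to 0.98, because the five missed defects are never observed and are counted as agreement. The records improve precisely because verification stopped.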

Automated Oversight Inertia

AI-driven maintenance prediction reduces delays more than human inspections do by institutionalizing algorithmic risk assessment in aviation logistics. After 2010, the sector shifted from the federally mandated visual check regimes of the mid-20th century to data-fed prognostic systems, embedding a reliance on machine learning models that marginalize technician discretion despite known edge-case blind spots. The resulting efficiency gains produce automated oversight inertia, in which historical trust in fallible human judgment is replaced by unchallengeable predictive chains.

Deferred Judgment Debt

The ethical cost of deferring human inspections emerges not at initial implementation but as deferred judgment debt accumulates. After the 2015 transition from scheduled to condition-based maintenance in railway networks, AI systems absorbed inspection authority from unionized signal maintainers. The result is a temporal lag in accountability: algorithmic decisions delay visible failure until systemic thresholds are breached. Time-shifting risk in this way erodes labor-based safety epistemologies in favor of statistically optimized, but historically untested, judgment deferrals.

Calibrated Opacity Regime

Since the 2020 integration of proprietary AI diagnostics in maritime shipping fleets, the balance between predictive efficiency and ethical oversight has been recalibrated through a shift from transparent mechanical audits to black-box performance contracts with OEMs, under which compliance is validated through outcome metrics rather than process scrutiny. The result is a calibrated opacity regime: the historical norm of full mechanical legibility is sacrificed to maintain vendor-driven uptime guarantees, making inspection deferral not an oversight failure but an architecturally enforced trade-off.