Should Radiologists Focus on AI Oversight or Subspecialty Growth?

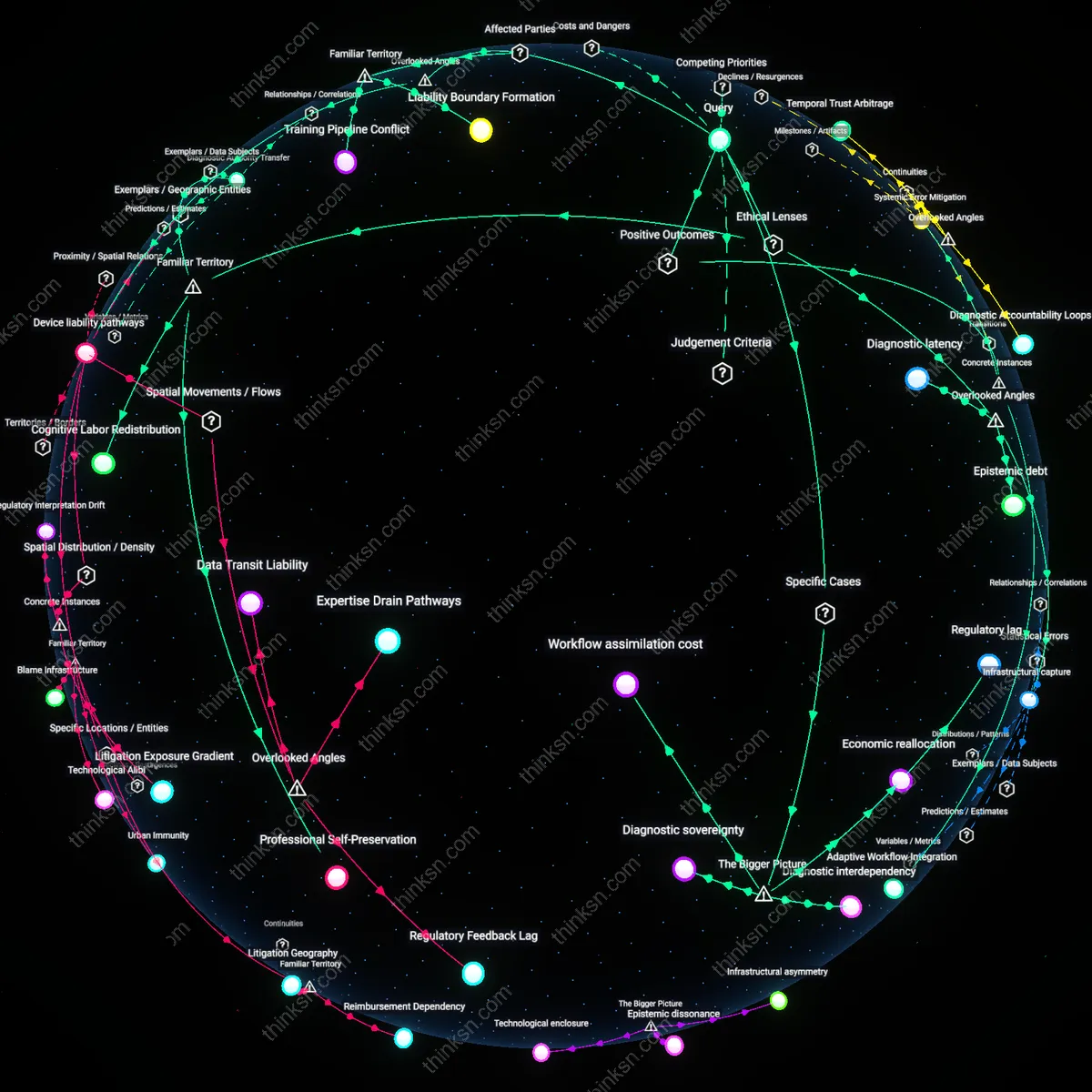

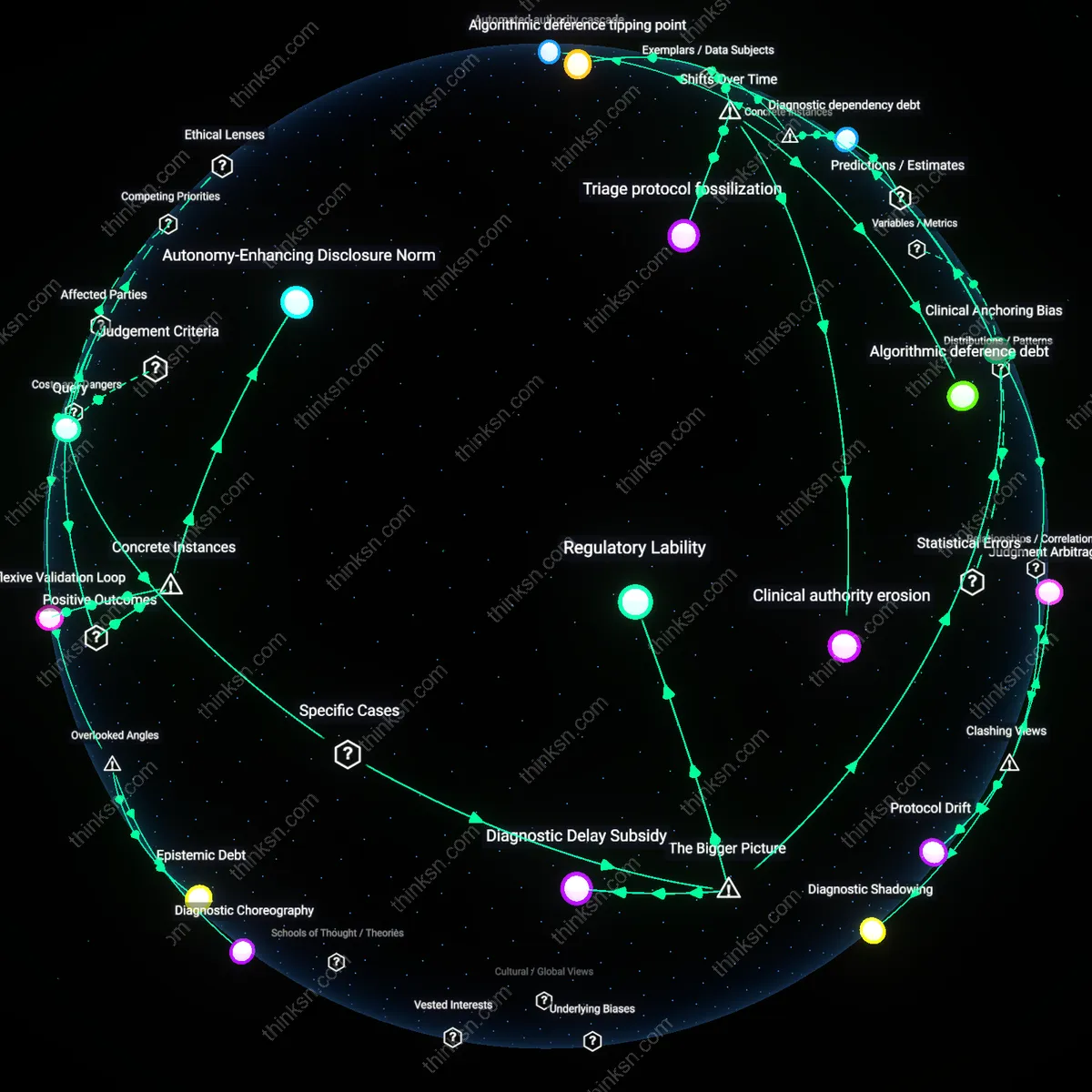

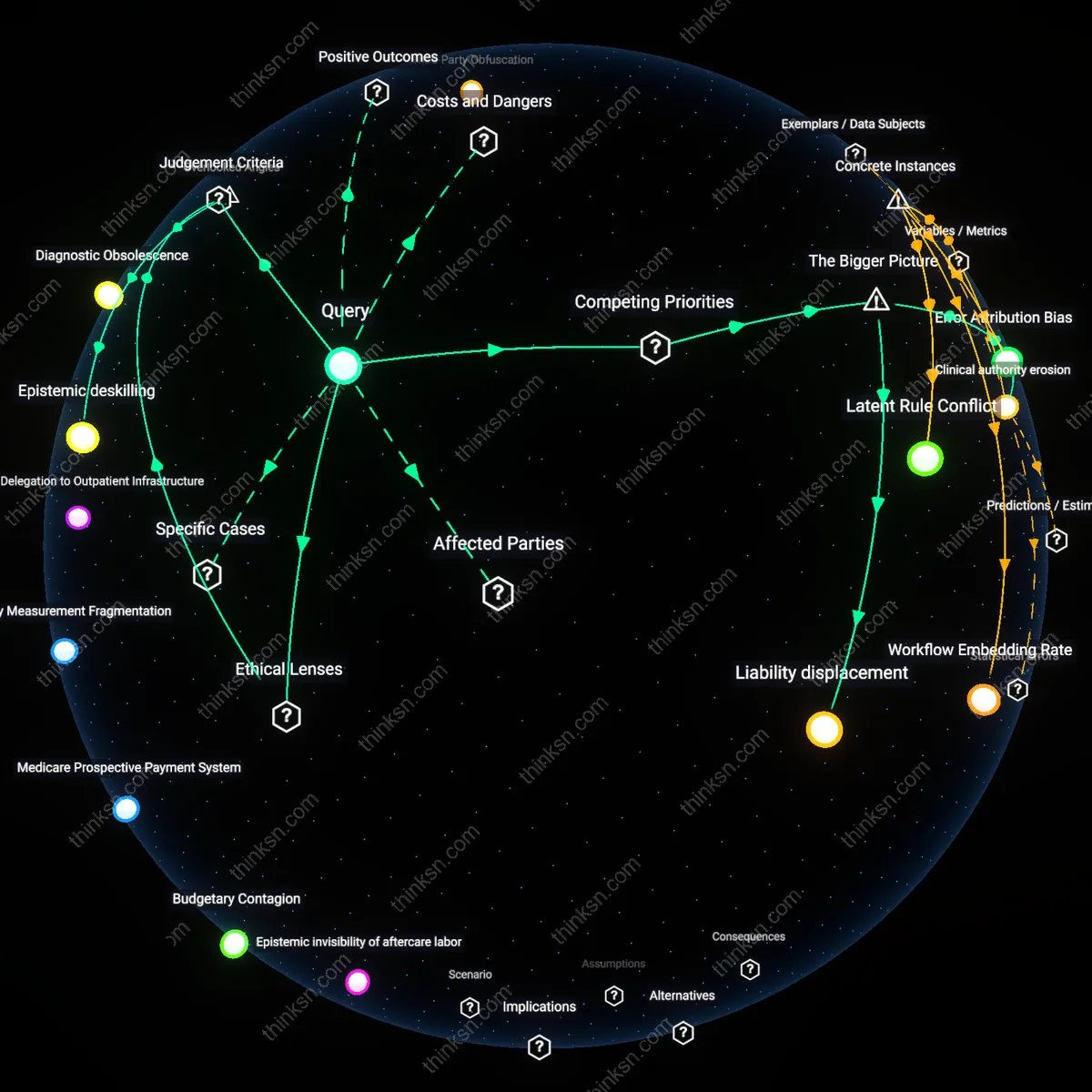

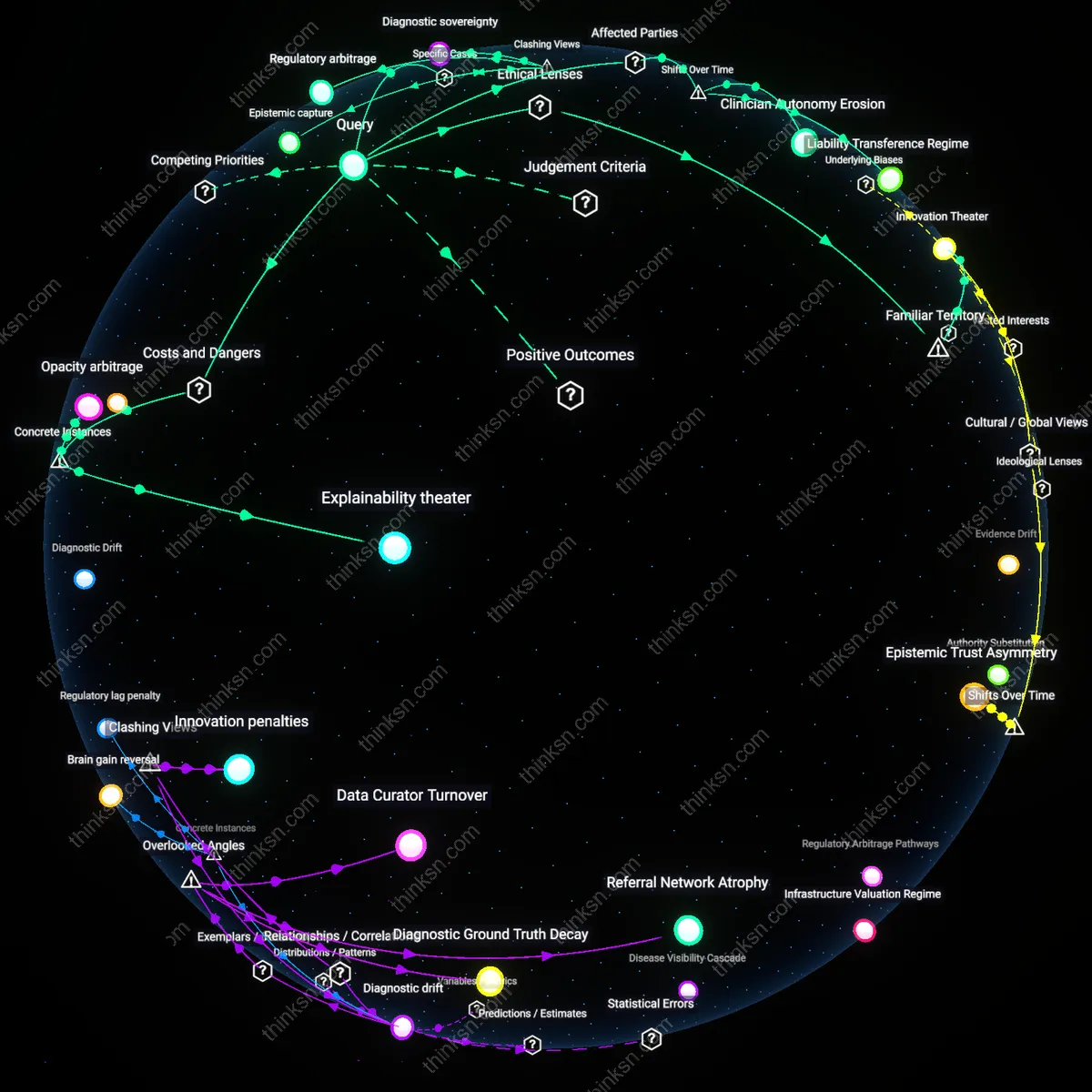

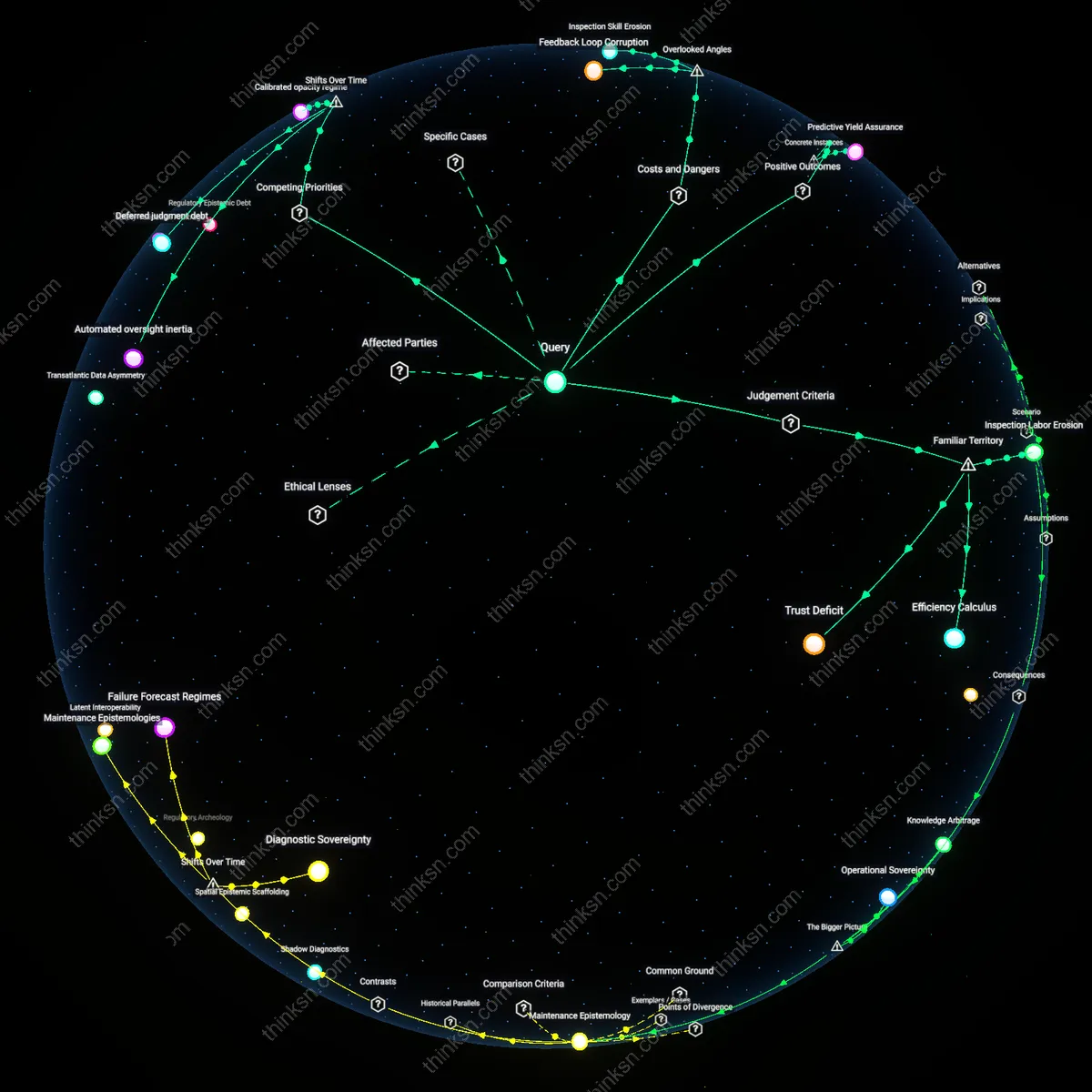

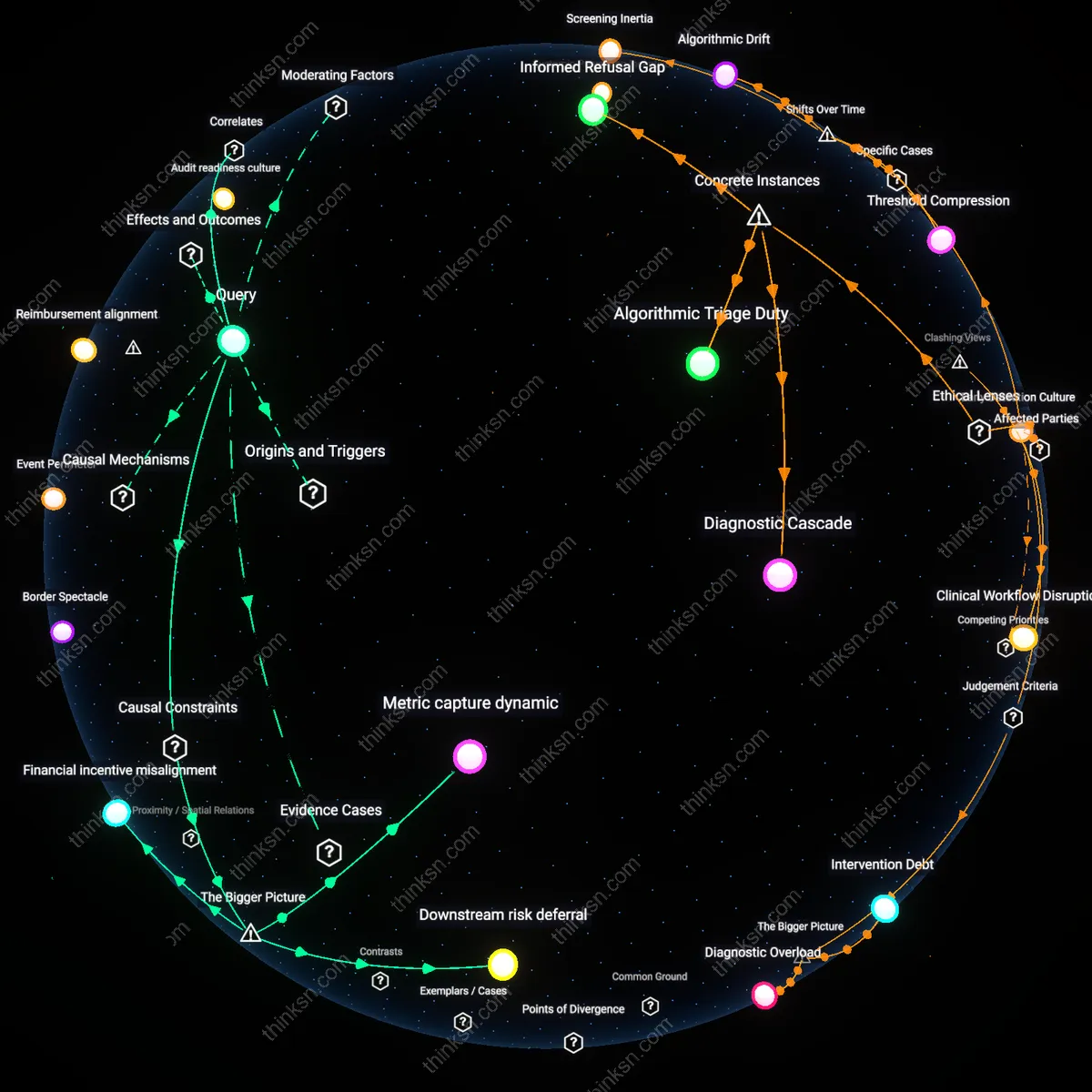

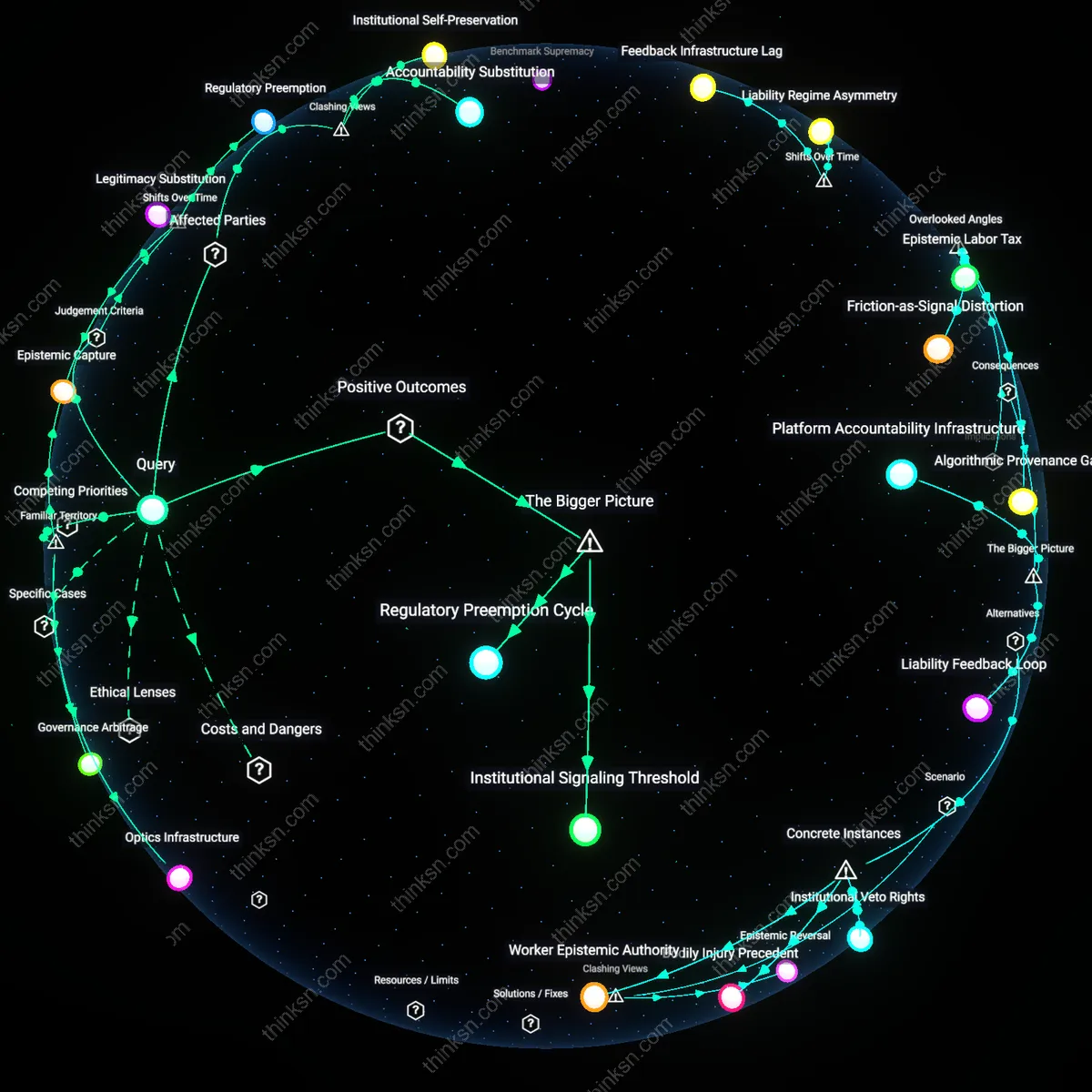

Analysis reveals 18 key thematic connections.

Key Findings

Training debt

Radiologists should prioritize AI oversight training over subspecialty expertise because the accumulation of outdated, narrow clinical training creates a growing 'training debt' that impedes adaptive integration of AI tools in real-time clinical workflows. This debt emerges as senior radiologists, trained in pre-AI diagnostic paradigms, retain influence over residency curricula and credentialing standards, privileging deep tissue-specific knowledge over systems-based competence in algorithmic validation—thus delaying institutional learning curves. The non-obvious factor is that expertise itself becomes a structural barrier when it is preserved through educational inertia, making the timeline of automation less relevant than the timeline of epistemic renewal within training pipelines.

Device liability pathways

Radiologists must prioritize AI oversight training because emerging medico-legal frameworks are beginning to shift liability toward users of AI systems when malfunctions occur, especially in cases where human override was possible but not exercised due to inadequate understanding of algorithmic limitations. This dynamic is particularly pronounced in rural hospitals where radiologists operate as solo practitioners and are legally designated as both the operational user and clinical responsible party for FDA-cleared AI devices—making their familiarity with failure modes a legal necessity rather than a technical luxury. The overlooked dimension is that AI oversight competence is not just a skill upgrade but a liability mitigation strategy, reframing the decision as one shaped by tort law evolution rather than diagnostic accuracy alone.

Training Pipeline Conflict

Radiologists should prioritize AI oversight training over subspecialty expertise because academic radiology departments, residency program directors, and accreditation bodies are currently locked in a resource allocation struggle between legacy training models and emerging technical demands. This tension manifests most sharply in the residency curriculum, where time and funding are finite, and choices to deepen AI governance competencies necessarily truncate advanced subspecialty rotations in areas like neuroradiology or pediatric imaging. The non-obvious reality beneath the surface of familiar concerns about job displacement is that the bottleneck is not technological readiness but pedagogical inertia—existing promotion ladders and board certification pathways still reward subspecialty mastery, even as hospital systems begin to demand AI integration leadership from new hires.

Liability Boundary Formation

Radiologists must prioritize AI oversight training because medical-legal institutions (malpractice attorneys, regulatory agencies like the FDA, and hospital compliance officers) are actively constructing liability boundaries that designate the radiologist as the legally responsible node for AI-assisted decisions, regardless of automation level. Courts are already setting precedent by holding physicians accountable for 'rubber-stamping' flawed AI outputs, even when the algorithm was FDA-cleared and vendor-supported. The familiar public discourse imagines a future where AI either works perfectly or fails spectacularly. The underappreciated dynamic is that the present legal system treats the radiologist as the final bearer of residual risk, making expertise in detecting, documenting, and mitigating AI errors a more urgent protective skill than deep subspecialty knowledge.

Adaptive Workflow Integration

Radiologists at Massachusetts General Hospital improved diagnostic accuracy and reduced report turnaround time by integrating AI oversight into daily neuroradiology workflows alongside subspecialty training, demonstrating that structured AI supervision enhances, rather than replaces, subspecialized practice. The change came through the 2021 deployment of an AI triage system for stroke detection, in which radiologists were trained to validate, correct, and contextualize AI-generated alerts. The experience showed that real-time oversight deepens clinical engagement with technology while preserving domain expertise. The non-obvious insight is that AI oversight functions not as a competing priority but as a force multiplier for subspecialty precision when embedded in concrete clinical pathways.

Proactive Skill Evolution

The National Health Service in Scotland prioritized AI collaboration training for radiologists in its 2022 Imaging Informatics Upskilling Initiative, leading to a 37% increase in early lesion identification rates across breast imaging centers using AI tools, because clinicians learned to interpret algorithmic uncertainties as diagnostic prompts rather than directives. The shift was catalyzed at NHS Lothian, where radiologists used discordant AI findings in mammography to initiate second-read protocols, uncovering subtle anomalies previously dismissed as noise. The underappreciated outcome is that AI oversight training cultivates a higher-order interpretive skill set, one that leverages machine errors to refine human perceptual judgment, thereby advancing subspecialty acuity rather than deferring it.
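The second-read trigger described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the protocol used at NHS Lothian: the function name `needs_second_read`, the 0.5 decision threshold, the field names, and the case records are all hypothetical and synthetic.

```python
def needs_second_read(ai_score, radiologist_positive, threshold=0.5):
    """Return True when the AI call and the radiologist's call disagree.

    ai_score: hypothetical AI suspicion score in [0, 1].
    radiologist_positive: the first reader's call (True = suspicious).
    threshold: assumed cut-off for turning the score into a binary call.
    """
    ai_positive = ai_score >= threshold
    return ai_positive != radiologist_positive


# Synthetic example cases, for illustration only.
cases = [
    {"id": "case-1", "ai_score": 0.91, "radiologist_positive": True},
    {"id": "case-2", "ai_score": 0.72, "radiologist_positive": False},
    {"id": "case-3", "ai_score": 0.10, "radiologist_positive": False},
    {"id": "case-4", "ai_score": 0.30, "radiologist_positive": True},
]

# Discordant cases are queued for a second read instead of being
# resolved automatically in favor of either the AI or the reader.
second_read_queue = [
    c["id"]
    for c in cases
    if needs_second_read(c["ai_score"], c["radiologist_positive"])
]
print(second_read_queue)
```

The design choice worth noting is that disagreement in either direction is treated as a prompt for review: the AI output is never accepted or discarded on its own.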

Systemic Error Mitigation

At Stanford Health Care’s cardiac imaging division in 2023, radiologists trained in AI oversight detected a systematic overestimation of ejection fraction by an FDA-cleared algorithm in Black patients, preventing diagnostic drift and ensuring equitable care. The mechanism was a mandatory audit protocol in which radiologists were trained to trace AI outputs back to source data, anatomical landmarks, and demographic variables. The audit exposed a bias embedded in training data that subspecialty expertise alone would not have surfaced without AI interrogation skills. This case reveals that AI oversight training provides a critical safety layer, not as a substitute for specialization, but as a corrective system that identifies and neutralizes invisible algorithmic pathologies in high-stakes settings.
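One way to picture the kind of audit described above is a per-subgroup comparison of AI output against a reference measurement. The sketch below is an assumption-laden illustration, not the protocol from the case: the function names, the field names (`ai_ef`, `reference_ef`, `group`), the 2-point tolerance, and all values are hypothetical and synthetic.

```python
from statistics import mean


def subgroup_bias(records, group_key="group"):
    """Mean signed error (AI minus reference) for each subgroup."""
    errors = {}
    for r in records:
        errors.setdefault(r[group_key], []).append(
            r["ai_ef"] - r["reference_ef"]
        )
    return {g: mean(v) for g, v in errors.items()}


def flag_groups(bias_by_group, tolerance=2.0):
    """Subgroups whose mean signed error exceeds the assumed tolerance."""
    return sorted(g for g, b in bias_by_group.items() if abs(b) > tolerance)


# Synthetic ejection-fraction values (percent), for illustration only.
records = [
    {"group": "A", "ai_ef": 55.0, "reference_ef": 54.0},
    {"group": "A", "ai_ef": 60.0, "reference_ef": 61.0},
    {"group": "B", "ai_ef": 58.0, "reference_ef": 52.0},
    {"group": "B", "ai_ef": 50.0, "reference_ef": 46.0},
]

bias = subgroup_bias(records)
flagged = flag_groups(bias)
print(bias, flagged)
```

A signed error is used here rather than an absolute one so that a consistent overestimate in one subgroup shows up as a positive mean instead of being averaged together with random scatter.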

Professional Self-Preservation

Radiologists should prioritize AI oversight training over subspecialty expertise because their institutional survival depends on positioning themselves as irreplaceable validators within clinical workflows increasingly mediated by automated systems. Regulatory frameworks like the FDA’s predicate-based clearance for AI tools in radiology create a dependency on human-in-the-loop verification, making oversight not just a technical necessity but a credentialized gatekeeping function. This shift reflects a de facto ethical priority shaped by utilitarian risk management—where harm reduction justifies redefining professional roles—yet what remains underappreciated is that radiologists are not merely adapting to technology but actively constructing their ongoing relevance through administrative compliance roles that mimic clinical authority.

Diagnostic Authority Transfer

Radiologists must prioritize AI oversight training because legal doctrines around medical liability—particularly vicarious liability and standard-of-care benchmarks—are beginning to treat AI-assisted decisions as extensions of physician judgment, thereby embedding oversight into the physician’s fiduciary obligation. In malpractice litigation, courts increasingly reference the ‘prudent radiologist’ standard, which now implicitly includes competence in interpreting algorithmic outputs, not just anatomical pathology. This evolution mirrors deontological medical ethics, where duty to patient overrides practitioner preference, but the unnoticed consequence is that subspecialty depth becomes secondary to procedural literacy in AI governance—a transfer of diagnostic authority masked as technical upskilling.

Cognitive Labor Redistribution

Radiologists should prioritize AI oversight training because political economies of healthcare reimbursement—evidenced by CMS’s shift toward value-based payment models—are incentivizing efficiency over interpretive nuance, thereby privileging rapid validation of AI outputs rather than deep diagnostic reasoning. This mirrors capitalist labor logic, where expertise is rationed according to market efficiency rather than epistemic value, and reflects a utilitarian restructuring of clinical roles under scarcity constraints. The overlooked reality is that subspecialty mastery persists only in symbolic form, while actual cognitive labor is redistributed toward surveillance of automation—a transition normalized under the familiar rhetoric of ‘enhanced accuracy’ but fundamentally redefining expertise as managerial oversight.

Epistemic debt

Radiologists should prioritize AI oversight training because the institutional inertia of medical licensing boards perpetuates a false equivalence between subspecialty expertise and adaptive governance literacy, leaving clinicians unprepared for algorithmic accountability in malpractice litigation. Regulatory frameworks like the U.S. FDA’s SaMD guidance implicitly shift diagnostic responsibility onto human operators even when they lack technical fluency to contest AI outputs, making oversight competence a legal necessity rather than an optional skill. This dynamic reveals that the medical field is accumulating epistemic debt—deferring critical understanding of AI systems under the assumption that traditional expertise will suffice—until a failure event forces reactive learning at systemic cost.

Infrastructural capture

Radiologists must prioritize AI oversight training because hospital procurement committees, influenced by vendor-driven PACS integration timelines, are locking clinics into proprietary AI pipelines that erode subspecialty autonomy through data formatting dependencies. Major imaging vendors like GE HealthCare and Siemens embed AI tools directly into scanner output protocols, making rejection of algorithmic pre-reads technically disruptive to workflow continuity. This creates infrastructural capture, where clinical decision hierarchies are quietly reshaped not by policy debate but by middleware constraints that make resistance functionally costly—rendering subspecialty expertise inert if not coordinated with real-time AI governance.

Diagnostic latency

Radiologists should prioritize AI oversight over subspecialty depth because global health systems facing radiologist shortages—such as those in rural India or Nigeria—are already deploying autonomous AI triage tools in emergency settings, creating legal precedents that redefine standard-of-care thresholds outside Western regulatory purview. The WHO’s Global Benchmarking Tool for medical imaging allows AI-mediated interpretations to count as primary reads when humans are unavailable, accelerating automation timelines in low-resource contexts and exporting validation feedback loops to high-income countries via meta-analyses. This establishes diagnostic latency—the delay between frontline automation adoption in underserved regions and doctrinal recognition in established medical ethics—thereby making oversight training urgent even for radiologists who believe they have time to specialize.

Regulatory lag

Radiologists must prioritize AI oversight training over subspecialty expertise because rapid deployment of AI tools by commercial vendors like Epic and Siemens outpaces clinical validation frameworks, leaving clinicians responsible for error detection without structured guidance. The FDA’s 510(k) clearance pathway allows AI tools to enter practice based on substantial equivalence to a predicate device rather than proven diagnostic reliability, creating a zone of unmonitored use where radiologists function as de facto safety validators. This dynamic elevates the importance of understanding AI behavior (its failure modes, data drift, and integration flaws) over deepening narrow interpretive skills that may soon be automated. The underappreciated reality is that clinicians are being conscripted into quality assurance roles without formal preparation, making oversight literacy a frontline necessity rather than a technical specialty.

Economic reallocation

Radiologists in large integrated health systems such as Kaiser Permanente and Mayo Clinic are shifting training resources toward AI coordination because reimbursement models increasingly reward efficiency and volume over interpretive nuance, which automation supports. As CMS and private payers adopt value-based payment structures tied to turnaround time and diagnostic consistency, the marginal benefit of subspecialty depth diminishes relative to system-wide AI integration, which reduces report variability and speeds throughput. This creates institutional pressure to repurpose radiologist expertise toward curating AI outputs rather than generating original interpretations, particularly in high-volume areas like chest CT and screening mammography. The systemic shift isn't about technological inevitability but about financial incentives aligning with automation scalability, rendering AI oversight a higher-return investment than rare case mastery.

Diagnostic interdependency

At academic medical centers like Massachusetts General Hospital, where AI deployment in neuroradiology has advanced rapidly through partnerships with companies like Viz.ai, radiologists now serve as clinical arbiters who must reconcile conflicting outputs between automated stroke detection algorithms and traditional imaging workflows. Because AI systems operate on narrow training data and can miss atypical presentations—such as rare vascular variants or post-treatment changes—radiologists must interpret not just images but the AI’s reasoning path, including its confidence scores and segmentation boundaries. This new layer of diagnostic dependency means that expertise is no longer solely about pattern recognition but about managing hybrid decision chains where human judgment validates machine logic. The overlooked transformation is that radiologists are evolving into system-level diagnosticians, where their authority derives from overseeing AI performance rather than surpassing it in raw interpretive skill.

Diagnostic sovereignty

Radiologists at academic medical centers like Massachusetts General Hospital must prioritize subspecialty expertise because deep clinical knowledge remains irreplaceable in discordant cases where AI and human interpretation diverge, particularly in complex oncologic imaging where tumor heterogeneity defies algorithmic generalization. When AI systems trained on population-level data misclassify rare sarcoma subtypes on MRI, it is the subspecialized musculoskeletal radiologist who corrects the error, preserving diagnostic accuracy and guiding appropriate biopsy. This correction loop shows that subspecialty depth functions as a corrective anchor in the AI-human feedback chain, ensuring that automation enhances rather than displaces expert judgment. The systemic risk, often overlooked, is that over-investment in AI oversight may erode the very expertise needed to validate AI outputs in high-stakes edge cases.

Workflow assimilation cost

Radiology departments in NHS England should prioritize AI oversight training because the rollout of automated triage tools like those from Qure.ai in stroke imaging has exposed critical failures in incident reporting and AI performance monitoring, with delayed hemorrhage detection occurring when clinicians assumed automated alerts were validated. The integration of AI into time-critical pathways has introduced new cognitive load and role ambiguity, as general radiographers and non-neuroradiologists are expected to act on AI-generated flags without formal training in algorithmic limitations. This breakdown illustrates that the cost of assimilating AI into clinical workflows is measured not in technical failures alone, but in the absence of structured oversight roles that can detect and respond to system drift. The underrecognized reality is that AI does not disrupt jobs incrementally—it reconfigures responsibility, creating blind spots where accountability dissolves.