Strategic Sensemaking

Human officers prove their worth through strategic sensemaking: identifying the regulatory intent behind sudden shifts so that organizations can align with emergent policy directions before formal rules are codified. Senior compliance leaders interpret fragmented signals, such as legislative debates, enforcement rhetoric, and geopolitical pressures, and turn them into actionable compliance postures. Algorithms cannot replicate this function because they lack access to informal or ambiguous context. The non-obvious element is that the value lies not in faster execution but in pre-regulatory interpretation, which challenges the assumption that compliance is reactive rather than anticipatory.

Institutional Trust Webs

Human officers maintain legitimacy during regulatory shocks by activating institutional trust webs: networks of auditors, regulators, and internal stakeholders who rely on an officer's personal credibility and track record of judgment to vouch for organizational integrity when algorithms produce inconclusive or conflicting outputs. The mechanism works through long-standing inter-agency relationships and reputation capital accumulated over repeated, high-stakes interactions, which become critical when rule-based systems fail in the face of novelty. This contradicts the dominant view that automation closes trust deficits; instead, trust is preserved through human particularity, not systemic neutrality.

Regulatory Improvisation

Human officers demonstrate irreplaceable value through regulatory improvisation: the real-time recombination of legal principles, ethical norms, and operational constraints to stabilize compliance processes amid unanticipated change. This occurs within crisis-response teams that dynamically reinterpret existing frameworks to plug gaps left by frozen algorithmic models, as during the 2023 EU Digital Services Act enforcement surge, when automated content moderation failed under novel scope definitions. The underappreciated reality is that compliance under disruption relies less on accuracy than on adaptive coherence, which undermines the ideal of fully deterministic regulatory systems.

Human Oversight Layer

Deploy senior compliance officers as real-time validators of AI-generated interpretations during unanticipated regulatory changes. These officers leverage institutional memory and cross-jurisdictional experience to assess whether algorithmic outputs align with regulatory intent, especially when rules conflict or contain ambiguous phrasing; this layer operates through existing escalation protocols in financial and healthcare compliance units, where precedent-based judgment remains legally admissible. The non-obvious element is that their value does not stem from faster decisions, but from being officially designated liability absorbers when automated systems fail—fulfilling audit and governance expectations that algorithms cannot.

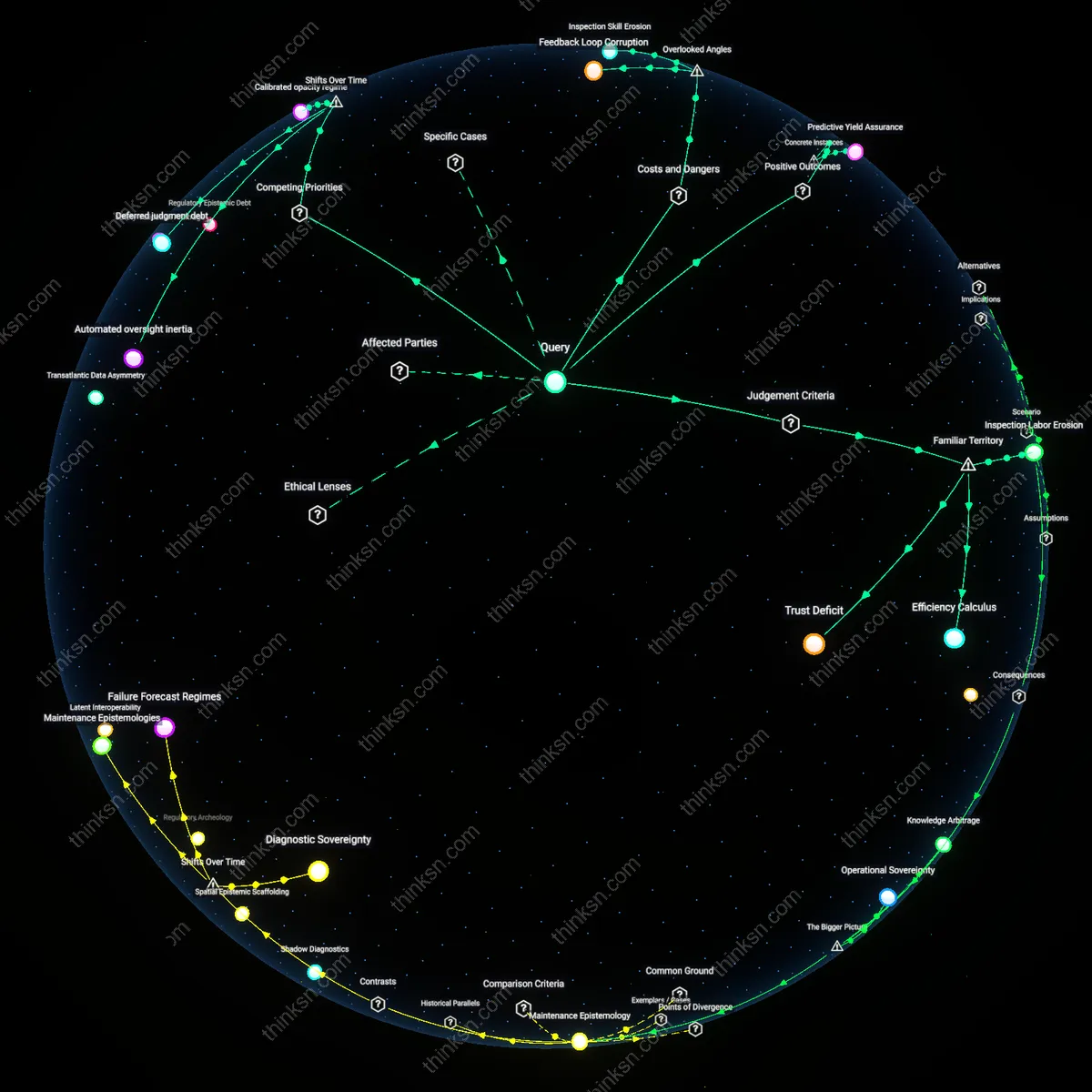

Regulatory Immune Response

Train specialized rapid-response teams within compliance departments to activate during regulatory shocks, using war-gaming and scenario forecasting drawn from past regulatory upheavals such as the Dodd-Frank Act or the GDPR rollout. These teams combine legal analysts, data scientists, and frontline auditors to manually reconstruct risk exposure before AI models can be retrained, operating through crisis cells similar to those used by central banks during market collapses. What is rarely acknowledged is that their effectiveness depends not on expertise alone but on pre-established authority to suspend AI outputs: a formal override mechanism that treats unexpected regulation like a system breach.

Interpretive Precedent Cache

Institutionalize a continuously updated repository of past regulatory ambiguities and human resolutions—such as SEC enforcement actions or court rulings on compliance gray areas—that compliance officers contribute to and draw from during unscripted events. This database functions as a reference ontology for judgment calls, integrated into hybrid review workflows at firms like asset managers and insurers where regulatory latitude is high. The underappreciated reality is that this cache doesn’t just inform decisions; it becomes audit-trail evidence of reasonable intent, fulfilling the 'good faith effort' standard regulators expect when rules shift abruptly.
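One way to picture such a repository is sketched below in Python. This is a minimal illustration, not a description of any firm's actual system: the `Precedent` schema, the tag-overlap lookup, and the `audit_log` structure are all assumptions introduced here. The point it tries to show is the dual role described above, where each consultation both returns prior resolutions and leaves a record that an officer looked.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass(frozen=True)
class Precedent:
    """One past ambiguity and its human resolution (hypothetical schema)."""
    summary: str
    resolution: str
    source: str            # e.g. an enforcement action or court ruling
    decided_on: date
    tags: frozenset


@dataclass
class PrecedentCache:
    """Hypothetical repository; names and fields are illustrative only."""
    entries: list = field(default_factory=list)
    audit_log: list = field(default_factory=list)

    def add(self, precedent: Precedent) -> None:
        self.entries.append(precedent)

    def lookup(self, officer: str, query_tags: set) -> list:
        """Return precedents sharing at least one tag, most overlap first.

        Every call is logged, so the log can later serve as evidence
        that prior resolutions were consulted before a judgment call.
        """
        scored = [(len(p.tags & query_tags), p) for p in self.entries]
        ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
        matches = [p for score, p in ranked if score > 0]
        self.audit_log.append({
            "officer": officer,
            "query_tags": sorted(query_tags),
            "consulted": [p.source for p in matches],
        })
        return matches


# Usage with made-up entries (not real cases).
cache = PrecedentCache()
cache.add(Precedent("Unclear disclosure deadline", "Filed early, noted rationale",
                    "example-ruling-A", date(2020, 1, 15),
                    frozenset({"disclosure", "deadline"})))
cache.add(Precedent("Ambiguous data-retention scope", "Applied stricter reading",
                    "example-ruling-B", date(2021, 6, 1),
                    frozenset({"retention"})))
hits = cache.lookup("officer-1", {"disclosure", "deadline", "scope"})
```

A real deployment would presumably need richer retrieval than tag overlap and a tamper-evident log; the sketch only makes the "reference plus audit trail" idea concrete.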

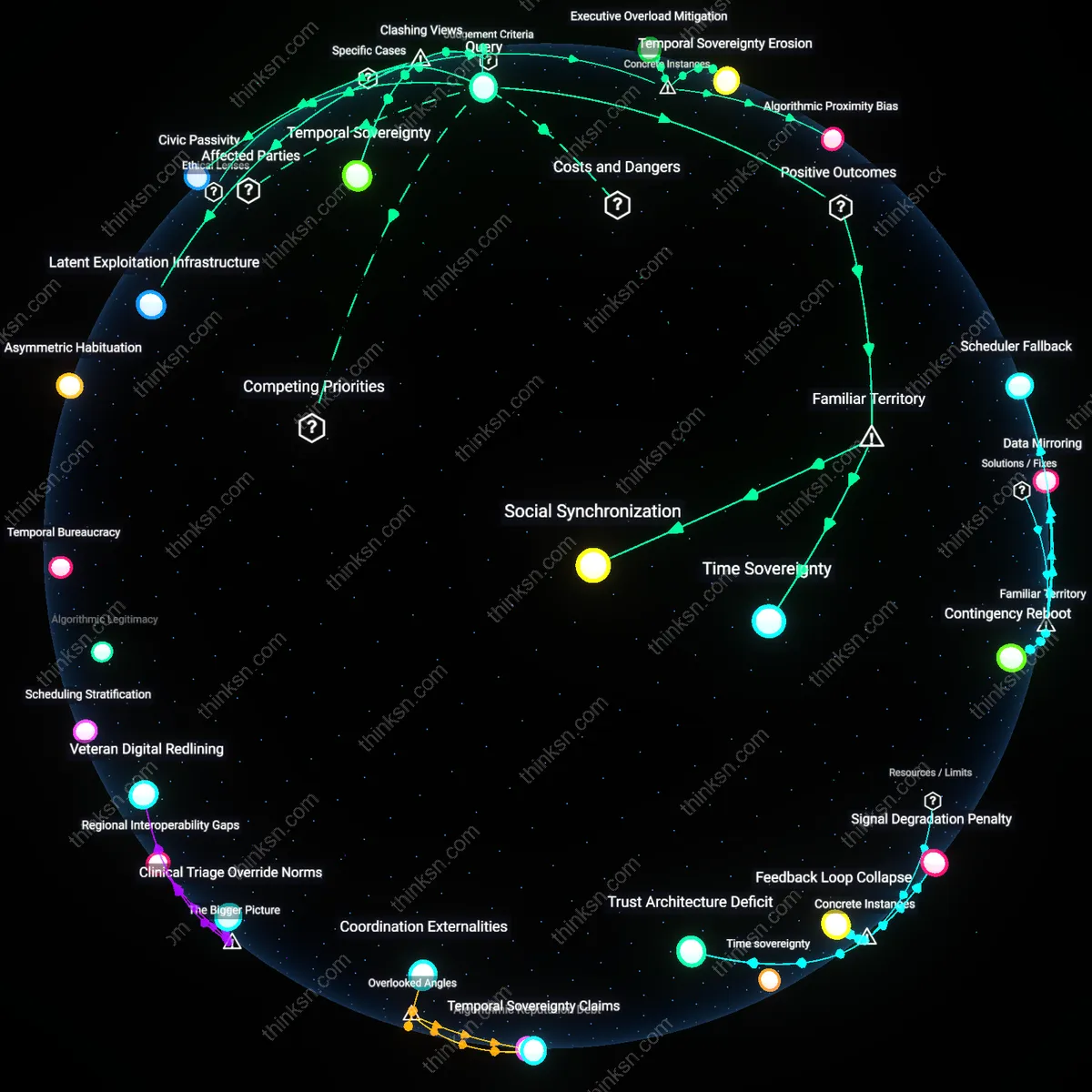

Regulatory Foresight Capacity

Human officers retain value by activating informal intelligence networks across agencies and industries to detect emerging regulatory risks before formal rules crystallize, a function no algorithm can replicate because it depends on trust-based human relationships and ambiguous signal interpretation. This capacity emerges from sustained inter-agency liaison roles and private-sector engagement forums where subtle shifts in policy language or enforcement priorities are shared unofficially, allowing officers to recalibrate compliance strategies in real time. The significance lies in the fact that rule-based AI systems are constrained by codified data, while human officers operate within a dynamic epistemic ecosystem where meaning is negotiated, not parsed — a systemic advantage rooted in social proximity to regulatory genesis points.

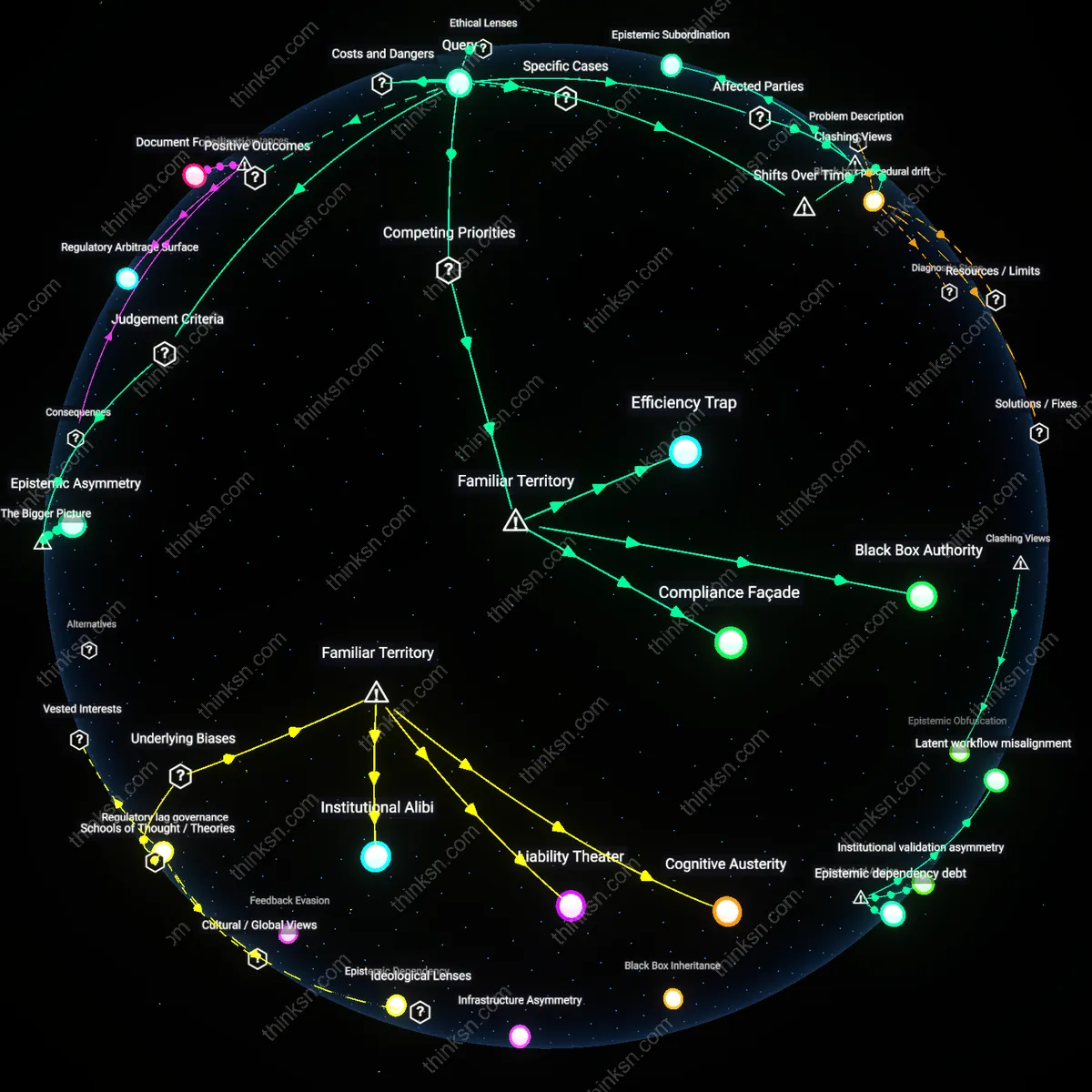

Interpretive Authority Anchoring

During regulatory shocks, human officers prove essential by exercising interpretive authority to stabilize organizational compliance responses, leveraging their position within legal-executive feedback loops that grant them access to provisional guidance from rule-makers. Unlike AI, which requires stable input parameters, officers can negotiate ambiguity by consulting regulators directly, referencing precedent flexibly, and justifying temporary deviations under principles of good faith. This function is systemically critical because regulatory shifts often create implementation vacuums where literal compliance is impossible — and only humans embedded in institutional trust networks can secure legitimacy for adaptive responses, preventing systemic paralysis.

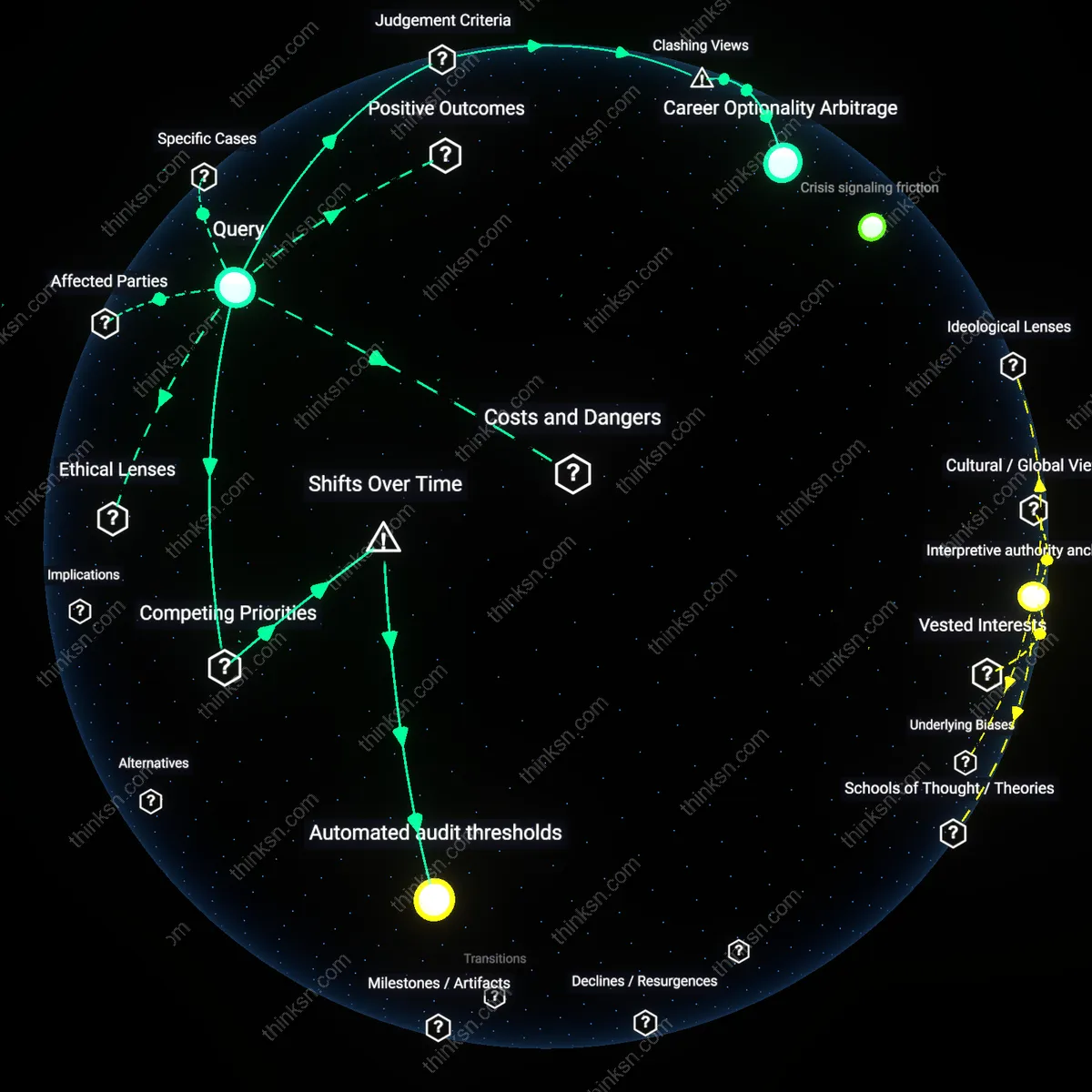

Crisis Signaling Friction

Human officers serve as essential friction points that slow down automated compliance systems during regulatory upheaval, using procedural judgment to suspend algorithmic enforcement when outputs risk legal or reputational harm. This braking function operates through hierarchical override protocols in financial and health regulators, where officers must sign off on high-impact decisions before they are executed. The underappreciated systemic role is not decision-making per se but the intentional introduction of deliberative delay: a safeguard that preserves institutional accountability when AI would otherwise accelerate compliance missteps. Human worth here lies not in speed but in strategic inertia.
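The sign-off step described above can be sketched as a simple gate. The Python below is an illustration under stated assumptions: the `impact` score, the `0.7` threshold, and the `SignOffGate` class are hypothetical and are not drawn from any regulator's actual protocol. Low-impact decisions pass through automatically, while high-impact ones are held until a named officer approves or rejects them.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    EXECUTED = "executed"
    HELD = "held"
    REJECTED = "rejected"


@dataclass
class Decision:
    action: str
    impact: float                     # hypothetical 0..1 score from the automated system
    status: Status = Status.HELD
    signed_by: Optional[str] = None   # stays None for auto-executed decisions


class SignOffGate:
    """Holds high-impact automated decisions until an officer reviews them."""

    def __init__(self, threshold: float = 0.7):
        self.threshold = threshold    # assumed cut-off, chosen for illustration
        self.pending = []

    def submit(self, decision: Decision) -> Decision:
        # Below the threshold, the automated output executes immediately.
        if decision.impact < self.threshold:
            decision.status = Status.EXECUTED
        else:
            # At or above it, execution is deliberately delayed.
            decision.status = Status.HELD
            self.pending.append(decision)
        return decision

    def review(self, decision: Decision, officer: str, approve: bool) -> Decision:
        if decision not in self.pending:
            raise ValueError("decision is not awaiting sign-off")
        self.pending.remove(decision)
        decision.signed_by = officer  # records who is accountable
        decision.status = Status.EXECUTED if approve else Status.REJECTED
        return decision


# Usage with made-up actions.
gate = SignOffGate()
routine = gate.submit(Decision("flag duplicate filing", impact=0.2))
risky = gate.submit(Decision("freeze client accounts", impact=0.9))
held_before_review = risky.status
gate.review(risky, officer="officer-1", approve=False)
```

The design choice worth noting is that the delay is structural: nothing above the threshold can reach `EXECUTED` without a `signed_by` value, which is what ties the outcome to an accountable person.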