Is Deepening Domain Skills Worth the Trade-Off in AI Prompt Engineering?

The analysis identifies five key thematic connections.

Key Findings

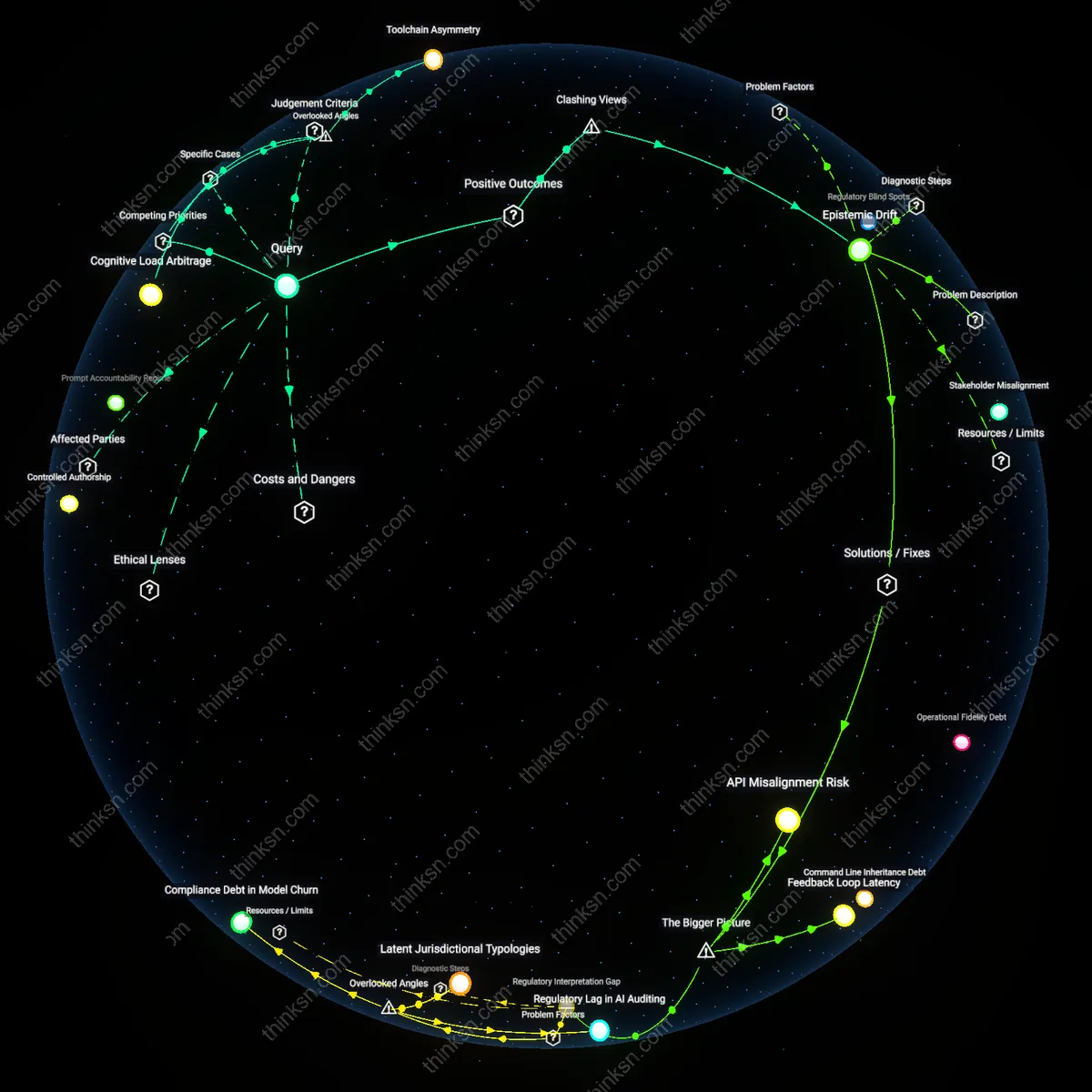

Epistemic Drift

Deeper expertise in technical writing makes AI-integrated workplaces more resilient by counteracting epistemic drift: the gradual loss of accuracy that follows from overreliance on large language models trained on degraded or circular sources. As LLMs increasingly draw on poorly sourced online content that other LLMs have shaped, technical writers with rigorous research and verification skills become critical guardians of accuracy. This matters most in regulated sectors such as medical device documentation and aerospace compliance, where errors can propagate with catastrophic results. The writer's role shifts from passive documentation to active truth maintenance, an underrecognized stabilizing function that resists the degradation of institutional knowledge. That function challenges the notion that prompt engineering's agility is inherently superior in dynamic environments.

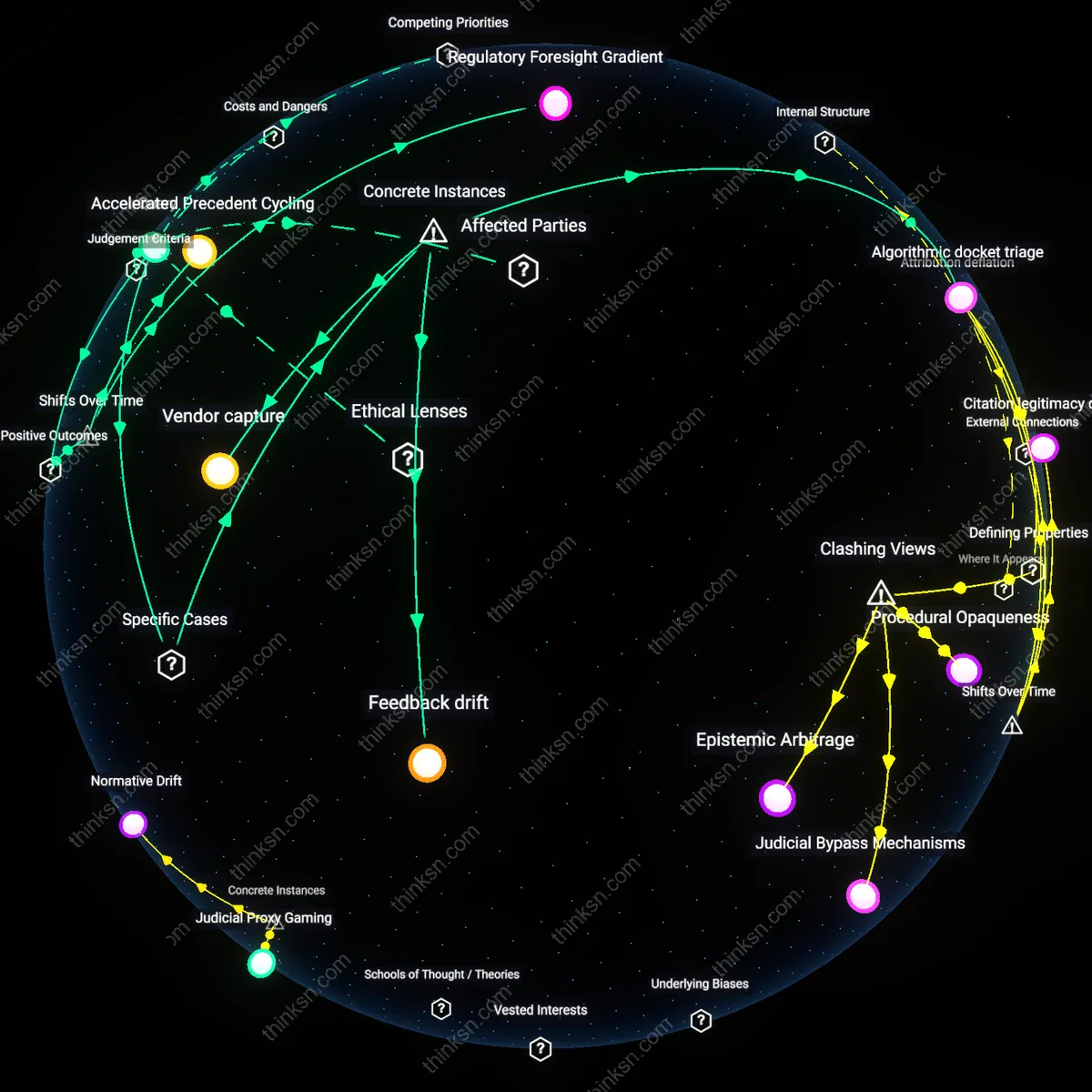

Labor Stratification

Choosing prompt engineering over technical writing accelerates labor stratification within knowledge industries because it privileges speed and interface manipulation over accountability and traceability. The effect is strongest in consultancies and digital agencies that service rapid AI adoption. Firms like Accenture and Deloitte now incentivize prompt tuning as a billable skill and position it as a premium service despite its shallow learning curve, while relegating traditional technical writers to lower-tier roles focused on post-processing AI outputs. The result is a new hierarchy in which power accrues not to those who ensure semantic fidelity but to those who master poorly explained model behaviors. Seen this way, the push toward prompt engineering is less about utility and more about redefining expertise in ways that obscure responsibility and consolidate control among technical intermediaries.

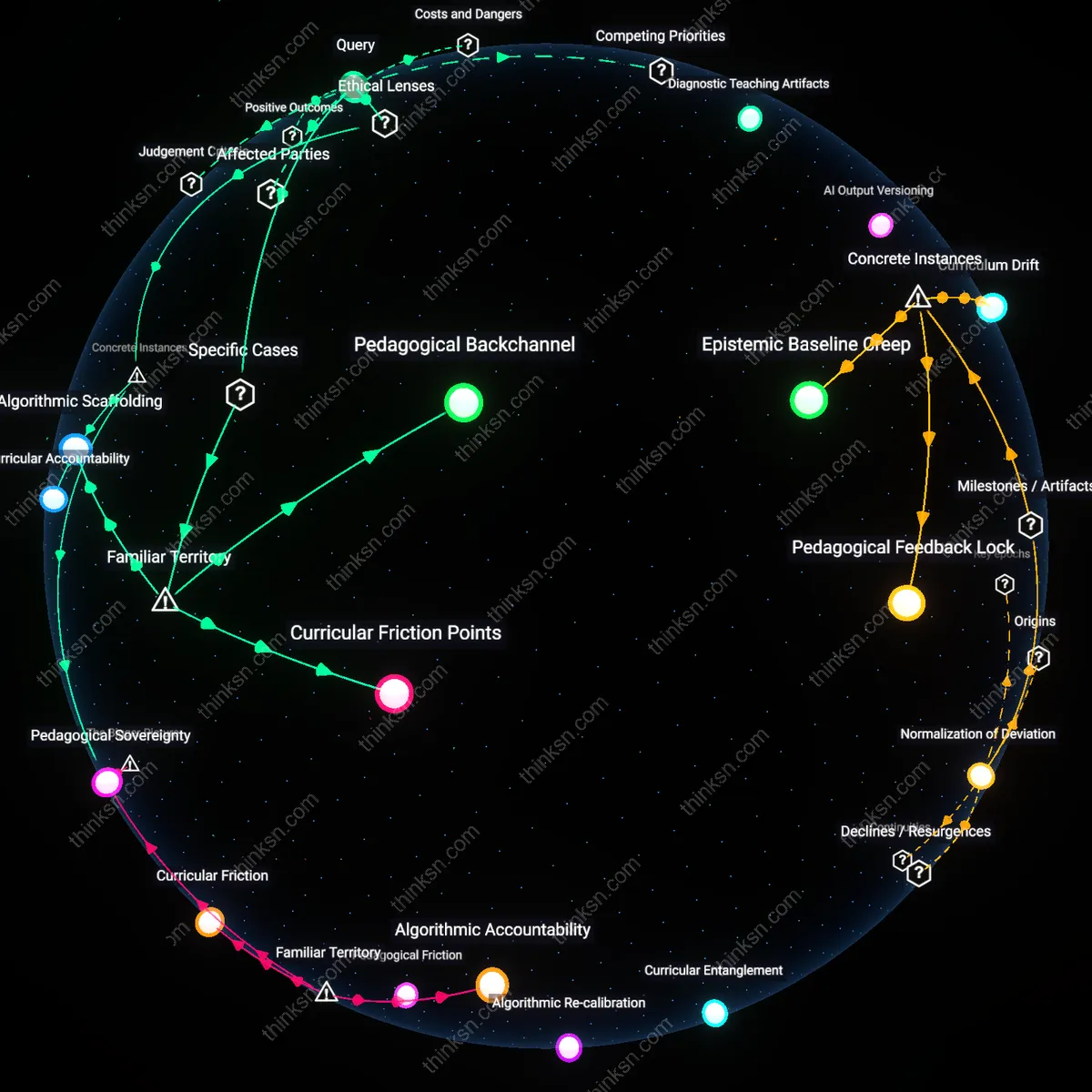

Cognitive Load Arbitrage

Technical writing expertise deserves priority over AI prompt engineering because mastering domain-specific knowledge expression reduces cognitive load in human-AI collaboration loops, a bottleneck that most organizations face without acknowledging it. When technical writers encode deep procedural knowledge into structured, model-agnostic language, they lower the mental effort non-experts need to generate accurate AI outputs, which keeps the skill indispensable even as prompt tools proliferate. This dynamic is underappreciated because most analyses focus on prompt efficiency rather than on the hidden tax of interpretive labor that organizations pay when subject-matter nuance is lost in translation to AI systems.
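As a rough illustration of what "structured, model-agnostic language" could look like in practice, the sketch below stores a procedure as plain data and renders it to text that any model or human reader can consume. The `Step` and `Procedure` names, their fields, and the sample content are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Step:
    """One procedural step plus the expert context a non-expert would lack (hypothetical schema)."""
    action: str
    rationale: str
    caution: str = ""


@dataclass
class Procedure:
    title: str
    steps: List[Step] = field(default_factory=list)

    def render(self) -> str:
        """Render the procedure as plain numbered text, with no model-specific syntax."""
        lines = [self.title]
        for number, step in enumerate(self.steps, start=1):
            lines.append(f"{number}. {step.action}")
            lines.append(f"   Why: {step.rationale}")
            if step.caution:
                lines.append(f"   Caution: {step.caution}")
        return "\n".join(lines)


# Illustrative content only; not taken from any real procedure.
procedure = Procedure(
    title="Calibrate sensor",
    steps=[
        Step(
            action="Power down the unit.",
            rationale="Calibration values are read at startup.",
            caution="Wait for the status light to turn off.",
        ),
        Step(
            action="Run the calibration routine.",
            rationale="Resets the baseline reading.",
        ),
    ],
)
print(procedure.render())
```

Because the rationale and caution travel with each step, a non-expert can paste the rendered text into any AI tool without having to reconstruct the missing context.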

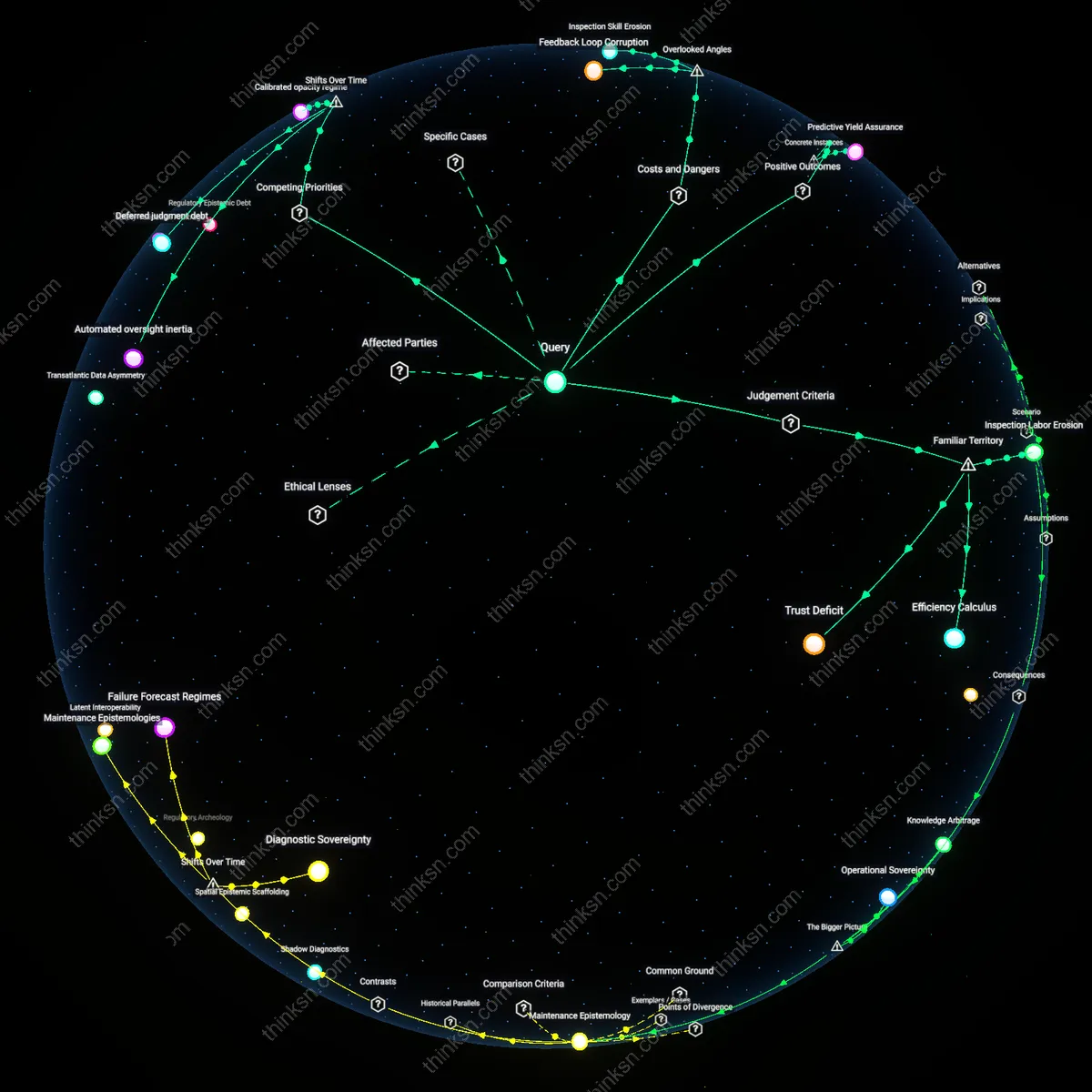

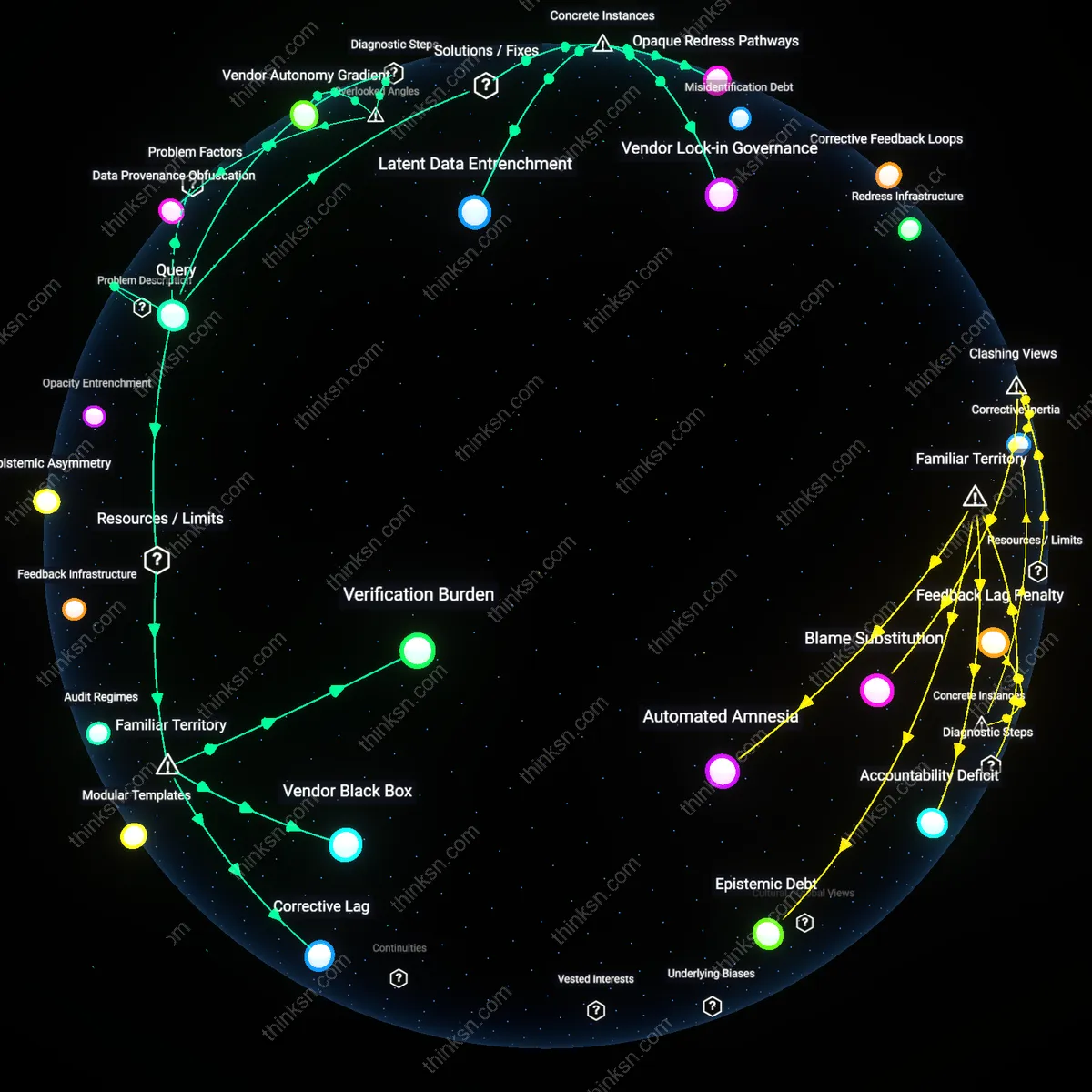

Toolchain Asymmetry

Technical writing offers greater long-term leverage than prompt engineering because of where it sits in enterprise toolchains: documentation systems are often legacy-bound, regulated, and resistant to automation churn. Technical writers who understand both compliance architecture (e.g., FDA validation logs, ISO-mandated procedures) and AI augmentation can control the flow of information across semi-automated workflows, which makes them gatekeepers of reliable AI integration. This dimension is rarely acknowledged because discussions tend to assume that AI tools operate in frictionless environments. They ignore how institutional inertia grants outsized influence to those who manage the inputs to AI systems within constrained, high-stakes pipelines.

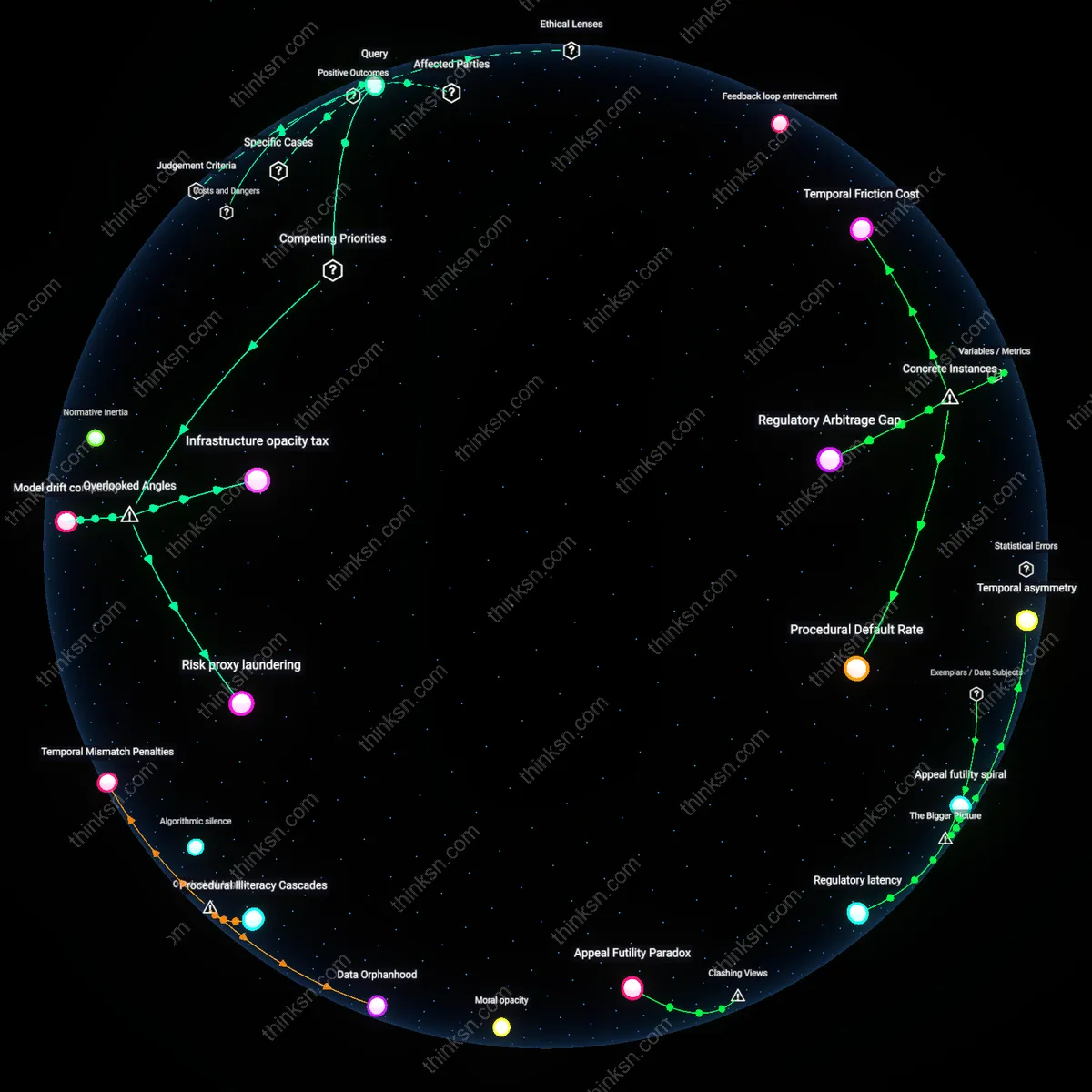

Epistemic Debt

Choosing technical writing over prompt engineering mitigates organizational epistemic debt: the accumulating cost of relying on opaque, context-poor AI outputs that erode collective understanding of core processes. Technical writers who embed traceable reasoning, source provenance, and revision logic into documents create knowledge scaffolding that resists AI-driven obsolescence, whereas prompt engineers optimize for immediate output fluency without addressing long-term comprehension decay. This trade-off is invisible in most career advice because success metrics favor short-term productivity gains and so obscure how the loss of explanatory depth weakens organizational resilience over time.
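One way to picture traceable provenance is a small record attached to each claim in a document. The following is a minimal sketch, assuming a hypothetical `Claim` structure with `sources` and `revisions` fields; none of these names come from an existing standard or tool, and the sample claims are placeholders.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Revision:
    date: str    # ISO date string, e.g. "2024-01-15"
    author: str
    reason: str  # why the claim changed, not just what changed


@dataclass
class Claim:
    text: str
    sources: List[str] = field(default_factory=list)
    revisions: List[Revision] = field(default_factory=list)

    def is_traceable(self) -> bool:
        """In this sketch, a claim counts as traceable if it cites at least one source."""
        return len(self.sources) > 0


def untraceable(claims: List[Claim]) -> List[str]:
    """Return the text of every claim that has no recorded source."""
    return [claim.text for claim in claims if not claim.is_traceable()]


# Placeholder data for illustration only.
claims = [
    Claim(
        text="Torque the fitting to the value in the maintenance manual.",
        sources=["Maintenance manual, section 4 (placeholder citation)"],
        revisions=[Revision("2024-01-15", "J. Doe", "Aligned wording with the updated manual.")],
    ),
    Claim(text="The fitting rarely needs replacement."),
]
print(untraceable(claims))
```

A check like `untraceable` makes the debt visible: any statement without a source shows up as an item to verify before it is reused, whether by a person or as input to an AI system.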