Is CFPB Failing to Protect Digital Consumers Against Big FinTech?

Analysis reveals 5 key thematic connections.

Key Findings

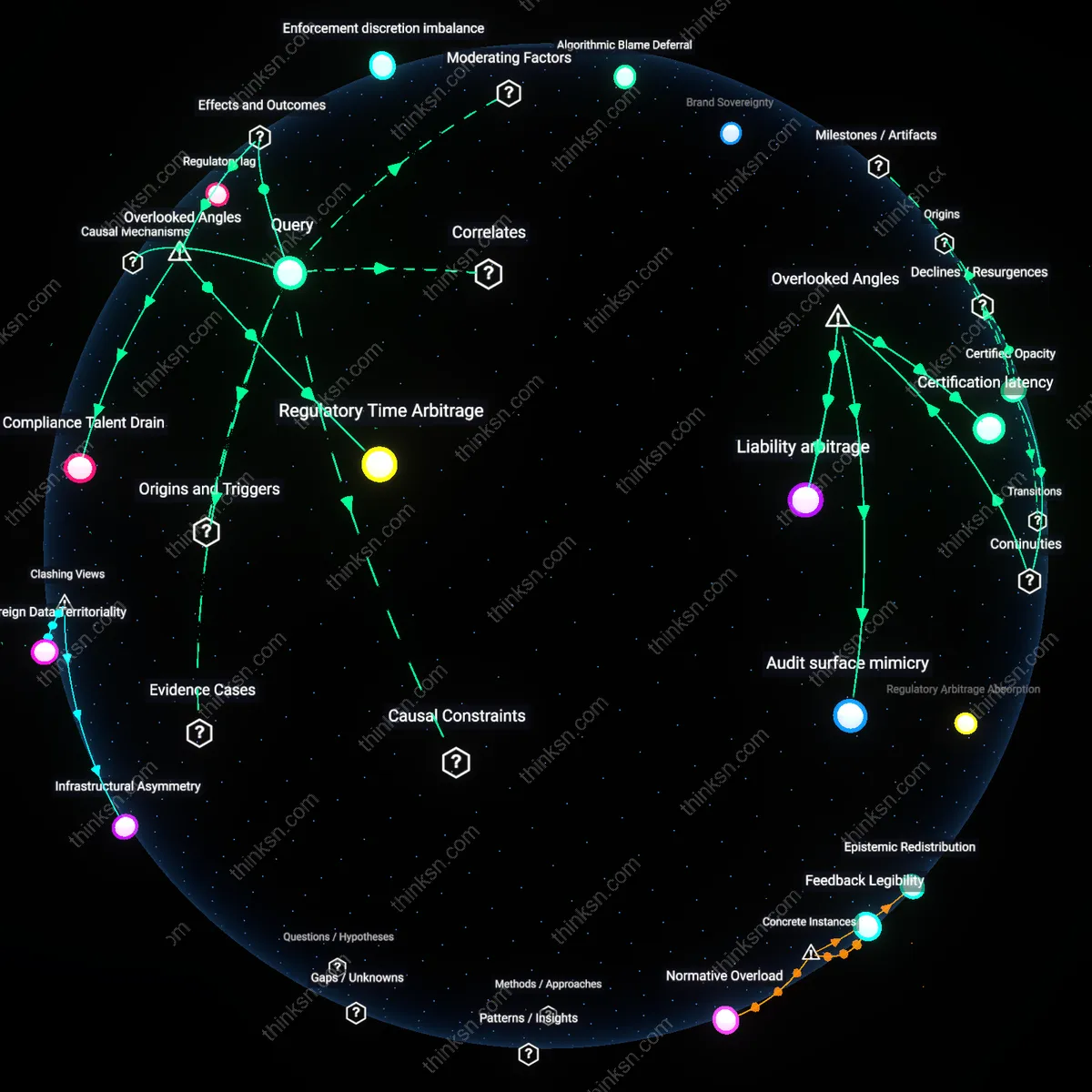

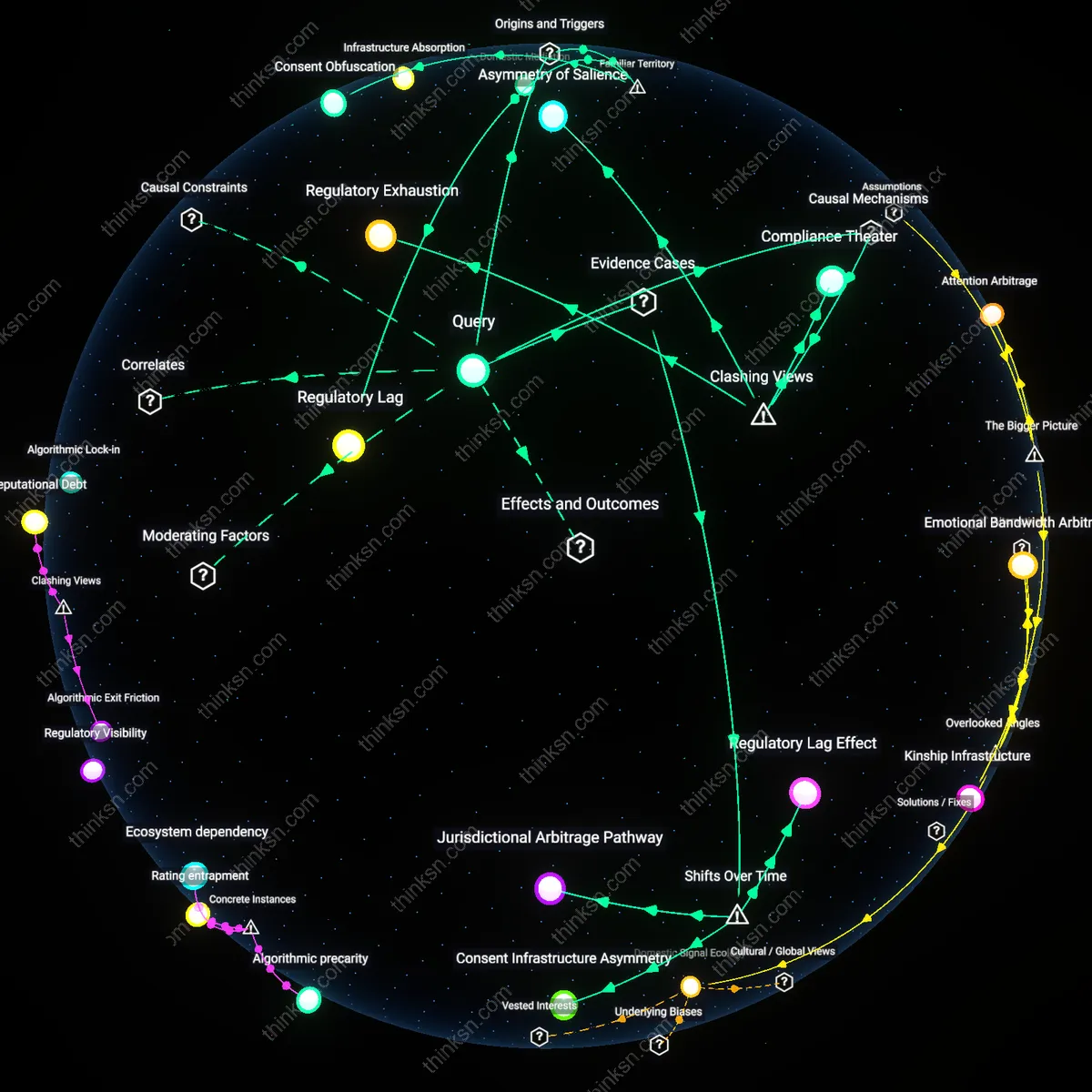

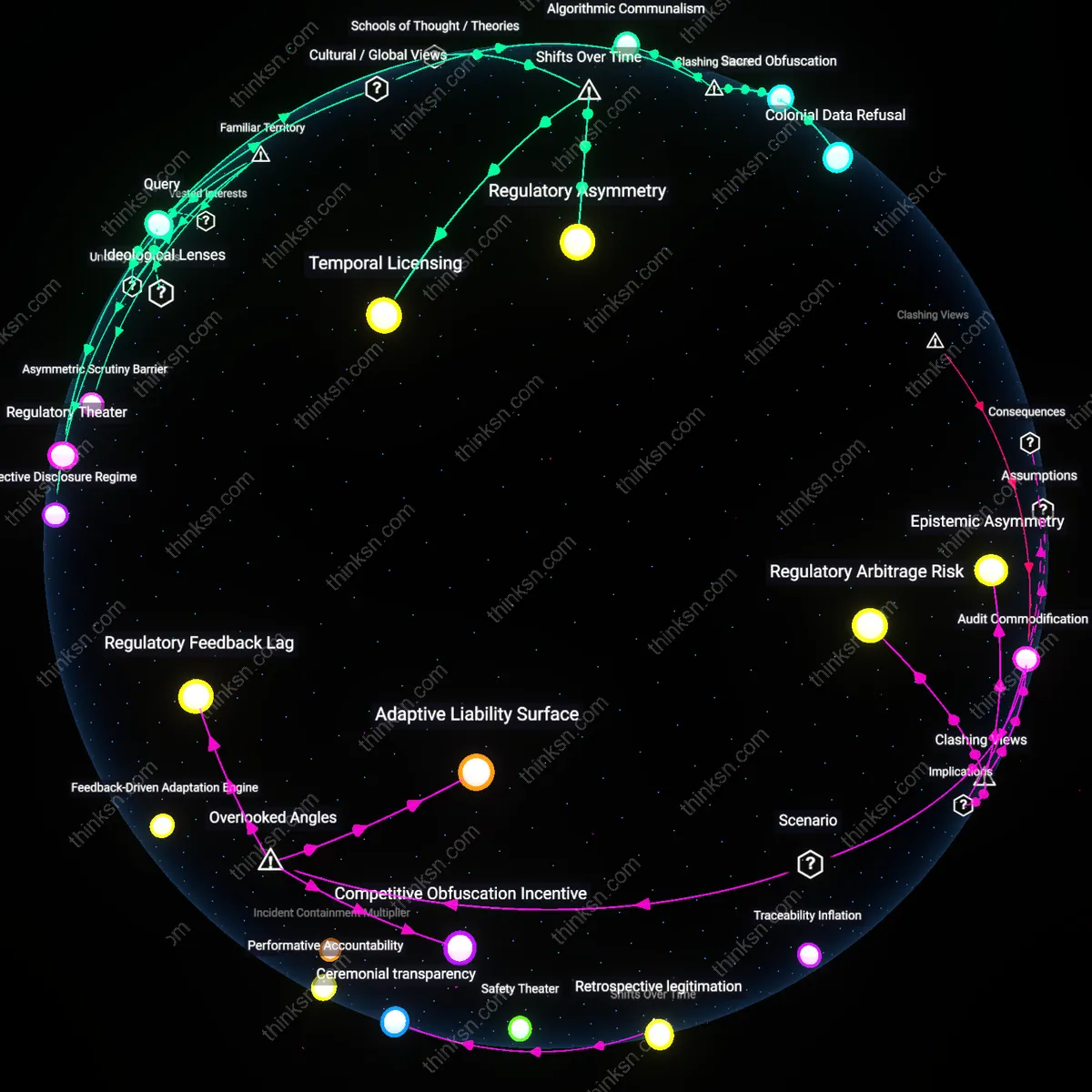

Regulatory lag

The CFPB’s enforcement struggles reveal that rulemaking cycles cannot keep pace with rapid product iteration at major fintech firms. Tech-driven financial products deploy algorithmic features and user interface designs faster than traditional regulatory processes can assess or respond to harms, creating windows of unchecked consumer exposure. This gap is most pronounced in areas like embedded finance or real-time lending, where the CFPB must rely on ex-post investigations rather than anticipatory oversight. The underappreciated reality is that speed isn’t just a technical advantage for fintechs—it’s a structural shield against accountability.

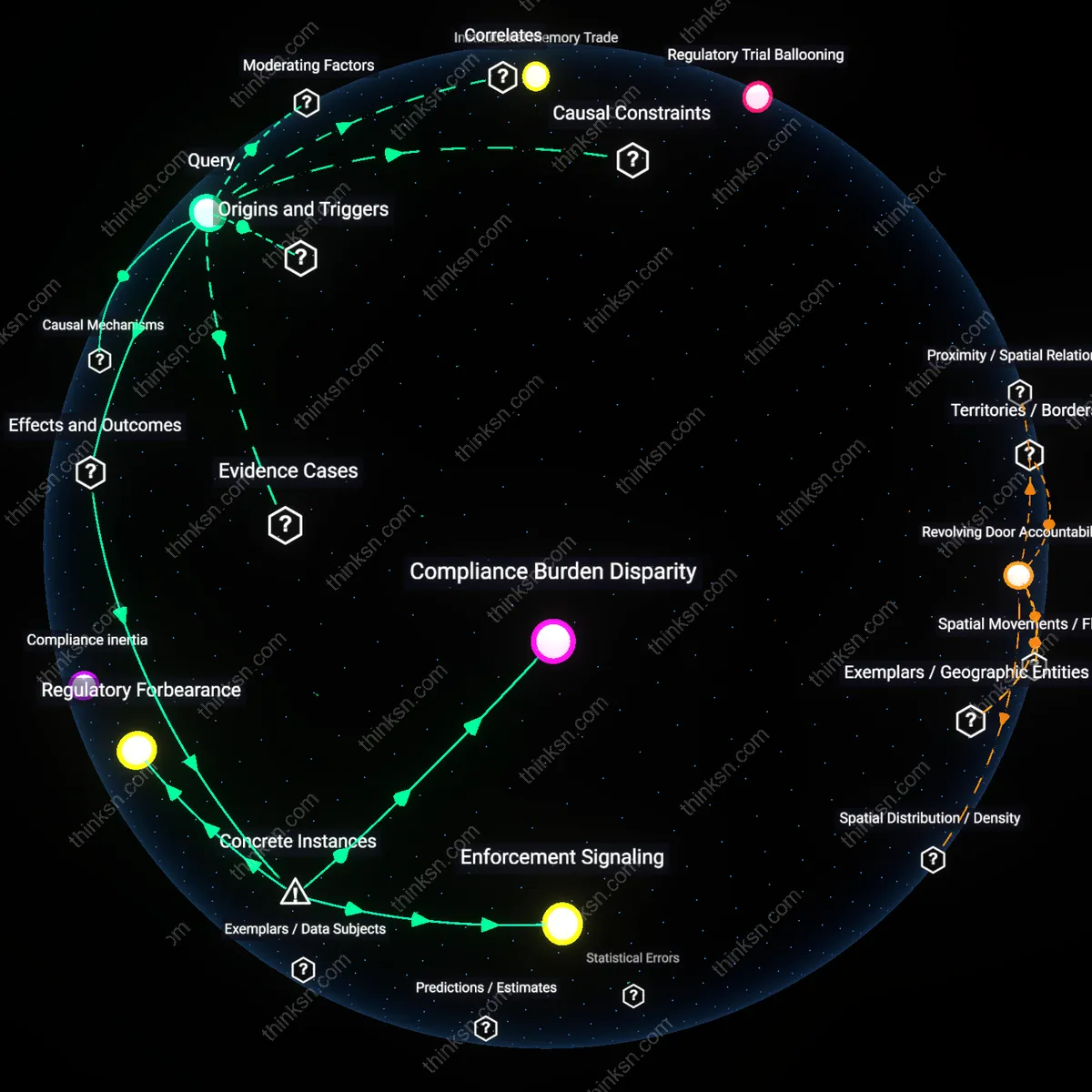

Enforcement discretion imbalance

The CFPB’s reliance on negotiated settlements over litigation reveals how asymmetrical resources tilt enforcement outcomes in favor of well-funded fintechs. Large companies deploy armies of compliance officers and legal teams to delay, narrow, or reframe allegations, forcing the CFPB to prioritize visible wins over systemic challenges. This dynamic renders penalties ritualistic rather than corrective, especially when fines are treated as cost-of-doing-business calculations. What looks like agency weakness is in part a rational strategy by firms that exploit the agency’s capacity constraints, a pattern familiar from Wall Street enforcement after 2008.

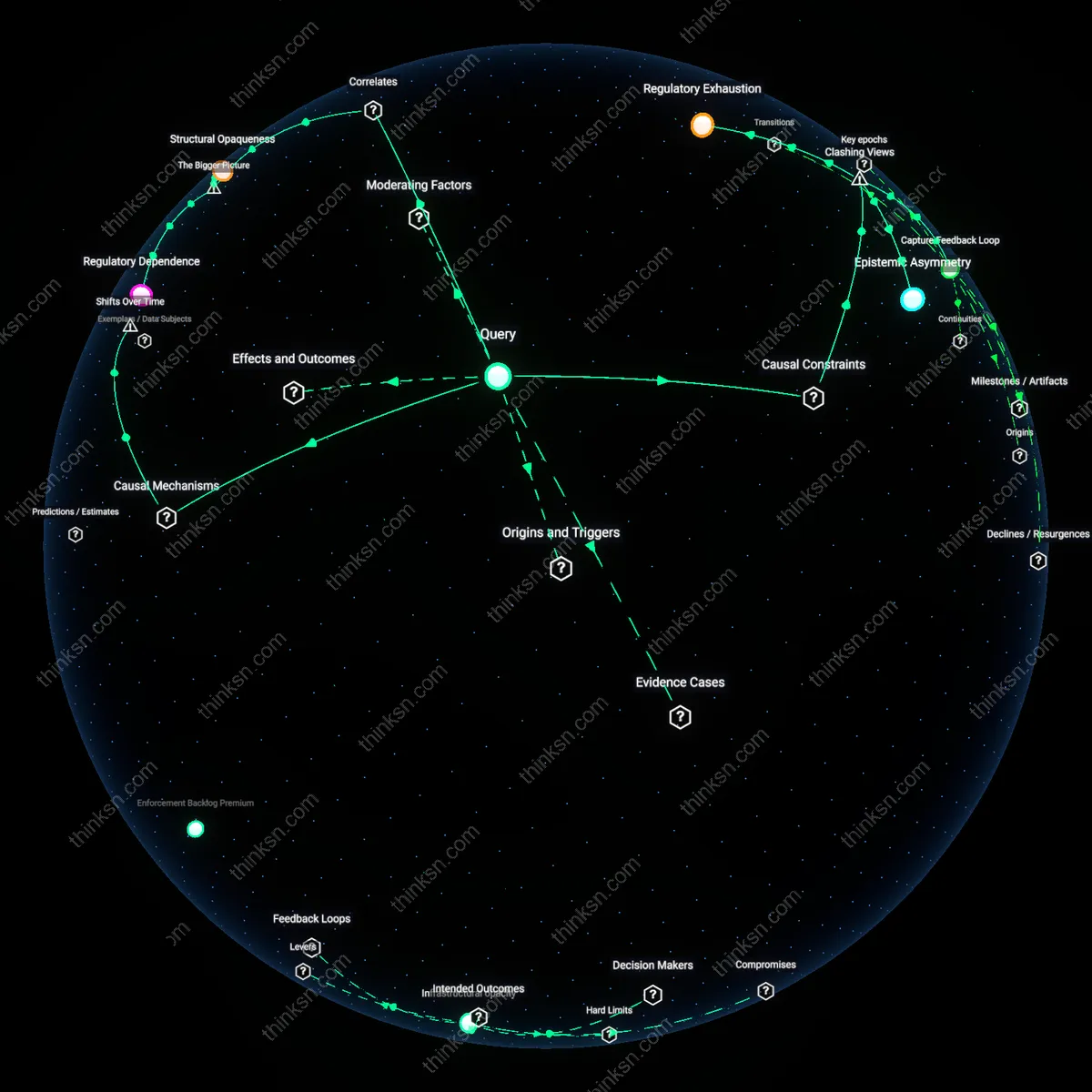

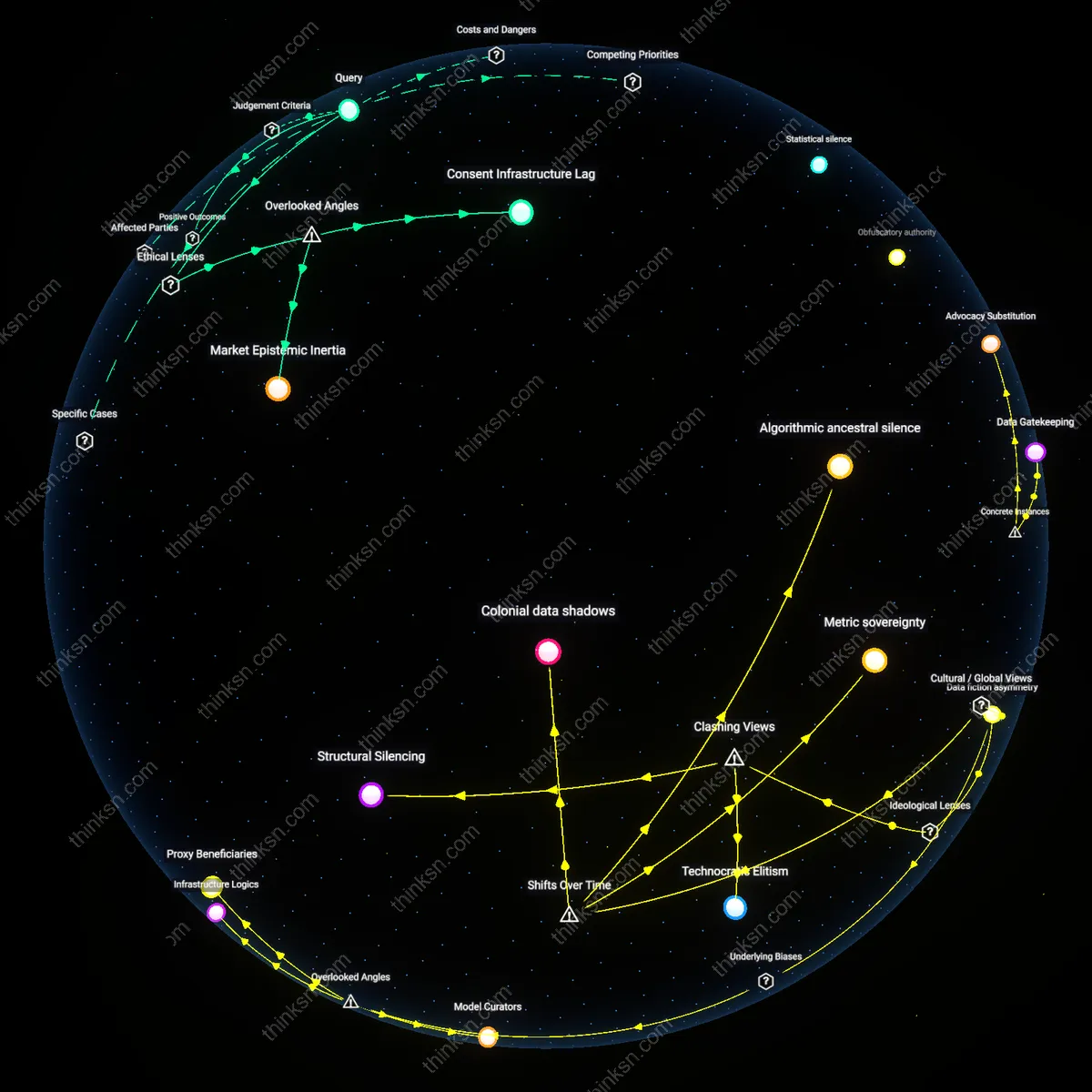

Digital opacity

Fintech companies obscure consumer harm through complex data flows and opaque algorithms, making it difficult for the CFPB to isolate violations under existing consumer protection statutes. Personalization engines, dynamic pricing models, and black-box credit scoring systems generate outcomes that are individually rational but collectively discriminatory or deceptive—yet hard to prove under fair lending or UDAAP frameworks. The public assumes transparency is a regulatory default, but in digital finance, the architecture itself conceals misconduct, turning code into a compliance alibi.

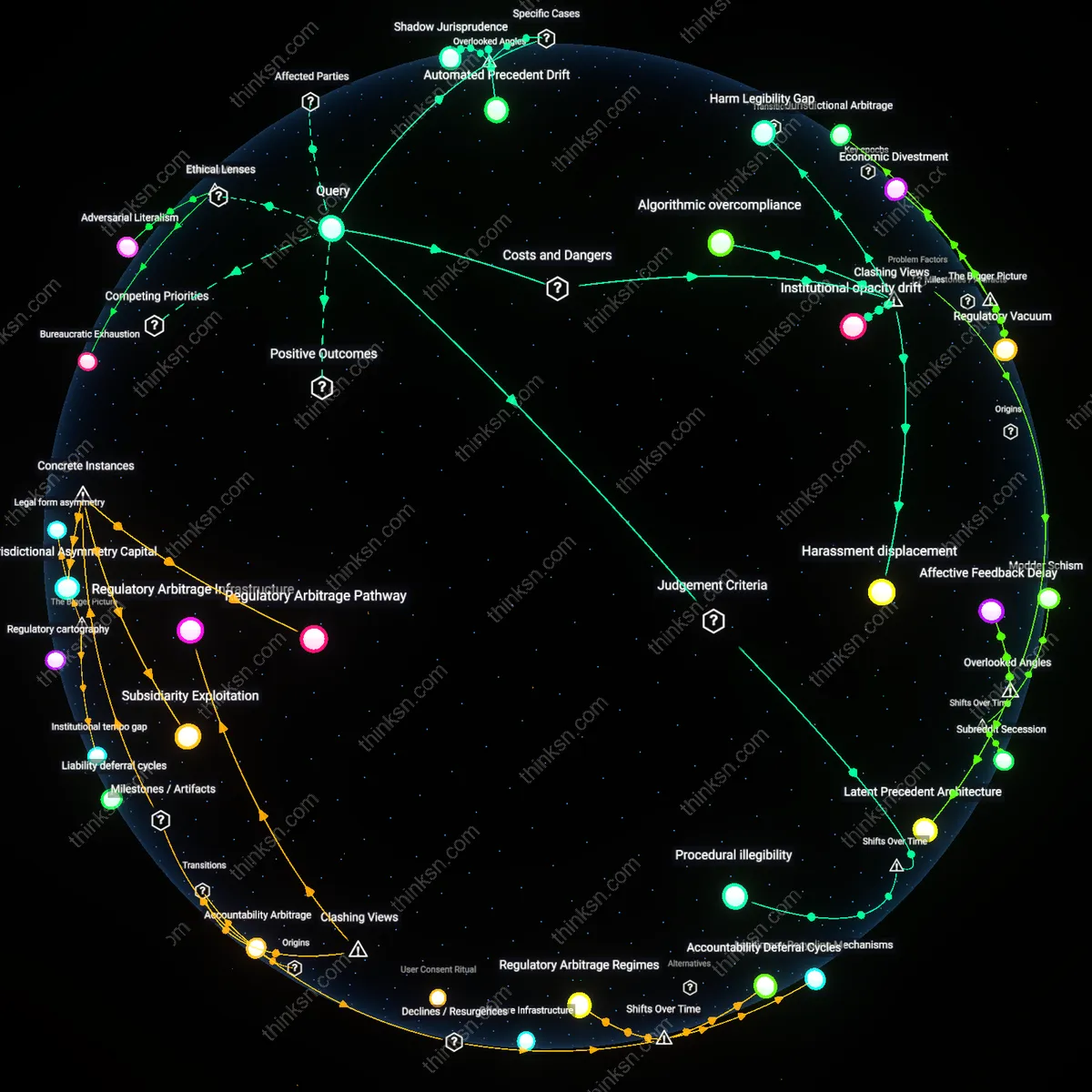

Regulatory time arbitrage

The CFPB’s delayed enforcement timelines enable fintech firms to operationalize legal gray zones before penalties take effect, effectively monetizing regulatory lag. Fintech companies exploit the multi-year gap between product launch and enforcement by designing business models around rapid customer acquisition and data harvesting, knowing penalties rarely precede market dominance. This creates a de facto subsidy where firms treat fines as amortized costs rather than deterrents, altering the risk calculus of noncompliance in digital markets. The overlooked mechanism is the weaponization of bureaucratic pace: most analyses read this temporal misalignment as agency weakness rather than as a predictable vulnerability that firms actively exploit.

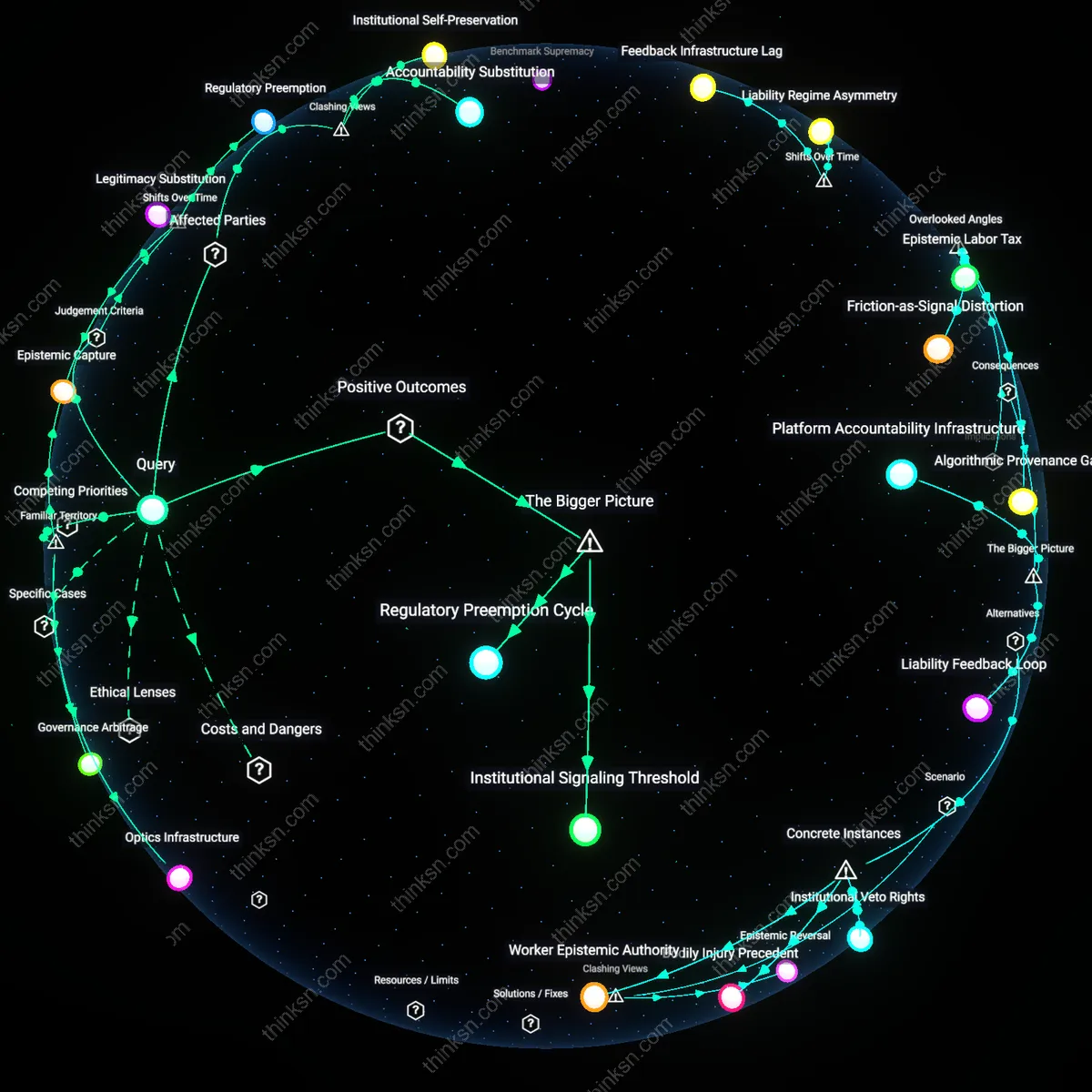

Compliance talent drain

Persistent underfunding of the CFPB has led to a strategic exodus of specialized compliance officers to fintech firms, shifting regulatory foresight from public to private control. Former examiners bring institutional knowledge of enforcement and detection thresholds into private-sector roles, where they design regulatory evasion into product roadmaps before launch. This quiet migration turns compliance into a competitive advantage for firms that hire these insiders, creating a regulatory asymmetry in which the people most capable of detecting violations instead ensure that, on paper, none arise. This hidden transfer of institutional memory undermines enforcement predictability and is rarely acknowledged in discussions of regulatory capacity.