Should Architects Learn AI or Secure Legacy Systems?

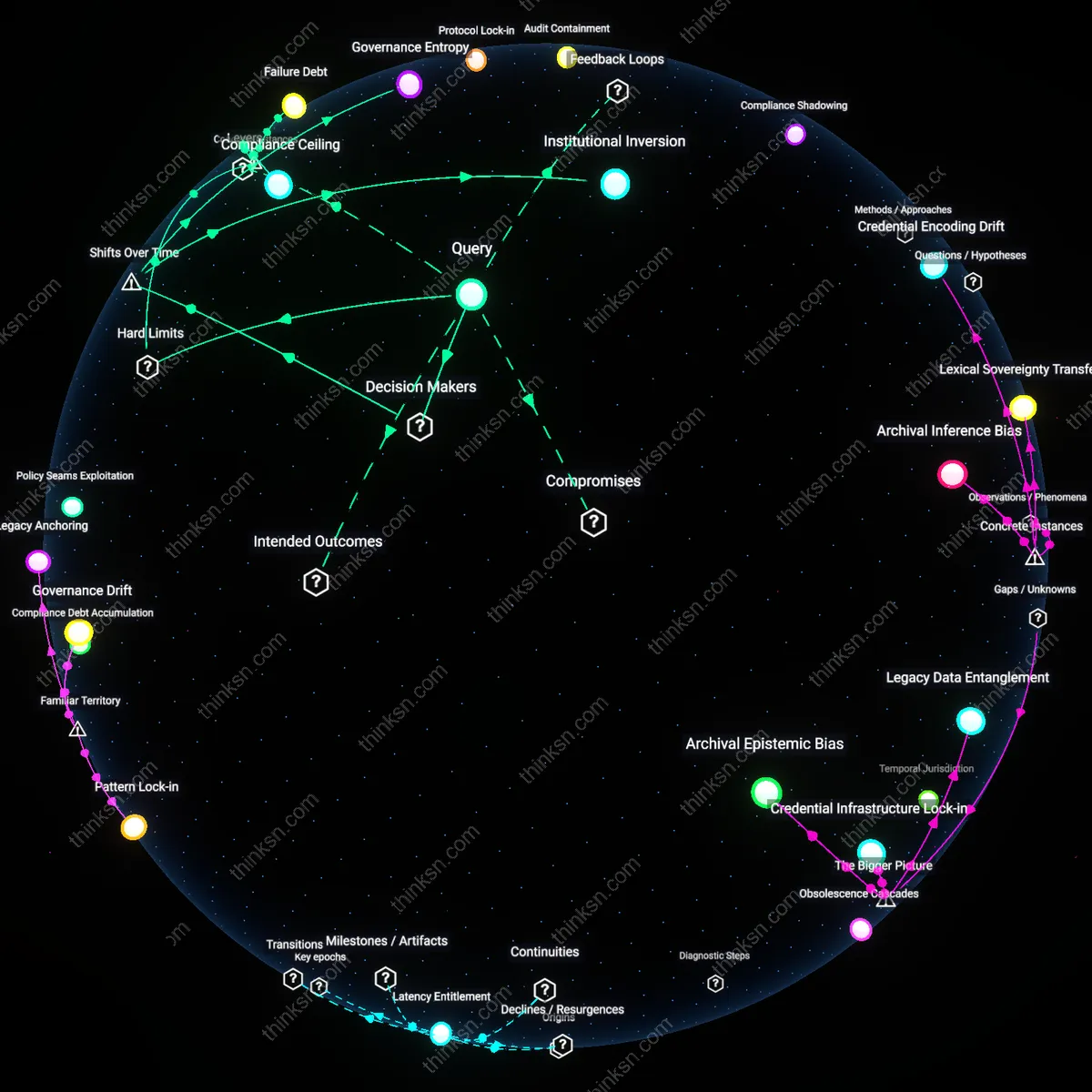

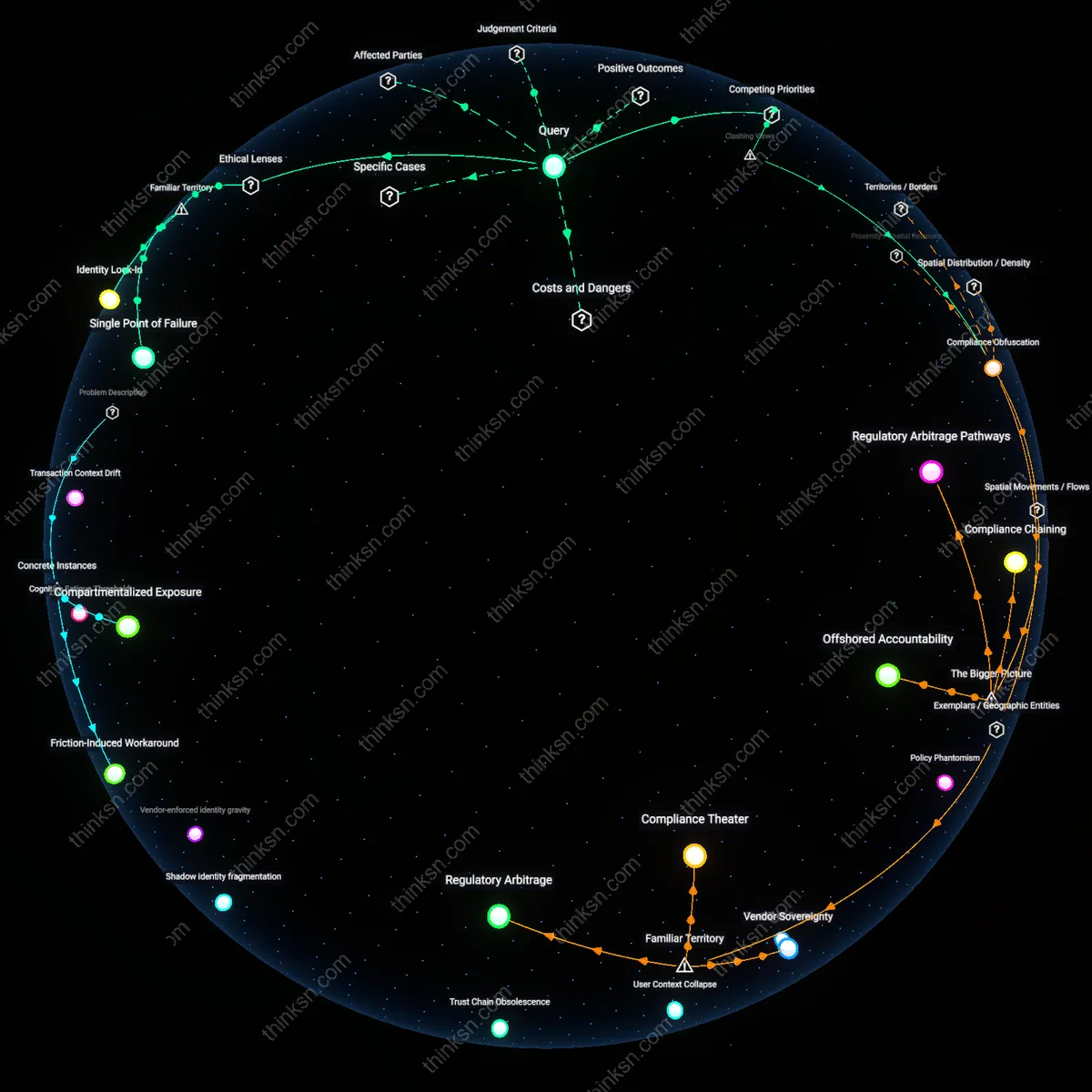

Analysis reveals 5 key thematic connections.

Key Findings

Compliance Ceiling

A senior software architect must prioritize legacy system security over AI integration in heavily regulated environments, because non-compliance can carry severe regulatory and legal consequences. The 2017 Equifax breach illustrates the risk: failure to patch a known vulnerability (in the Apache Struts framework) on legacy infrastructure led to one of the largest data breaches in U.S. history, at a time when attention was already turning toward predictive analytics. Regulatory frameworks, here enforced by the FTC and state regulators, impose a hard boundary beyond which even transformative AI capabilities cannot justify a weakened security posture. The permissible innovation envelope is therefore smaller than technological feasibility suggests.

Failure Debt

In 2018, the UK’s National Health Service (NHS) chose to delay integrating AI-driven diagnostic tools in favor of stabilizing aging IT systems across regional trusts, recognizing that unreliable data pipelines from insecure legacy infrastructure would poison the output of any downstream AI model. The decision illustrates how unresolved technical failures in core systems accrue as failure debt: each patchwork fix increases the risk of cascading breakdowns. The strategic trade-off is therefore not between security and innovation but between operational survival and irreversible systemic collapse.

Trust Surface

When Microsoft Azure integrated AI services into its cloud platform while maintaining support for legacy Windows Server environments, it created a dual-track architecture that treated legacy systems not as obstacles but as trust anchors, with security protocols from proven systems governing the rollout of AI components. This approach, visible in the 2020 Azure Sentinel deployment, shows that legacy systems can define the trust surface of new AI integrations. The strategic trade-off shifts from replacement to orchestration, and security becomes an enabler of innovation rather than an inhibitor.

Governance Entropy

Senior software architects must align AI integration and legacy security decisions through institutional risk forums, not technical benchmarks. Following the post-2017 shift toward decentralized AI deployment, authority over system risk has diffused from centralized IT governance to fragmented product teams, cloud providers, and compliance units—producing a condition where no single actor controls the full risk surface. This mechanism reveals that strategic trade-offs are no longer decided by architectural merit but by which institution claims jurisdiction over risk mitigation, making governance structure the determining factor in outcomes. What is non-obvious is that technical debt in legacy systems is increasingly leveraged as a regulatory shield, delaying AI adoption under the guise of security compliance.

Institutional Inversion

The strategic trade-off is resolved when cybersecurity audit regimes become the primary vehicle for AI adoption in regulated sectors. Since the 2020 surge in AI-driven compliance automation, legacy security mandates—once barriers to innovation—have been repurposed by financial and healthcare institutions to legitimize embedding AI models as audit-trail generators, fraud detectors, or access controllers, flipping the relationship where AI now enters through the backdoor of security infrastructure. This reversal operates via regulatory reporting systems that reward automated oversight, making compliance teams the unintended champions of AI integration. The non-obvious outcome is that security is no longer a constraint on AI but has become its institutional Trojan horse.

Deeper Analysis

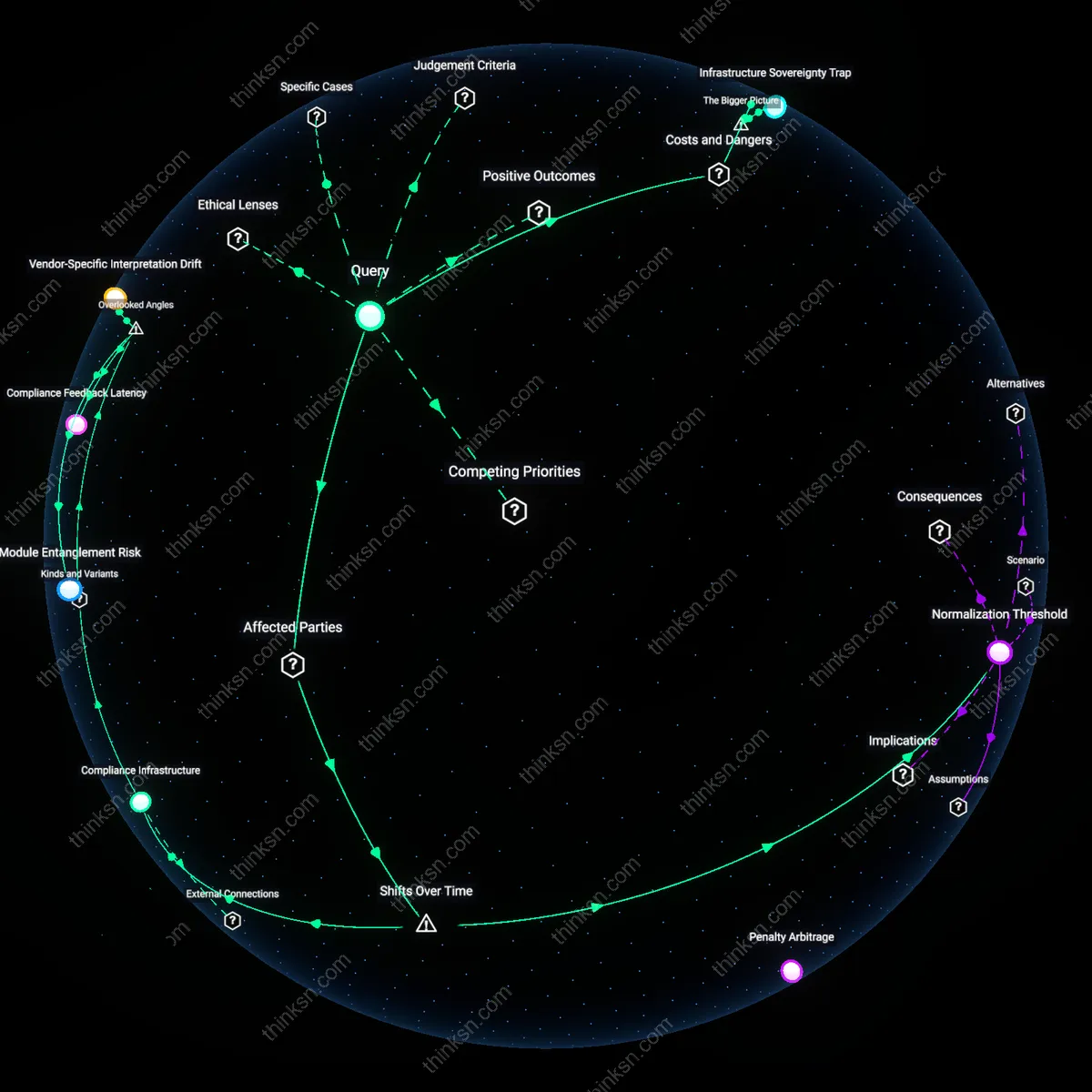

How do the security protocols from legacy systems actually shape the design and placement of AI components in a dual-track architecture like Azure's?

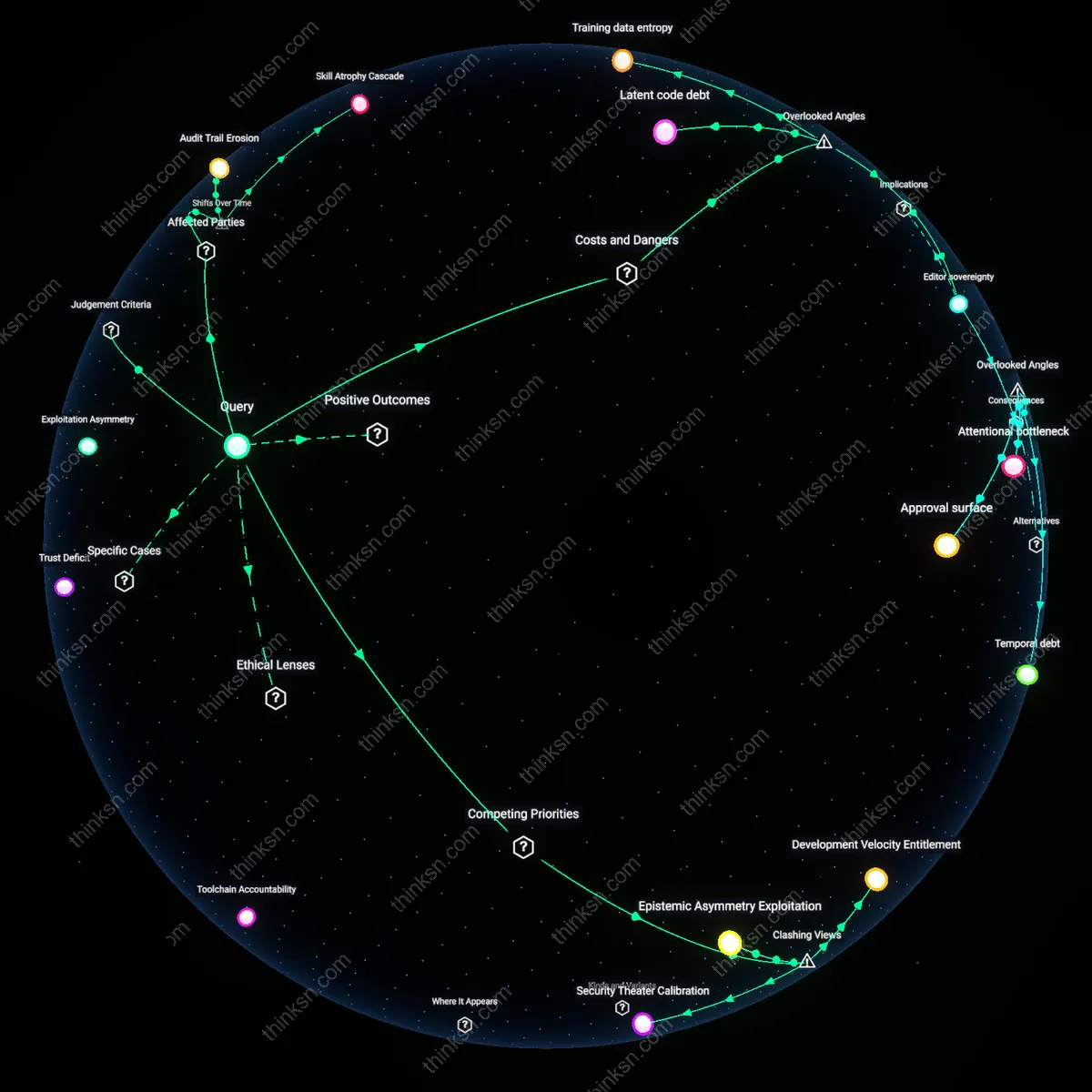

Protocol Inertia

Legacy security protocols directly determine where AI components can be provisioned in Azure’s dual-track architecture by mandating compliance with pre-existing identity and access management frameworks designed for monolithic, non-distributed systems. This constraint forces AI modules, especially those performing real-time inference or data ingestion, into isolated trust zones such as Azure Government or air-gapped virtual networks. The driver is not technical necessity but institutional adherence to FIPS 140-2 validated cryptography and FedRAMP-compliant authentication flows that predate machine learning workflows. The non-obvious outcome is that AI placement becomes a function of bureaucratic path dependency rather than computational efficiency, exposing how legacy cybersecurity systems act as silent architects of cloud topology.
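
To make the path-dependency argument concrete, here is a minimal sketch of a placement rule in which inherited compliance requirements, not compute needs, select the trust zone. The `Workload` fields, the zone labels, and the rule ordering are hypothetical illustrations, not an Azure API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    realtime_inference: bool      # latency-sensitive serving
    ingests_regulated_data: bool  # touches data under the legacy regime
    needs_fedramp_auth: bool      # must use the inherited authentication flow


def choose_zone(w: Workload) -> str:
    """Hypothetical placement rule: inherited compliance checks run first.

    The performance attribute (realtime_inference) is consulted only after
    every compliance branch has been ruled out.
    """
    if w.needs_fedramp_auth:
        return "government-cloud"        # isolated sovereign trust zone
    if w.ingests_regulated_data:
        return "isolated-vnet"           # segregated virtual network
    if w.realtime_inference:
        return "low-latency-region"      # the choice performance alone would make
    return "general-region"


fraud = Workload("fraud-scoring", realtime_inference=True,
                 ingests_regulated_data=True, needs_fedramp_auth=False)
print(choose_zone(fraud))  # isolated-vnet
```

The ordering carries the argument: a latency-sensitive workload lands in an isolated zone because the compliance branches are evaluated before the performance branch is ever reached.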

Trust Contagion

Legacy security protocols inadvertently spread risk aversion into AI component design by treating probabilistic models as untrustworthy data conduits. In Azure’s dual-track setup, this confines AI services behind synchronous validation layers originally built for static database transactions. These layers, such as Azure Policy rules evaluated against ARM template deployments and Azure Blueprints, were calibrated for deterministic workloads rather than adaptive AI behavior. As a result, AI deployments are segmented away from real-time decision loops into segregated 'AI enclaves', where outputs must be frozen and audited before integration. The dominant security model thus treats AI not as a participant in decision-making but as a contaminant, and system topology is reshaped around the fear of autonomous propagation.
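
A minimal sketch of the "frozen and audited" enclave pattern, assuming a hypothetical enclave that snapshots each model output, keys it by a SHA-256 content hash, and releases it only after an explicit approval step. The class and method names are illustrative; no Azure service is implied.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class AIEnclave:
    """Holds model outputs until an audit step approves them (hypothetical)."""
    pending: dict = field(default_factory=dict)   # digest -> frozen JSON text
    released: list = field(default_factory=list)  # digests already approved

    def submit(self, output: dict) -> str:
        # Freeze: serialize deterministically and key the snapshot by its hash.
        frozen = json.dumps(output, sort_keys=True)
        digest = hashlib.sha256(frozen.encode("utf-8")).hexdigest()
        self.pending[digest] = frozen
        return digest

    def approve(self, digest: str) -> dict:
        # Release only an output whose snapshot still matches its digest.
        frozen = self.pending.pop(digest)  # KeyError if never submitted
        if hashlib.sha256(frozen.encode("utf-8")).hexdigest() != digest:
            raise ValueError("frozen output was altered before approval")
        self.released.append(digest)
        return json.loads(frozen)


enclave = AIEnclave()
ticket = enclave.submit({"customer": 42, "risk": 0.87})
# Downstream decision loops wait here until an auditor calls approve().
result = enclave.approve(ticket)
```

The latency cost described in the text lives between `submit` and `approve`: the model has already answered, but nothing downstream can act on the answer until the audit step runs.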

Surface Rigidity

The persistence of perimeter-based security models from legacy systems hardens the network surfaces around AI components in Azure. AI services are placed within the primary track despite latency costs, simply because they must remain within reach of centralized SIEM tools such as Azure Sentinel (now Microsoft Sentinel) that rely on deterministic log schemas. Those schemas were built around firewall and Active Directory monitoring, not around model drift or adversarial-input detection, yet they exert a gravitational pull on AI architecture by defining what counts as a 'reportable event', which freezes AI components into legacy observability grids. AI placement thus becomes less about performance optimization and more about ritual compliance with forensic standards that cannot interpret AI-specific threats: the surface area of the security protocol behaves like a fixed material property that the design must work around.
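
The "reportable event" effect can be sketched as a schema gate: an ingestion filter that accepts only events matching a fixed, legacy-style field set and discards everything else. The schema and event shapes below are hypothetical; real SIEM ingestion pipelines are more flexible than this.

```python
# Hypothetical fixed schema inherited from firewall / directory monitoring.
LEGACY_SCHEMA = {"timestamp", "source_ip", "user", "action", "result"}


def is_reportable(event: dict) -> bool:
    """An event is 'reportable' only if it has exactly the legacy fields."""
    return set(event) == LEGACY_SCHEMA


def ingest(events: list) -> tuple:
    """Split events into (kept, dropped) according to the legacy schema."""
    kept, dropped = [], []
    for e in events:
        (kept if is_reportable(e) else dropped).append(e)
    return kept, dropped


events = [
    {"timestamp": "2024-01-01T00:00:00Z", "source_ip": "10.0.0.5",
     "user": "svc-ml", "action": "login", "result": "success"},
    # An AI-specific signal has no slot in the legacy schema.
    {"timestamp": "2024-01-01T00:05:00Z", "model": "fraud-v3",
     "metric": "psi_drift", "value": 0.31},
]
kept, dropped = ingest(events)
```

The drift event is not rejected as malicious. It simply never becomes visible to the monitoring layer, which is the blind spot the paragraph describes.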

Legacy Identity Anchors

Azure Active Directory (now Microsoft Entra ID) integration in Microsoft’s cloud migration mandates that AI workloads authenticate through pre-existing enterprise identity stores. In dual-track architectures, AI components must therefore route inference requests through legacy directory services rather than adopting decentralized credentialing. At a major European bank modernizing fraud detection, this requirement led to AI models being physically co-located with on-premises AD servers to minimize latency and audit gaps, showing how decades-old identity protocols constrain AI topology. The non-obvious insight is that identity, not compute or data, often becomes the gravitational center around which AI systems must structurally align.

Compliance-by-Architecture

When the UK’s National Health Service deployed Azure-based AI for radiology triage under a dual-track system, mandatory adherence to ISO 27001 controls inherited from legacy PACS (picture archiving and communication systems) required that all model outputs pass through encrypted audit logging subsystems originally designed for human-accessed patient records. This forced the AI pipeline to split inference from interpretation, inserting a compliance gateway before results could enter clinical workflows. The overlooked consequence is that regulatory artifacts from pre-AI systems induce architectural bifurcations that degrade end-to-end AI performance even when those controls are technically unnecessary.
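
A minimal sketch of a compliance gateway that splits inference from interpretation, assuming the audit requirement is met by an append-only, HMAC-chained log written before any result is released downstream. The hash chain and demo key are illustrative stand-ins for whatever encrypted logging subsystem a trust actually runs; encryption at rest is out of scope here.

```python
import hashlib
import hmac
import json


class AuditLog:
    """Append-only log in which each entry's MAC also covers the previous MAC."""

    def __init__(self, key: bytes):
        self._key = key
        self.entries = []  # list of (record_json, mac_hex)

    def _mac(self, prev: str, body: str) -> str:
        return hmac.new(self._key, (prev + body).encode(), hashlib.sha256).hexdigest()

    def append(self, record: dict) -> None:
        prev = self.entries[-1][1] if self.entries else ""
        body = json.dumps(record, sort_keys=True)
        self.entries.append((body, self._mac(prev, body)))

    def verify(self) -> bool:
        # Recompute the chain; any edited or reordered entry breaks it.
        prev = ""
        for body, mac_hex in self.entries:
            if not hmac.compare_digest(self._mac(prev, body), mac_hex):
                return False
            prev = mac_hex
        return True


def compliance_gateway(inference: dict, log: AuditLog) -> dict:
    # Log first; only then hand the result to the clinical workflow.
    log.append({"study_id": inference["study_id"], "triage": inference["triage"]})
    return inference


log = AuditLog(key=b"demo-key-not-for-production")
released = compliance_gateway({"study_id": "S-001", "triage": "urgent"}, log)
```

The gateway adds a write on the critical path of every result, which is where the end-to-end performance cost described above comes from.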

Network Perimeter Lock-in

In a JPMorgan Chase deployment of market-sentiment AI on Azure, network segmentation rules inherited from 2008-era SOX-compliant trading systems required that no externally sourced AI model reside in the same virtual network segment as the transaction execution engines, even when both were hosted in the cloud. This compelled Microsoft engineers to implement a mirrored 'shadow AI' topology in which models run in separate enclaves and push results through one-way, isolated event queues. The key insight is not that security isolates systems, but that legacy network perimeters become spatial constraints that dictate where AI components can be placed in cloud architectures.
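
The segmentation rule reduces to a placement check. This sketch uses Python's standard `ipaddress` module to reject any proposed model address inside the execution engines' segment and to confirm that the enclave range does not overlap it. The address ranges are made-up examples.

```python
import ipaddress

# Hypothetical address plan; real ranges would come from the network inventory.
EXECUTION_SEGMENT = ipaddress.ip_network("10.20.0.0/16")   # trade execution engines
AI_ENCLAVE_SEGMENT = ipaddress.ip_network("10.40.8.0/24")  # 'shadow AI' enclave


def placement_allowed(model_ip: str) -> bool:
    """An externally sourced model may never sit inside the execution segment."""
    return ipaddress.ip_address(model_ip) not in EXECUTION_SEGMENT


def plan_is_consistent() -> bool:
    """The enclave range itself must not overlap the execution segment."""
    return not AI_ENCLAVE_SEGMENT.overlaps(EXECUTION_SEGMENT)


print(placement_allowed("10.20.3.7"))   # False: same segment as execution
print(placement_allowed("10.40.8.12"))  # True: inside the isolated enclave
print(plan_is_consistent())             # True
```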

Legacy Constraint Inheritance

Legacy security protocols directly determine AI component placement in Azure’s dual-track architecture by mandating that AI services adhere to pre-existing identity, access, and encryption standards designed for monolithic systems; this forces AI modules to be embedded in regulated, perimeter-secured zones rather than dynamically provisioned across the cloud fabric. Azure engineering teams must align AI workloads with established Azure Active Directory policies, network security groups, and audit logging infrastructures originally built for VM-based applications, which constrains deployment topologies and slows integration with agile, decentralized compute pools. The non-obvious consequence is that legacy compliance logic becomes embedded in AI system design not through technical necessity but through institutional risk avoidance, where deviations trigger compliance escalations by enterprise governance boards tied to older control frameworks.

Risk Surface Misalignment

Legacy security protocols inadvertently expand the effective attack surface of AI components in Azure by forcing them into conventional, network-accessible endpoints that mirror older service models, rather than allowing isolation or functional segmentation aligned with AI-specific threat profiles. Because existing DDoS protections, firewall rules, and intrusion detection systems were designed for stateful applications with predictable API contracts, AI services are provisioned behind reverse proxies and API gateways that introduce latency and expose metadata patterns, undermining their resilience to adversarial querying. The overlooked dynamic is that security compatibility becomes a design driver that overrides performance and model integrity considerations, privileging organizational coherence in threat modeling at the cost of architectural precision for emerging threats.

Trust Inheritance

Legacy identity and access management protocols from on-premises systems compel the initial placement of AI components within Azure's trusted cloud perimeters rather than at the edge or in decentralized nodes. This is because hybrid environments inherit Active Directory-based authentication and role-based access control (RBAC) schemas from prior enterprise architectures, binding AI workloads to centralized policy enforcement points that mirror historical data center boundaries; as a result, AI systems are architected to operate downstream of these legacy trust anchors, even when technically capable of autonomous operation. The non-obvious consequence is that security debt from pre-cloud eras actively constrains the topology of modern AI deployment, revealing how past assumptions about network boundaries continue to shape where intelligent agents can be trusted to act.

Protocol Lock-in

The persistence of older encryption standards and certificate management practices from the mainframe and client-server eras forces AI components in Azure’s dual-track architecture to interface through bridging security gateways that translate between modern API-centric protocols and legacy TLS 1.0/1.1 or PKI infrastructures. Enterprises bound by sector or reporting regulations (e.g., HIPAA in healthcare, SOX for public companies) often retain outdated cryptographic baselines, so AI modules handling sensitive data must be funneled through intermediary enclaves that sanitize inputs and repackage communications, creating a latency bottleneck. This reveals how regulatory path dependence, rather than technical capability, has institutionalized protocol-mediation layers that segment AI deployment options along generational fault lines.
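
The generational split can be sketched as a routing decision: endpoints whose highest supported TLS version falls below a modern floor are reached only through a mediating bridge. The endpoint inventory and the TLS 1.2 floor are assumptions for illustration (TLS 1.0 and 1.1 were formally deprecated by RFC 8996). The sketch opens no connections and uses its own enum rather than the `ssl` module.

```python
from enum import IntEnum


class TLS(IntEnum):
    V1_0 = 10
    V1_1 = 11
    V1_2 = 12
    V1_3 = 13


MODERN_FLOOR = TLS.V1_2  # assumed policy floor for direct AI traffic


def route(endpoint: str, max_supported: TLS) -> str:
    """Return the path an AI module must use to reach a backend endpoint."""
    if max_supported >= MODERN_FLOOR:
        return f"direct:{endpoint}"
    # Legacy endpoint: a bridge enclave terminates the modern session,
    # sanitizes the payload, and re-originates the call on the old protocol.
    return f"bridge-enclave->{endpoint}"


inventory = {"claims-mainframe": TLS.V1_0, "ehr-api": TLS.V1_3}
routes = {name: route(name, version) for name, version in inventory.items()}
```

Every legacy endpoint adds one mediated hop, so the bottleneck scales with the size of the unmodernized inventory rather than with model complexity.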

Audit Containment

Legacy SOX and FIPS-compliant logging requirements designed for monolithic applications have led Microsoft to embed AI analytics components within isolated audit-controlled zones in Azure, where data flows are constrained to pre-approved pathways that mirror 2000s-era change management frameworks. These zones prioritize backward compatibility with legacy SIEM tools and structured log formats over real-time model adaptability, effectively quarantining AI systems in compartments engineered for retrospective compliance rather than dynamic risk assessment. The shift from event-based auditing to predictive governance has been stalled not by AI limitations, but by the enduring authority of archival verification practices, exposing how compliance memory anchors innovation within historically defined boundaries.

Compliance Shadowing

Legacy identity and access management systems in German financial institutions using Azure's dual-track architecture force AI workloads to be physically provisioned within national data centers to satisfy audit trails originally designed for mainframe-era transaction logging. This constraint, rooted in Bundesdatenschutzgesetz compliance routines, compels AI model training pipelines to inherit rigid data locality rules not because of model sensitivity or latency needs, but because legacy IAM protocols cannot authenticate cross-border data flows under vintage audit frameworks. The non-obvious implication is that AI topology choices are silently dictated not by ML engineering requirements, but by decades-old ticketing schemas and approval chains embedded in COBOL-derived access logs — a dependency invisible in cloud architecture diagrams but enforced in operational practice.
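
The locality rule described here checks where a job runs, not what it computes. A minimal sketch, assuming a hypothetical allow-list derived from the legacy audit framework; `germanywestcentral`, `germanynorth`, `westeurope`, and `northeurope` are Azure region identifiers used only as example values.

```python
from dataclasses import dataclass

# Assumed allow-list inherited from the legacy audit framework.
ALLOWED_TRAINING_REGIONS = frozenset({"germanywestcentral", "germanynorth"})


@dataclass(frozen=True)
class TrainingJob:
    name: str
    region: str               # where compute runs
    dataset_regions: frozenset  # where input data is stored


def locality_violations(job: TrainingJob) -> list:
    """List every reason the job fails the inherited locality rule.

    Model sensitivity and latency never enter the check, which is the point
    the paragraph makes.
    """
    problems = []
    if job.region not in ALLOWED_TRAINING_REGIONS:
        problems.append(f"compute in {job.region}")
    for r in sorted(job.dataset_regions - ALLOWED_TRAINING_REGIONS):
        problems.append(f"data in {r}")
    return problems


job = TrainingJob("credit-risk-v2", "westeurope",
                  frozenset({"germanywestcentral", "northeurope"}))
print(locality_violations(job))  # ['compute in westeurope', 'data in northeurope']
```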

Protocol Inertia Debt

In NHS Scotland’s Azure-deployed patient risk prediction system, the placement of AI inference components behind legacy HL7 over MLLP messaging gateways necessitates that real-time models respond within 2.8-second windows to avoid abort signals hardcoded into 1990s clinical middleware. This timing constraint, originating from slow serial transmission protocols for laboratory results, forces AI endpoints to be stripped of dynamic batch optimization and pinned to low-latency VM SKUs despite underutilization. Most analysts overlook that the AI’s architectural 'performance' demands are not driven by clinical urgency but by un-upgraded messaging stack timeouts — revealing that temporal expectations from legacy systems create hidden hardware binding effects on AI components.
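
The timing coupling can be sketched in a few lines. MLLP framing (a vertical-tab start byte, then a file-separator and carriage-return end sequence) is the standard HL7 v2 transport envelope. The thread-pool deadline wrapper, the fallback value, and `slow_model` are hypothetical, illustrating an endpoint forced to answer inside the 2.8-second window.

```python
import concurrent.futures
import time

SB, EB, CR = b"\x0b", b"\x1c", b"\x0d"  # MLLP start block, end block, carriage return
DEADLINE_S = 2.8                       # abort window hardcoded in the legacy middleware

_pool = concurrent.futures.ThreadPoolExecutor(max_workers=4)


def mllp_frame(hl7_message: str) -> bytes:
    """Wrap an HL7 v2 message in MLLP framing: <VT> message <FS><CR>."""
    return SB + hl7_message.encode("ascii") + EB + CR


def mllp_unframe(frame: bytes) -> str:
    if not (frame.startswith(SB) and frame.endswith(EB + CR)):
        raise ValueError("not an MLLP frame")
    return frame[1:-2].decode("ascii")


def score_with_deadline(model, payload, fallback, deadline=DEADLINE_S):
    """Return the model's answer if it arrives within the deadline, else fallback.

    The deadline is set by the middleware timeout, not by clinical urgency.
    A late result is simply discarded.
    """
    future = _pool.submit(model, payload)
    try:
        return future.result(timeout=deadline)
    except concurrent.futures.TimeoutError:
        return fallback


def slow_model(_payload):
    time.sleep(0.2)  # stands in for an inference call that misses the window
    return "risk=high"


print(score_with_deadline(slow_model, "PID|1", "risk=unavailable", deadline=0.05))
# risk=unavailable
```

Because anything slower than the window is thrown away, the cheapest way to stay inside it is to pin inference to small, always-warm instances, which is the hardware binding effect the paragraph describes.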

Credential Translation Tax

Australian energy grid operators integrating AI-based load forecasting in Azure must route all model outputs through legacy SCADA systems that accept input only via OPC DA authentication mapped to individual named user accounts, with no support for OAuth2 or managed identities. As a result, AI services must spawn ephemeral credential wrappers that impersonate human operators. These identity translation layers fragment audit continuity and confine the deployment topology to subnet-isolated jump hosts in Perth and Brisbane. The overlooked reality is that AI placement is geographically anchored not by data proximity or regulatory jurisdiction, but by the spatial distribution of legacy authentication endpoints that cannot process service principals, exposing a hidden tax of synthetic identity mediation in hybrid control environments.
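
A minimal sketch of why credential wrappers fragment audit continuity, assuming a hypothetical broker that maps one service identity onto a pool of named operator accounts. The broker, account names, and log shapes are illustrative and do not model OPC DA itself.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class CredentialBroker:
    """Hypothetical broker mapping one service identity onto named operator accounts."""
    operator_pool: list
    service_log: list = field(default_factory=list)  # broker-side record
    scada_log: list = field(default_factory=list)    # what the legacy SCADA side records
    _next: int = 0

    def submit(self, service_principal: str, setpoint: float) -> str:
        # Round-robin over named human accounts the SCADA system will accept.
        operator = self.operator_pool[self._next % len(self.operator_pool)]
        self._next += 1
        session = str(uuid.uuid4())  # ephemeral wrapper session id
        # The broker knows which service acted...
        self.service_log.append({"session": session,
                                 "principal": service_principal,
                                 "as_operator": operator})
        # ...but the legacy system sees only a human account name.
        self.scada_log.append({"user": operator, "setpoint": setpoint})
        return session


broker = CredentialBroker(operator_pool=["op.jsmith", "op.kchen"])
broker.submit("load-forecast-svc", 412.5)
broker.submit("load-forecast-svc", 398.0)
# The SCADA log alone cannot answer "which service changed this setpoint?"
print(sorted(e["user"] for e in broker.scada_log))  # ['op.jsmith', 'op.kchen']
```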

Explore further:

- What happens when AI systems need to make time-sensitive decisions but are trapped behind security checks meant for static data?

- Where else might old identity systems be quietly shaping how AI is built, even when no one’s talking about it?

- How did the way AI services are built in Azure change over time as older security rules kept getting applied to newer projects?

What happens when AI systems need to make time-sensitive decisions but are trapped behind security checks meant for static data?

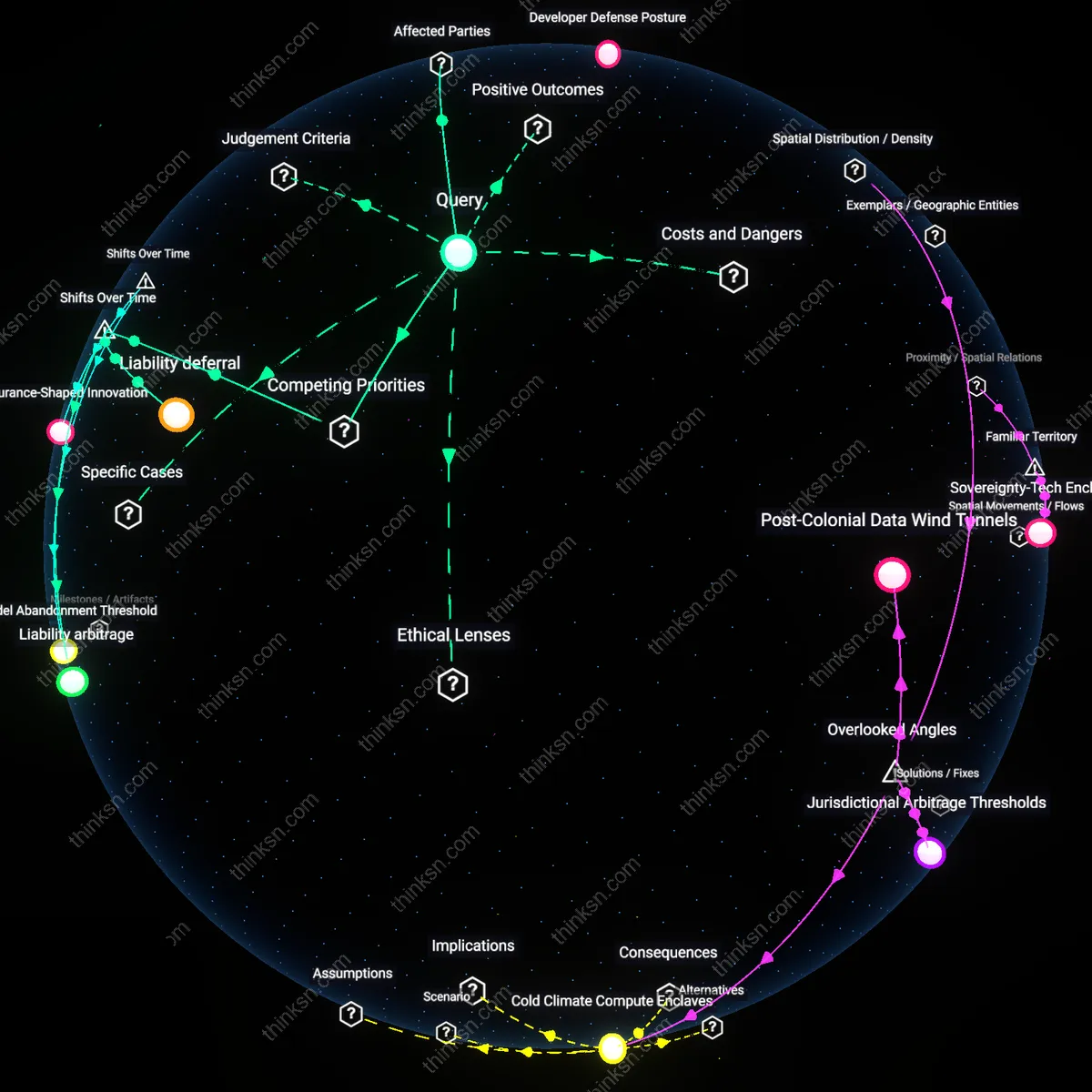

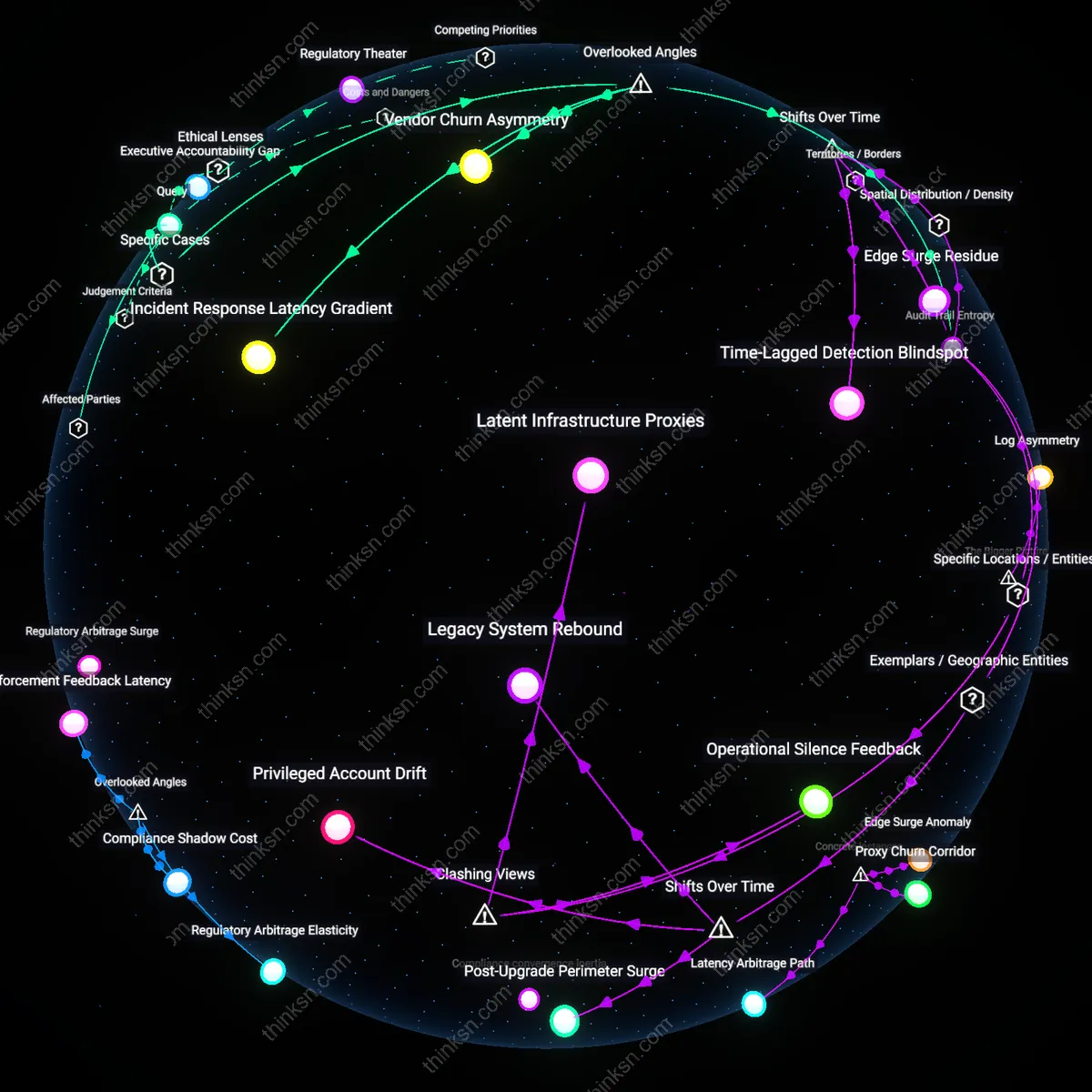

Latency Debt

Security checks designed for batch-processed datasets in the early 2010s assumed decision pipelines could afford validation cycles lasting minutes. When real-time AI systems in autonomous vehicles and financial trading began operating on millisecond timescales after 2020, those same checks produced compounding latency, revealing a temporal mismatch between static governance infrastructure and dynamic operational tempo. Faster hardware does not retire this debt, because it is the deferred cost of compliance bottlenecks that systematically de-synchronize decision windows from approval windows, a tension that stayed invisible until AI moved from post-hoc analysis to closed-loop control.

Temporal Misalignment

Before 2015, data governance treated information as inert until accessed, with security protocols gated around storage and transfer. When reinforcement learning systems deployed in medical triage and aerospace after 2022 required live inference under time-locked conditions, these protocols failed because they could not distinguish archival access from urgent computational need. The failure exposes a shift from data-as-asset to data-as-action: the same check that once prevented leaks now prevents life-saving interventions, making the timing of access as consequential as the access itself.

Decision Trapping

Until the mid-2010s, AI validation was episodic, occurring at model deployment or update time. With the rise of adaptive systems in logistics and defense that revise their policies within seconds, continuous security re-certification became mandatory, yet existing frameworks such as NIST SP 800-53 were built for stable configurations, not fluid cognition. The result is decision trapping: AI agents generate valid responses but are blocked from execution until a human or automated clearance arrives. The phenomenon emerged only when operational tempo exceeded procedural rhythm, revealing policy as a bottleneck rather than a safeguard.

Latency Entitlement

Security protocols assume all data accesses have equal temporal tolerance, but time-sensitive AI systems in emergency dispatch networks experience decisional obsolescence when authentication handshakes delay action by more than 200 milliseconds—meaning the system computes optimal responses to events that have already evolved beyond intervention. This creates a hidden hierarchy where AI capabilities are artificially throttled not by processing power but by security architectures designed for forensic auditability rather than operational tempo, a constraint rarely modeled in either cybersecurity or AI safety frameworks. The overlooked dynamic is that timing thresholds for effective action become de facto rights to bypass checks, generating an unacknowledged 'latency entitlement' that determines which systems can function autonomously under real-time pressure.
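
The 200-millisecond threshold becomes simple arithmetic once checks run in sequence: their latencies add, and the response is obsolete once the sum exceeds the action window. The check names and latencies below are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Check:
    name: str
    latency_ms: float


def decision_is_stale(checks: list, window_ms: float = 200.0) -> tuple:
    """Sequential checks add up; the decision is stale once their sum exceeds the window."""
    total = sum(c.latency_ms for c in checks)
    return total > window_ms, total


chain = [Check("token validation", 45.0),
         Check("key retrieval", 120.0),
         Check("policy evaluation", 60.0)]
stale, total = decision_is_stale(chain)
print(stale, total)  # True 225.0
```

No single check breaches the window here; the obsolescence comes entirely from accumulation, which is why per-check latency budgets can all pass while the end-to-end decision still fails.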

Temporal Jurisdiction

When autonomous medical triage AIs in battlefield field hospitals must wait for cloud-based encryption key validation before releasing treatment recommendations, the delay shifts decision authority retroactively to centralized defense IT departments that neither understand trauma timelines nor bear clinical accountability—effectively ceding life-or-death timing judgments to infrastructure stewards. This reveals that security checks don’t just impose delays; they redistribute temporal jurisdiction across institutional boundaries, embedding calendar logic into systems designed around event-driven urgency. The critical but ignored factor is that time-based access controls conflate data integrity with decision relevance, mistaking encryption latency for governance when it actually determines which organizational tier controls the present moment.

Obsolescence Cascades

In high-frequency financial trading, AI models that require real-time rebalancing based on market shifts are frequently invalidated by pre-execution compliance scans that take 50–100ms, causing trades to execute against stale volatility estimates and triggering compounding errors in downstream risk models—an effect amplified during flash crashes when security queues back up. Most analyses focus on throughput or accuracy degradation, but the deeper issue is that delayed AI actions don’t just fail individually; they introduce temporally poisoned data into training feedback loops, propagating obsolescence across system generations. This cascade effect transforms isolated latency into systemic drift, a phenomenon invisible to static risk assessments that assume decoupled decision cycles.

Where else might old identity systems be quietly shaping how AI is built, even when no one’s talking about it?

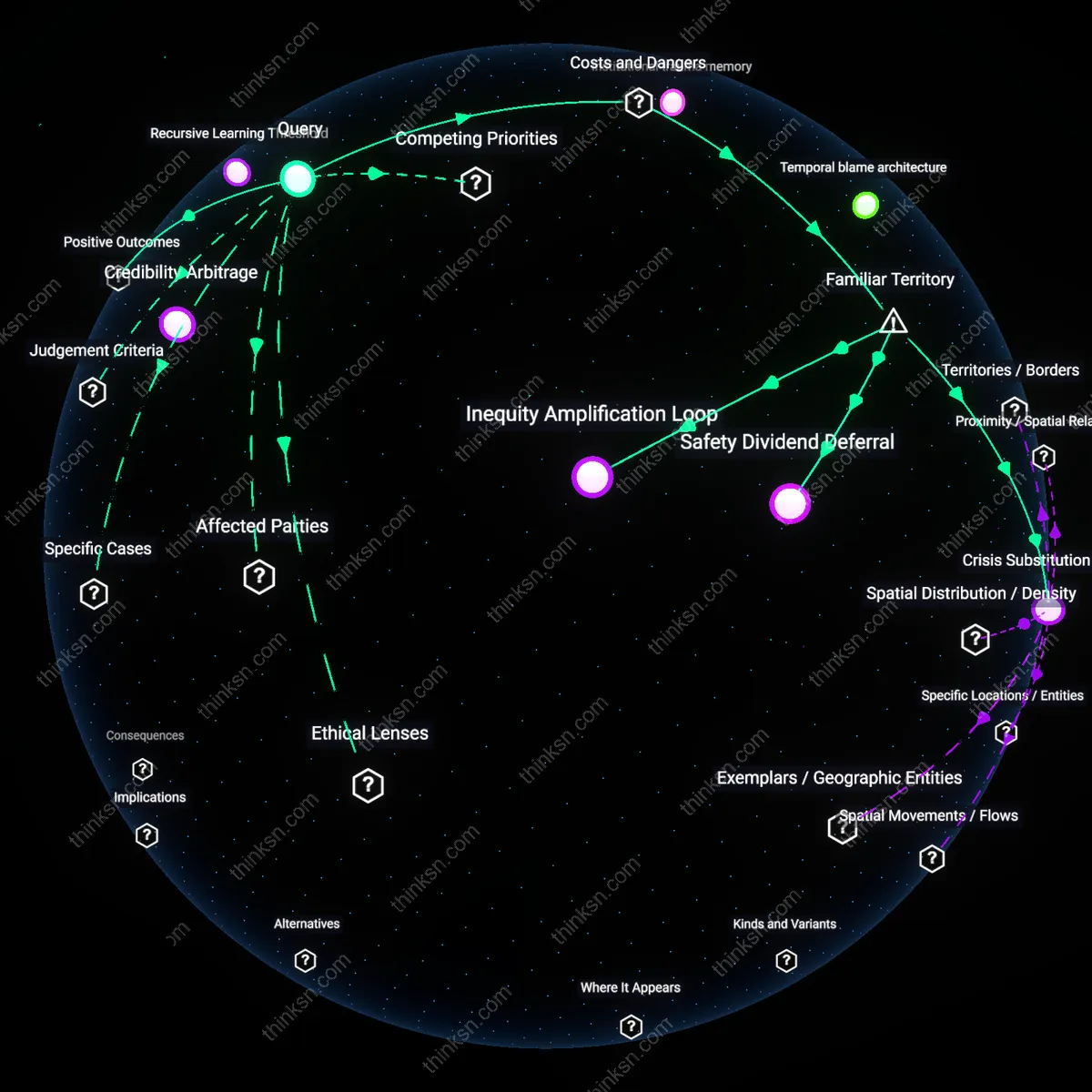

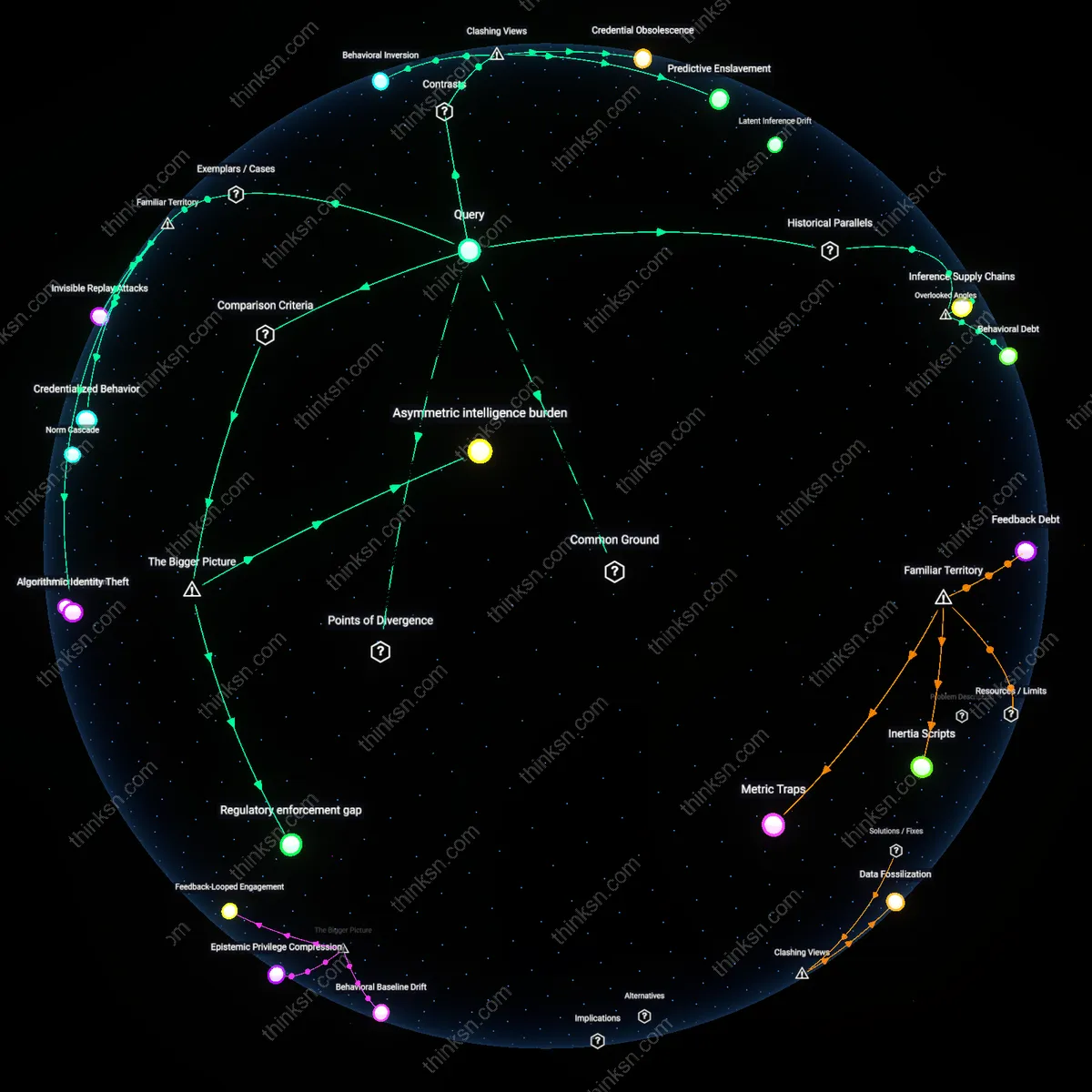

Archival Inference Bias

Legacy census classification systems in the UK, particularly the 1991 ethnic-group categorization framework, continue to structure how ethnic identifiers are encoded in NHS digital health AI tools, despite having been superseded in official statistics since 2001. This persistence occurs because data pipelines repurpose old administrative taxonomies as training labels, embedding historically contested categories such as 'Black Caribbean' into diagnostic algorithms and thereby institutionalizing outdated social constructs as biological proxies. The non-obvious mechanism is not mere data reuse but an unnoticed epistemic delegation to analog-era classification regimes, which now silently determine how health disparities are modeled in machine learning systems.

Credential Encoding Drift

MIT’s early 2000s Certificate Authority infrastructure, designed for academic identity verification, became the de facto template for OAuth schema layouts in major AI-driven identity providers like Google Identity and Azure Active Directory, transferring assumptions about hierarchical trust and static affiliation into decentralized authentication models; this results in AI systems interpreting user roles through institutional membership binaries—e.g., 'student,' 'faculty,' 'staff'—even in open, anonymous web environments where such distinctions are meaningless. The overlooked consequence is a structural imposition of academic feudalism onto broad digital identity graphs, skewing personalization and access logic in AI platforms not built for institutional context.

Lexical Sovereignty Transfer

The European Union’s 1987 Interinstitutional Metadata Registry, created to standardize multilingual legal document indexing across member states, has been quietly repurposed by natural language processing teams at firms like SAP and Deutsche Telekom to define canonical semantic ontologies for enterprise AI; legacy terms like 'national regime' or 'competent authority'—deeply rooted in postwar administrative law—now shape how NLP models parse governance-related queries in customer service bots, even outside legal domains. This reveals a silent jurisdictional leakage, where Cold War-era bureaucratic terminology governs how AI understands authority, compliance, and identity, independent of user intent or contemporary political reality.

Legacy Data Entanglement

Outdated government identity databases are being repurposed as training data for AI systems that classify citizenship eligibility, because budget-constrained agencies reuse existing civil registry records without auditing for historical biases. This mechanism embeds mid-20th-century racial and colonial classifications into modern migration algorithms through data lineage rather than explicit design, privileging administrative continuity over ethical scrutiny. The non-obvious consequence is that AI inherits not just data, but the procedural logic of systems built for exclusionary regimes, where invisibility in records often functioned as a tool of disenfranchisement.

Credential Infrastructure Lock-in

Private identity verification platforms like those used in banking and telecoms are silently shaping AI risk thresholds by anchoring 'verified identity' to legacy KYC (Know Your Customer) standards from the 1990s, which prioritize document possession over lived identity. Because fintech AI systems must comply with these pre-digital compliance architectures to gain regulatory approval, they reproduce assumptions that marginalize populations with informal or community-based identification. The underappreciated dynamic is that AI validation metrics are being gamed by alignment to bureaucratic legibility rather than real-world identity complexity, reinforcing infrastructural inequalities through certification dependencies.

Archival Epistemic Bias

National archives and historical population registers—such as India’s pre-Partition census rolls or France’s colonial Indigénat records—are being digitized and fed into cultural heritage AI models without critical metadata about their original administrative violence, enabling machine learning systems to naturalize categorizations that once underpinned discriminatory policies. These datasets are treated as neutral historical sources, but their structural omissions and power-laden taxonomies become invisible priors in language and image models trained on archival text. The overlooked systemic force is that AI inherits not only content from these systems but a silent epistemology of state control, where identity is reducible to controlled enumeration.

How did the way AI services are built in Azure change over time as older security rules kept getting applied to newer projects?

Inherited Entitlements Lock-in

Azure’s shift from perimeter-based to identity-centric security did not phase out legacy access rules but instead layered new AI services atop frozen heritage policies, where outdated role assignments in Azure Active Directory continued to govern resource access despite zero-trust initiatives; this persistence was enforced by enterprise dependency on unmodified service principals from pre-2018 deployments, revealing that backward compatibility became a de facto security standard, undermining intent-based policy engines in newer AI workloads.
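
An audit for this kind of lock-in can be sketched as a filter over an exported list of role assignments. The record shape, principal names, scope strings, and the 2018 cutoff (taken from the text) are assumptions. This is not the Azure SDK; a real export would need to be mapped into these fields first.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class RoleAssignment:
    principal: str
    principal_created: date
    role: str
    scope: str


CUTOFF = date(2018, 1, 1)  # "pre-2018 deployments" from the text


def inherited_entitlements(assignments, ai_scope_prefix: str) -> list:
    """Find pre-cutoff principals whose roles reach into AI workload scopes."""
    return [a for a in assignments
            if a.principal_created < CUTOFF and a.scope.startswith(ai_scope_prefix)]


assignments = [
    RoleAssignment("legacy-etl-sp", date(2016, 5, 2), "Contributor",
                   "/subscriptions/sub-a/resourceGroups/ml-prod"),
    RoleAssignment("new-inference-sp", date(2023, 3, 9), "Reader",
                   "/subscriptions/sub-a/resourceGroups/ml-prod"),
    RoleAssignment("legacy-backup-sp", date(2015, 1, 20), "Contributor",
                   "/subscriptions/sub-a/resourceGroups/erp"),
]
hits = inherited_entitlements(assignments, "/subscriptions/sub-a/resourceGroups/ml-")
print([a.principal for a in hits])  # ['legacy-etl-sp']
```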

Compliance Debt Accumulation

As Azure AI services adopted automated governance via Policy as Code post-2020, pre-existing regulatory compliance templates—originally designed for static virtual machines—were compulsorily inherited by dynamic, data-intensive AI pipelines, forcing teams to retroactively justify model training jobs under infrastructure-era audit rules; this misalignment created a shadow burden where legal risk was displaced rather than resolved, exposing how regulatory precedent overrides architectural modernity in cloud trust frameworks.

Policy Seams Exploitation

The integration of Azure Machine Learning with governed data stores required adherence to legacy network security groups (NSGs) designed for monolithic applications, yet AI workloads' ephemeral compute bursts routinely violated NSG stateful tracking assumptions, leading operators to loosen rules site-wide; this systemic workaround emerged not from negligence but from operational necessity, demonstrating that discontinuities between old traffic models and new processing paradigms generate exploitable boundaries within security enforcement itself.
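
How a single "loosening" opens a seam can be sketched with NSG-style evaluation: rules are processed in priority order (lower number first) and the first match decides. The rule set and addresses are hypothetical, and the final implicit Deny is a simplified stand-in for Azure's built-in default rules, which are more permissive (for example, they allow intra-VNet traffic).

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rule:
    priority: int         # lower number is evaluated first, as in Azure NSGs
    source: str           # source CIDR prefix
    port: Optional[int]   # None means any destination port
    action: str           # "Allow" or "Deny"


def evaluate(rules: list, src_ip: str, port: int) -> str:
    """The first matching rule in priority order decides; no match means Deny."""
    addr = ipaddress.ip_address(src_ip)
    for rule in sorted(rules, key=lambda r: r.priority):
        if addr in ipaddress.ip_network(rule.source) and rule.port in (None, port):
            return rule.action
    return "Deny"


baseline = [Rule(200, "10.1.0.0/24", 443, "Allow"),
            Rule(300, "0.0.0.0/0", None, "Deny")]
burst_ip = "10.1.7.15"  # ephemeral compute landed outside the planned subnet

print(evaluate(baseline, burst_ip, 8443))   # Deny
# The "site-wide loosening": one broad, high-priority Allow.
loosened = baseline + [Rule(150, "10.0.0.0/8", None, "Allow")]
print(evaluate(loosened, burst_ip, 8443))   # Allow
print(evaluate(loosened, "10.99.0.1", 22))  # Allow: the seam is now open
```

The workaround fixes the burst traffic, but because first-match-wins ignores intent, it also admits unrelated traffic such as SSH from any host in the /8.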

Legacy Anchoring

Older Azure security rules became default templates for new AI services, embedding compliance constraints from pre-AI workloads into next-generation deployments. Enterprises reused standardized network policies, identity roles, and data governance modules, originally built for on-premises ERP or CRM systems, for large language model pipelines, so low-latency inference and distributed data ingestion now had to comply with rigid, centralized access controls. This created friction between cloud-native agility and inherited perimeter-based security, privileging auditability over adaptability in ways that silently shaped AI deployment patterns. The non-obvious outcome is that 'security' increasingly meant continuity with past policy artifacts, not real-time risk calculus.

Pattern Lock-in

Microsoft’s documentation and Azure Quickstart templates propagated older role-based access control (RBAC) and virtual network (VNet) isolation models as best practices for AI projects, cementing them as cognitive defaults for developers. These templates, designed when AI workloads were rare and treated like batch analytics, were reused for real-time inferencing systems even as those systems required dynamic scaling and API chaining across services. Replicating familiar structures such as NSGs and private endpoints created a self-reinforcing design language in which innovation meant fitting inside known boxes rather than redefining them. What gets overlooked is how template ecosystems, not technical limits, became the primary governors of architectural possibility.

Governance Drift

Enterprise cloud governance bodies extended legacy data classification schemes, originally designed for financial records and PII, into AI content moderation and prompt logging, forcing generative models to undergo the same audit cycles as transactional databases. As a result, AI services were required to preserve state and input history in ways that contradicted their ephemeral, probabilistic nature, slowing iteration and increasing storage costs. This grafting of document-centric compliance onto generative systems reveals how governance rituals persist not because they fit the technology, but because they match the organization's memory of what 'control' looks like.
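
Grafting a document-centric schedule onto prompt logs can be sketched as a lookup: each logged prompt inherits the retention period of whatever legacy class it is sorted into. The classes and retention periods are invented for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical legacy schedule, written for financial records and PII.
RETENTION_DAYS = {"public": 30, "internal": 365, "pii": 7 * 365, "financial": 10 * 365}


@dataclass(frozen=True)
class PromptLogEntry:
    text: str
    classification: str  # assigned with the legacy document-centric scheme
    created: datetime


def retain_until(entry: PromptLogEntry) -> datetime:
    """A prompt inherits the retention period of its legacy data class."""
    return entry.created + timedelta(days=RETENTION_DAYS[entry.classification])


entry = PromptLogEntry("Summarize the open tickets for customer 1234", "pii",
                       datetime(2024, 1, 1, tzinfo=timezone.utc))
print(retain_until(entry).isoformat())
```

A single throwaway prompt that happens to mention a customer is kept for years, which is how the storage and iteration costs described above accumulate.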