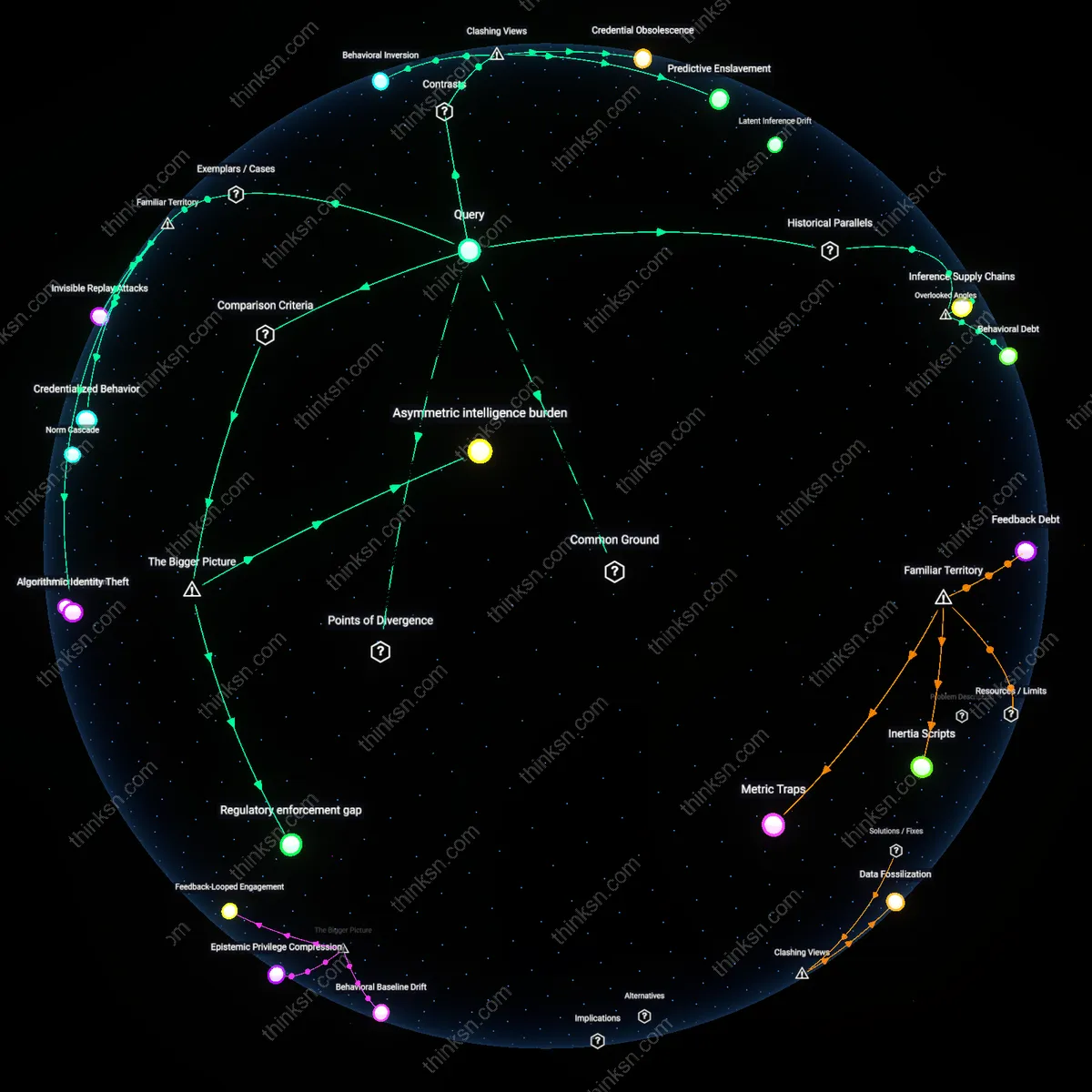

Is Behavioral Profiling a Bigger Cyber Risk than Credential Theft?

Analysis reveals 11 key thematic connections.

Key Findings

Behavioral Debt

Weaponized behavioral profiling poses a structurally irreversible risk compared to credential theft because it exploits learned behavioral patterns that cannot be reset like passwords. The situation is analogous to Cold War Eastern Europe, where the permanent exposure of a diplomat’s social trust networks enabled lifelong coercion, whereas stolen documents could simply be replaced. Unlike credential theft, which targets discrete access points, behavioral profiling embeds exploitation into the predictability of routine action, making the individual their own vulnerability surface, via systems like smart home analytics or app usage timing. Most risk models ignore this shift because they treat data as transactional rather than cumulative. This non-obvious persistence redefines personal risk as a function of behavioral history, not just current security hygiene.
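To make the "cannot be reset" point concrete, the following is a minimal sketch, not a description of any real profiling system. It assumes a profiler that only sees the hour of day at which app events occur. All names and data (`hourly_profile`, `old_account`, `new_account`, `stranger`) are hypothetical. The idea is that a new account with new credentials still produces nearly the same timing profile as the old one.

```python
from collections import Counter
from math import sqrt


def hourly_profile(event_hours):
    """Return a normalized 24-bin histogram of the hours at which events occurred."""
    counts = Counter(h % 24 for h in event_hours)
    total = sum(counts.values())
    return [counts.get(h, 0) / total for h in range(24)]


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Hypothetical event hours (0-23). The "new" account belongs to the same person
# after a full credential reset; the stranger has a different daily routine.
old_account = [7, 7, 8, 12, 12, 13, 22, 22, 23, 23]
new_account = [7, 8, 8, 12, 13, 13, 22, 23, 23, 23]
stranger = [2, 3, 3, 10, 15, 16, 16, 17, 19, 20]

same_person = cosine_similarity(hourly_profile(old_account), hourly_profile(new_account))
different_person = cosine_similarity(hourly_profile(old_account), hourly_profile(stranger))

print(f"old vs. new account (same routine): {same_person:.2f}")
print(f"old account vs. stranger:           {different_person:.2f}")
```

In this toy setting, rotating the password changes nothing the profiler cares about: the routine itself is the identifier. Real systems would presumably use far richer features than hour of day.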

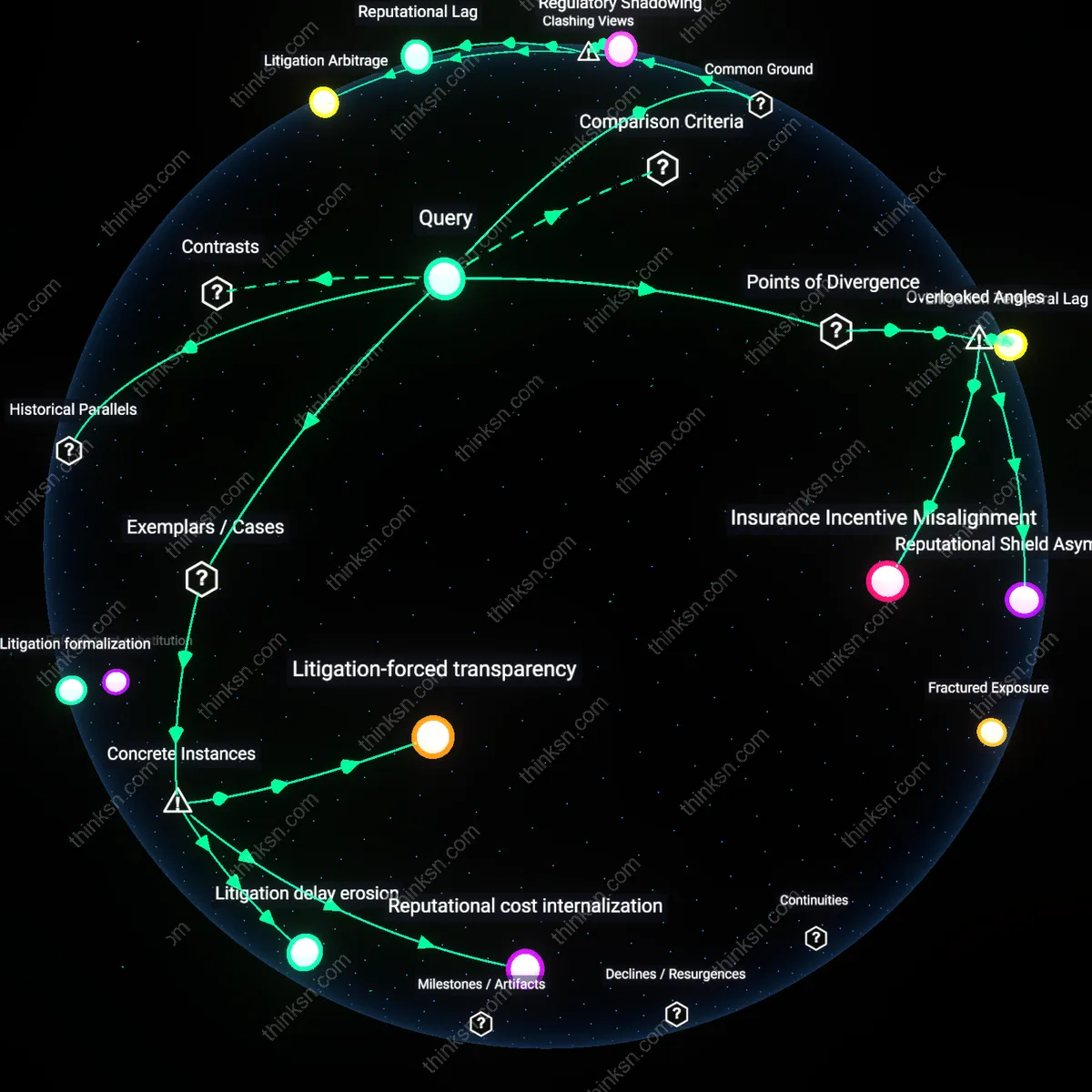

Inference Supply Chains

The danger of weaponized behavioral data exceeds credential theft in strategic latency because its value emerges not from direct access but from integration into distributed inference supply chains, much like how colonial botanists relied on indigenous knowledge networks to identify valuable plants without crediting or controlling the source. Today, behavioral datasets are stripped from platform users but weaponized only when fused with actuarial models, geospatial tracking, and credit scoring—systems often operated by third parties with no direct relationship to the victim—making accountability diffuse and defenses reactive. This overlooked interdependence means personal risk is shaped less by one’s own data hygiene than by invisible downstream synthesis, a dependency absent from standard threat models.
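The fusion step described above can be illustrated with a small sketch. It assumes two datasets that share no names or account IDs but overlap on quasi-identifiers, here a ZIP code and a birth year. The records, field names, and the `fuse` helper are all hypothetical and stand in for whatever a downstream party might actually hold.

```python
# Hypothetical behavioral records collected by a platform (no names attached).
app_usage = [
    {"device_id": "d-01", "home_zip": "94110", "birth_year": 1985, "late_night_sessions": 41},
    {"device_id": "d-02", "home_zip": "10027", "birth_year": 1992, "late_night_sessions": 3},
]

# Hypothetical third-party file held by an unrelated organization.
credit_file = [
    {"name": "Person A", "zip": "94110", "birth_year": 1985, "score_band": "low"},
    {"name": "Person B", "zip": "60614", "birth_year": 1970, "score_band": "high"},
]


def fuse(behavioral, third_party):
    """Join the two datasets on (ZIP, birth year), keeping only unambiguous matches."""
    index = {}
    for row in third_party:
        index.setdefault((row["zip"], row["birth_year"]), []).append(row)

    fused = []
    for row in behavioral:
        matches = index.get((row["home_zip"], row["birth_year"]), [])
        if len(matches) == 1:  # a unique match links the behavior to a named person
            fused.append({**matches[0], **row})
    return fused


linked = fuse(app_usage, credit_file)
for record in linked:
    print(record["name"], record["score_band"], record["late_night_sessions"])
```

Neither dataset is especially sensitive on its own in this sketch. The risk appears only at the join, which is performed by a party the individual never dealt with.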

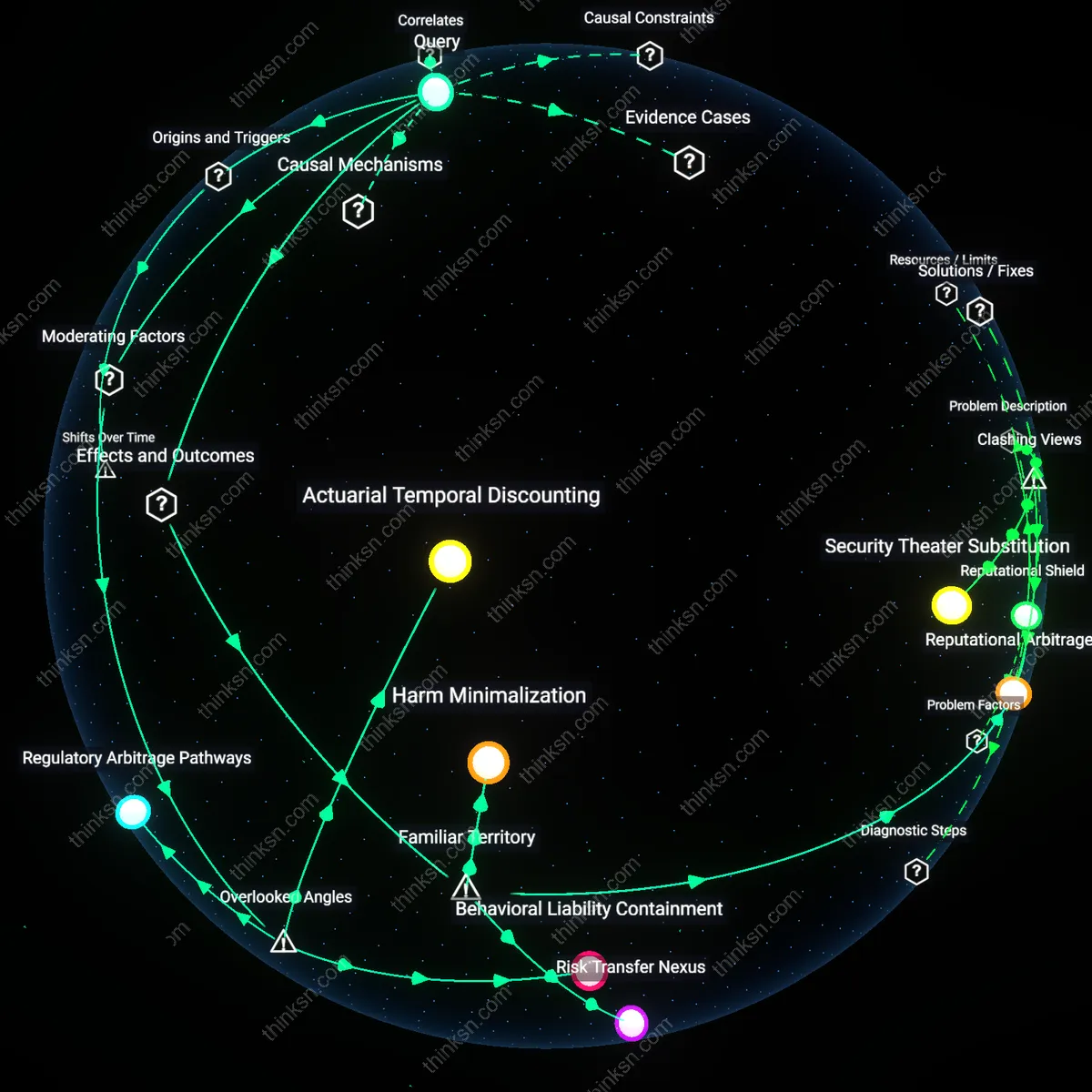

Temporal Weaponization

Behavioral profiling enables temporal weaponization—strategic manipulation of future decision-making through past behavior—whereas credential theft is temporally bounded to the moment of compromise, a distinction mirrored in pre-digital panopticon designs where continuous observation altered behavior over time rather than enabling one-off breaches. Systems like predictive policing algorithms or personalized disinformation campaigns exploit this by nudging choices years after data collection, leveraging the durability of inferred traits; this delayed-action mechanism operates through long-term data retention policies at firms like data brokers or health analytics platforms, which are rarely seen as threat vectors. The overlooked reality is that risk accumulates not from exposure but from the extended shelf life of behavioral inference, transforming privacy loss into a form of slow violence.

Behavioral Inversion

Weaponized behavioral profiling data is more severe than credential theft not because it enables direct system access, which it does not, but because it subverts identity perception: actors like political consultancies use microtargeting algorithms trained on Facebook-derived psychographics to simulate authentic voter intent, thereby manipulating behavior without any authentication step. This operates through feedback loops in digital advertising ecosystems where observed actions are repurposed as predictive levers, making the mechanism analytically distinct from theft-based intrusions. The non-obvious insight is that the danger lies not in bypassing security but in redefining what counts as voluntary action, undermining the epistemic basis of consent.

Credential Obsolescence

Weaponized behavioral data is less about new forms of harm and more about the erosion of the very conditions under which credentials matter—state intelligence agencies and data brokers like Clearview AI reconstruct identity through facial recognition and social graph mapping, rendering passwords and 2FA irrelevant not by stealing them but by making authentication secondary to continuous surveillance. This functions through ambient biometric tracking in public space, a system where access is determined by passive recognition rather than active proof. The dissonant finding is that we are moving toward a post-credential security paradigm, one in which the traditional model of 'protected secrets' collapses under persistent observation.

Predictive Enslavement

Unlike credential theft, which exploits discrete vulnerabilities in trust systems, weaponized behavioral profiling threatens autonomy by pre-emptively scripting choices—insurance firms such as UnitedHealth use claims-linked behavioral models to adjust coverage in real time, conditioning care access on anticipated risk trajectories derived from grocery purchases, location trails, and social media use. This mechanism operates via algorithmic risk stratification embedded in service delivery infrastructures, where prediction becomes a form of control. The underappreciated reality is that the primary risk is not impersonation but the elimination of surprise, spontaneity, and deviance in human behavior—subjects are coerced not by force but by foregone conclusions.

Asymmetric Intelligence Burden

Weaponized behavioral profiling data presents a qualitatively higher personal risk than credential theft by redistributing the strategic burden of intelligence asymmetry from institutions to individuals. Traditional credential theft operates through a known threat model where actors like cybercriminals exploit stolen passwords or two-factor tokens—an attack surface well-mitigated by multi-factor authentication and zero-trust frameworks adopted by financial and government services. In contrast, behavioral profiling weaponization occurs through opaque data supply chains (e.g., data brokers selling smartphone location traces to third-party hedge funds or adversarial states), enabling threat actors to construct detailed psychological models without needing credentials at all. This forces individuals to defend not just their access points but their behavioral patterns—over which they have little control or visibility—resulting in a structural imbalance where defensive complexity grows non-linearly for the user, while attackers gain deeper inference power through ambient surveillance.

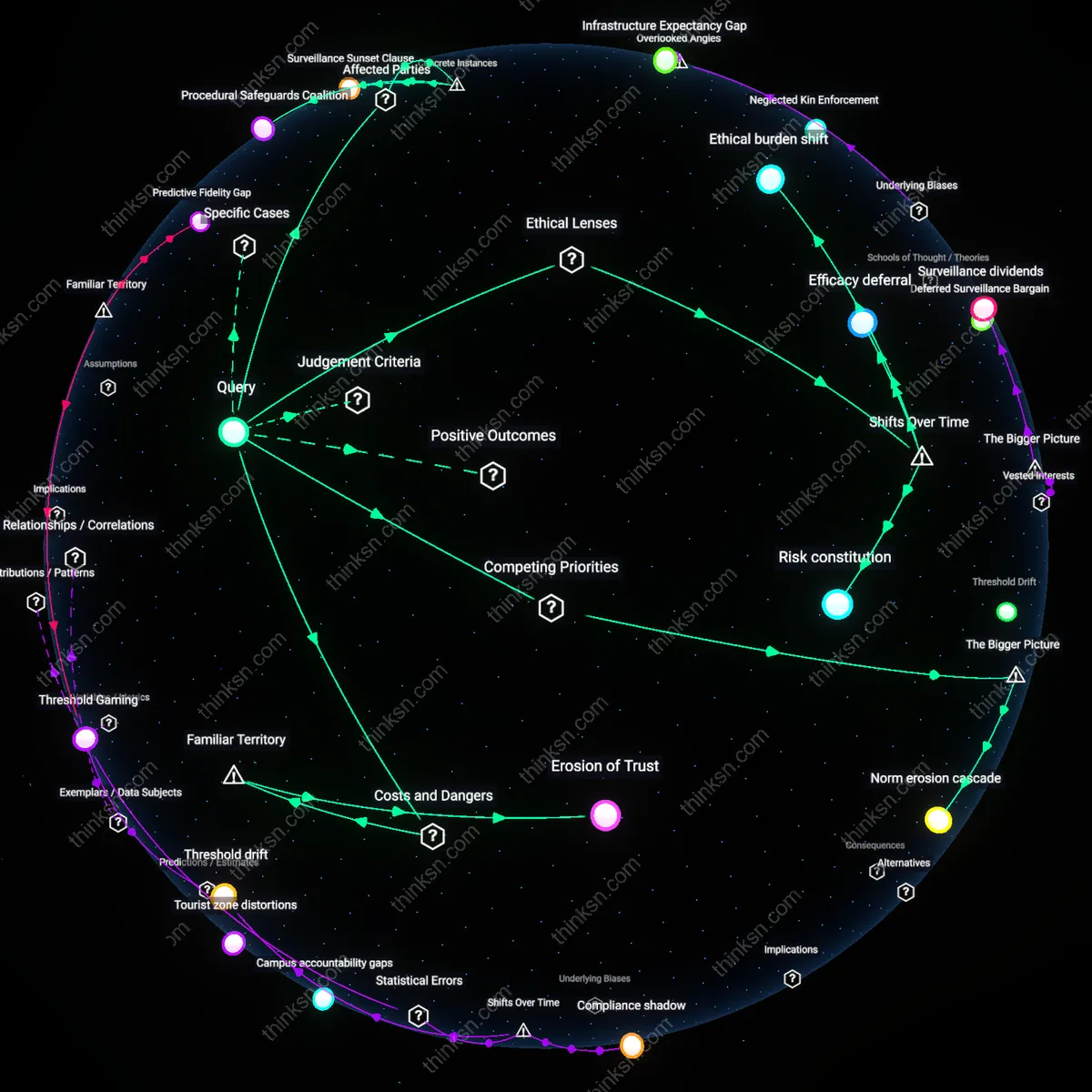

Regulatory Enforcement Gap

Behavioral profiling data is more dangerous than credential theft in the long term because it thrives in regulatory blind spots where existing privacy laws, like GDPR or CCPA, fail to classify cognitive manipulation as harm. Credential theft is recognized under current legal frameworks as a breach with clear liability, compensable damage, and forensic traceability, which is the same breach framework that allowed entities like Equifax to be held accountable after its 2017 data breach. In contrast, the weaponization of behavioral profiles, such as Cambridge Analytica’s microtargeting of swing voters via psychographic segmentation, exploits personal data in ways that are legally permissible under platform terms but ethically and socially destructive, as they bypass informed consent through interface design and data aggregation loopholes. The underappreciated dynamic is that compliance with privacy regulations does not prevent abuse when the regulation itself defines harm too narrowly, allowing legally compliant systems to become instruments of covert influence operations.

Algorithmic Identity Theft

Weaponized behavioral profiling poses a greater personal risk than credential theft because it enables impersonation not through stolen passwords but through replicated decision patterns, as seen with Cambridge Analytica’s use of Facebook-derived psychographics to manipulate voter behavior. The mechanism operates through microtargeting systems that learn and exploit cognitive vulnerabilities, making deception adaptive and context-aware, unlike static credential misuse. What’s underappreciated is that people still frame data breaches in terms of login exposure, not identity simulation, despite evidence that synthetic behavioral models can bypass not just security but judgment.

Credentialized Behavior

The threat of behavioral profiling now mirrors traditional credential theft because platforms like Clearview AI in effect turn biometric and behavioral patterns into reusable identifiers, enabling persistent tracking and unauthorized access in much the same way stolen passwords do. This operates through facial recognition systems trained on scraped public data, where a ‘profile’ becomes a new kind of key: not to accounts, but to personal autonomy. While most people associate identity theft with account takeovers, the unspoken shift is that simply walking in public can now unlock a dossier that, in the wrong system, is as potent an identifier as a Social Security number.

Invisible Replay Attacks

Behavioral profiling data enables a form of digital replay attack that exceeds credential theft in stealth and scale, exemplified by how insurance companies like Vitality use telematics and smartwatch data to dynamically adjust risk premiums based on inferred habits. The system works by continuously authenticating behavior as truth, treating routine as evidence, which allows third parties to act upon predicted actions before decisions are even made. Unlike credential theft—seen as a discrete breach—this reframes normalcy itself as a vulnerability, a risk most people don’t perceive because they equate privacy harm only with hacking, not with being accurately known.