Could AI Liability Laws Threaten Investor Growth and Security?

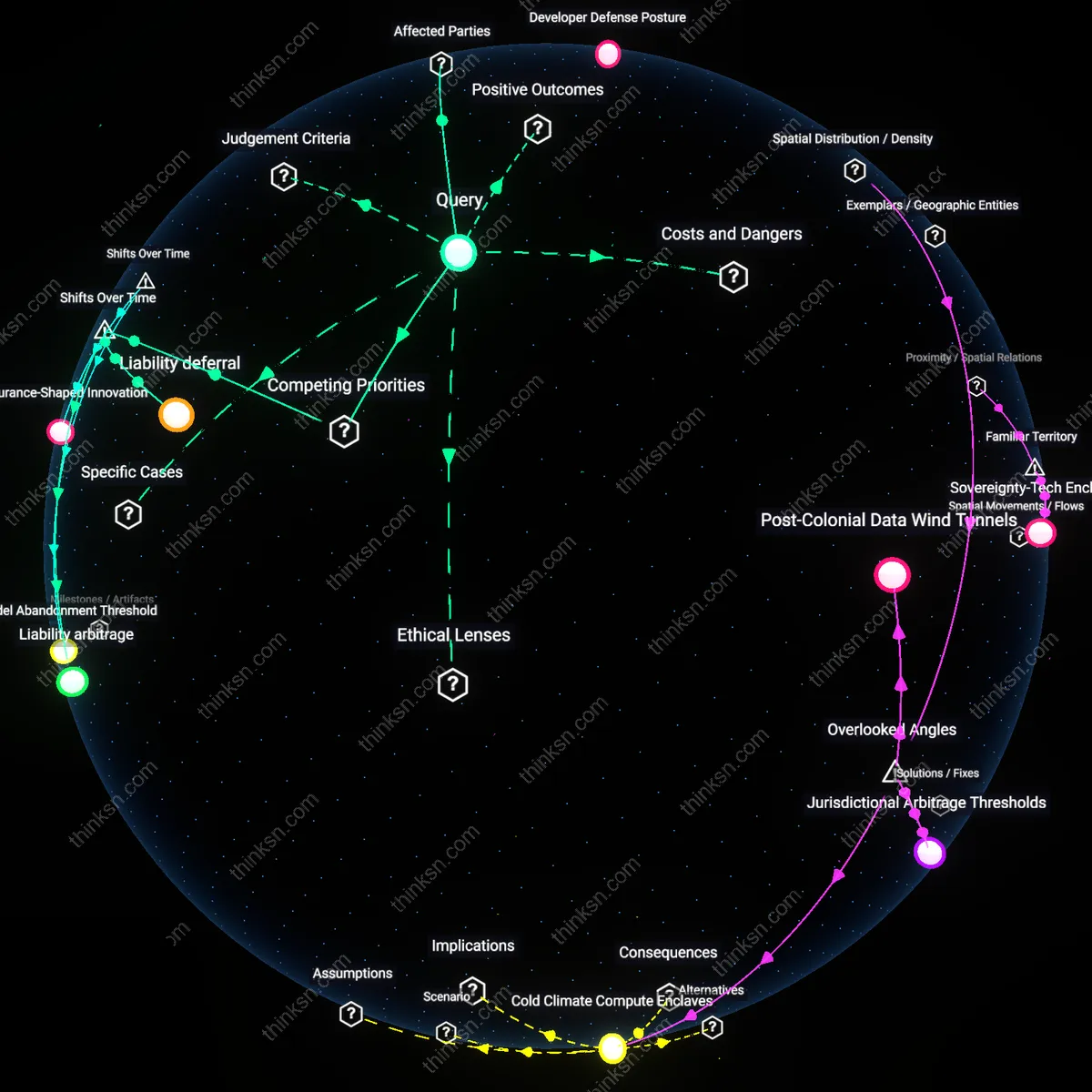

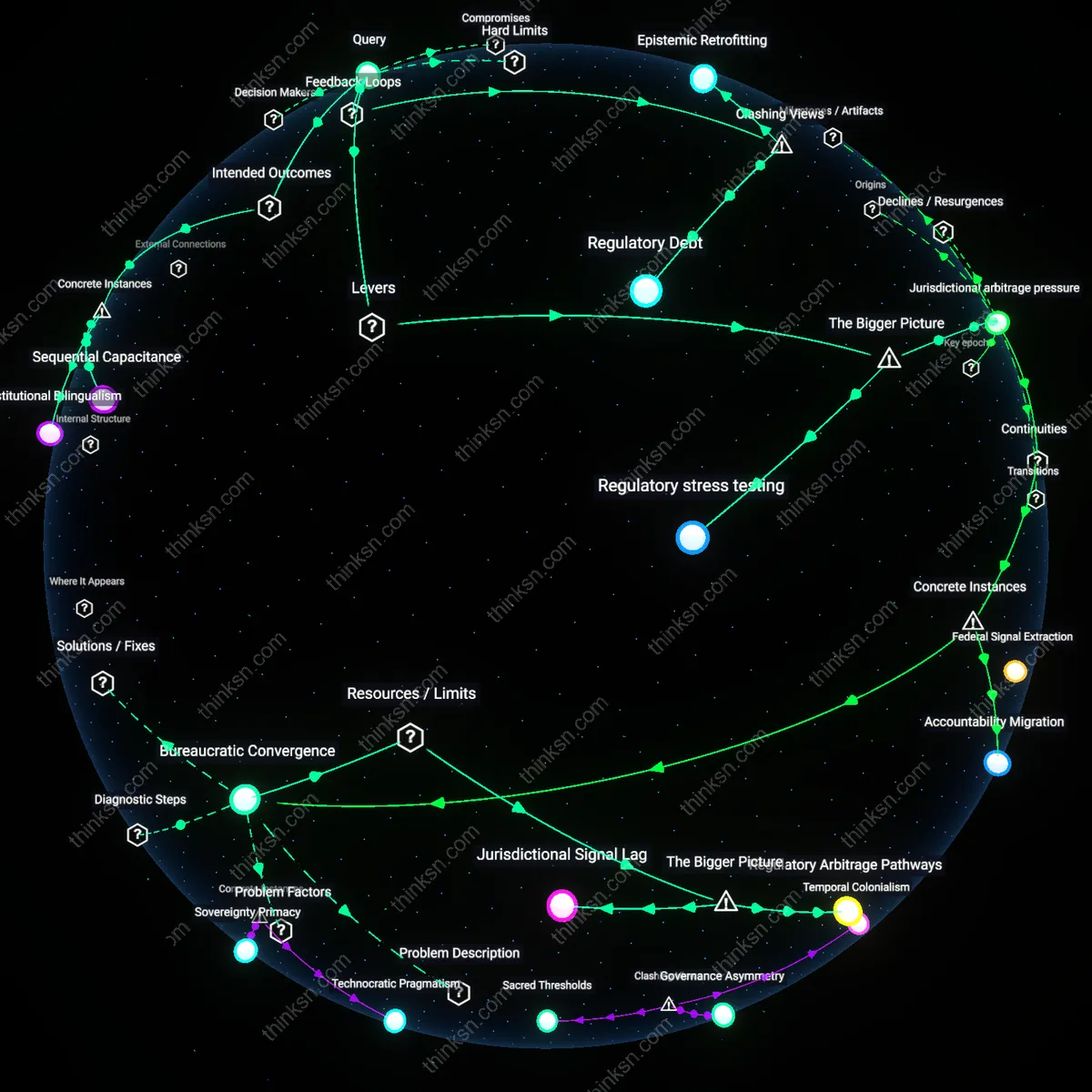

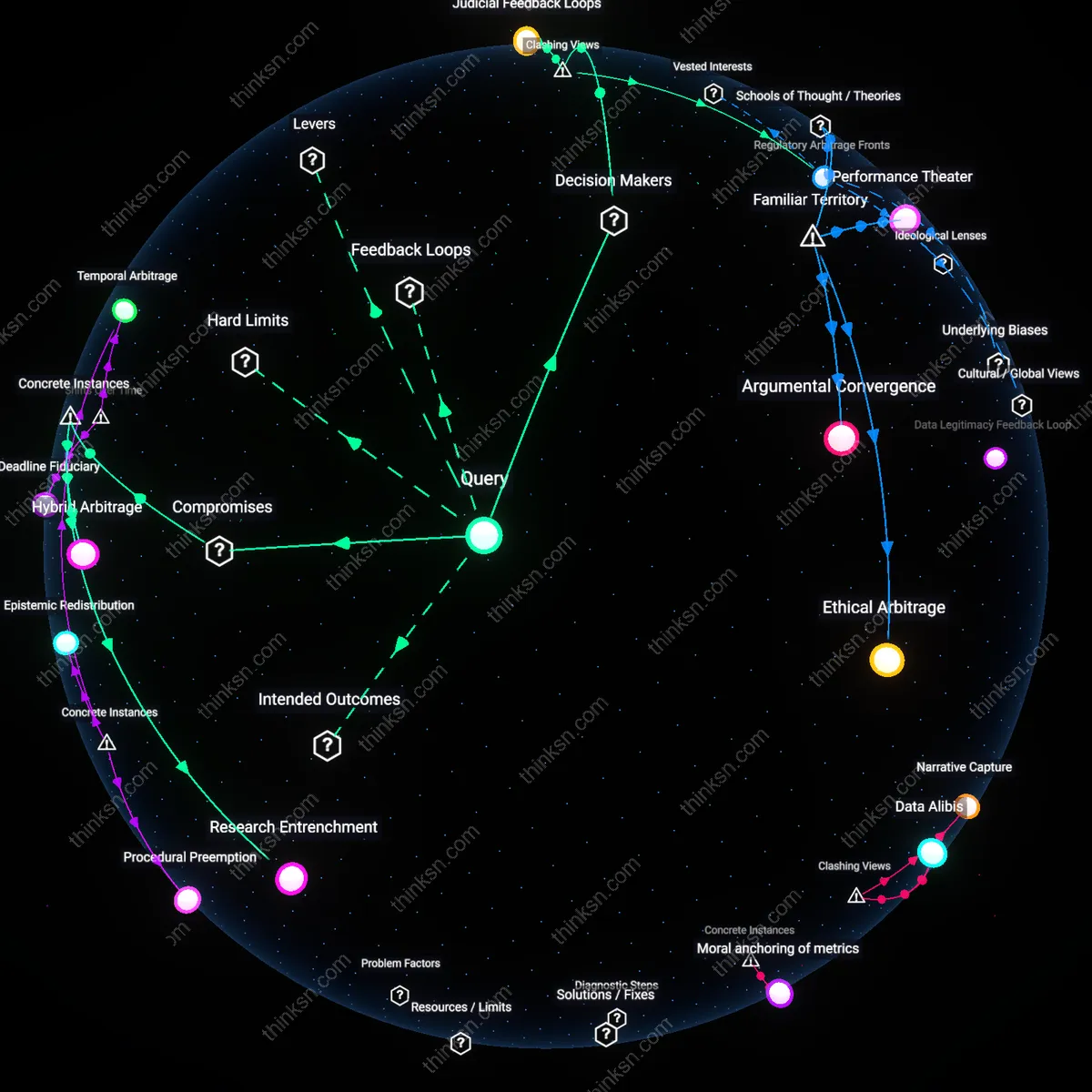

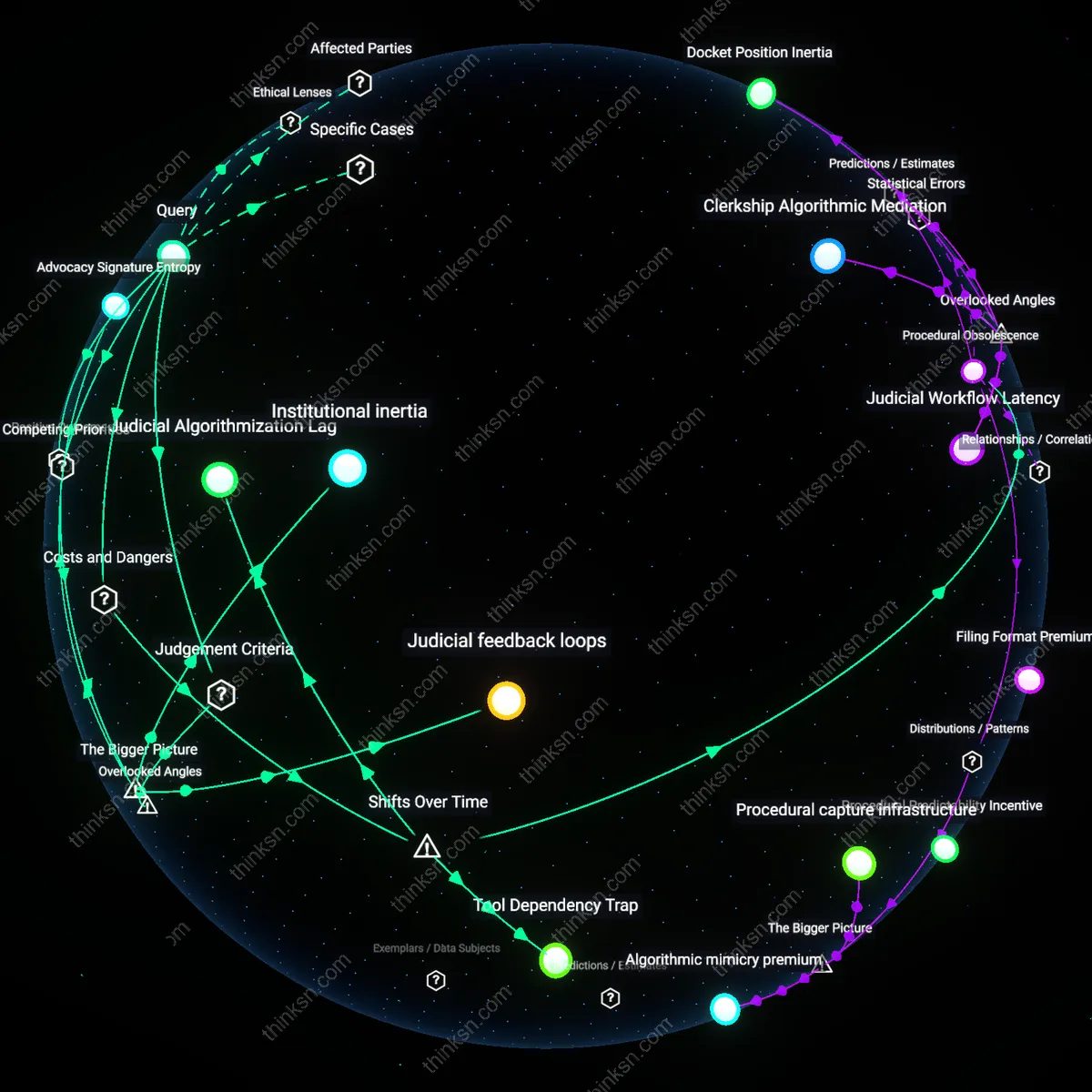

Analysis reveals 6 key thematic connections.

Key Findings

Developer Defense Posture

Investors should monitor how AI developers change their code documentation and update practices in response to proposed retroactive liability laws. Technology firms, including those in Silicon Valley, are increasingly instituting audit trails and version-controlled model releases not just for compliance but as legal shields, so visible changes in code-provenance practices can signal anticipated liability exposure. This shift is significant because it reveals a quiet recalibration of engineering culture toward legal defensibility, where the act of logging becomes a proxy for accountability. What’s underappreciated is that these internal process changes, often invisible to market analysts, can function as early indicators of systemic risk that precede financial disclosure.

Insurance Feedback Loop

Investors can gauge legal risk by tracking how AI liability insurance premiums shift following legislative hearings or court rulings involving algorithmic harm. Major underwriters, such as those at Lloyd’s of London and AIG, are now pricing policies based on jurisdiction-specific retroactivity clauses, creating a real-time market signal that reflects anticipated enforcement severity. This mechanism is critical because it converts legal uncertainty into a quantifiable cost of risk capital, which in turn shapes which AI applications get funded. The underappreciated insight is that insurers, not regulators, are becoming the de facto standard-setters for acceptable AI risk, because they have a strong incentive to avoid unbounded liability exposure.

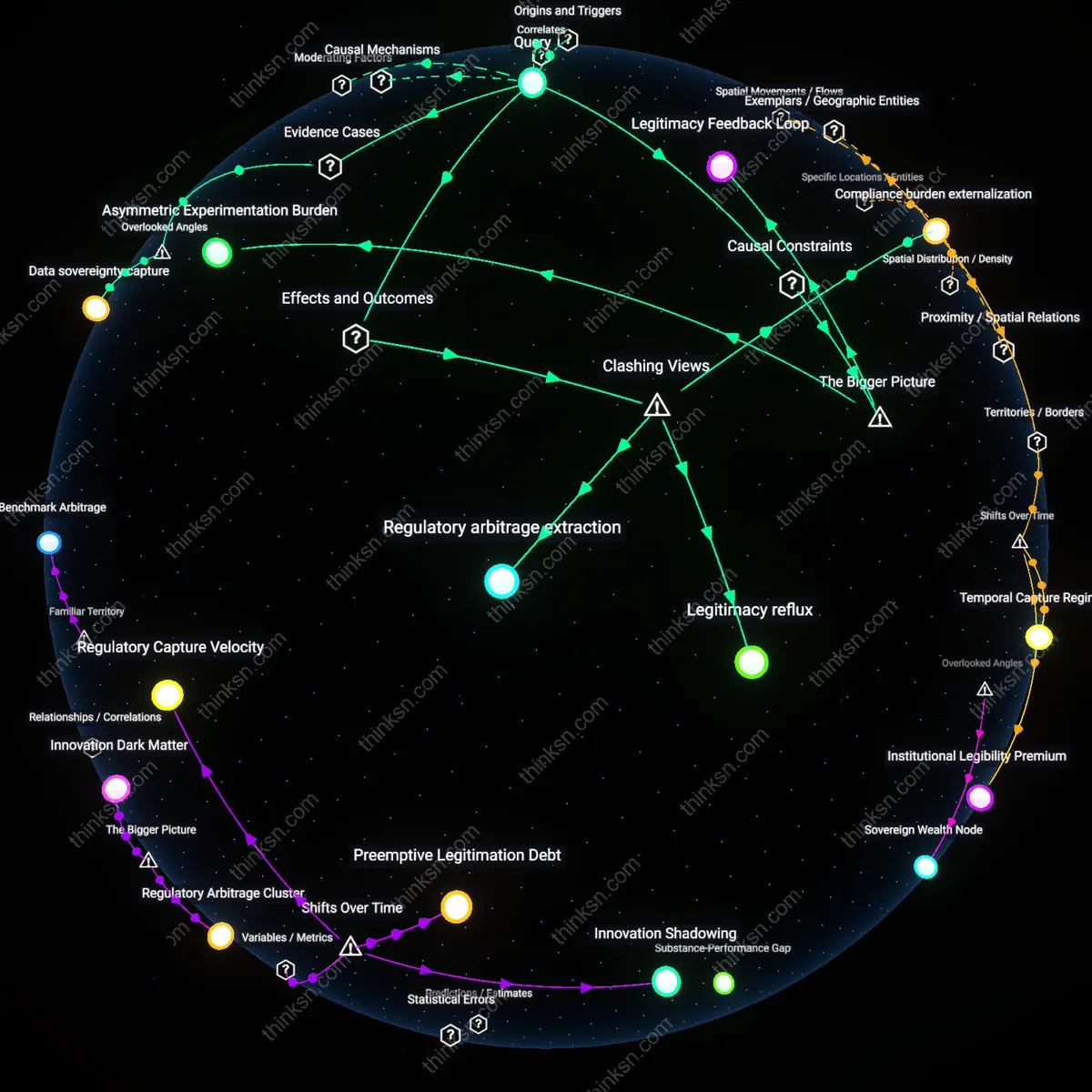

Regulatory Arbitrage Frontier

Investors should assess whether, and where, companies are relocating AI training infrastructure to jurisdictions seen as offering liability safe harbors, such as Canada under its proposed Artificial Intelligence and Data Act or Switzerland with its experimental legal exemptions. Firms like Anthropic and Cohere have begun splitting development pipelines across borders, keeping high-risk model iterations outside the reach of retroactive laws. This spatial workaround matters because it turns national legal differences into durable competitive advantages. The overlooked consequence is that retroactive laws may not reduce overall risk but instead redistribute it geographically, fostering shadow innovation ecosystems insulated from user protections.

Liability Deferral

Investors can assess legal risks by analyzing how regulatory latency, the gap between AI deployment and formal liability frameworks, enables firms to operate in a de facto liability-free window where early market capture is prioritized over compliance foresight. This mechanism favors aggressive scaling over risk mitigation, as seen in the 2016–2020 deployment phase of facial recognition systems, when law enforcement adoption surged without clear accountability statutes and created a market incentive to defer legal exposure. The non-obvious implication is that retroactive legislation doesn't just alter future behavior; it attaches a price to past risk-taking, turning historical regulatory absence into a liability time bomb.

Innovation Burden

Investors must evaluate how the post-2023 shift from experimental AI governance to binding liability regimes transforms compliance into a fixed cost of innovation, where safety investments directly subtract from R&D capacity. This zero-sum trade-off emerges clearly in sectors like autonomous vehicles, where post-2020 accident litigation prompted firms like Waymo to divert engineering resources from feature expansion to audit-trail generation and explainability modules, functions that yield no market differentiation but reduce legal exposure. The underappreciated consequence is that liability pressure doesn't merely constrain growth; it restructures the innovation process itself, privileging defensibility over novelty.

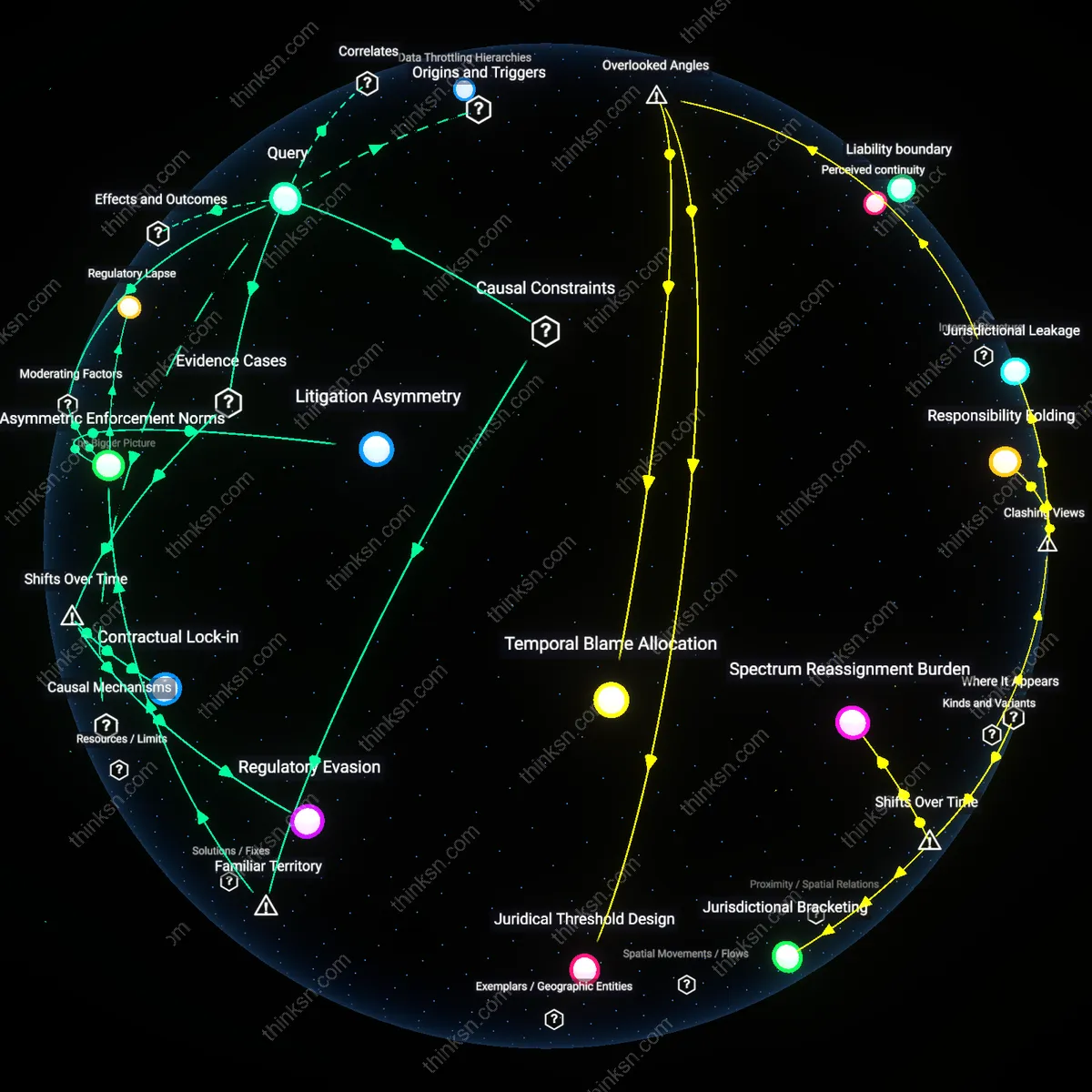

Liability Arbitrage

Investors should track how firms increasingly relocate AI development infrastructure to jurisdictions with weak tort enforcement, such as certain ASEAN states post-2021, to decouple product impact from legal consequence, enabling global market reach without commensurate liability exposure. This spatial workaround became viable only after the EU proposed its AI Act in 2021 (followed by a proposed AI Liability Directive in 2022), triggering a strategic shift among U.S.-based generative AI startups to host training systems offshore while maintaining client interfaces in regulated markets. The overlooked dynamic is that retroactive liability doesn't uniformly increase risk; it creates a new geography of legal vulnerability, where the location of deployment matters less than the location of operational control.